Duckietown is a technological platform designed to create, consume, and disseminate robotics and AI learning experiences.

Duckietown integrates hardware, software, a simulator, data, and documentation, each described below.

Hardware is the most tangible part of Duckietown: a robotic ecosystem where fleets of autonomous vehicles (Duckiebots) interact with each other and with the urban environment they operate within (Duckietown).

Duckietown was designed to answer the question: what is the least hardware we need for deploying single- and multi-robot advanced autonomy solutions? In other words, what is the simplest robotic platform that allows us to write scientific papers?

The Duckiebot is a minimal autonomy platform. It allows for investigating complex autonomous behaviors, learning about real-world challenges, and doing research.

The main onboard sensor for both Duckiebots and Duckiedrones is a front-facing camera, as vision is a tough nut to crack, especially on computationally limited resources.

The Duckiebot’s actuators are two DC motors (driving the robot in a differential-drive configuration) and addressable RGB LEDs for signaling to other Duckiebots and lighting the road. Additional sensors, such as an IMU, a time-of-flight sensor, and wheel encoders, are available.
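As a rough illustration of what a differential-drive configuration implies, the sketch below converts between wheel speeds and the robot's forward speed and turn rate using the standard differential-drive kinematic model. The class name `DiffDrive` and the wheel radius and baseline values are assumptions chosen for illustration, not Duckiebot specifications.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class DiffDrive:
    """Differential-drive geometry. Default values are illustrative assumptions."""

    wheel_radius: float = 0.03  # metres (assumed, not a Duckiebot spec)
    baseline: float = 0.10      # distance between the two wheels, metres (assumed)

    def body_velocity(self, omega_left: float, omega_right: float) -> Tuple[float, float]:
        """Map wheel angular speeds (rad/s) to forward speed (m/s) and turn rate (rad/s)."""
        v_left = self.wheel_radius * omega_left
        v_right = self.wheel_radius * omega_right
        forward = (v_right + v_left) / 2.0
        turn_rate = (v_right - v_left) / self.baseline
        return forward, turn_rate

    def wheel_speeds(self, forward: float, turn_rate: float) -> Tuple[float, float]:
        """Inverse mapping: desired body velocity to left/right wheel angular speeds (rad/s)."""
        v_left = forward - turn_rate * self.baseline / 2.0
        v_right = forward + turn_rate * self.baseline / 2.0
        return v_left / self.wheel_radius, v_right / self.wheel_radius
```

Equal wheel speeds give straight-line motion, and any difference between the two wheels turns the robot, which is why two motors are enough to steer.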

Duckietown is fully decentralized (there are no mega computers doing all the calculations, or eyes in the sky providing guidance), and Duckiebots are fully autonomous. All decision making is done onboard, thanks to the power of Raspberry Pi and NVIDIA Jetson Nano boards, which are proper credit-card-sized computers.

The custom-designed onboard Duckiebattery offers hours of autonomy, advanced diagnostics, and pass-through charging, letting Duckiebots know when it is time to recharge.

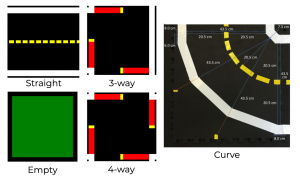

Duckietowns are structured and modular environments built on two layers: road and signal, to offer a repeatable but flexible driving experience, without fixed maps.

The road layer is defined by five segment types: straight, curve, 3-way intersection, 4-way intersection, and empty. Every segment is built on interconnectable tiles, which can be rearranged to produce any number of city topologies while maintaining rigorous appearance specifications that guarantee the functionality of the robots.
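One way to picture the road layer is as a rectangular grid of tiles, each holding one of the five segment types. The sketch below is a minimal illustration under that assumption; the names `Tile`, `LOOP`, `is_rectangular`, and `tile_counts` are hypothetical, tile orientation is left out for brevity, and this is not the map format used by the Duckietown software.

```python
from collections import Counter
from enum import Enum
from typing import List


class Tile(Enum):
    """The five segment types named in the text (identifiers are illustrative)."""

    STRAIGHT = "straight"
    CURVE = "curve"
    THREE_WAY = "3-way intersection"
    FOUR_WAY = "4-way intersection"
    EMPTY = "empty"


# A 3x3 closed loop: curves at the corners, straights along the edges,
# and an empty tile in the middle.
LOOP: List[List[Tile]] = [
    [Tile.CURVE, Tile.STRAIGHT, Tile.CURVE],
    [Tile.STRAIGHT, Tile.EMPTY, Tile.STRAIGHT],
    [Tile.CURVE, Tile.STRAIGHT, Tile.CURVE],
]


def is_rectangular(grid: List[List[Tile]]) -> bool:
    """Return True if the grid is non-empty and every row has the same length."""
    return bool(grid) and all(len(row) == len(grid[0]) for row in grid)


def tile_counts(grid: List[List[Tile]]) -> Counter:
    """Count how many tiles of each type a layout needs."""
    return Counter(tile for row in grid for tile in row)
```

Rearranging the entries of the grid yields a different city from the same set of tiles, and `tile_counts` gives a quick parts list for building it.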

The signal layer in Duckietown is made of traffic signs and road infrastructure.

The signs show both easily machine-readable markers (AprilTags) and actual human-readable representations, to provide scalable complexity in perception. Signs enable Duckiebots to localize on the map and interpret the type and orientation of intersections, among other uses.

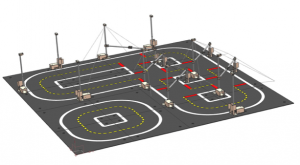

The road infrastructure is made of traffic lights and watchtowers, which are proper robots themselves. At the hardware level, traffic lights are Duckiebots without wheels, and watchtowers are traffic lights without LEDs.

Although immobile, the road infrastructure turns every Duckietown into a robot in its own right: it can sense, think and interact with the environment.

A Duckietown instrumented with a sufficient number of watchtowers can be transformed into a Duckietown Autolab: an accessible, reproducible setup for benchmarking the behavior of fleets of self-driving cars. An Autolab can be programmed to localize robots and communicate with them in real time.

Duckietown is programmed to be scalable in terms of difficulty level, so that it can adapt to the user’s skill level: from zero to scientist level.

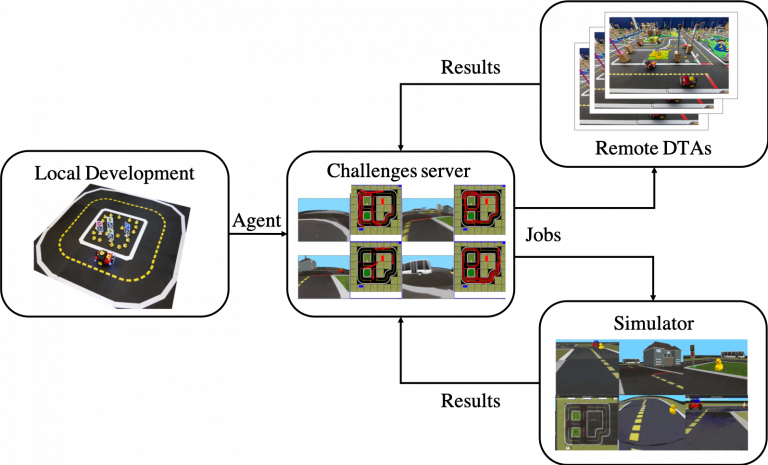

The software architecture in Duckietown allows users to develop on real hardware and in simulation, and to evaluate their agents locally or remotely, in the cloud or on real hardware.

Duckiebots and Duckietowns can be used via terminal (for experts) or a web duckie-dashboard GUI (for convenience).

The various robotics ecosystem functionalities offered in the base implementation are encapsulated in Docker containers, which include ROS (Robot Operating System) and Python code.

Docker ensures code reproducibility: whatever “works” at some point in time will continue to work, as all dependencies are included in the specific application container. In addition, Docker eases modularity, making it possible to substitute individual functional blocks without encountering compatibility problems.

Communication between functionalities (perception, planning, high- and low-level control, etc.) is achieved through ROS, a well-known open source middleware for robotics.
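The publish/subscribe pattern behind this kind of communication can be sketched in a few lines of plain Python. The snippet below is a stand-in for illustration only: it does not use the ROS API, and the `MessageBus` class, the topic names, and the controller gain are all hypothetical.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List


class MessageBus:
    """A tiny in-process publish/subscribe bus (illustrative, not ROS)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        """Register a callback to be invoked for every message on a topic."""
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> None:
        """Deliver a message to every callback subscribed to the topic."""
        for callback in list(self._subscribers.get(topic, [])):
            callback(message)


bus = MessageBus()
commands: List[float] = []


def controller(lane_offset: float) -> None:
    """Control block: steer against the lateral offset (assumed gain of 2.0)."""
    bus.publish("steering_cmd", -2.0 * lane_offset)


# Wire the blocks together: perception output feeds control, control feeds actuation.
bus.subscribe("lane_offset", controller)
bus.subscribe("steering_cmd", commands.append)

# A perception block would publish its estimate like this.
bus.publish("lane_offset", 0.05)
```

Because the blocks only share topic names, one of them can be swapped for another implementation without touching the rest, which is the modularity the middleware is meant to provide.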

Duckietown uses mostly Python, although ROS supports other languages too.

ROS nodes running inside Docker containers rely on a homemade operating system (duckie-OS) that provides an additional layer of abstraction. This layer allows running complex sequences with single lines of code. For example, with only three lines of code you can train an agent in simulation, “package” it in a Docker container and deploy it on a Duckiebot (real or virtual).

Duckietown includes a simulator, Gym-Duckietown, written entirely in Python/OpenGL (Pyglet). The simulator is designed to be physically realistic and easily compatible with the real world, meaning that algorithms developed in the simulation can be ported to physical Duckiebots with a simple click.

In the simulator you can place agents (Duckiebots) in simulated cities: closed circuits of streets, intersections, curves, obstacles, pedestrians (ducks) and other Duckiebots. It can get pretty chaotic!

Gym-Duckietown is fast, open-source, and incredibly customizable. Although the simulator was initially designed to test algorithms for keeping vehicles in the driving lane, it has now become a fully functional urban driving simulator suitable for training and testing machine learning, reinforcement learning, imitation learning, and, of course, more traditional robotics algorithms.

With Gym-Duckietown you can explore a range of behaviors: from simple lane following to complete urban navigation with dynamic obstacles. In addition, the simulator comes with a number of features and tools that allow you to bring algorithms developed in simulation quickly to the physical robot, including advanced domain randomization capabilities, accurate physics at the level of dynamics and perception (and, most importantly: ducks waddling around).
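The idea behind domain randomization is to vary the simulated conditions from episode to episode so that a learned policy does not overfit to a single rendering of the world. The sketch below illustrates the principle only: the parameter names, their ranges, and the `sample_episode_params` helper are assumptions made for this example and do not reflect Gym-Duckietown's actual settings or API.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class EpisodeParams:
    """Conditions sampled once per training episode (hypothetical parameters)."""

    light_intensity: float   # relative brightness of the scene
    camera_noise_std: float  # standard deviation of pixel noise
    wheel_gain: float        # multiplicative error on motor commands


# Assumed sampling ranges as (low, high); chosen for illustration only.
RANGES = {
    "light_intensity": (0.5, 1.5),
    "camera_noise_std": (0.0, 0.05),
    "wheel_gain": (0.9, 1.1),
}


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw each parameter uniformly from its range using the given generator."""
    return EpisodeParams(
        **{name: rng.uniform(low, high) for name, (low, high) in RANGES.items()}
    )
```

Passing a seeded `random.Random` instance keeps experiments repeatable: the same seed yields the same sequence of randomized episodes.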

As a complement to the simulation environment and standardized hardware, we provide a database of Duckiebot camera footage with associated technical data (camera calibrations, motor commands) that is continuously updated.

This data is useful both for training certain types of machine learning algorithms (learning by imitation) and for developing perception algorithms that work in the real world.

Given the standardization of the hardware and its international distribution, this database contains information about a multitude of different environmental conditions (light, road surfaces, backgrounds, …) and experimental imperfections, which is what makes robotics fun!

To accompany Duckietown users in this adventure, we provide detailed step-by-step instructions for all steps of the learning process: from assembling a box of parts to controlling a fleet of self-driving cars roaming around in a smart city.

The spirit of the Duckietown operation manuals, which can be found in the Duckumentation, is to provide practical, hands-on directions for getting specific things to work. We include graphics, prerequisite competencies and expected learning outcomes for each section, as well as troubleshooting sections and demo videos when applicable.

The original subtitle of the Duckietown project was:

“From a box of components to a fleet of self-driving cars in just 1325 steps, without hiding anything.”

Since then, the number of steps may have grown, but the spirit has remained the same. All the information needed to explore, use and develop with Duckietown is in our online library (the “Duckuments”, or “Duckiedocs”).

The documentation provides instructions, operating manuals, theoretical preliminaries, links to descriptions of the code used, and “demos” of fundamental behaviors, at the level of a single robot or a fleet.

The documentation is open source, collaborative (anyone can contribute, and contributions are moderated to ensure quality), and free.