Sim-to-Sim-to-Real Transfer for Small Autonomous Vehicles

Project Resources

- Objective: To evaluate Sim-to-Real Transfer accuracy by comparing learning and control performance across simulators of varying fidelity.

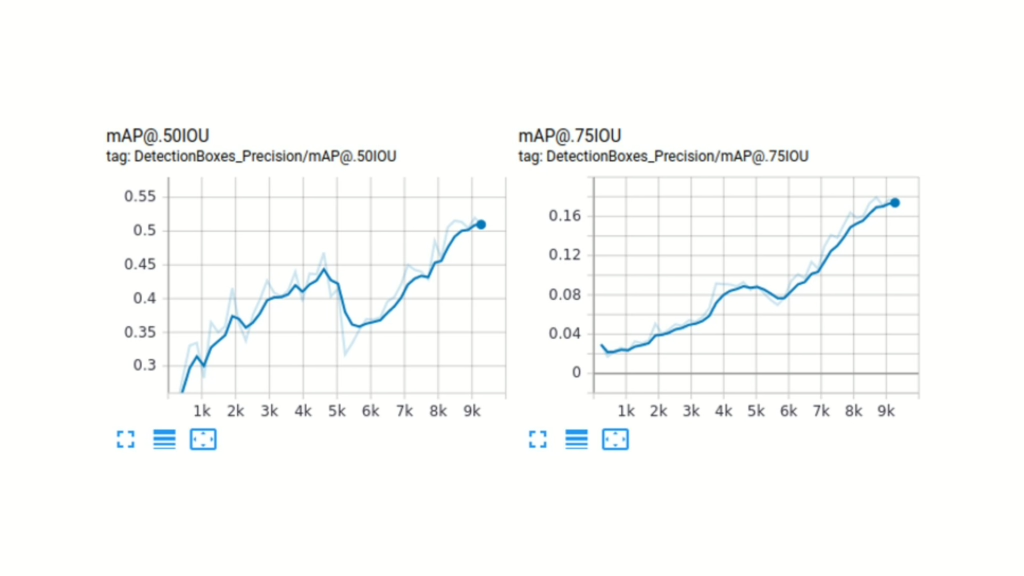

- Approach: Train, validate, and benchmark identical perception and control pipelines across low-fidelity simulation, high-fidelity simulation, and physical Duckietown vehicles.

- Authors: Jurriaan Buitenweg, Dr. Cynthia Liem

Sim-to-Real Transfer for Small Autonomous Vehicles - objectives and approach

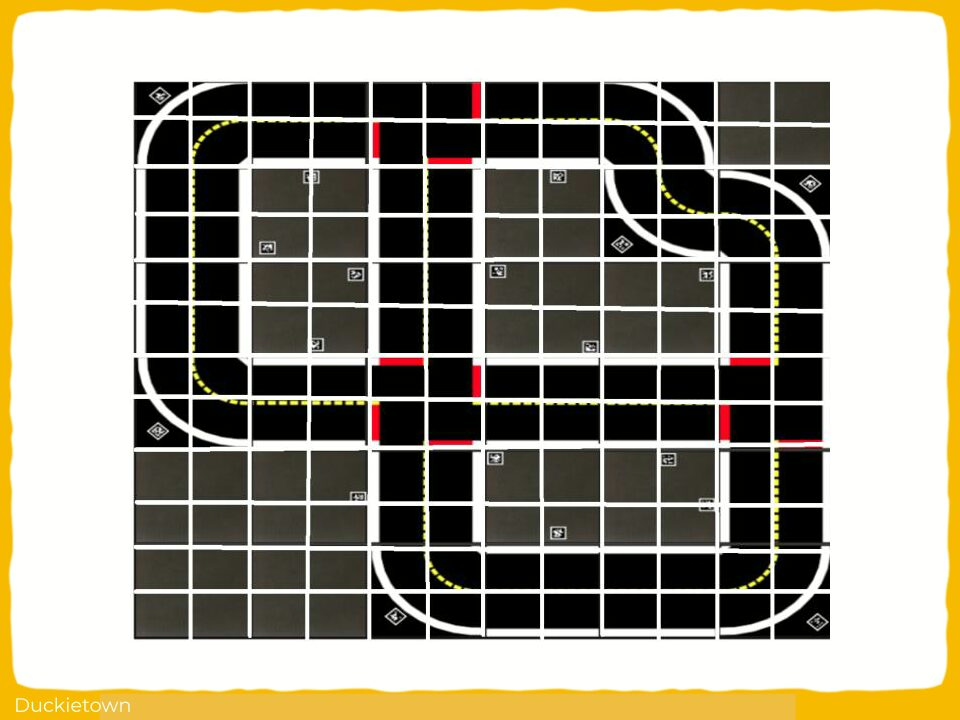

This study investigated whether an intermediate, low-fidelity simulator can be used to estimate the simulation-to-reality (Sim2Real) gap for autonomous driving models trained in a high-fidelity simulator.

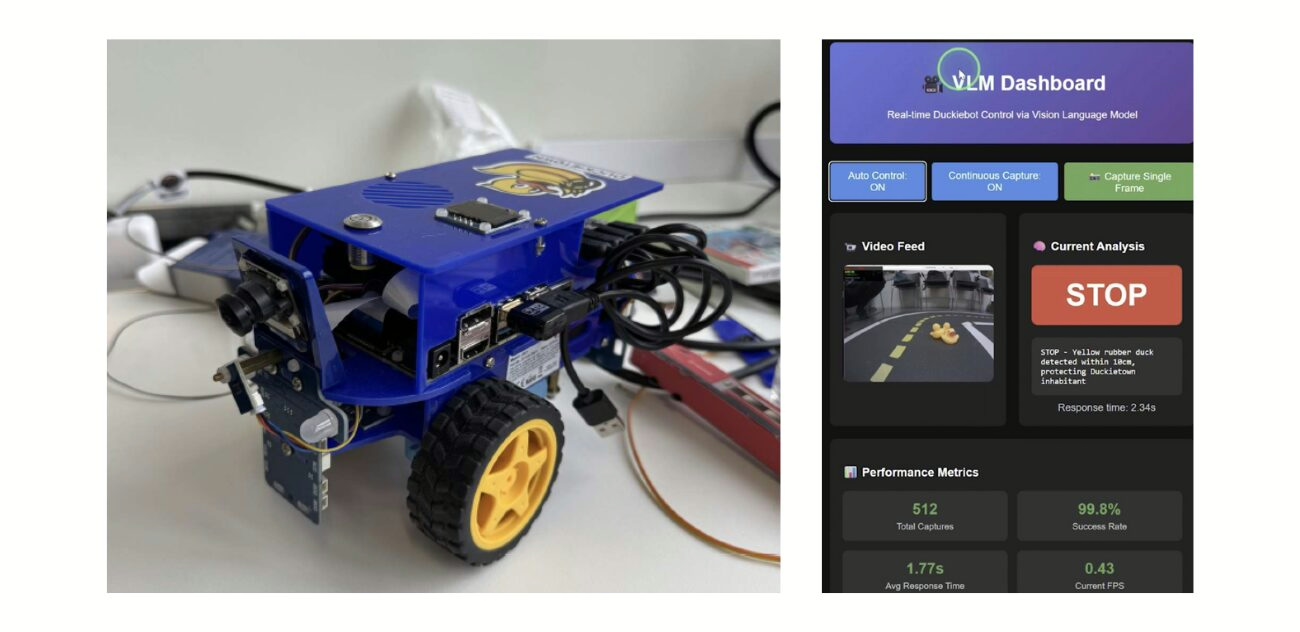

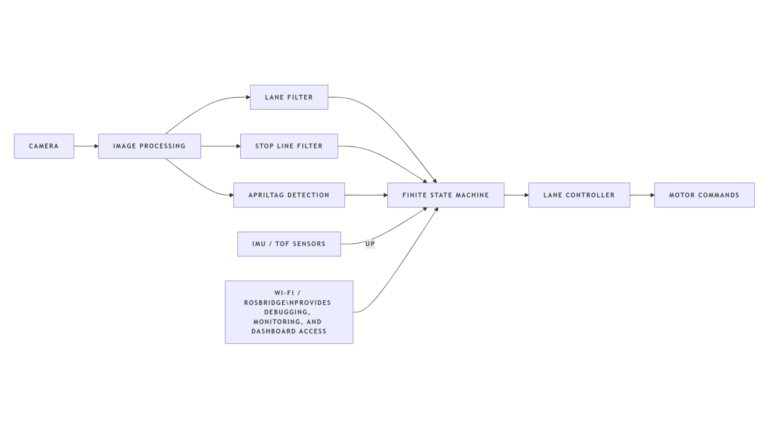

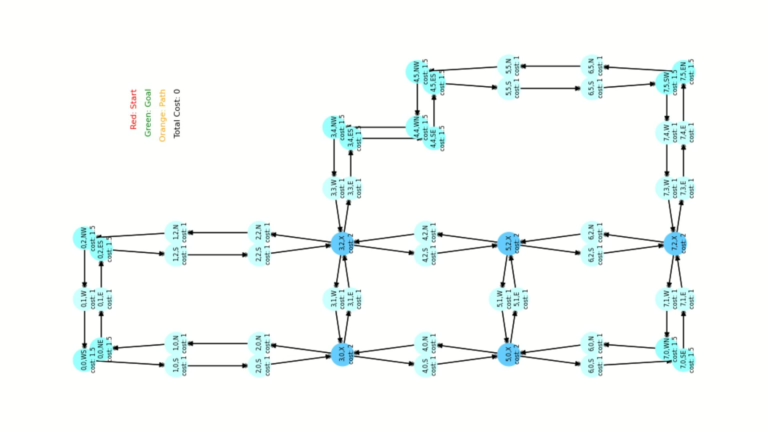

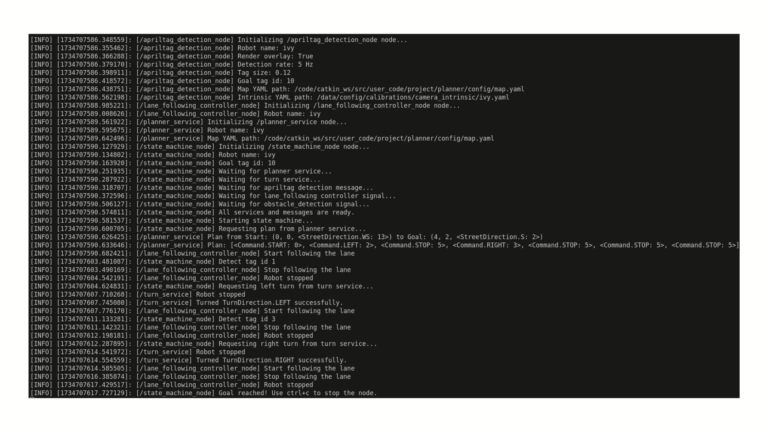

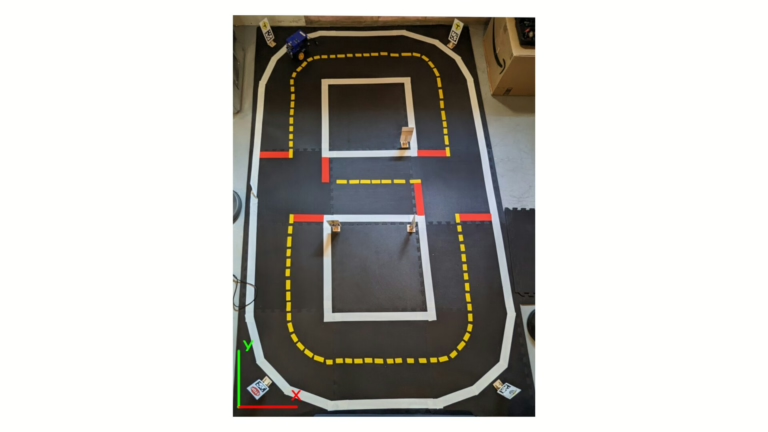

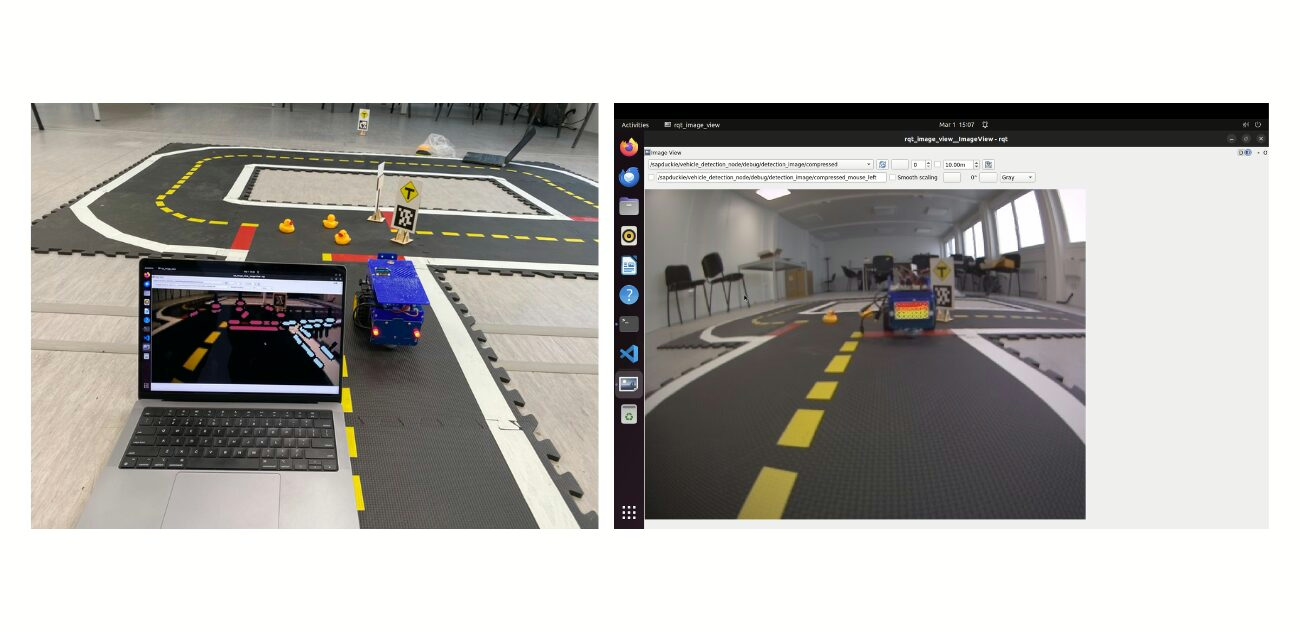

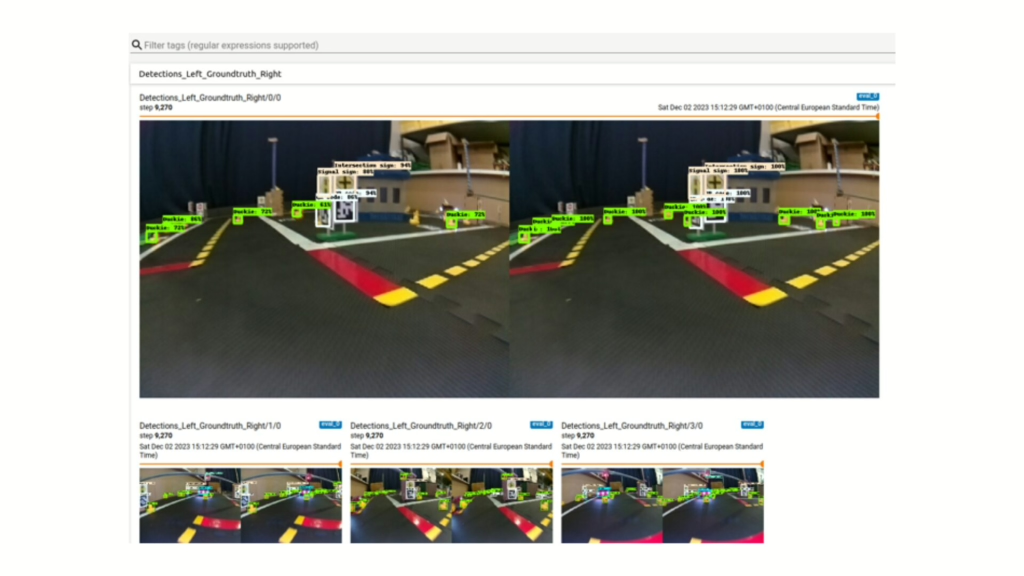

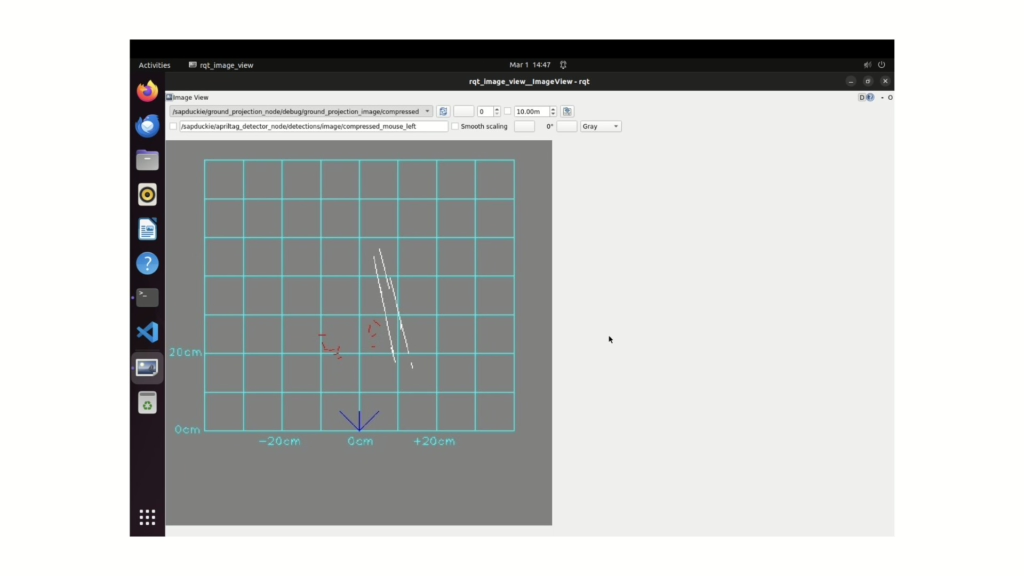

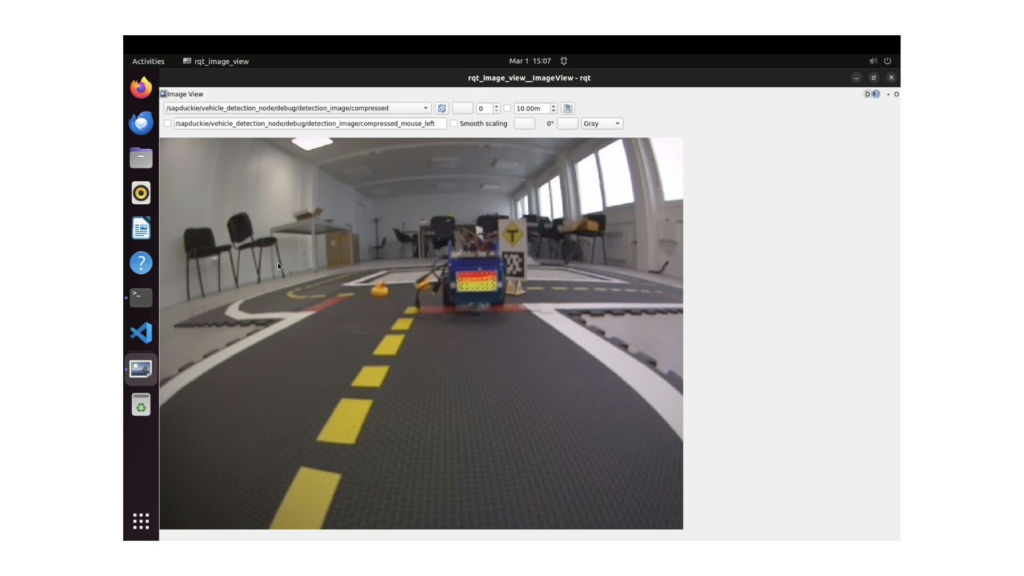

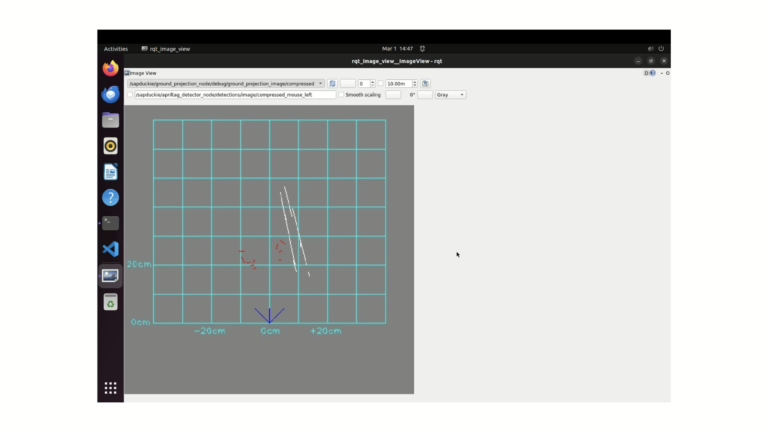

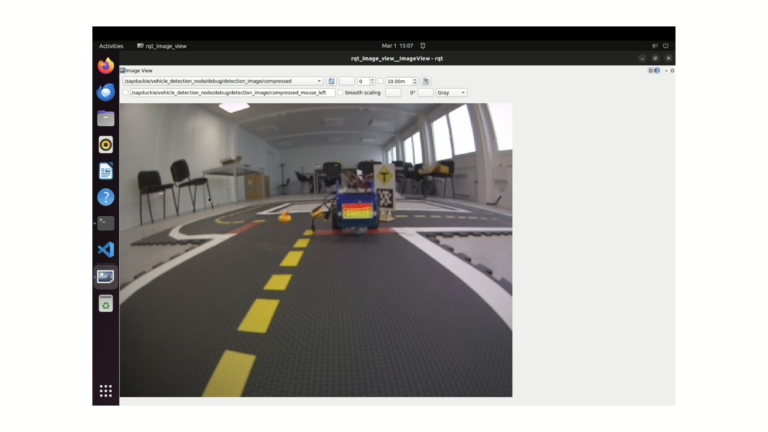

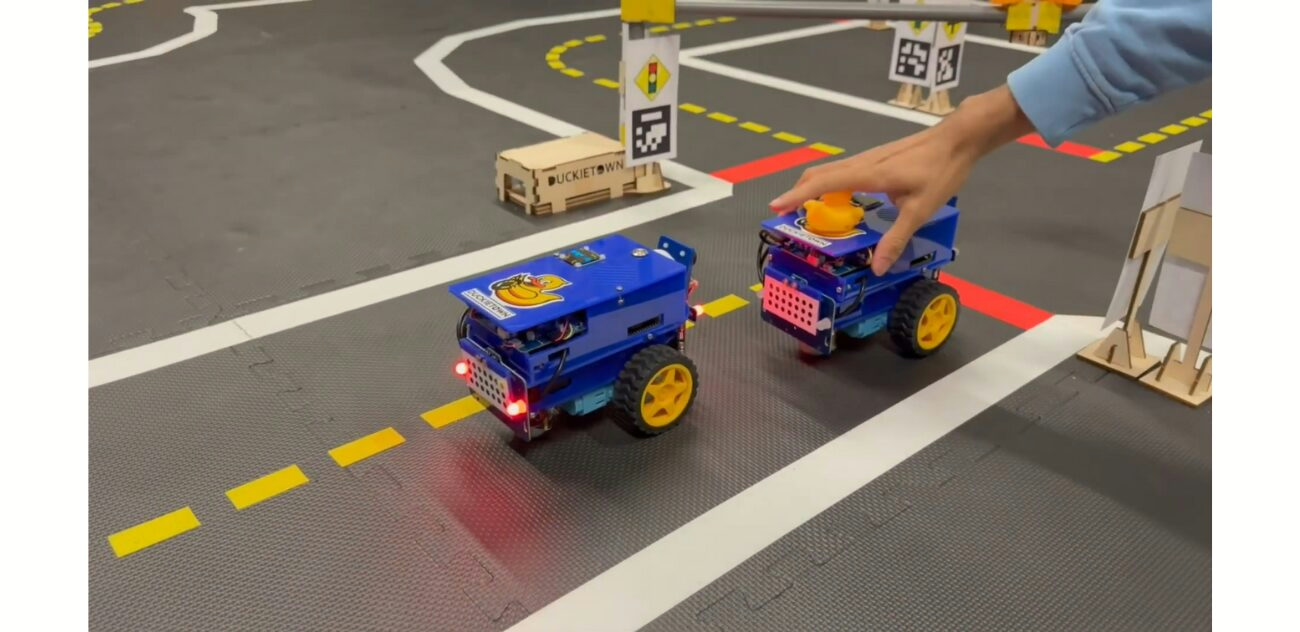

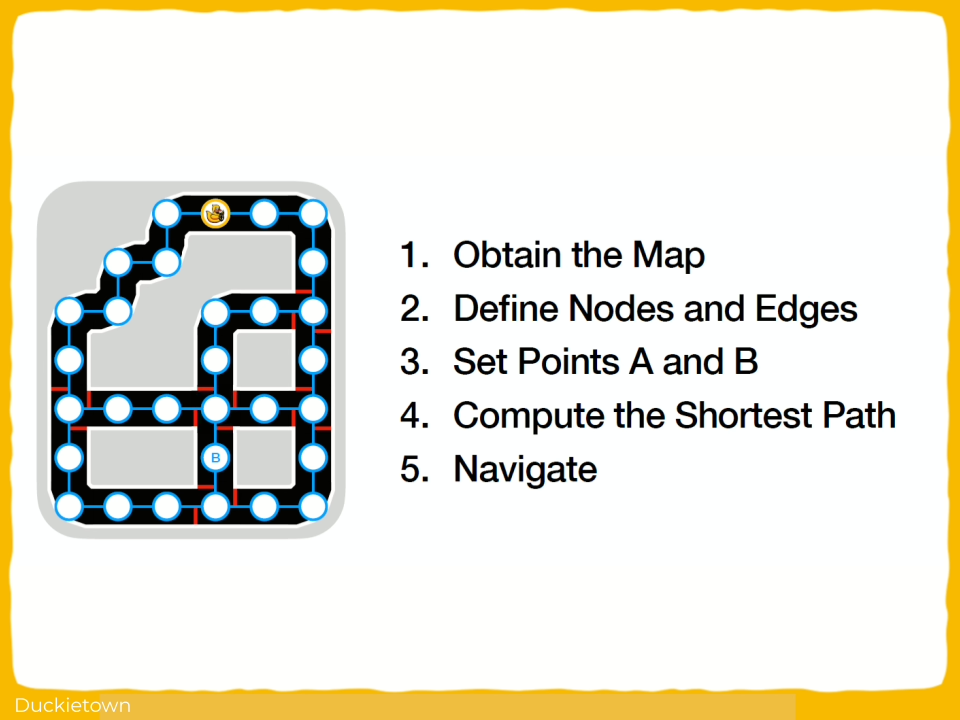

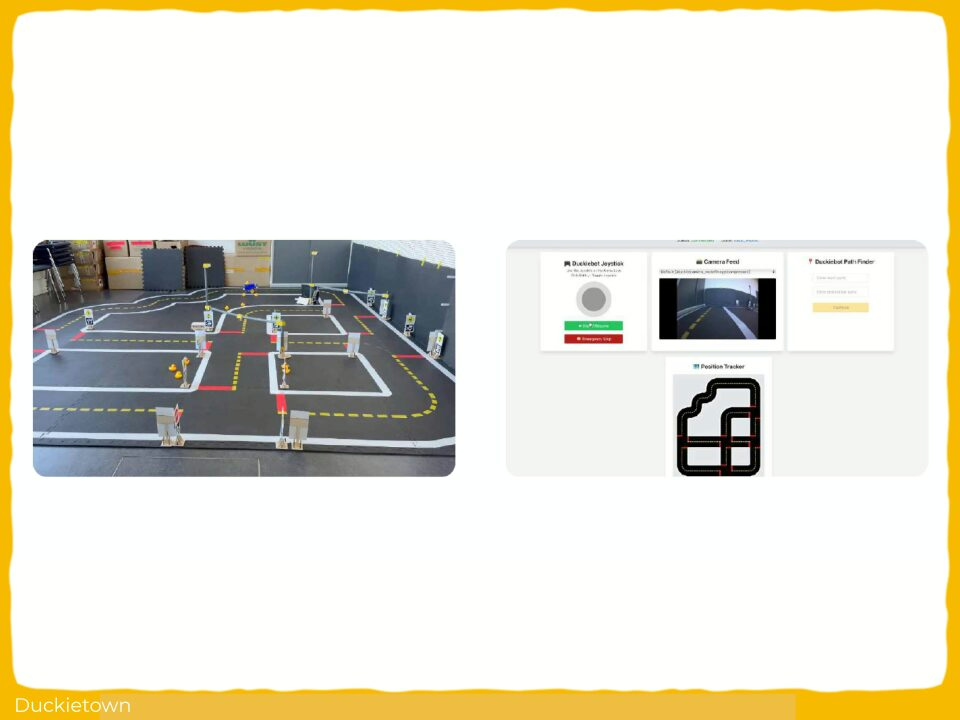

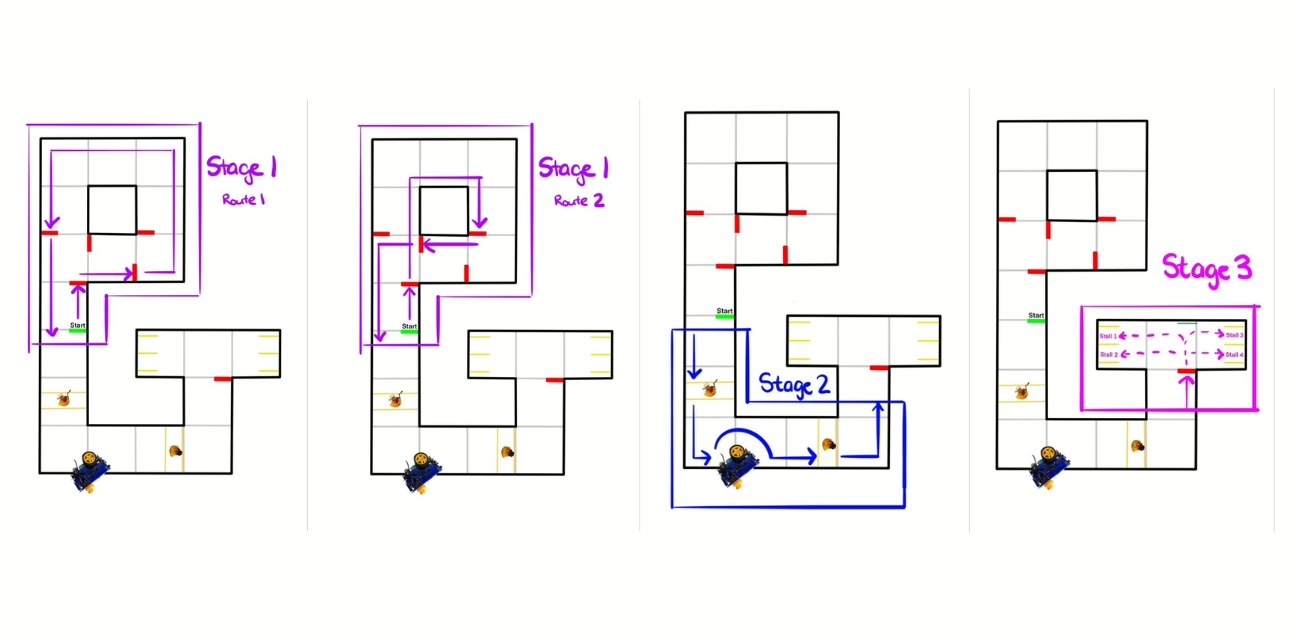

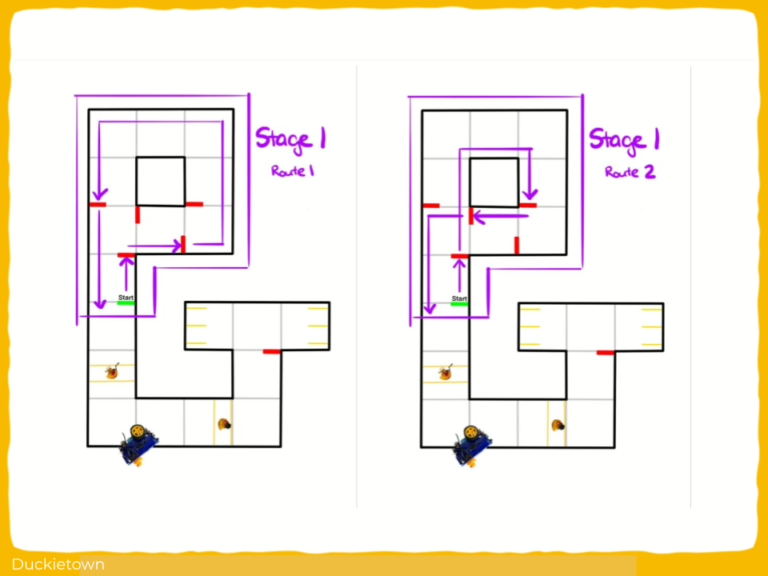

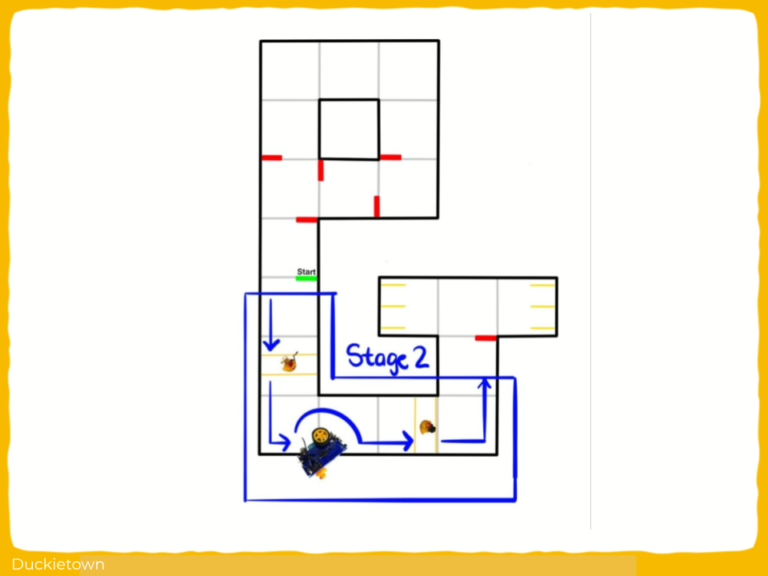

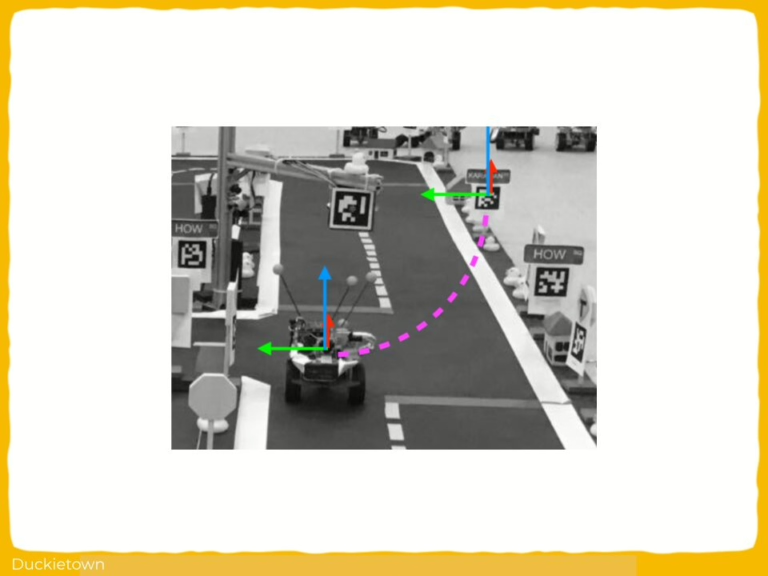

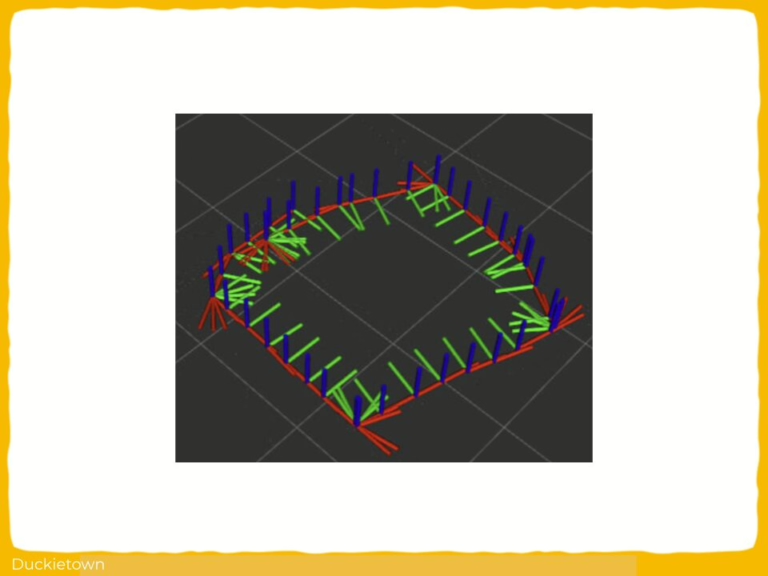

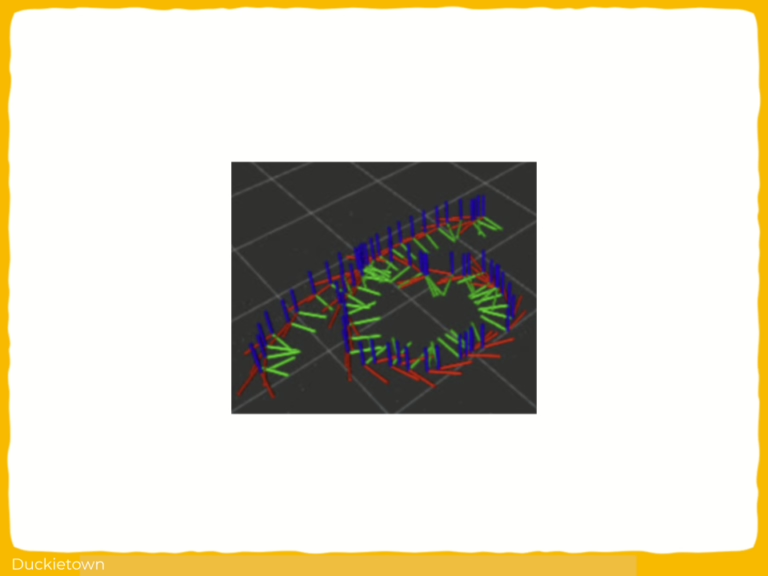

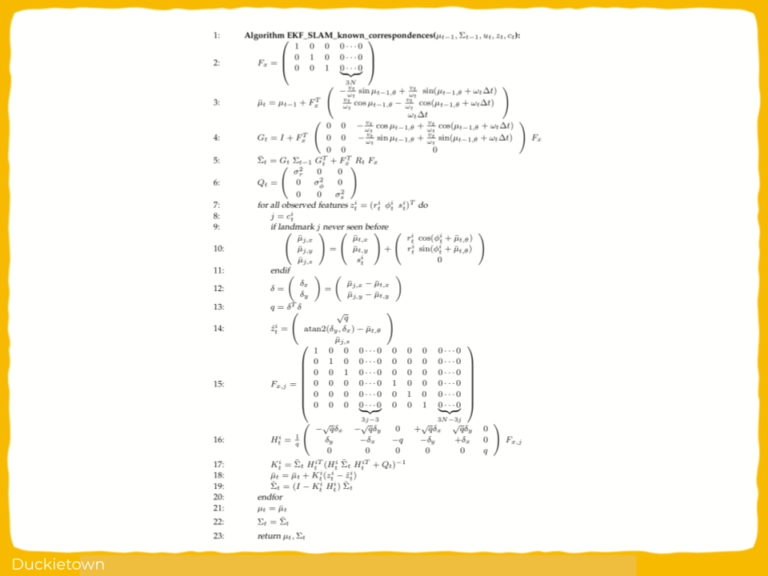

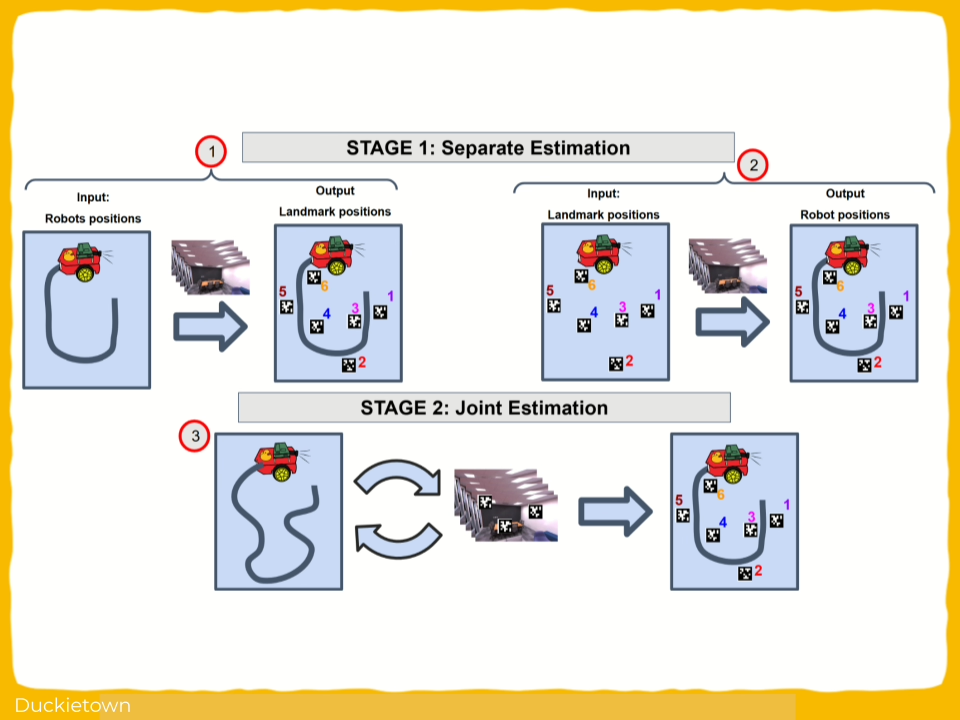

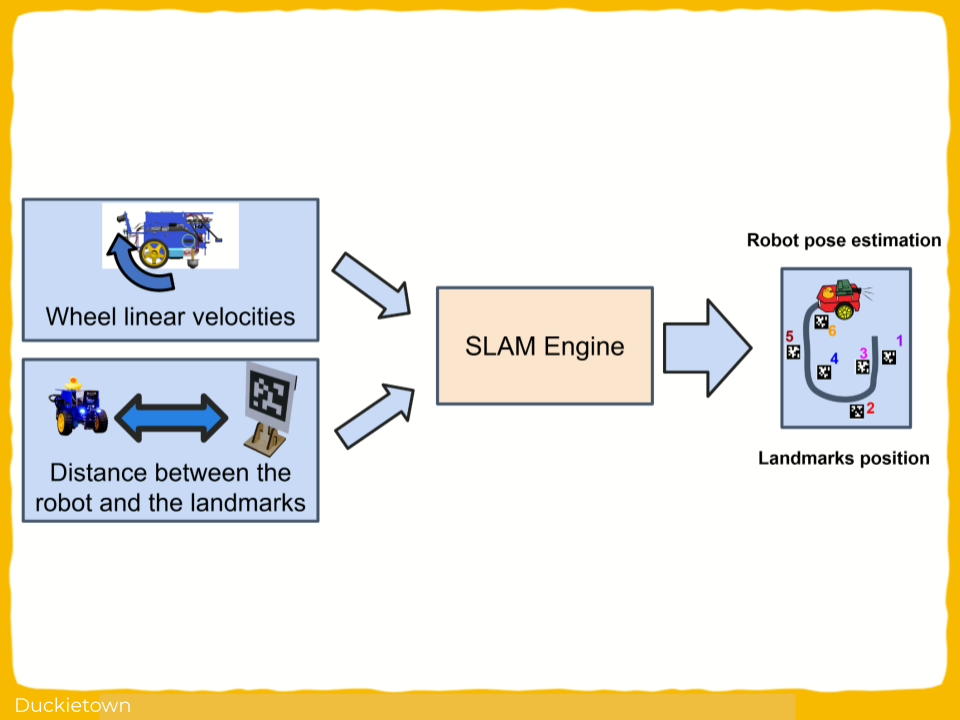

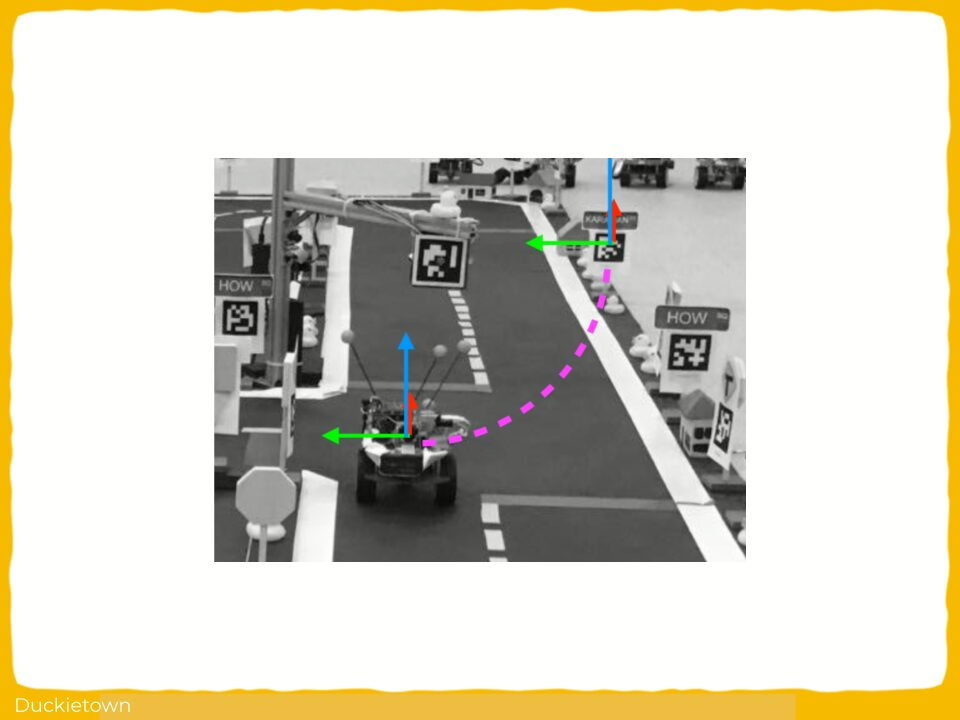

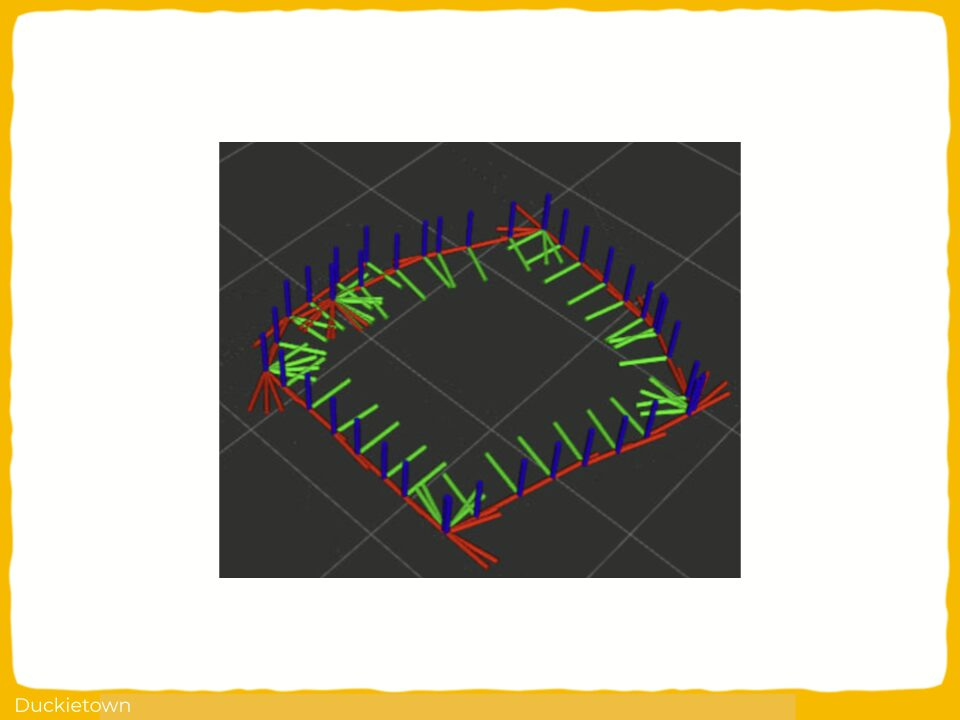

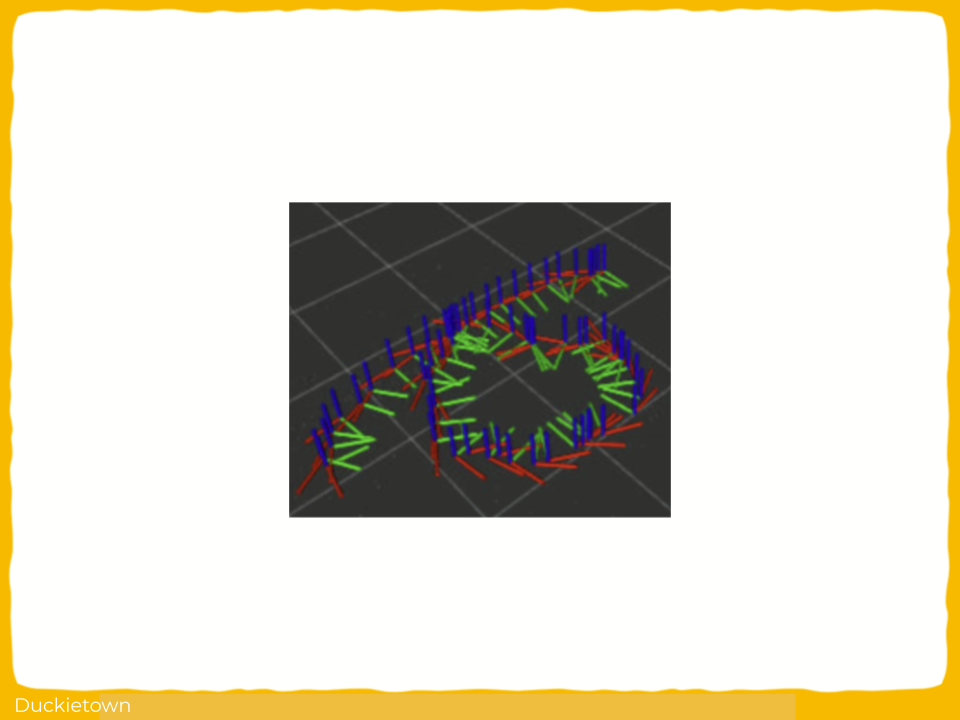

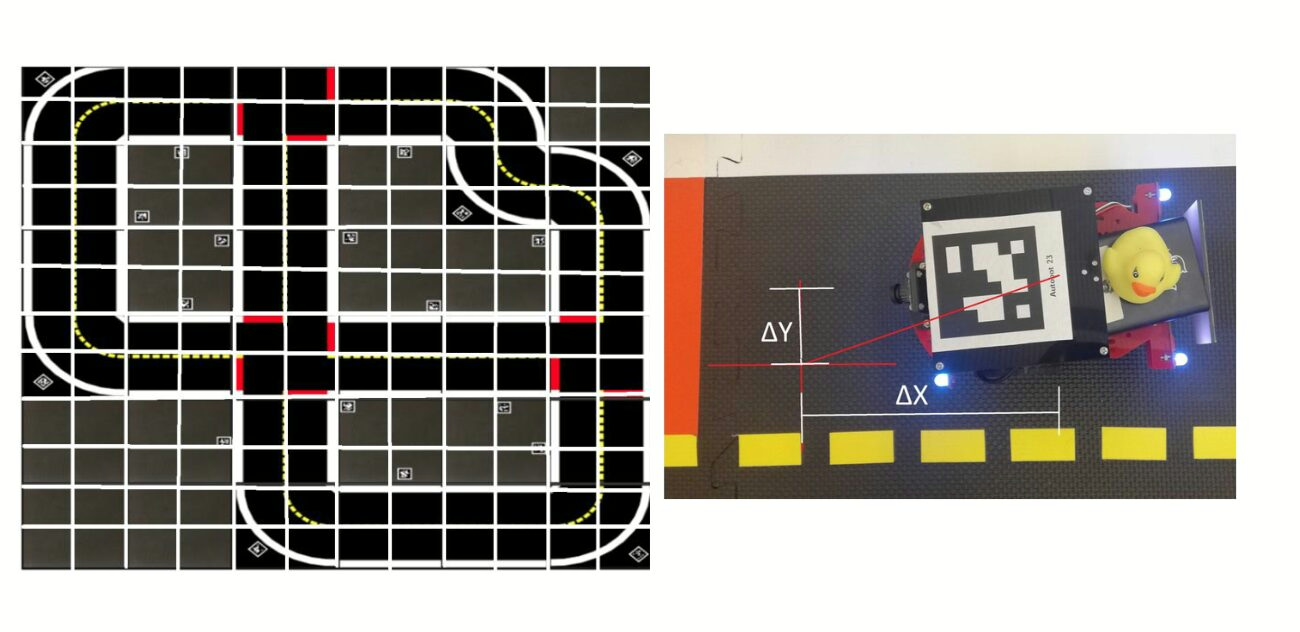

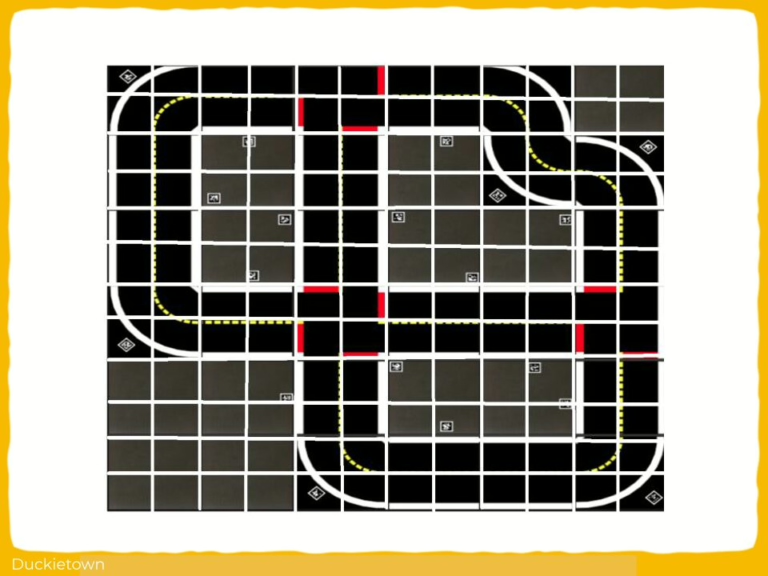

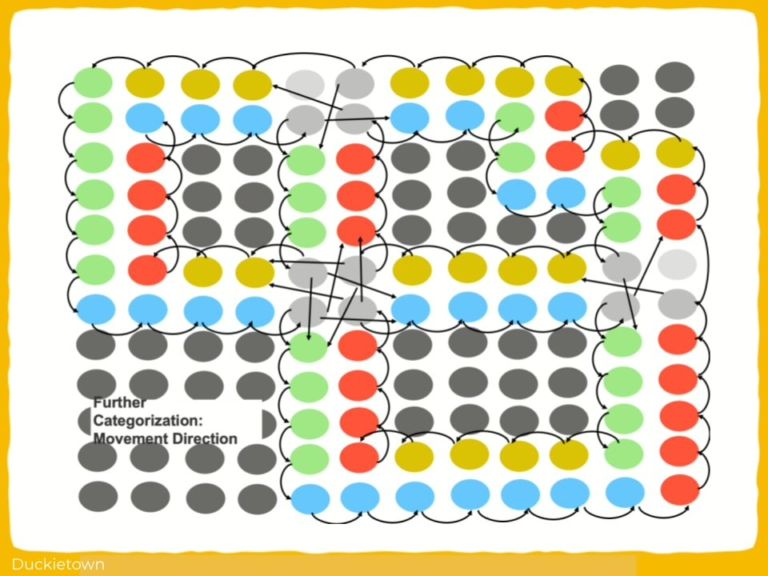

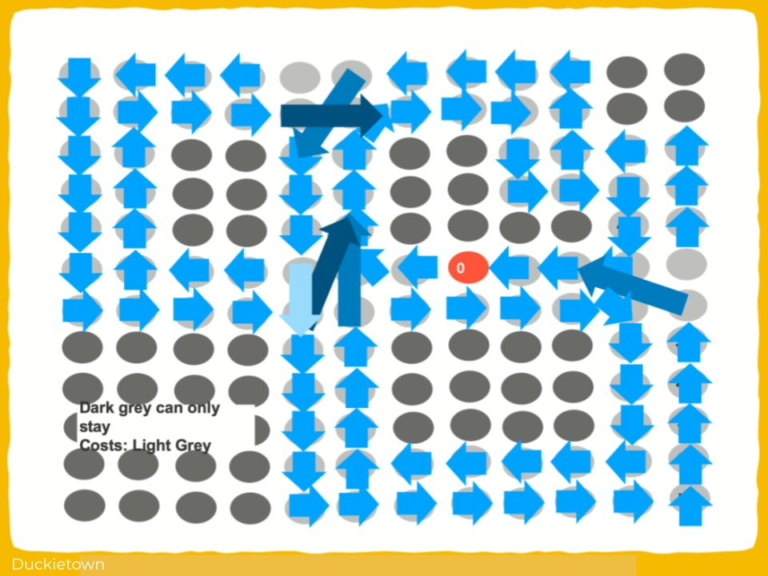

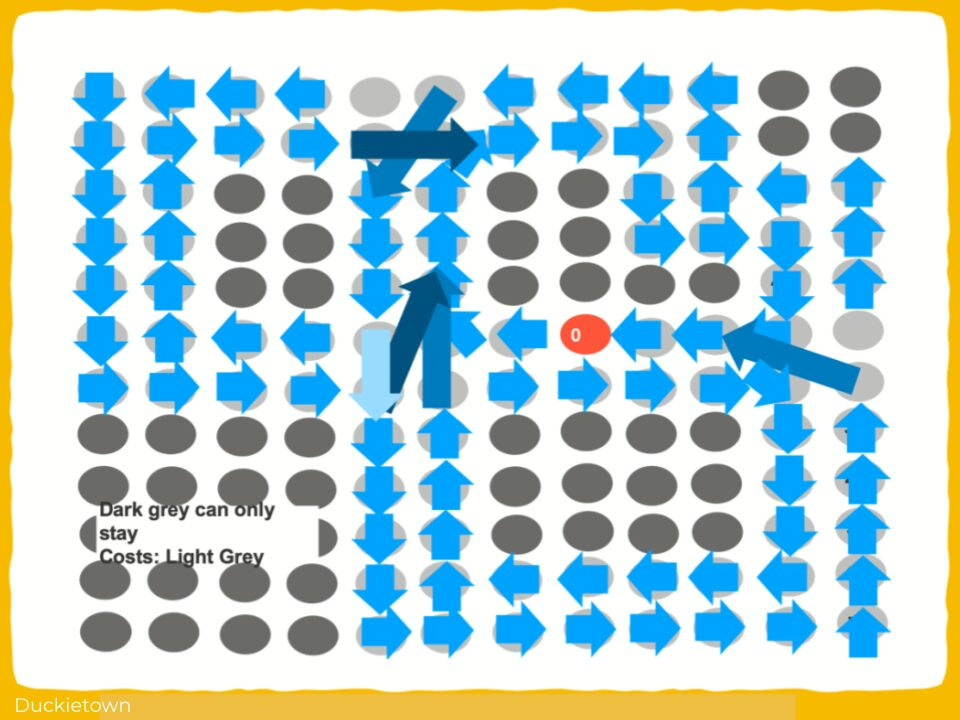

Specifically, it proposed a Sim-to-Sim-to-Real evaluation pipeline in which deep reinforcement learning models are trained in CARLA (high fidelity), evaluated in Gym Duckietown (low fidelity), and finally deployed on a physical Duckiebot.

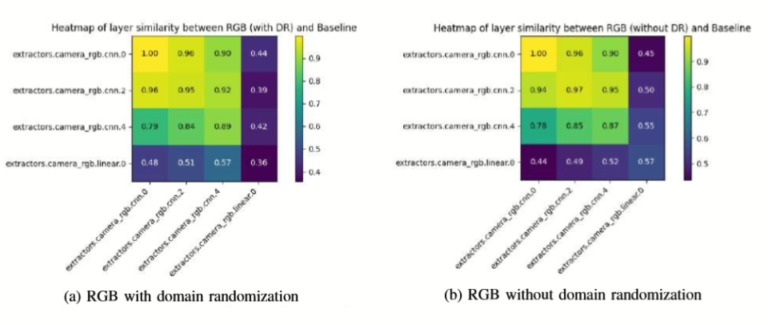

The objective is to determine to what extent performance in Gym Duckietown predicts real-world performance, and whether similarity in learned feature representations can serve as an indicator of successful Sim-to-Real transfer before deployment in the real world.
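As an illustration of what "similarity in learned feature representations" could mean in practice, the sketch below computes linear Centered Kernel Alignment (CKA) between two sets of activations. This is an assumption made for illustration: the text above does not say which similarity measure the study used, and the function name and array shapes are hypothetical. The two inputs would be activations of the same network layer on matched batches of frames from two domains.

```python
import numpy as np


def linear_cka(x: np.ndarray, y: np.ndarray) -> float:
    """Linear CKA between two feature matrices of shape (n_samples, n_features).

    Rows must correspond to the same inputs (or matched frames) in both
    matrices; the number of feature columns may differ.
    """
    if x.shape[0] != y.shape[0]:
        raise ValueError("both matrices need the same number of samples")
    # Centre every feature column so the score ignores constant offsets.
    x = x - x.mean(axis=0, keepdims=True)
    y = y - y.mean(axis=0, keepdims=True)
    # Squared Frobenius norm of the cross-covariance, normalised by the
    # Frobenius norms of the two self-covariances.
    cross = np.linalg.norm(y.T @ x, ord="fro") ** 2
    norm_x = np.linalg.norm(x.T @ x, ord="fro")
    norm_y = np.linalg.norm(y.T @ y, ord="fro")
    return float(cross / (norm_x * norm_y))
```

The score lies between 0 and 1 and is unchanged by rotating or uniformly rescaling either feature space, which makes it a convenient candidate when comparing activations recorded in different simulators. Whether a high score actually predicts successful transfer is the empirical question the study addresses.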

Sim-to-Real Transfer for Small Autonomous Vehicles - highlights

The challenges and the approach

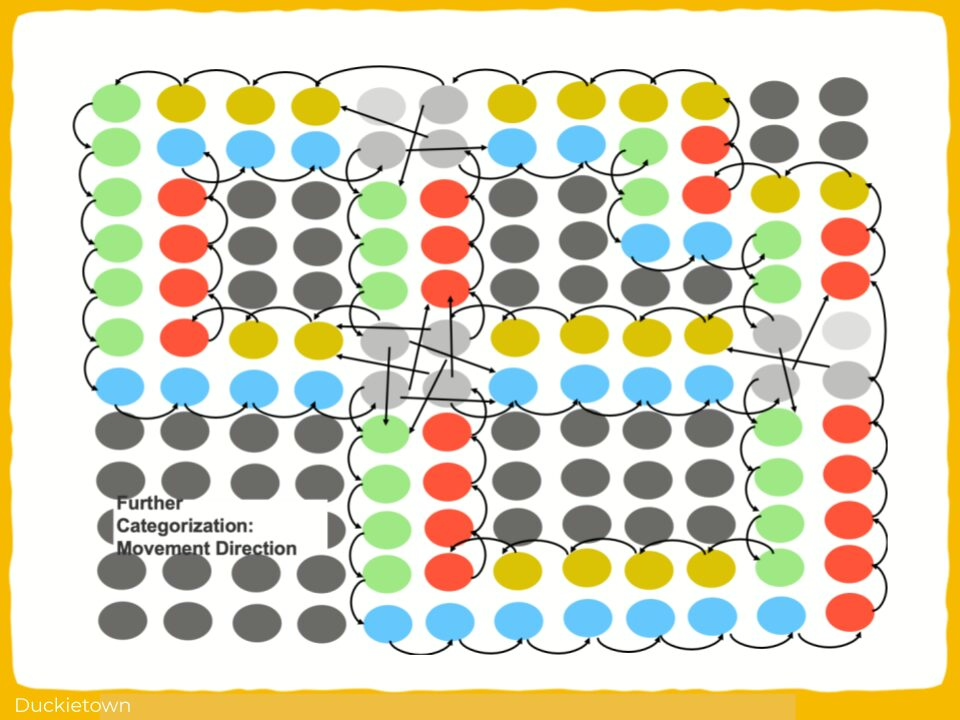

A core challenge addressed in this work is the inherent difficulty in ensuring that solutions developed in simulation remain effective when deployed on physical hardware.

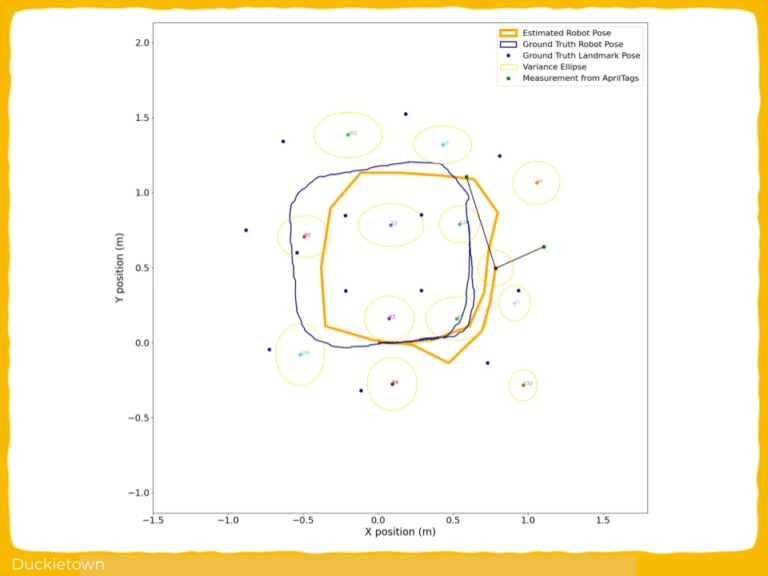

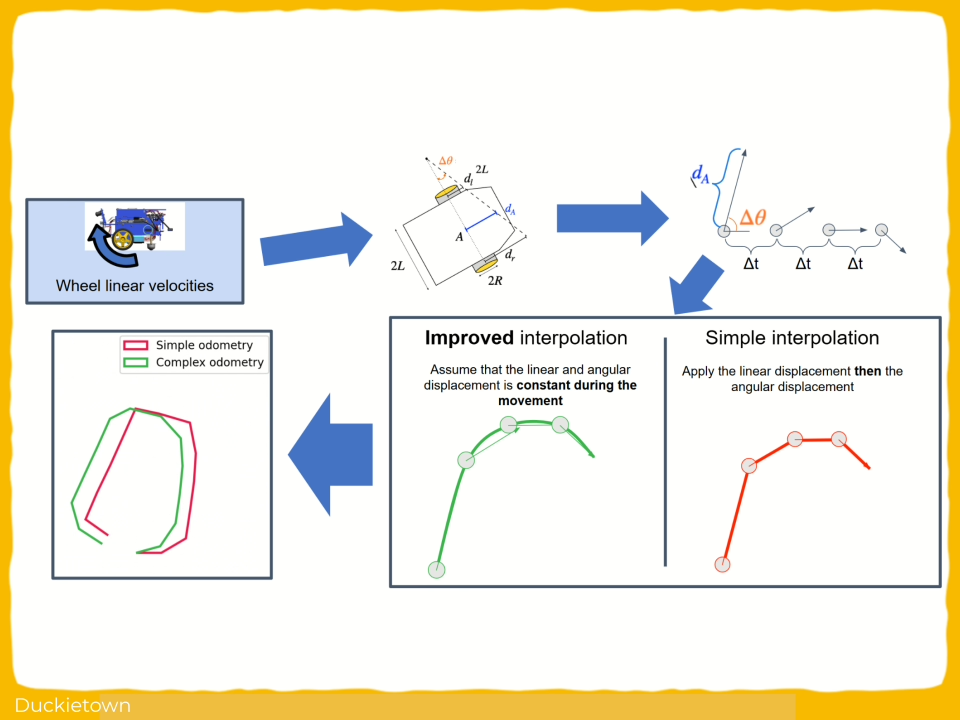

Discrepancies between simulated environments and real-world conditions can cause significant performance degradation, particularly for perception and control algorithms.

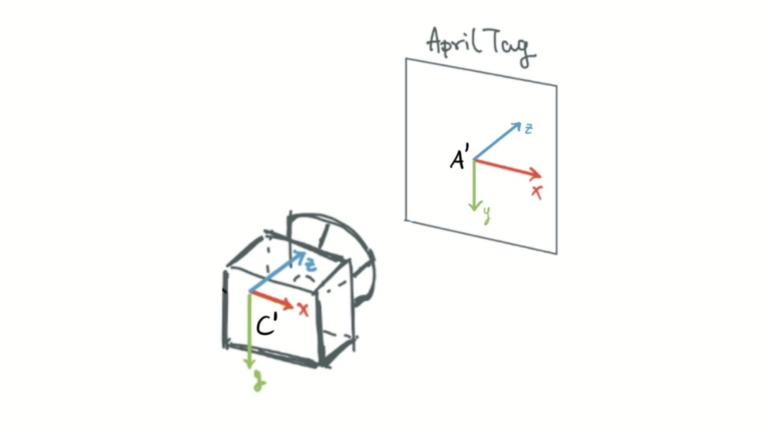

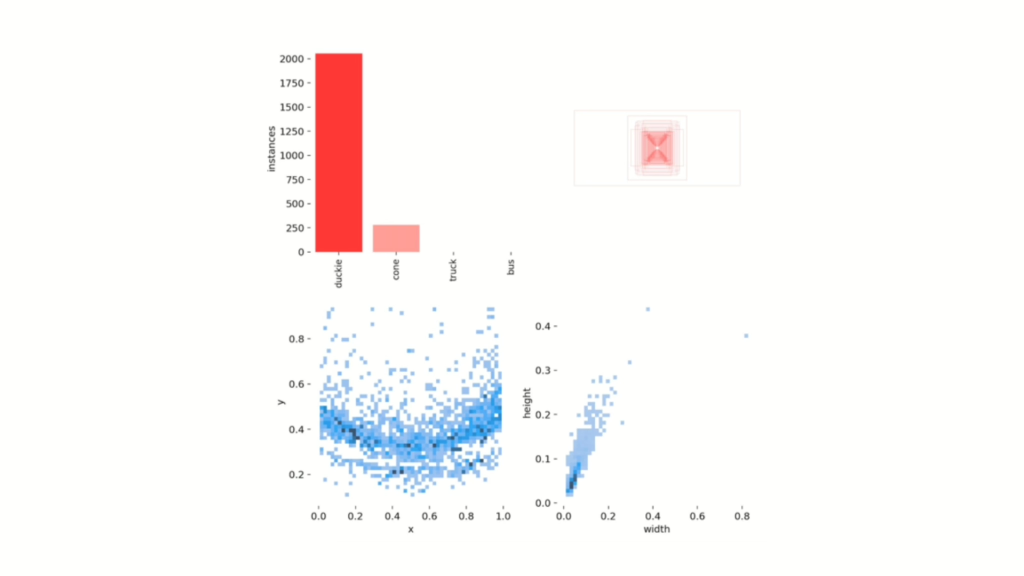

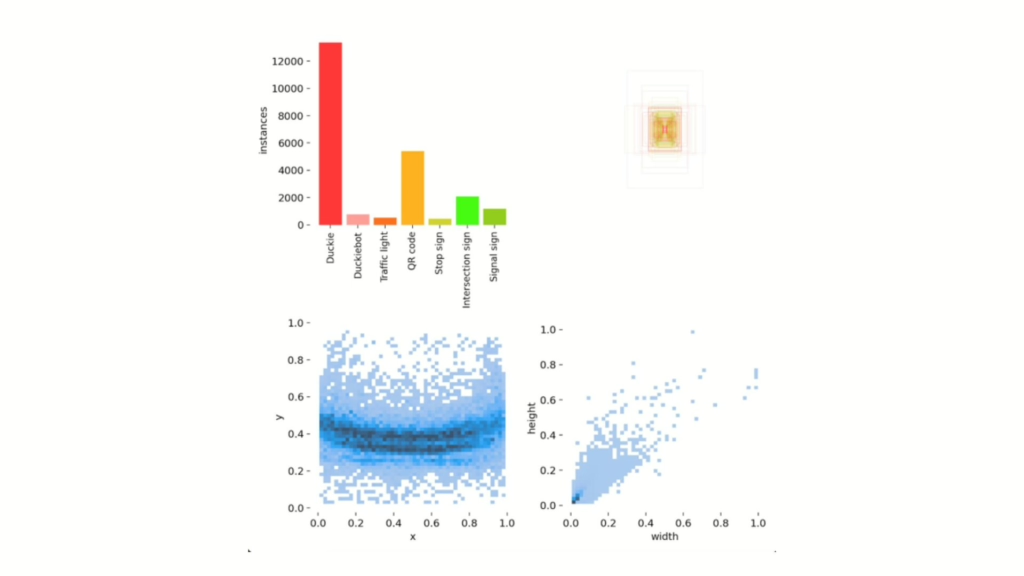

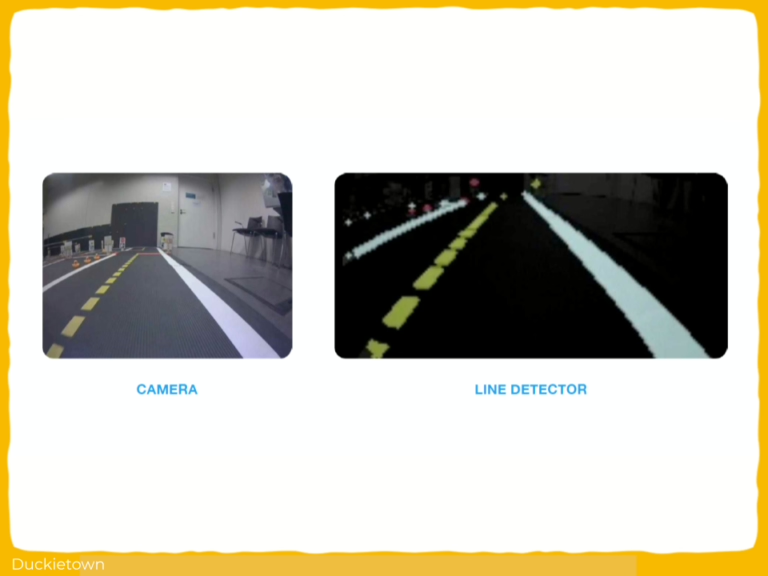

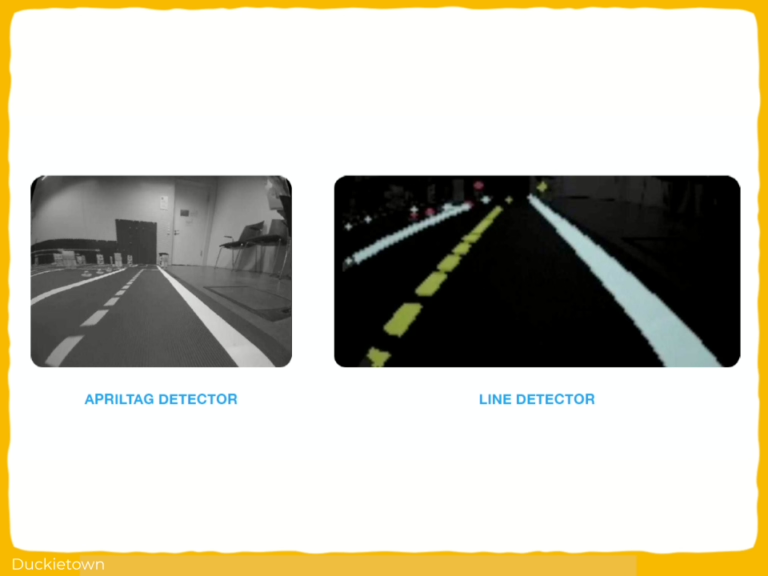

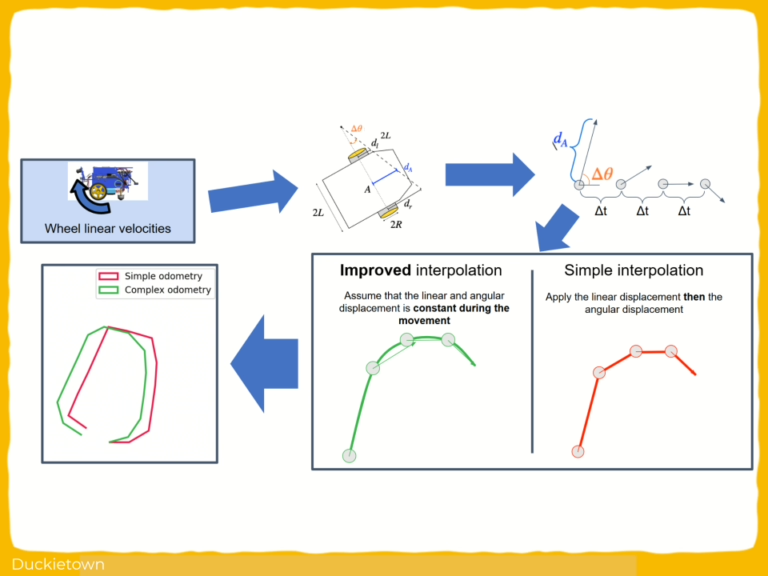

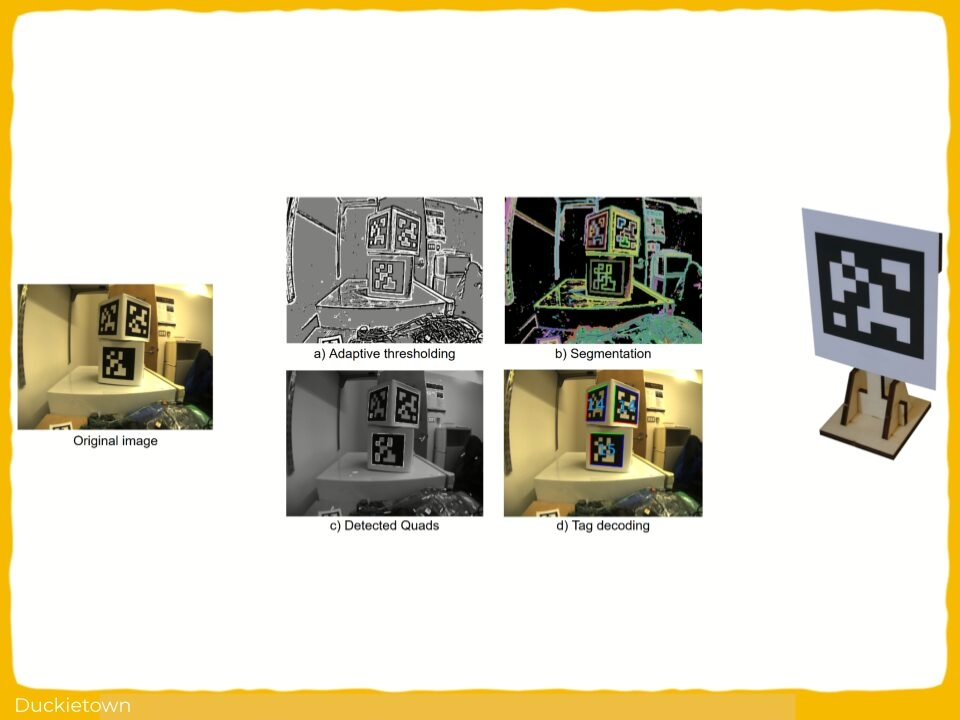

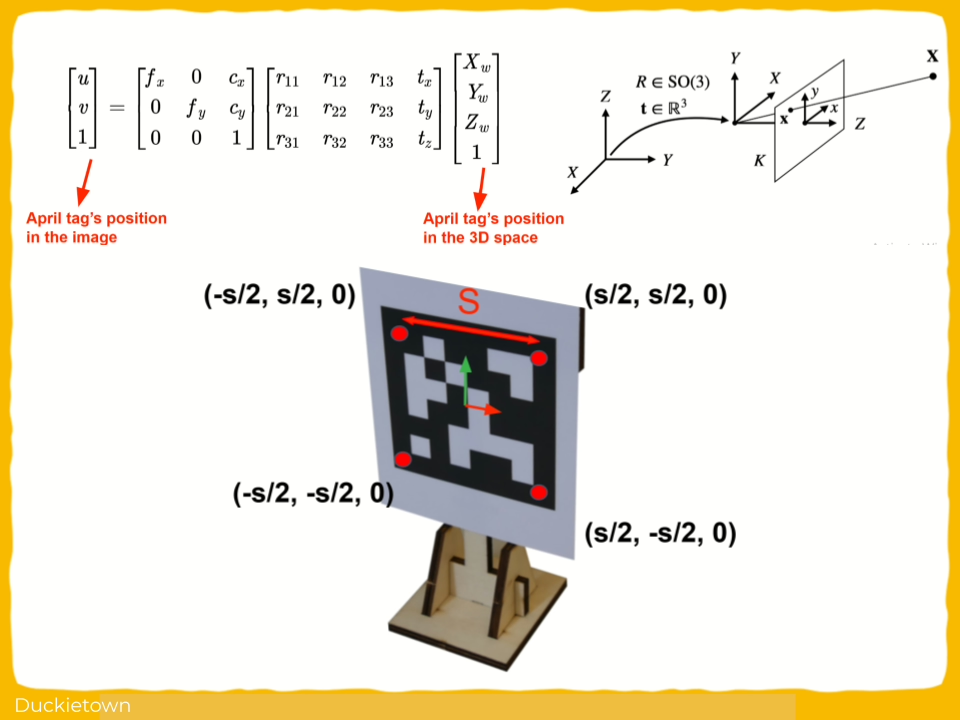

These challenges include visual domain shifts, differences in dynamics and sensor noise, and the need for robust generalization across environments. The study explored how intermediate simulation platforms can help anticipate real-world performance and provides insight into limitations of current sim-to-real transfer approaches, reinforcing the importance of iterative testing across simulation and physical testbeds.

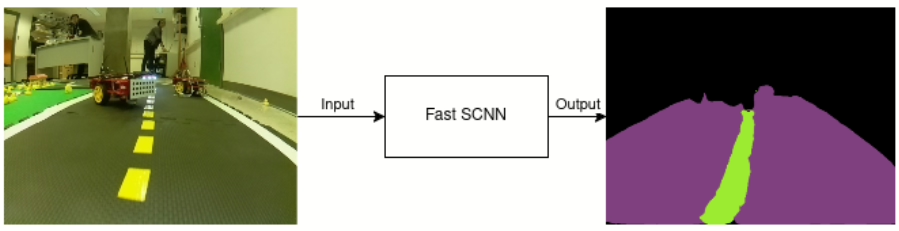

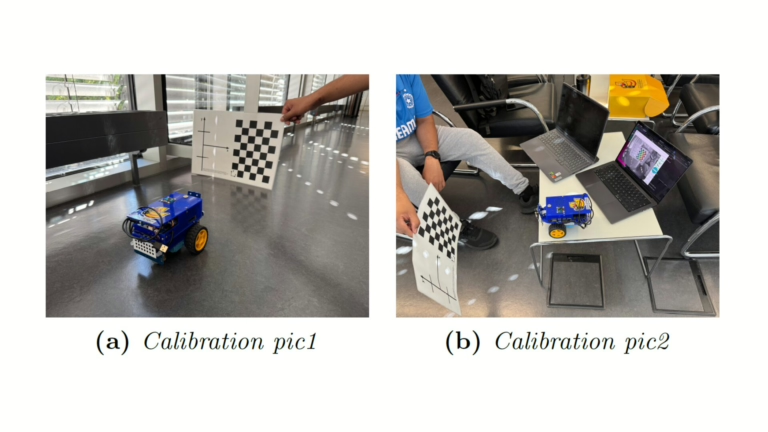

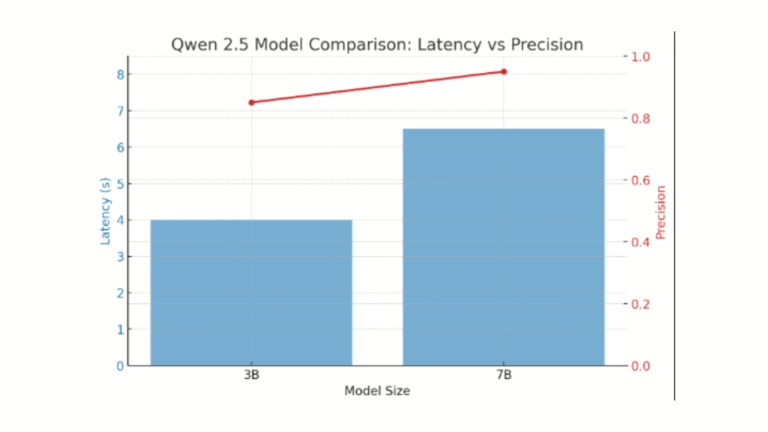

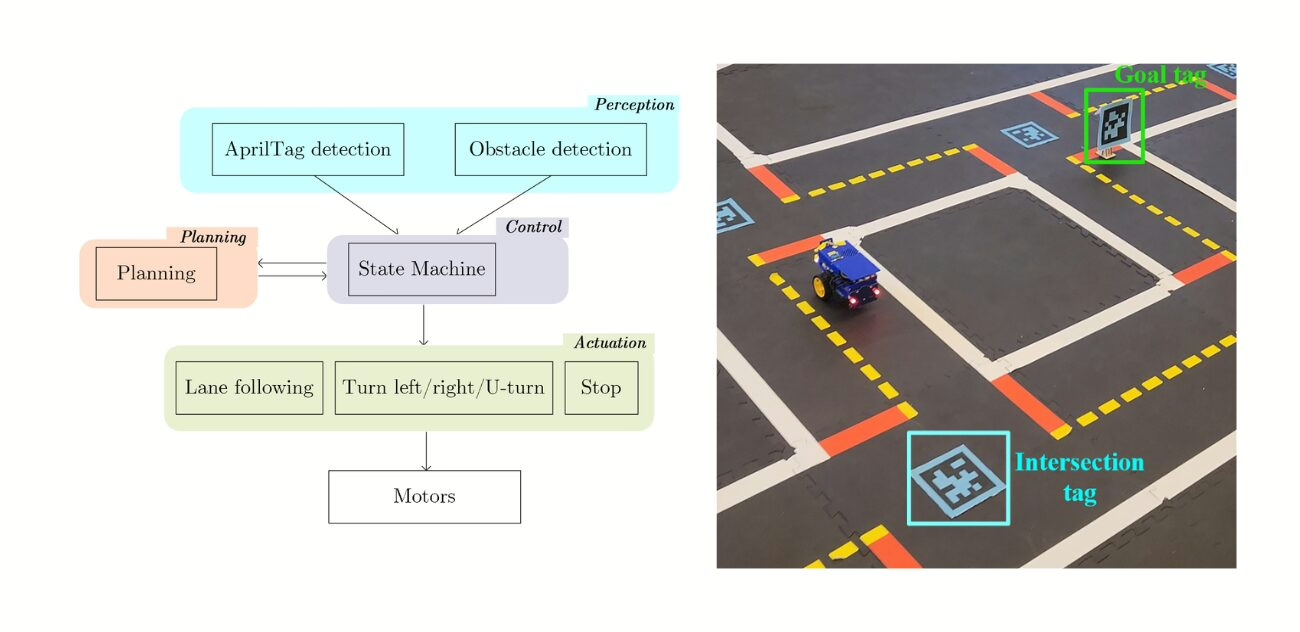

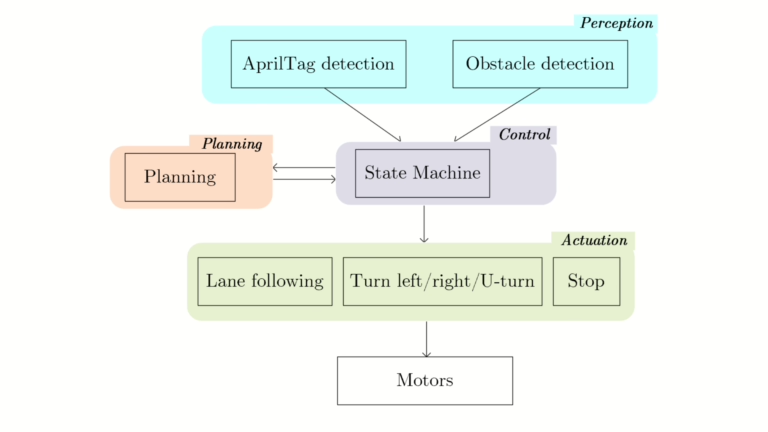

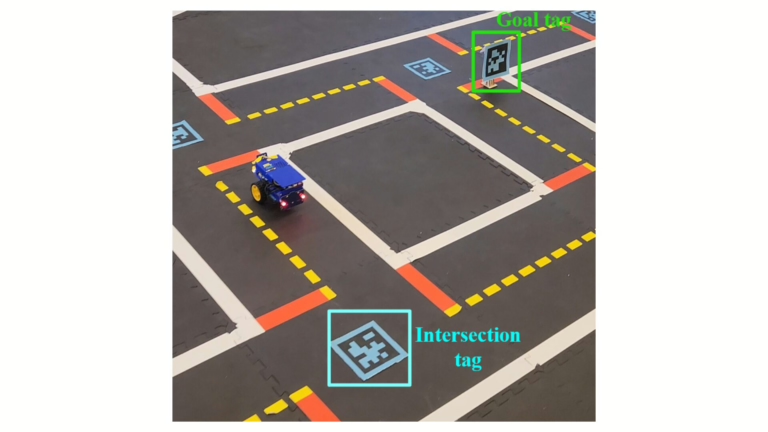

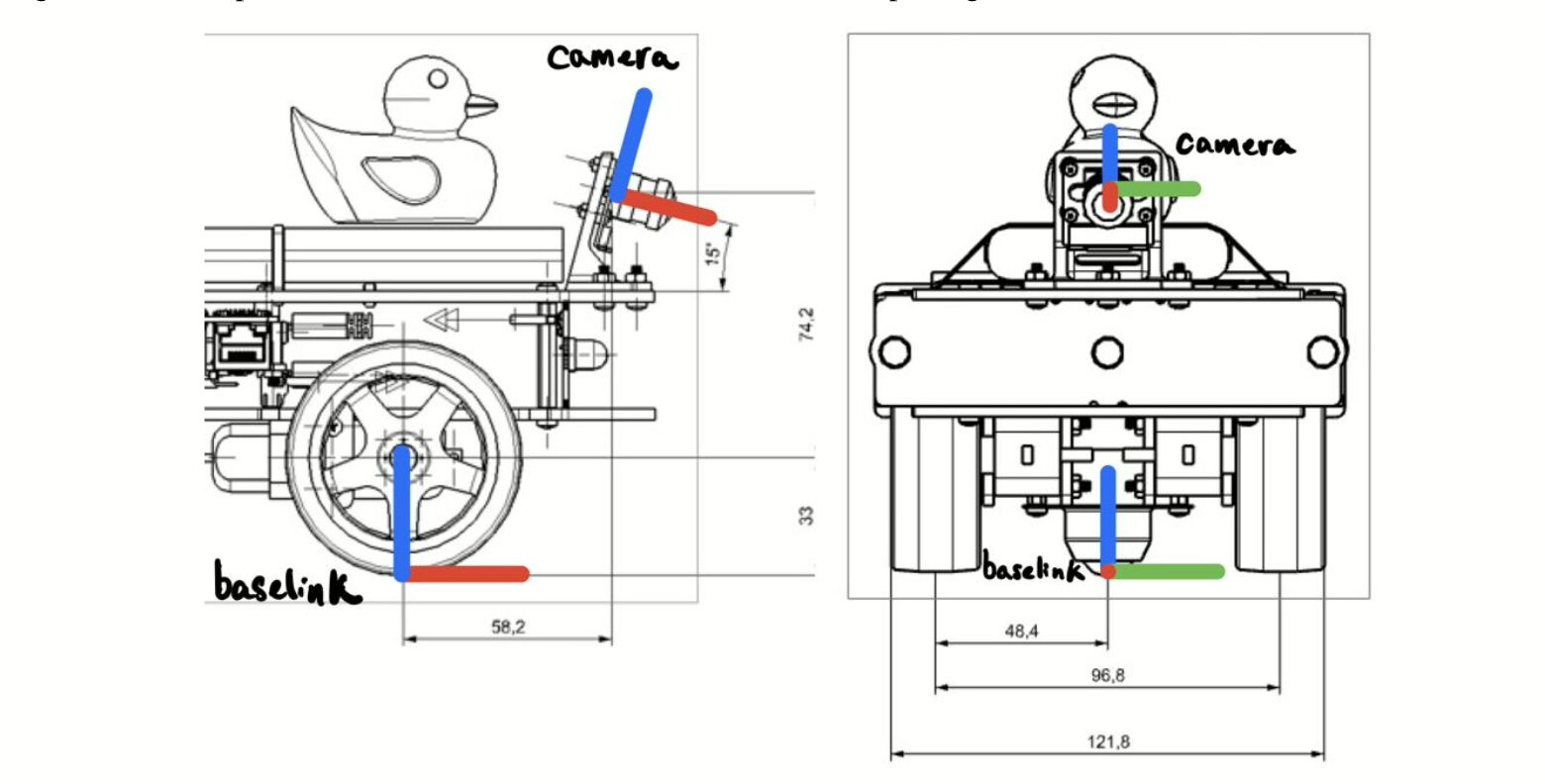

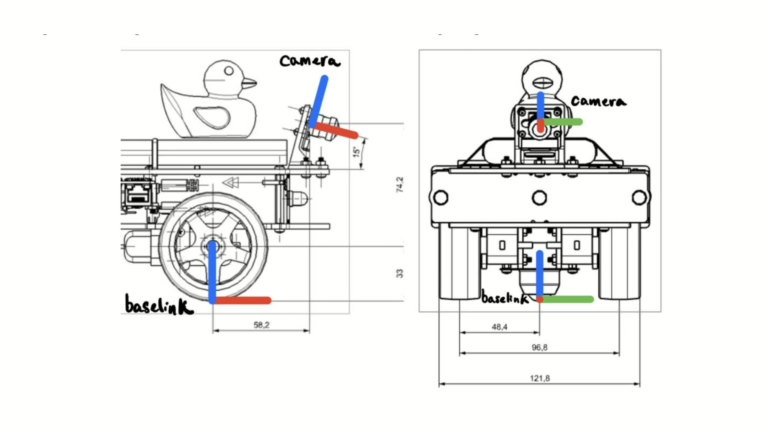

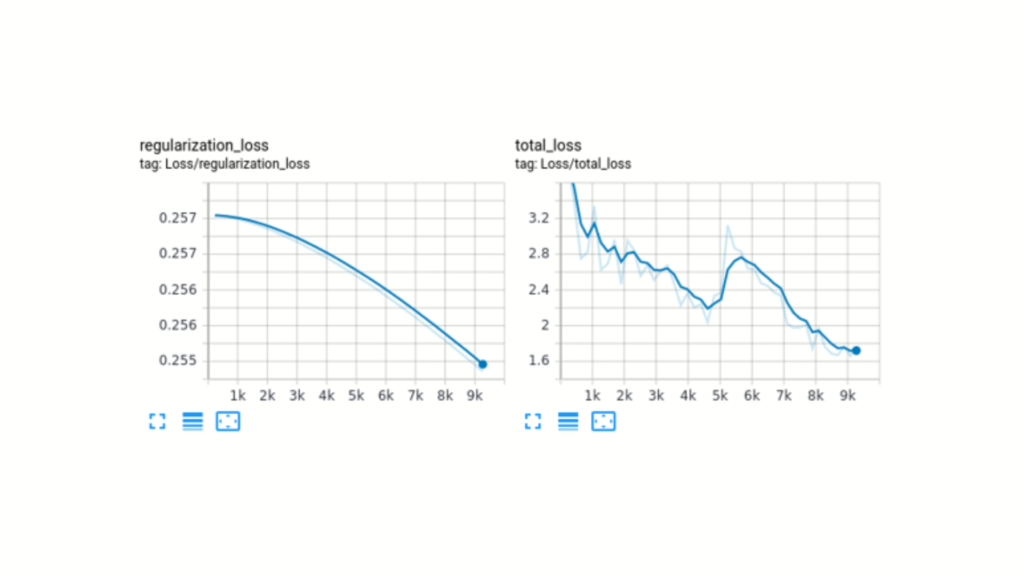

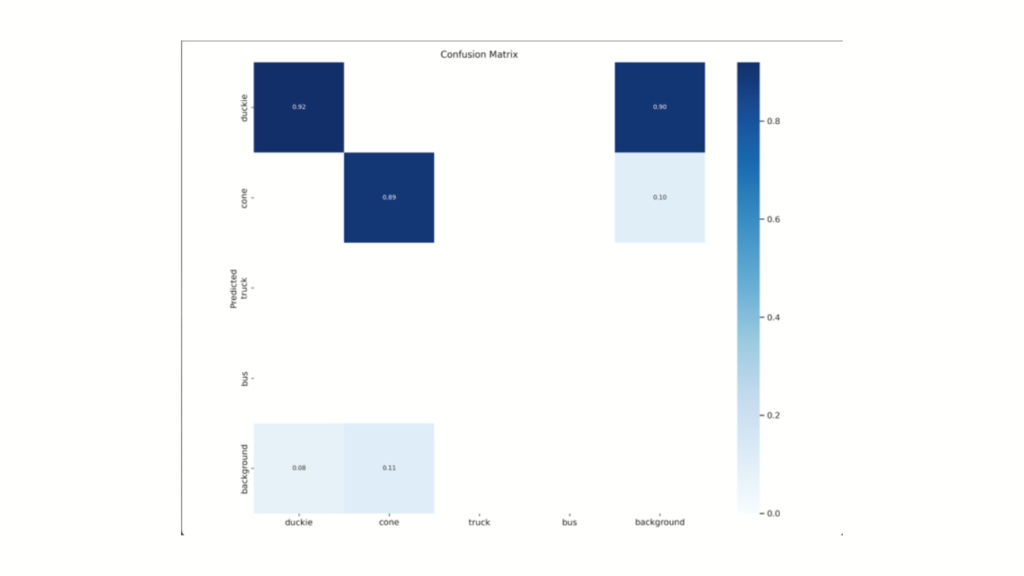

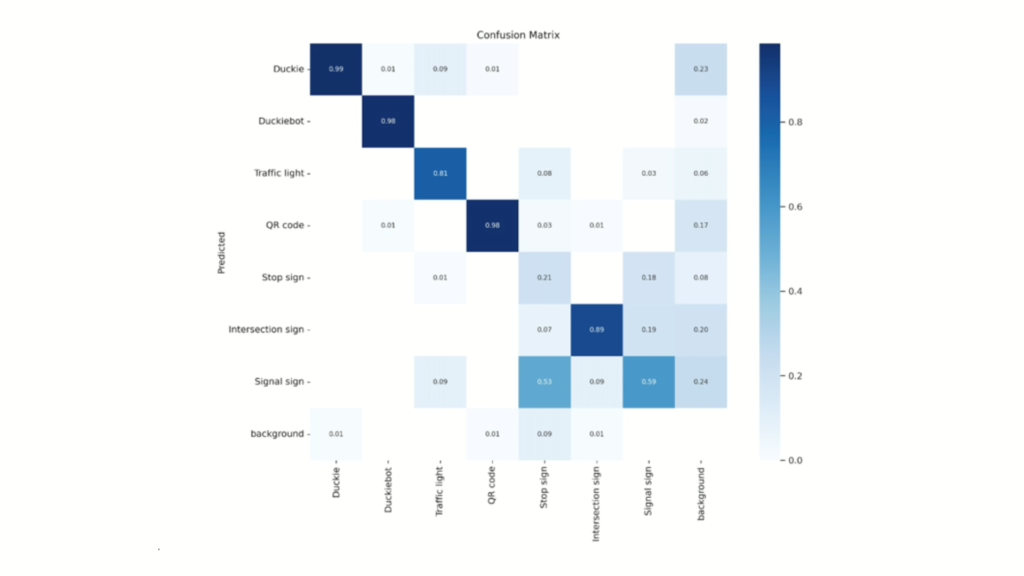

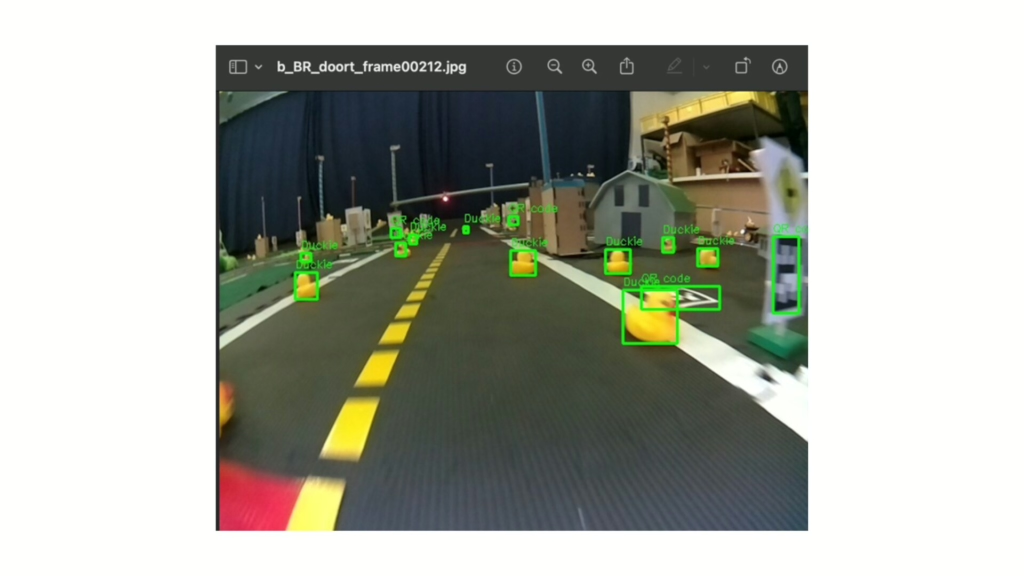

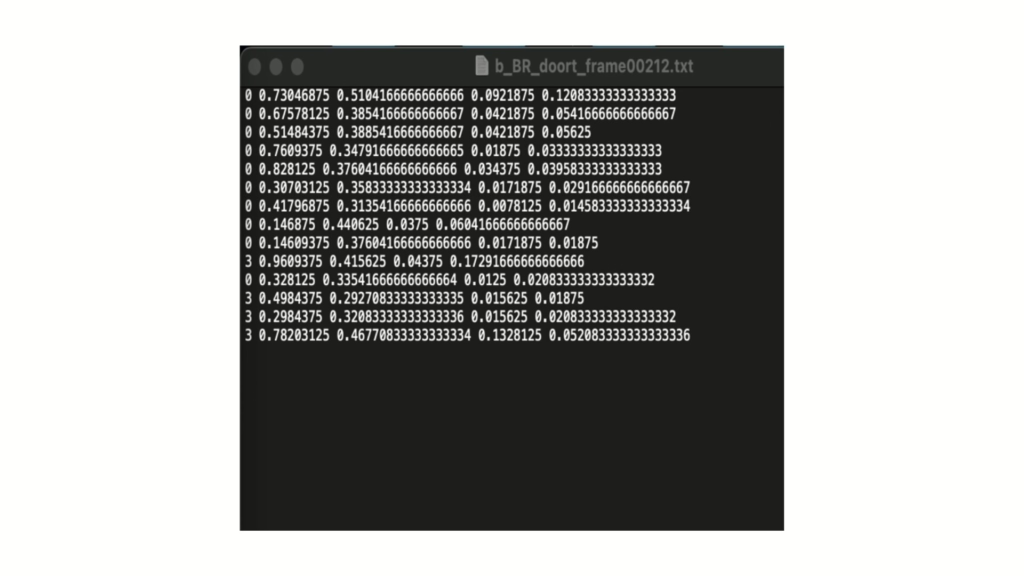

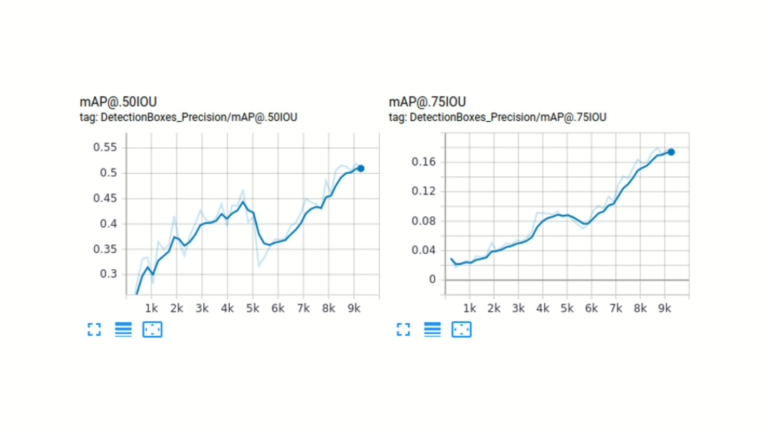

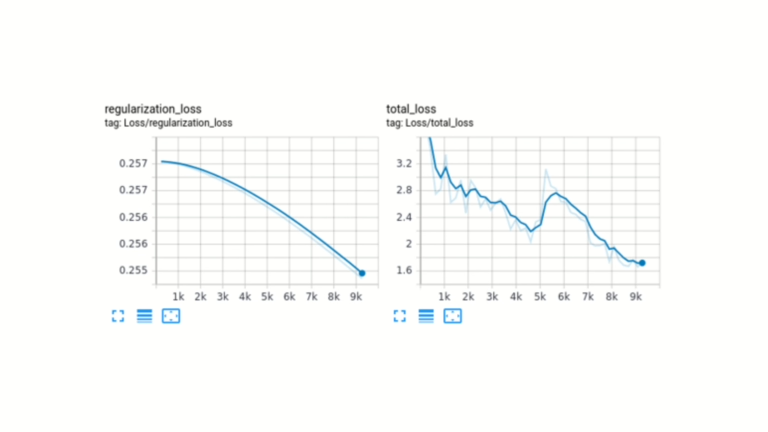

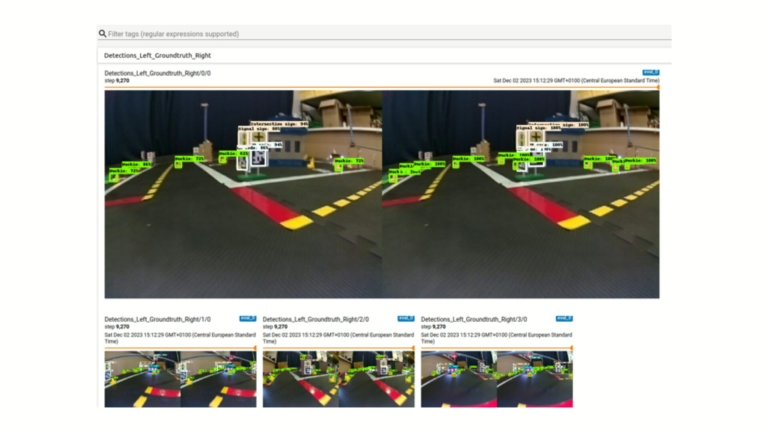

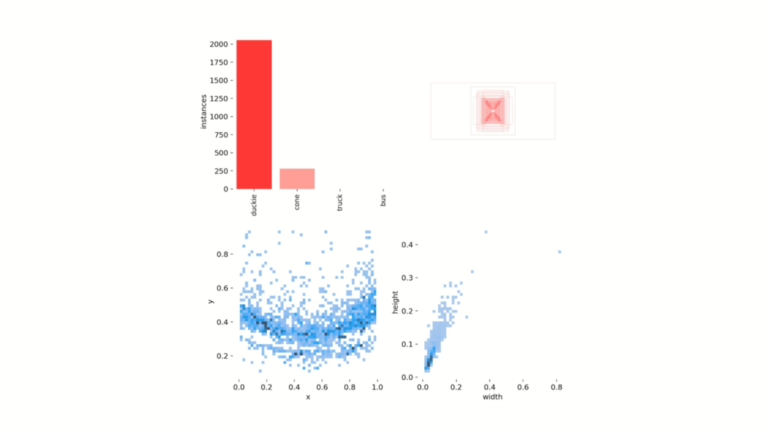

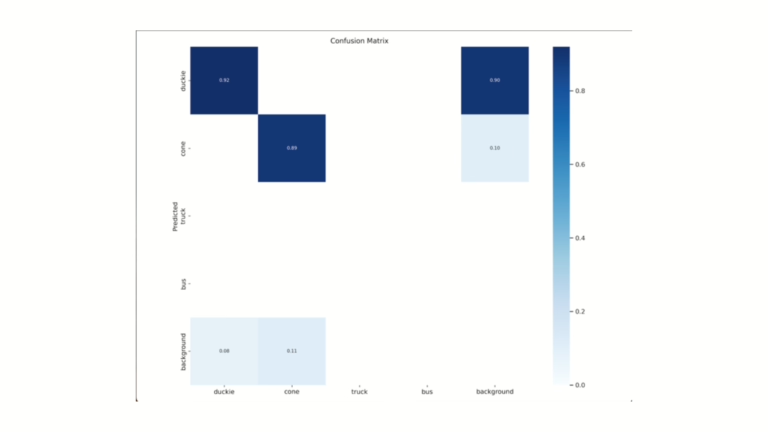

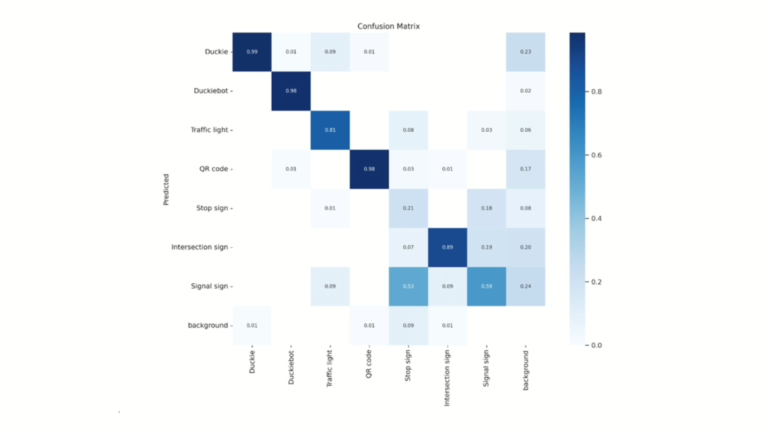

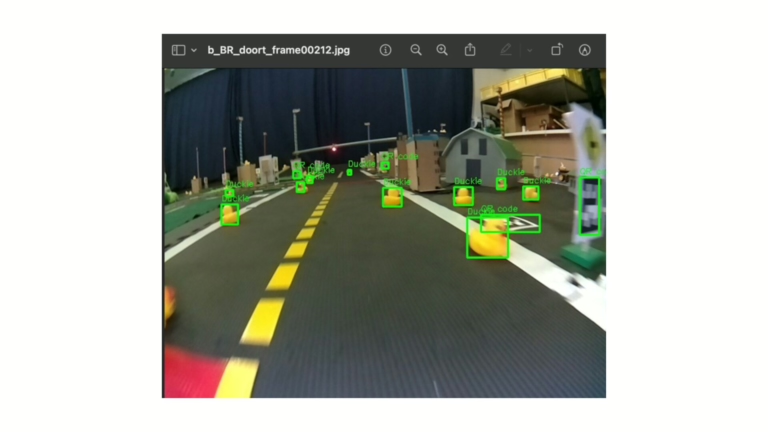

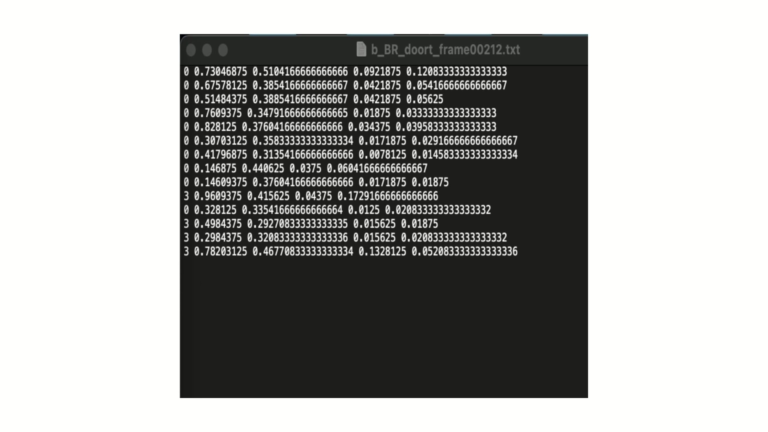

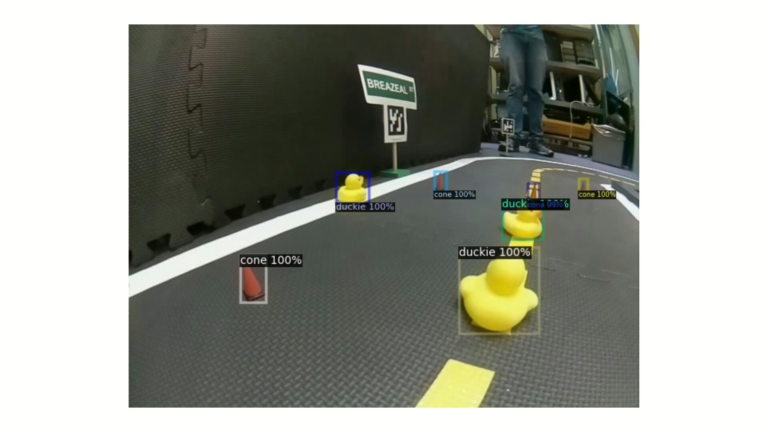

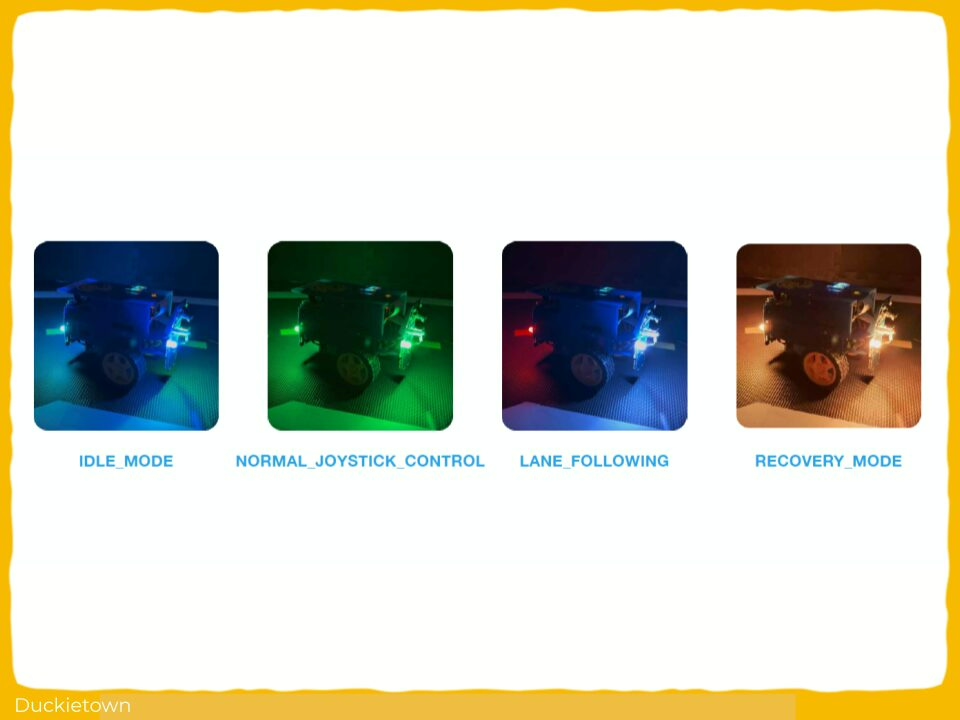

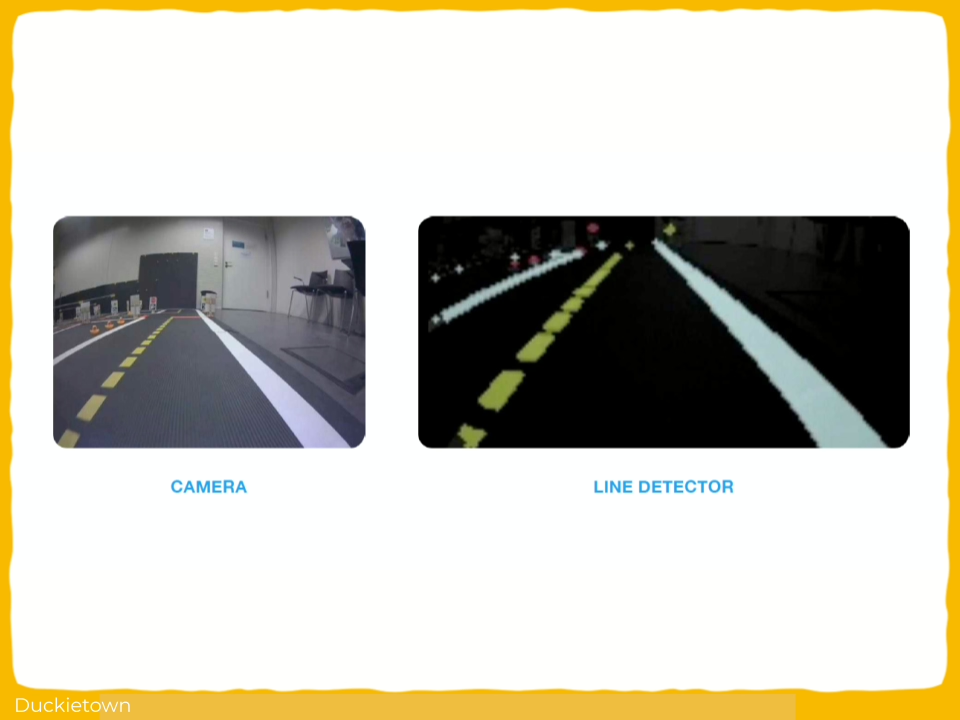

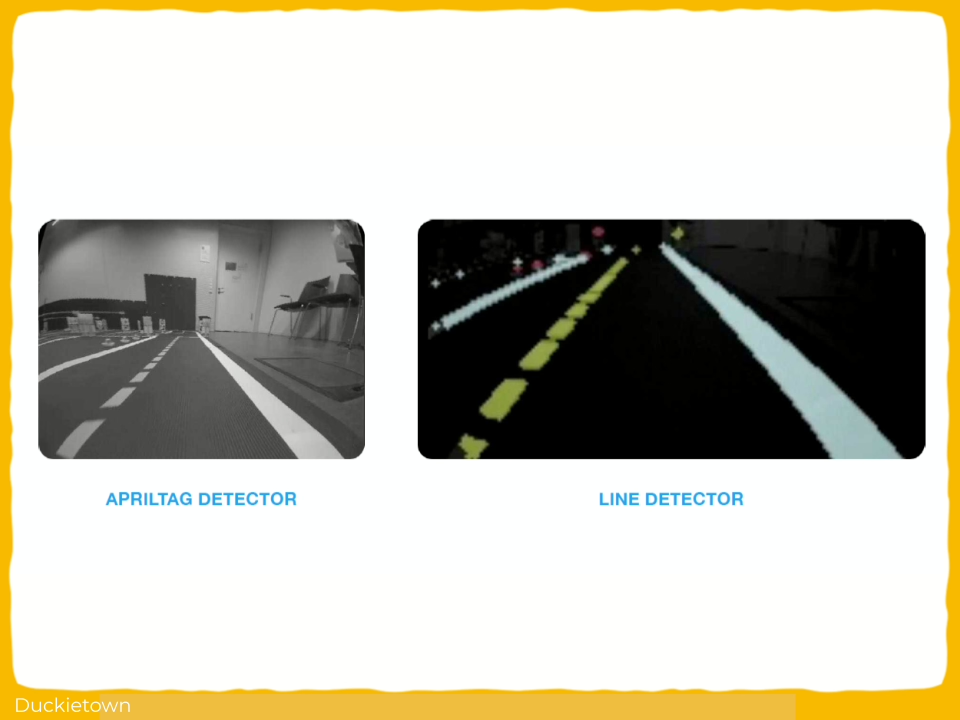

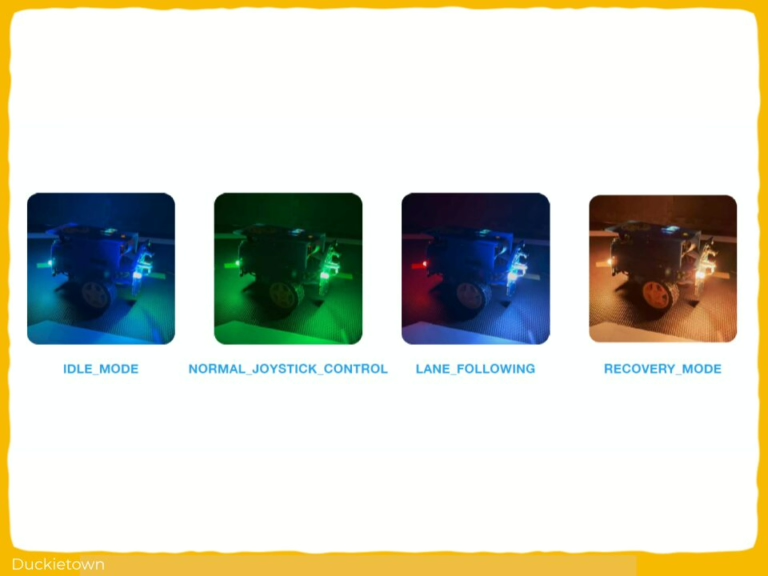

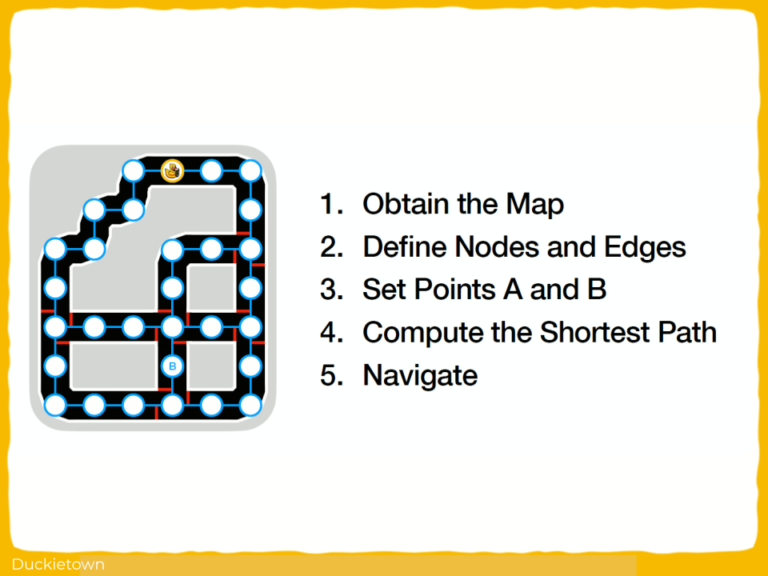

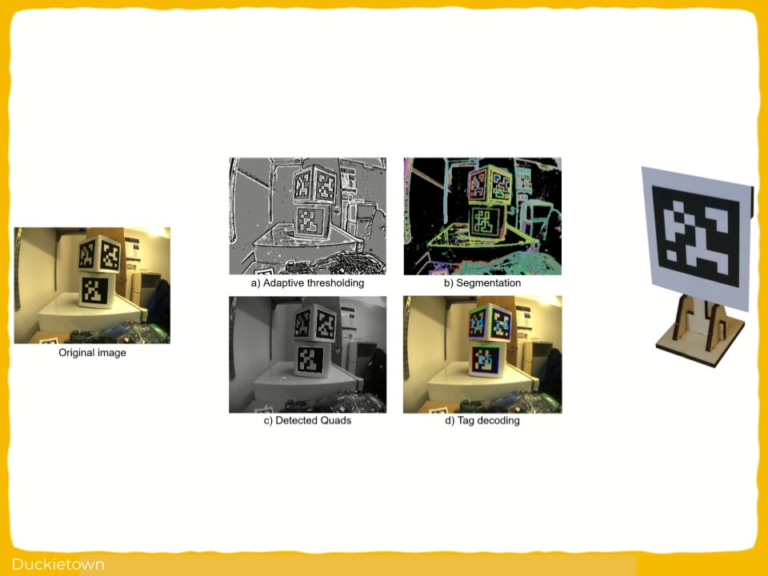

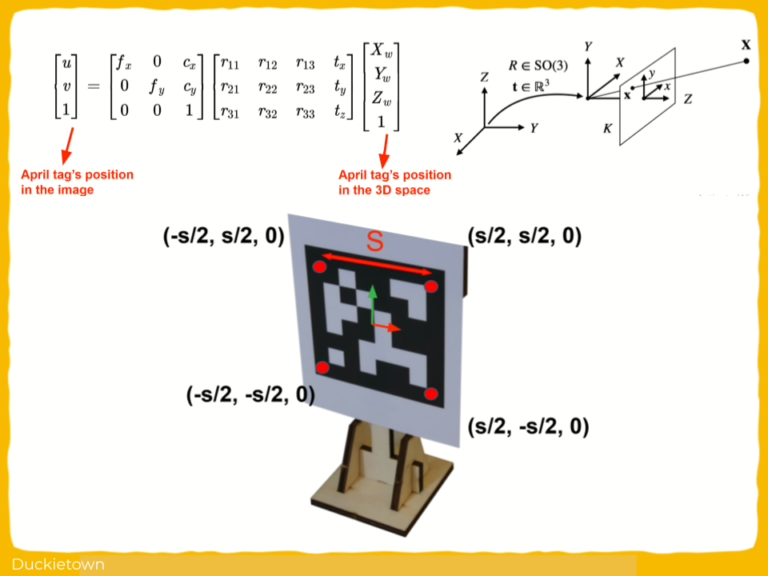

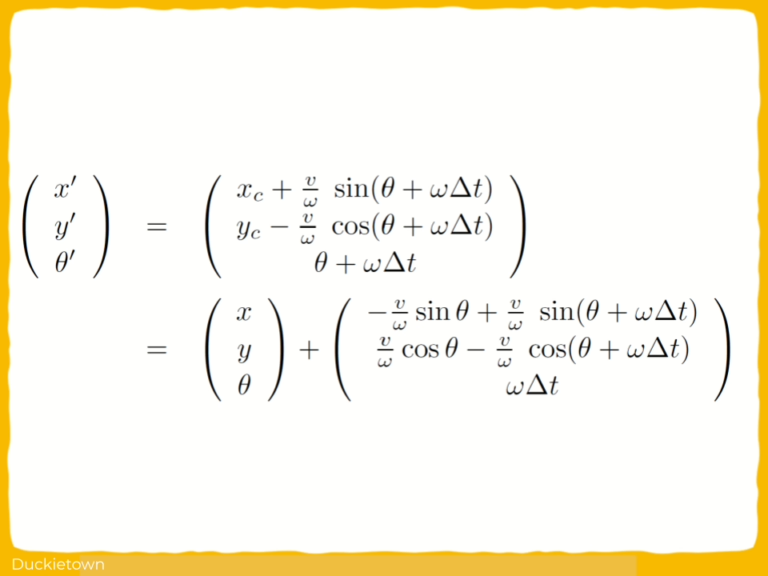

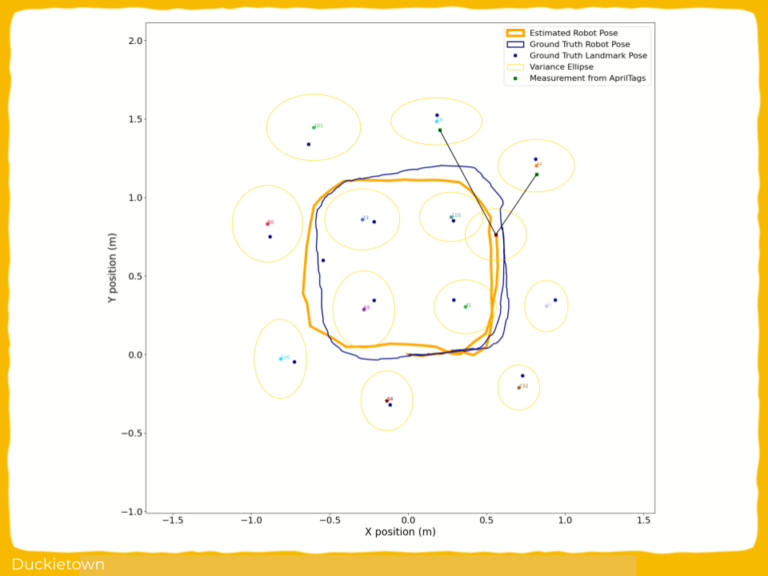

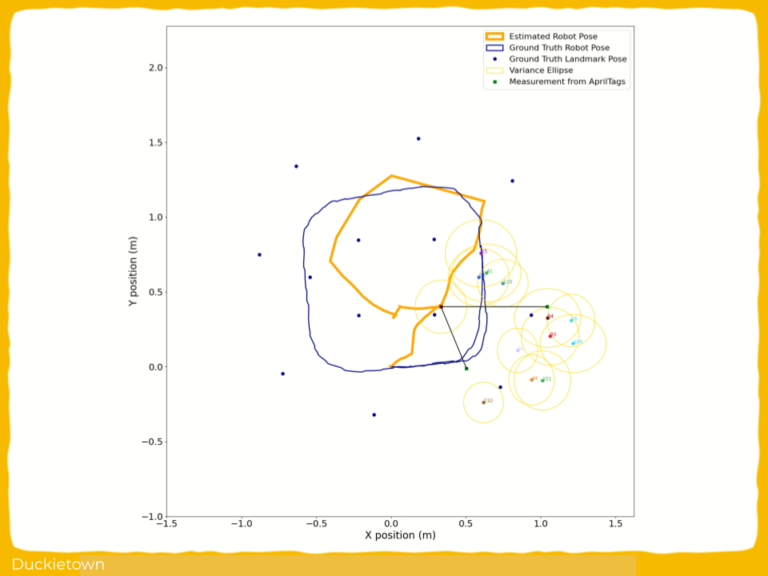

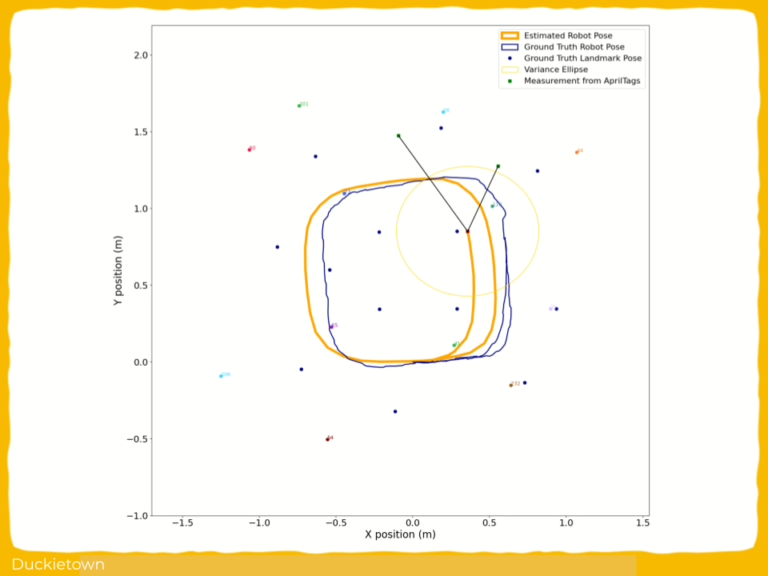

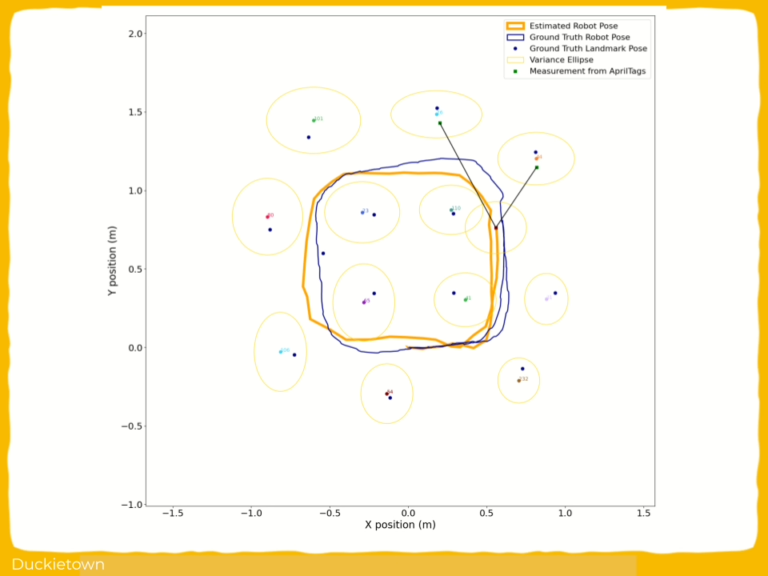

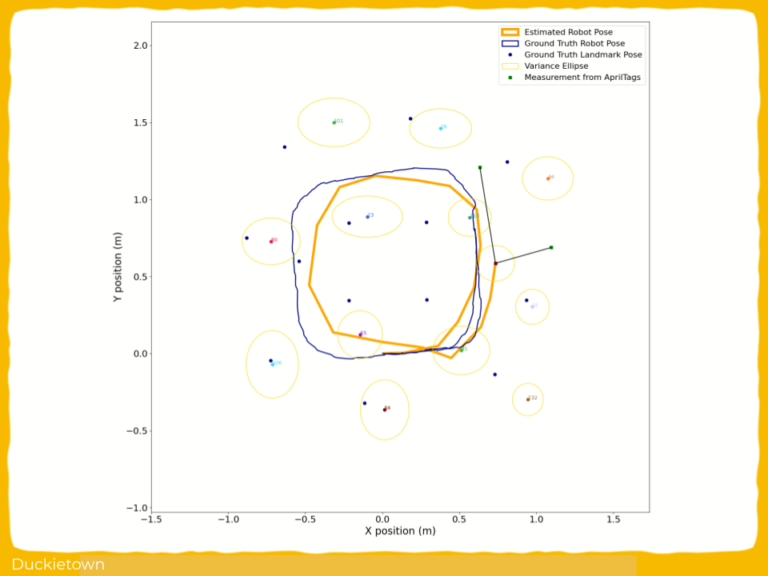

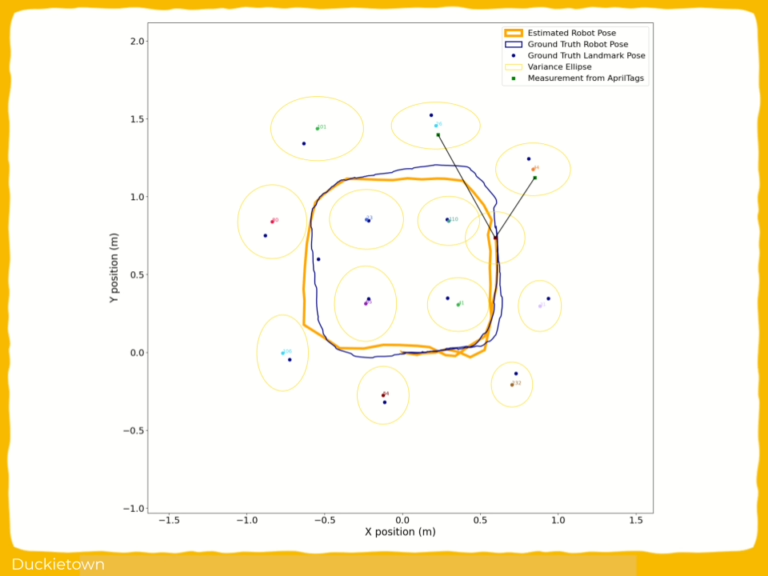

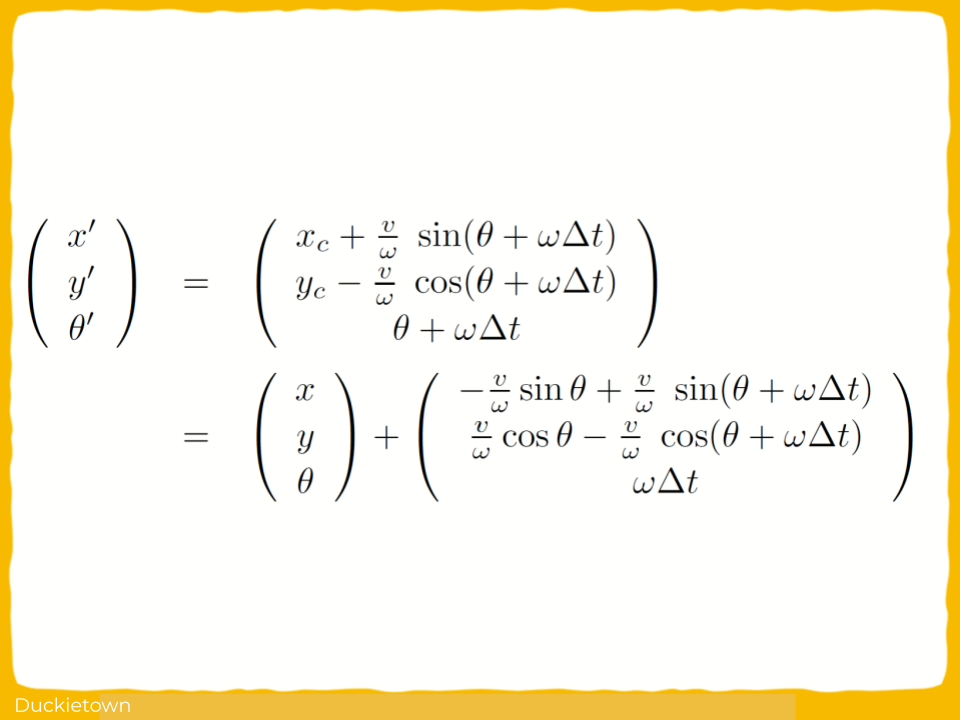

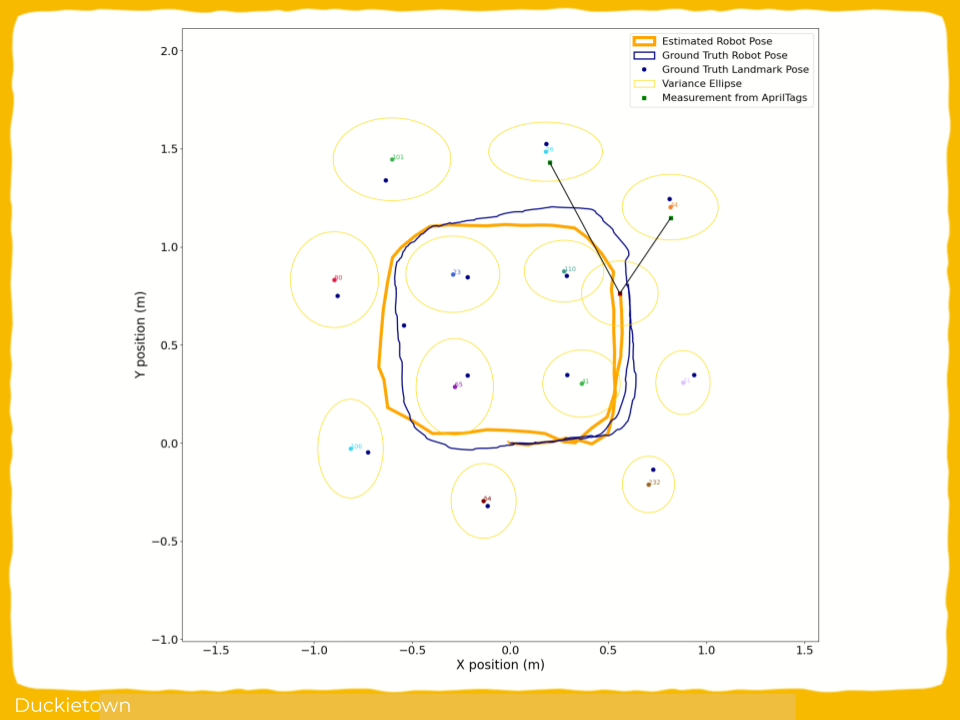

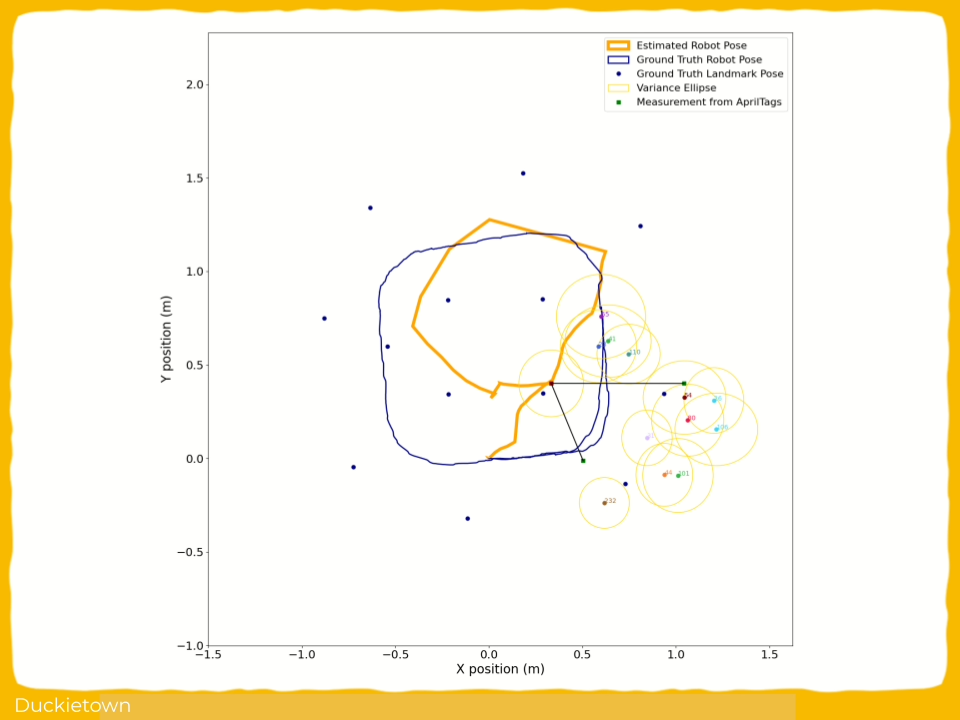

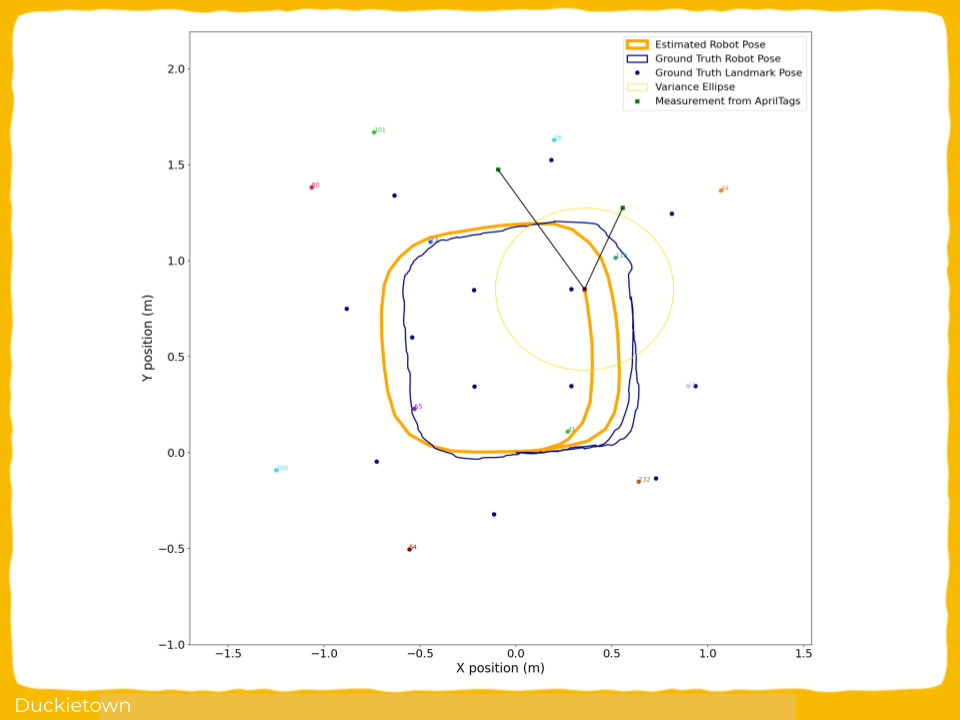

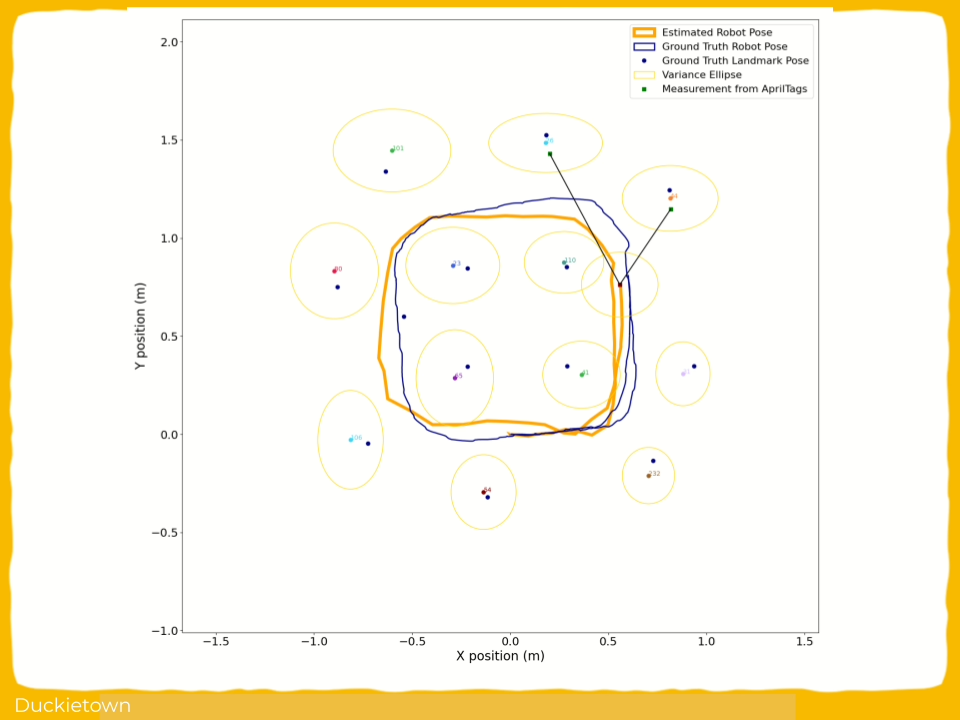

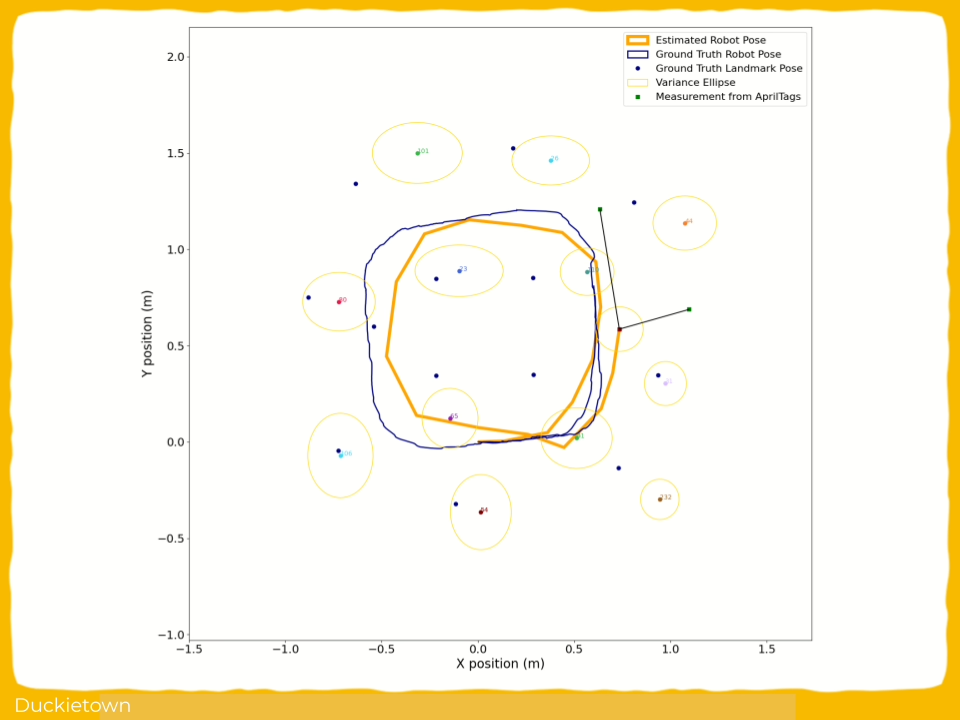

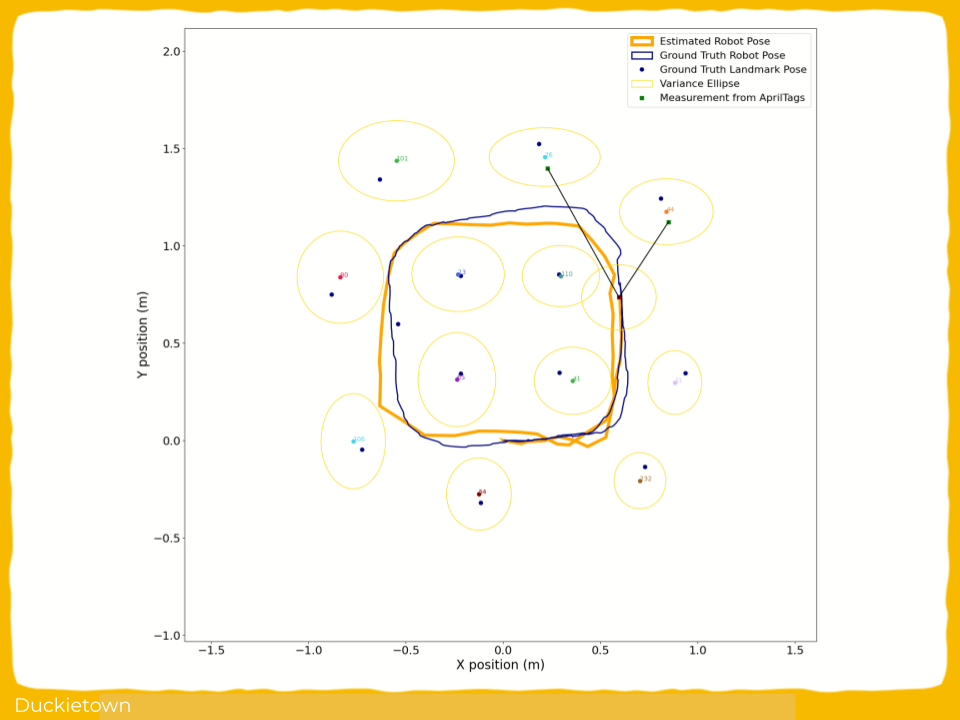

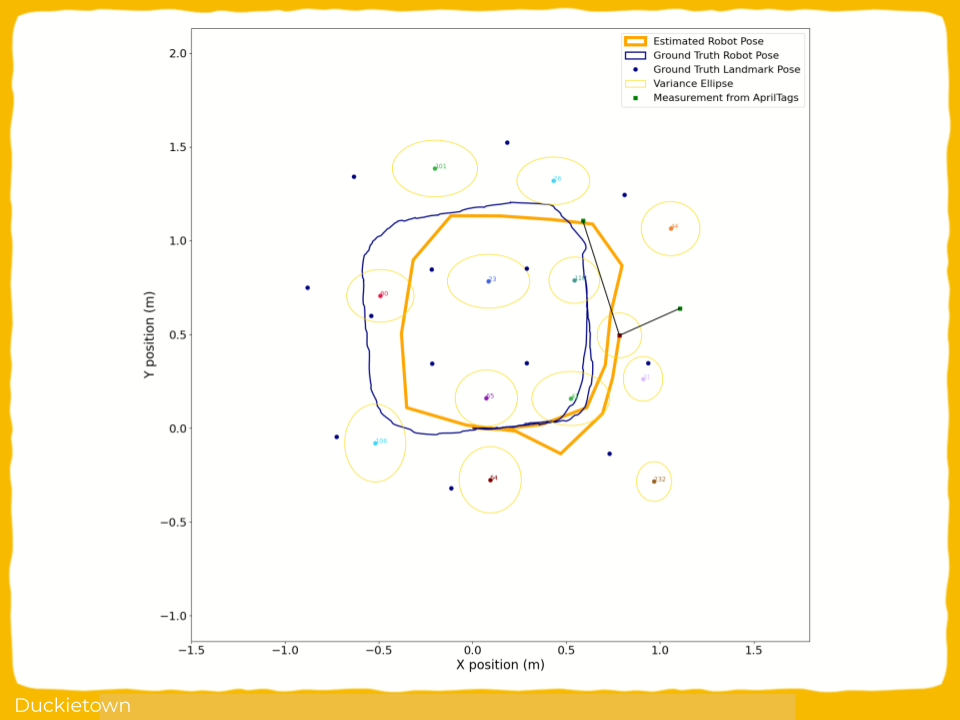

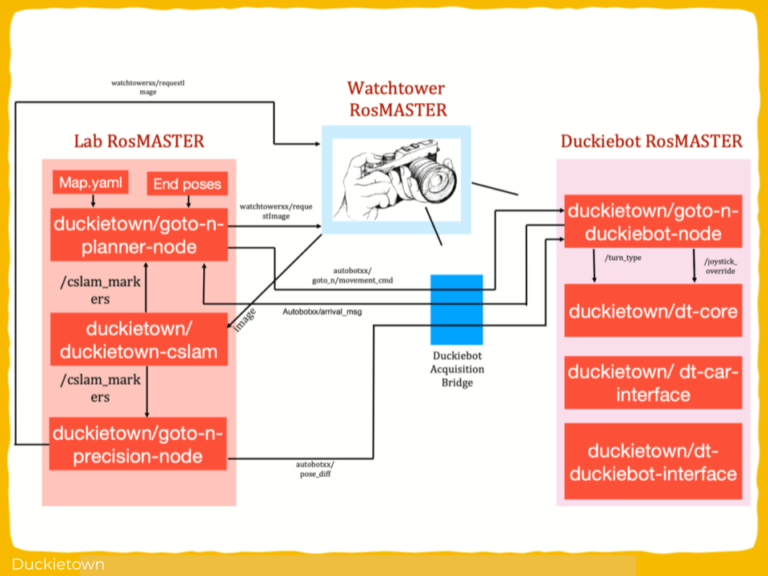

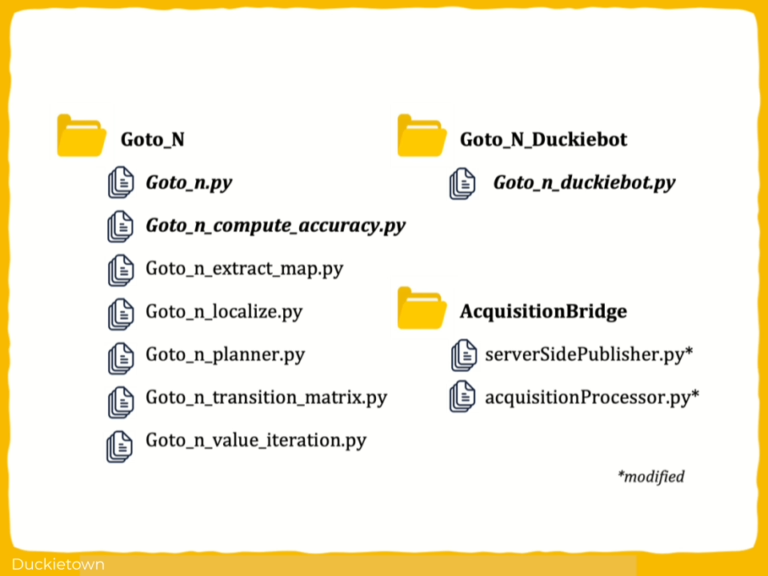

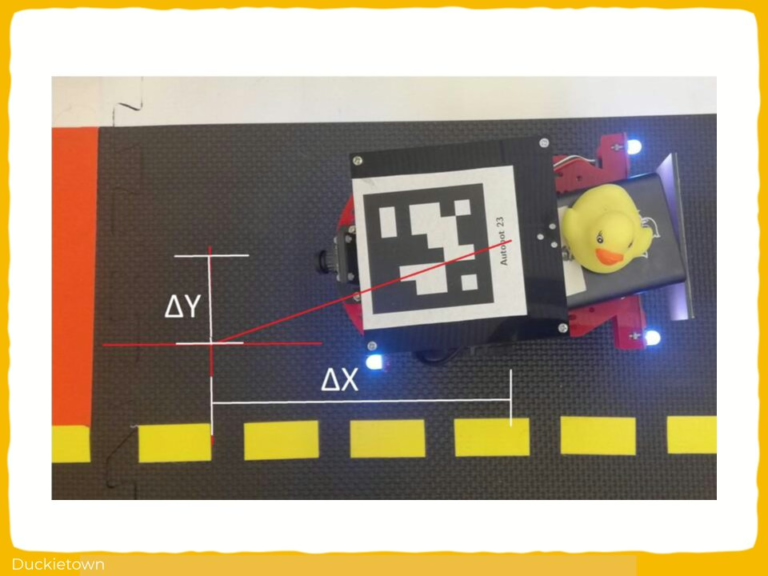

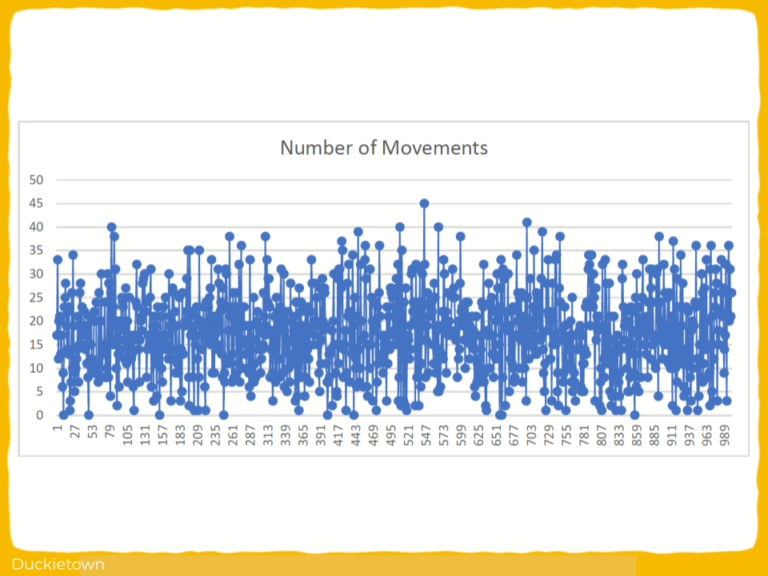

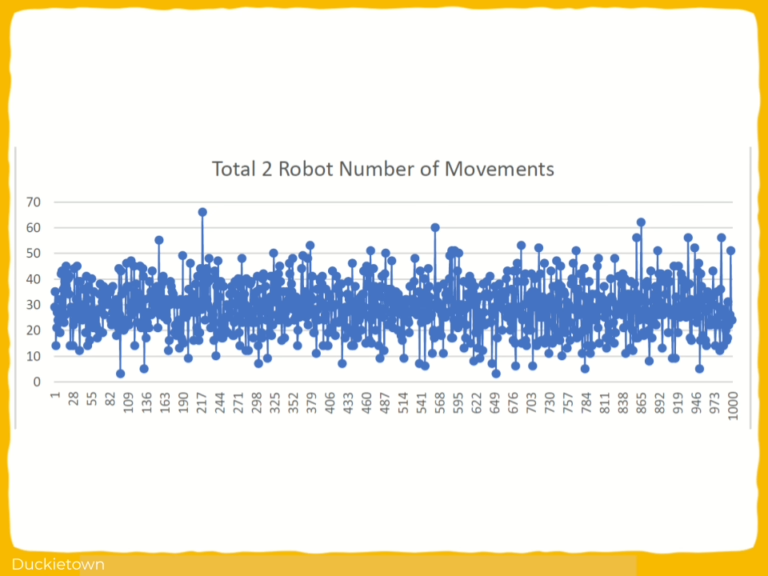

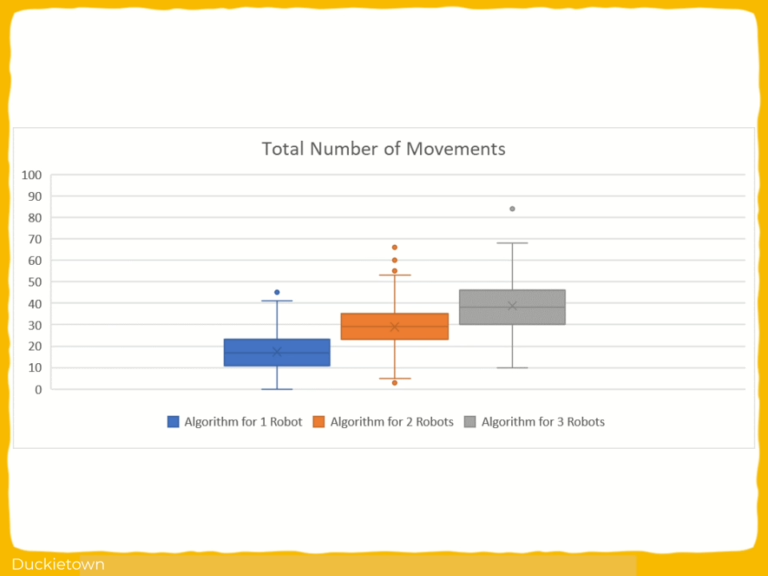

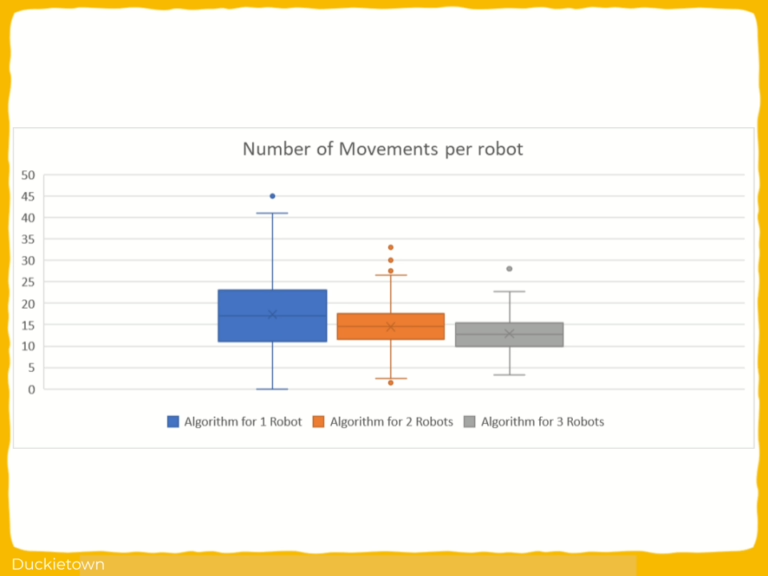

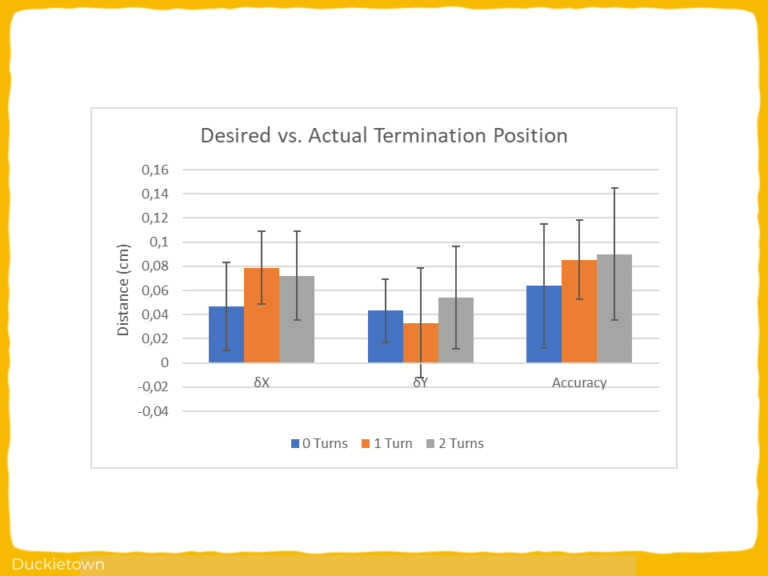

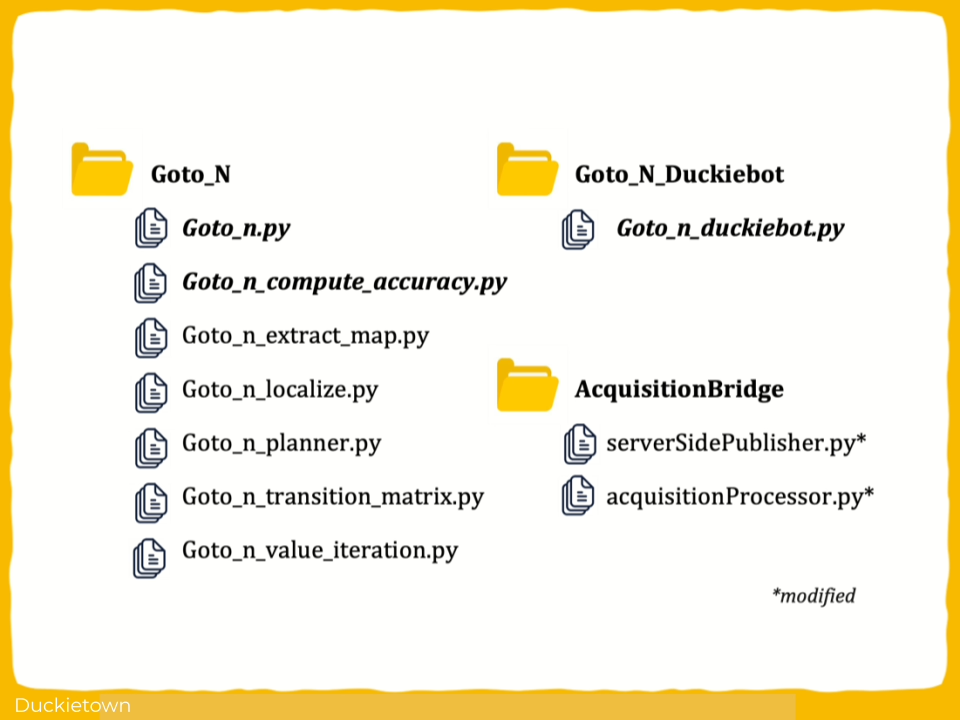

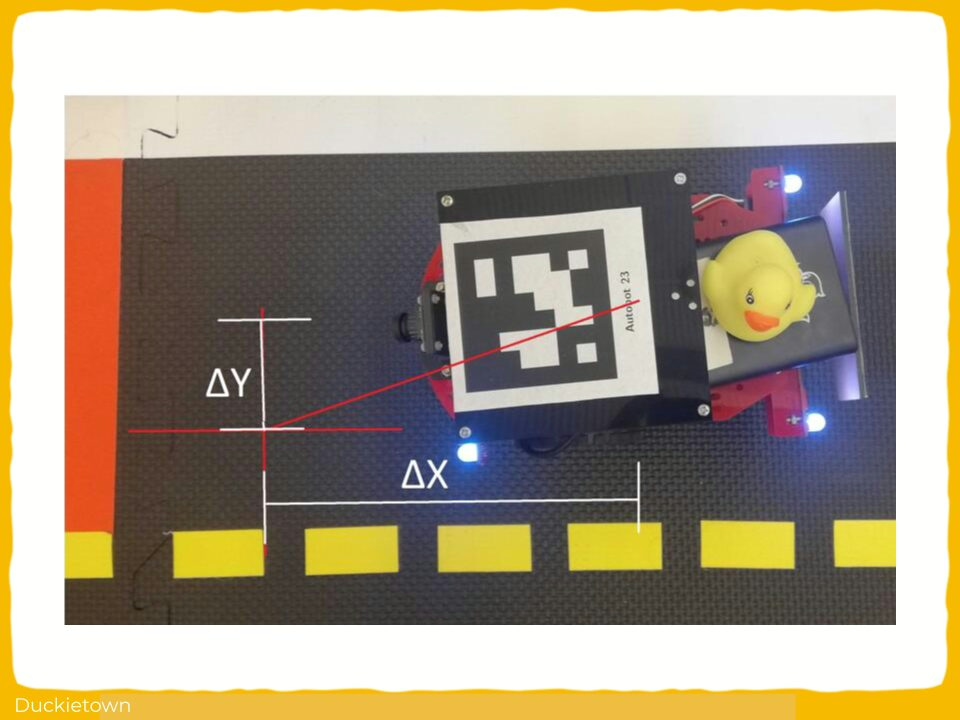

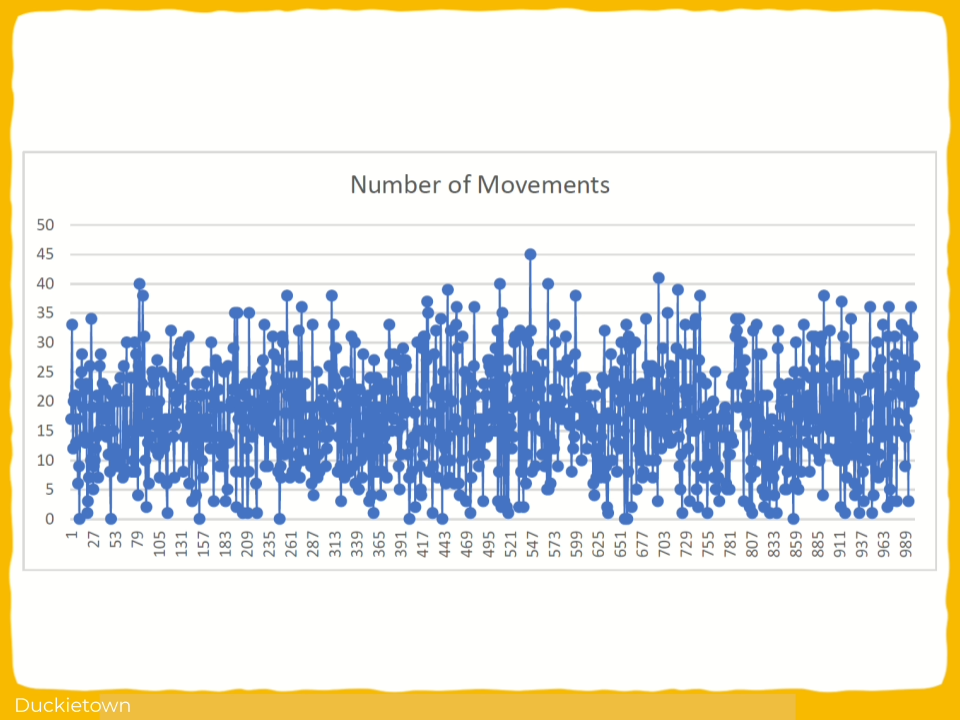

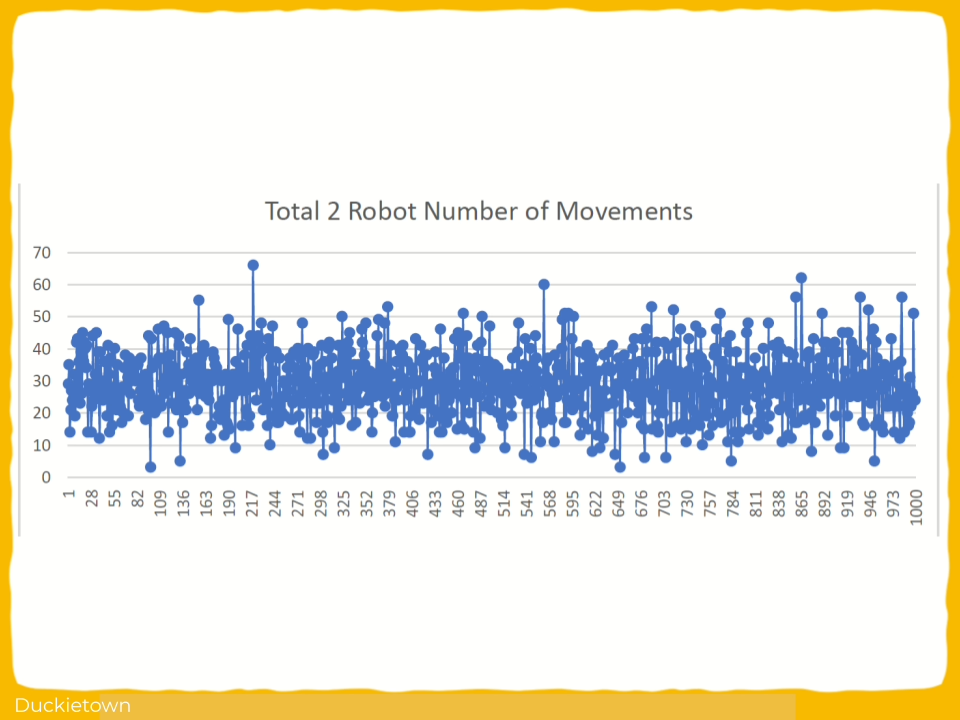

The approach consisted of deploying identical software stacks in the low-fidelity and high-fidelity simulators and then running them on physical Duckietown vehicles. Metrics were collected for perception accuracy, trajectory tracking error, control stability, and failure rate. Domain discrepancies were analyzed by isolating sensing noise, actuator modeling, latency, and environmental dynamics. The main obstacles were simulator parameter mismatch, sensor noise modeling, real-time constraints, and non-linear vehicle dynamics that simulation does not fully capture.
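Two of the metrics named above, trajectory tracking error and failure rate, can be aggregated per domain along the following lines. This is a minimal sketch under stated assumptions: the `Episode` record, its field names, and the choice of root-mean-square error are hypothetical and are not taken from the project's code or report.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Episode:
    """One evaluation run; both fields are hypothetical log entries."""
    lateral_errors_m: List[float]  # signed distance from the reference path per step
    failed: bool                   # True if the run ended in a failure


def tracking_rmse(episodes: List[Episode]) -> float:
    """Root-mean-square tracking error pooled over all steps of all episodes."""
    errors = [e for ep in episodes for e in ep.lateral_errors_m]
    if not errors:
        raise ValueError("no tracking samples")
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def failure_rate(episodes: List[Episode]) -> float:
    """Fraction of episodes that ended in a failure."""
    if not episodes:
        raise ValueError("no episodes")
    return sum(ep.failed for ep in episodes) / len(episodes)


def summarize(runs: Dict[str, List[Episode]]) -> Dict[str, Dict[str, float]]:
    """Compute both metrics for every domain, keyed by a domain label."""
    return {
        domain: {
            "tracking_rmse_m": tracking_rmse(episodes),
            "failure_rate": failure_rate(episodes),
        }
        for domain, episodes in runs.items()
    }
```

Keeping the same summary function for every domain mirrors the idea of running an identical stack everywhere: any difference in the numbers then comes from the environment rather than from the evaluation code.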

Conclusions

The findings suggest that it is feasible to train models in a high-fidelity simulator such as CARLA and use a low-fidelity simulator to estimate real-world performance, thereby providing an approximation of the Sim-to-Real gap. However, results obtained in the intermediate simulator are not sufficiently reliable to eliminate the need for real-world testing. Training in a low-fidelity simulator like Duckietown and evaluating in CARLA proved to be much less effective. This indicates that the proposed method is well-suited for high-to-low fidelity transfer, as discussed above, but not the reverse. Future work should broaden this methodology by incorporating multiple training algorithms, simulators, and environments.
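One way to quantify how well intermediate-simulator scores track real-world scores across a set of trained models is a rank correlation. The sketch below implements Spearman's rank correlation with NumPy. It is an illustrative assumption, not the statistic reported by the authors, and the function names are hypothetical.

```python
import numpy as np


def average_ranks(values) -> np.ndarray:
    """Ranks starting at 1, with tied values sharing their mean rank."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(values, kind="mergesort")
    ranks = np.empty(len(values), dtype=float)
    ranks[order] = np.arange(1, len(values) + 1)
    for v in np.unique(values):
        mask = values == v
        ranks[mask] = ranks[mask].mean()
    return ranks


def spearman(sim_scores, real_scores) -> float:
    """Spearman rank correlation between per-model scores in two domains."""
    ra, rb = average_ranks(sim_scores), average_ranks(real_scores)
    if len(ra) != len(rb) or len(ra) < 2:
        raise ValueError("need two equally long score lists with at least 2 models")
    # Pearson correlation of the ranks.
    ra = ra - ra.mean()
    rb = rb - rb.mean()
    denom = np.sqrt((ra ** 2).sum() * (rb ** 2).sum())
    if denom == 0:
        raise ValueError("scores are constant in at least one domain")
    return float((ra * rb).sum() / denom)
```

A value near 1 would mean that models ranking well in the intermediate simulator also rank well on the physical robot. Consistent with the conclusion above, even a high correlation would not remove the need for real-world testing.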

Project Report

Sim-to-Real Transfer for Small Autonomous Vehicles: authors

Jurriaan Buitenweg is currently working as a Machine Learning Engineer at Enjins, Netherlands.

Dr. Cynthia C. S. Liem is a tenured Associate Professor at the Multimedia Computing Group of Delft University of Technology.

Learn more

Duckietown is a modular, customizable platform for robotics and artificial intelligence education, enabling hands-on learning and real-world experimentation with autonomous systems.

Designed for teaching, learning, and research, Duckietown supports the full spectrum of autonomy development, from foundational computer science and robotics concepts to advanced AI and self-driving systems research.

These spotlight projects are shared to demonstrate how Duckietown bridges theory and practice in robotics and AI, empowering students to apply machine learning and autonomy techniques to physical robots while building practical skills valued in academic research and industry.