Real-Time Reinforcement Learning in Duckiematrix

Project Resources

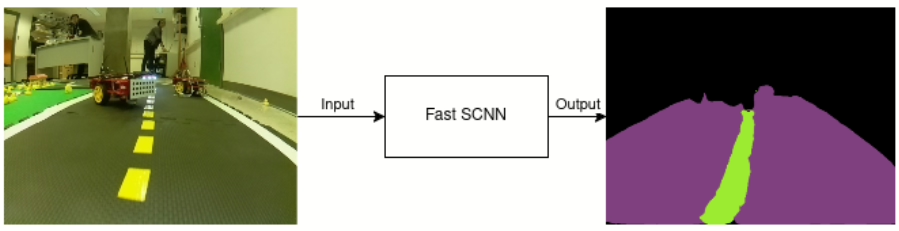

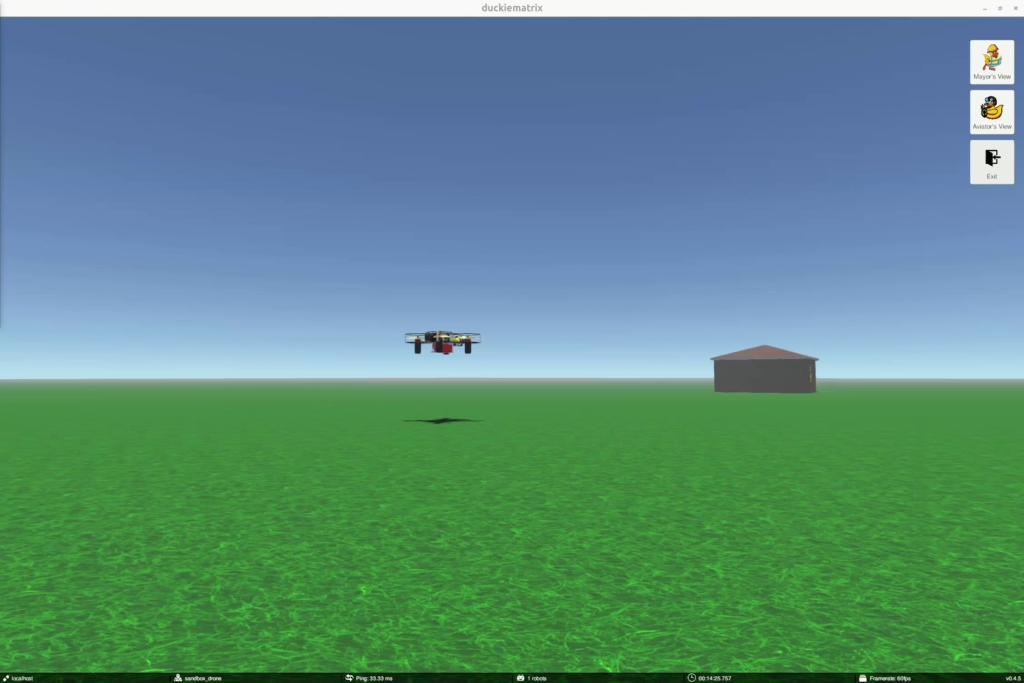

- Objective: Evaluate how computation delays affect Reinforcement Learning performance in Duckiematrix autonomous driving tasks.

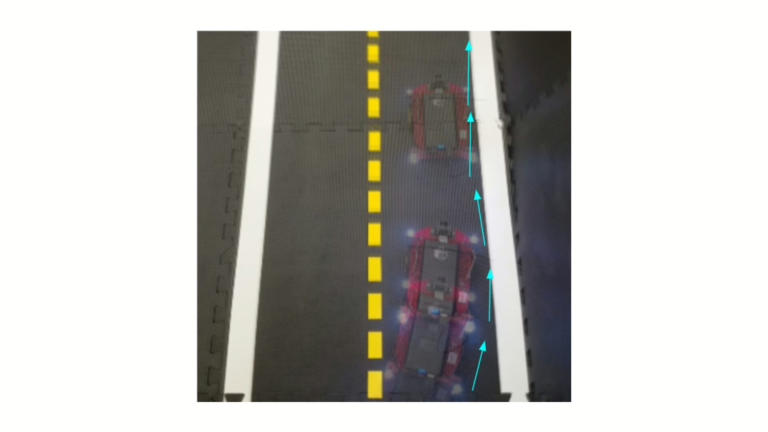

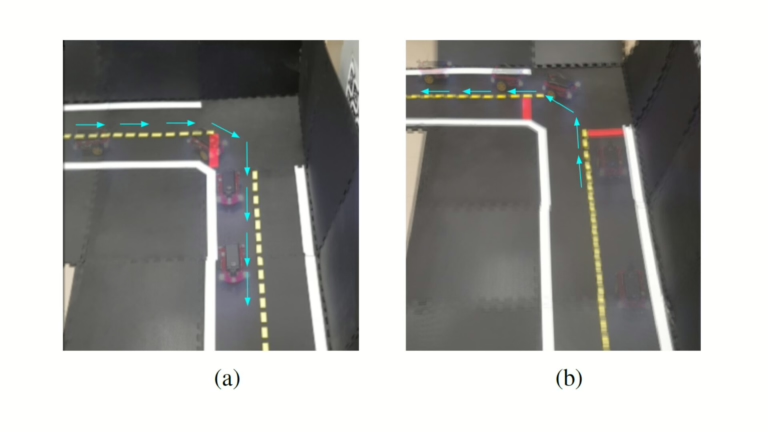

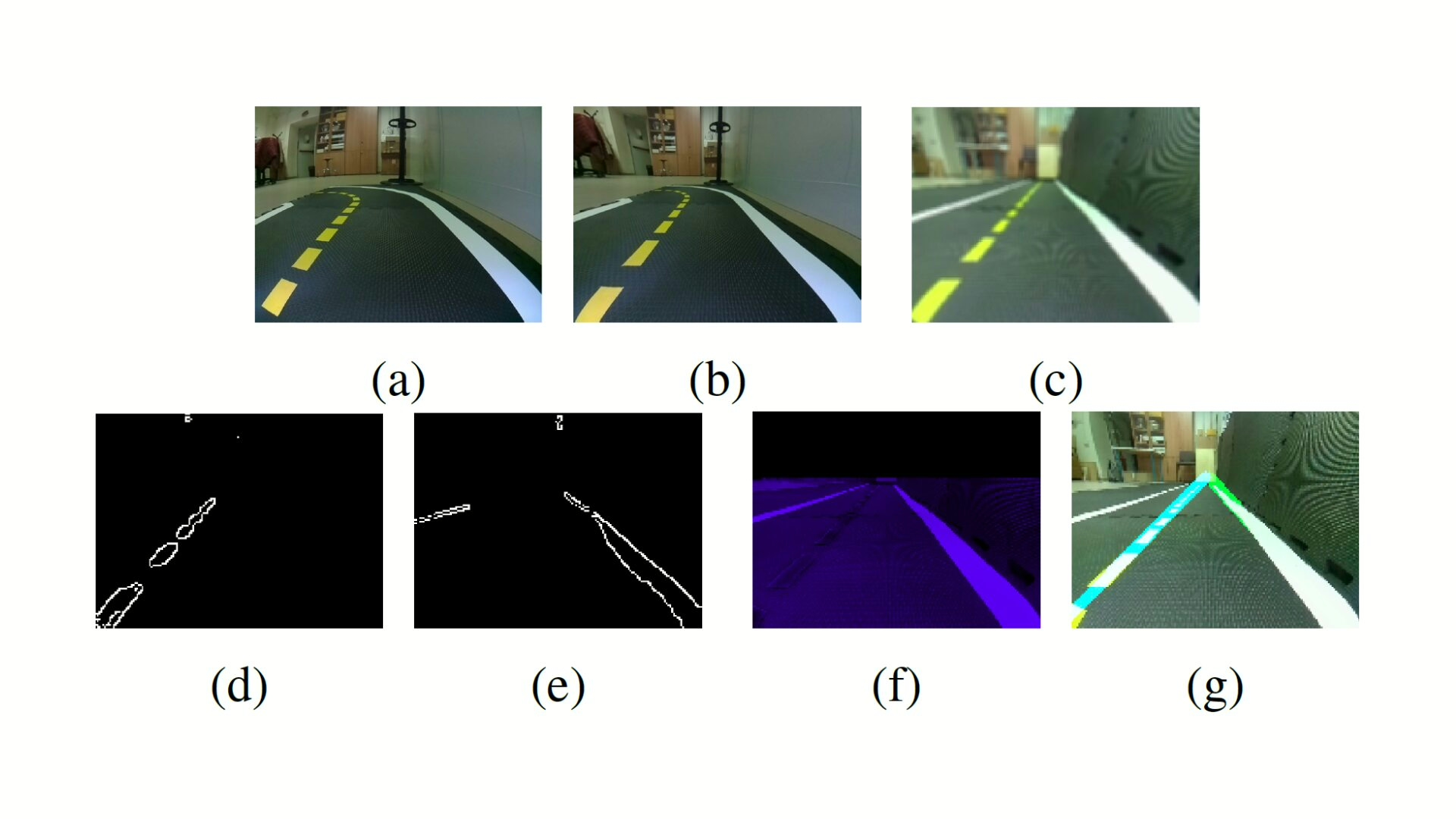

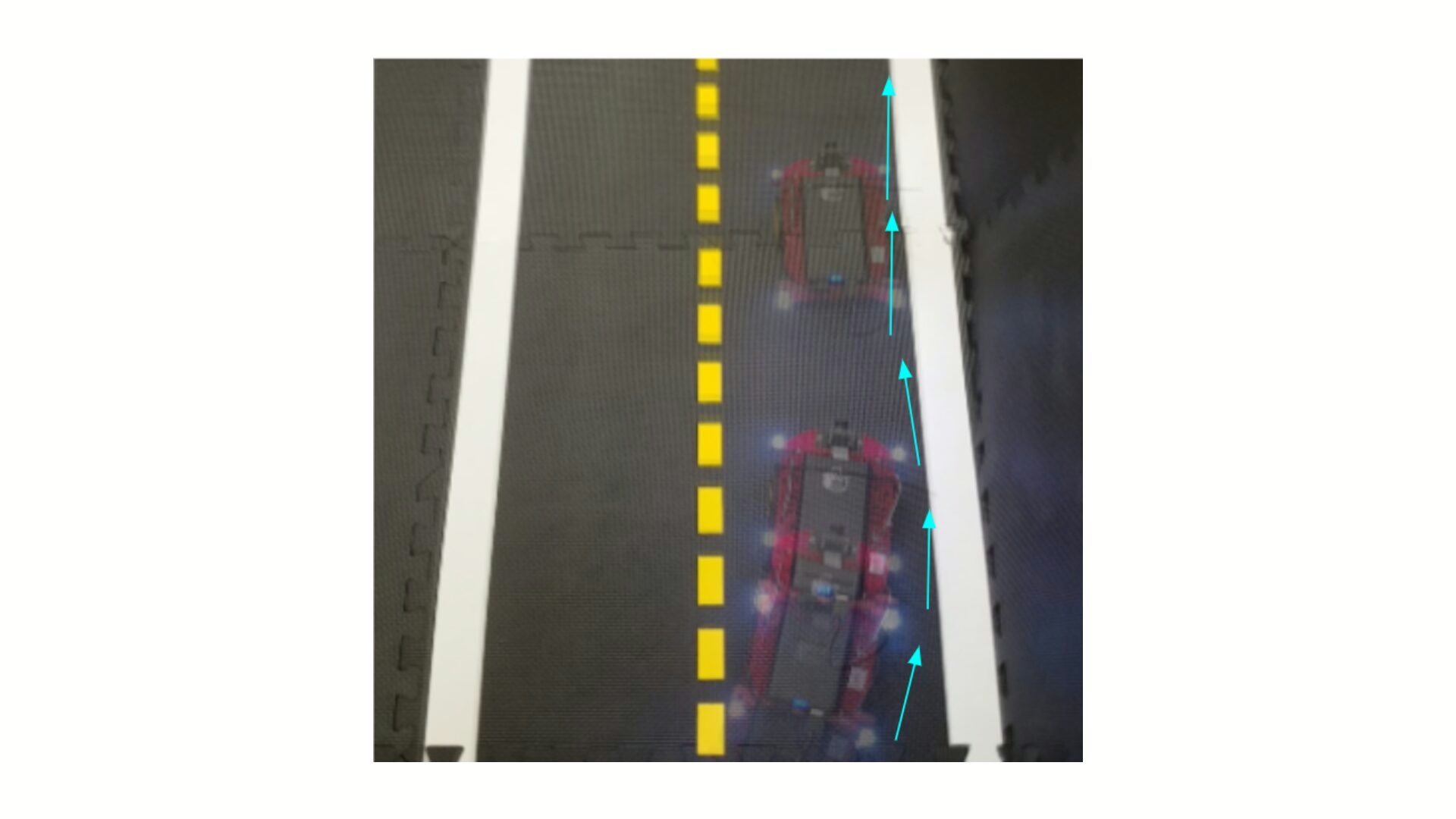

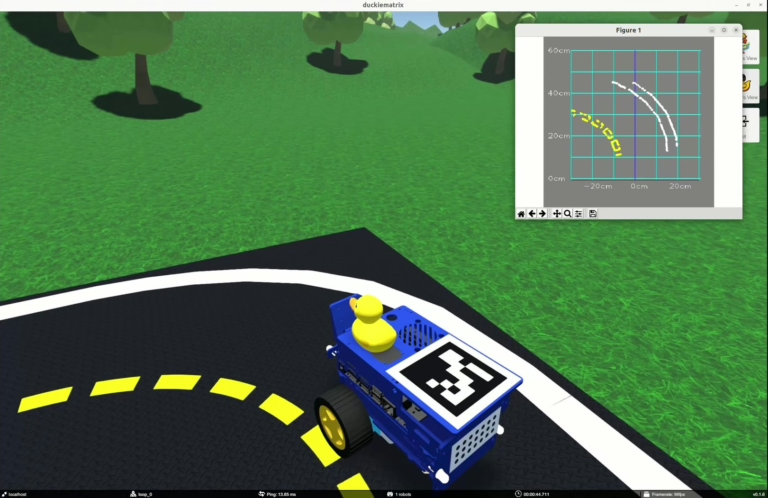

- Approach: Simulate real-time delays in Duckietown and compare classical RL with action-conditioned Real-Time RL policies.

- Authors: Guillaume Gagné-Labelle, Gabriel Sasseville and Nicolas Bosteels

Reinforcement Learning in Duckiematrix Real-Time - objectives and approach

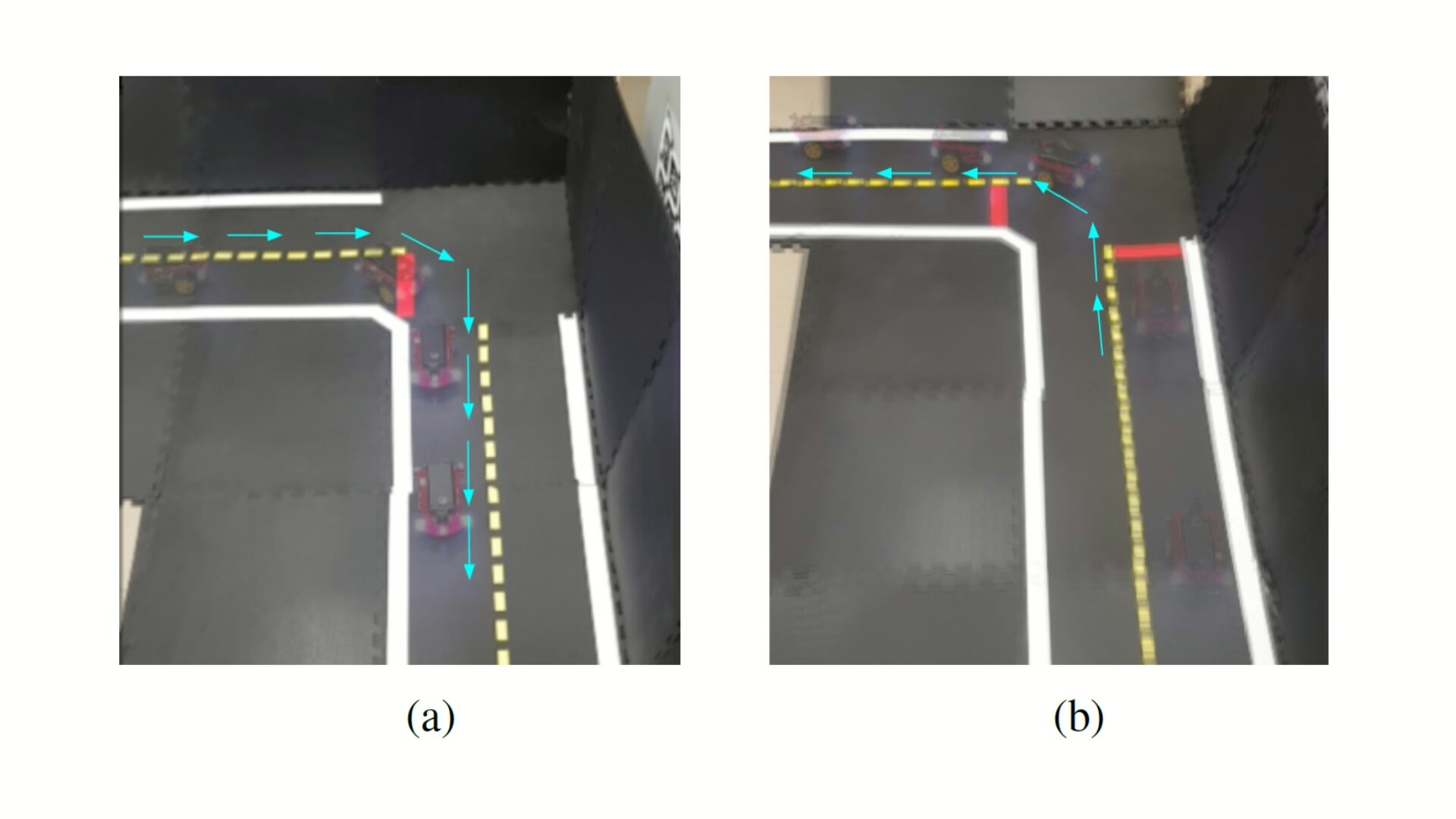

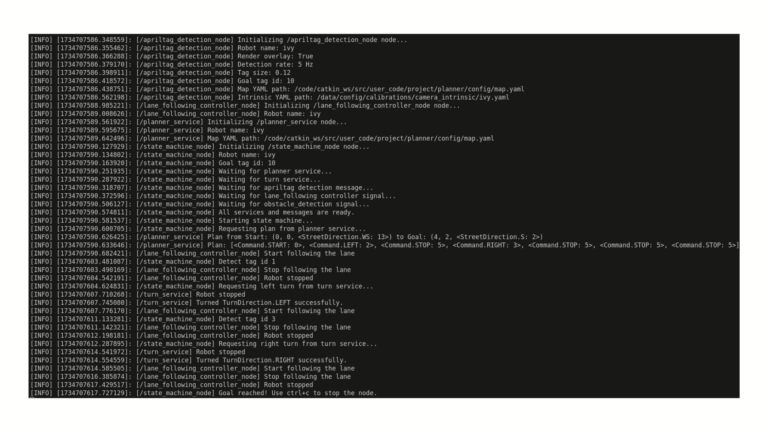

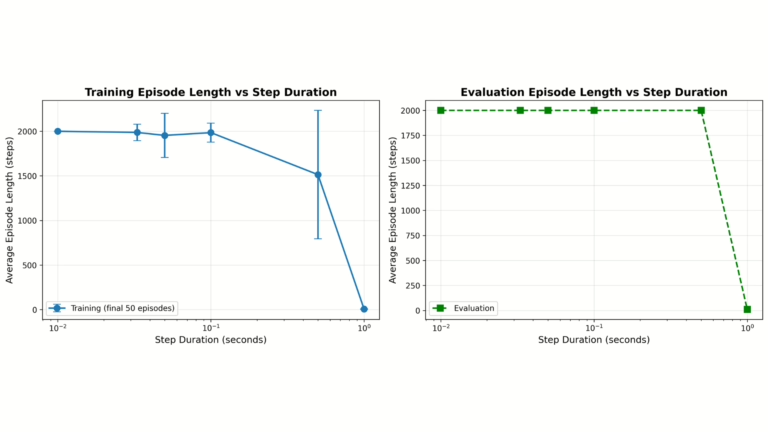

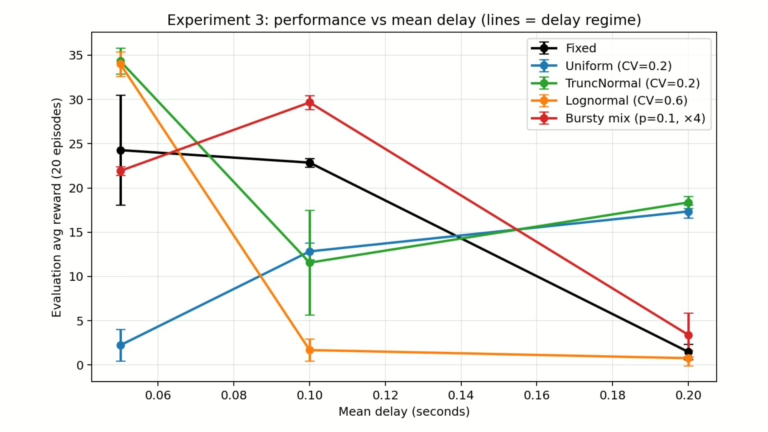

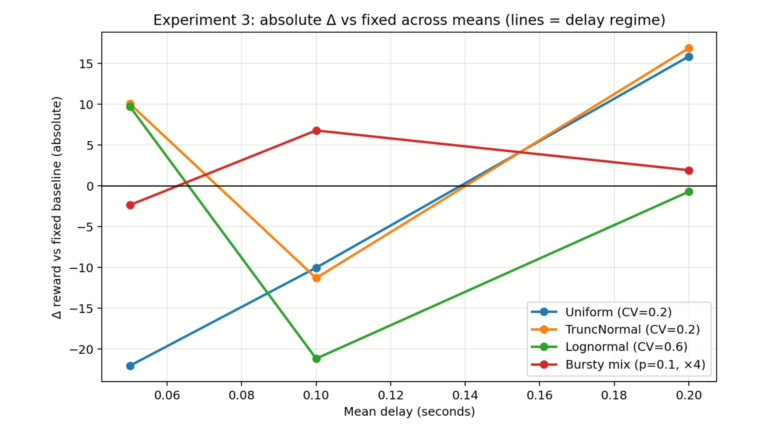

The objective of this project is to evaluate Reinforcement Learning performance in Duckiematrix under real-time constraints in Duckietown by quantifying the impact of computation delay on policy performance, reward, and episode length in autonomous driving tasks.

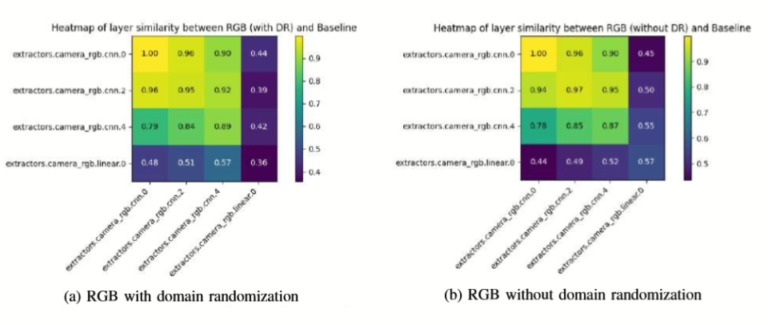

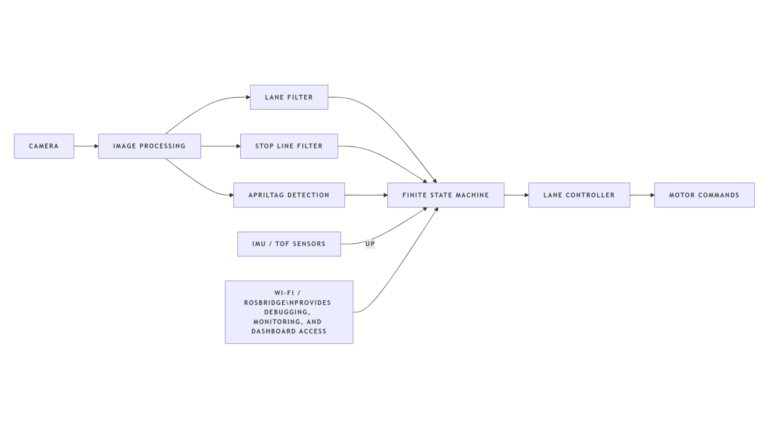

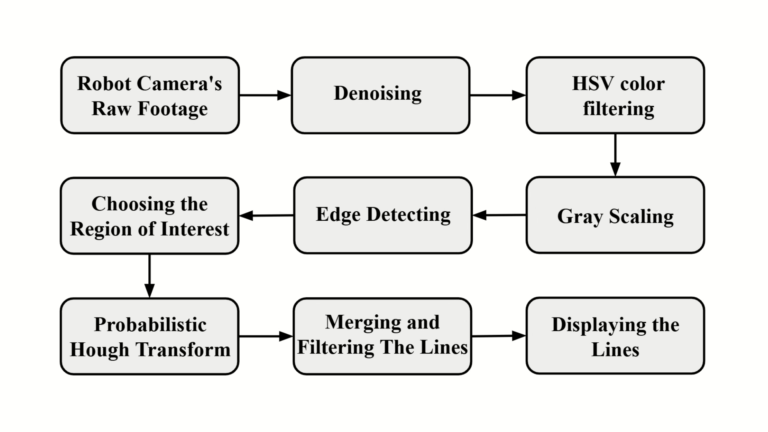

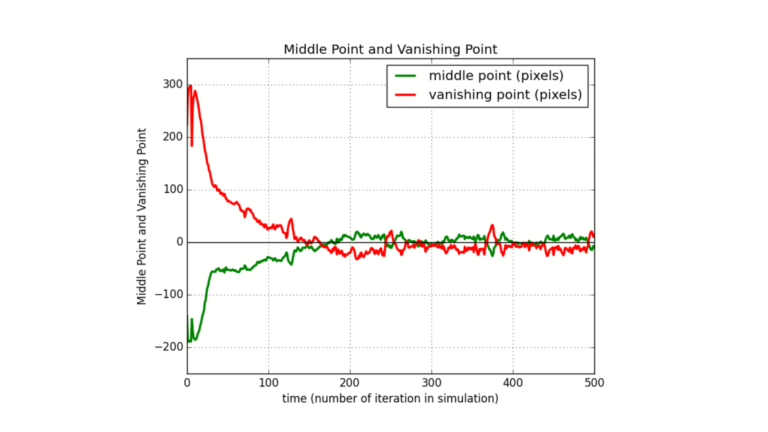

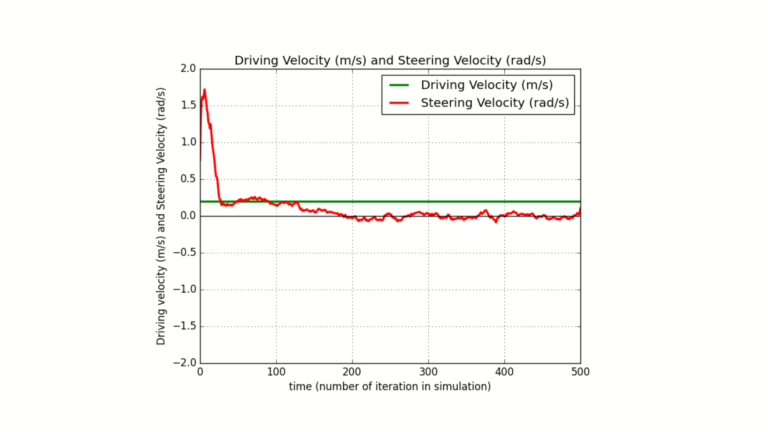

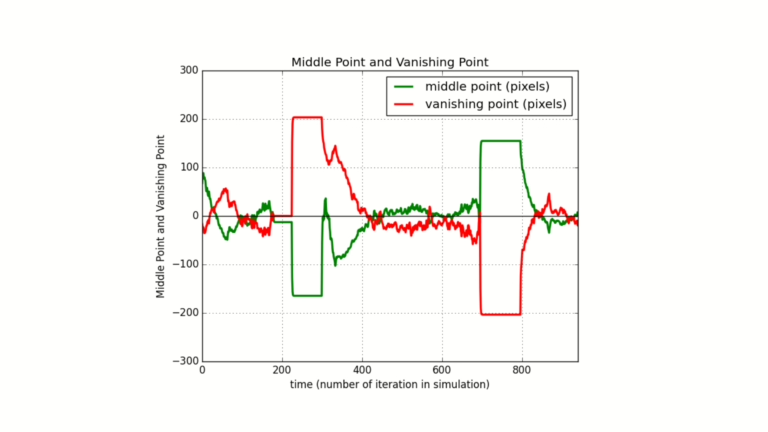

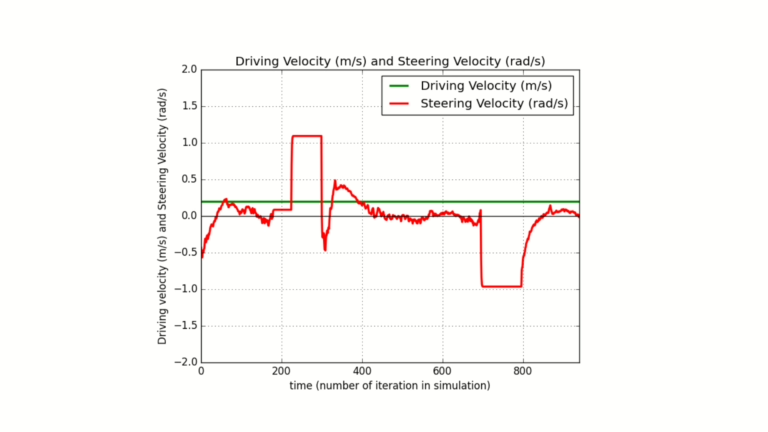

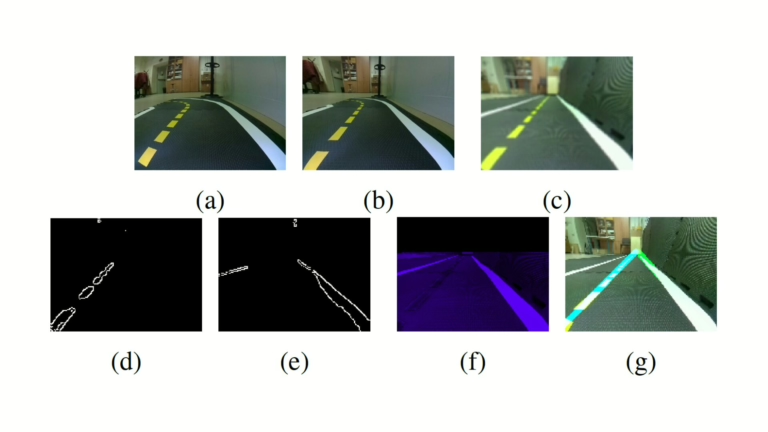

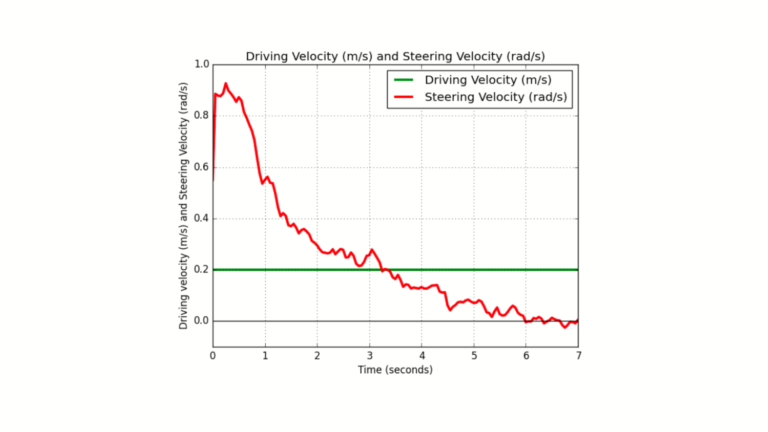

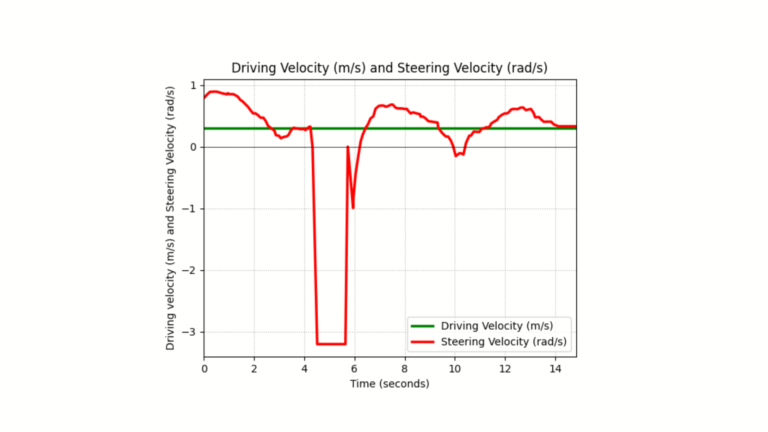

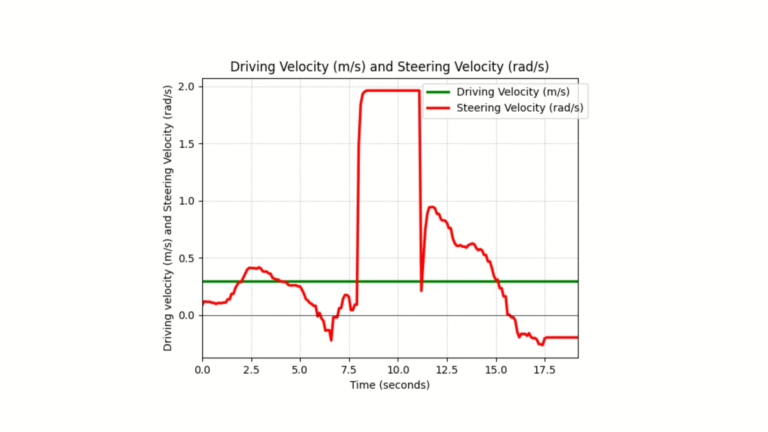

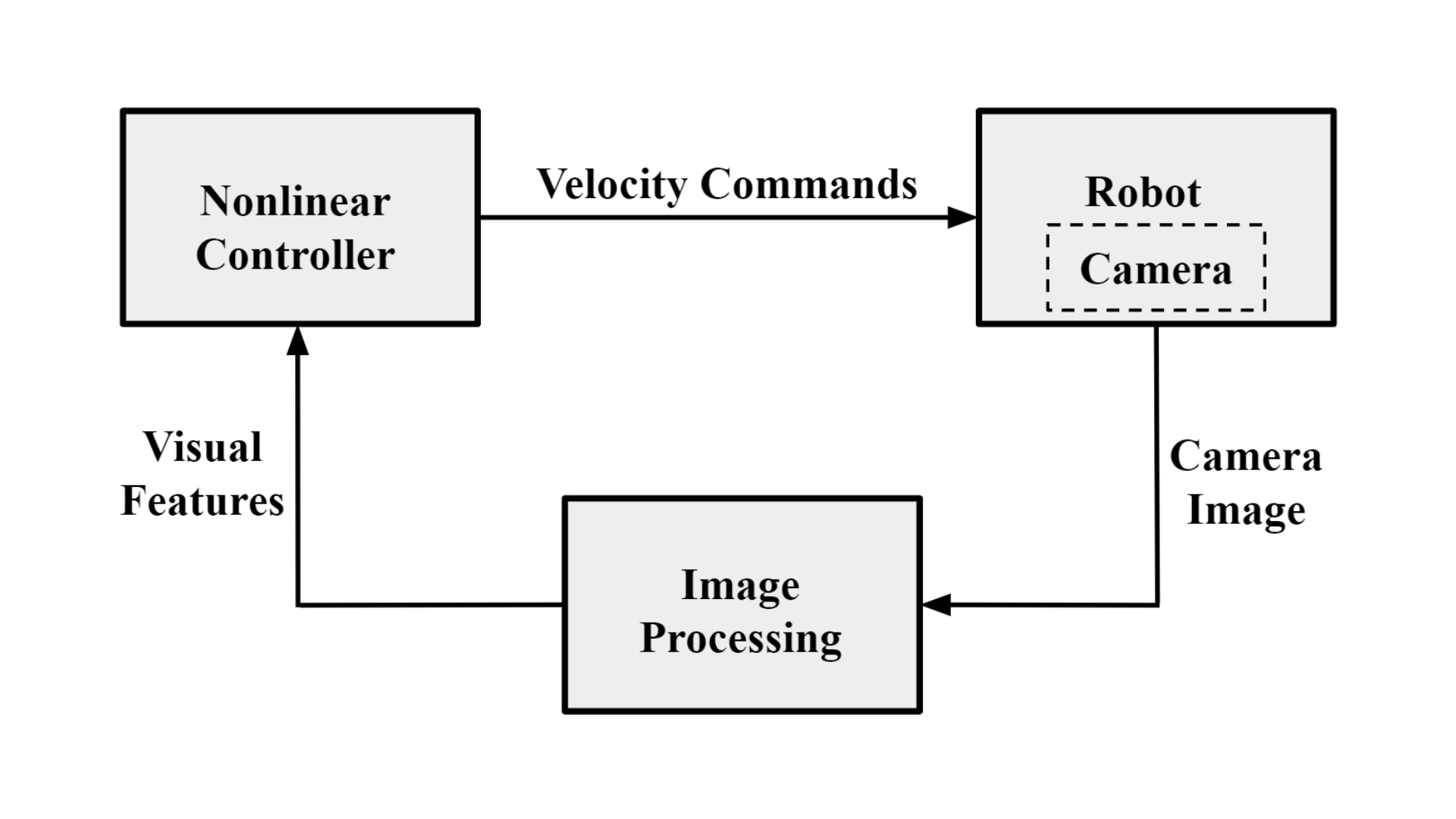

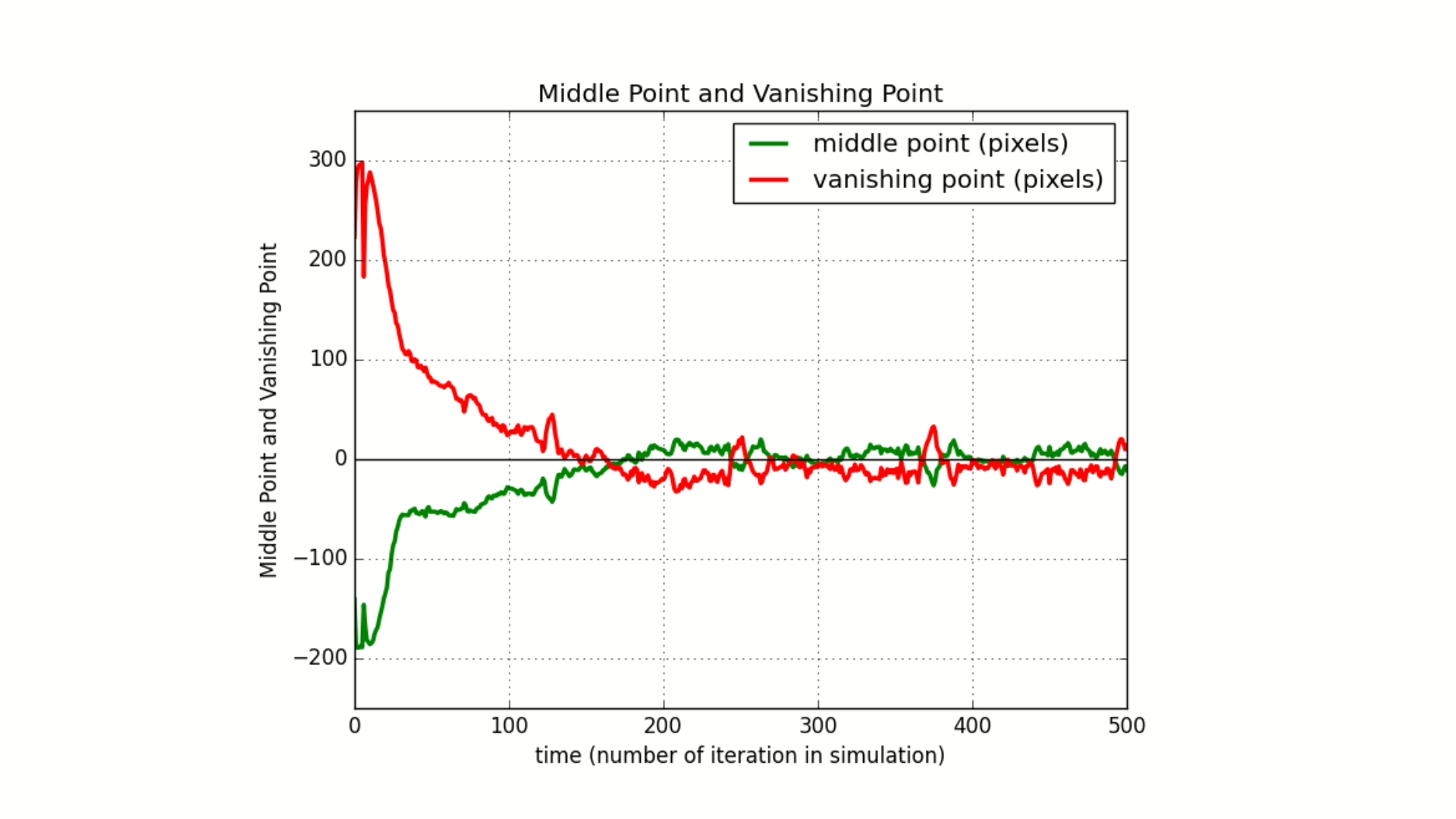

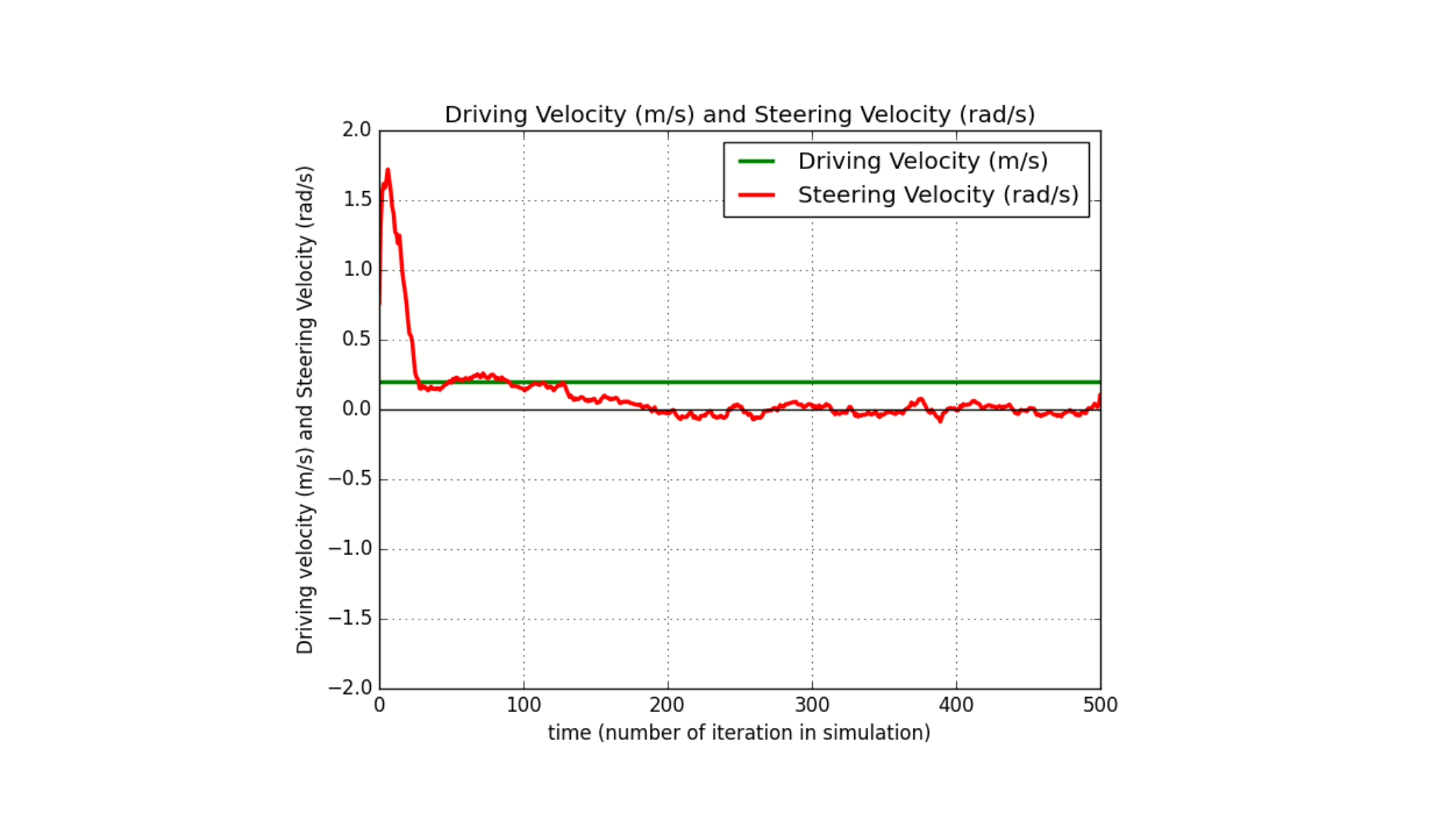

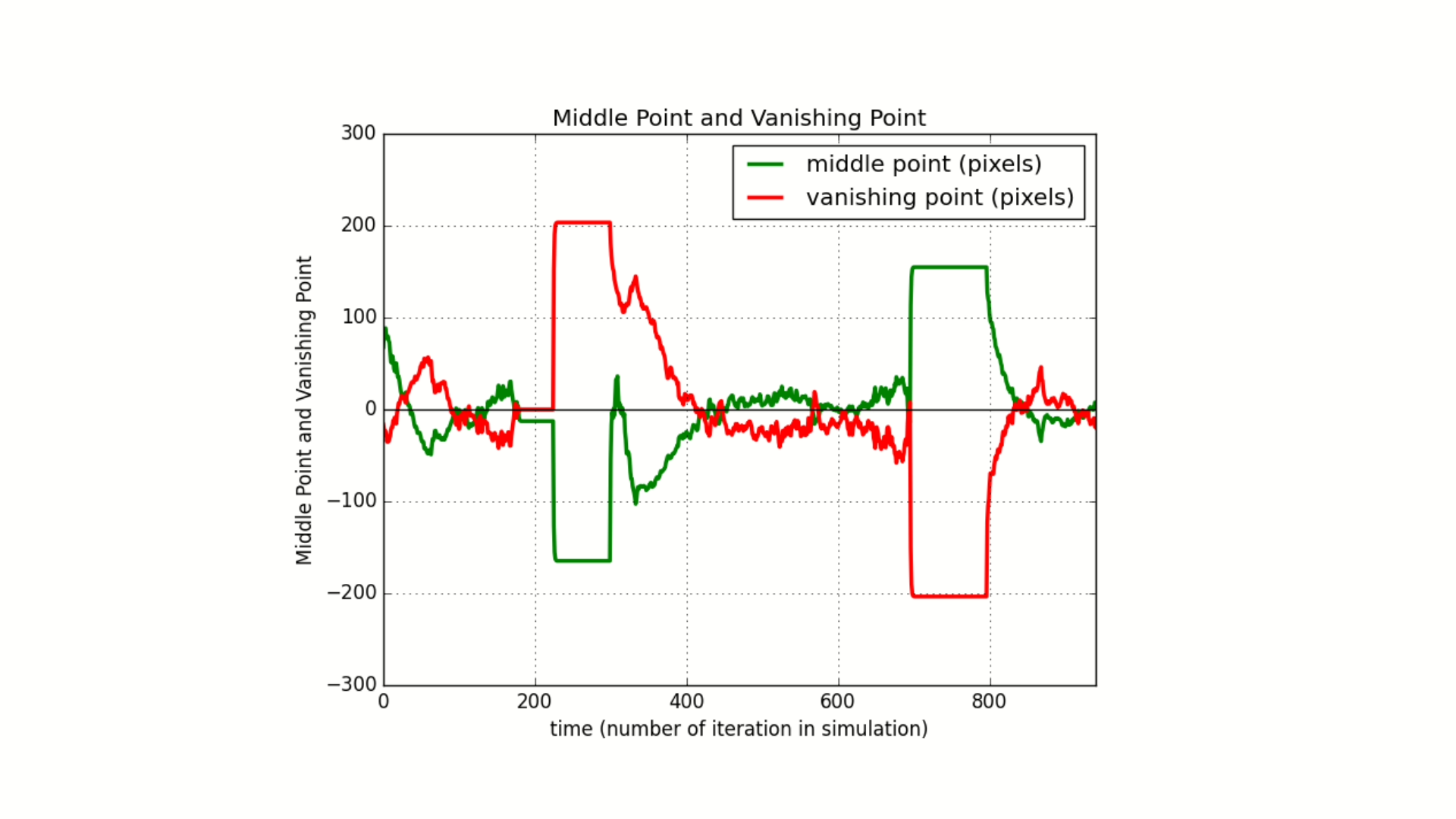

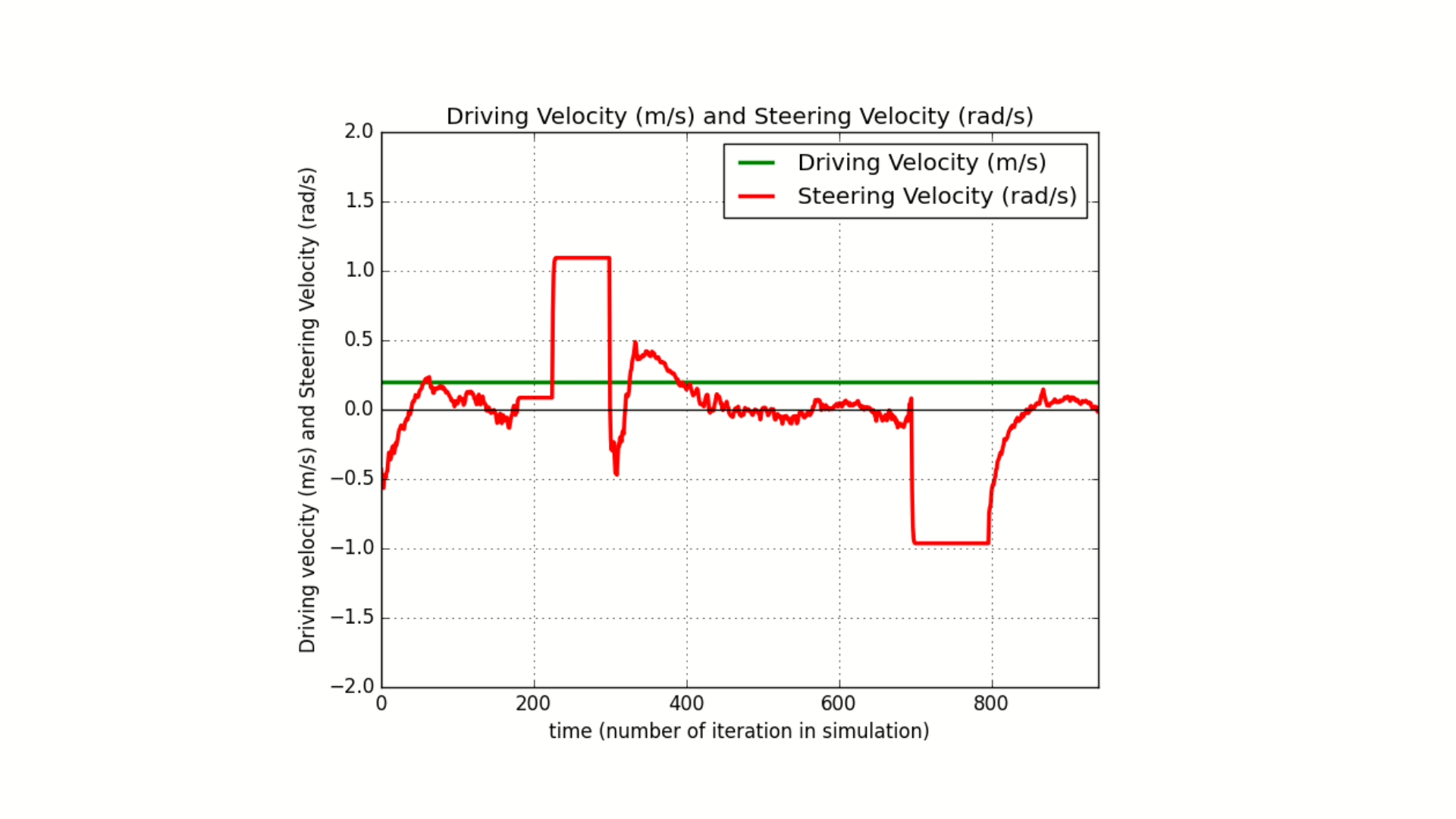

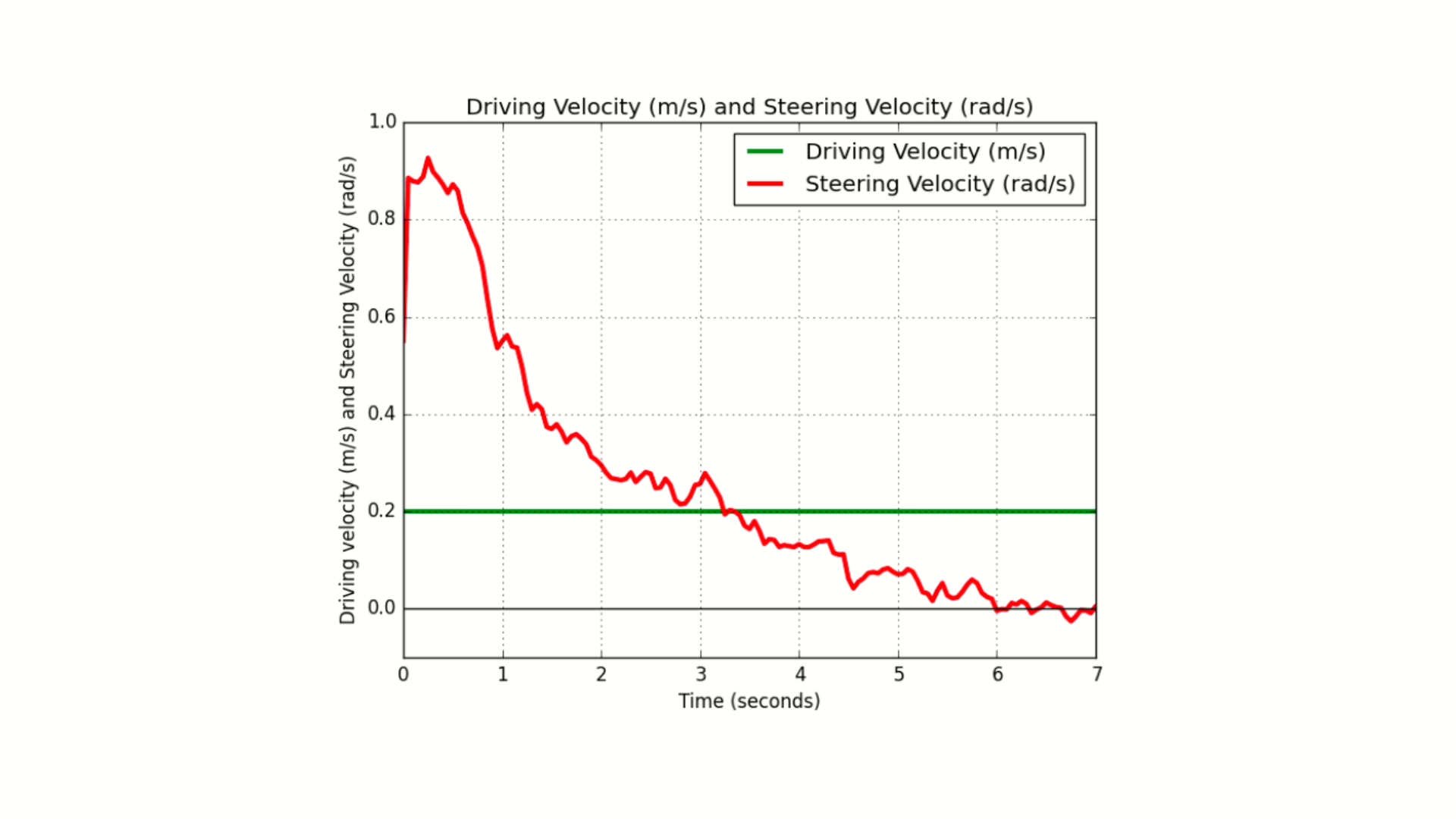

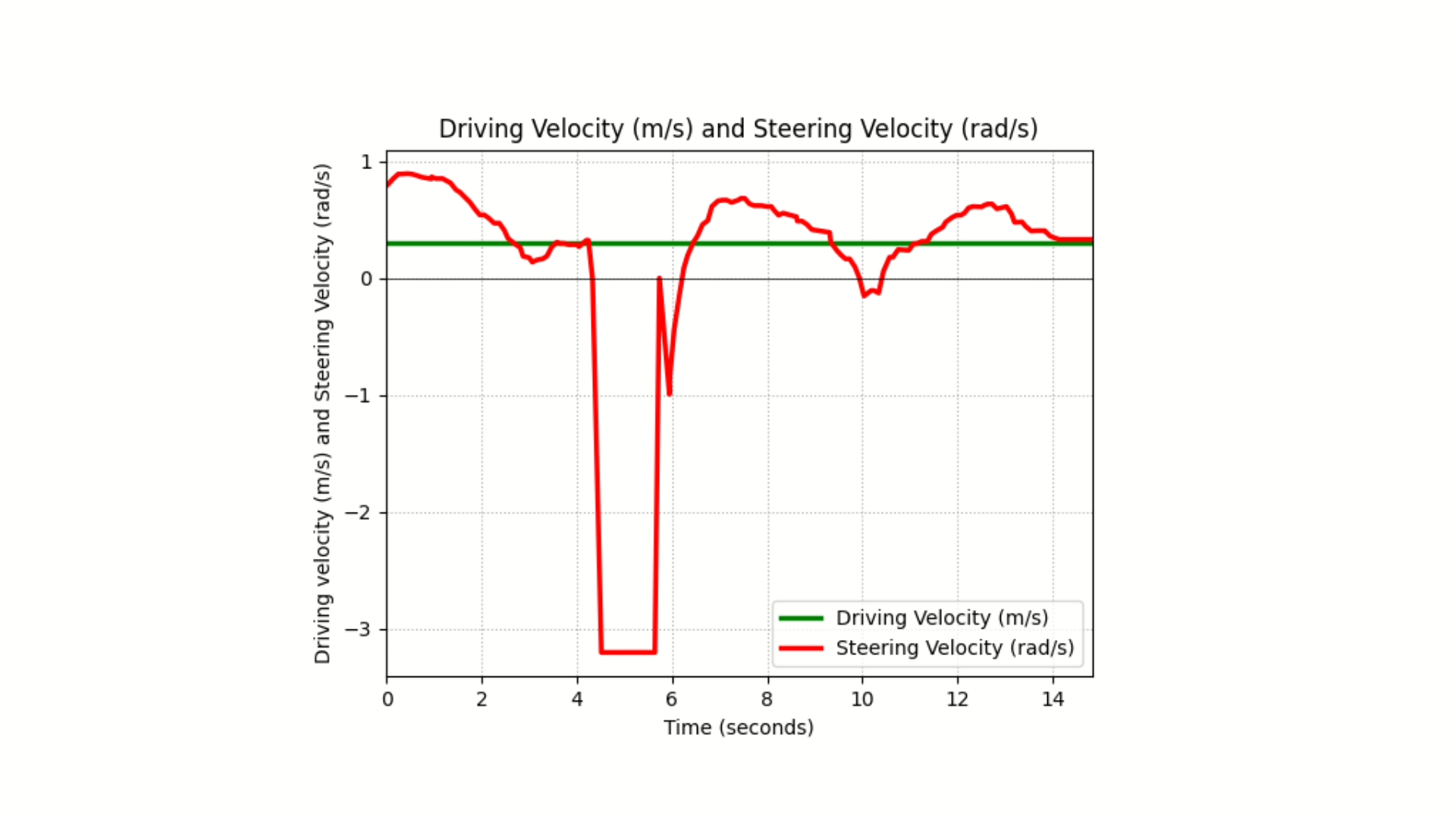

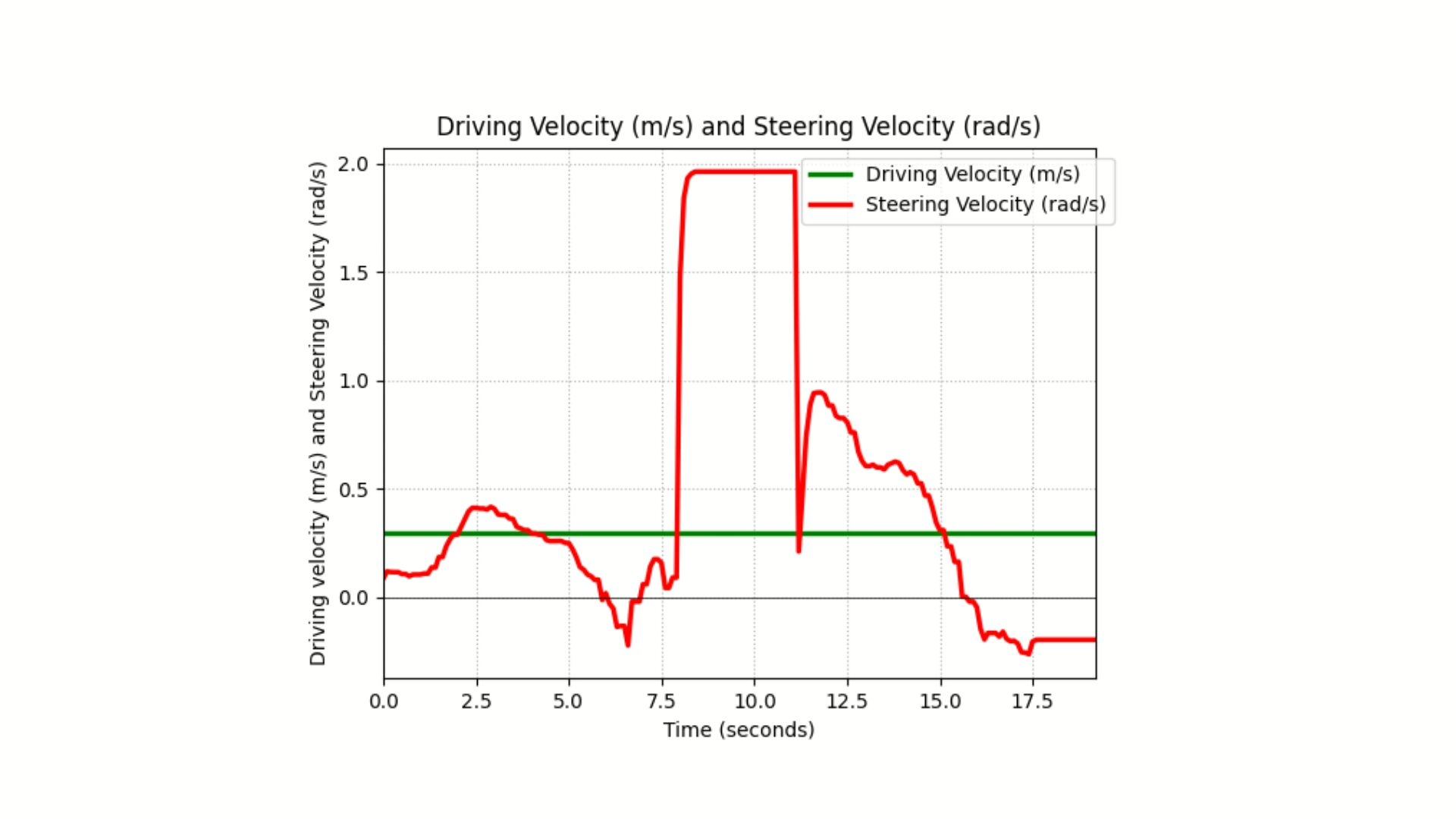

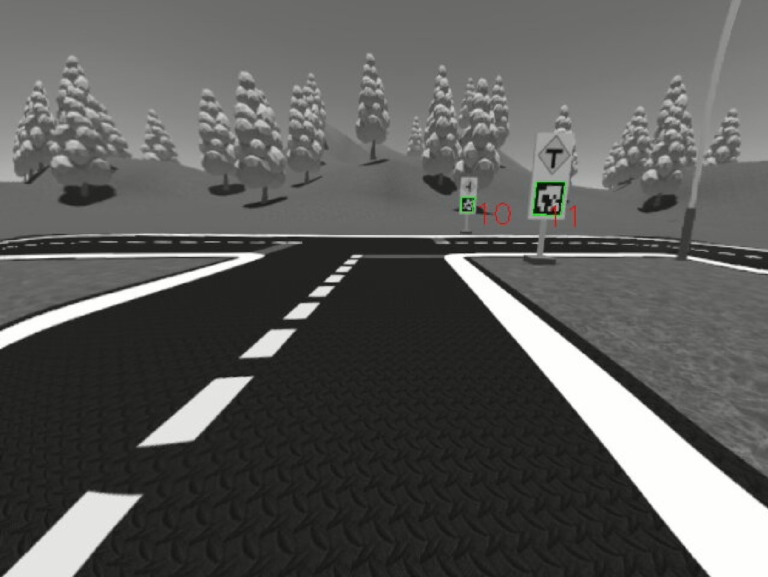

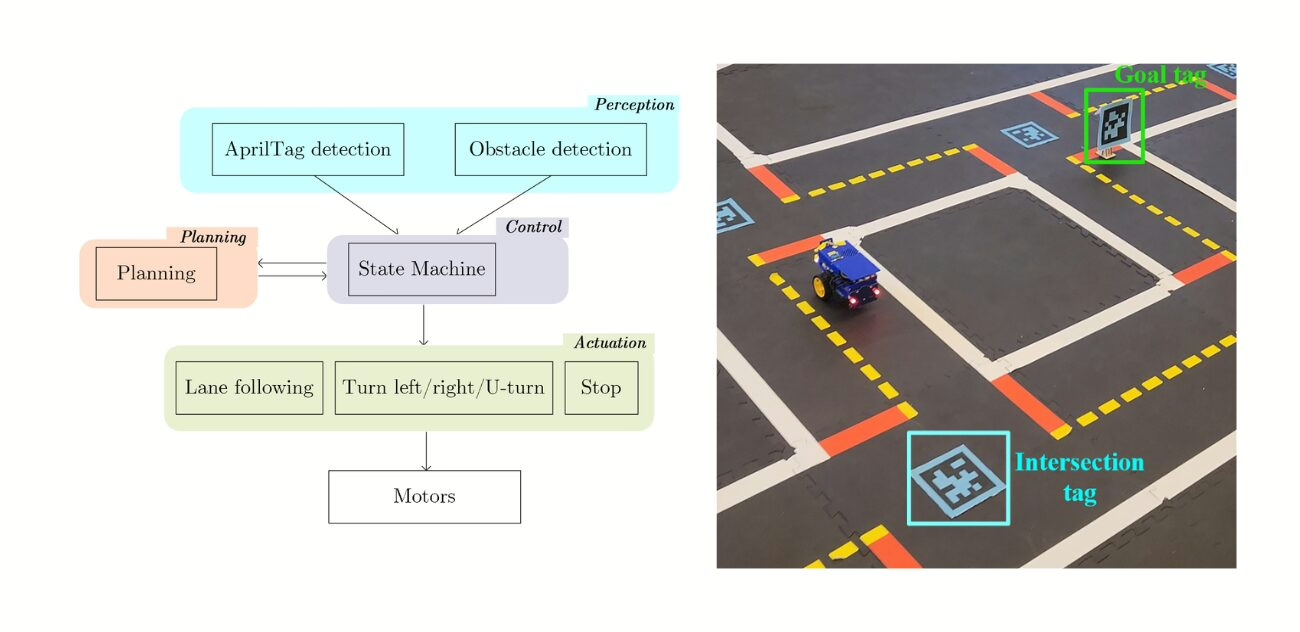

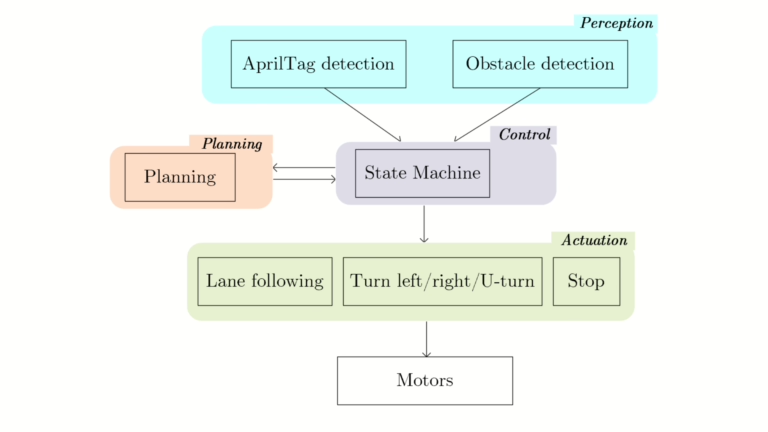

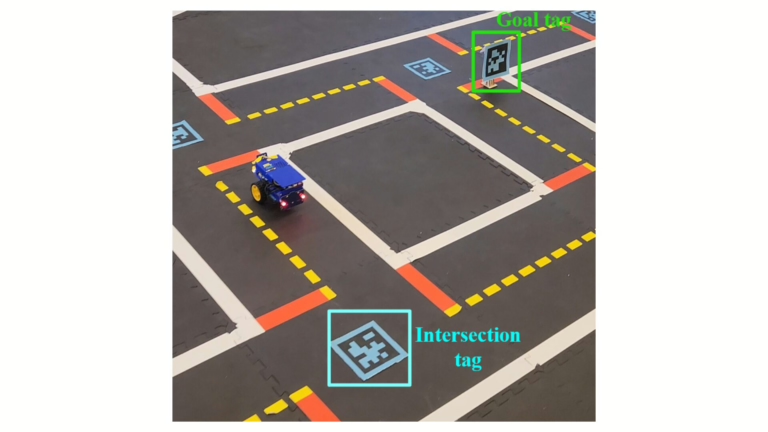

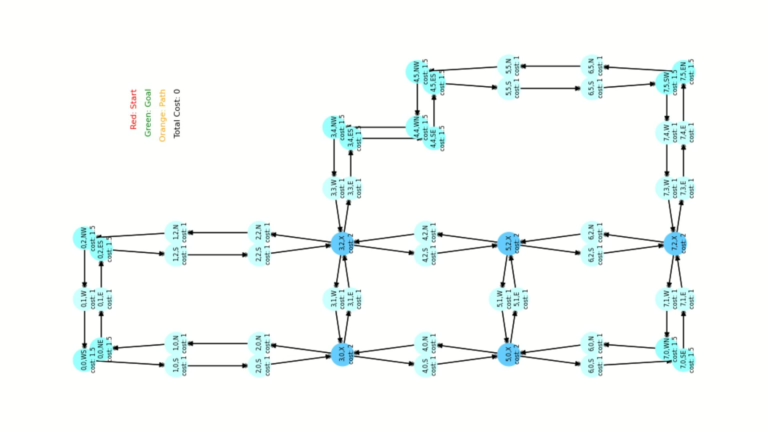

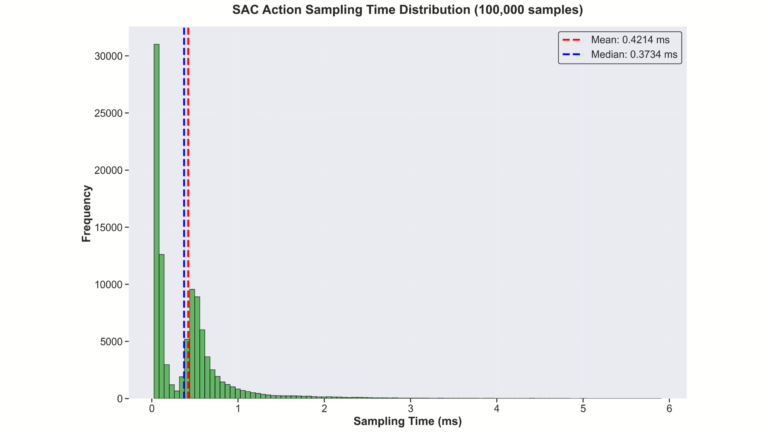

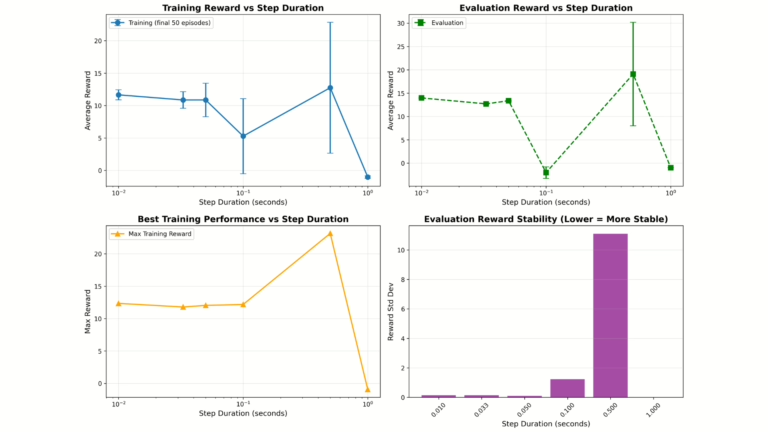

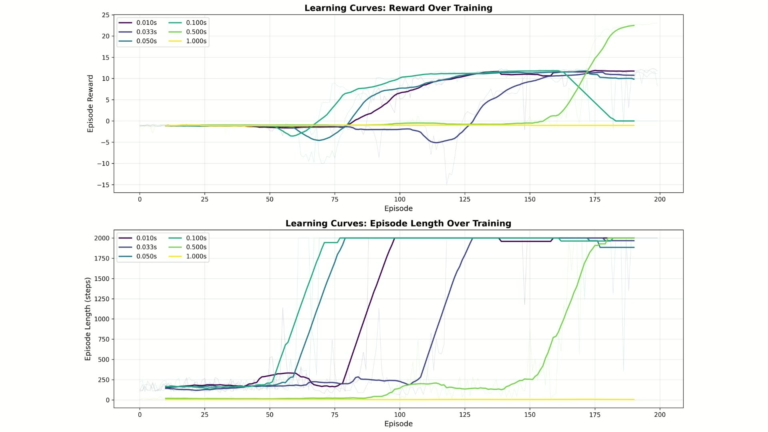

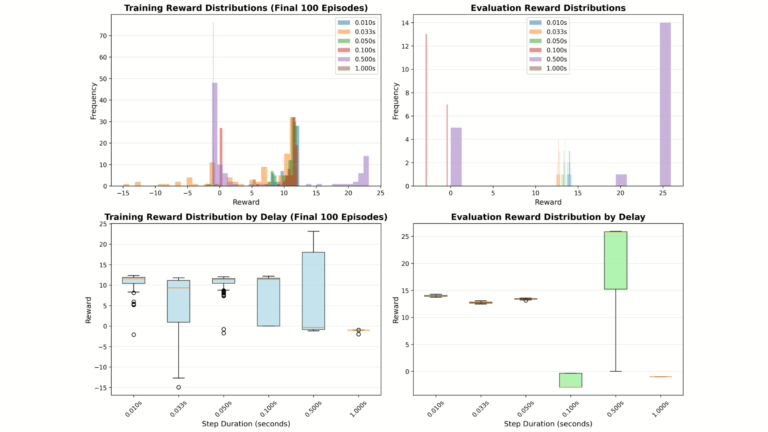

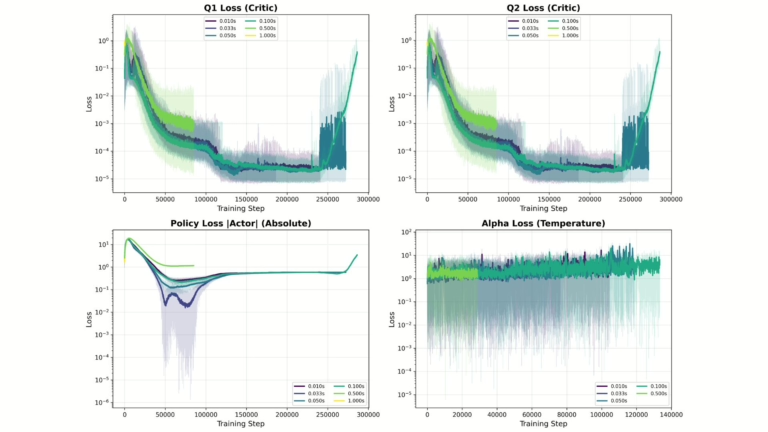

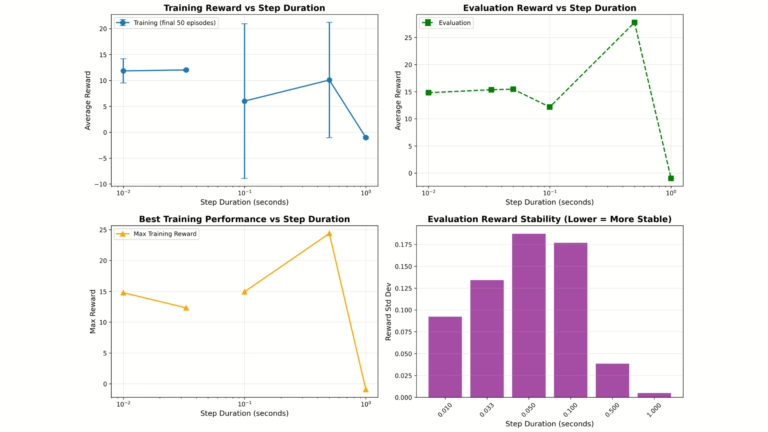

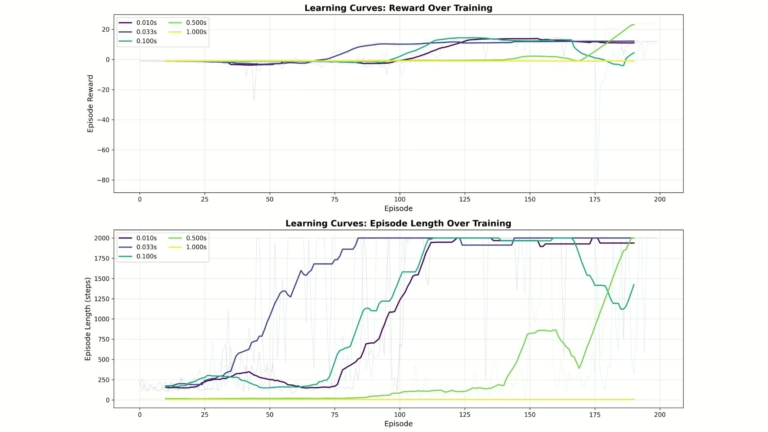

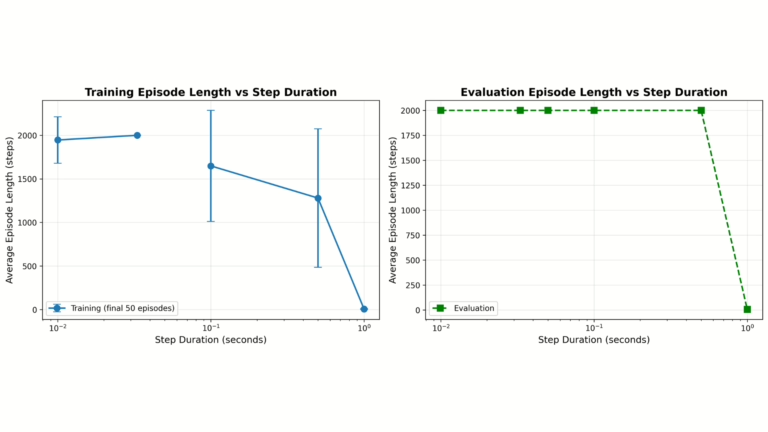

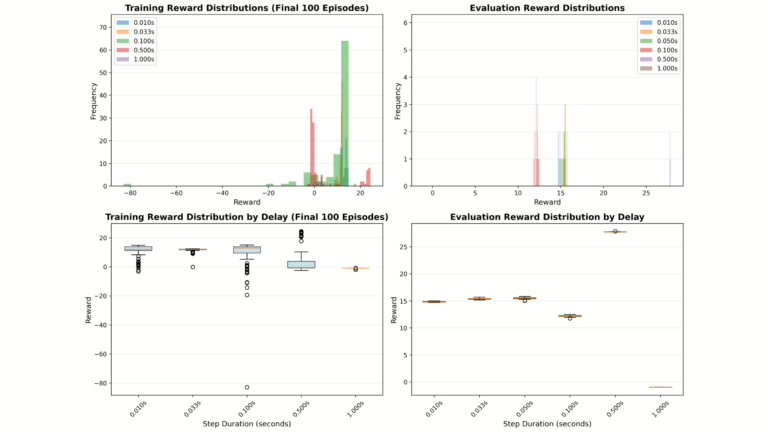

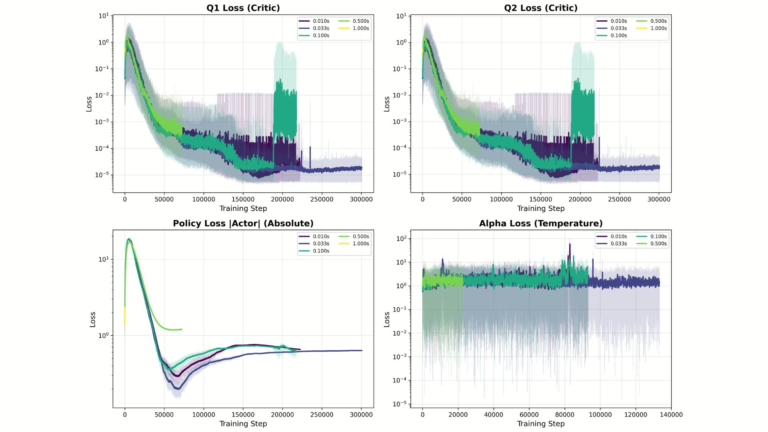

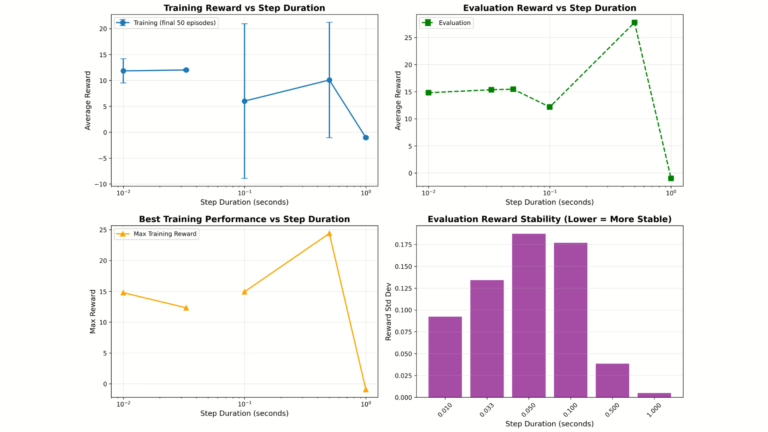

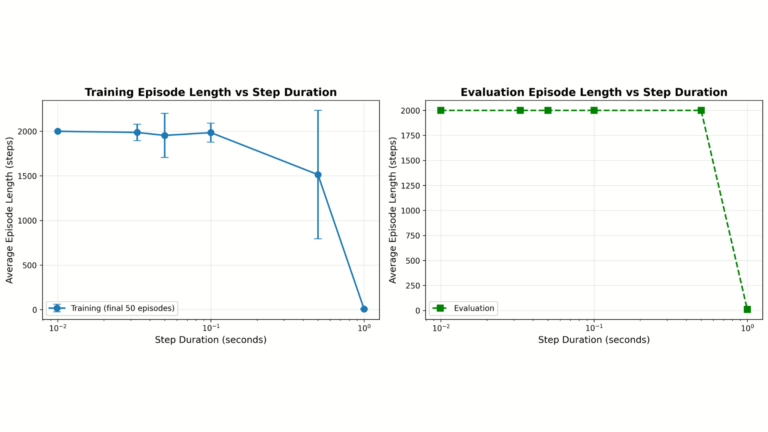

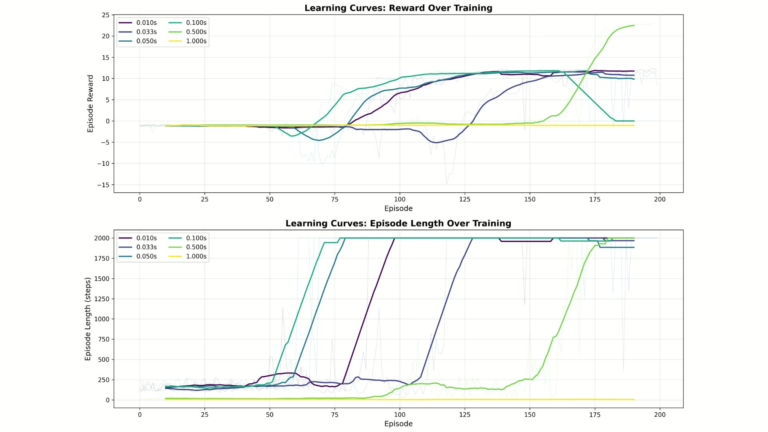

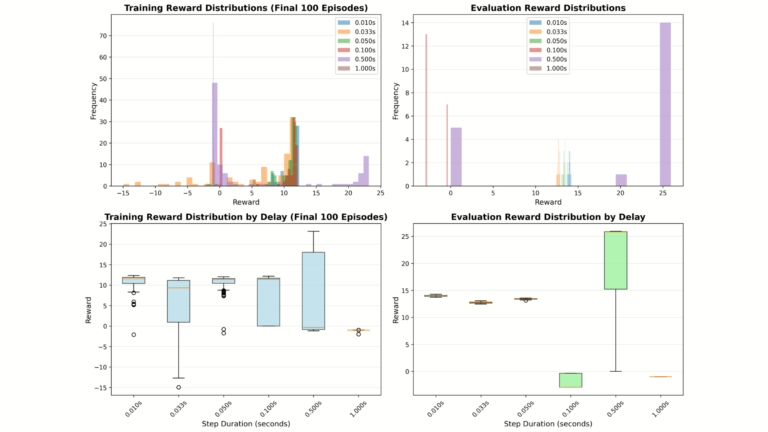

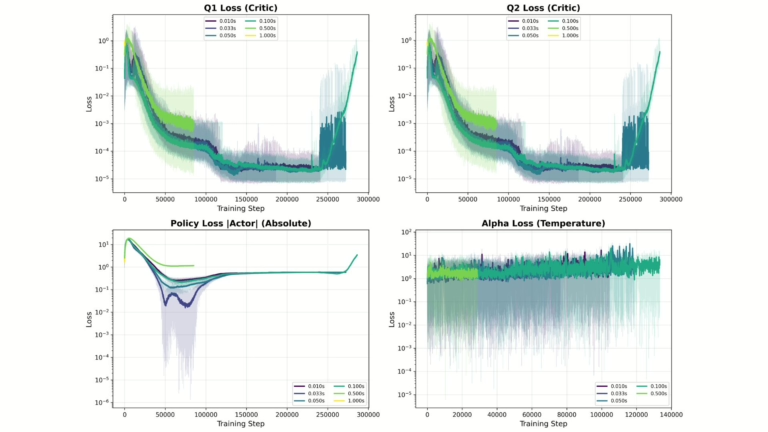

The approach implements Soft Actor-Critic (SAC) based Reinforcement Learning models in Duckiematrix simulation, introduces controlled fixed and variable time delays in the environment loop, and compares classical Reinforcement Learning policies π(at | st) with action-conditioned Real-Time Reinforcement Learning policies π(at | st−1, at−1) using evaluation reward, reward variance, and episode length metrics across multiple delay distributions.

Reinforcement Learning in Duckiematrix Real-Time - highlights

The challenges

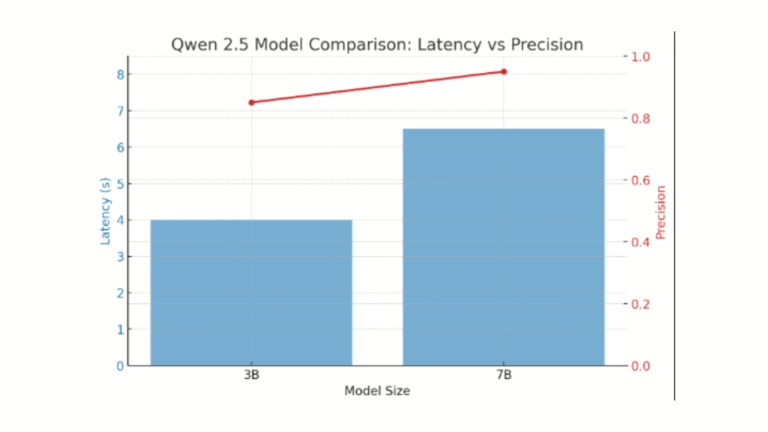

The challenges in this project involve modeling Reinforcement Learning in Duckiematrix under real-time constraints in Duckietown where computation delay violates the Markov Decision Process assumption of instantaneous state–action transitions, resulting in state–action mismatch, policy instability, and reward degradation. Fixed and variable delay distributions introduce non-stationarity, increased variance in evaluation reward, and failure modes at higher delays (≥0.1s for classical RL and ≥1.0s for both methods), while stochastic latency from neural network inference impacts policy execution timing, convergence behavior, and sample efficiency.

Additional challenges include maintaining stability in Soft Actor-Critic training under delayed feedback, handling missing training metrics, ensuring robustness across delay distributions, and evaluating performance using consistent metrics such as reward, variance, and episode length across multiple experimental conditions.

Looking for similar projects?

Check out the following works on sim-to-real with Duckietown:

Real-Time Reinforcement Learning in Duckiematrix: authors

Gabriel Sasseville is a Ph.D. student at Mila Institute in Montreal, Canada.

Guillaume Gagné-Labelle is a collaborating researcher at Mila Institute in Montreal, Canada.

Nicolas Bosteels is a Master’s Research student at Mila Institute in Montreal, Canada.

Learn more

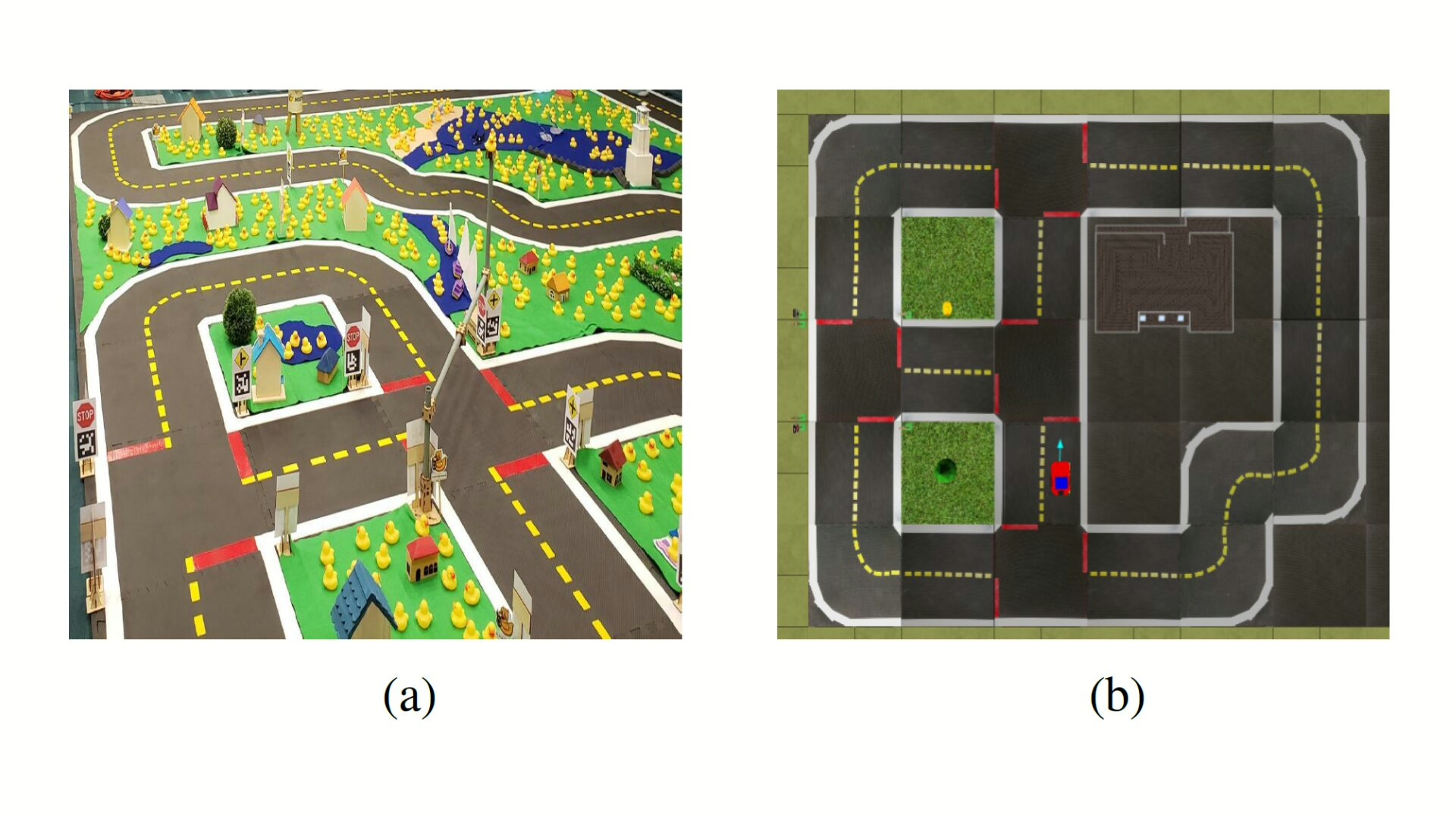

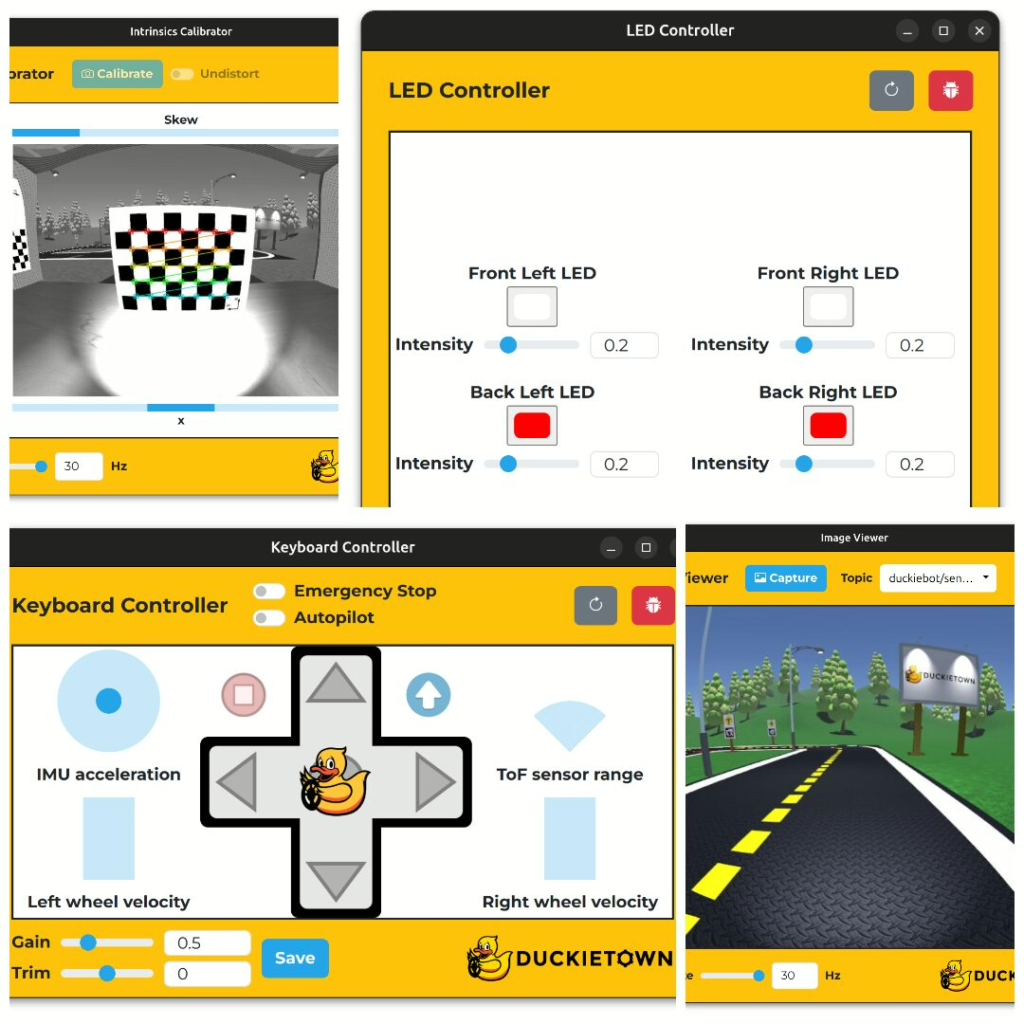

Duckietown is a modular, customizable platform for robotics and artificial intelligence education, enabling hands-on learning and real-world experimentation with autonomous systems.

Designed for teaching, learning, and research, Duckietown supports the full spectrum of autonomy development, from foundational computer science and robotics concepts to advanced AI and self-driving systems research.

These spotlight projects are shared to demonstrate how Duckietown bridges theory and practice in robotics and AI, empowering students to apply machine learning and autonomy techniques to physical robots while building practical skills valued in academic research and industry.