General Information

- Title: Visual Urban Navigation for Mobile Robots: Implementation in the Duckietown Environment

- Authors: Shima Akbari, Nima Akbari, Giuseppe Oriolo, Sergio Galeani

- Institution: Università degli Studi di Roma Tor Vergata, Italy

- Citation: S. Akbari, N. Akbari, G. Oriolo and S. Galeani, "Visual Urban Navigation for Mobile Robots: Implementation in the Duckietown Environment," 2025 International Conference on Control, Automation and Diagnosis (ICCAD), Barcelona, Spain, 2025, pp. 1-6, doi: 10.1109/ICCAD64771.2025.11099311.

Visual Control for Autonomous Navigation in Duckietown

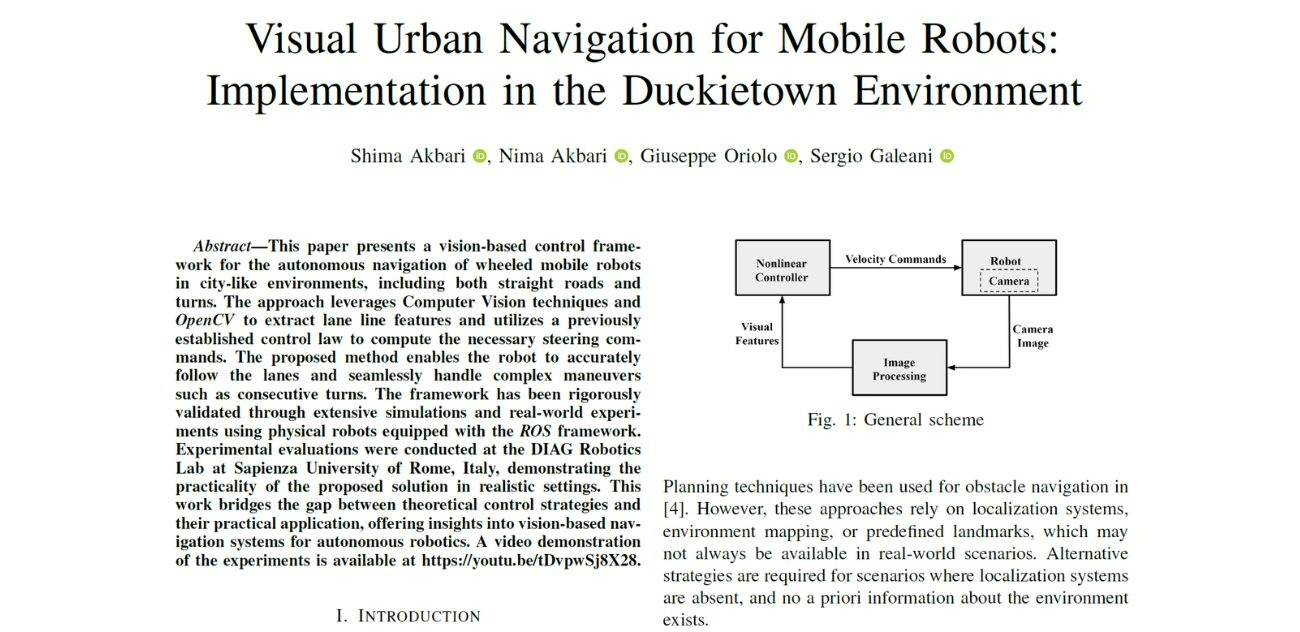

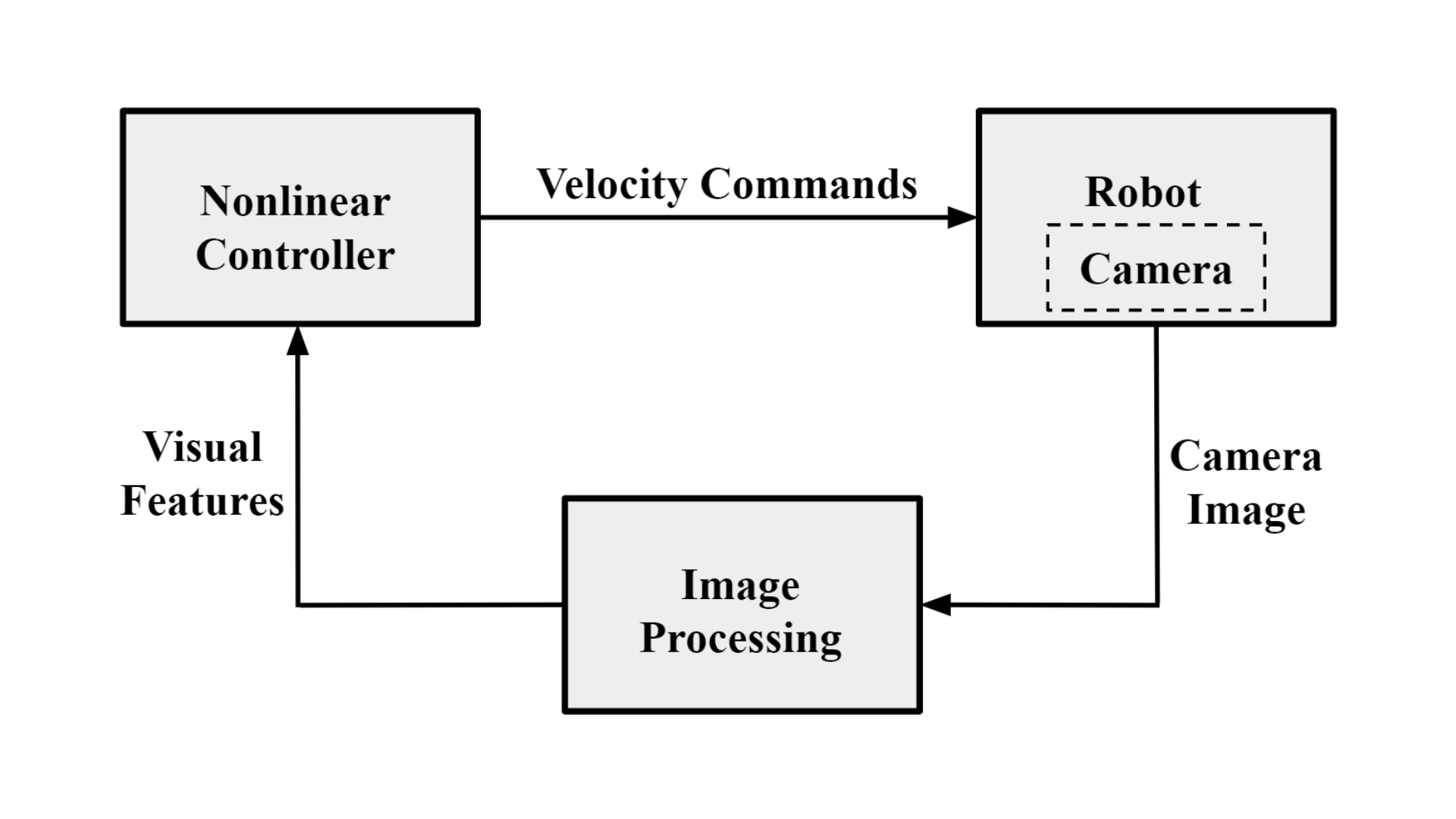

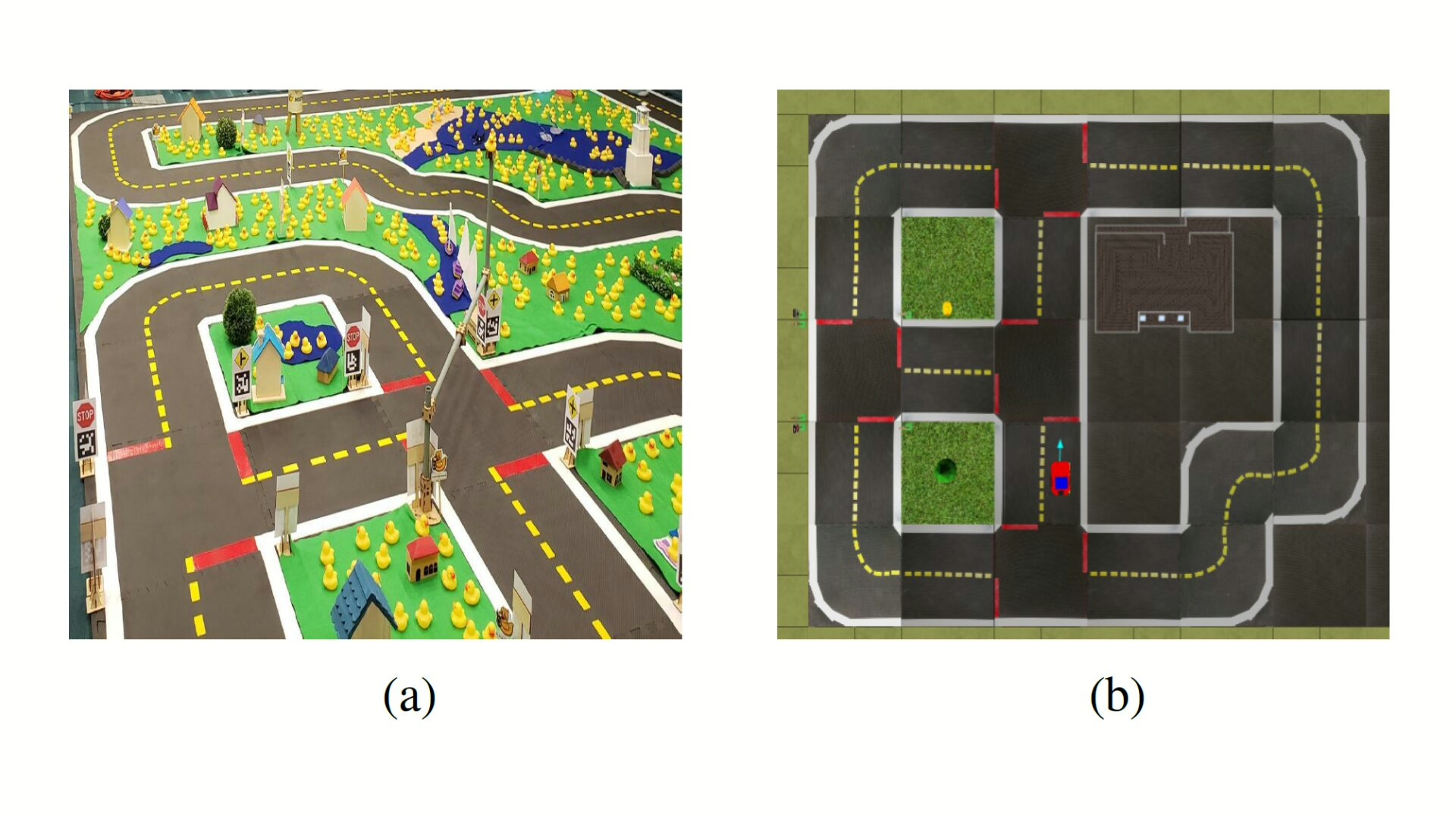

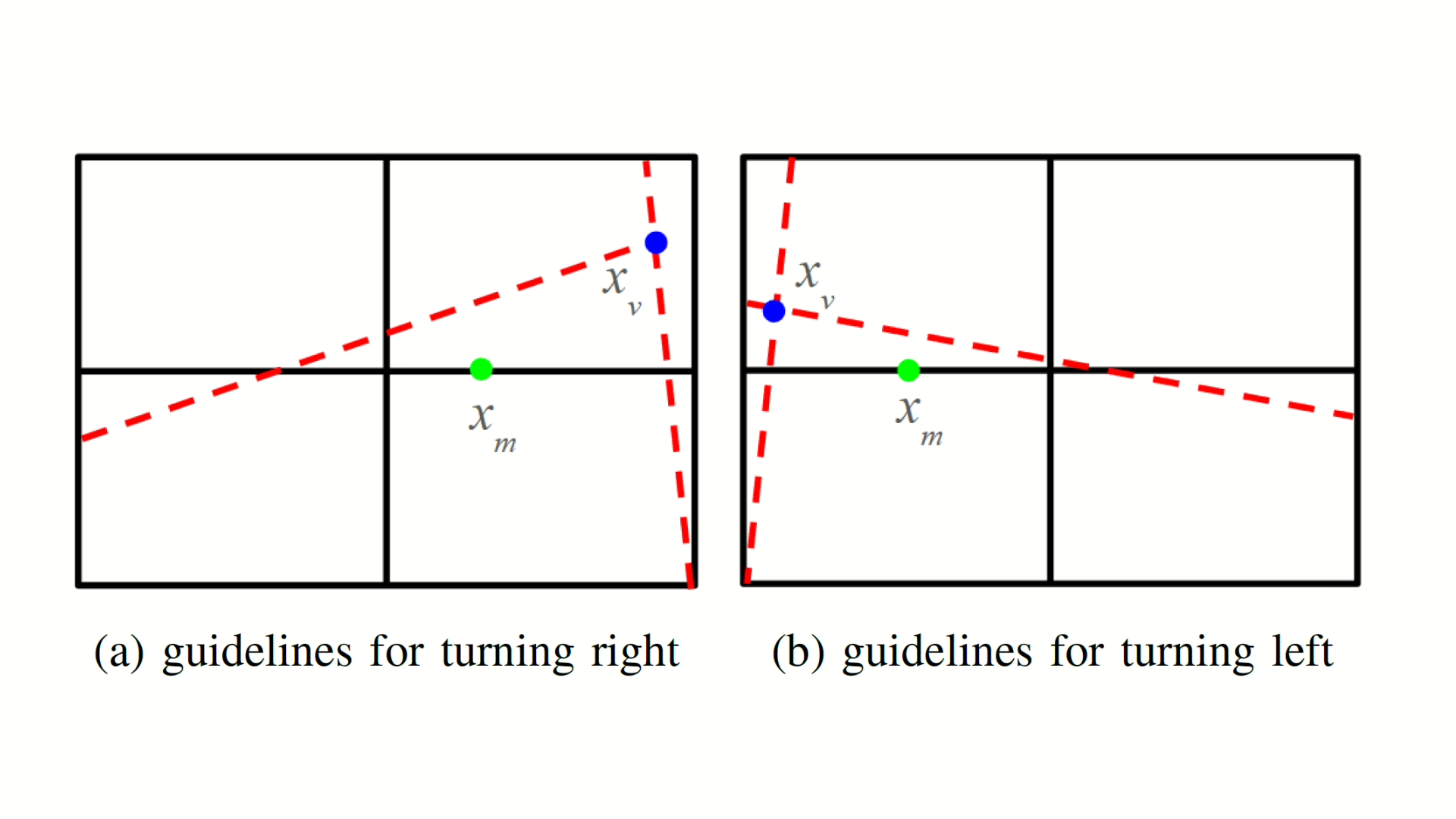

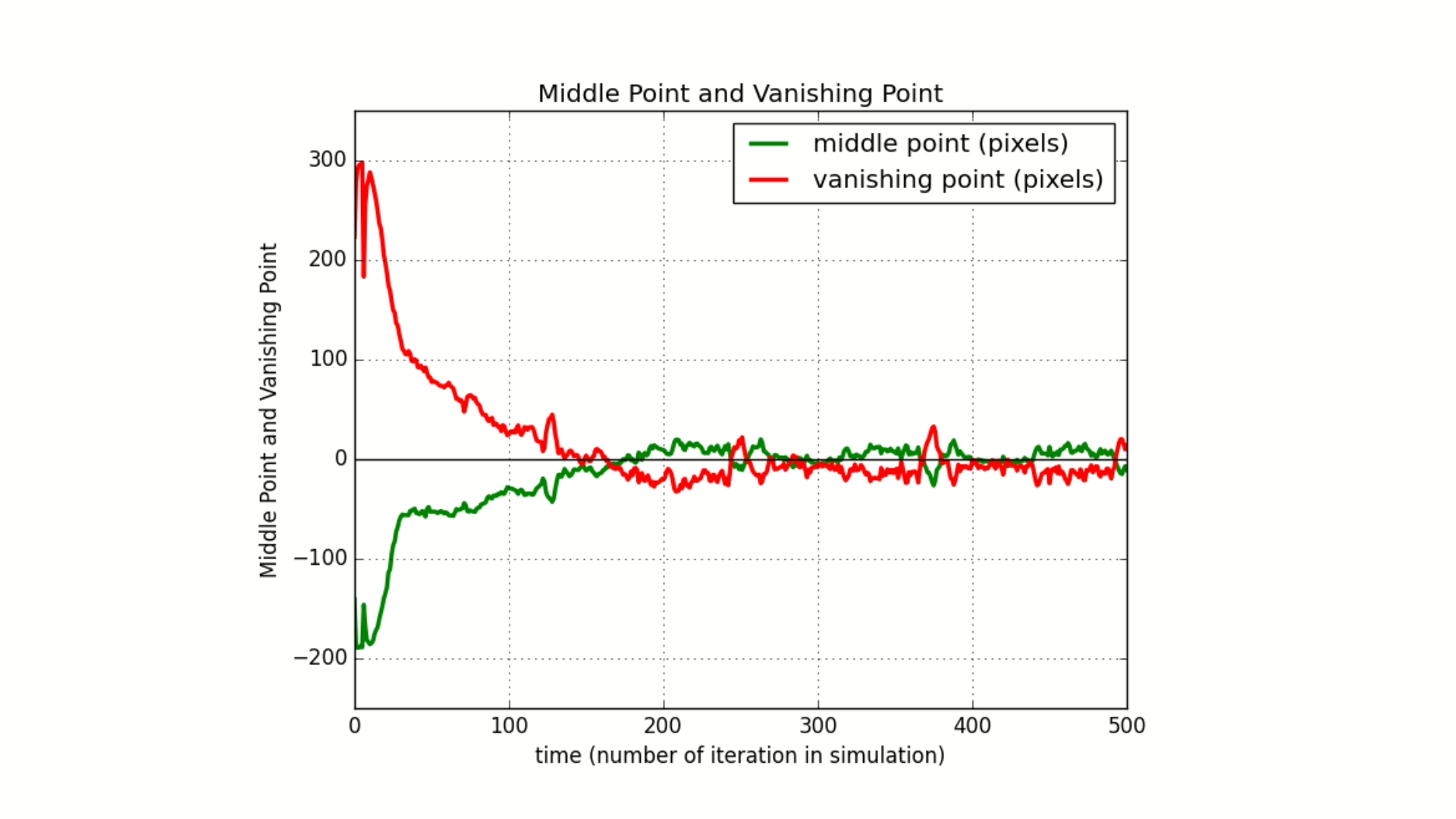

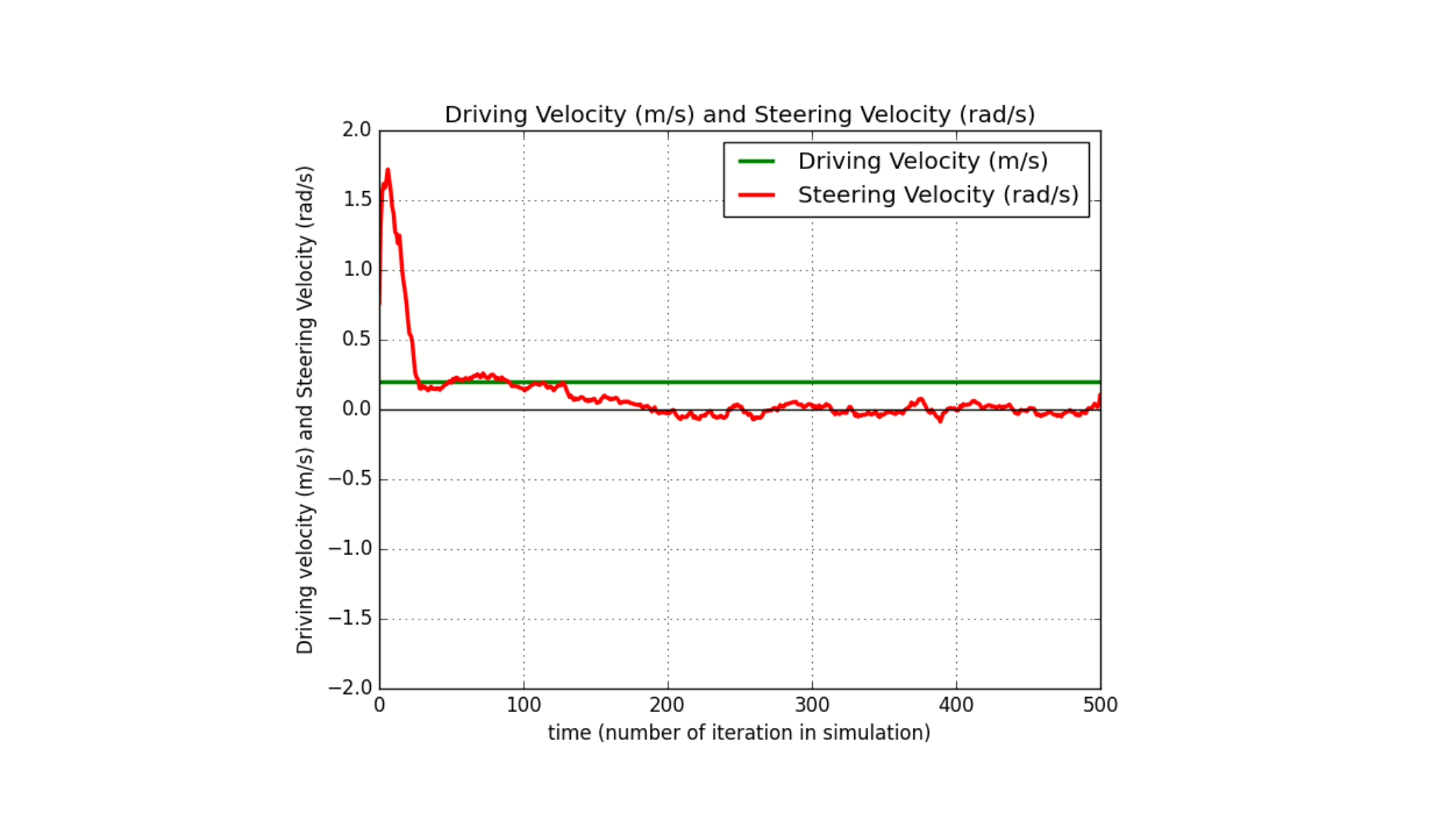

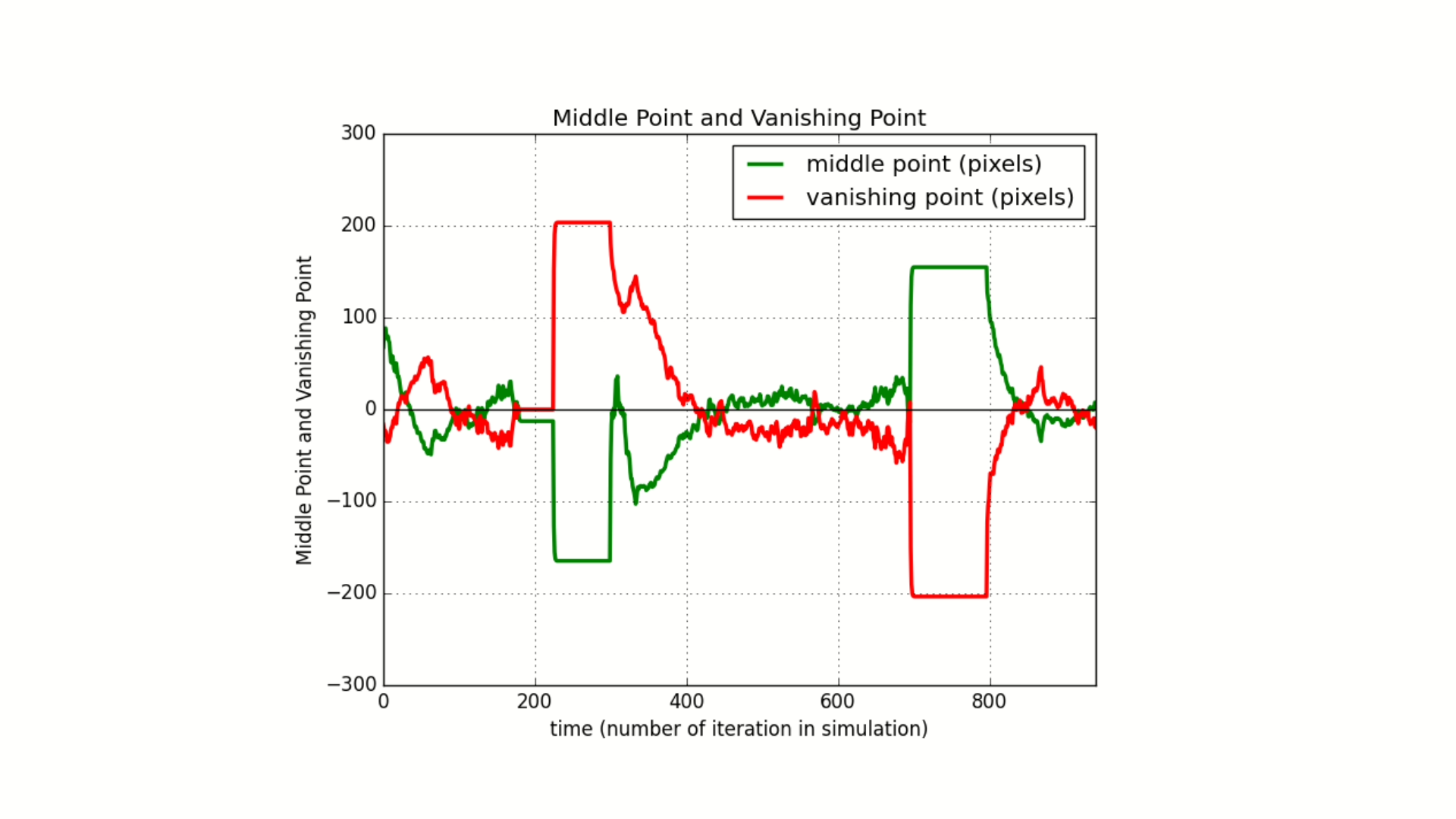

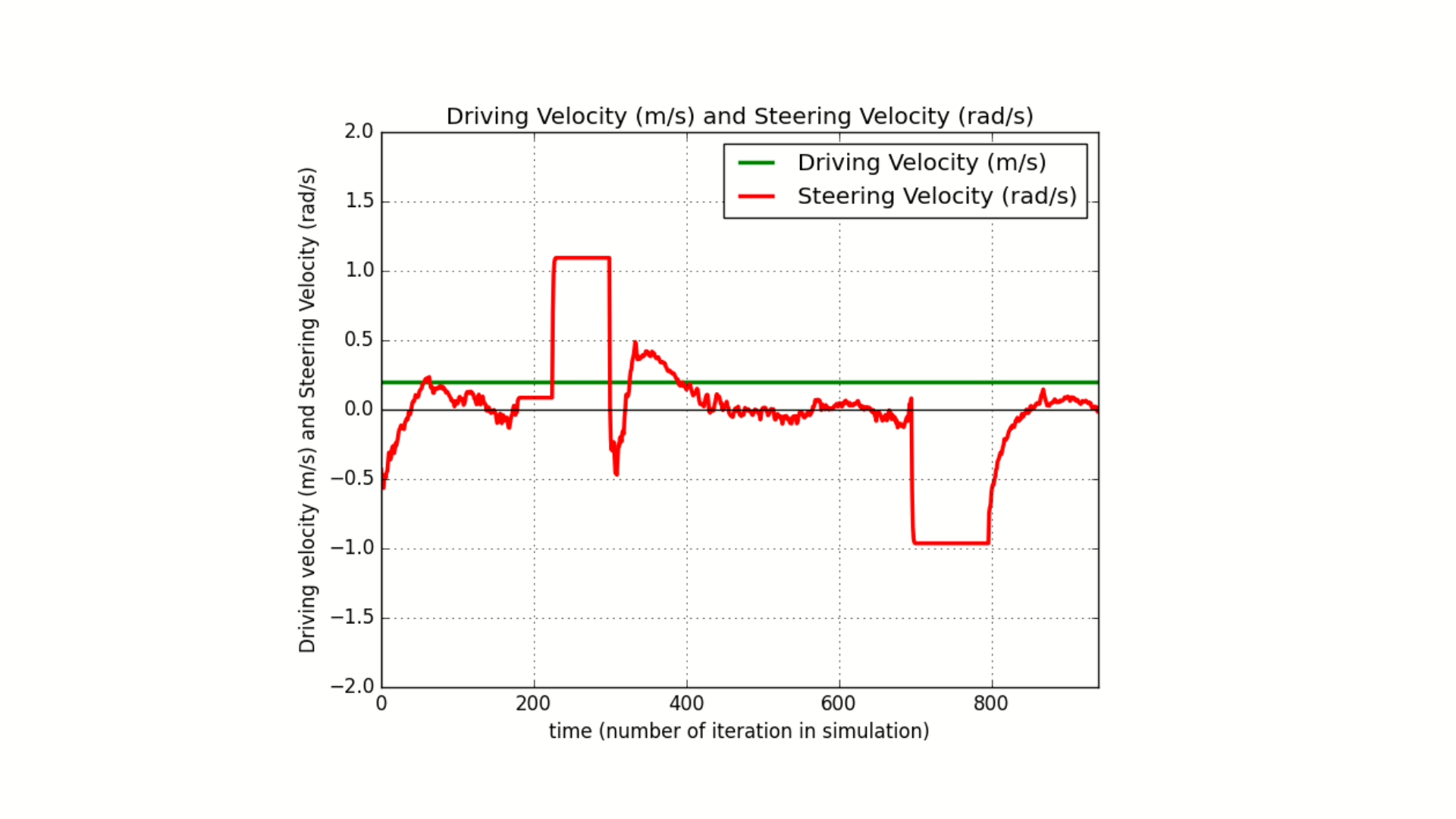

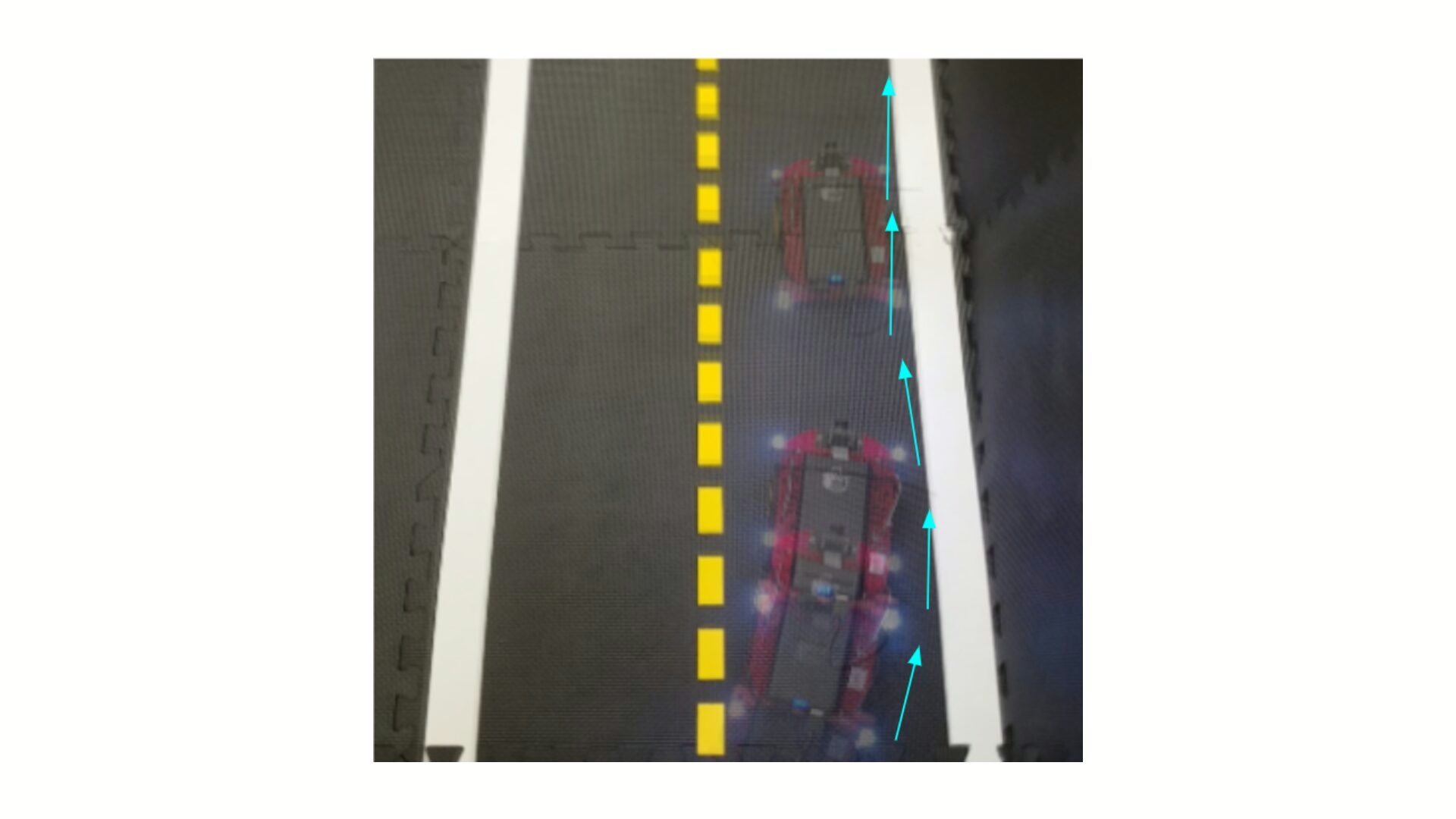

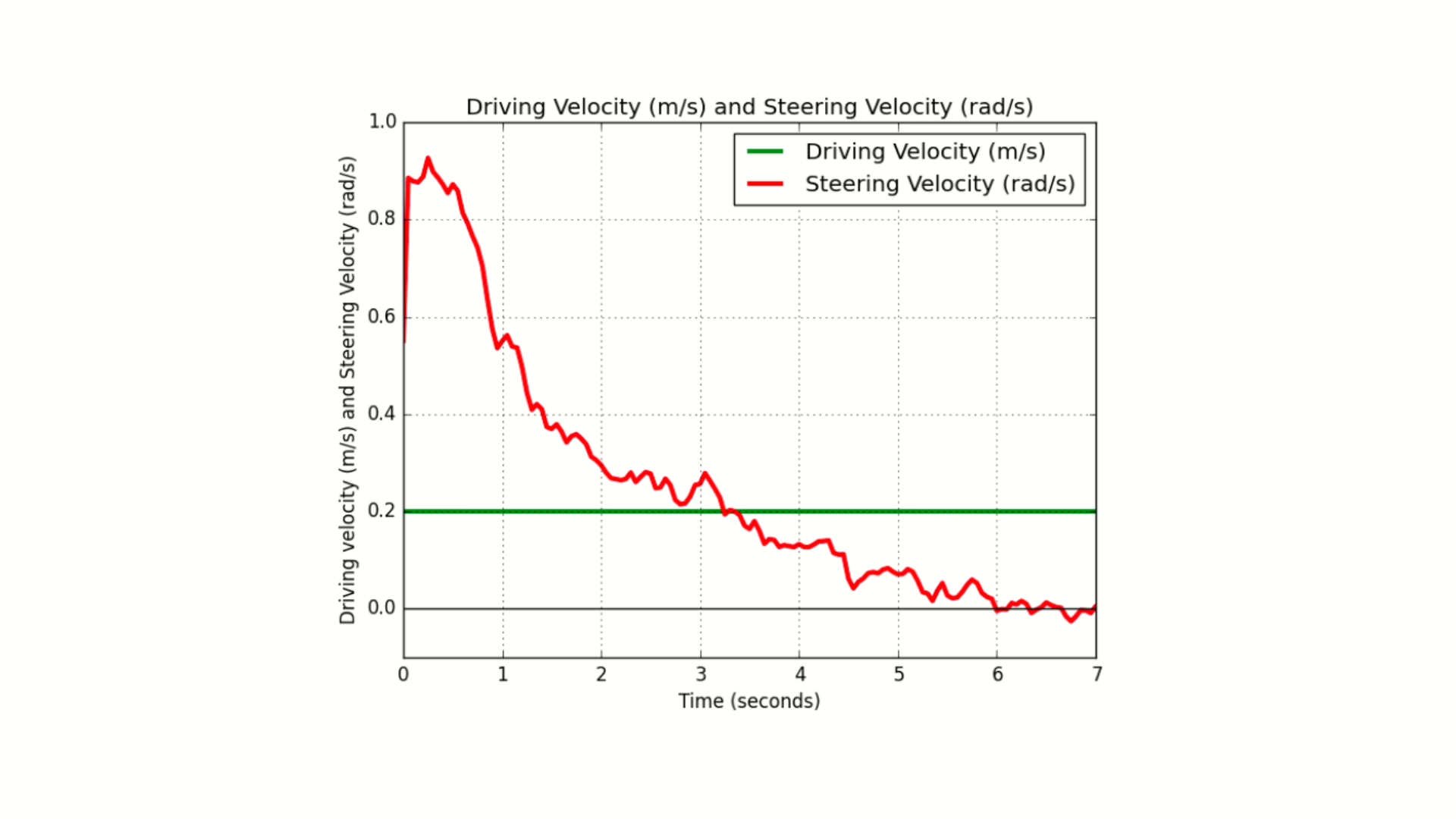

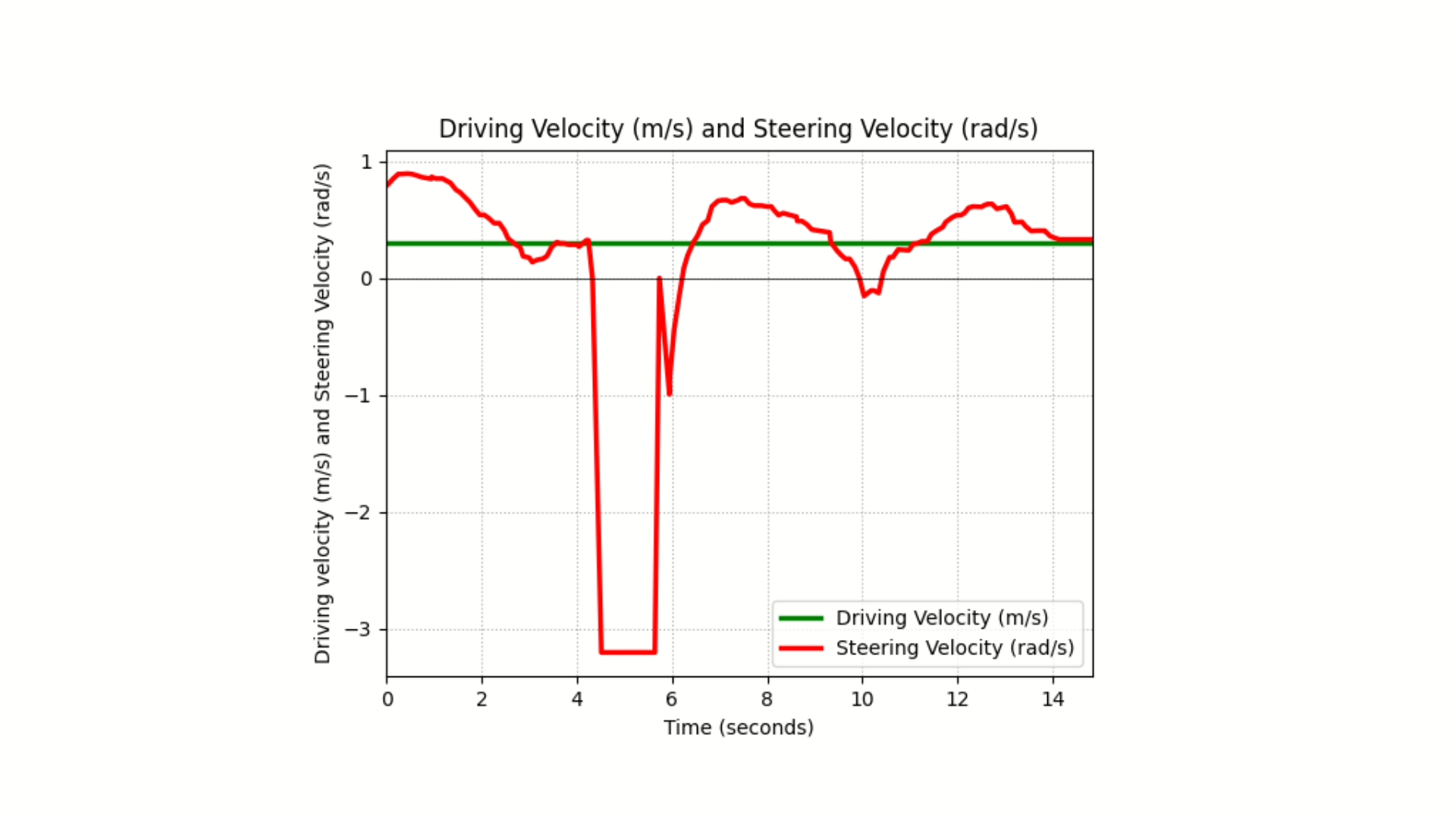

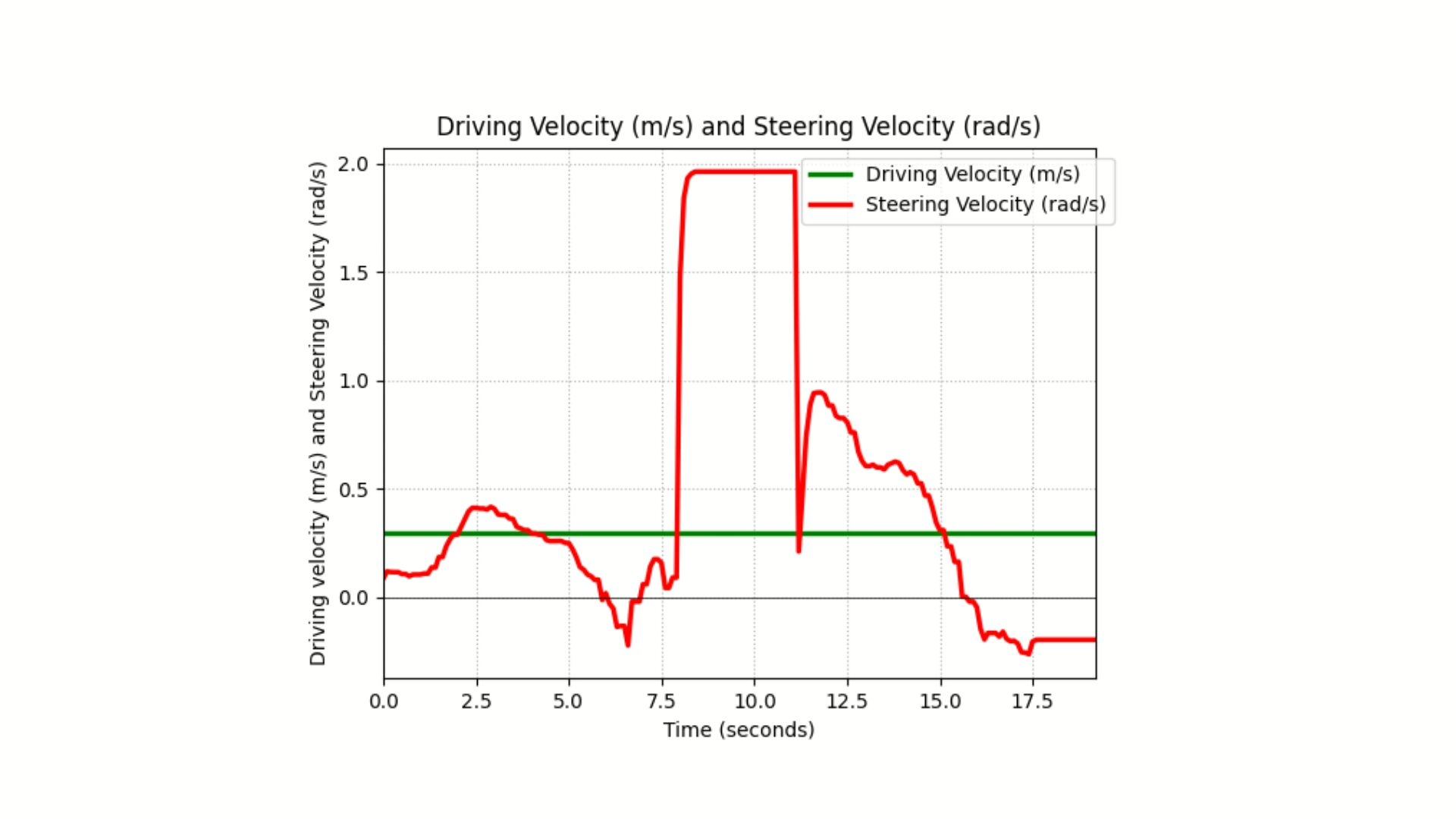

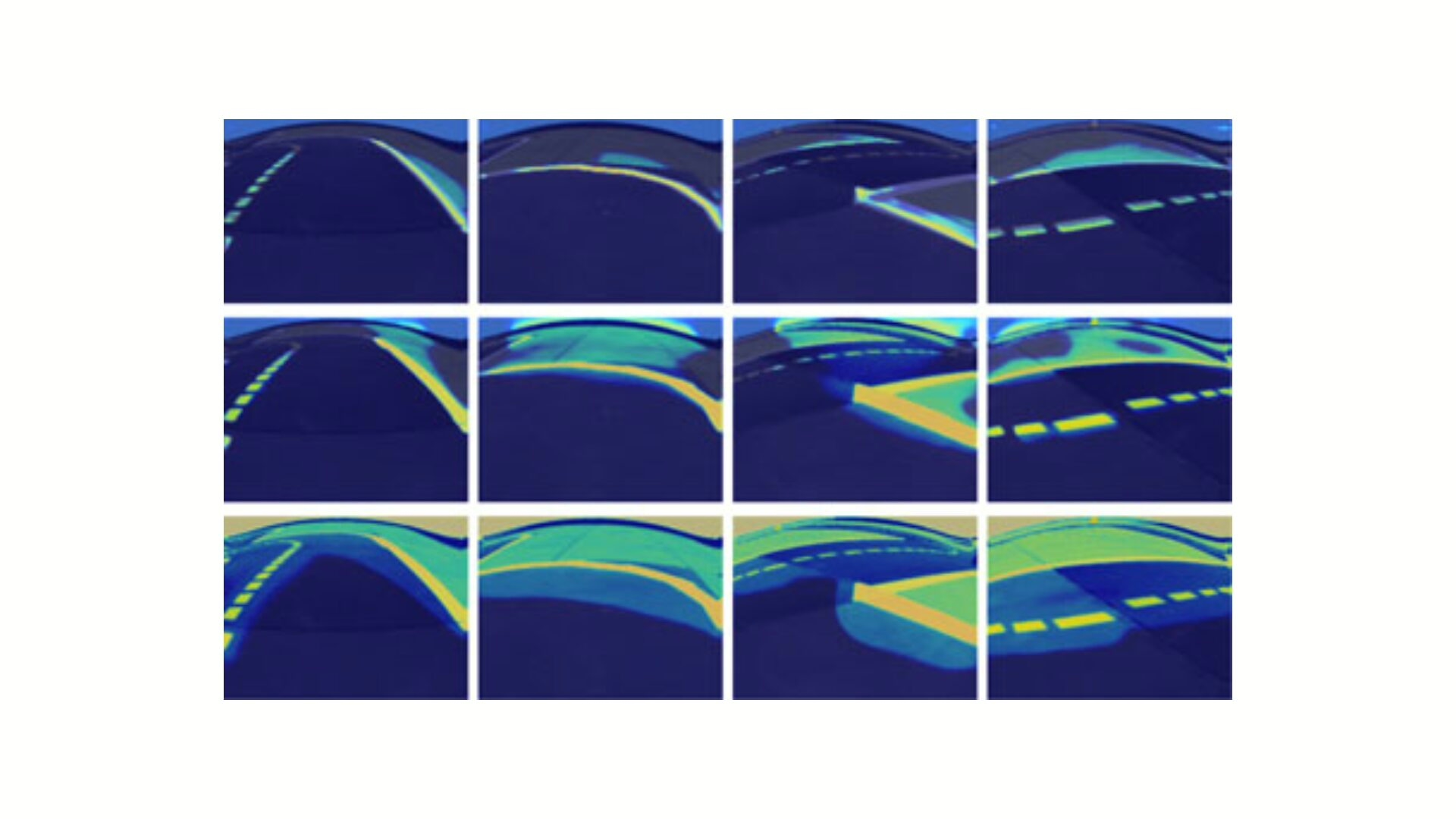

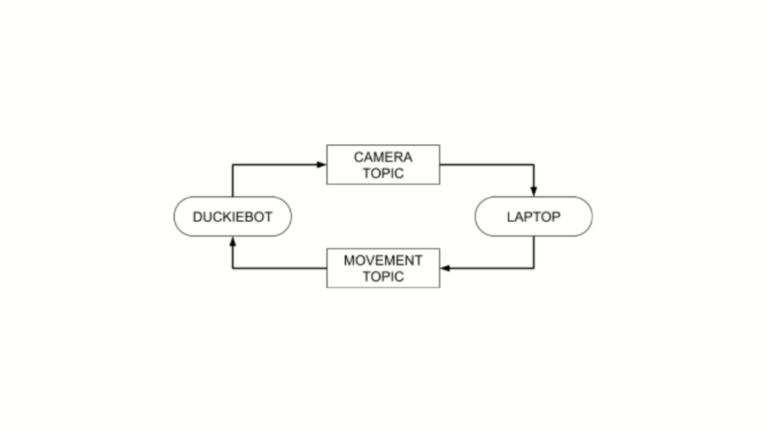

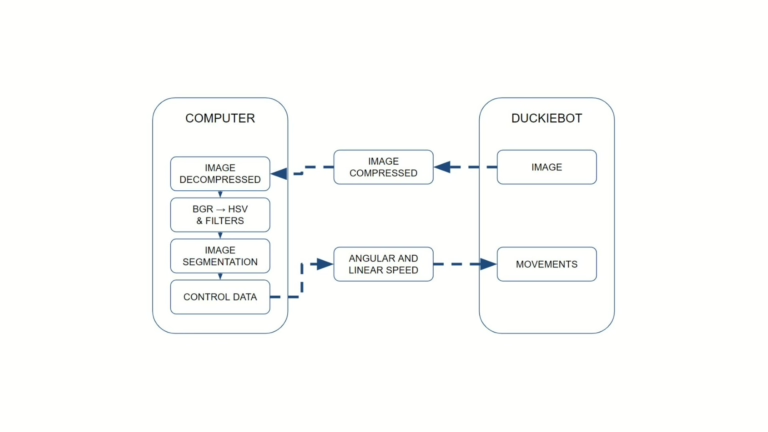

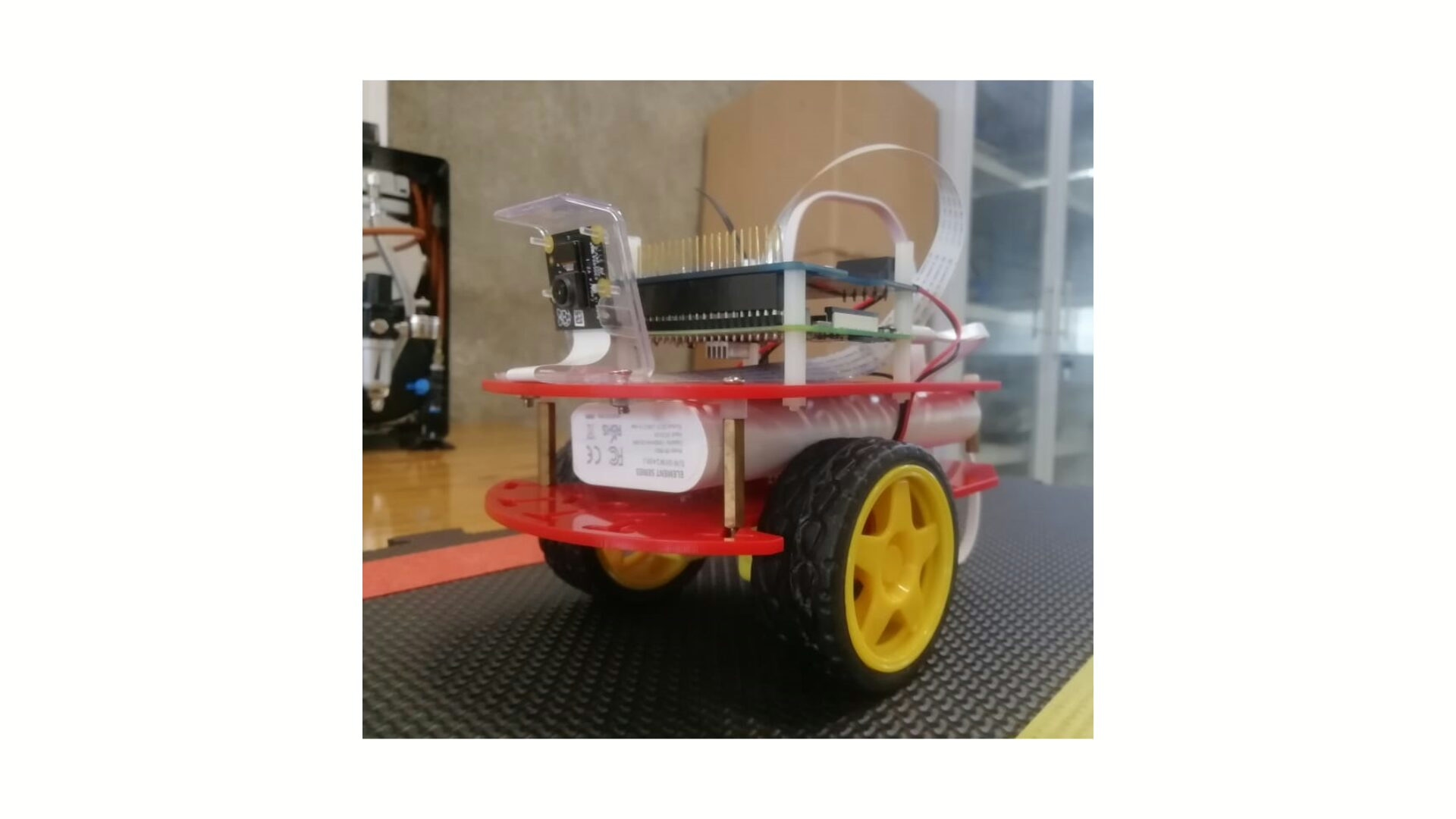

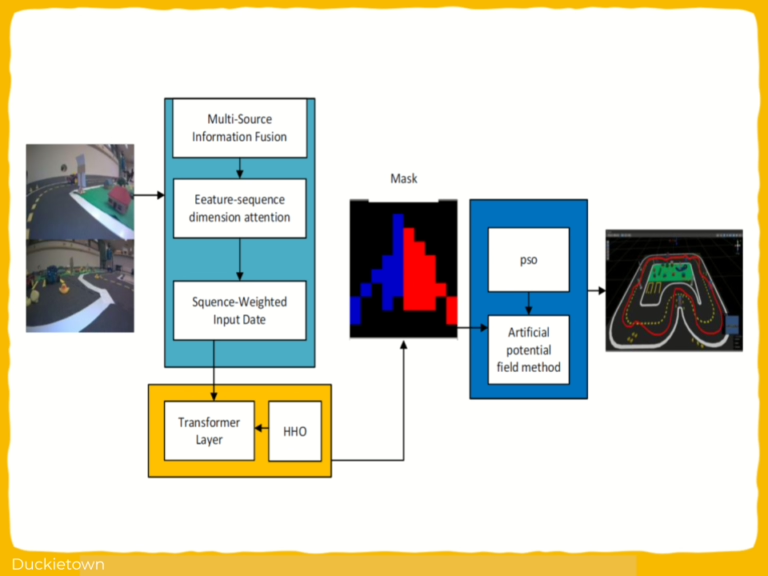

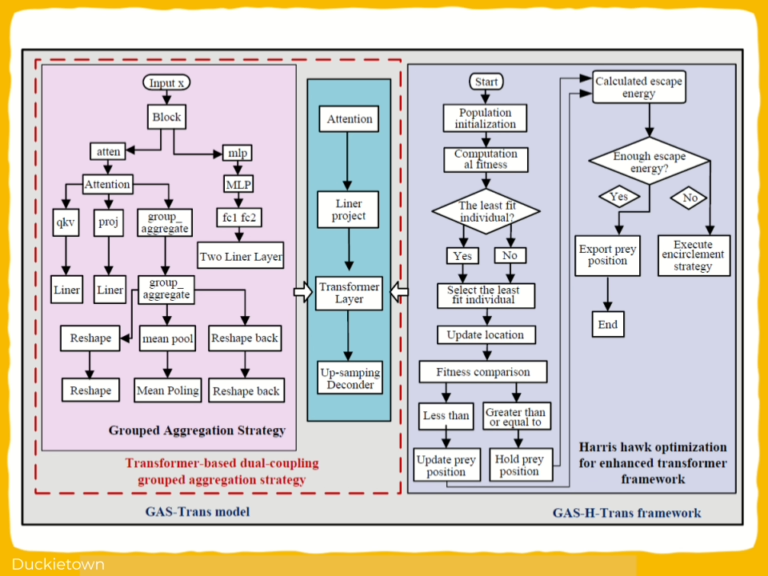

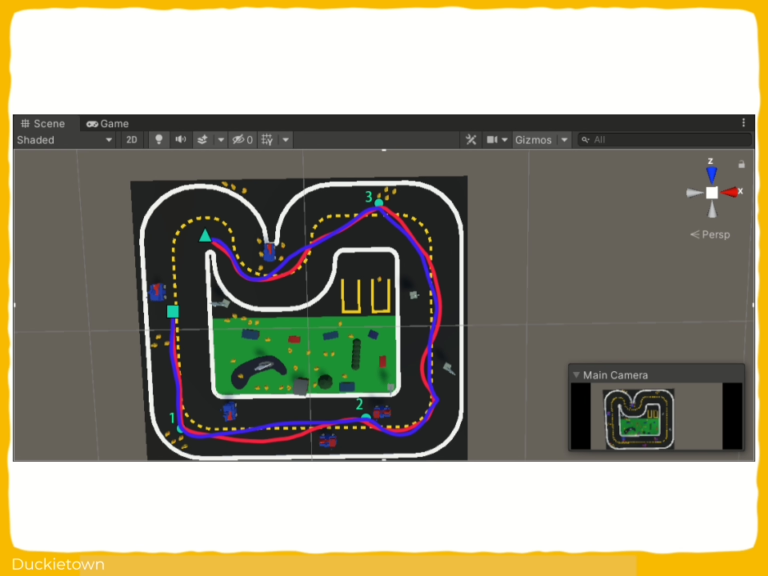

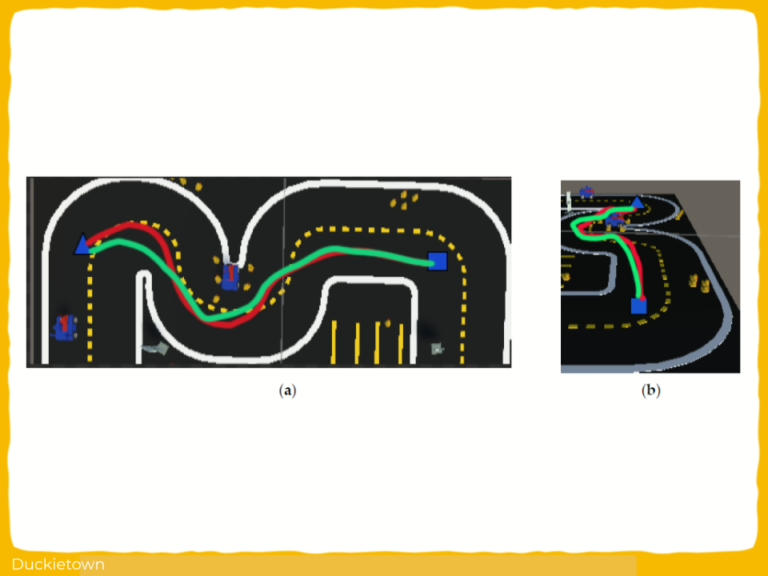

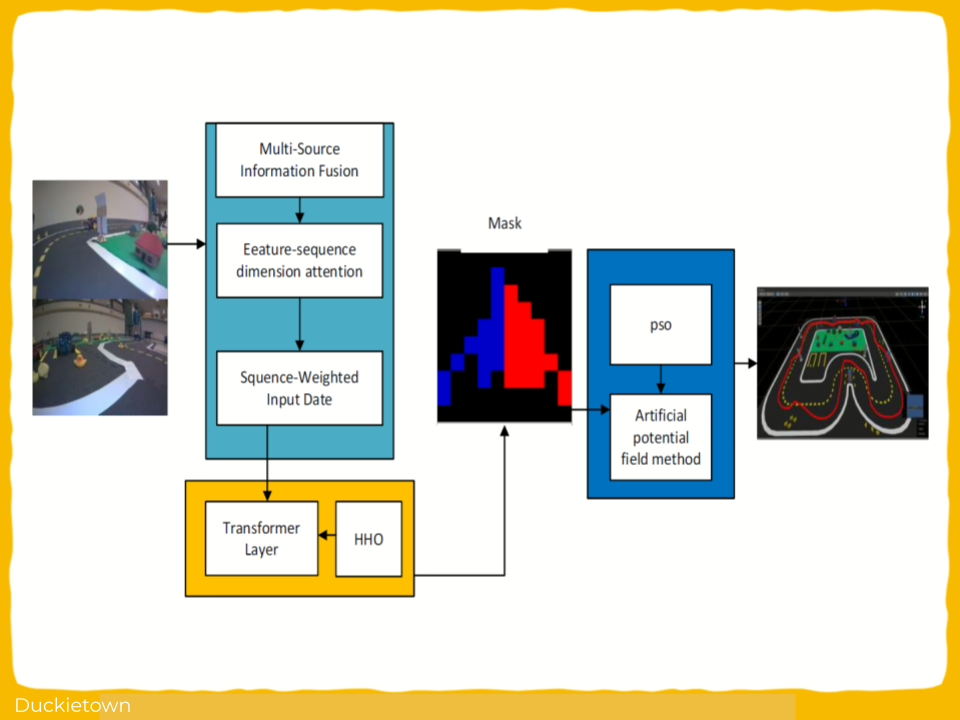

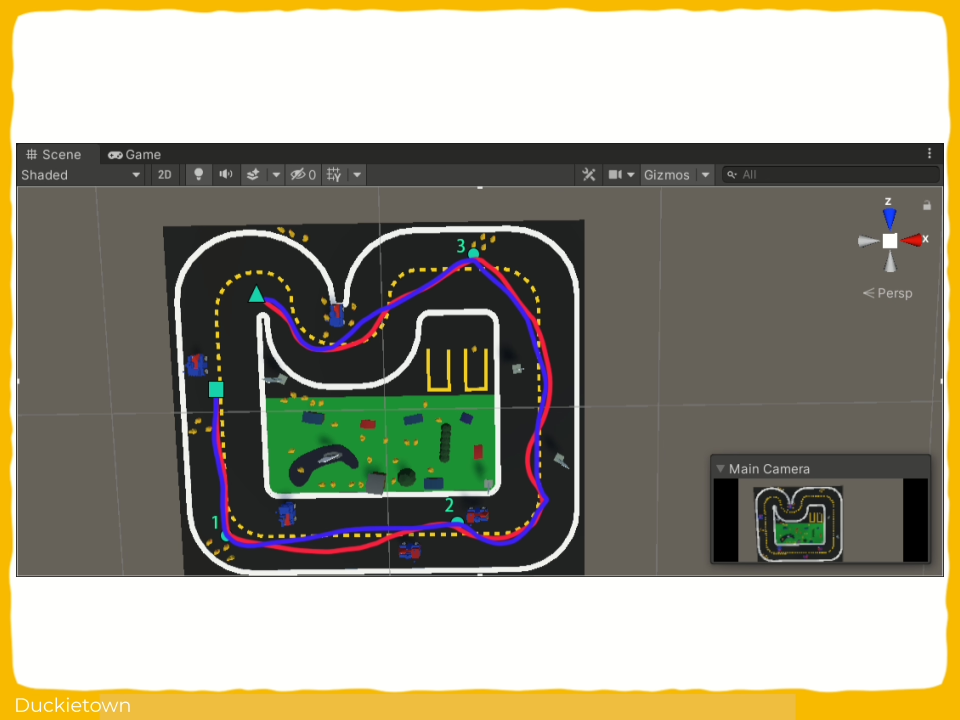

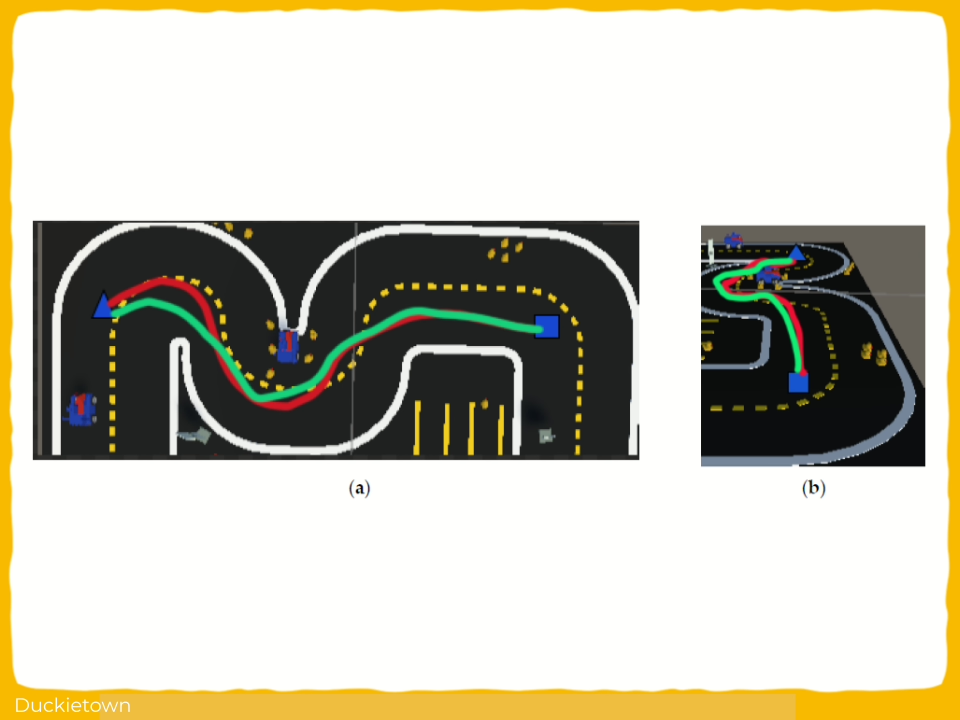

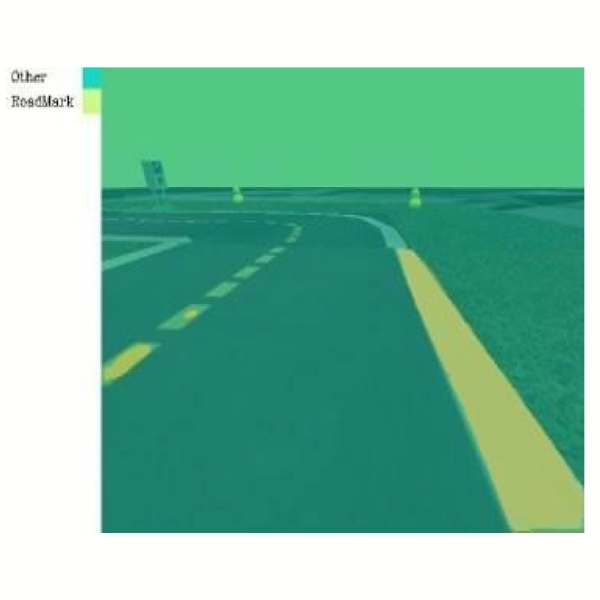

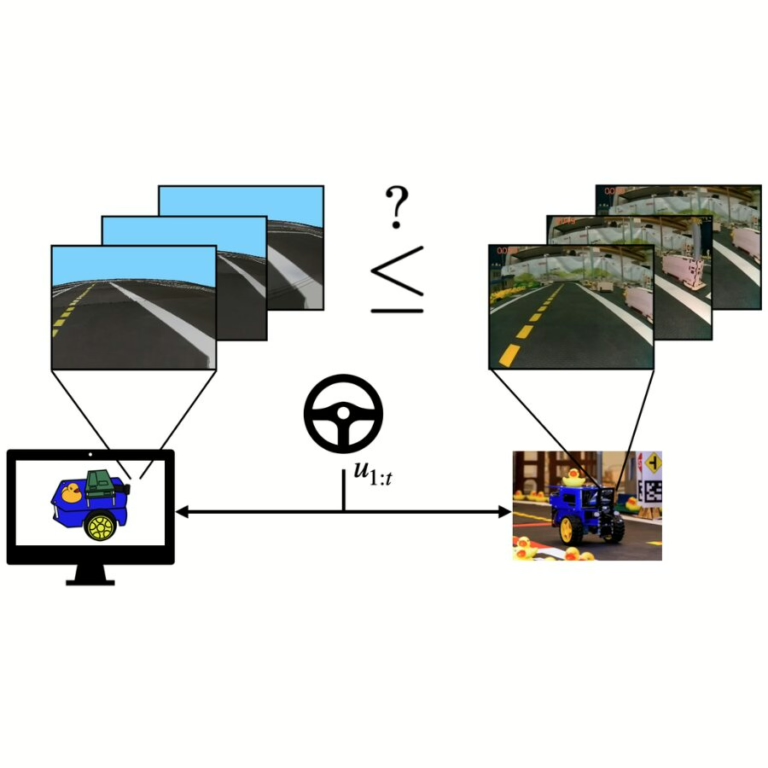

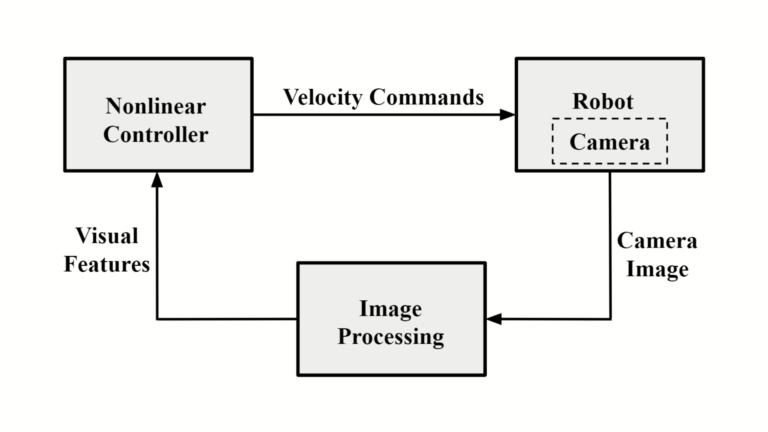

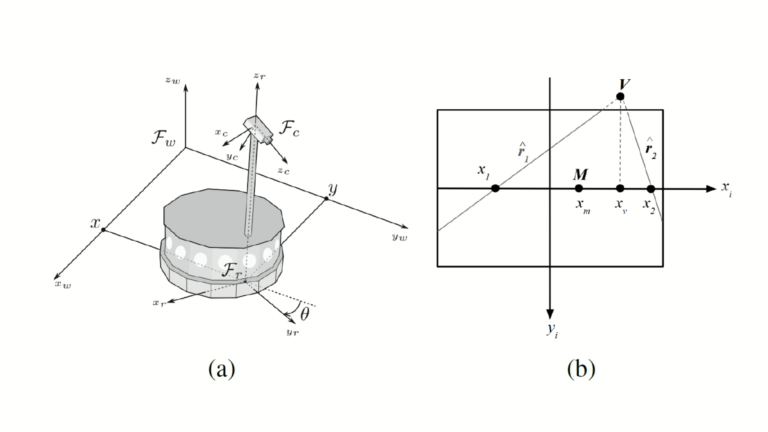

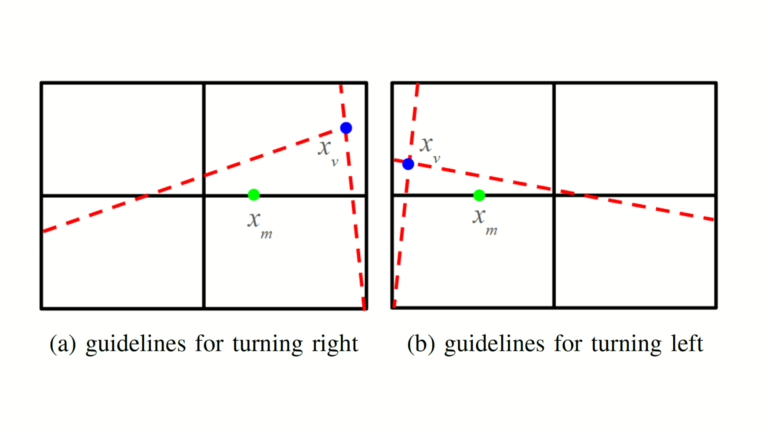

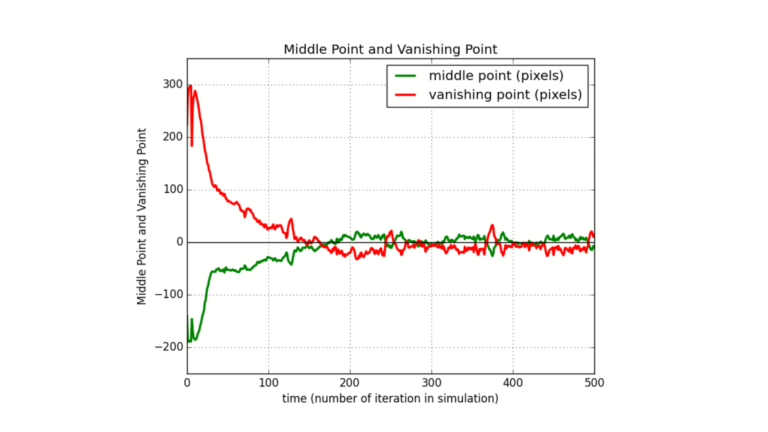

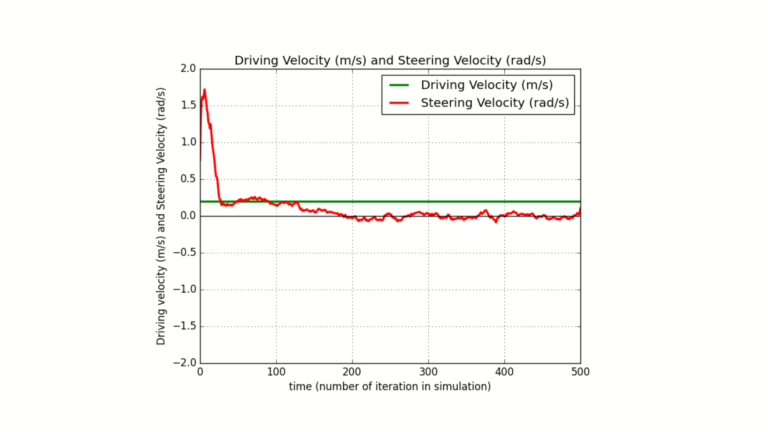

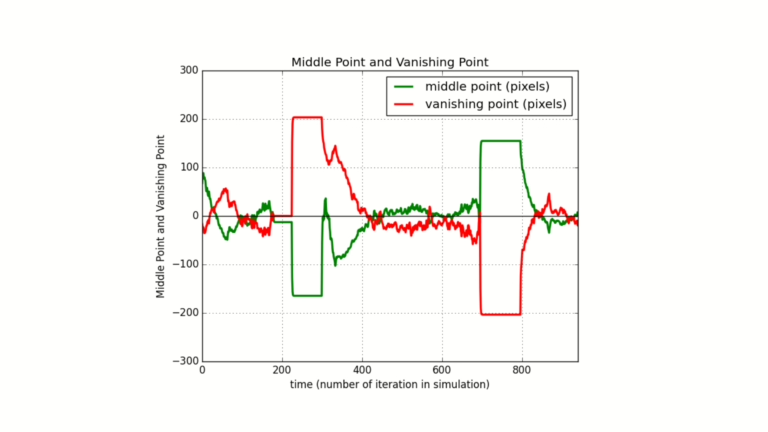

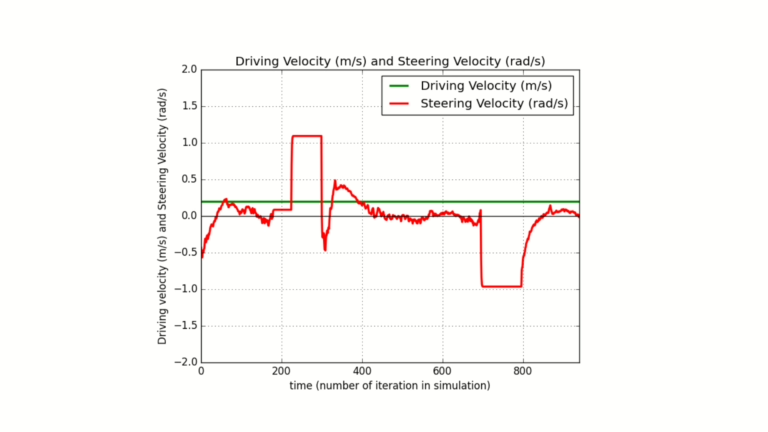

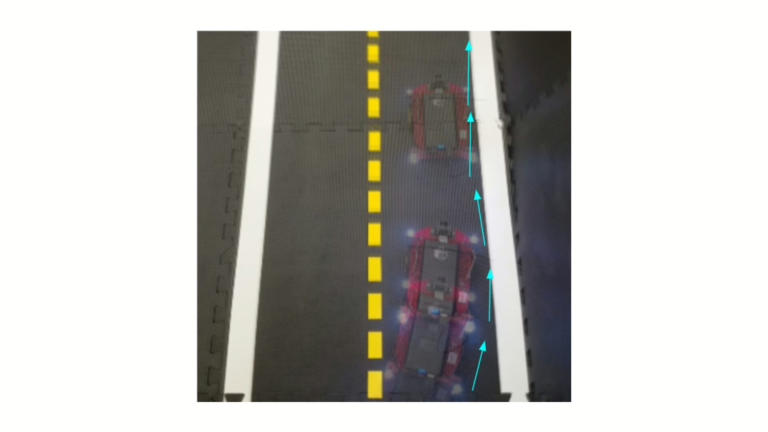

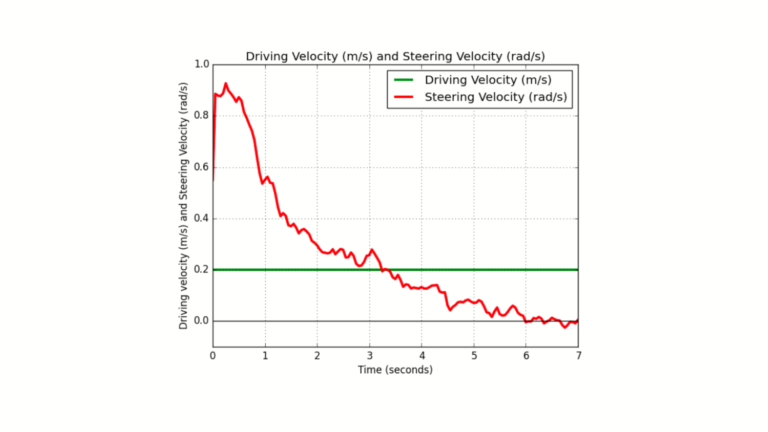

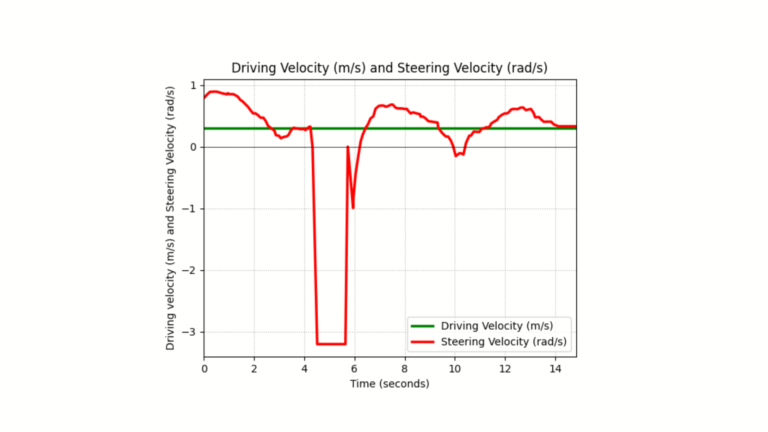

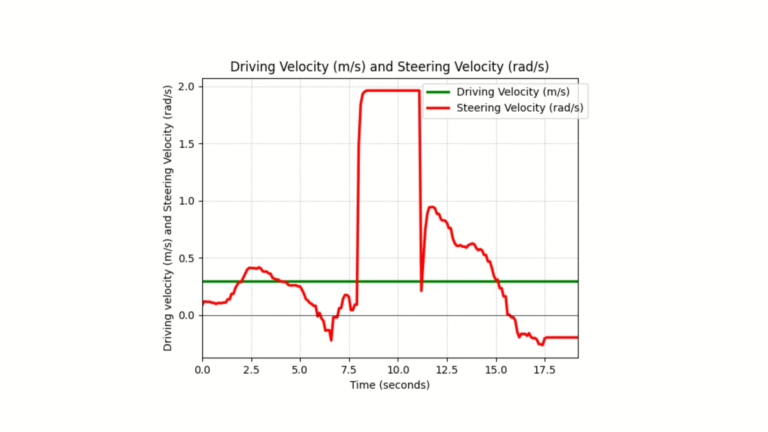

This research presents a visual control framework for autonomous navigation in Duckietown that relies only on feedback from the onboard camera. The system models the Duckiebot as a unicycle with constant driving velocity and uses the steering velocity as the control input. Virtual guidelines are extracted from the lane boundaries to compute two visual features on the image plane: the middle point and the vanishing point.
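
As a rough illustration of the quantities named above, the sketch below integrates a unicycle model for one time step and computes the two visual features from a pair of guidelines given as image-plane segments. The function names, the segment format `(x1, y1, x2, y2)`, and the definition of the middle point as the midpoint of the guidelines' crossings with the bottom image row are assumptions made for this example; the paper should be consulted for the exact definitions.

```python
import math

def unicycle_step(x, y, theta, v, omega, dt):
    """One Euler step of the unicycle model.
    v: constant driving velocity, omega: steering velocity (control input)."""
    x += v * math.cos(theta) * dt
    y += v * math.sin(theta) * dt
    theta += omega * dt
    return x, y, theta

def line_x_at(line, row):
    """x-coordinate where the line through (x1, y1), (x2, y2) crosses a row."""
    x1, y1, x2, y2 = line
    if y1 == y2:
        raise ValueError("a horizontal line does not cross a single row")
    t = (row - y1) / (y2 - y1)
    return x1 + t * (x2 - x1)

def vanishing_point(left, right):
    """Intersection of the two guidelines, or None if they are parallel."""
    x1, y1, x2, y2 = left
    x3, y3, x4, y4 = right
    denom = (x1 - x2) * (y3 - y4) - (y1 - y2) * (x3 - x4)
    if abs(denom) < 1e-9:
        return None
    a = x1 * y2 - y1 * x2
    b = x3 * y4 - y3 * x4
    px = (a * (x3 - x4) - (x1 - x2) * b) / denom
    py = (a * (y3 - y4) - (y1 - y2) * b) / denom
    return px, py

def middle_point_x(left, right, bottom_row):
    """Assumed definition: midpoint of the guideline crossings at bottom_row."""
    return 0.5 * (line_x_at(left, bottom_row) + line_x_at(right, bottom_row))

# Hypothetical guidelines in a 640x480 image, symmetric about x = 320.
left = (100, 480, 300, 240)
right = (540, 480, 340, 240)
vp = vanishing_point(left, right)
xm = middle_point_x(left, right, bottom_row=480)
```

With these symmetric guidelines both features lie on the vertical center line of the image, which is the configuration the controller tries to reach.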

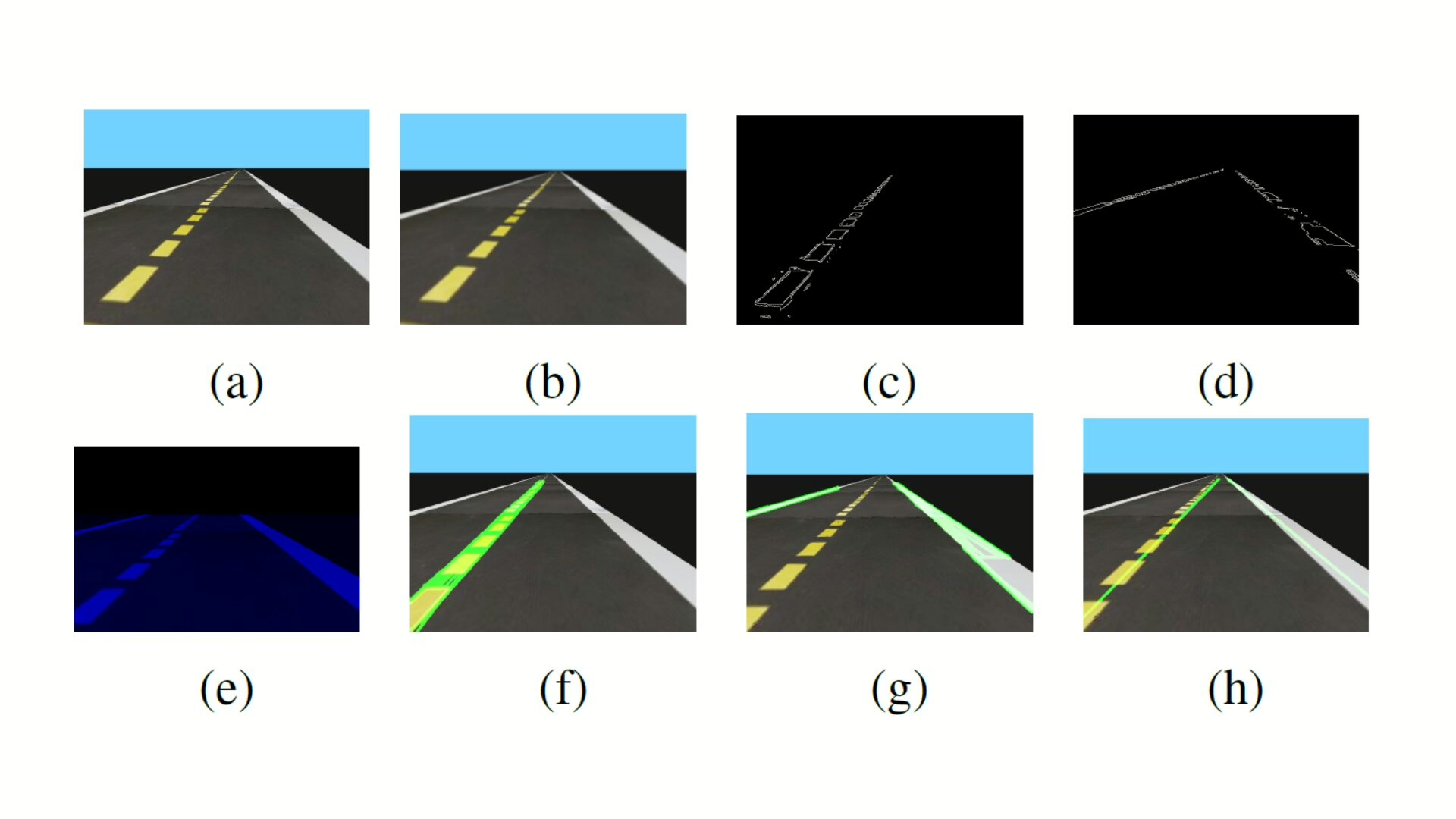

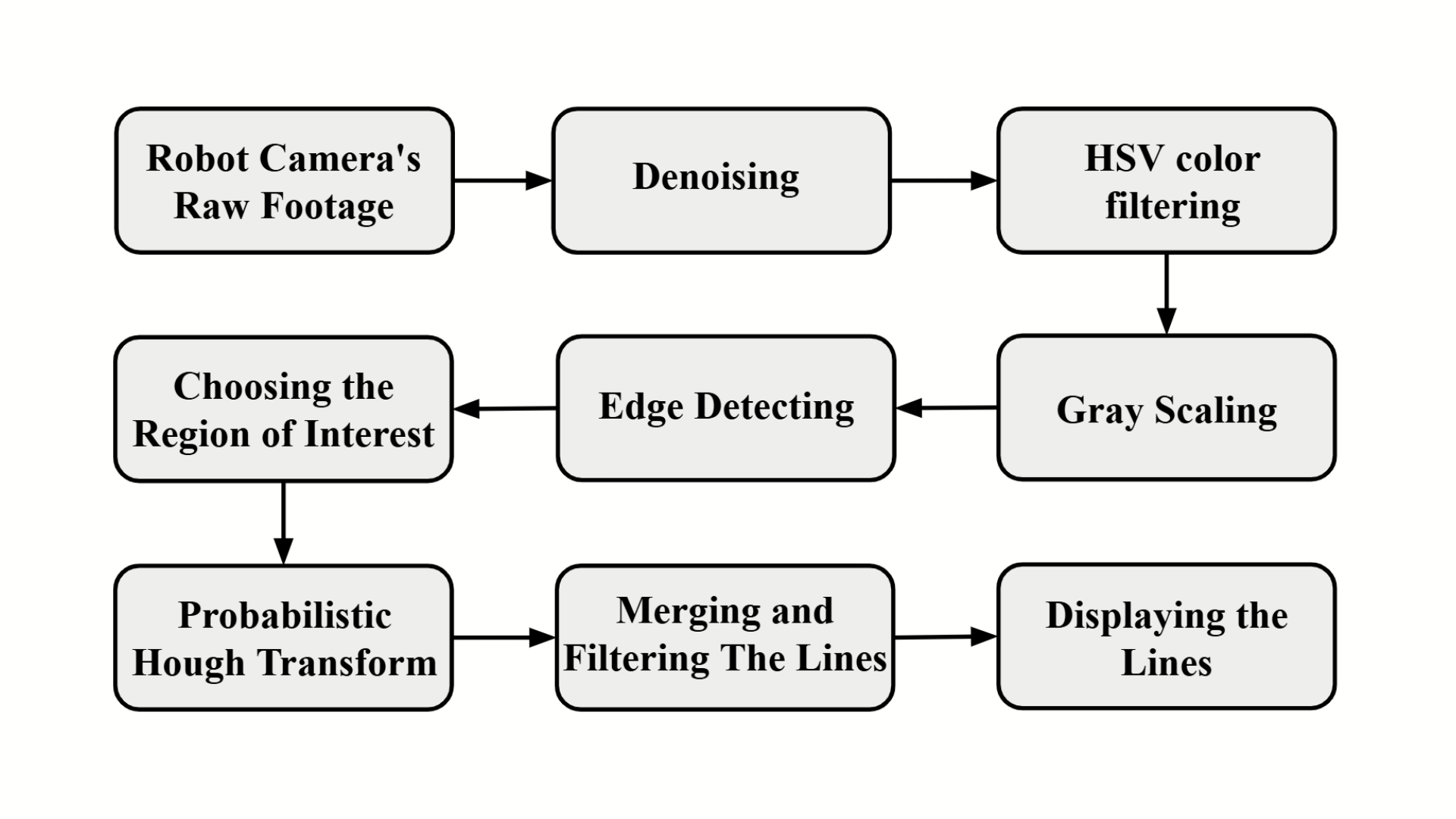

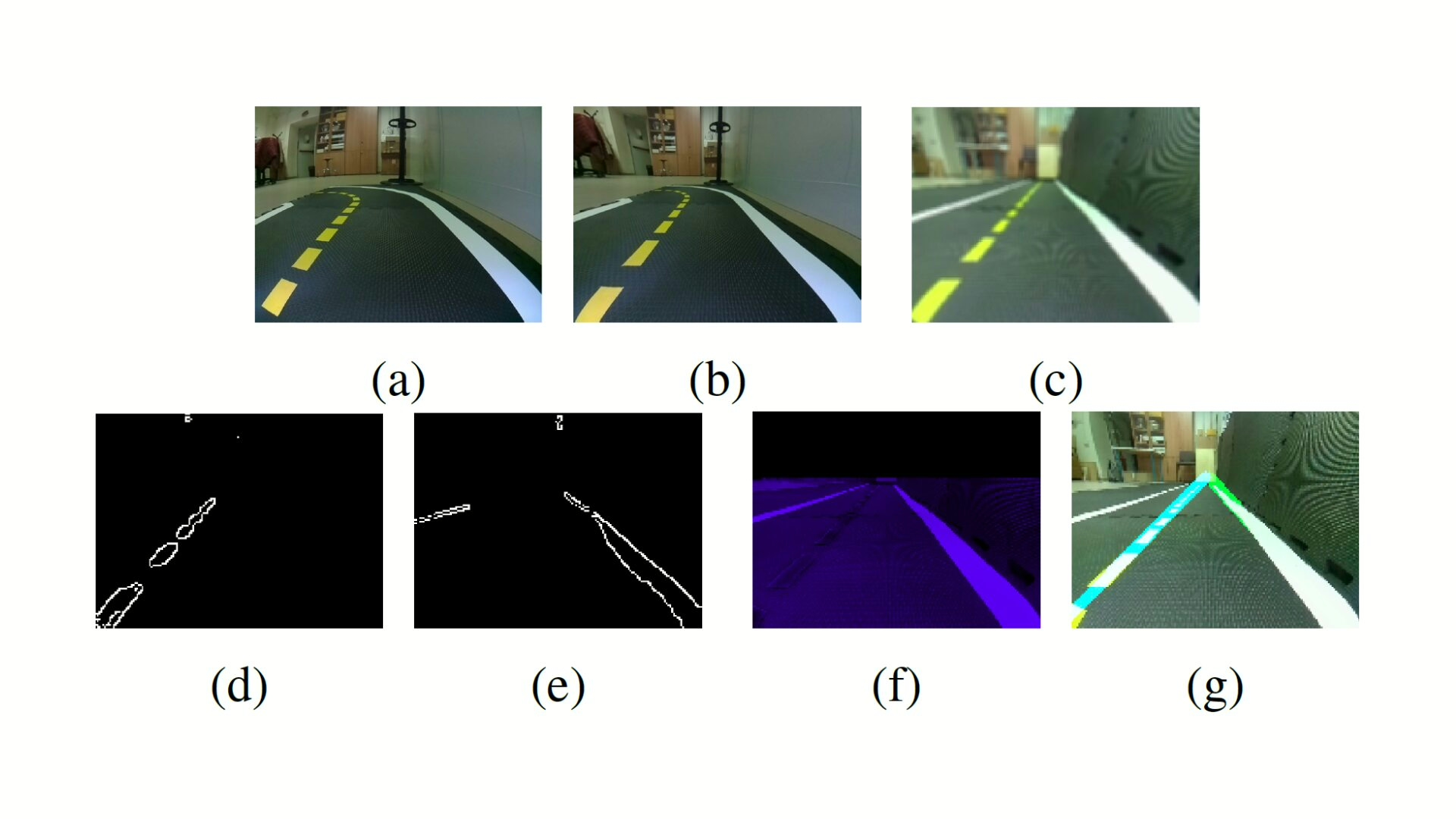

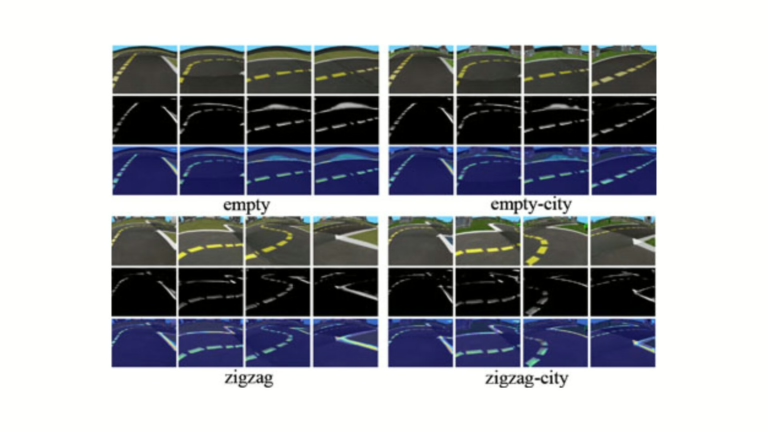

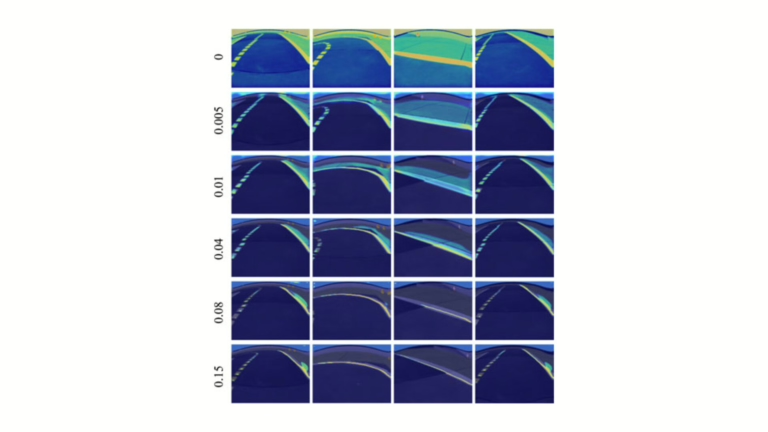

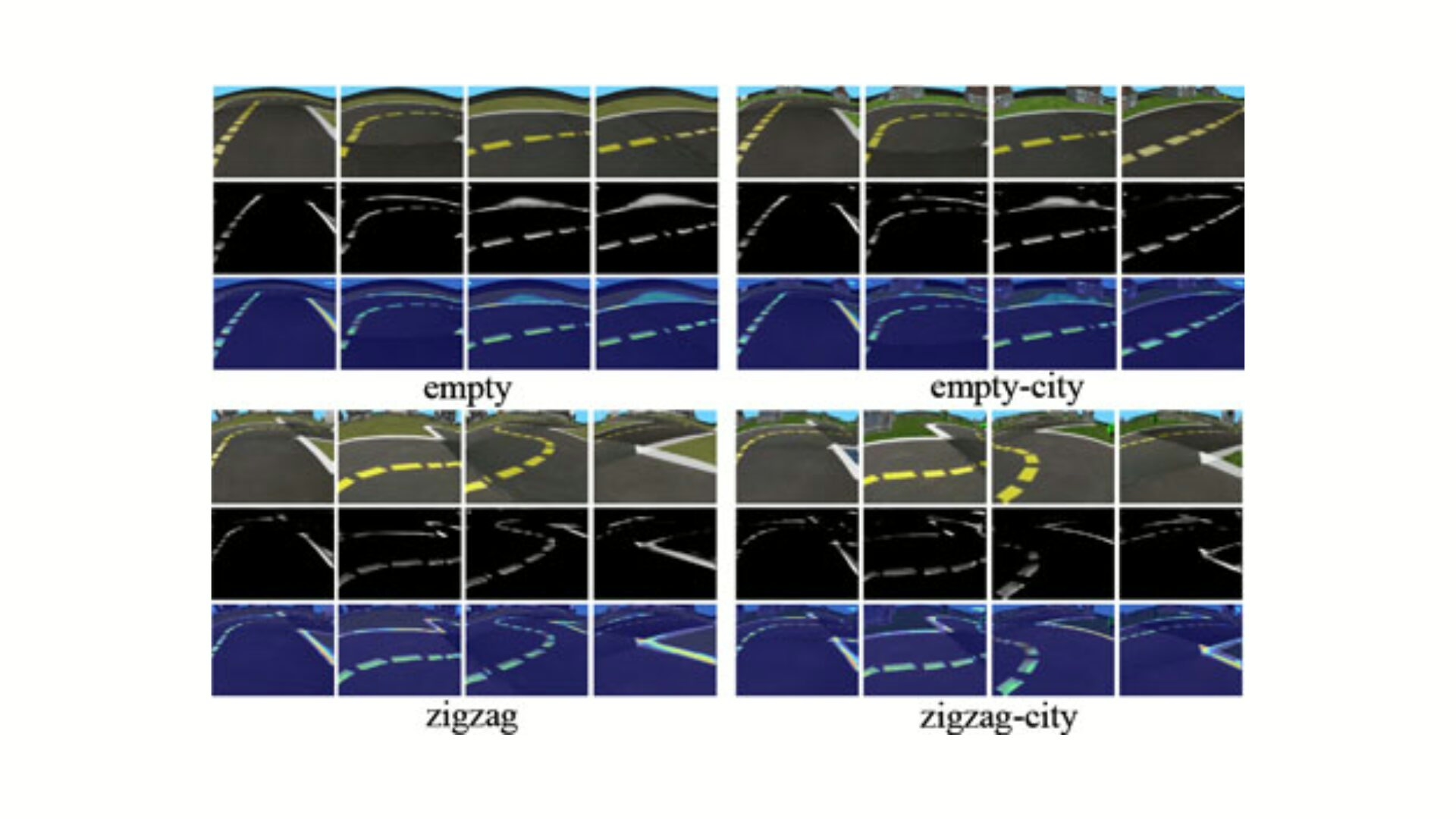

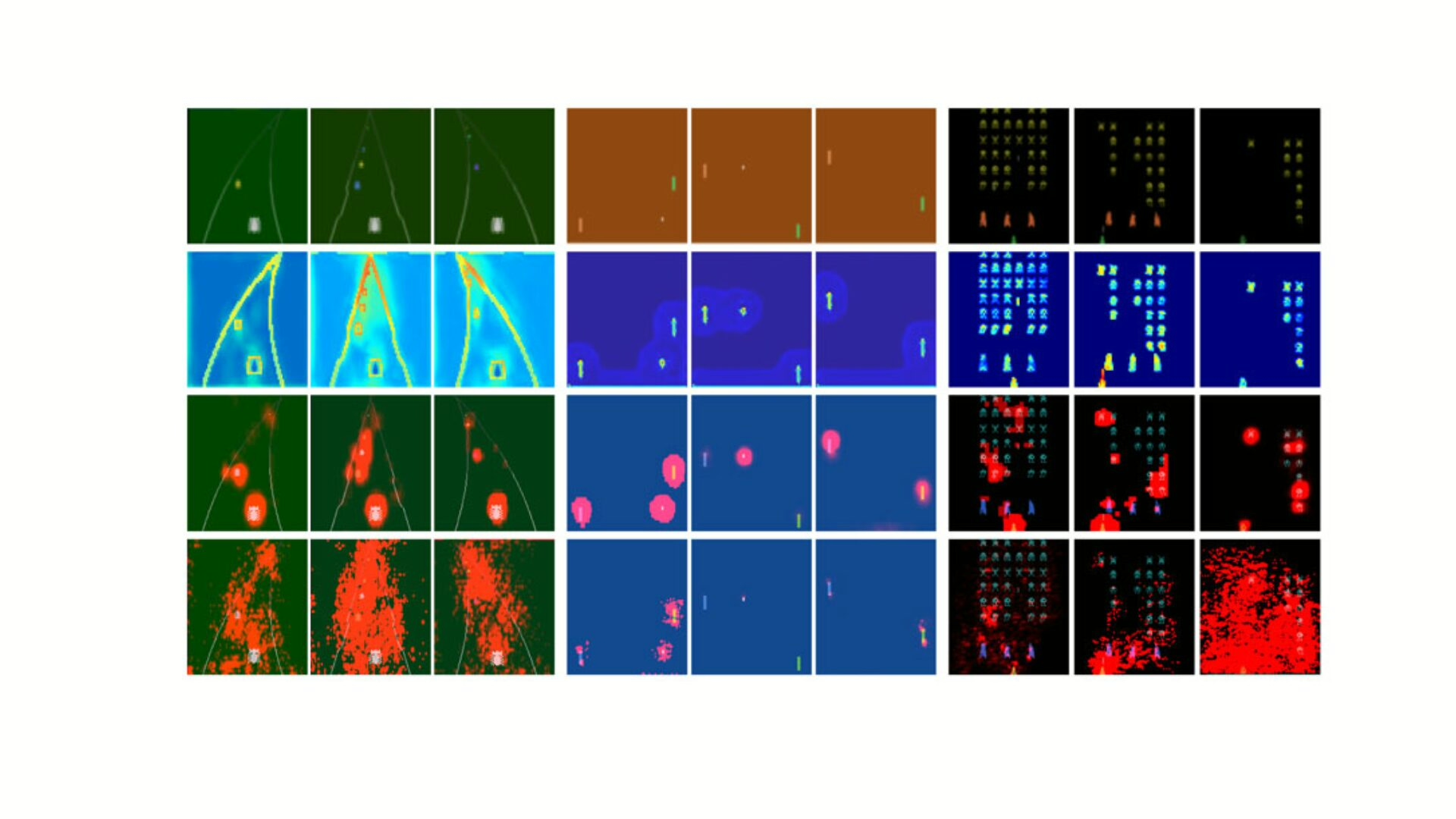

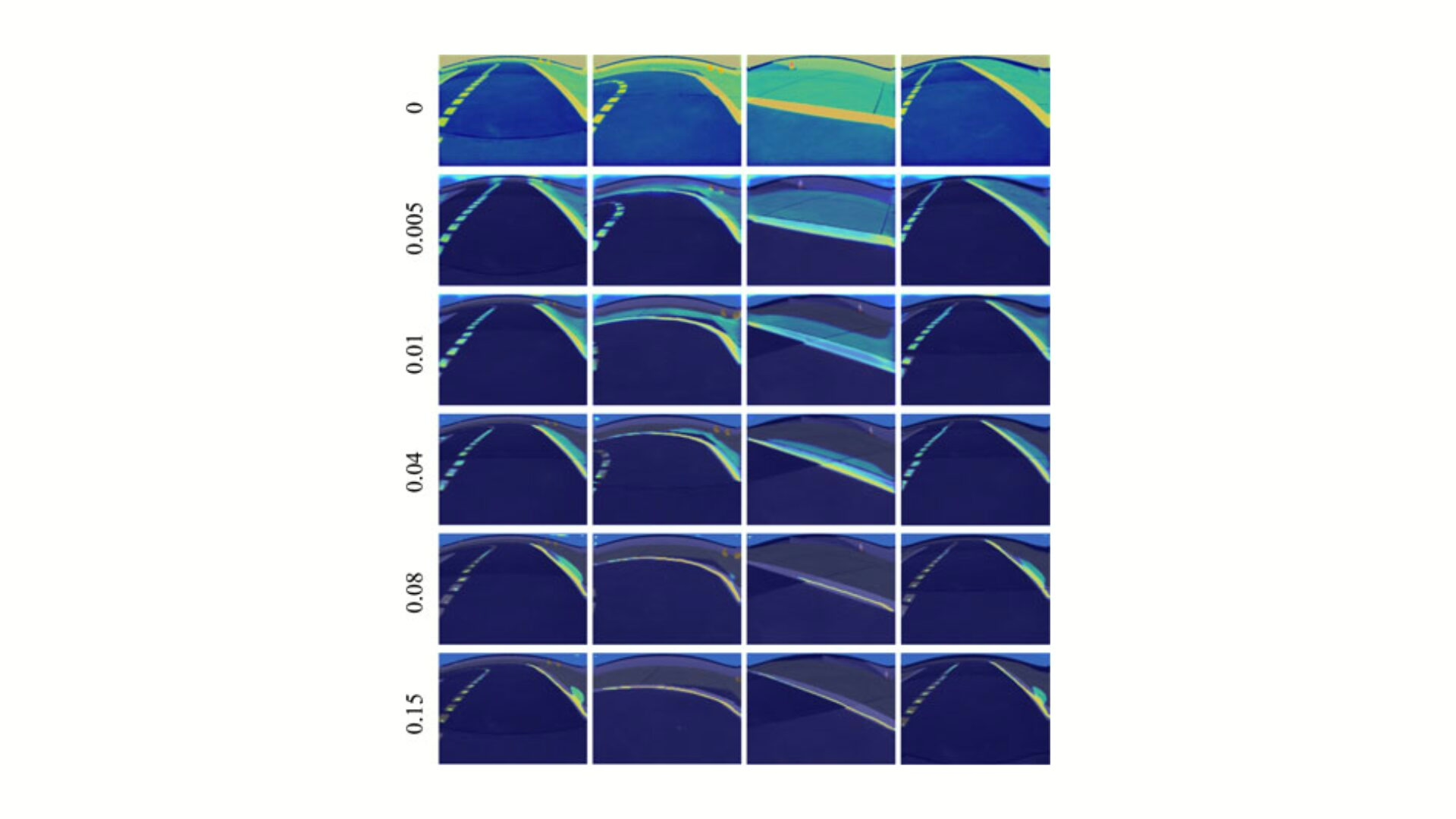

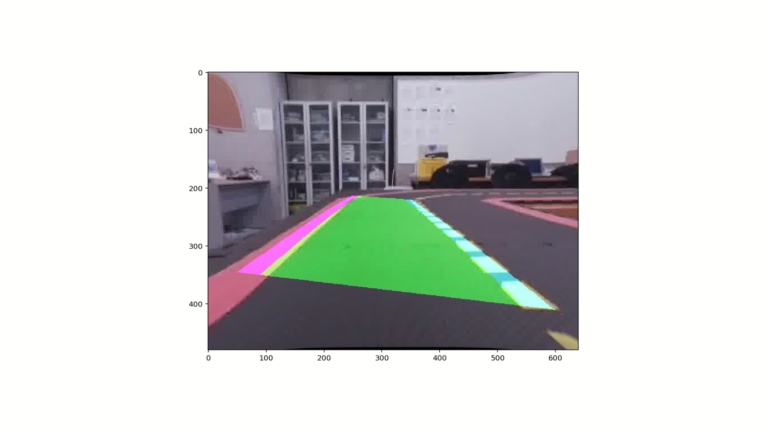

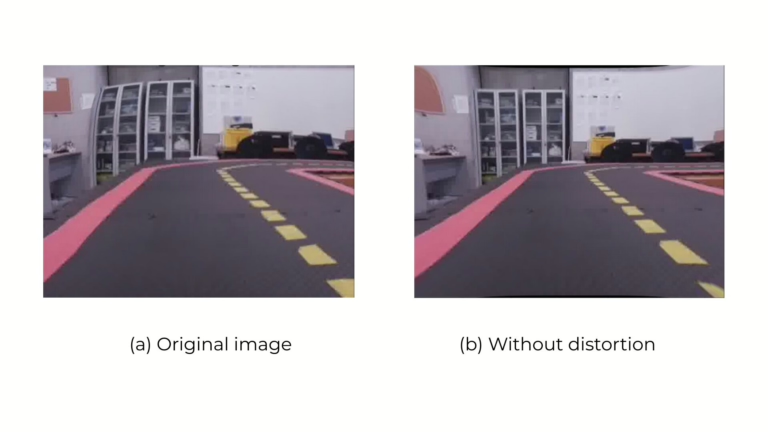

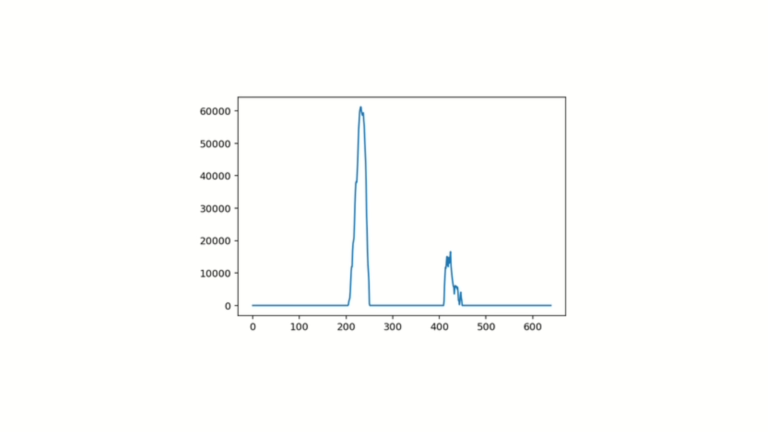

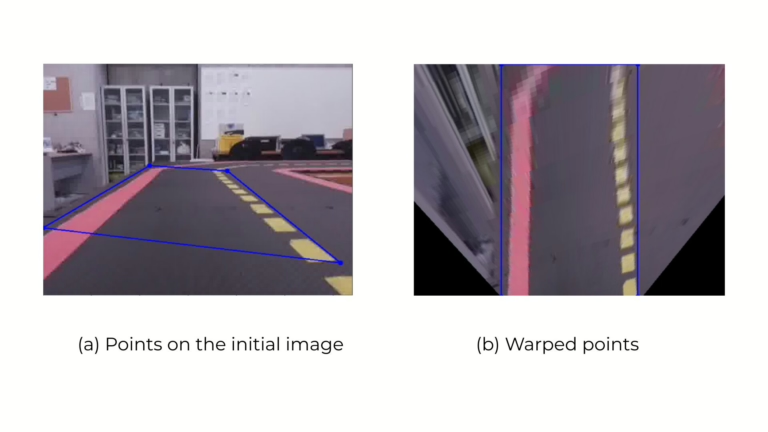

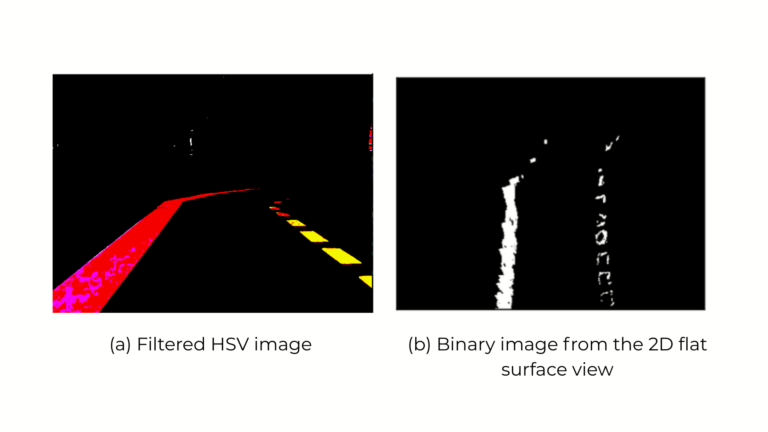

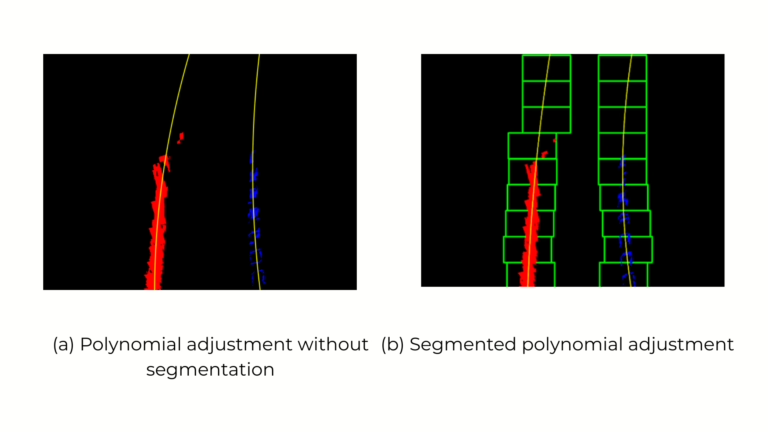

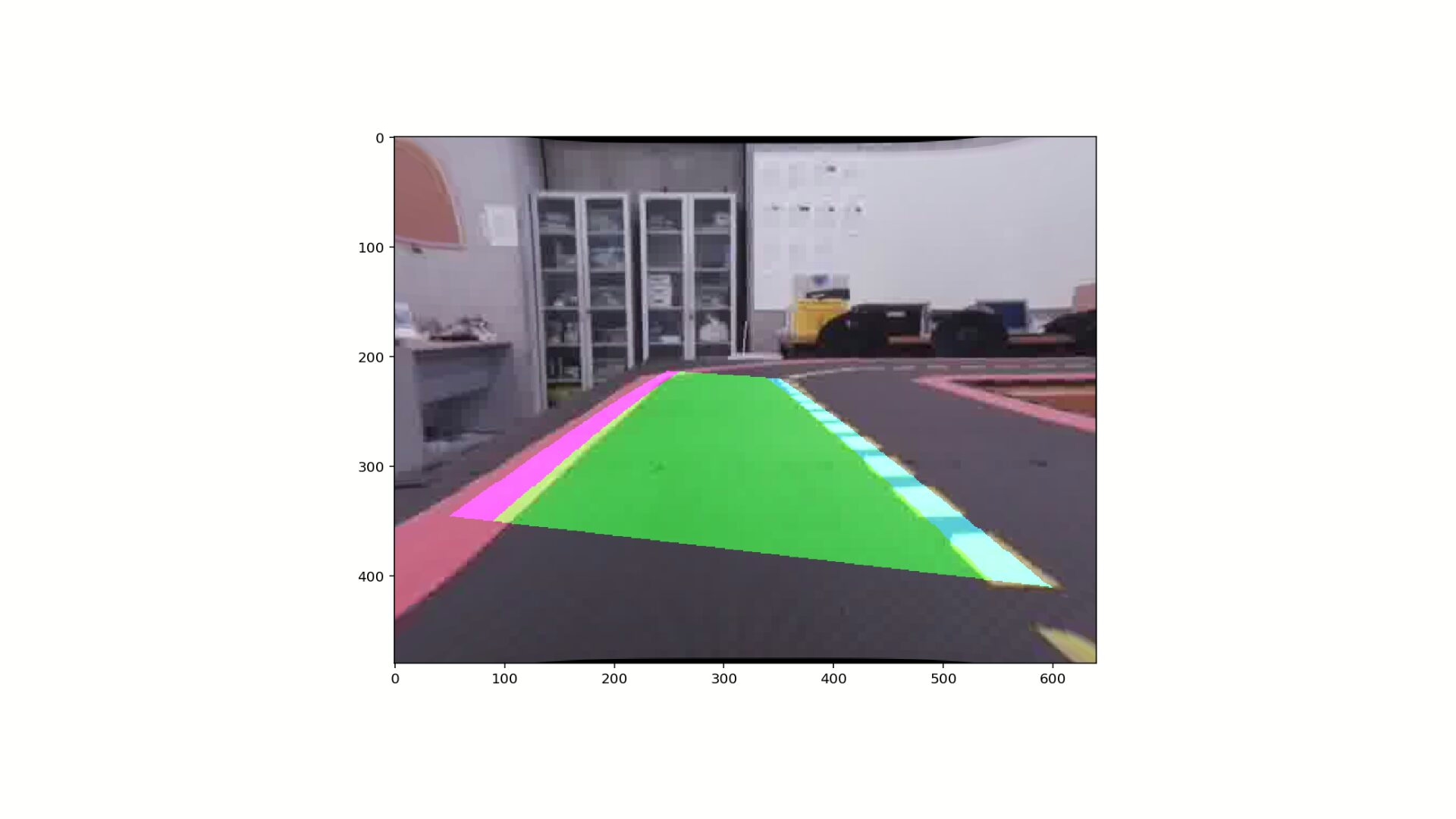

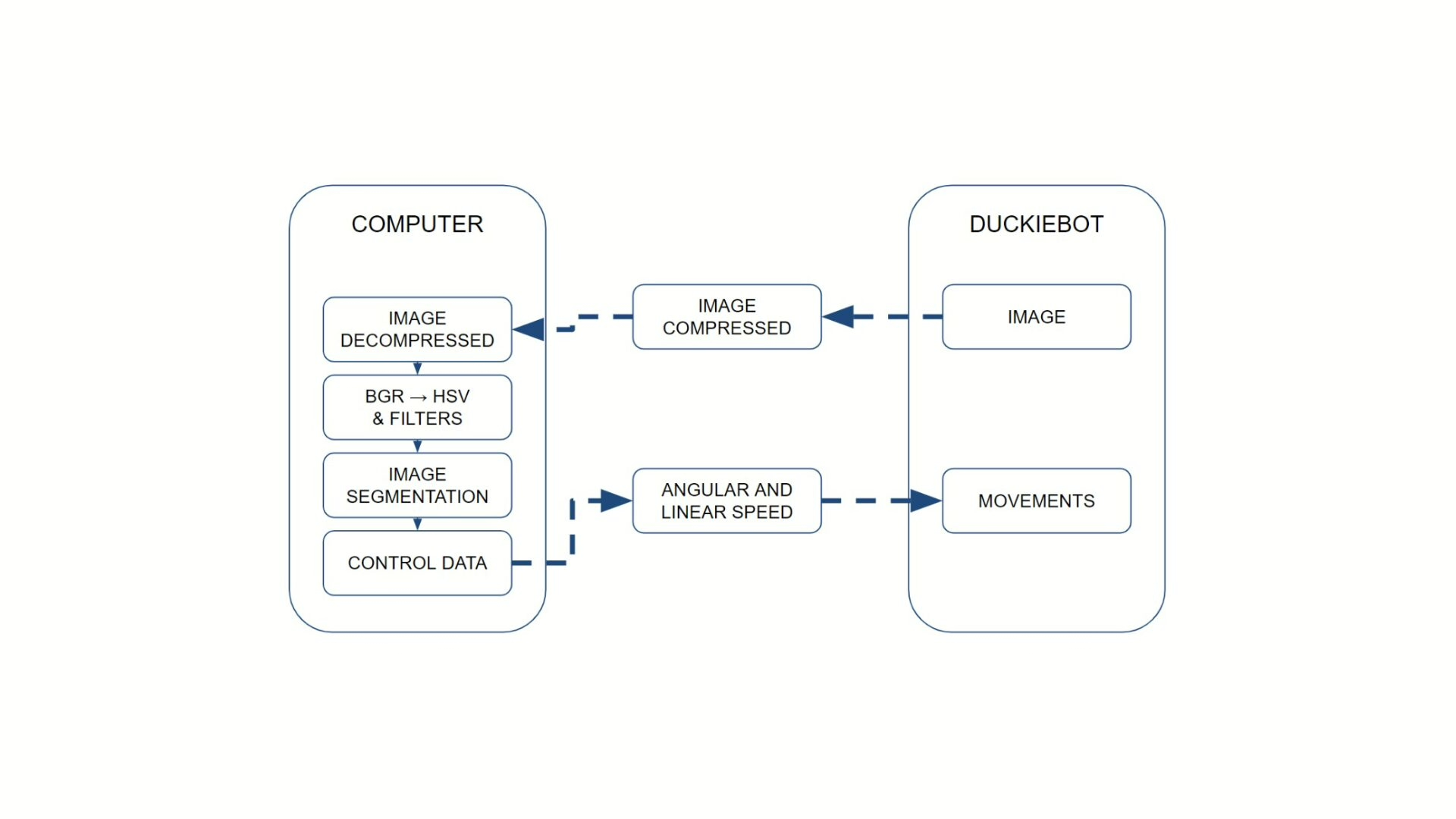

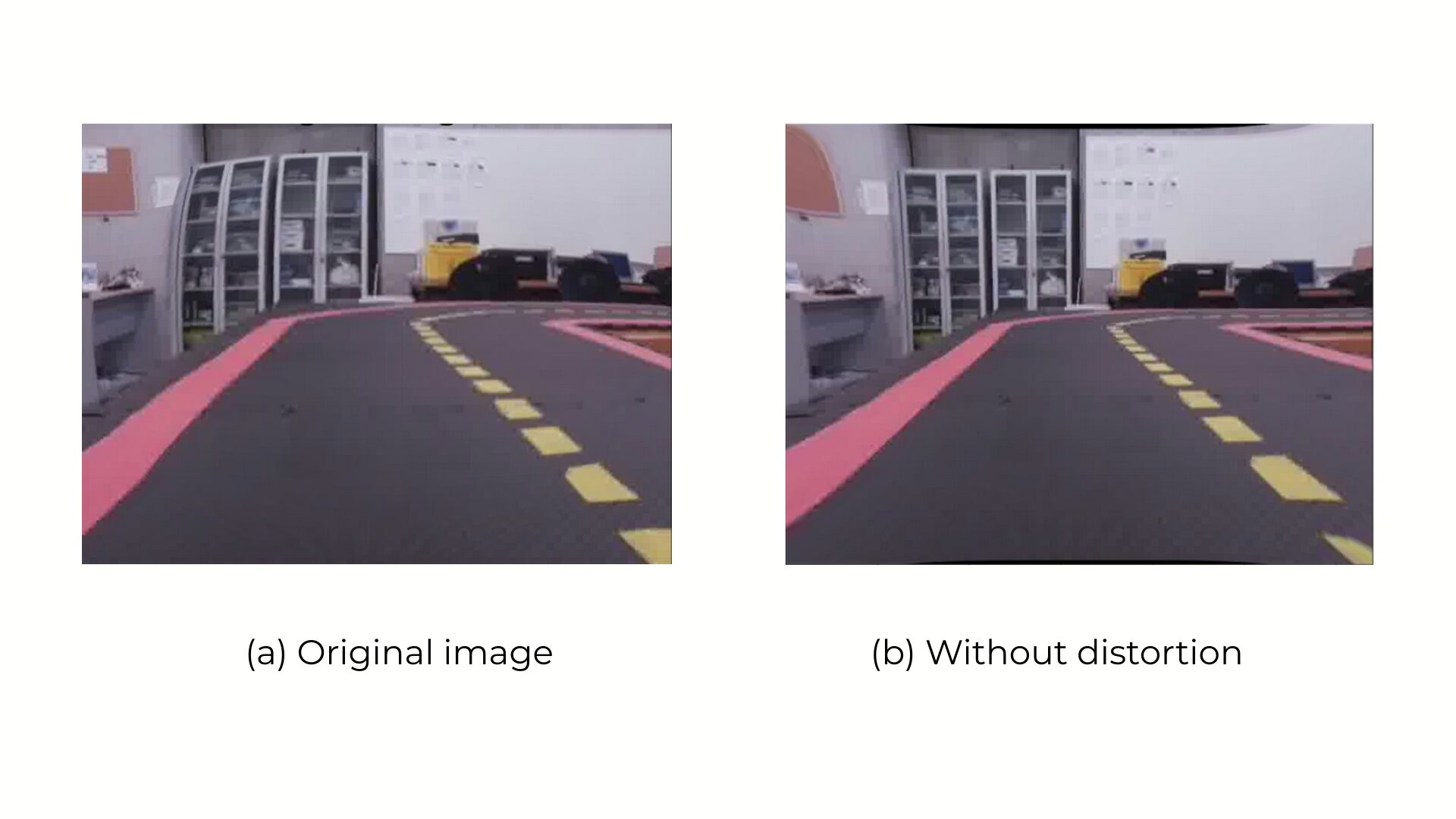

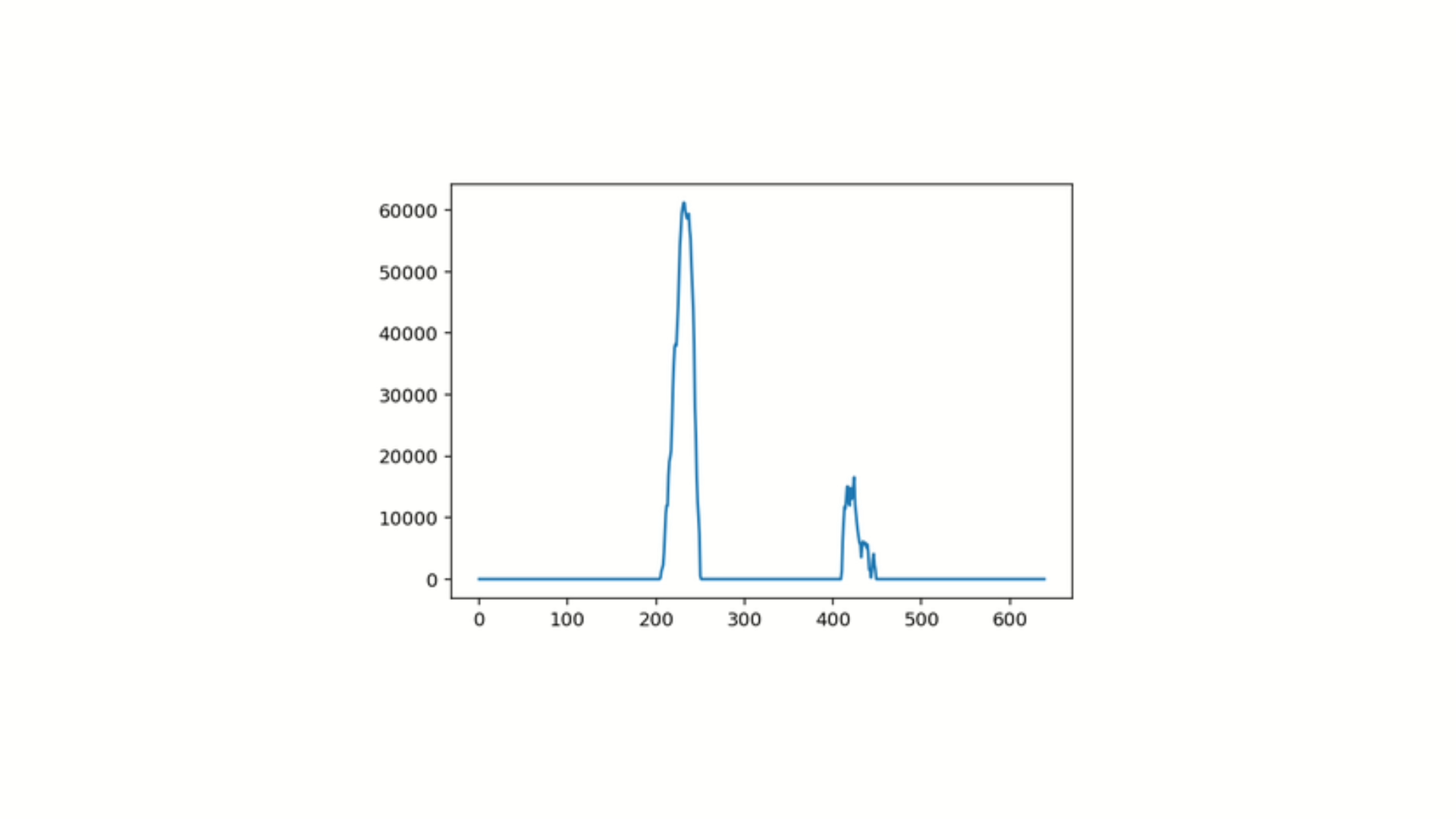

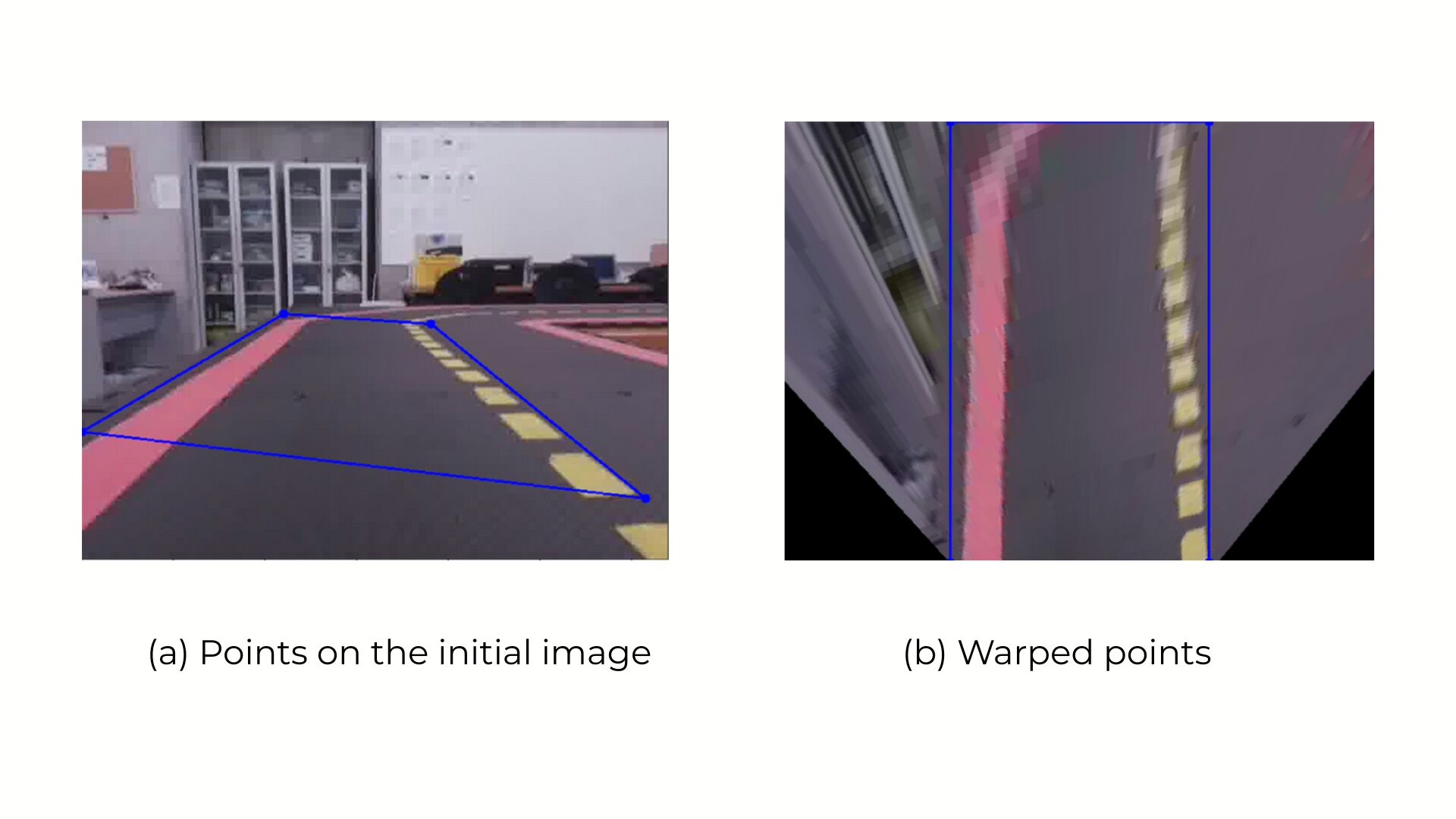

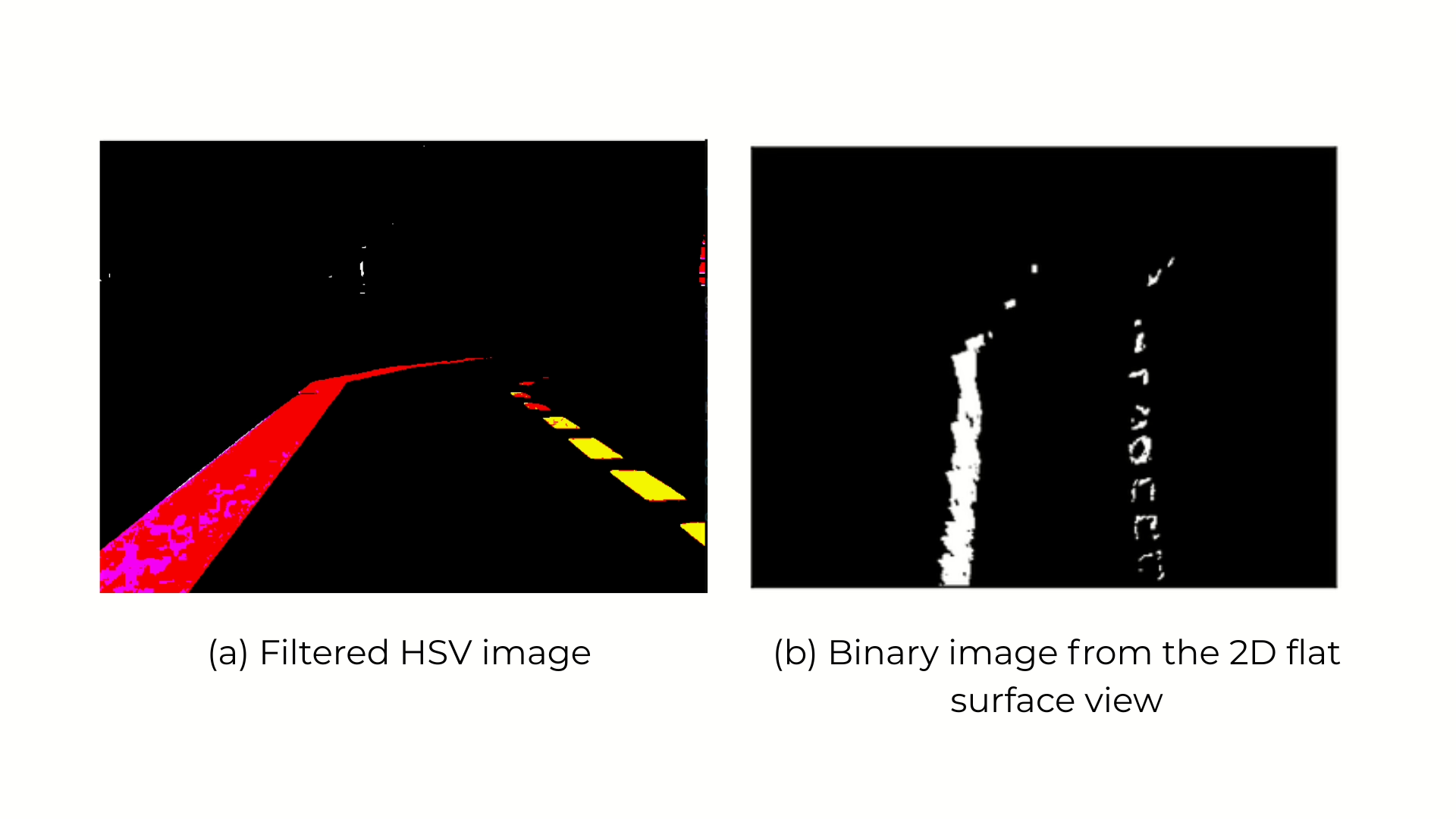

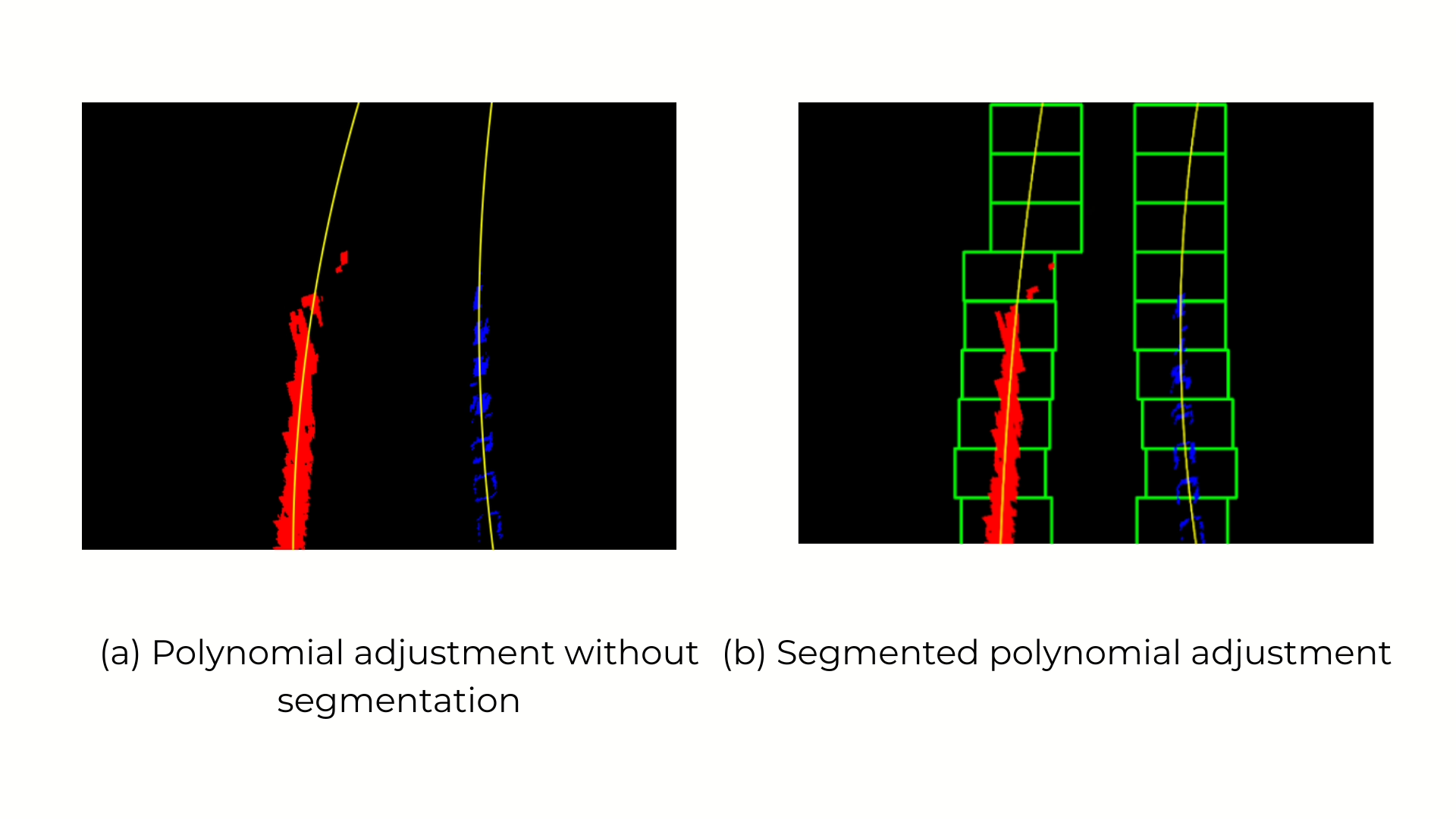

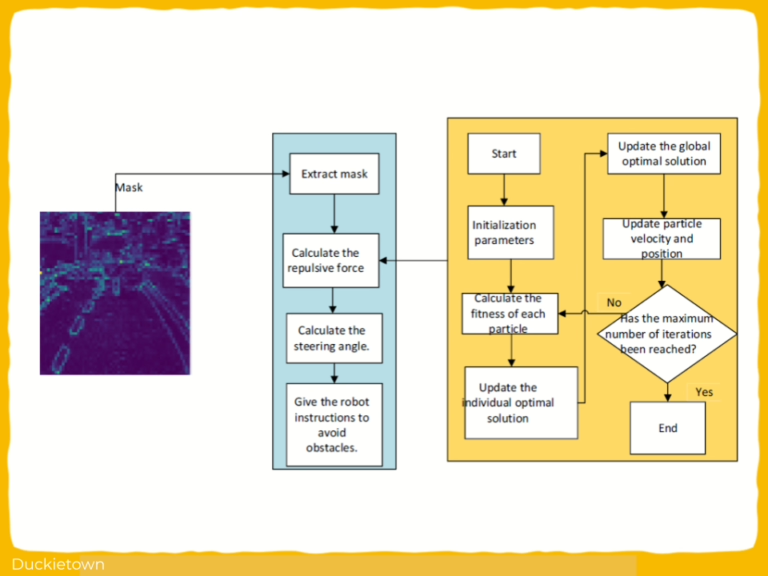

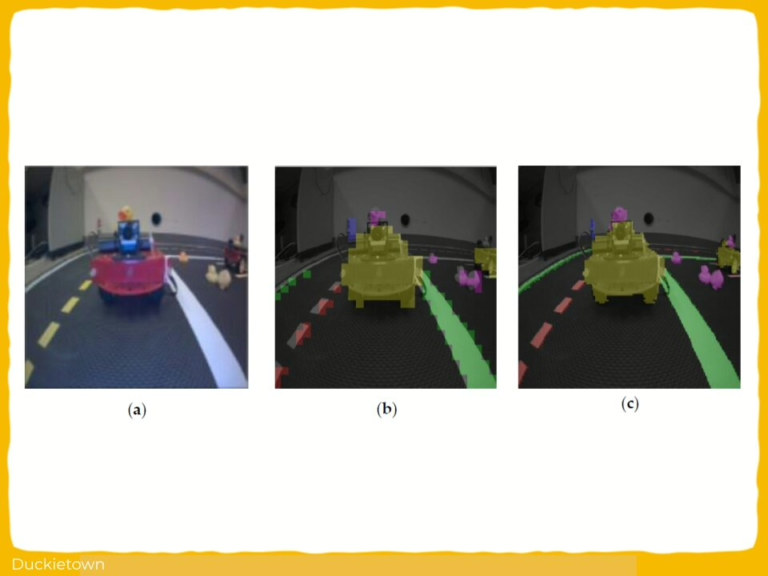

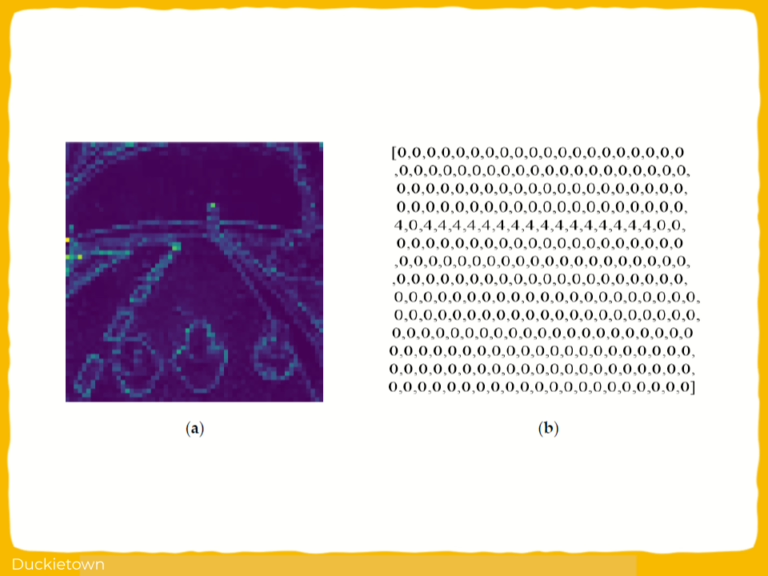

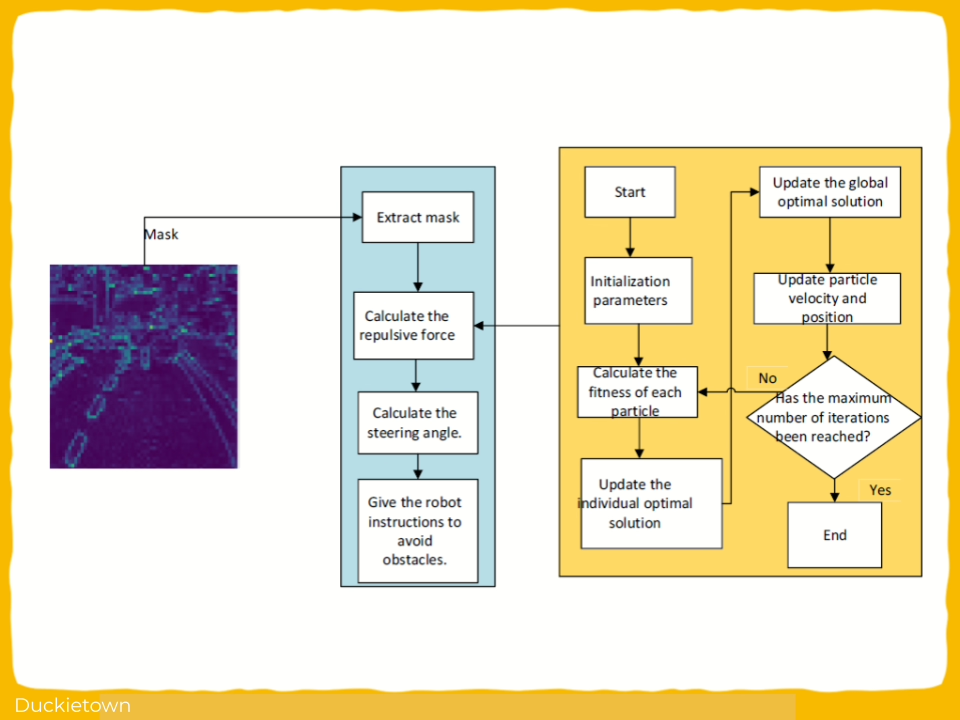

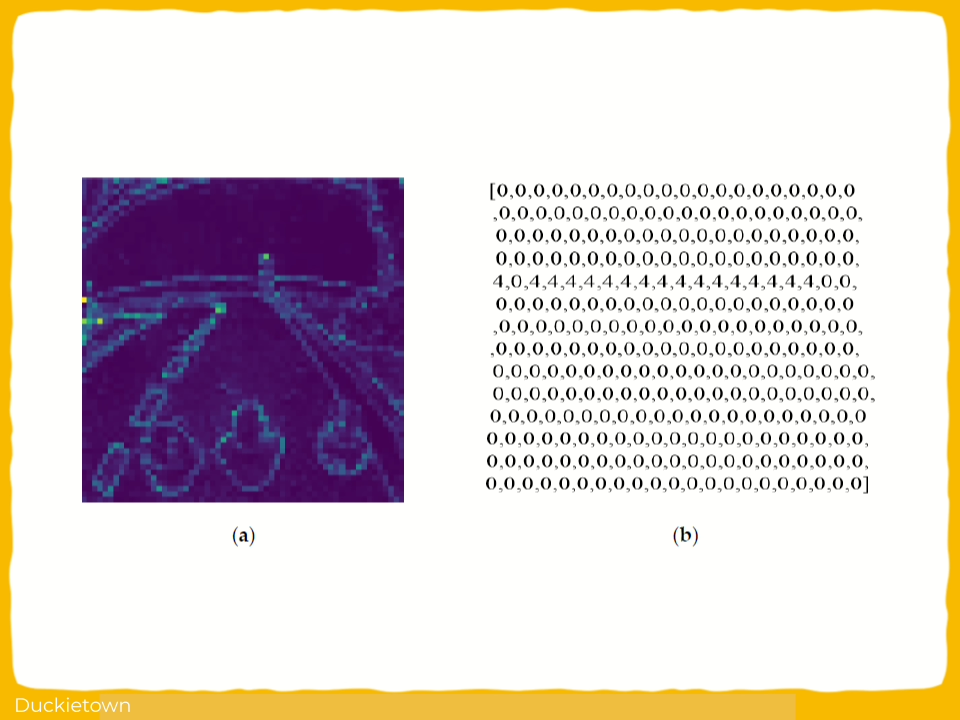

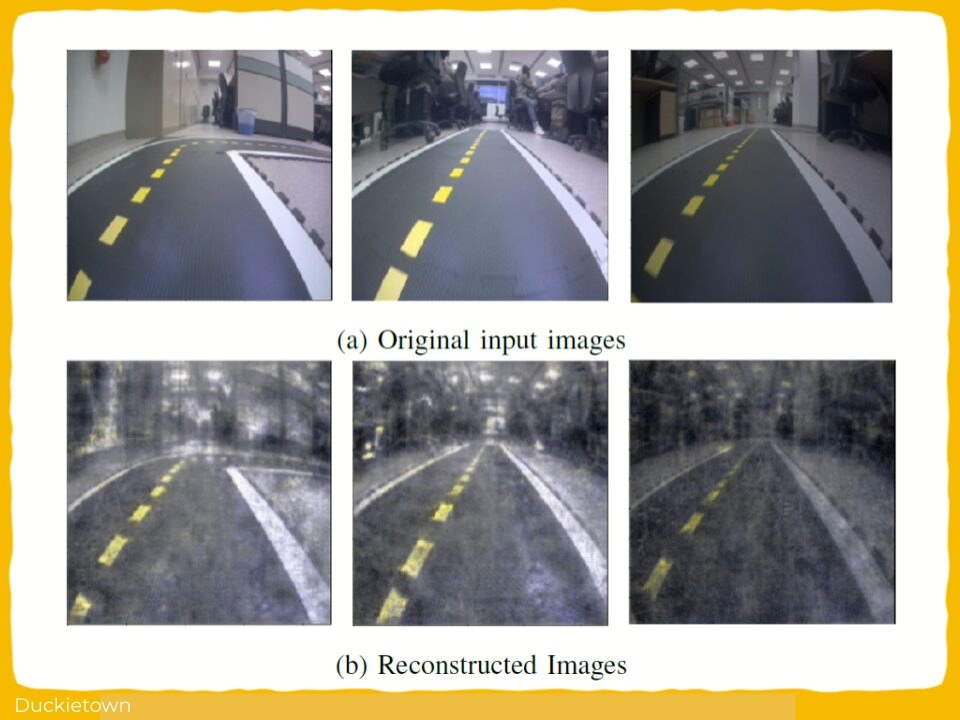

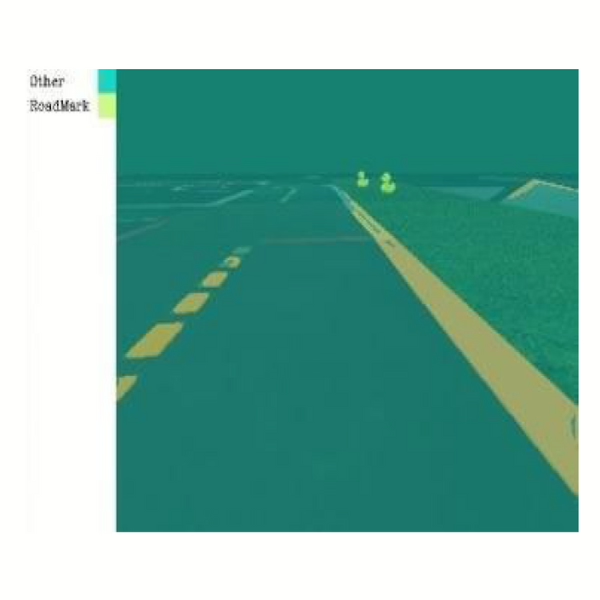

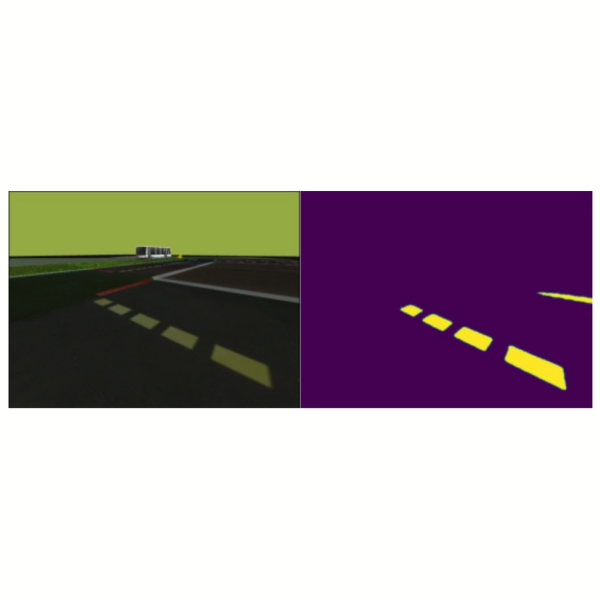

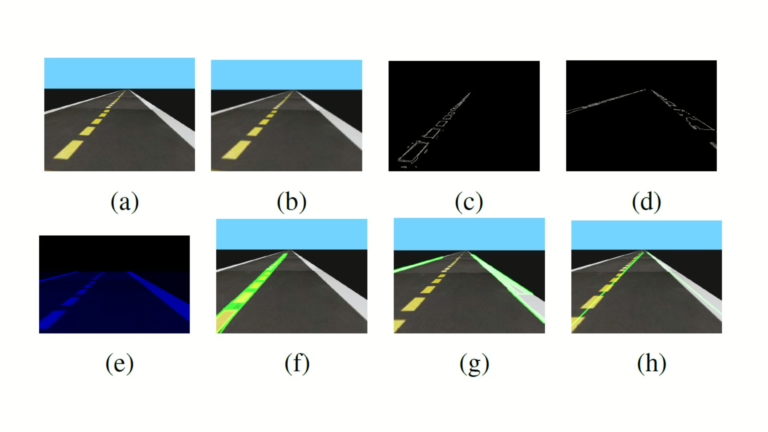

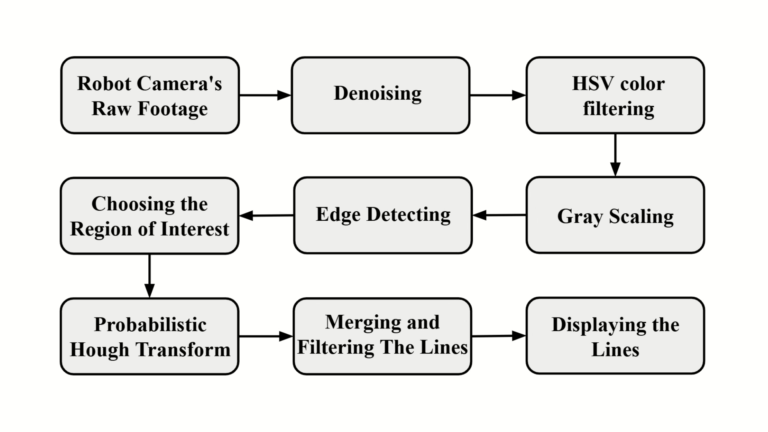

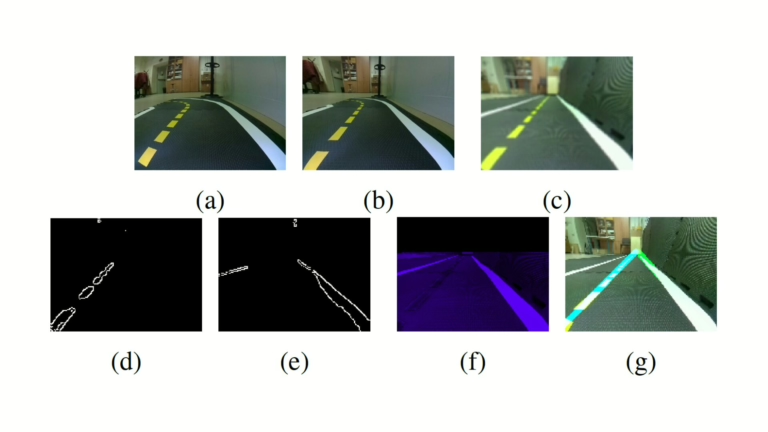

The controller drives these features to the image center using a previously established control law. The visual features are obtained from the camera feed through a multi-stage image processing pipeline implemented in OpenCV. The pipeline consists of frame denoising, grayscale conversion, Canny edge detection, region of interest masking, and line detection via the Probabilistic Hough Line Transform. This setup is intended to detect the white and yellow lane markings robustly under varying conditions.

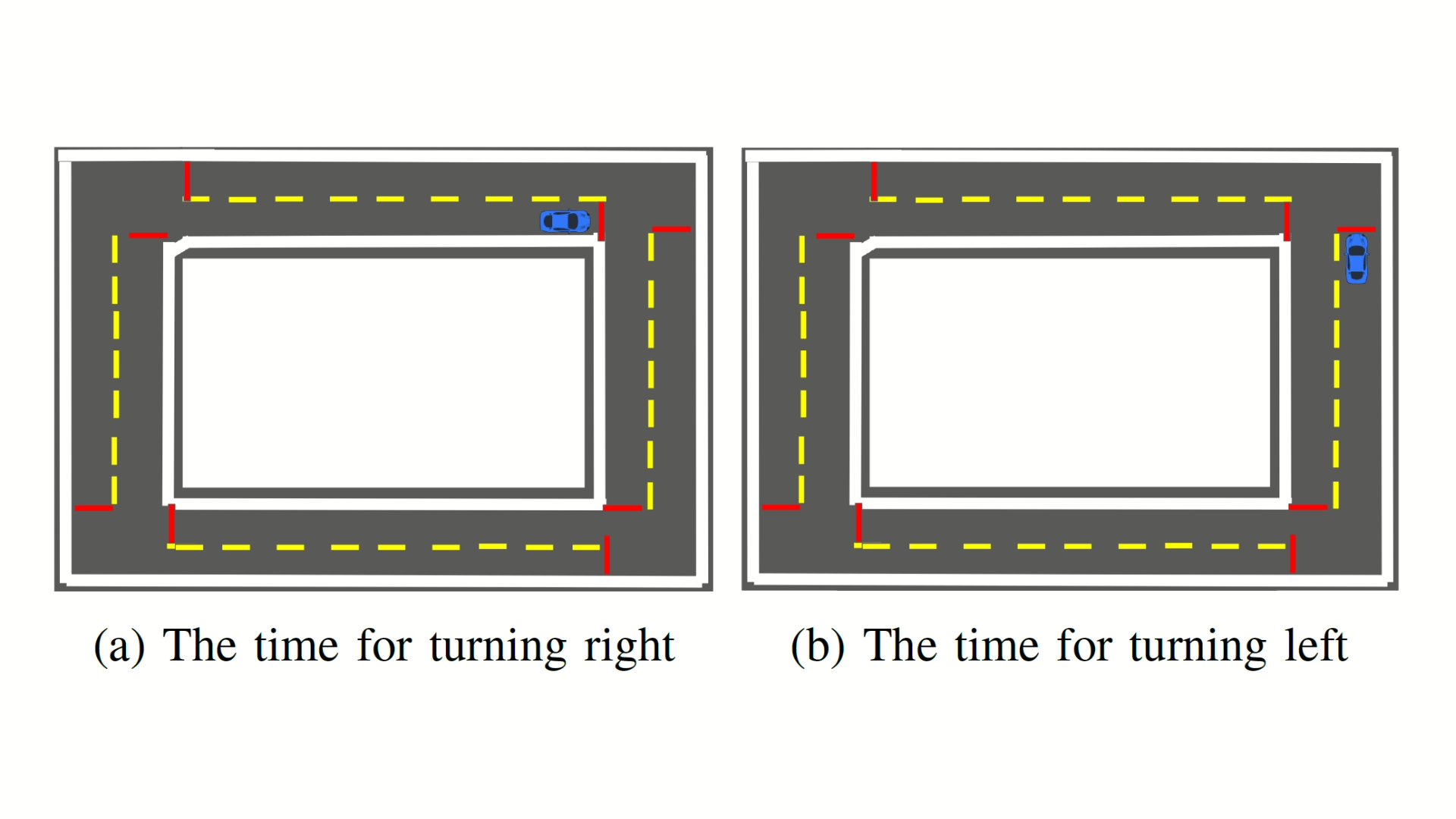

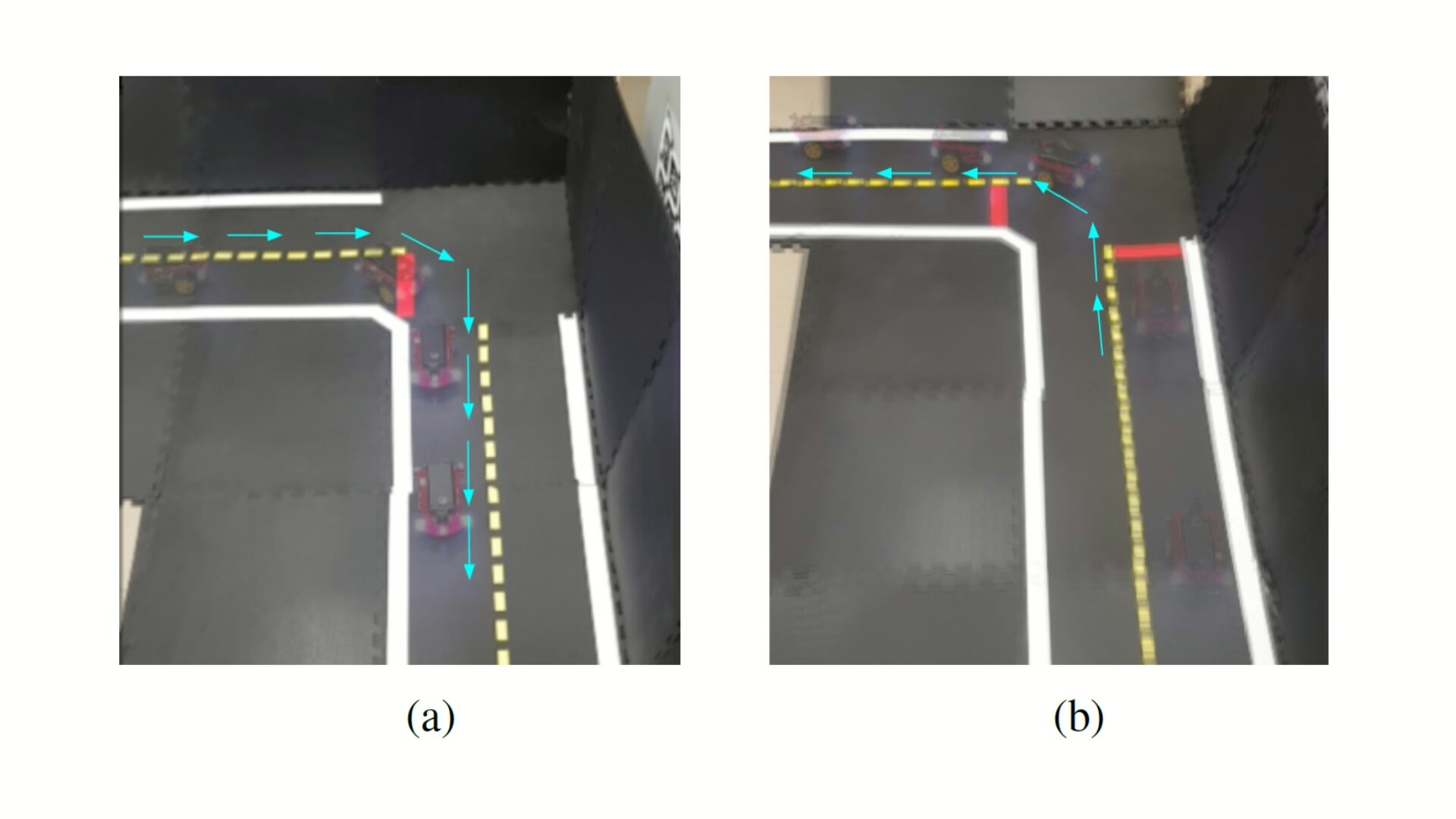

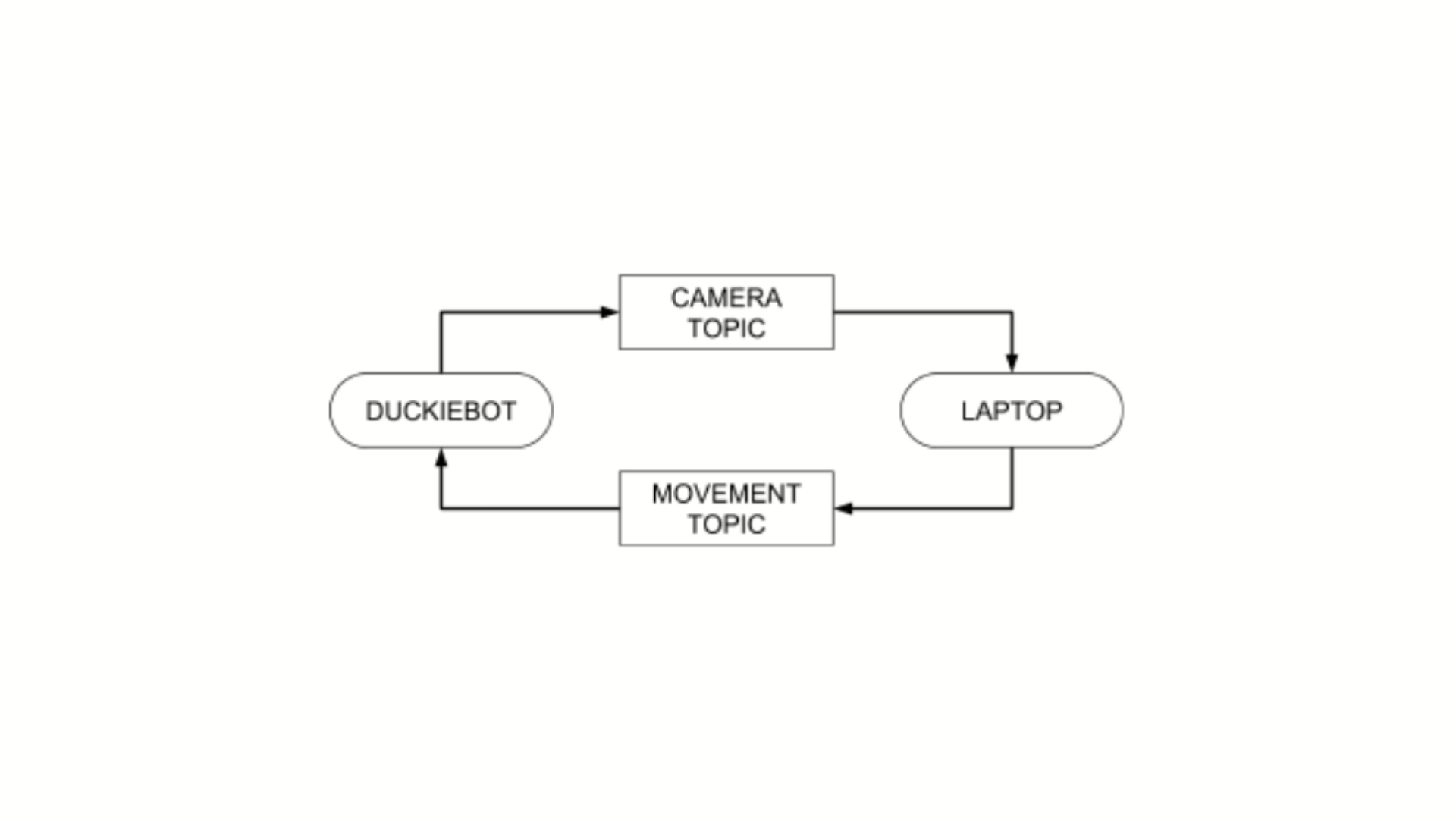

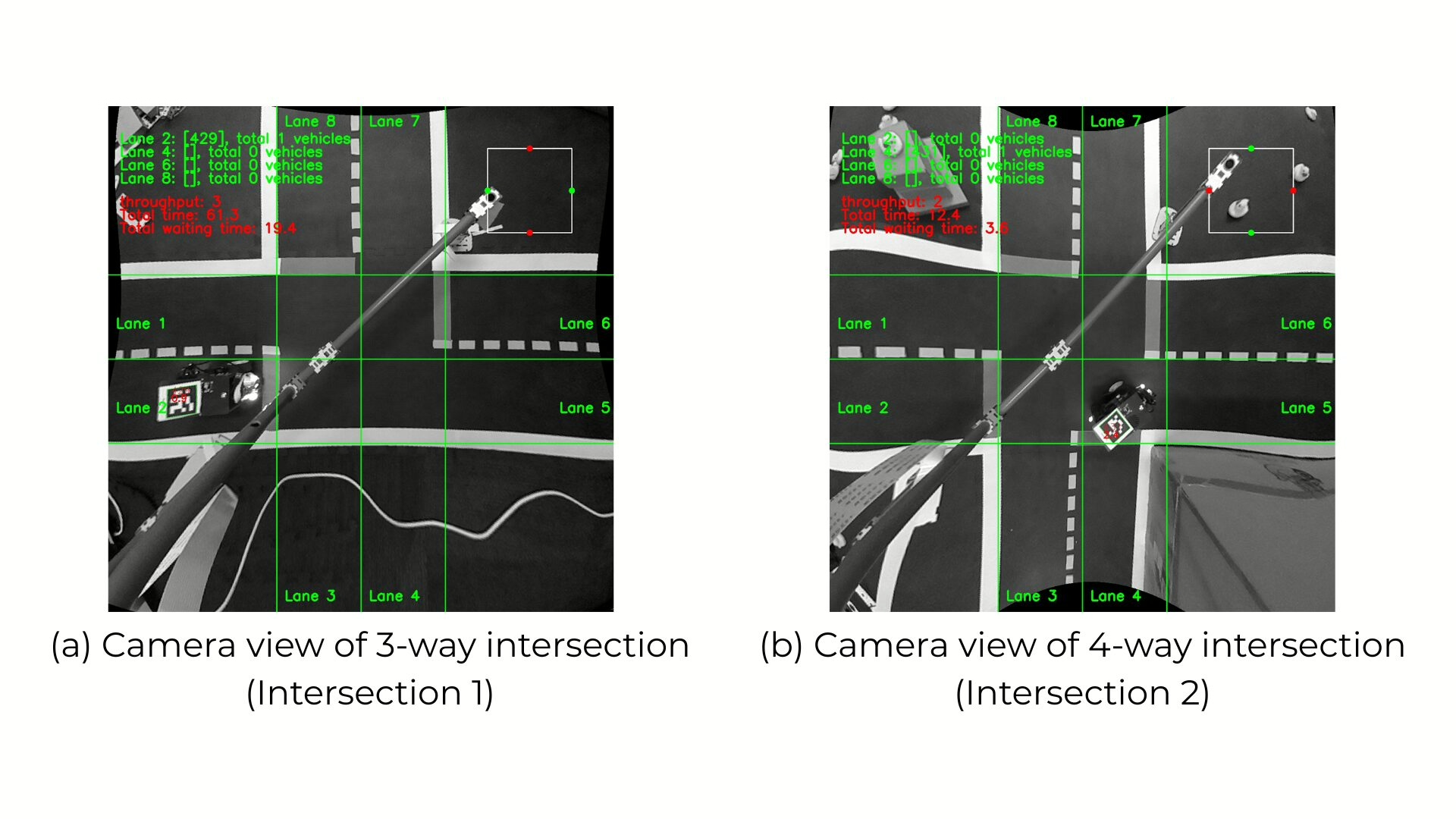

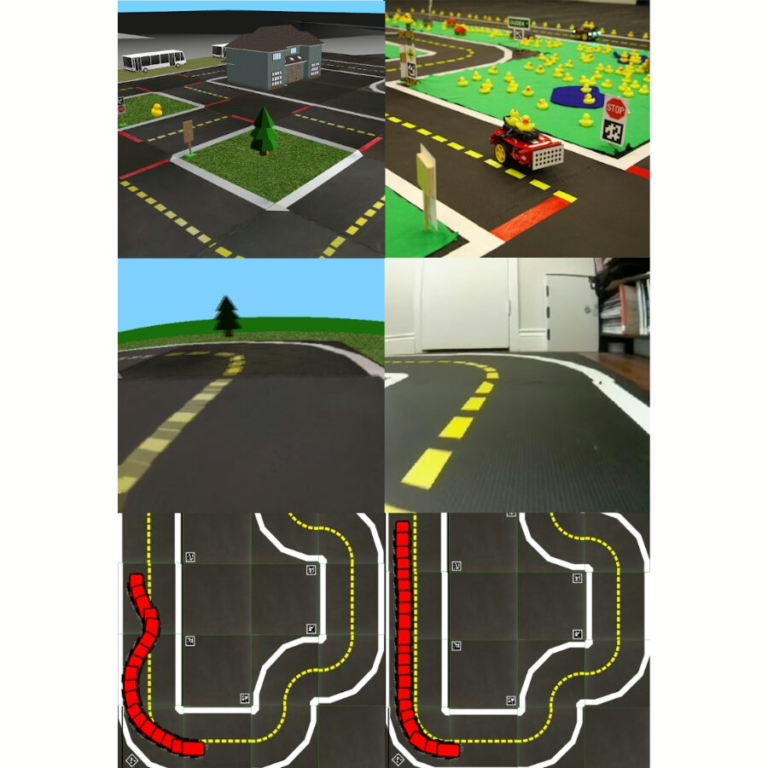

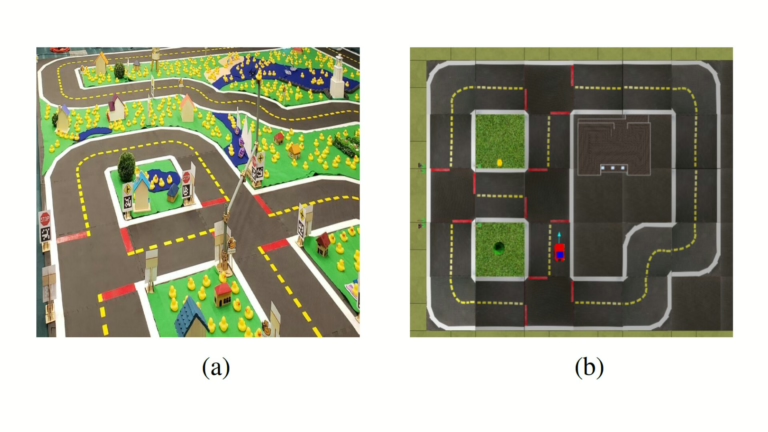

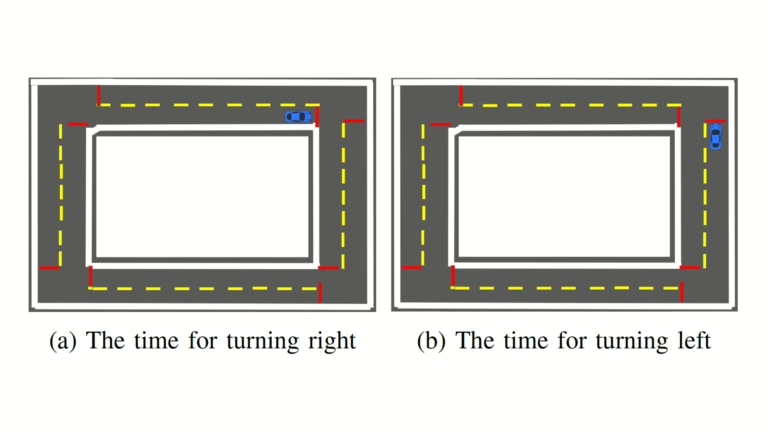

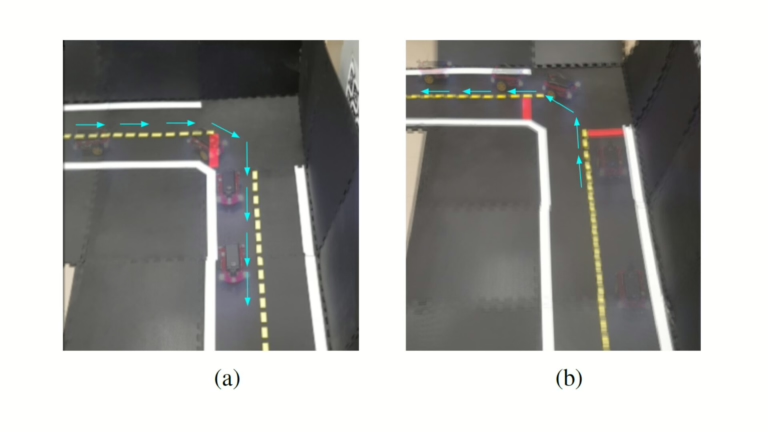

A scenario-driven transition system detects the red lines that mark intersections and activates artificial guidelines to execute controlled turns. The visual control implementation runs as a single ROS node following a publisher-subscriber architecture, and is deployed both in the Duckietown simulator (gym) and on physical robots in Duckietown.
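
One way to picture the switching between lane following and turning is a small two-state machine. The sketch below is a hypothetical illustration: the class `ScenarioSwitch`, its state names, the `turn_frames` hold time, and the exit condition are assumptions for this example and are not taken from the paper.

```python
from enum import Enum

class Mode(Enum):
    LANE_FOLLOWING = "lane_following"
    TURNING = "turning"

class ScenarioSwitch:
    """Hypothetical two-state switch between detected and artificial guidelines."""

    def __init__(self, turn_frames=30):
        self.mode = Mode.LANE_FOLLOWING
        self.turn_frames = turn_frames  # assumed minimum number of frames per turn
        self._frames_left = 0

    def update(self, red_line_visible, lanes_visible):
        """Advance the state machine by one camera frame."""
        if self.mode is Mode.LANE_FOLLOWING:
            # A red line marks an intersection: start a turn.
            if red_line_visible:
                self.mode = Mode.TURNING
                self._frames_left = self.turn_frames
        else:
            self._frames_left -= 1
            # Resume lane following once the hold time has elapsed, the lane
            # markings are visible again, and the red line is out of view.
            if self._frames_left <= 0 and lanes_visible and not red_line_visible:
                self.mode = Mode.LANE_FOLLOWING
        return self.mode

    def guideline_source(self):
        """Which guidelines the controller should use in the current mode."""
        return "artificial" if self.mode is Mode.TURNING else "detected"

switch = ScenarioSwitch(turn_frames=2)
history = [
    switch.update(red_line_visible=False, lanes_visible=True),
    switch.update(red_line_visible=True, lanes_visible=True),
    switch.update(red_line_visible=False, lanes_visible=False),
    switch.update(red_line_visible=False, lanes_visible=True),
]
```

In a ROS node, `update` could be called from the image subscriber callback, with the resulting steering command published on a velocity topic; topic names and message types depend on the robot setup.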

Highlights - Visual Control for Autonomous Navigation in Duckietown

Here is a visual tour of the implementation of visual control for autonomous navigation by the authors. For all the details, check out the full paper.

Abstract

Here is the abstract of the work, directly in the words of the authors:

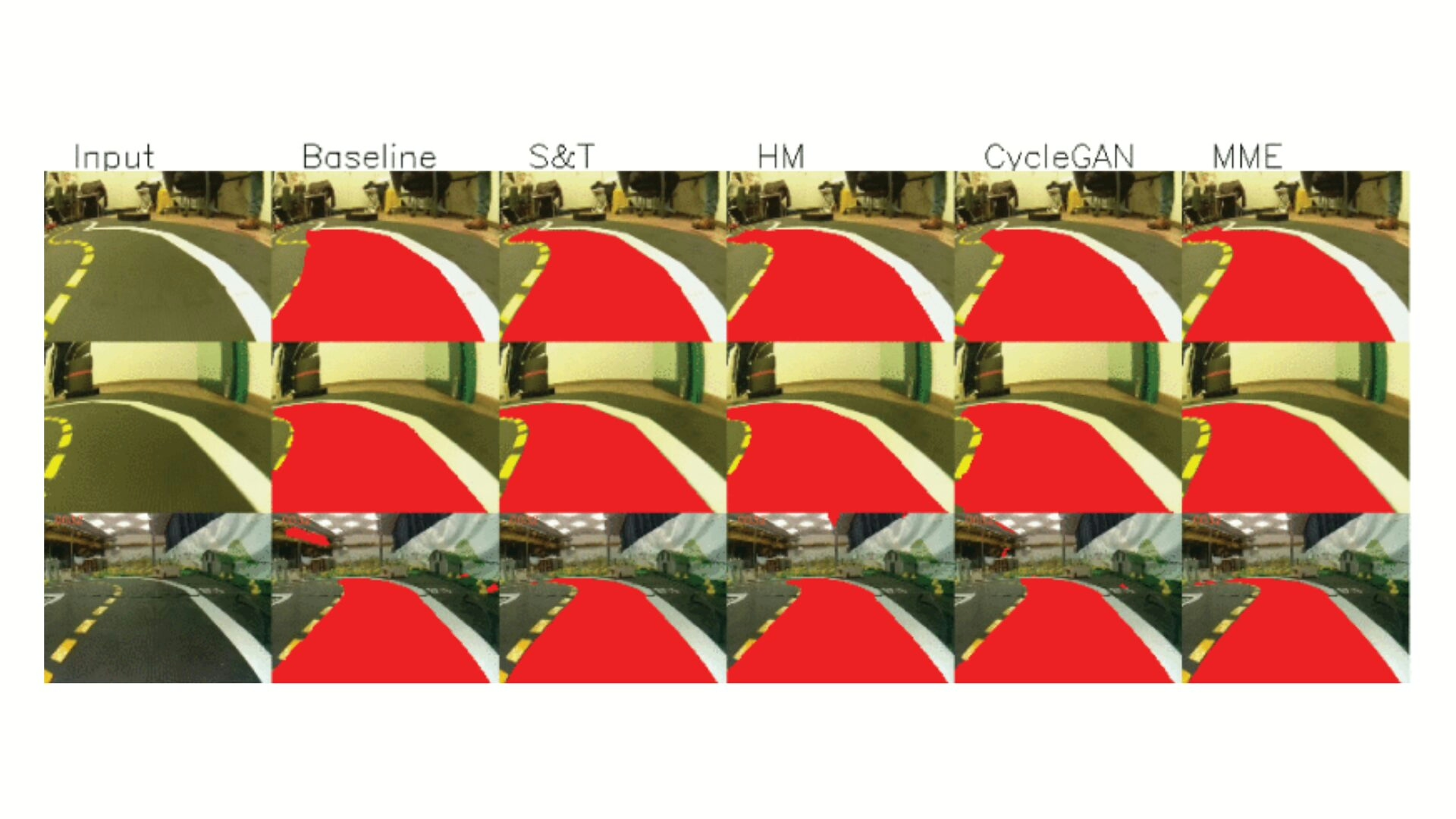

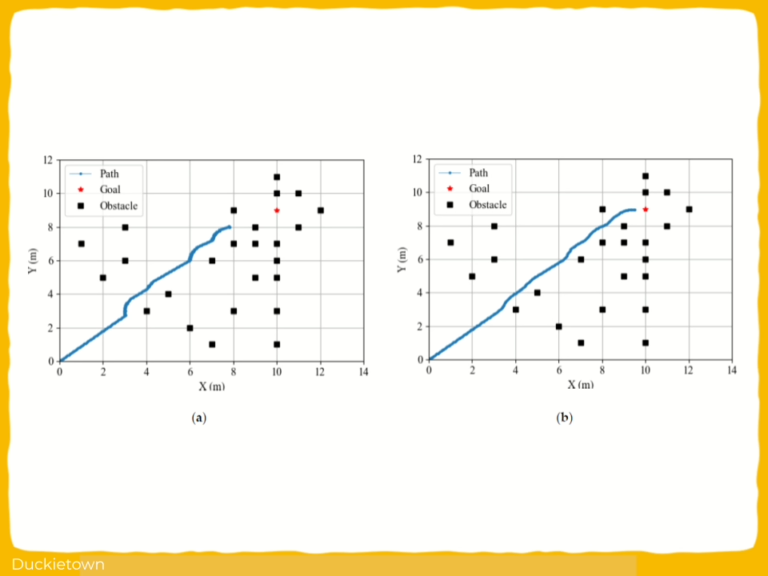

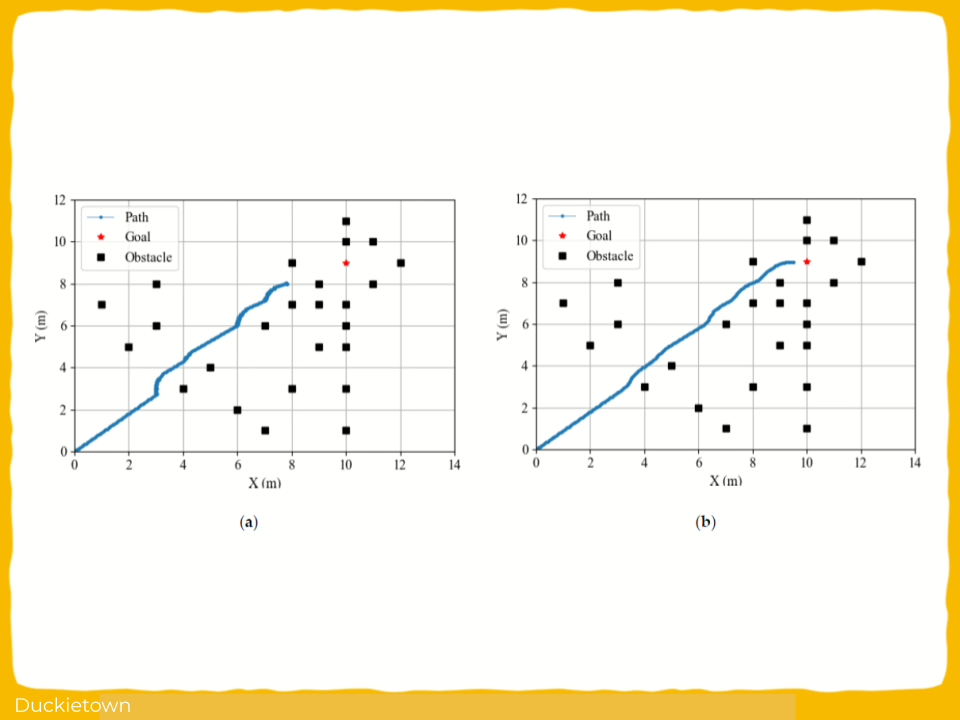

This paper presents a vision-based control framework for the autonomous navigation of wheeled mobile robots in city-like environments, including both straight roads and turns. The approach leverages Computer Vision techniques and OpenCV to extract lane line features and utilizes a previously established control law to compute the necessary steering commands.

The proposed method enables the robot to accurately follow the lanes and seamlessly handle complex maneuvers such as consecutive turns. The framework has been rigorously validated through extensive simulations and real-world experiments using physical robots equipped with the ROS framework. Experimental evaluations were conducted at the DIAG Robotics Lab at Sapienza University of Rome, Italy, demonstrating the practicality of the proposed solution in realistic settings.

This work bridges the gap between theoretical control strategies and their practical application, offering insights into vision-based navigation systems for autonomous robotics. A video demonstration of the experiments is available at https://youtu.be/tDvpwSj8X28.

Conclusion - Visual Control for Autonomous Navigation in Duckietown

Here is the conclusion according to the authors of this paper:

This paper proposed a vision-based control framework for lane-following tasks in wheeled mobile robots, validated through both simulations and real-world experiments. The approach effectively maintains the robot position at the center of lanes and enables safe left and right turns by relying solely on visual feedback from onboard camera, without requiring external localization systems or pre-mapped environments.

The system’s modular design and simplicity allow for seamless integration with other robotic systems, making it versatile for diverse urban navigation scenarios. Future research will focus on enhancing the framework to handle complex scenarios, such as autonomous lane corrections, and incorporating obstacle detection and avoidance mechanisms for improved performance in dynamic, real-world environments.

These advancements will expand the applicability of the proposed method, confirming its potential as a robust solution for autonomous navigation.

Did this work spark your curiosity?

Check out the following works on vehicle autonomy with Duckietown:

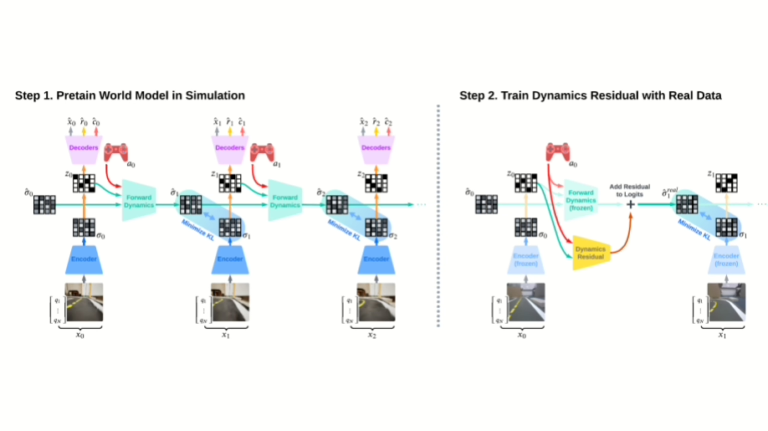

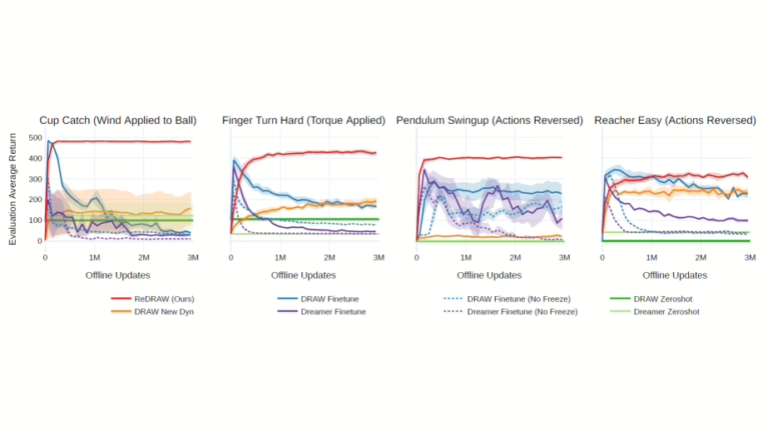

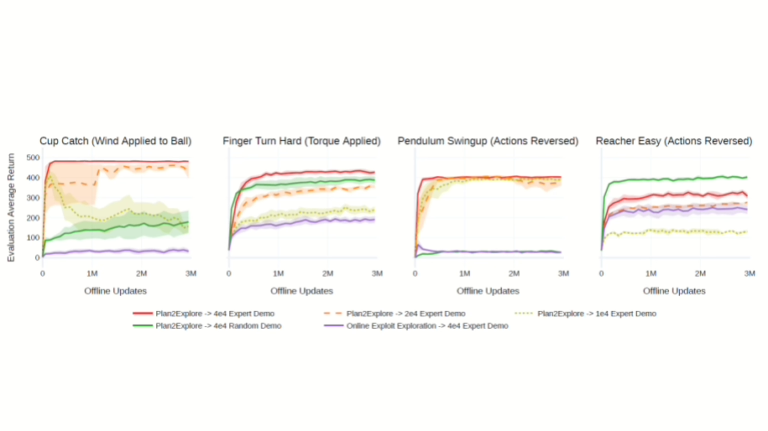

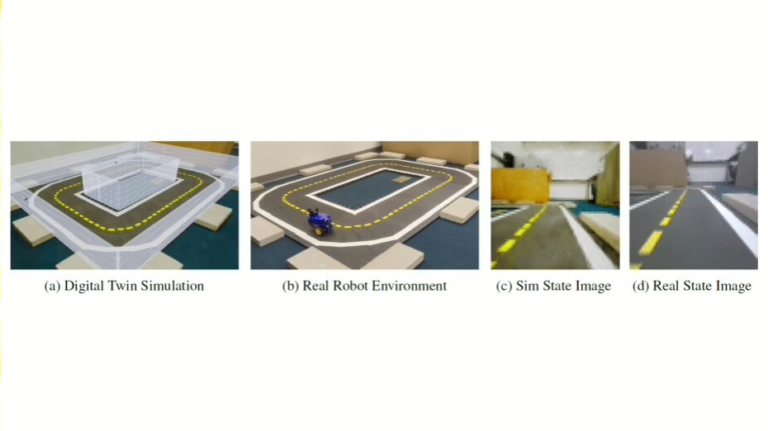

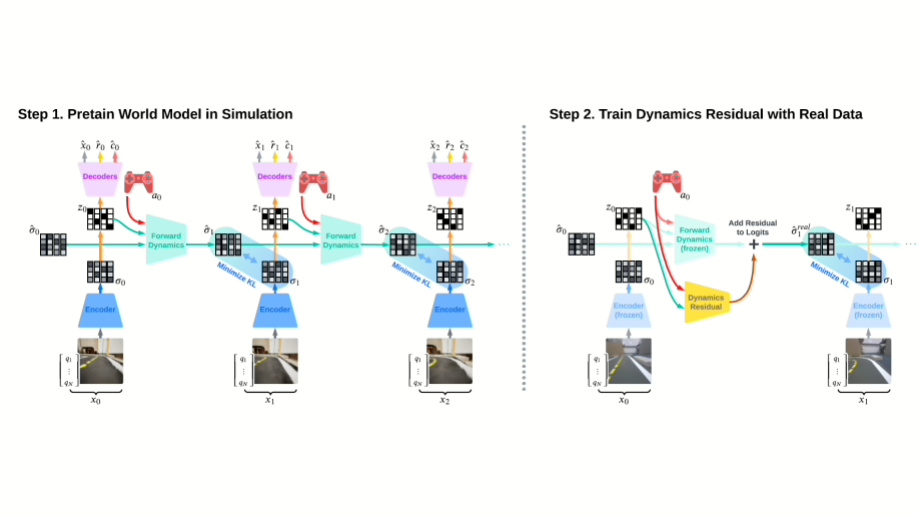

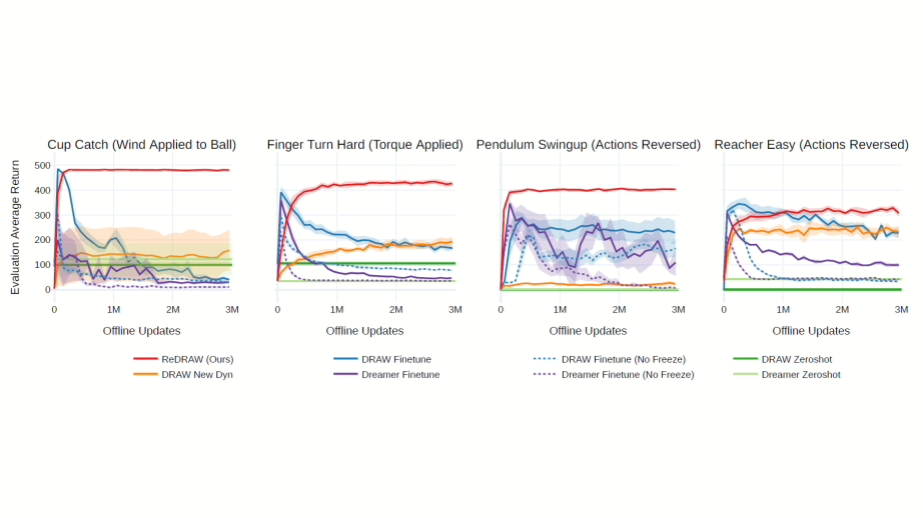

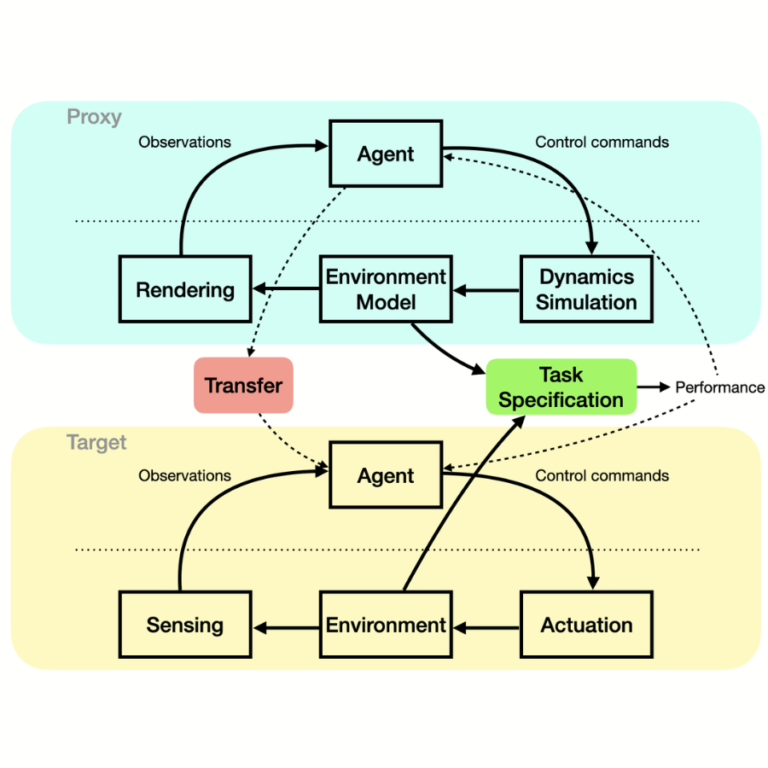

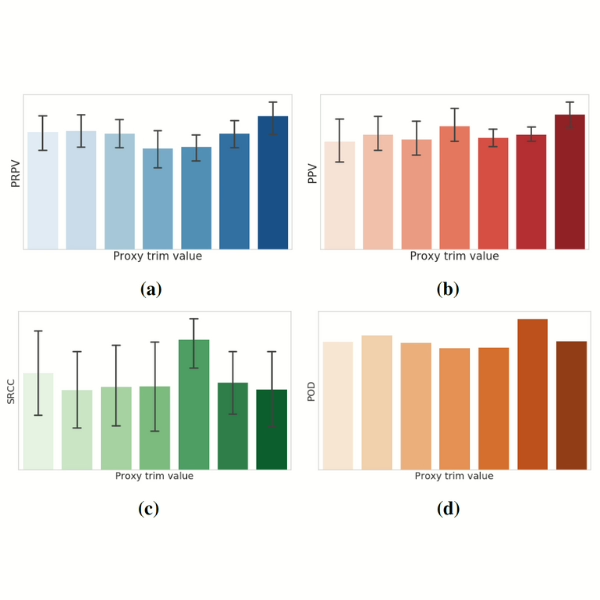

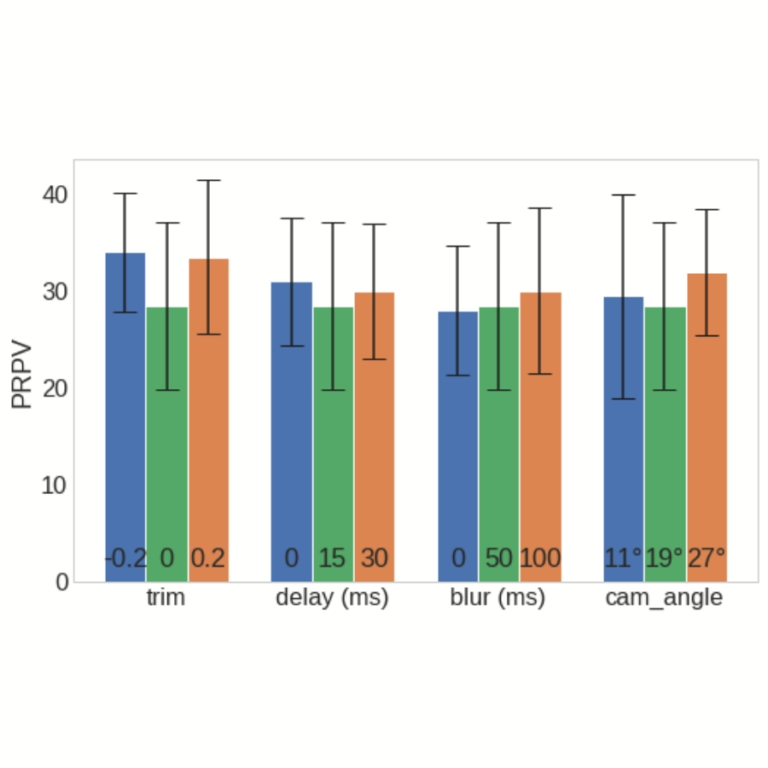

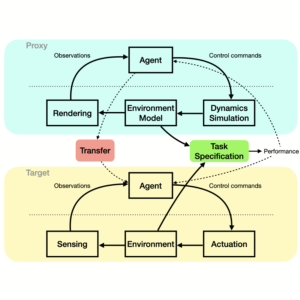

- Adapting World Models with Latent-State Dynamics Residuals

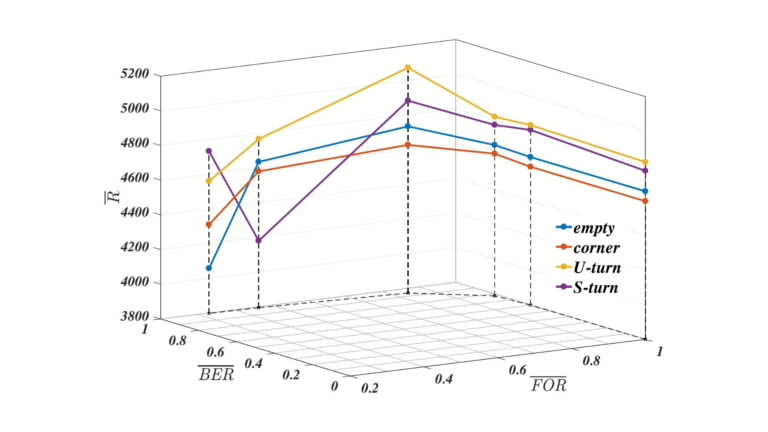

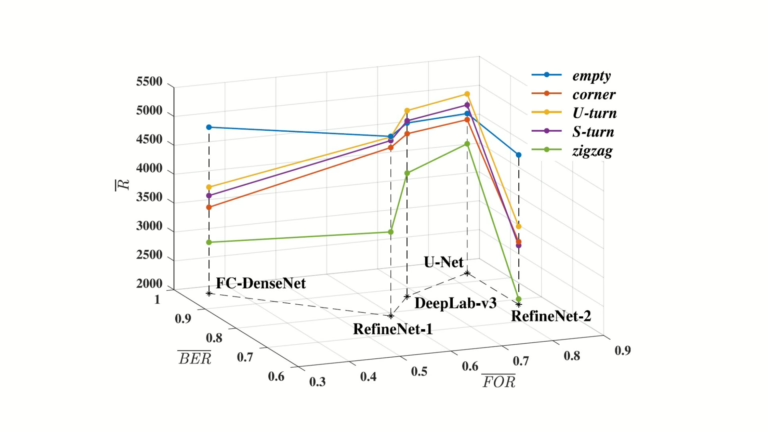

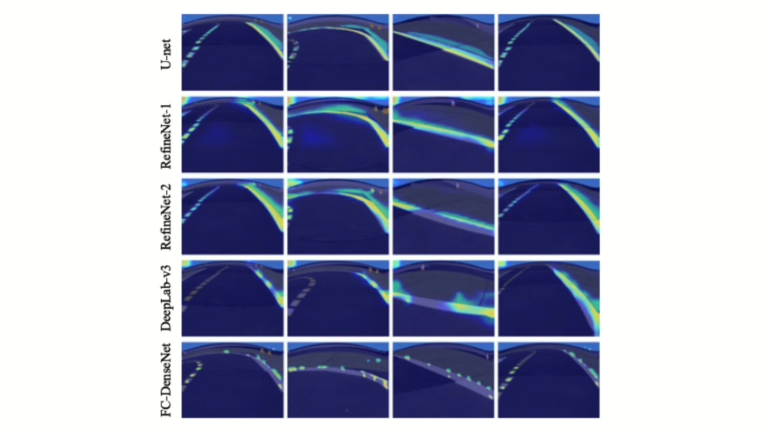

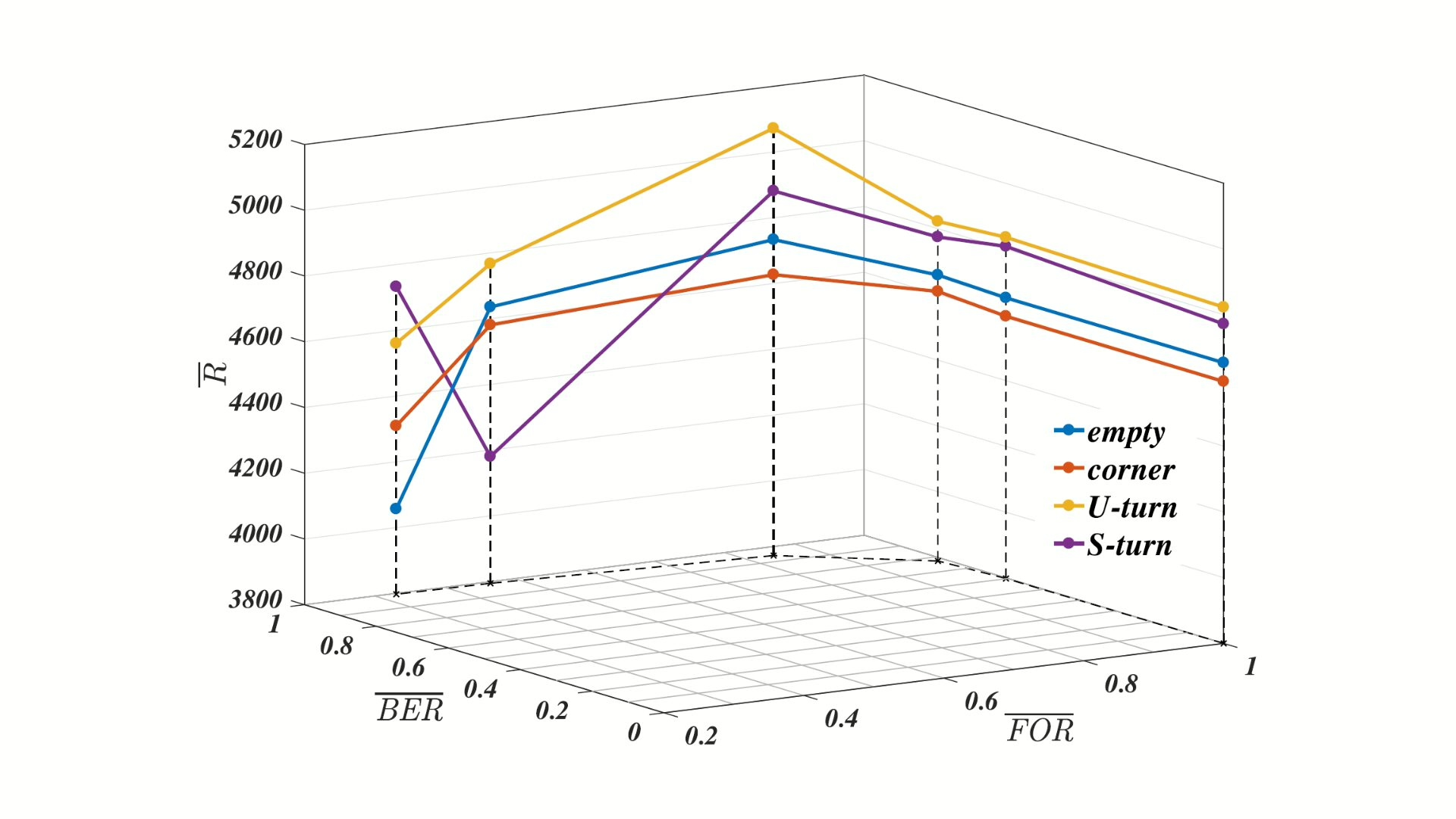

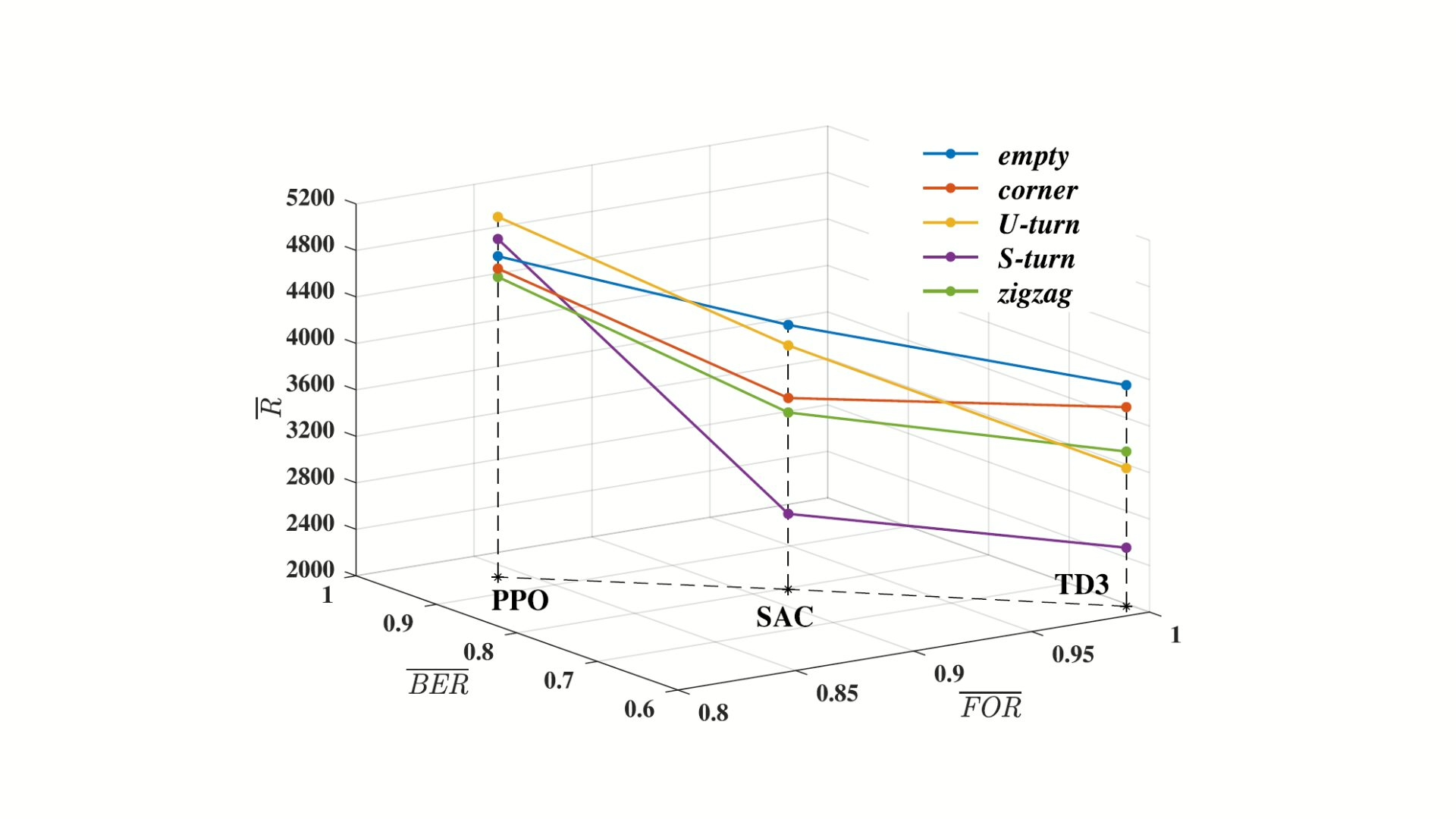

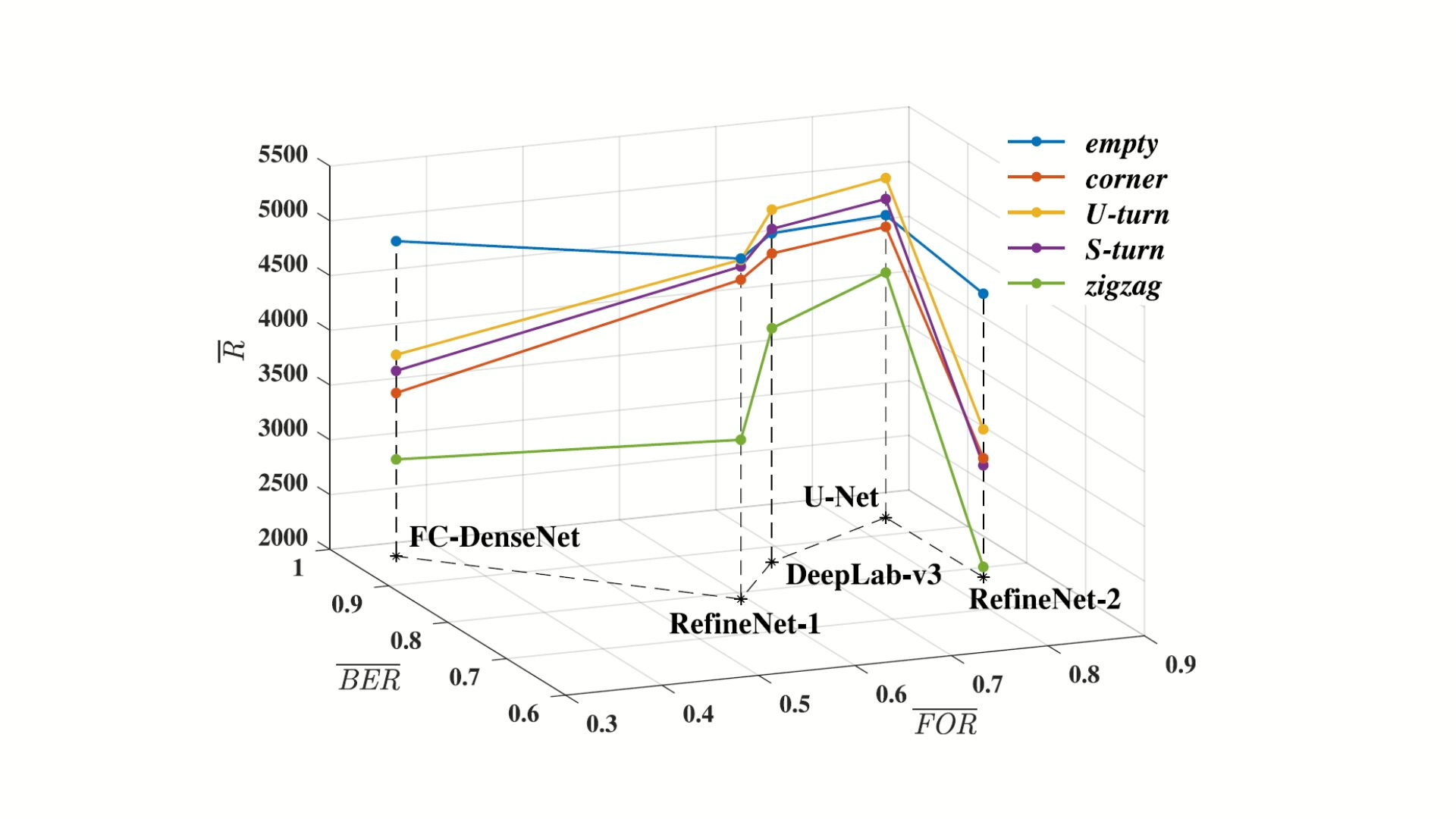

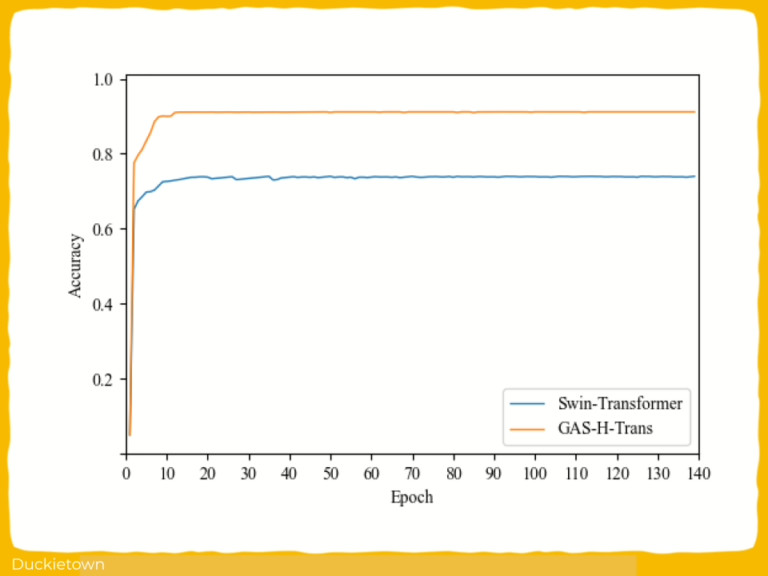

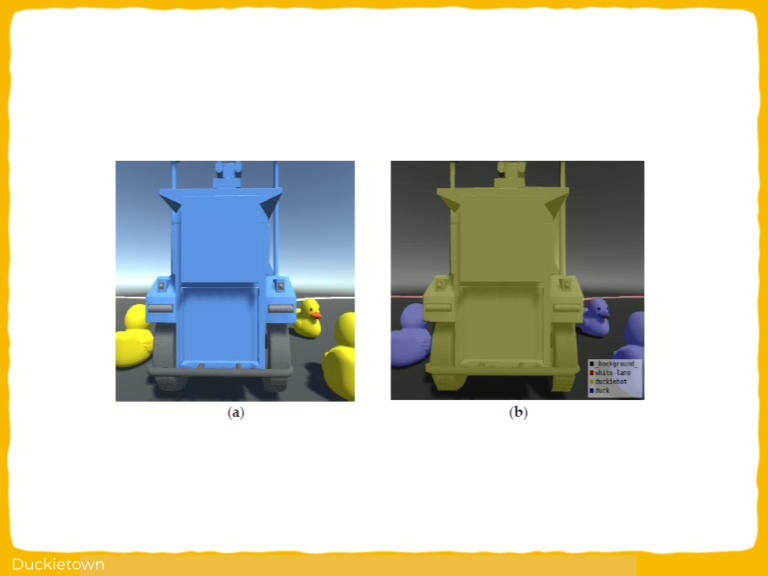

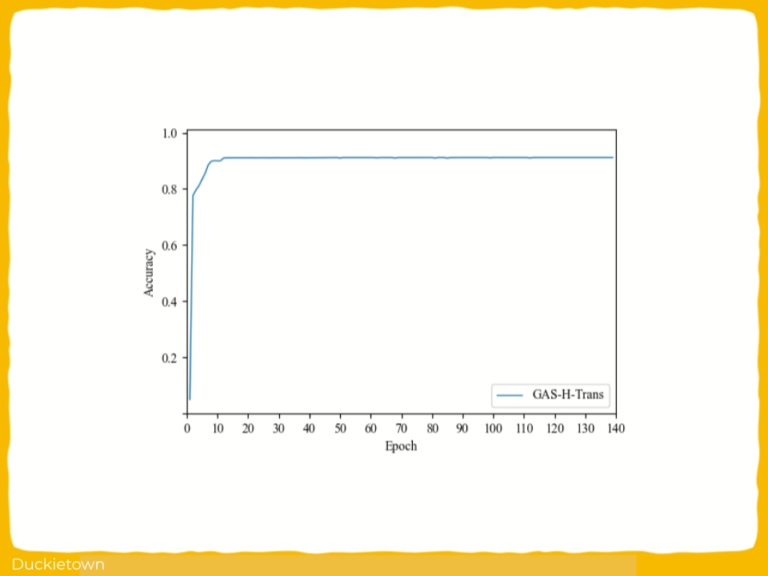

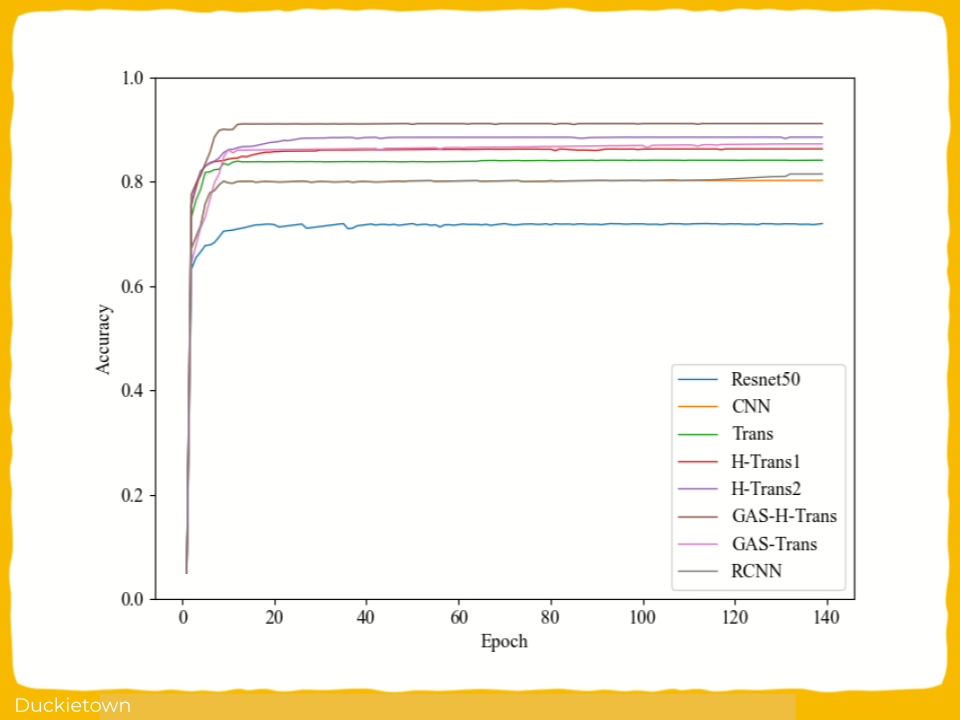

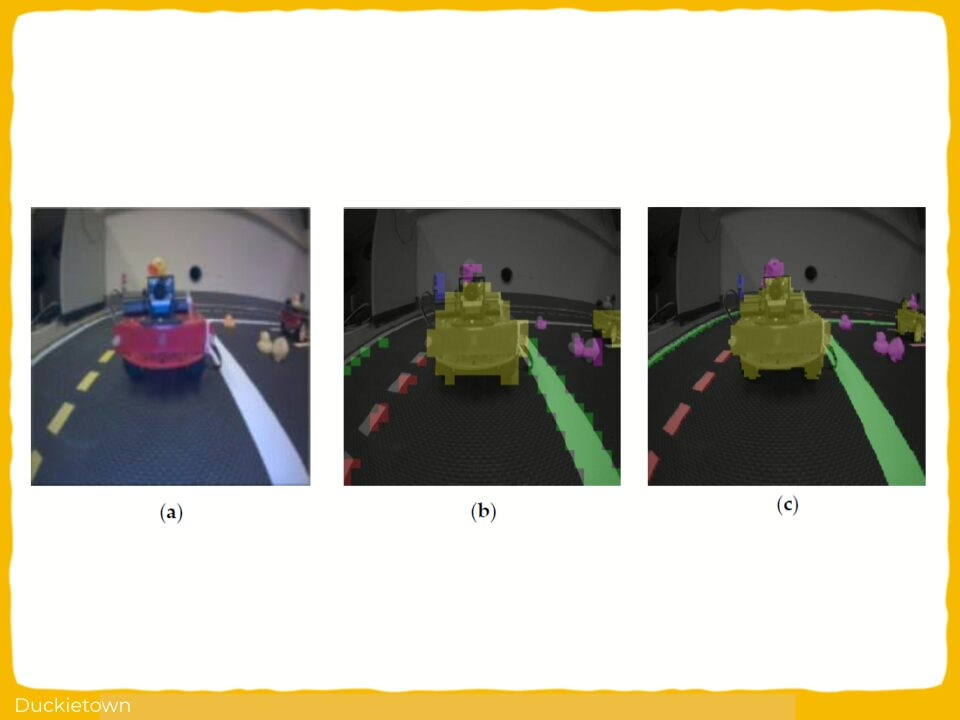

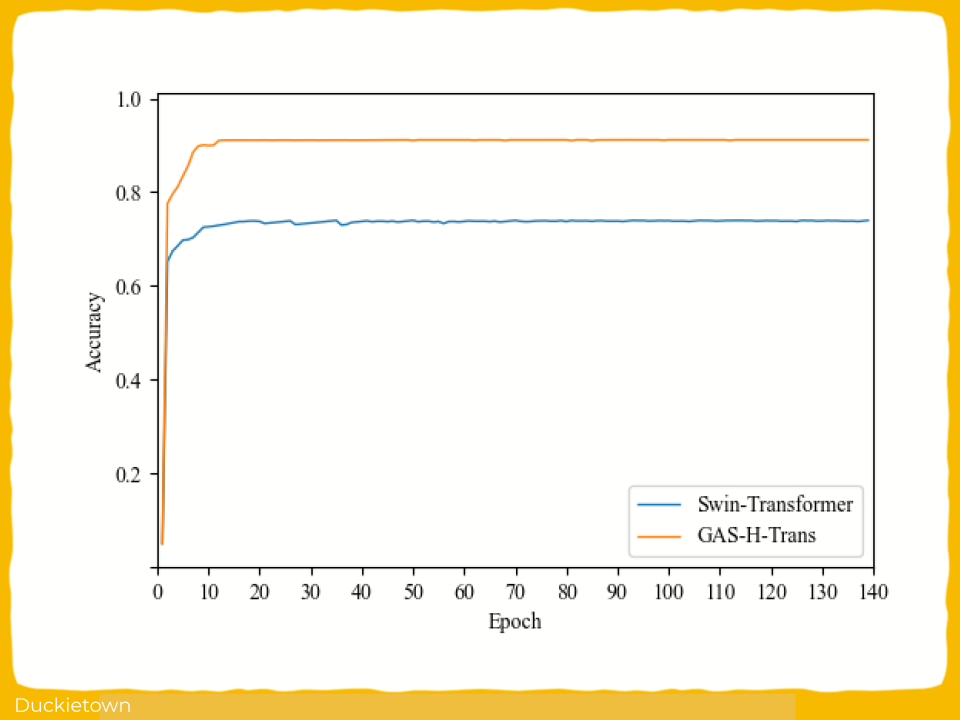

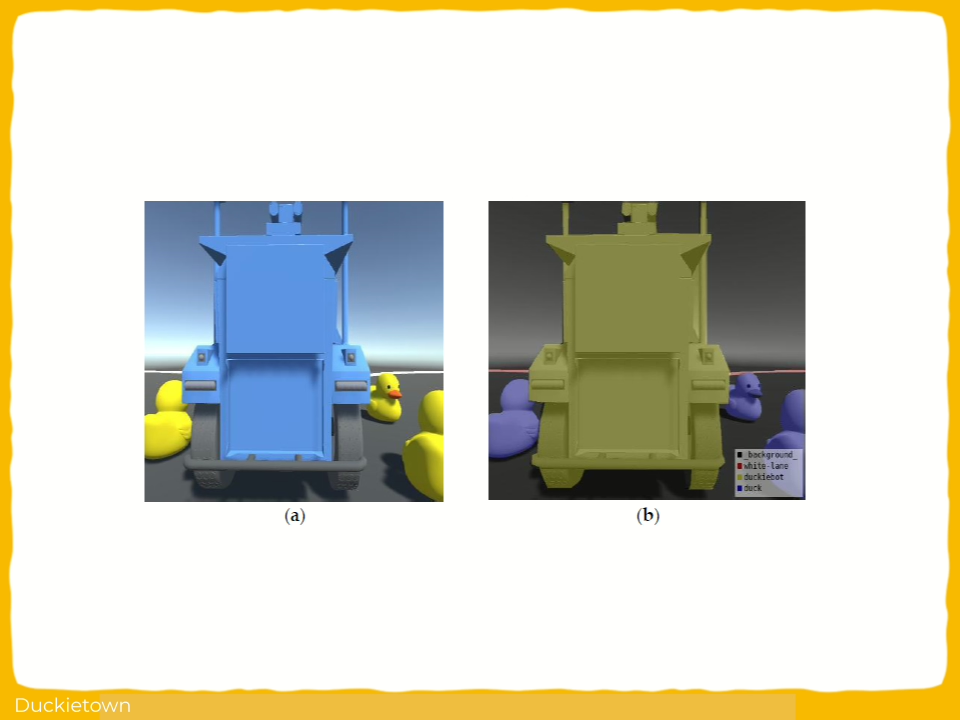

- Semantic Image Segmentation Methods in the Duckietown Project

- Visual Monitoring of Swarms of Industrial Robots

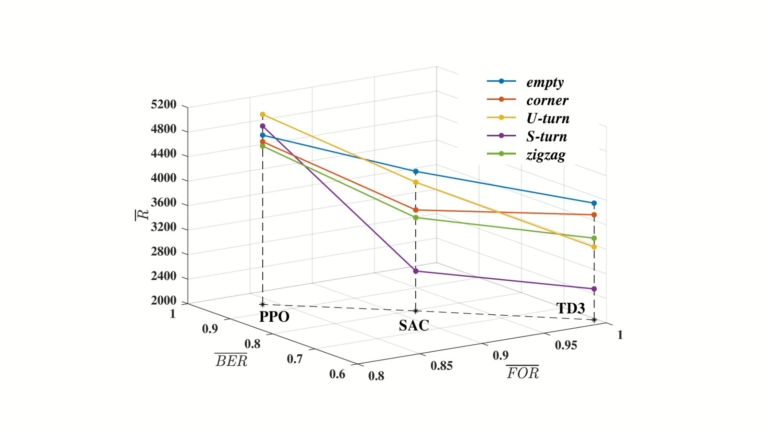

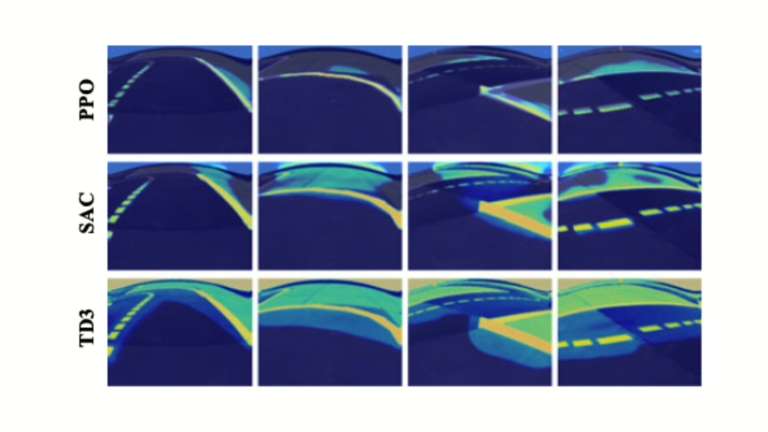

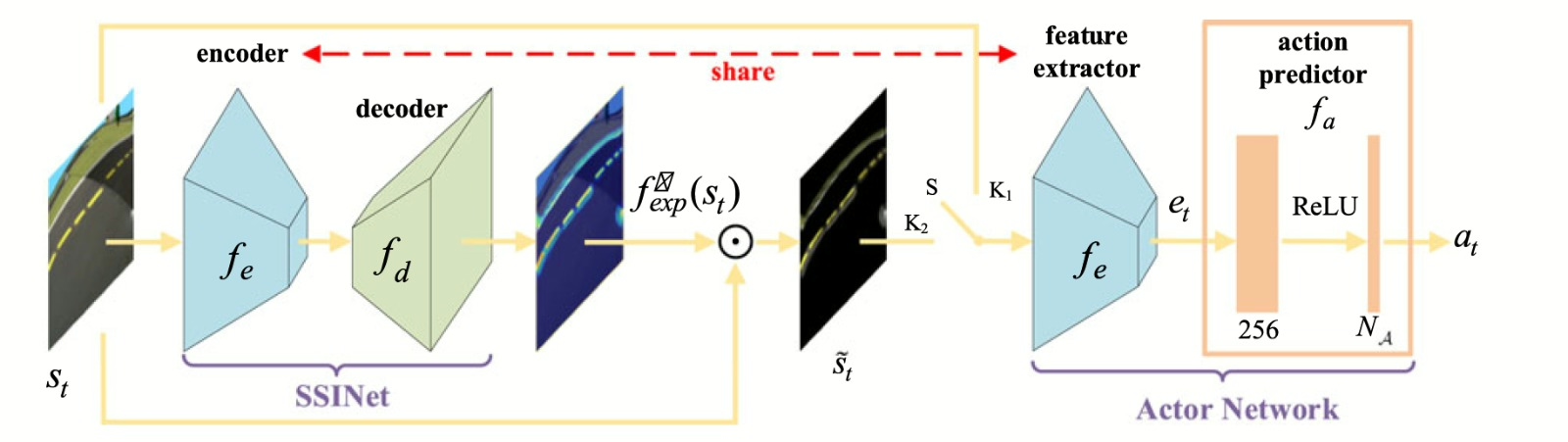

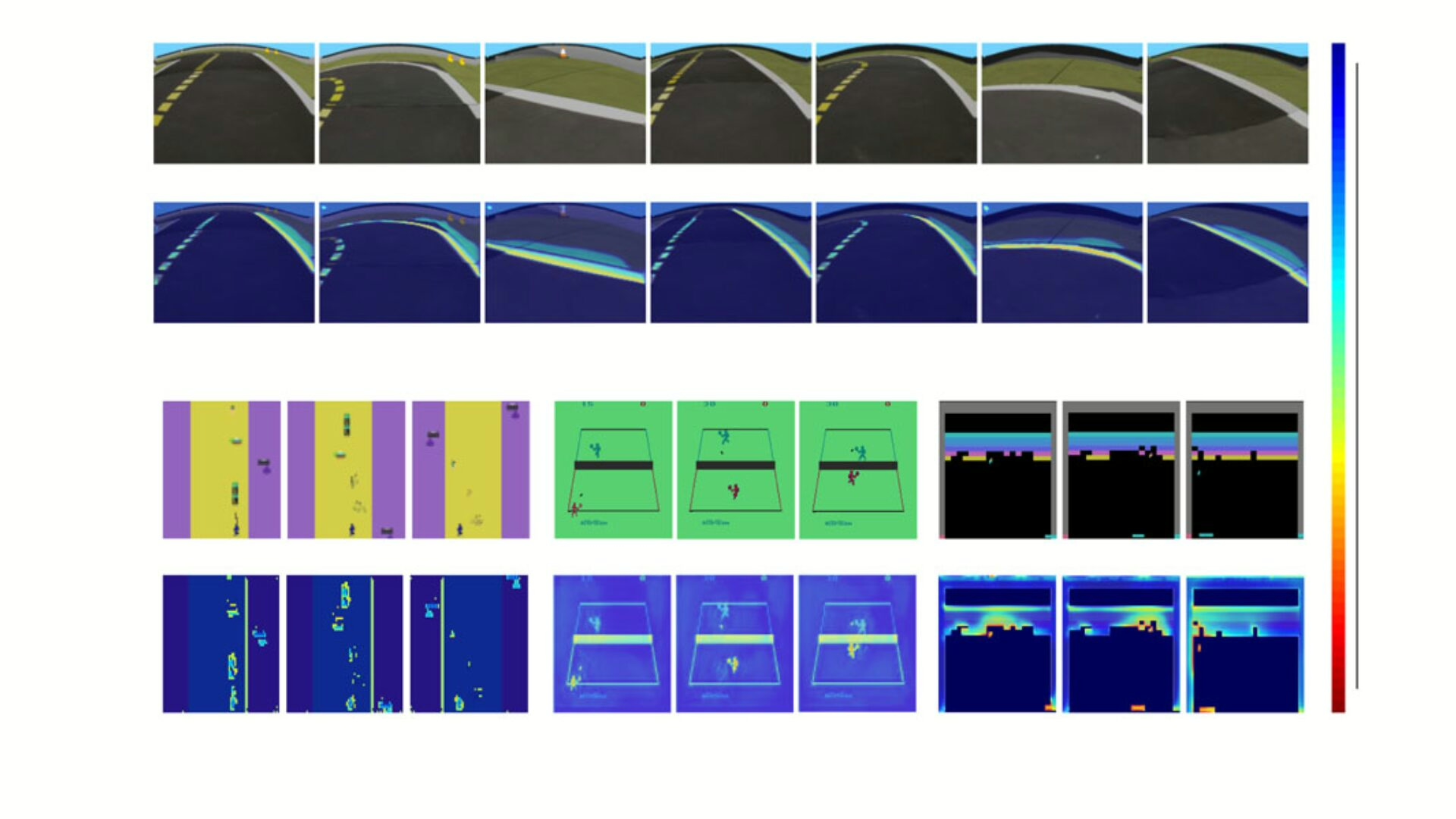

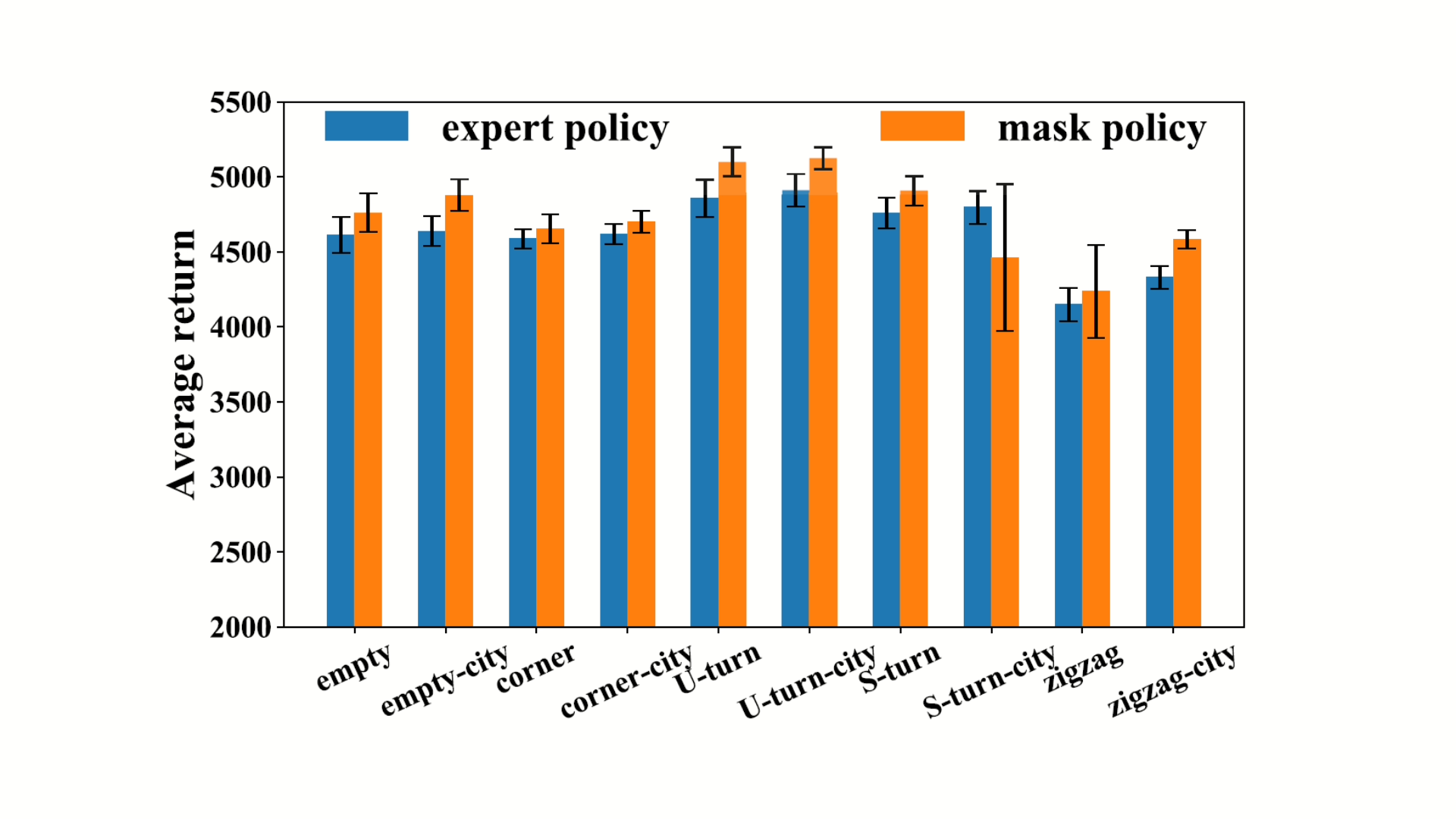

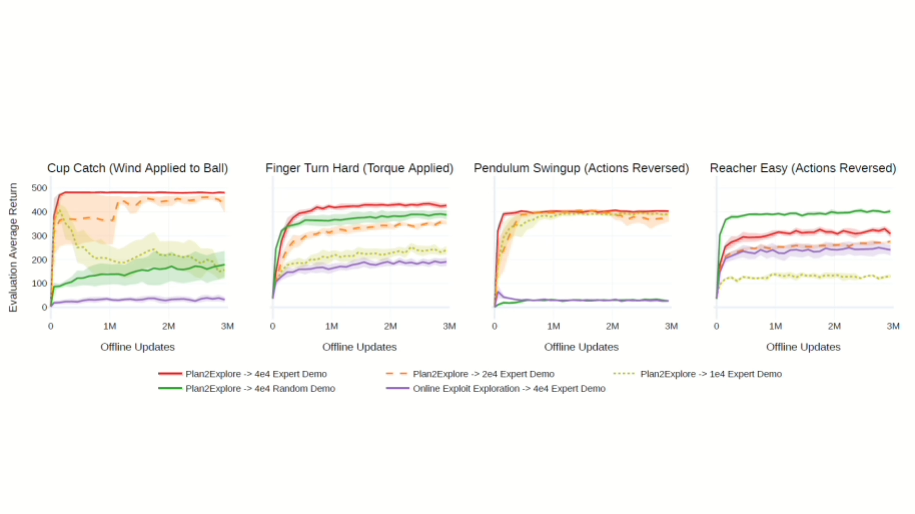

- Deep Reinforcement and Transfer Learning for Robot Autonomy

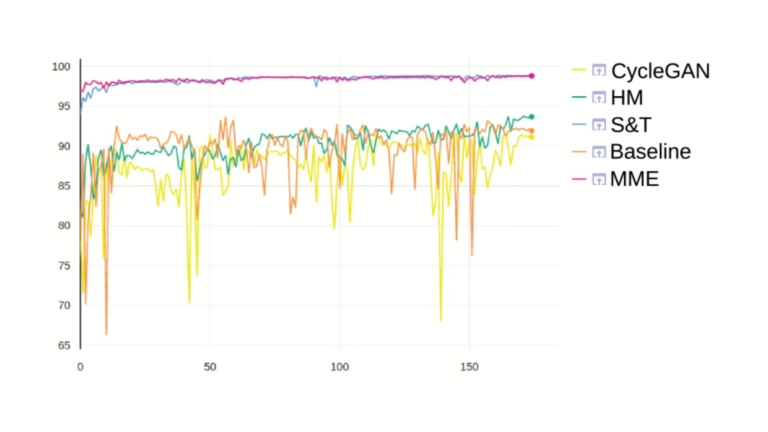

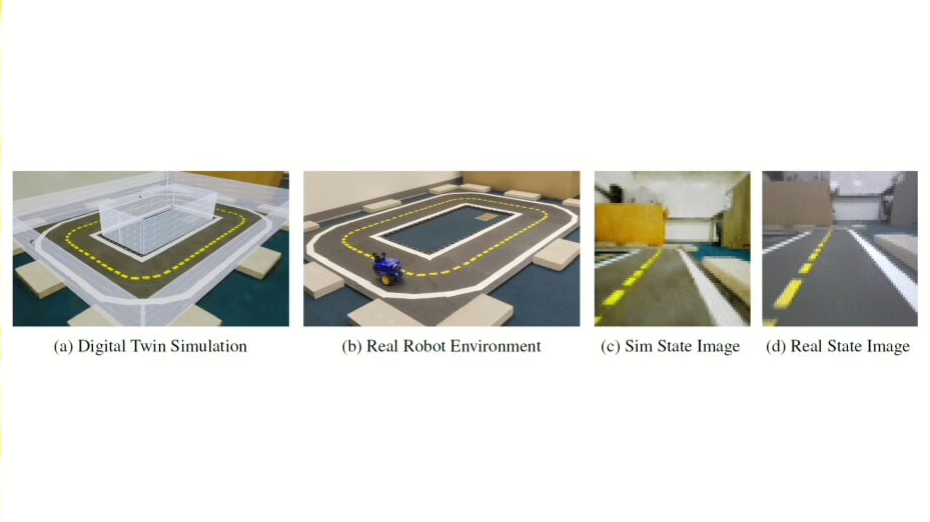

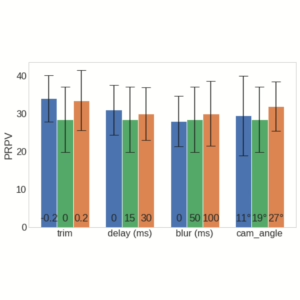

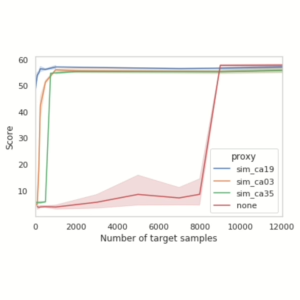

- Enhancing Visual Domain Randomization with Real Images for Sim-to-Real Transfer

Project Authors

Shima Akbari is a PhD student in the Italian National Program in Autonomous Systems at the University of Rome Tor Vergata, Italy.

Nima Akbari is a PhD student at the University of Basel, Switzerland, working on privacy technologies for the Internet of Things.

Giuseppe Oriolo is a Full Professor of Automatic Control and Robotics at Sapienza University of Rome.

Sergio Galeani is a Full Professor at the University of Rome Tor Vergata, Italy.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.