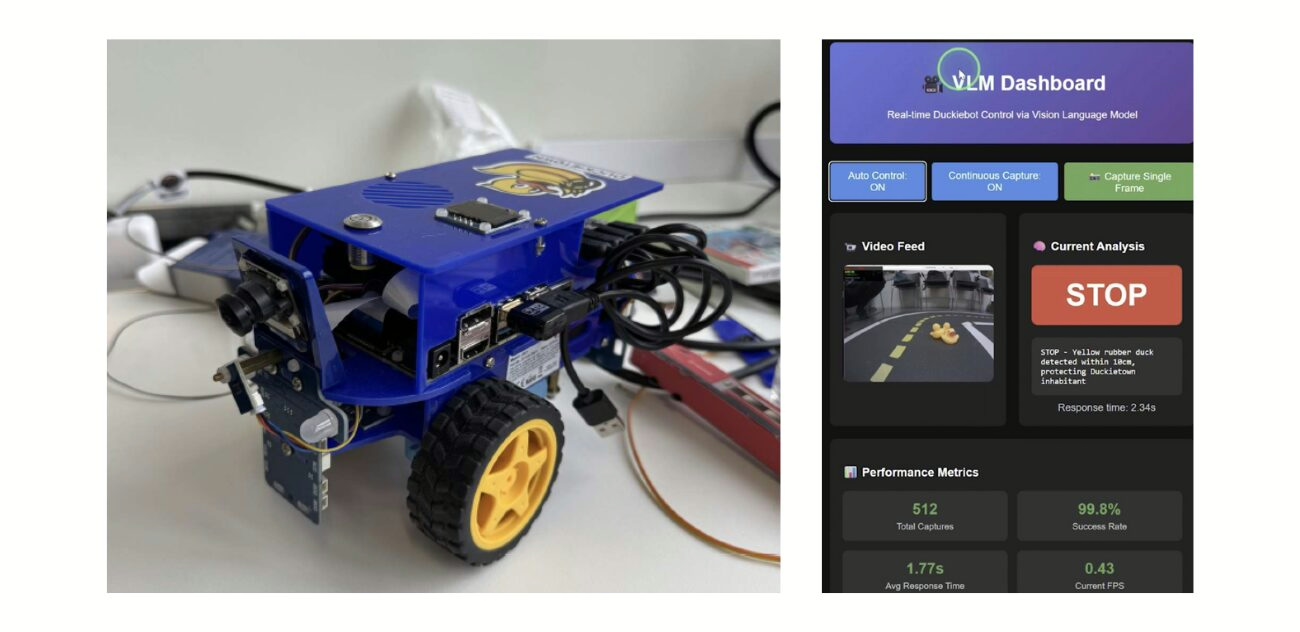

DB21v3-J Duckiebot upgrade kit now available

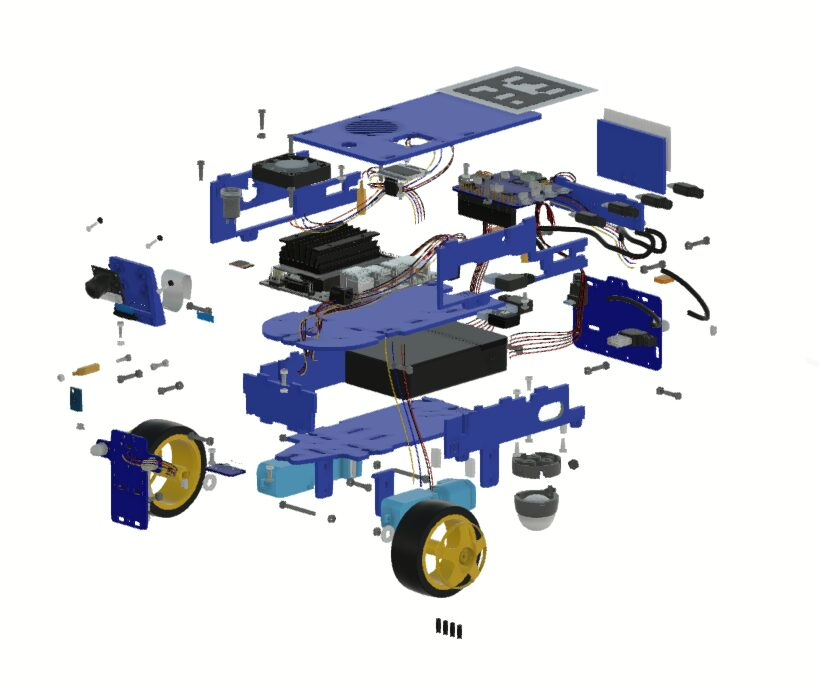

The DB21v3-J Duckiebot upgrade kit increases Duckiebot lifespan, enhances compatibility with different Jetson Nano 4GB development kits, improves driving performance, and reduces chassis assembly time.

Upgrading your Duckiebot from DB21-M or DB21-J to DB21v3-J

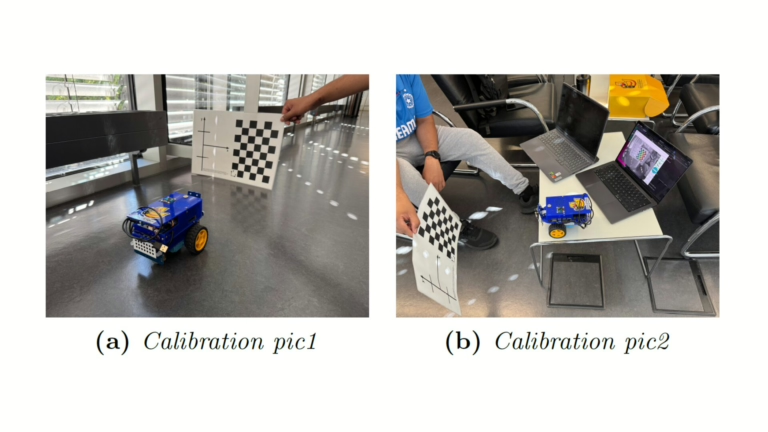

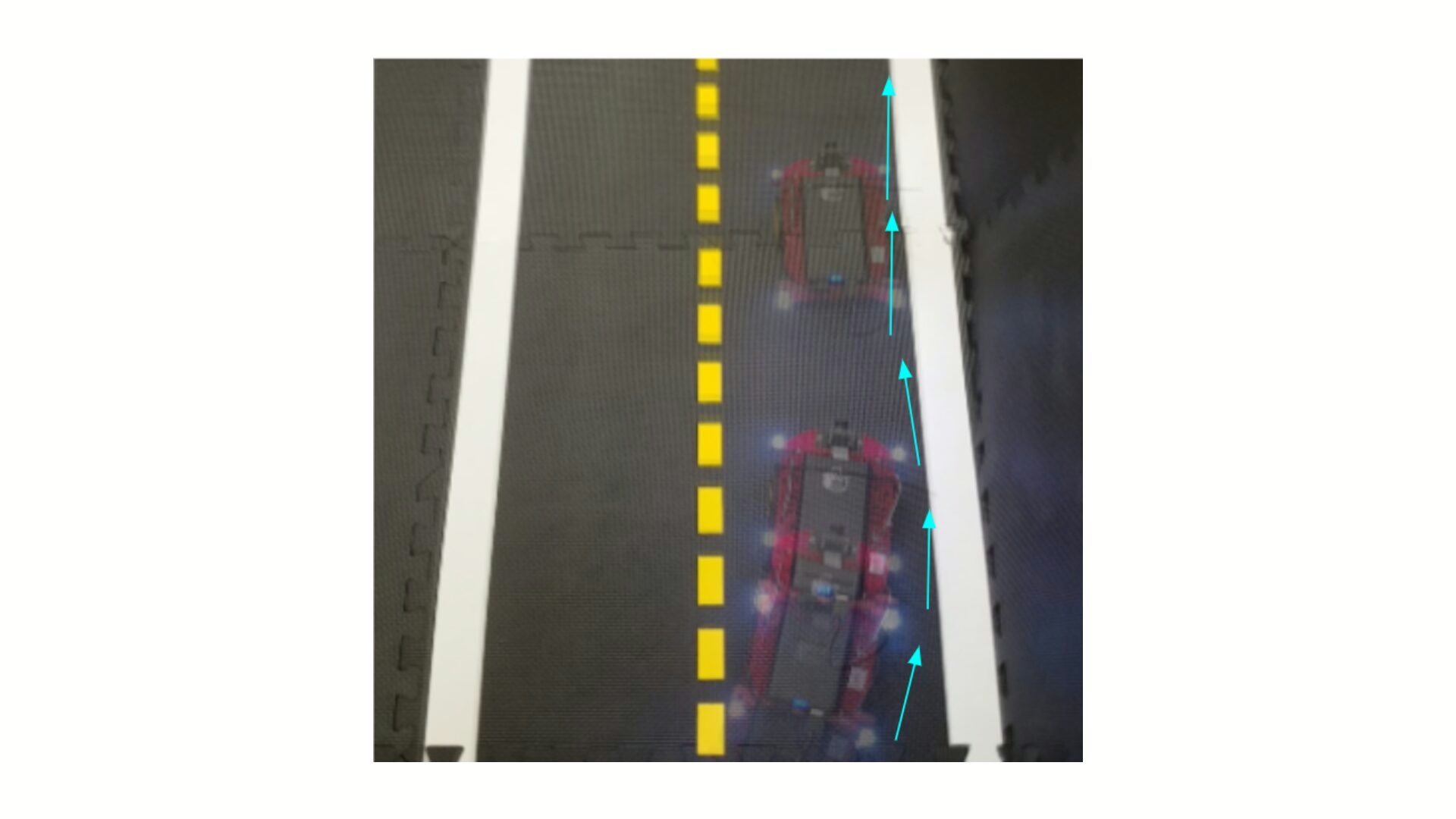

Building on the experience and feedback from users worldwide, Duckiebots have undergone many design iterations throughout the years.

This chassis upgrade, from DB21-M or DB21-J to DB21v3-J, introduces long-awaited improvements to the Duckiebot DB21 design, leading to shorter assembly time, better driving performance, and an overall improved user experience.

New omnidirectional wheel

- Improved rigidity: three points of contact with the chassis instead of two, resulting in better overall driving performance.

- Designed for maintenance: the new omnidirectional wheel can be opened and cleaned, so the gunk that naturally builds up inside it can be removed. This increases the lifespan of the wheel and, to some extent, of the whole robot.

- Uniform friction in all directions: thanks to its symmetric design and non-deformable components, this wheel provides more isotropic performance than the previous model, leading to less force disturbance on the chassis and better overall driving performance.

Easier assembly process

- Faster to assemble: thanks to parts that fit together precisely

- More enjoyable to assemble: thanks to a more reproducible Duckiebot assembly experience

Compatible chassis

The upgraded chassis now supports multiple Jetson Nano variants, including the Jetson Orin Nano Super, OKdo's C100 Jetson Nano 4GB development kit, and the Waveshare Jetson Dev Kit.

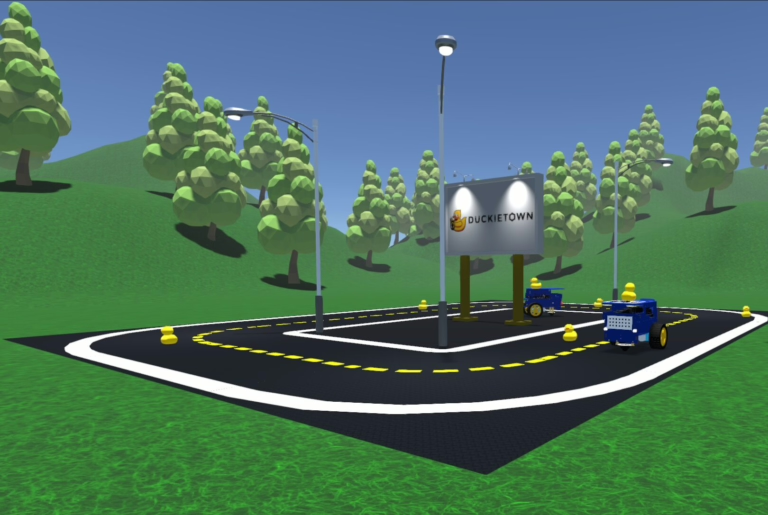

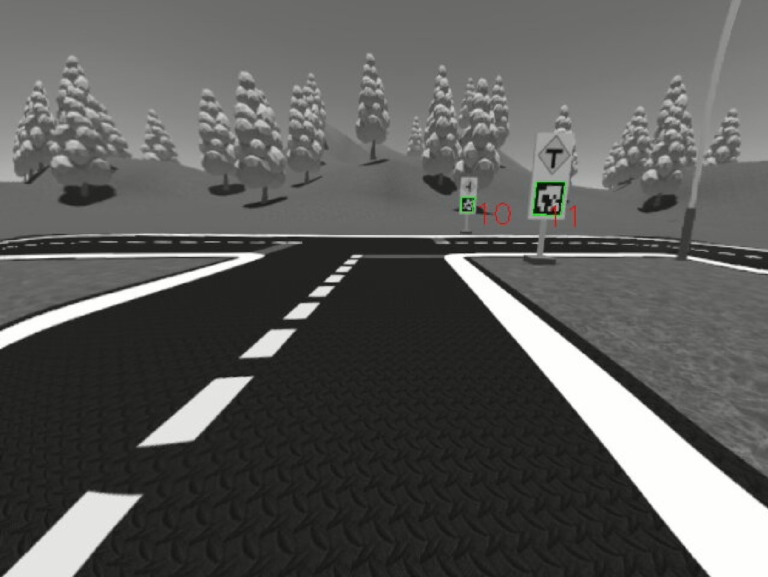

Learn more about Duckietown

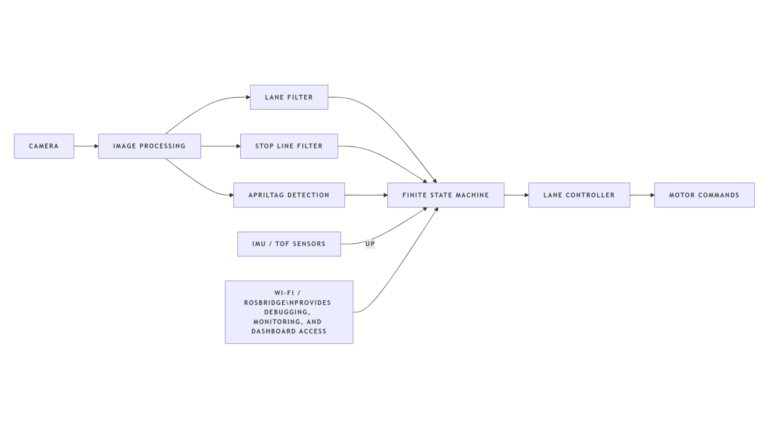

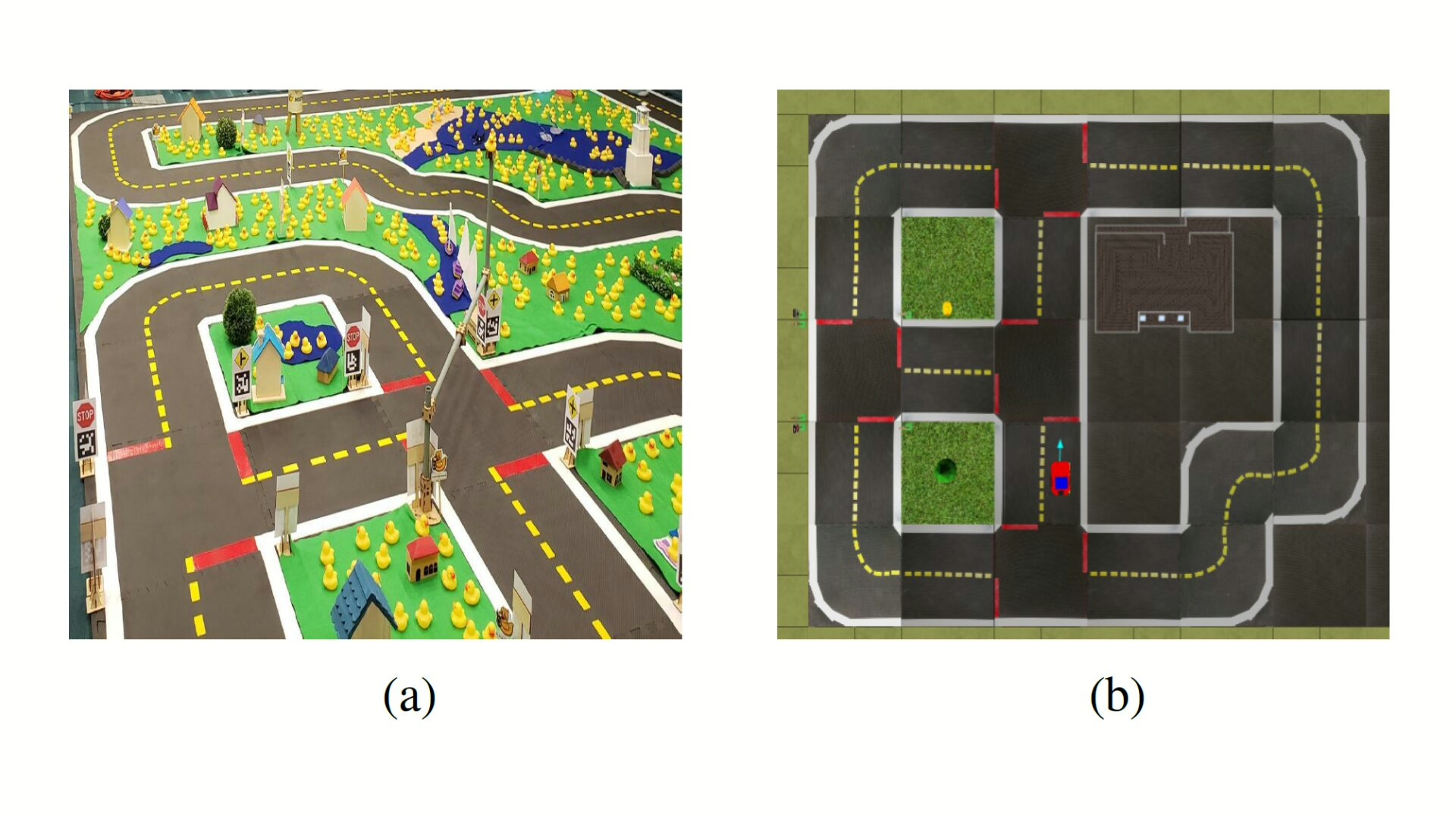

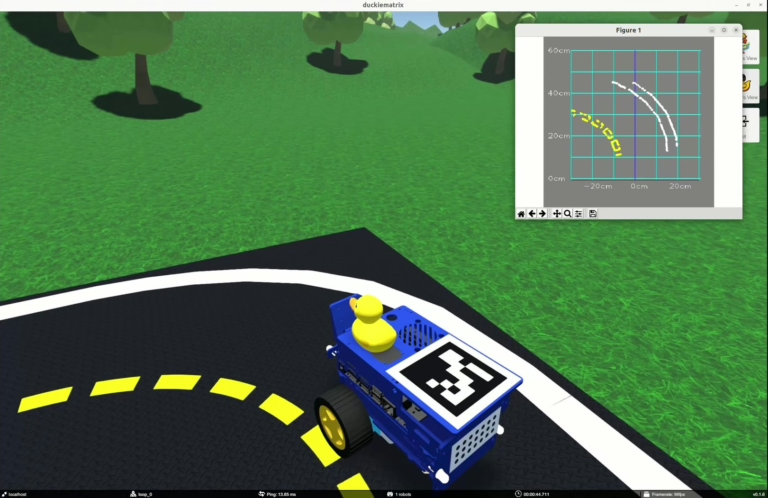

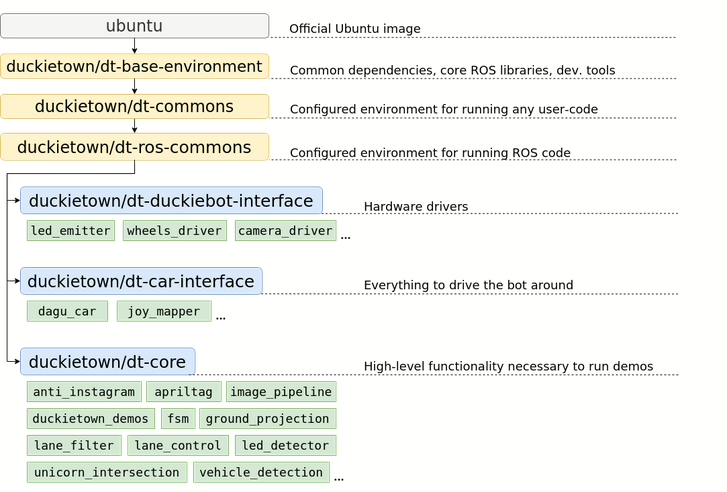

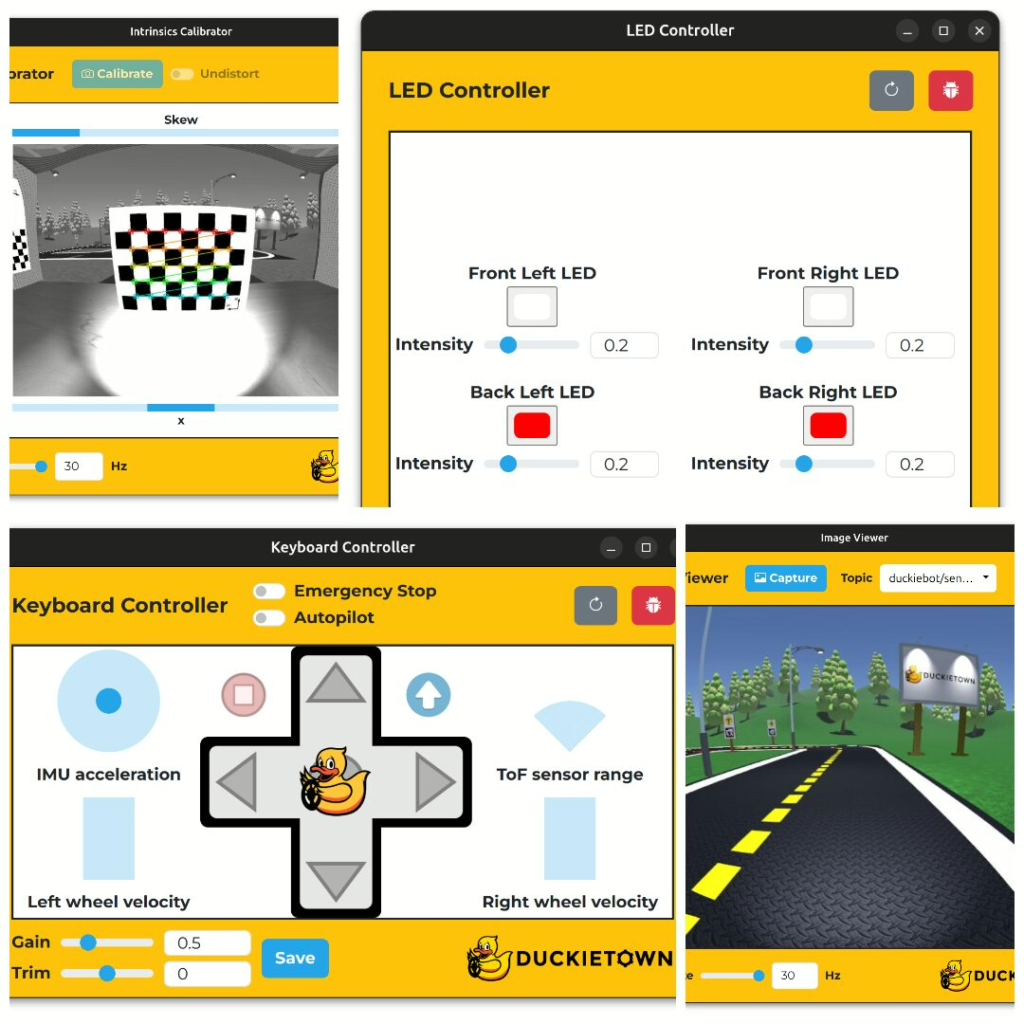

Duckietown is a set of tools that enables hands-on robotics and AI learning experiences.

It is designed to help teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of human knowledge.