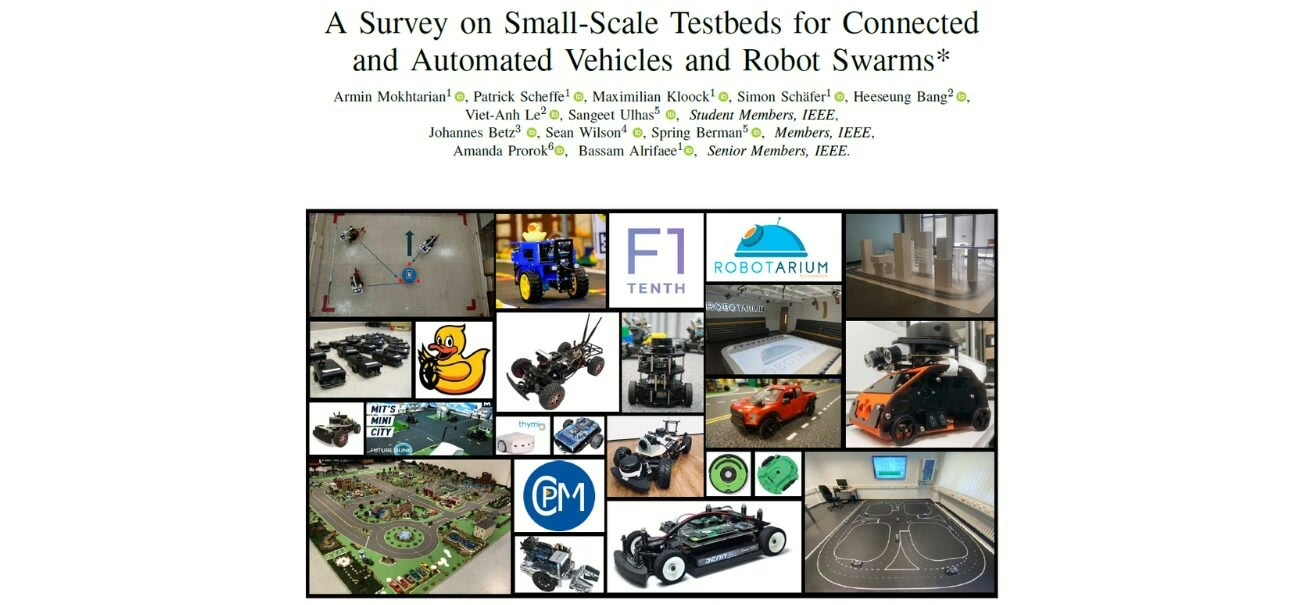

General Information

- Title: Agent-Based Autonomous Robotic System Using Deep Reinforcement and Transfer Learning

- Authors: Vladyslav Kyryk, Maksym Figat, Marian Kyryk

- Institution: Warsaw University of Technology, Warsaw, Poland

- Citation: Kyryk, V., Figat, M., Kyryk, M. (2024). Agent-Based Autonomous Robotic System Using Deep Reinforcement and Transfer Learning. In: Luntovskyy, A., Klymash, M., Melnyk, I., Beshley, M., Schill, A. (eds) Digital Ecosystems: Interconnecting Advanced Networks with AI Applications. TCSET 2024. Lecture Notes in Electrical Engineering, vol 1198. Springer, Cham.

Deep Reinforcement and Transfer Learning for Robot Autonomy

Developing autonomous robotic systems is challenging. With machine-learning-based approaches, one of the main obstacles is the high cost and complexity of real-world training. Running real-world experiments is time-consuming and, depending on the application, can also be expensive.

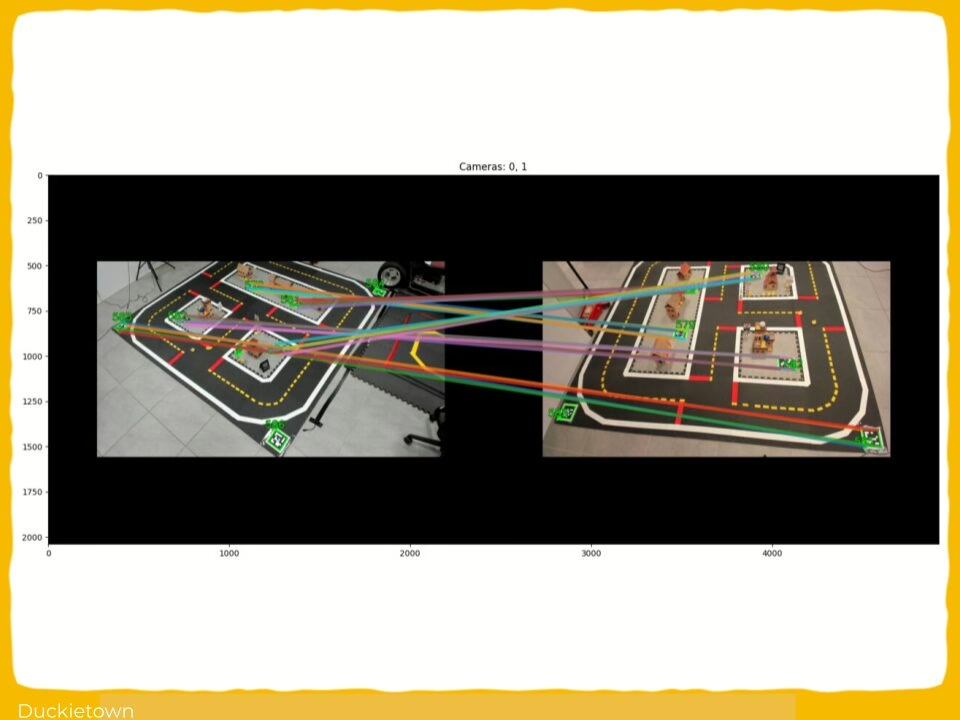

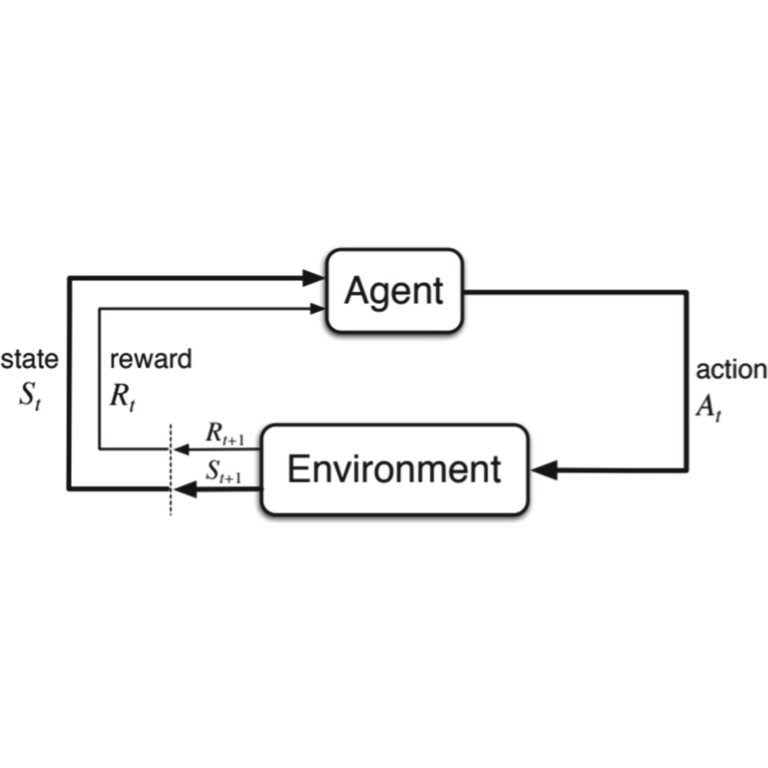

This work uses Deep Reinforcement Learning (DRL) and tackles this challenge through Transfer Learning (TL). DRL enables robots to learn optimal behaviors through trial-and-error, guided by reward-based feedback. Transfer Learning then addresses the high cost of generating training data by leveraging simulation environments.

Running experiments in simulation is time- and cost-efficient. The trained agent can then be deployed on a physical robot, a process known as Sim2Real transfer. Ideally, this approach significantly reduces training costs and accelerates real-world deployment.

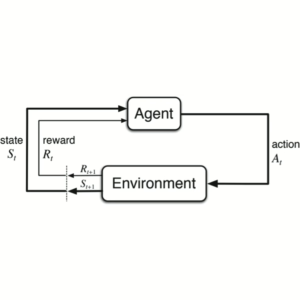

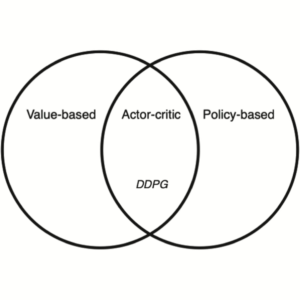

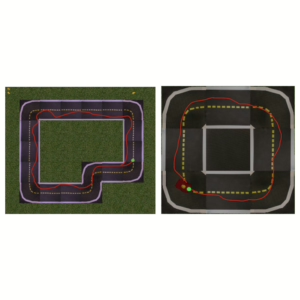

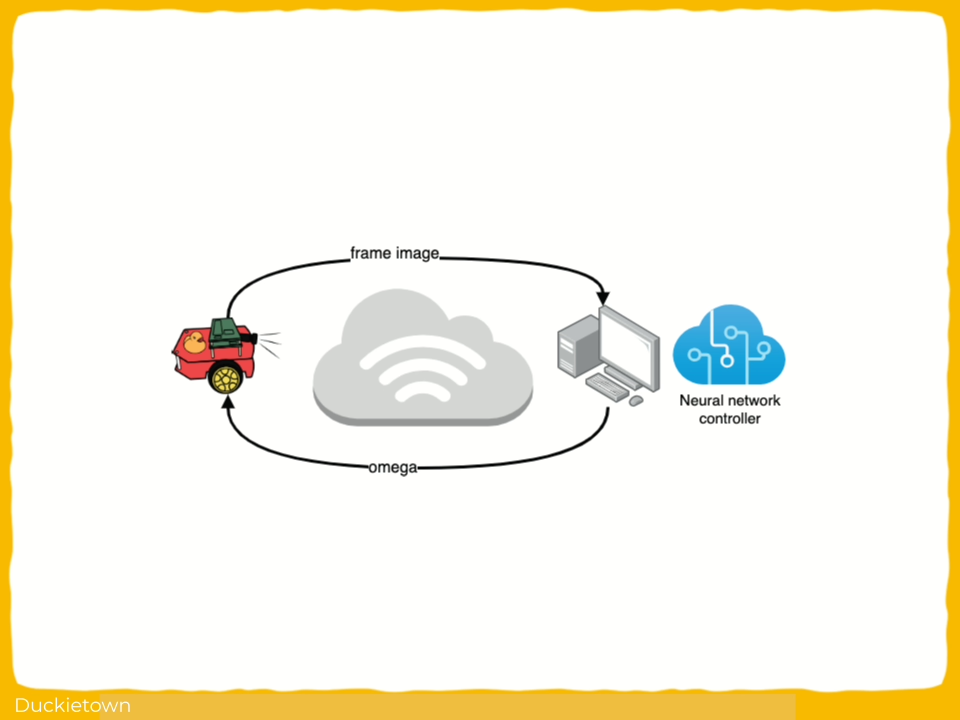

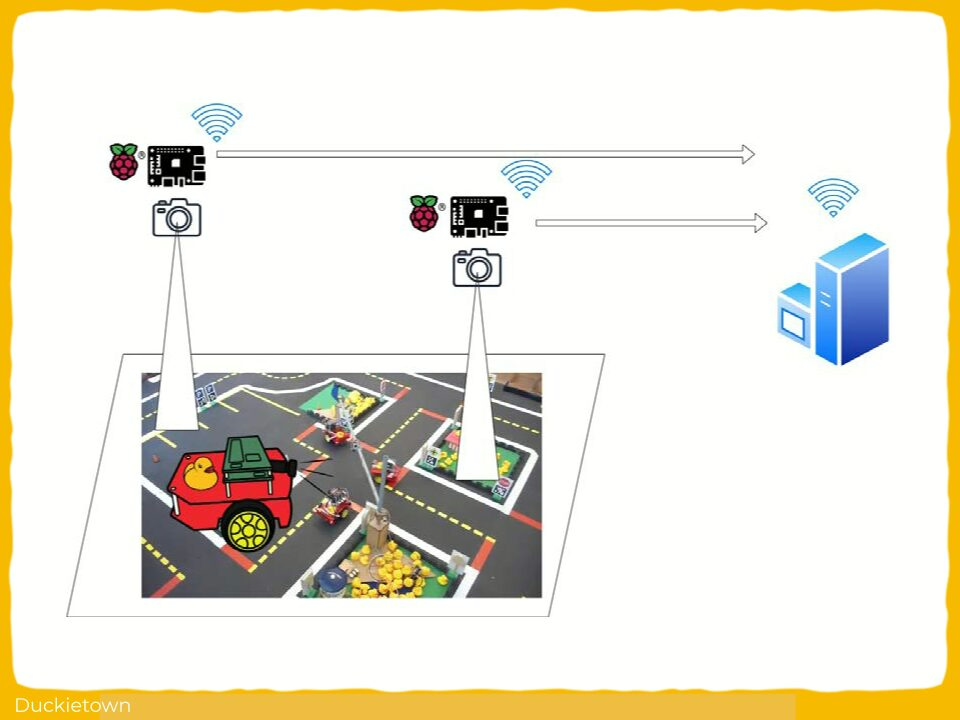

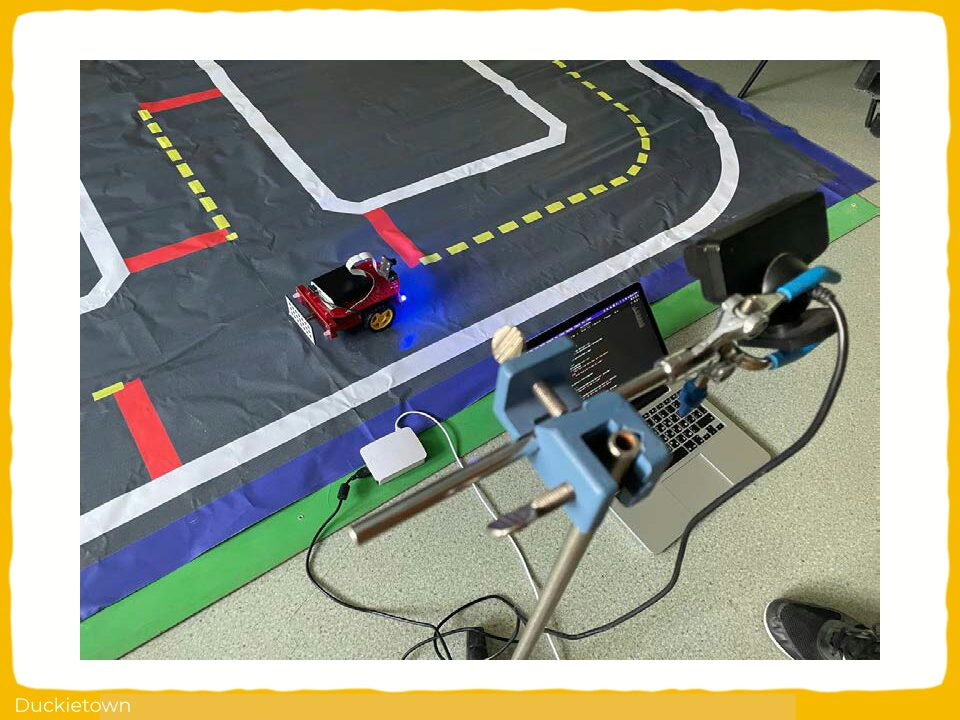

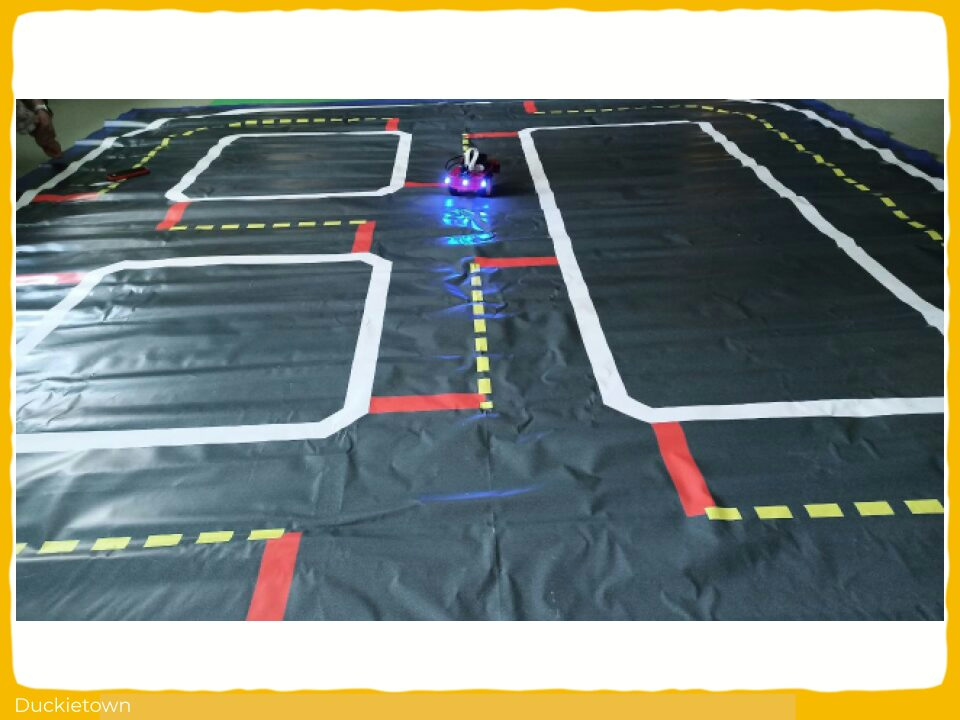

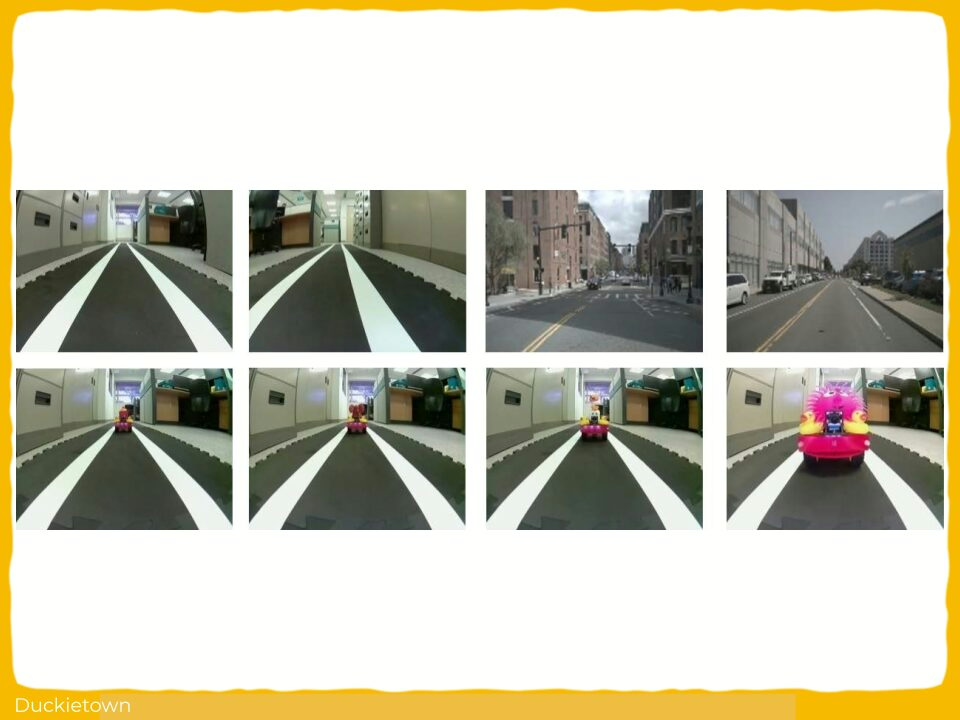

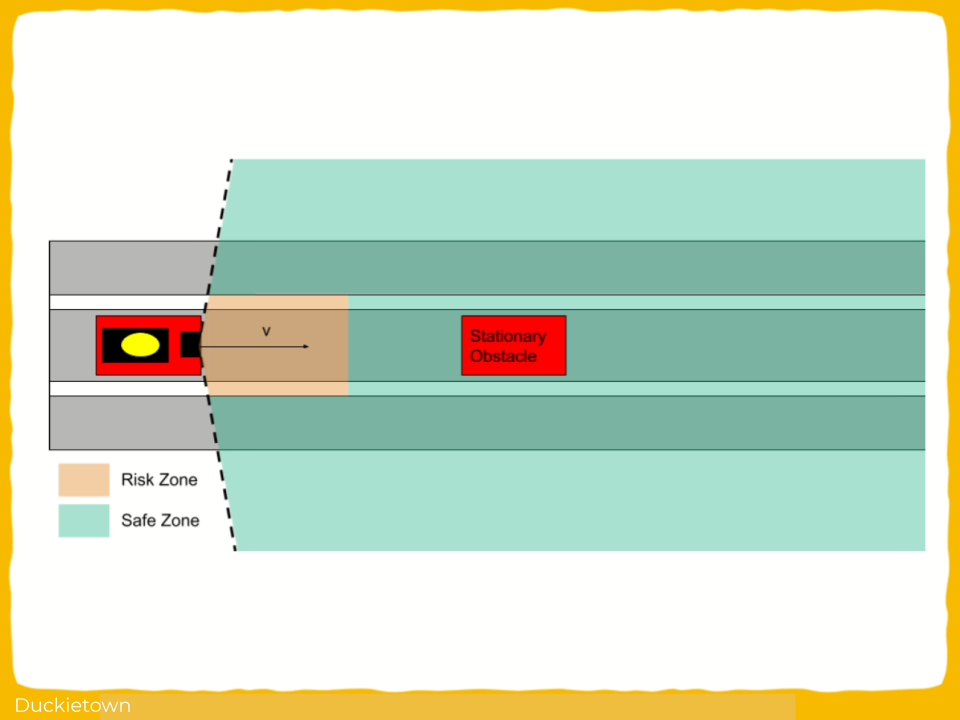

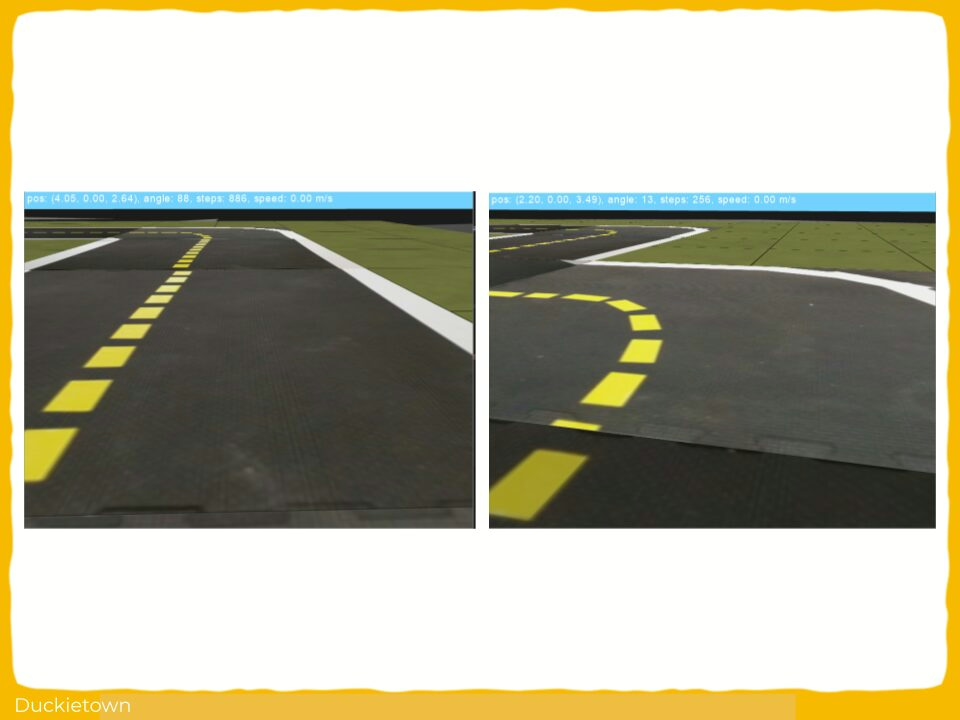

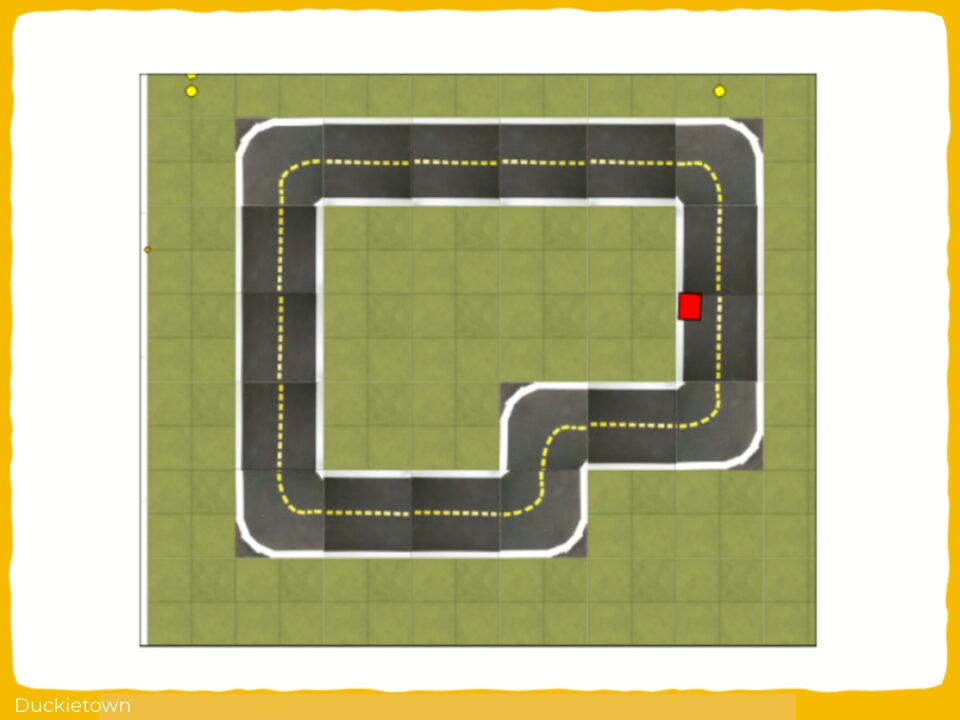

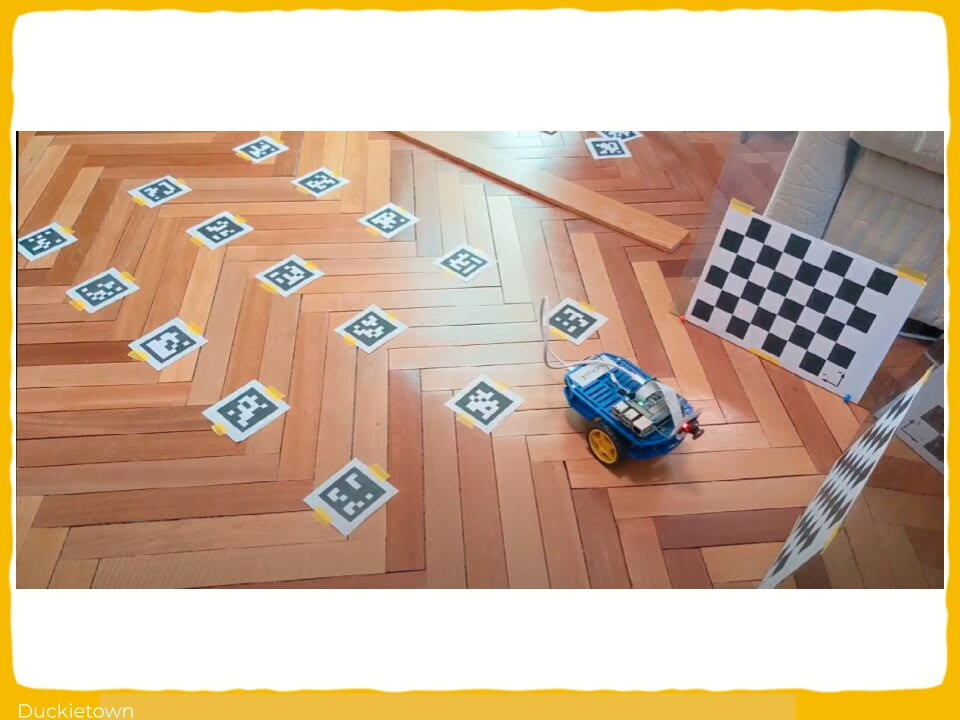

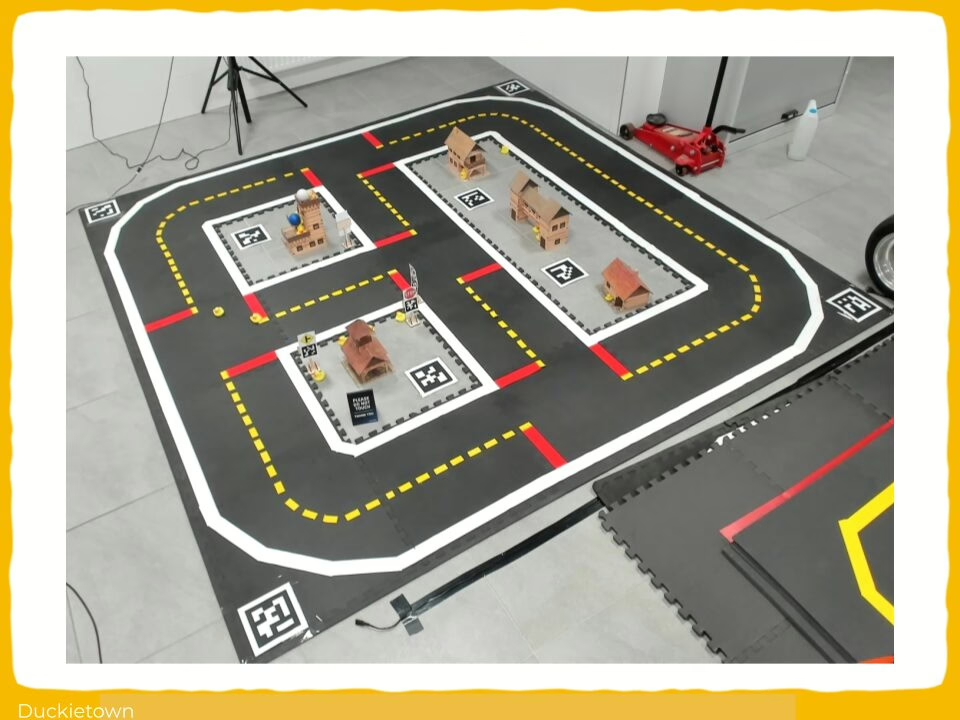

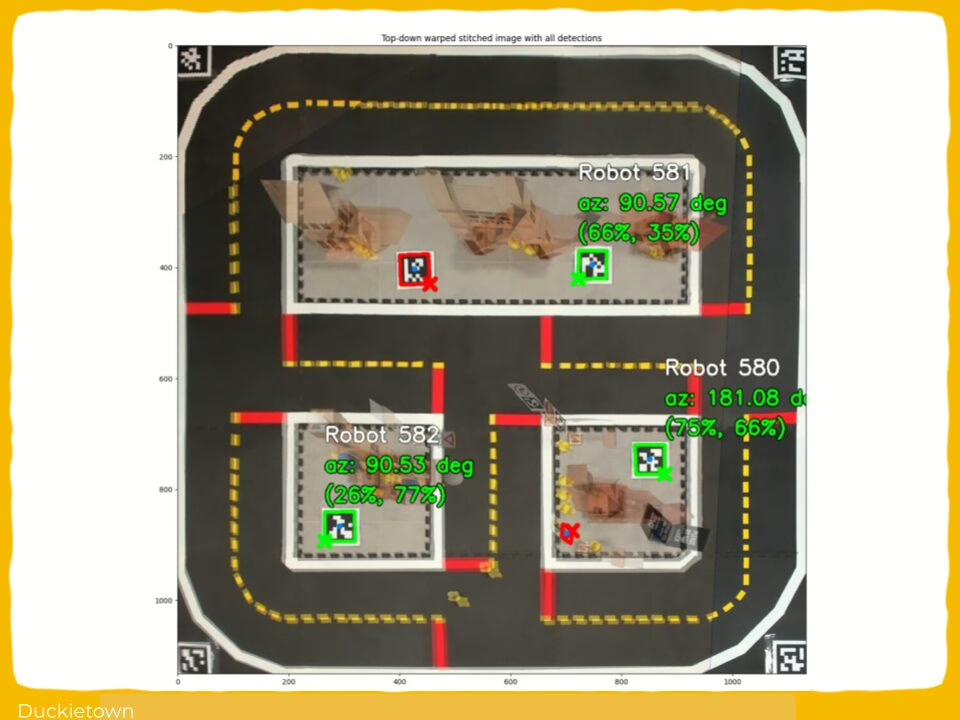

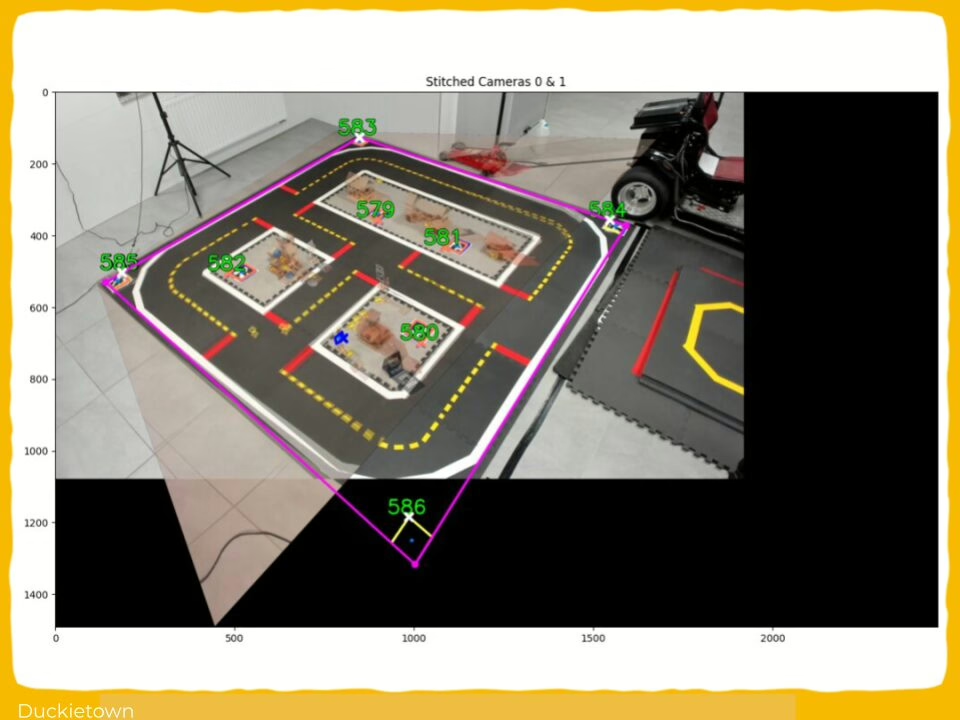

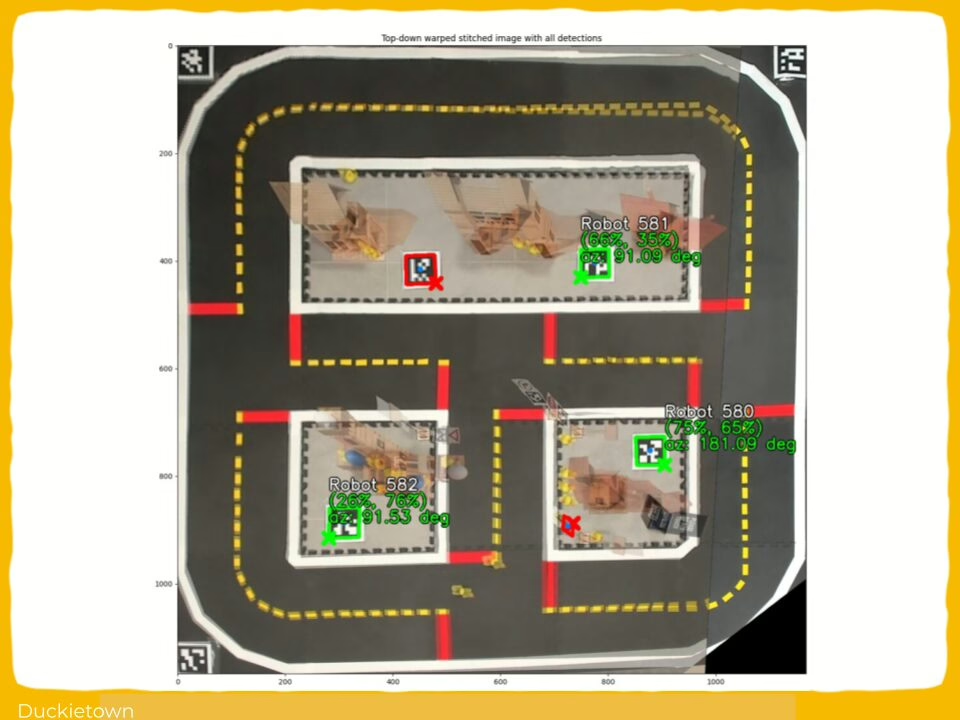

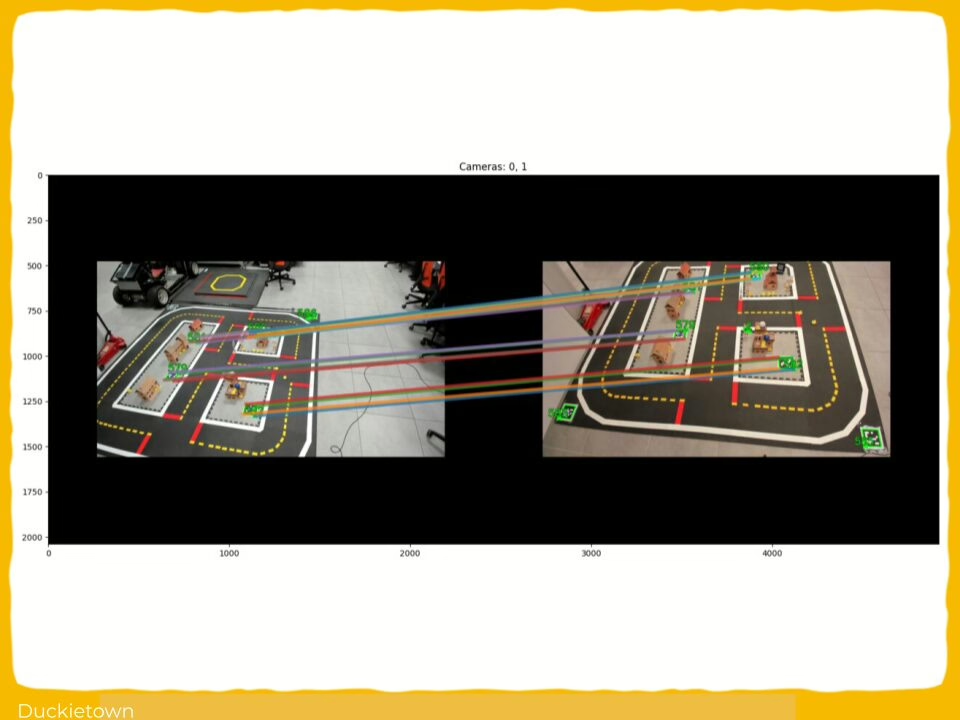

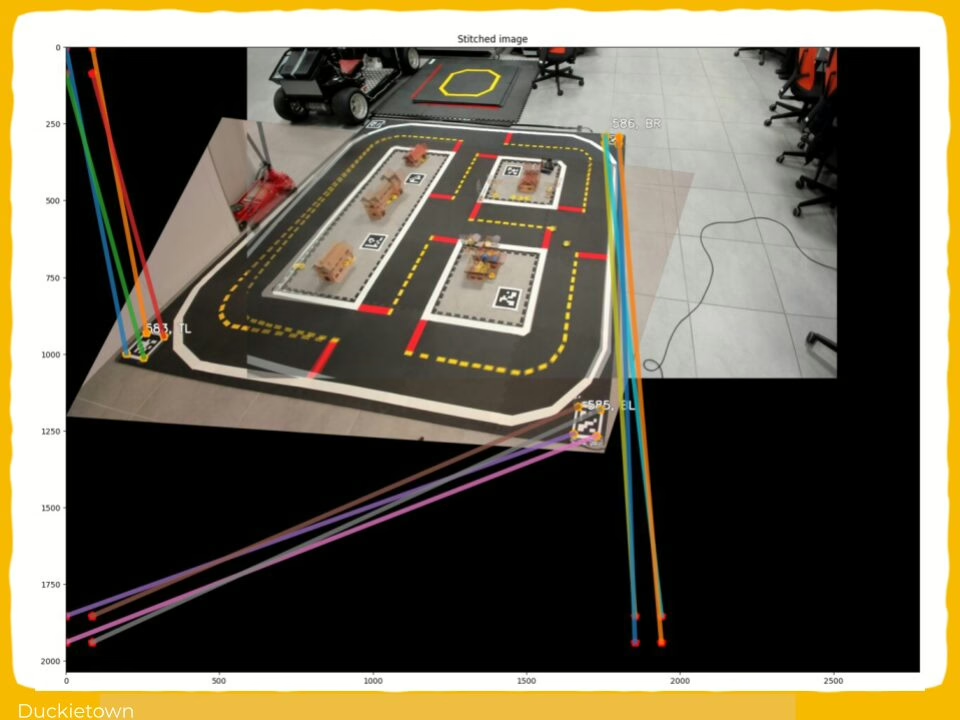

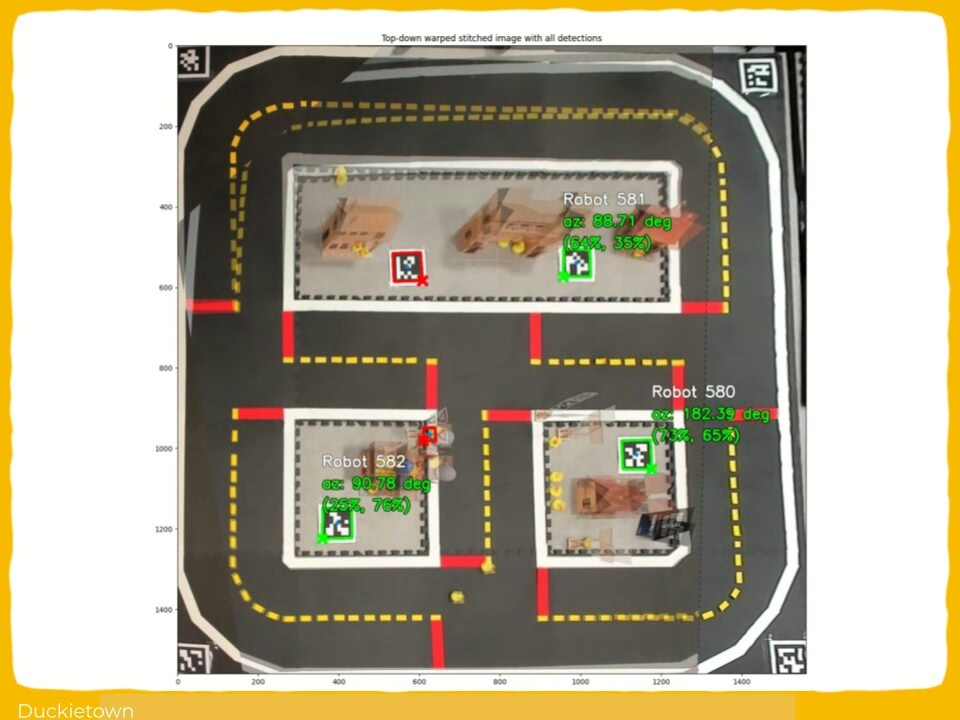

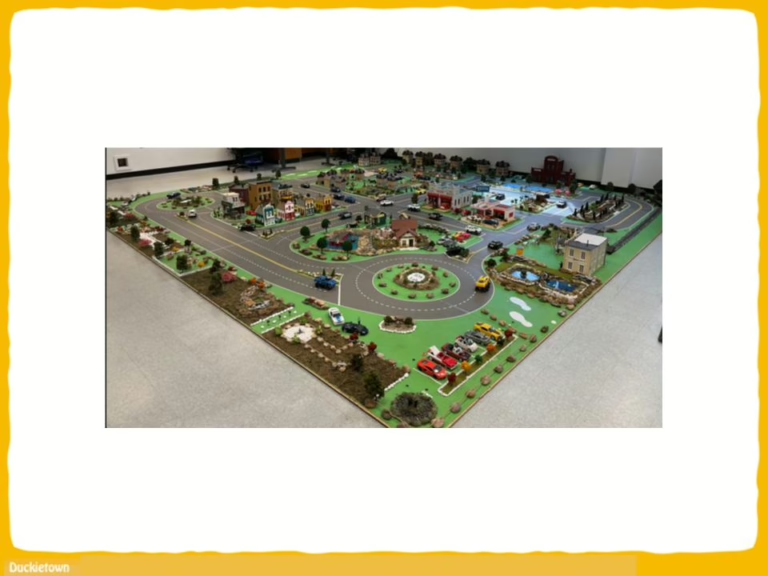

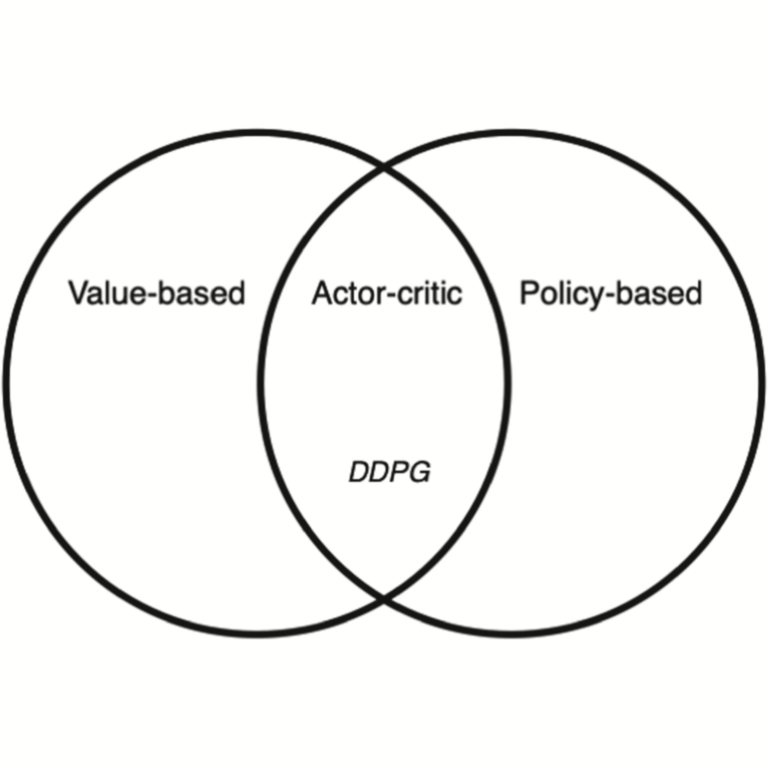

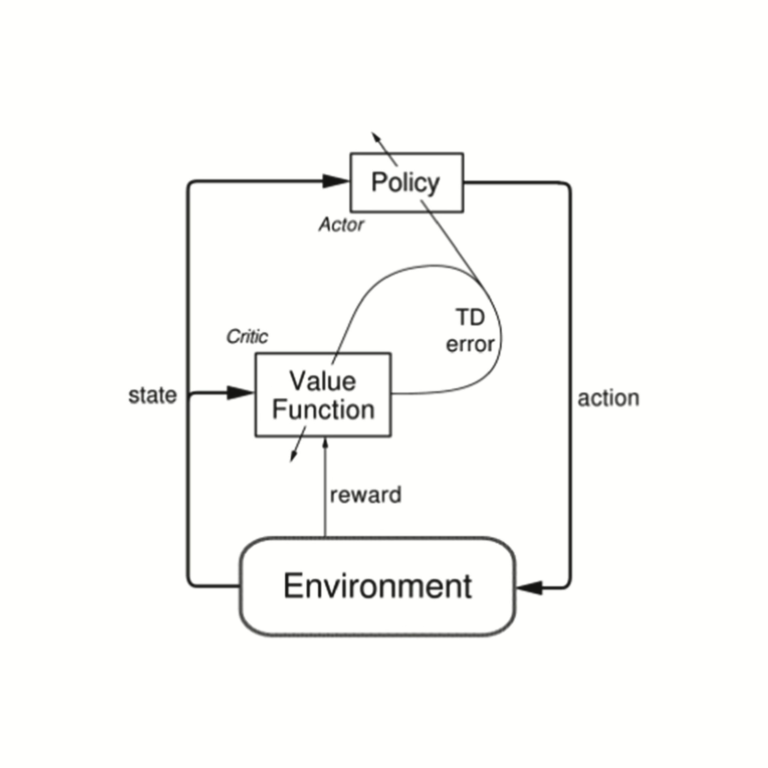

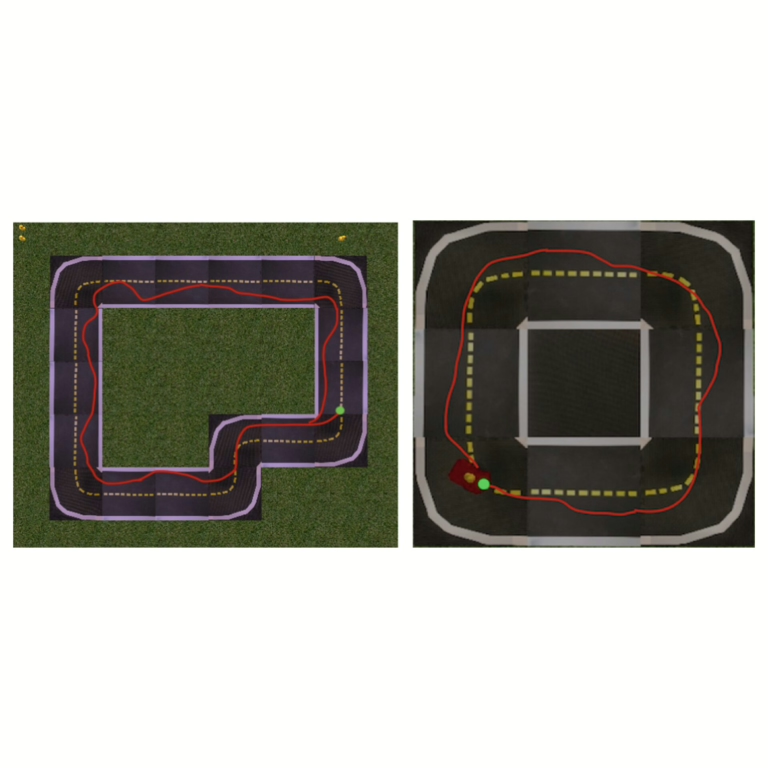

In this work, training occurs in a simulated Duckietown environment using Deep Deterministic Policy Gradient (DDPG) and TL techniques to mitigate the expected difference between simulated and real-world environments. The resulting agent is then deployed on a custom-built robot in a physical Duckietown city for evaluation.
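As a rough illustration of what DDPG does under the hood, the sketch below shows two standard ingredients of the algorithm: the bootstrapped target used to train the critic, and the slow "soft" update of the target networks. The function names and the values of `gamma` and `tau` are illustrative assumptions, not settings taken from the paper.

```python
def td_target(reward, next_q, done, gamma=0.99):
    """Critic target y = r + gamma * Q'(s', mu'(s')).

    next_q is the target critic's value for the next state and the target
    actor's action; it is ignored when the episode has ended.
    gamma is an assumed discount factor.
    """
    return reward + (0.0 if done else gamma * next_q)


def soft_update(target_params, online_params, tau=0.005):
    """Polyak averaging: target <- tau * online + (1 - tau) * target.

    Parameters are plain lists of floats here to keep the sketch
    framework-free; tau is an assumed update rate.
    """
    return [tau * w + (1.0 - tau) * t
            for t, w in zip(target_params, online_params)]
```

In a full implementation these two steps sit inside a loop that samples past transitions from a replay buffer, updates the critic toward `td_target`, and updates the actor to increase the critic's estimate.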

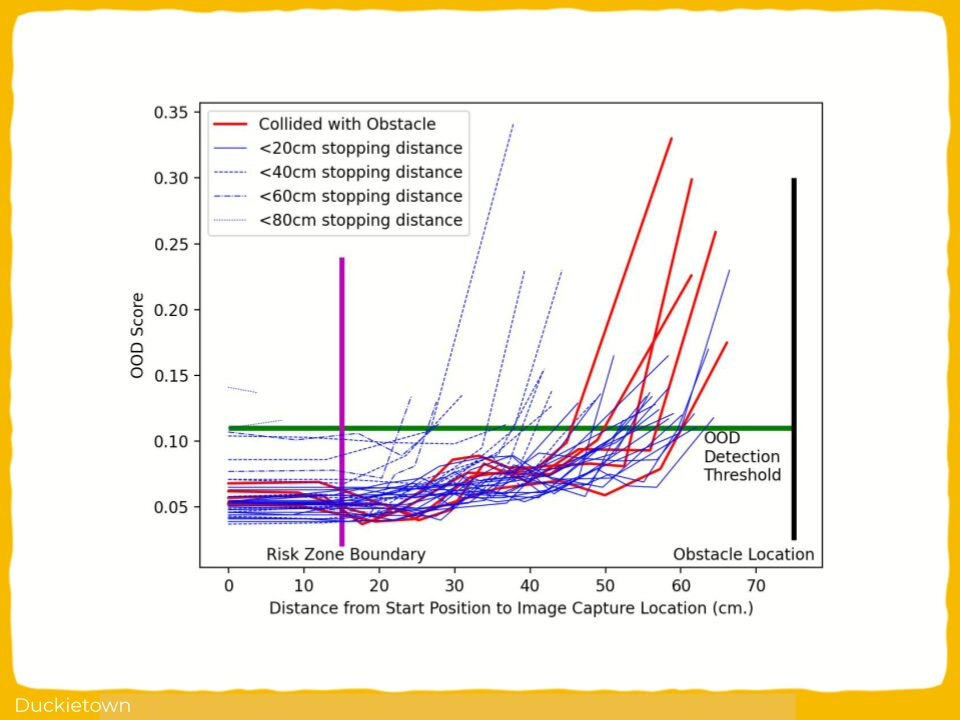

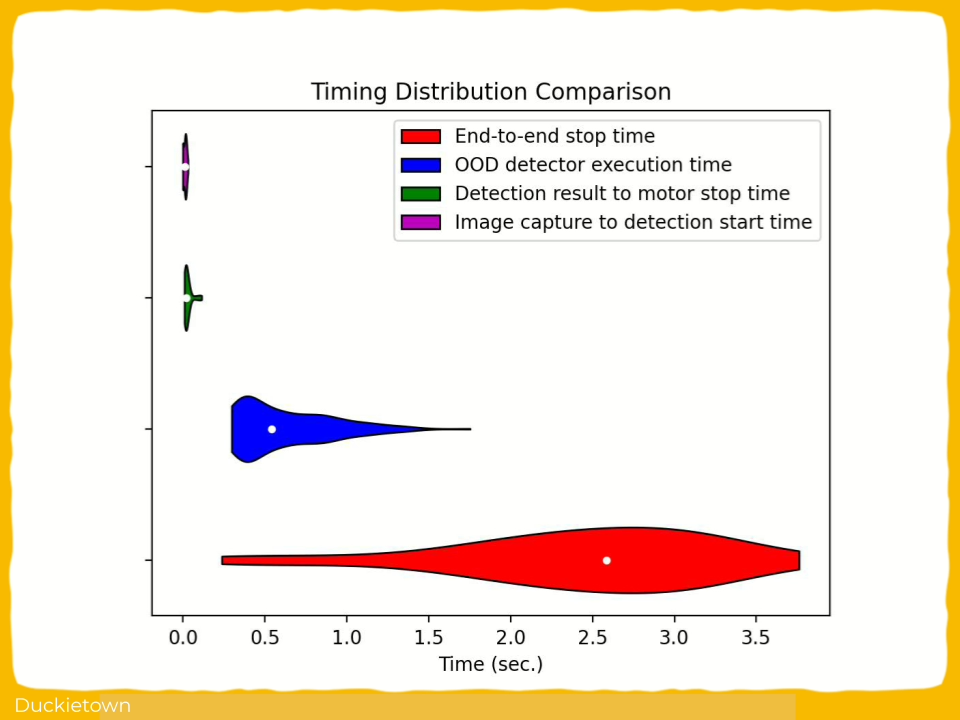

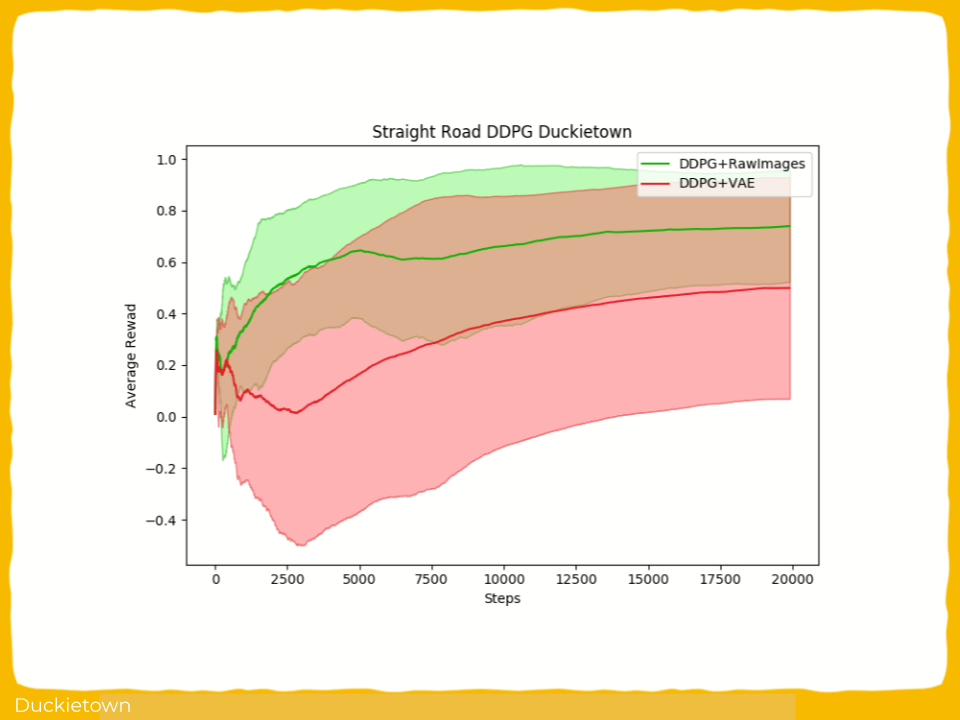

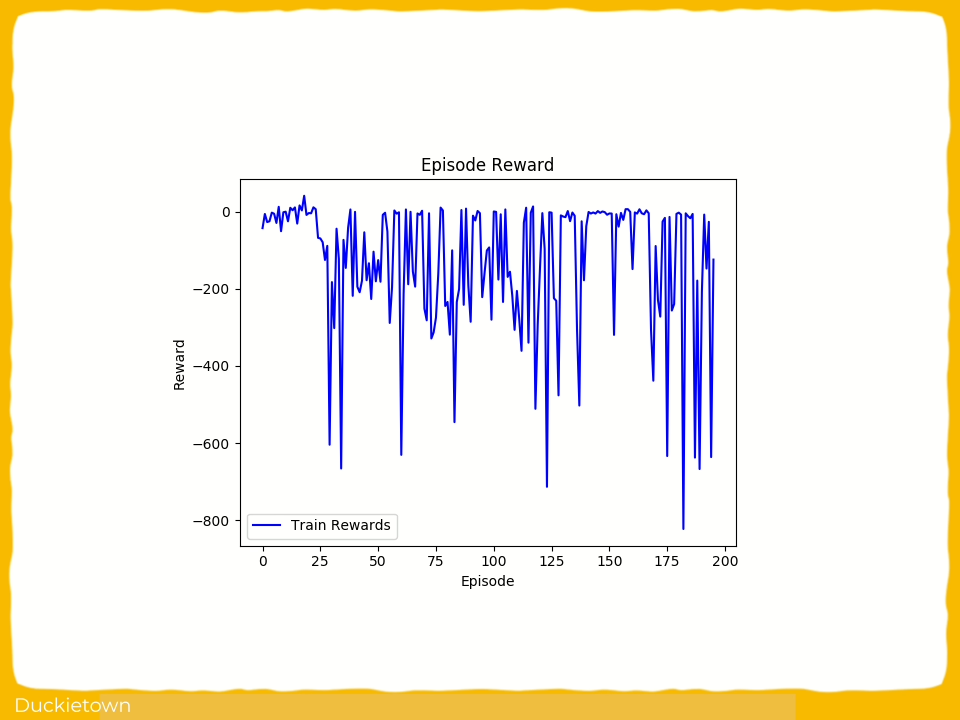

Results show that the DRL-based model successfully learns autonomous lane-following and navigation behaviors in simulation. A performance comparison with real-world experiments is also provided.

Highlights - Deep Reinforcement Learning for Agent-Based Autonomous Robot

Here is a visual tour of the authors' work. For all the details, check out the full paper.

Abstract

In the authors’ words:

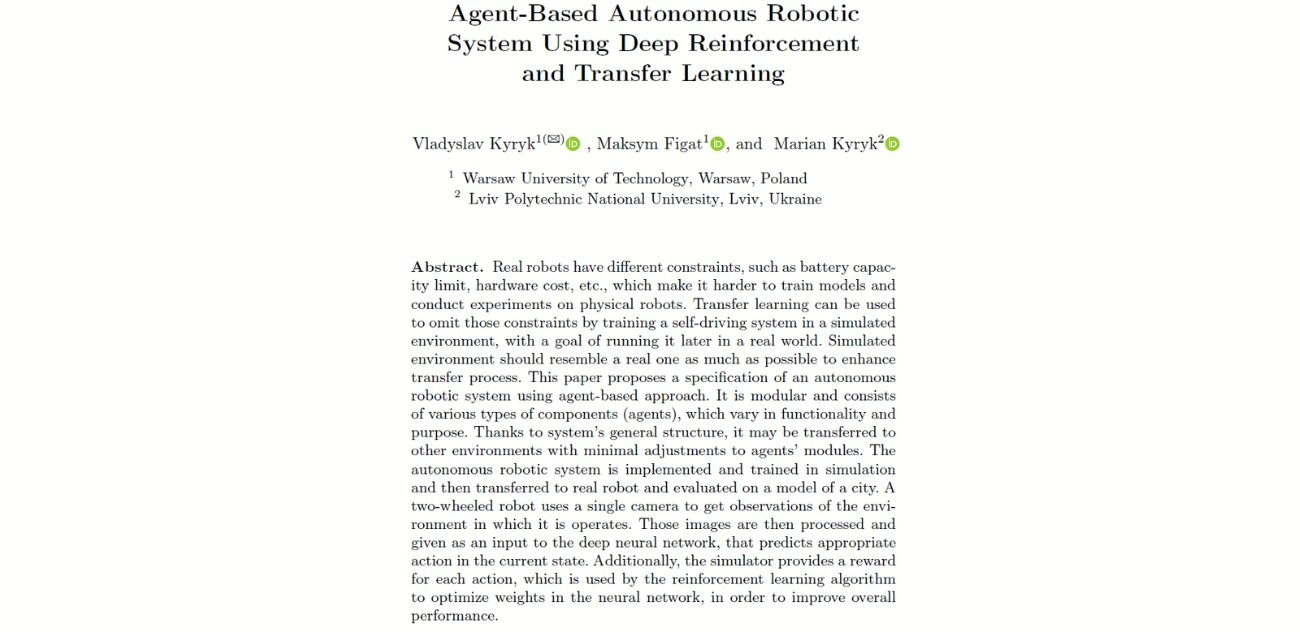

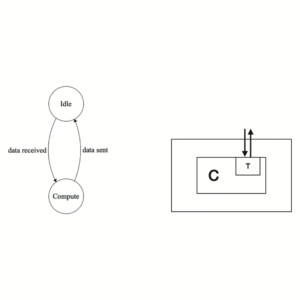

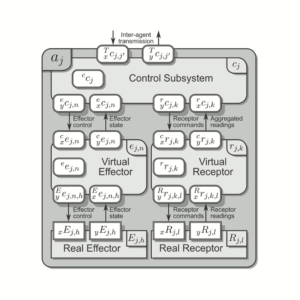

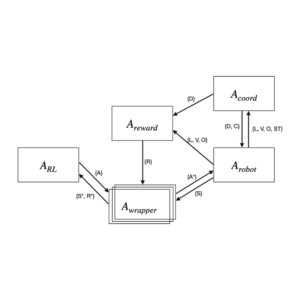

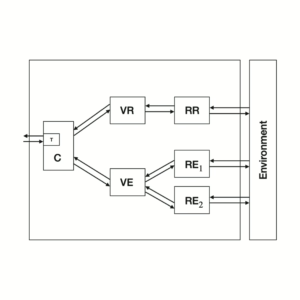

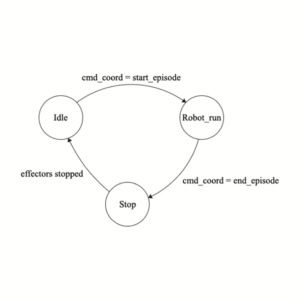

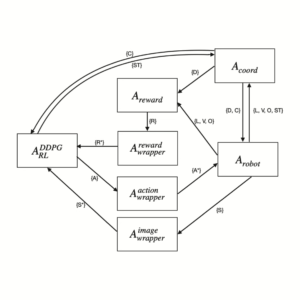

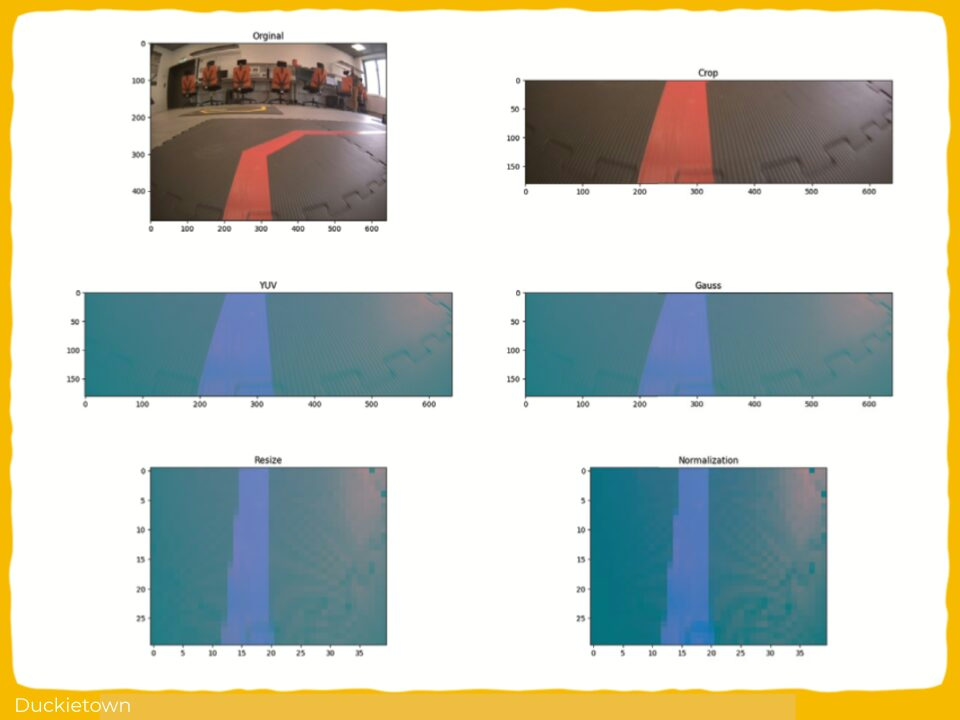

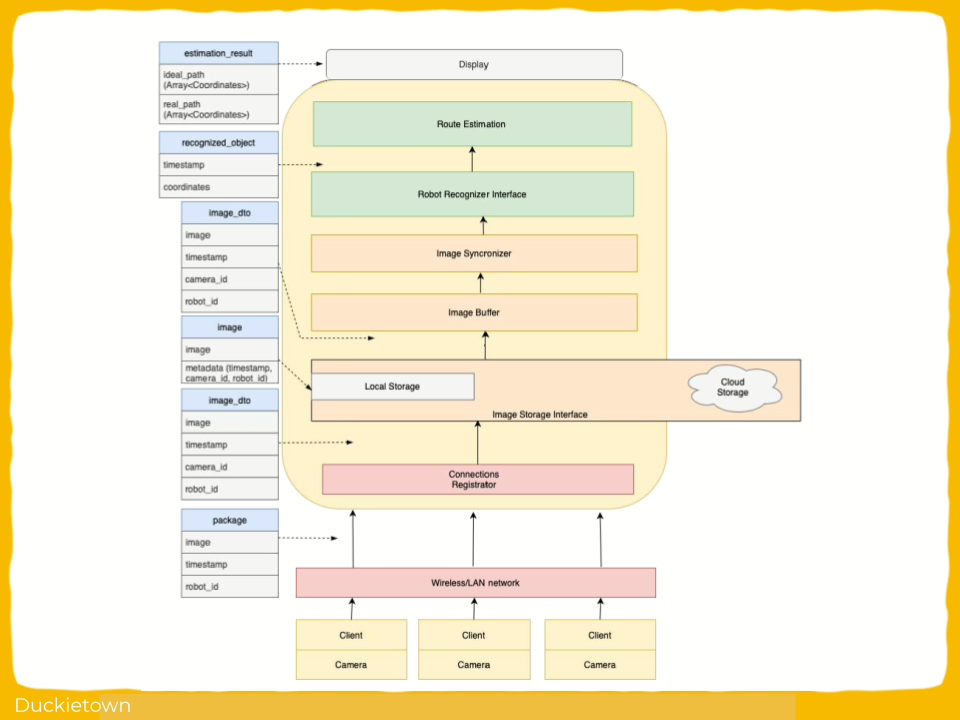

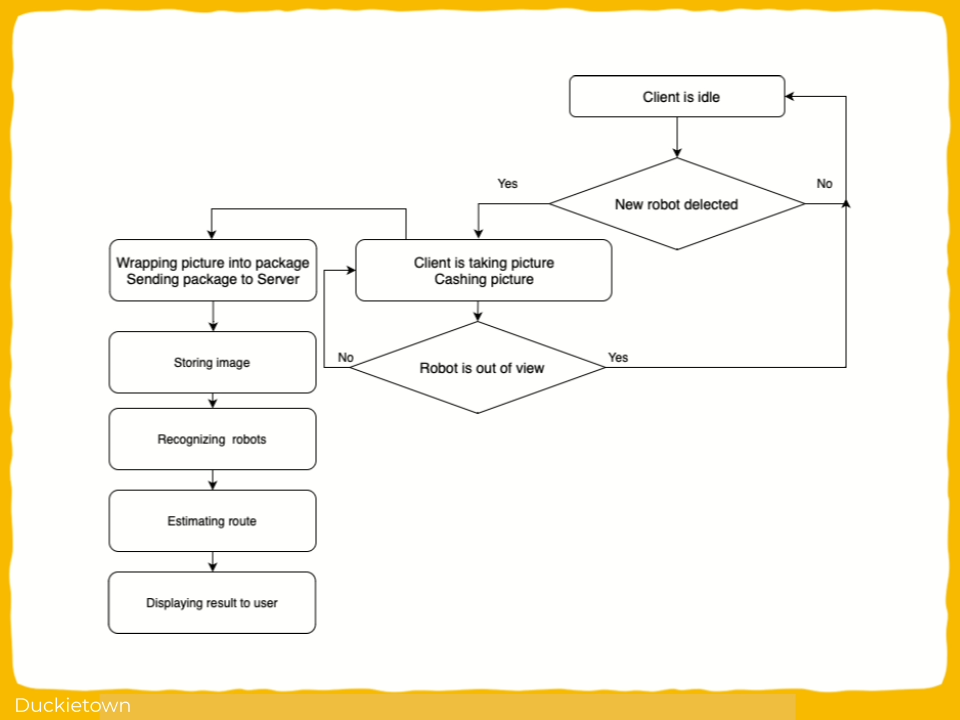

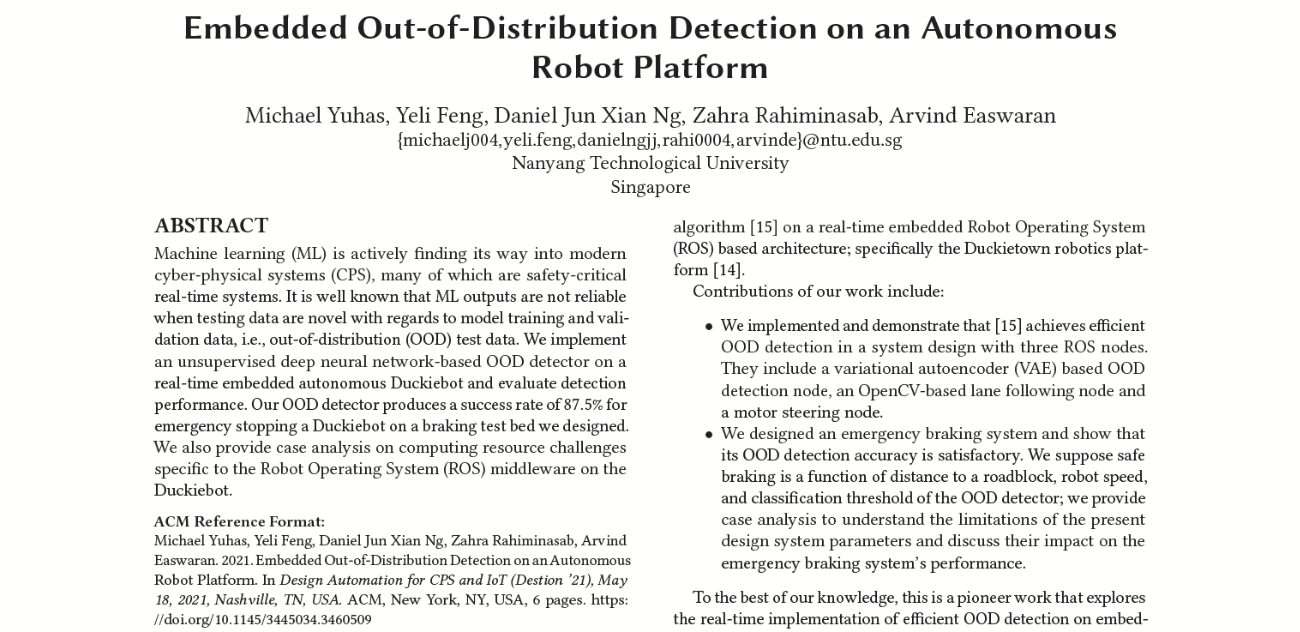

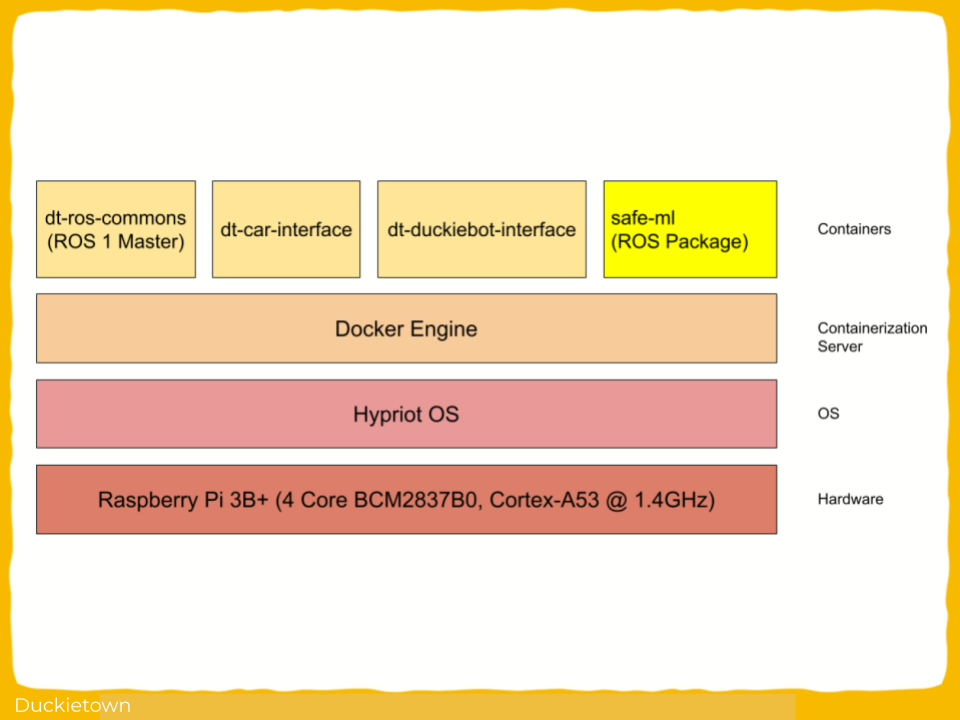

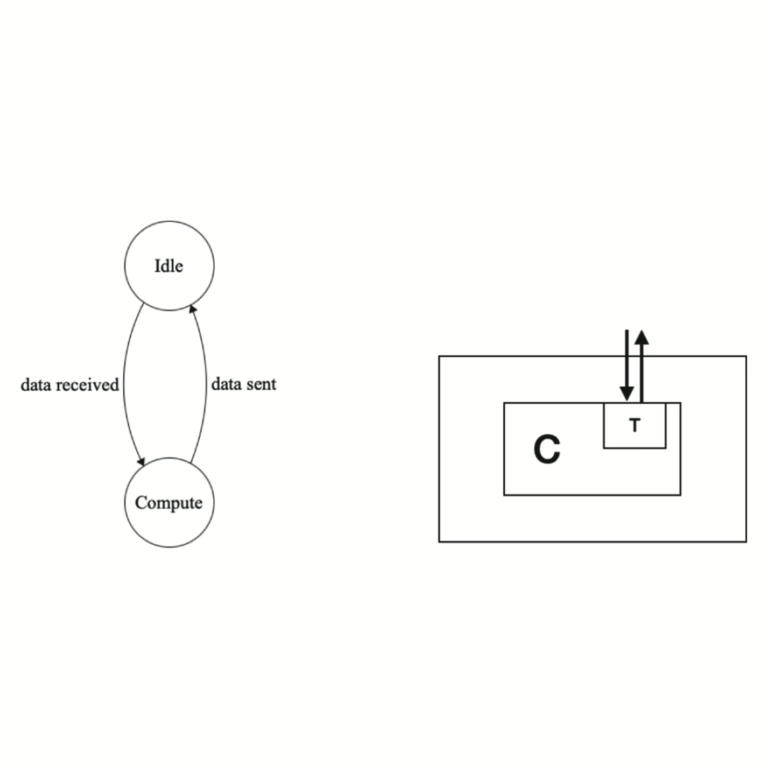

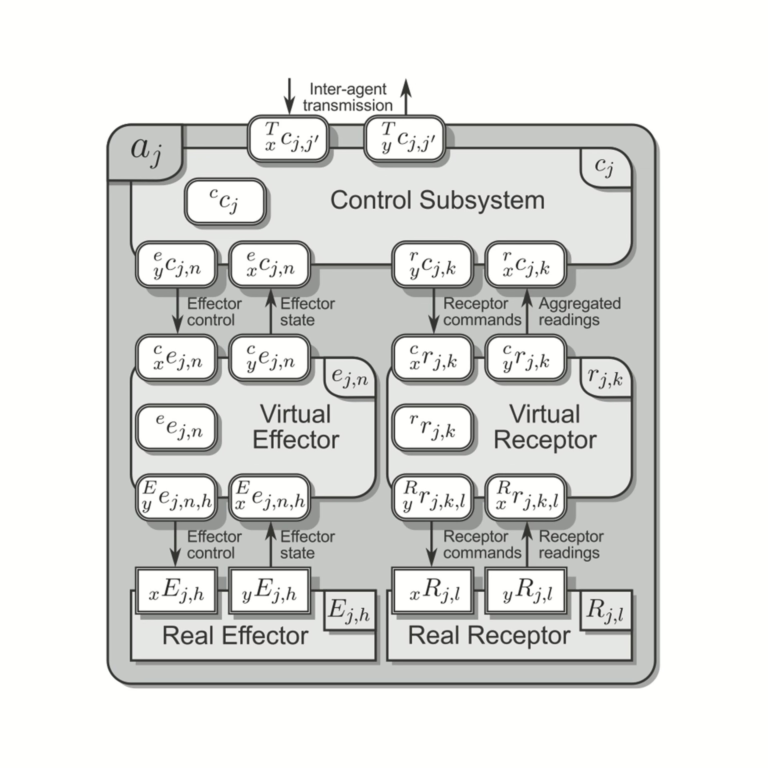

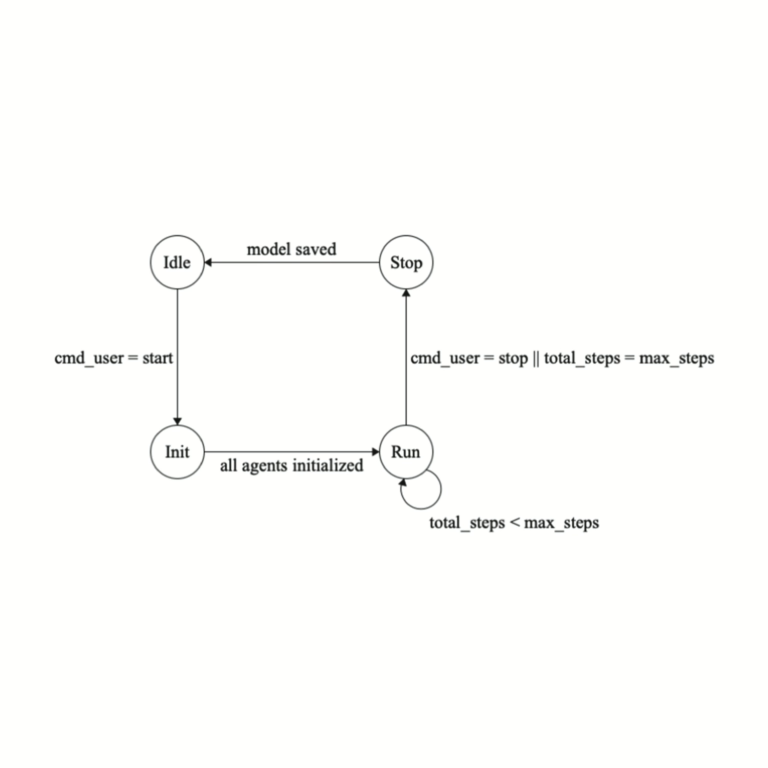

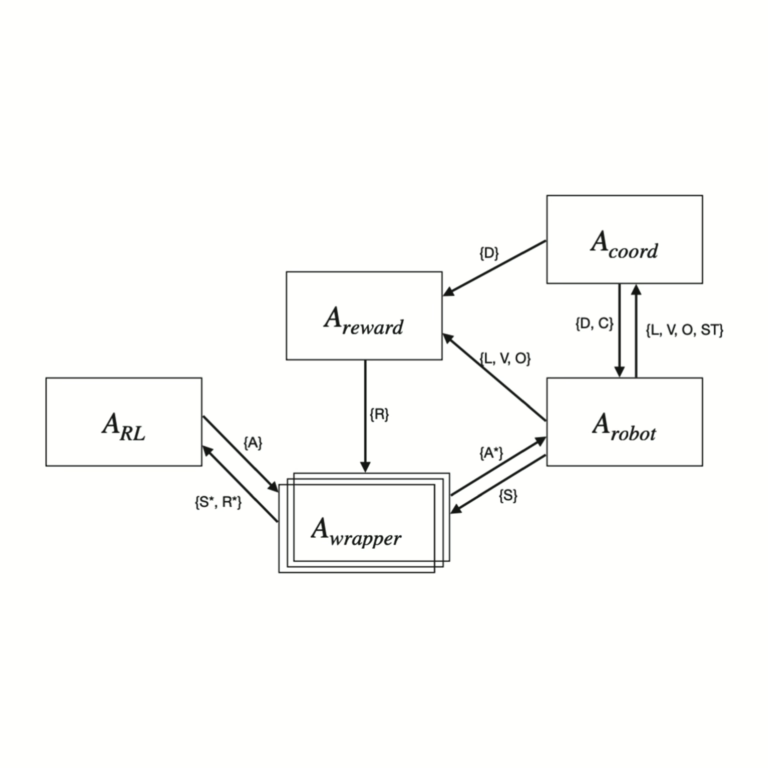

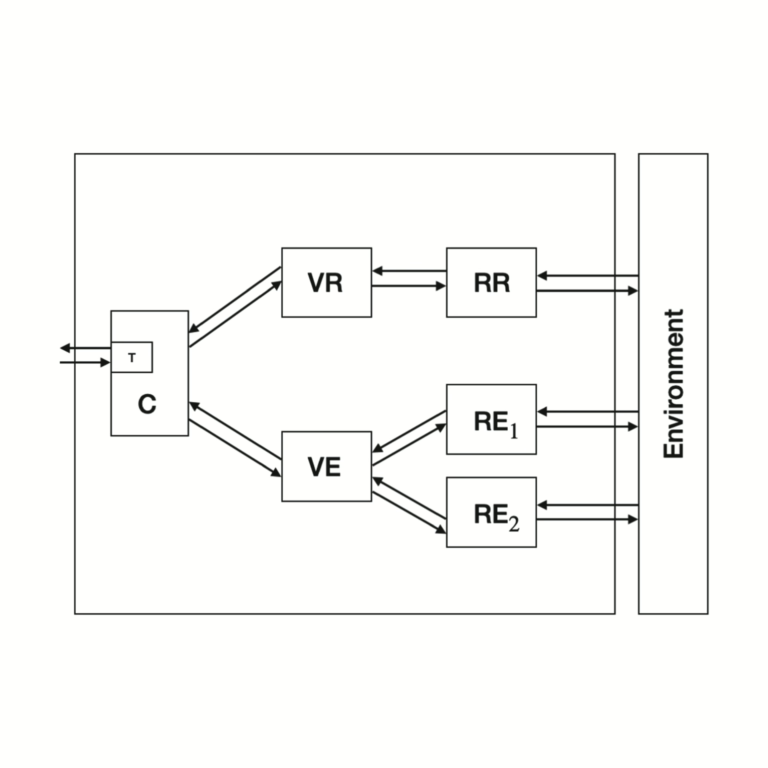

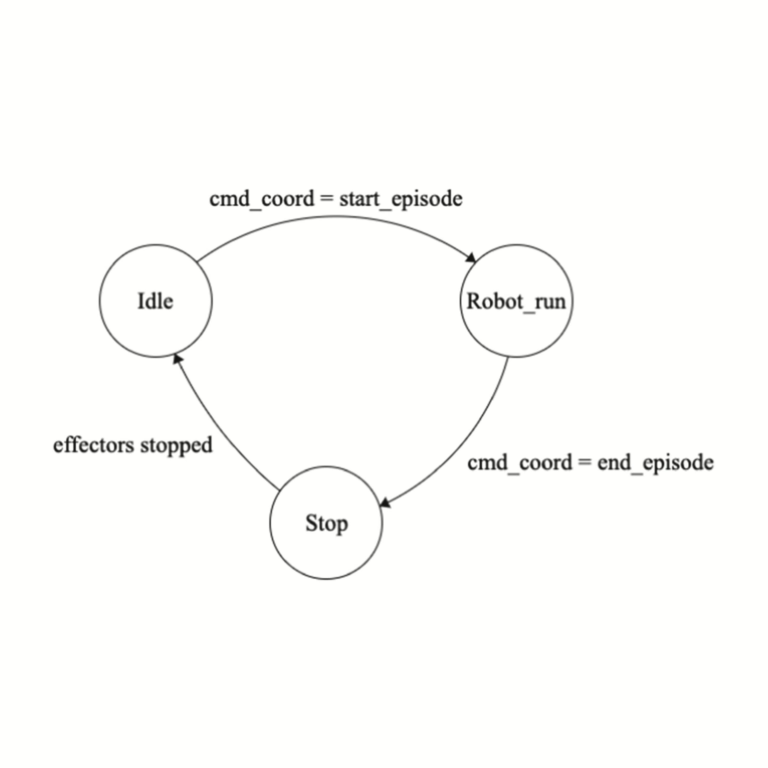

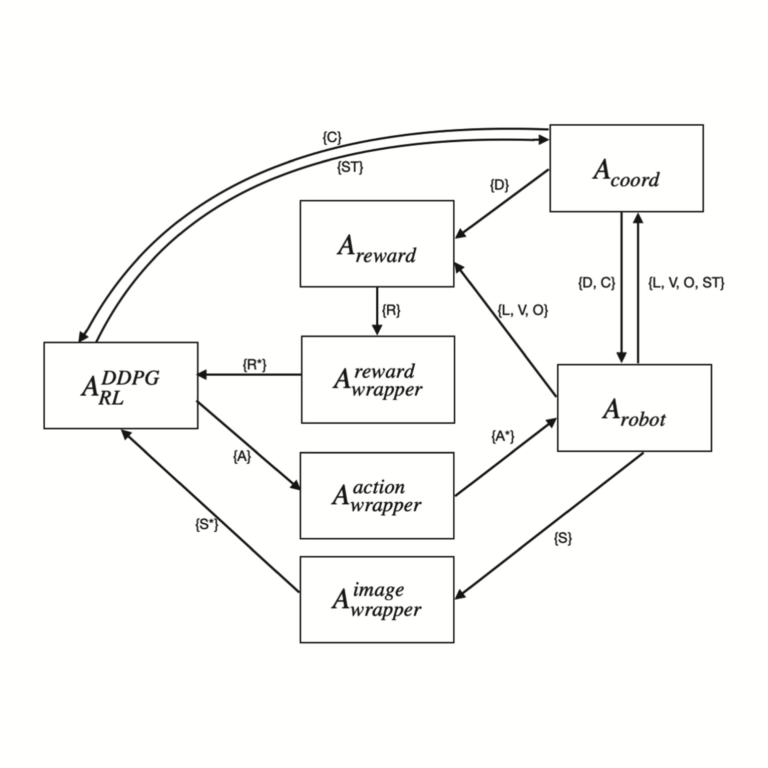

Real robots have different constraints, such as battery capacity limit, hardware cost, etc., which make it harder to train models and conduct experiments on physical robots. Transfer learning can be used to omit those constraints by training a self-driving system in a simulated environment, with a goal of running it later in a real world. Simulated environment should resemble a real one as much as possible to enhance transfer process. This paper proposes a specification of an autonomous robotic system using agent-based approach. It is modular and consists of various types of components (agents), which vary in functionality and purpose.

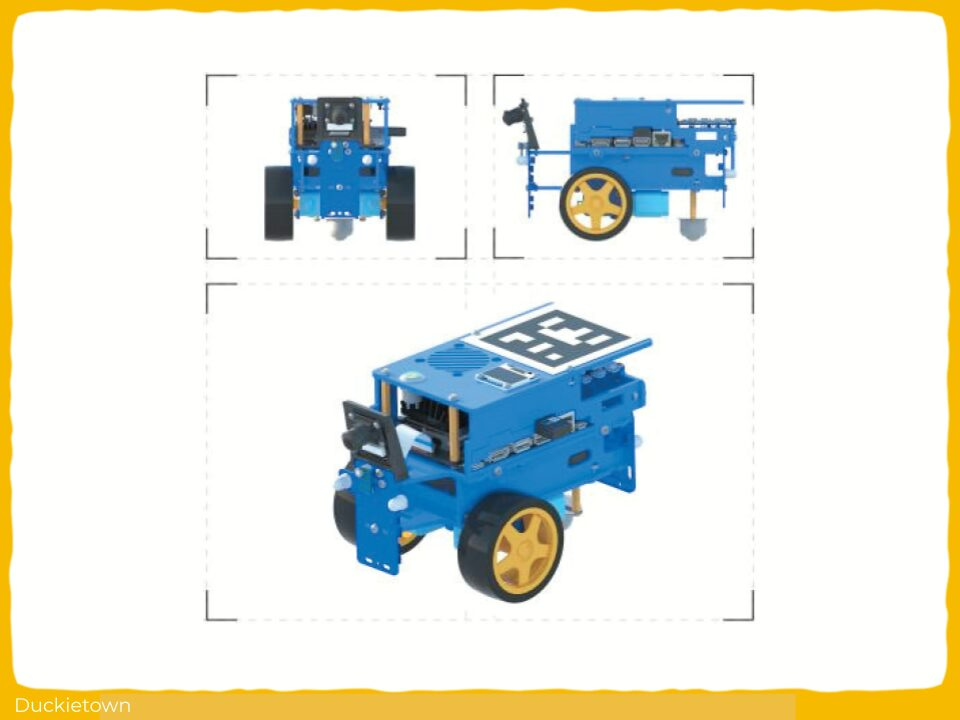

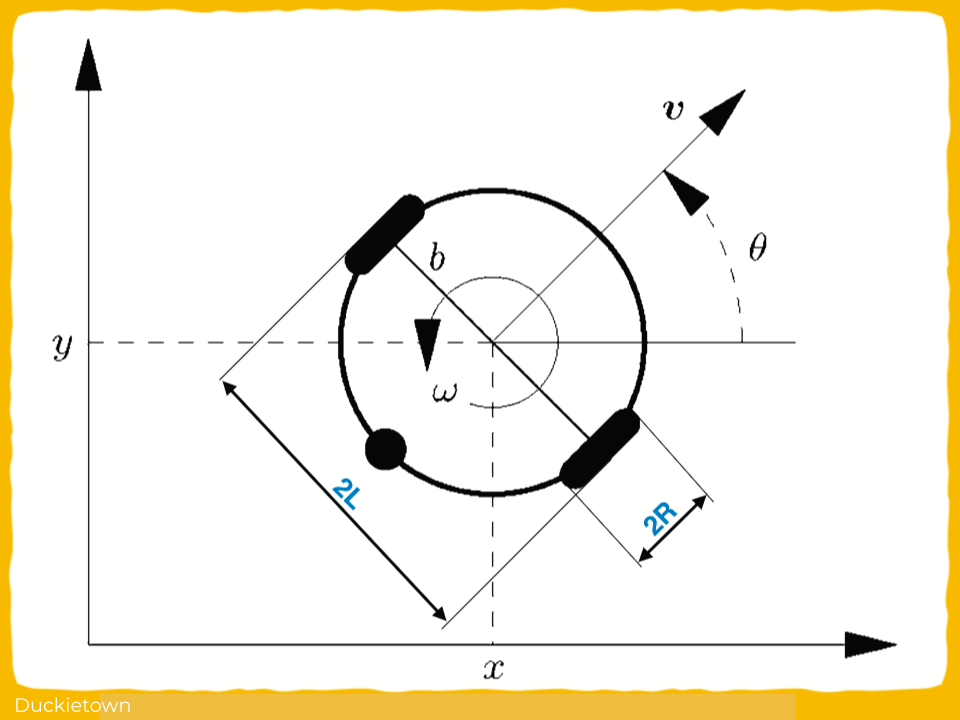

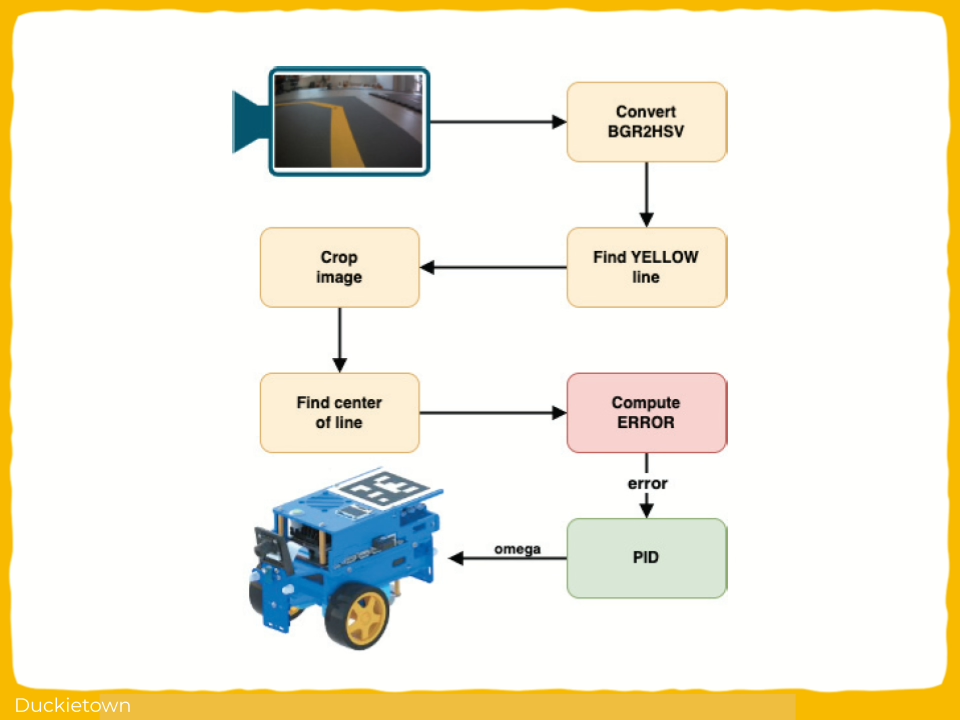

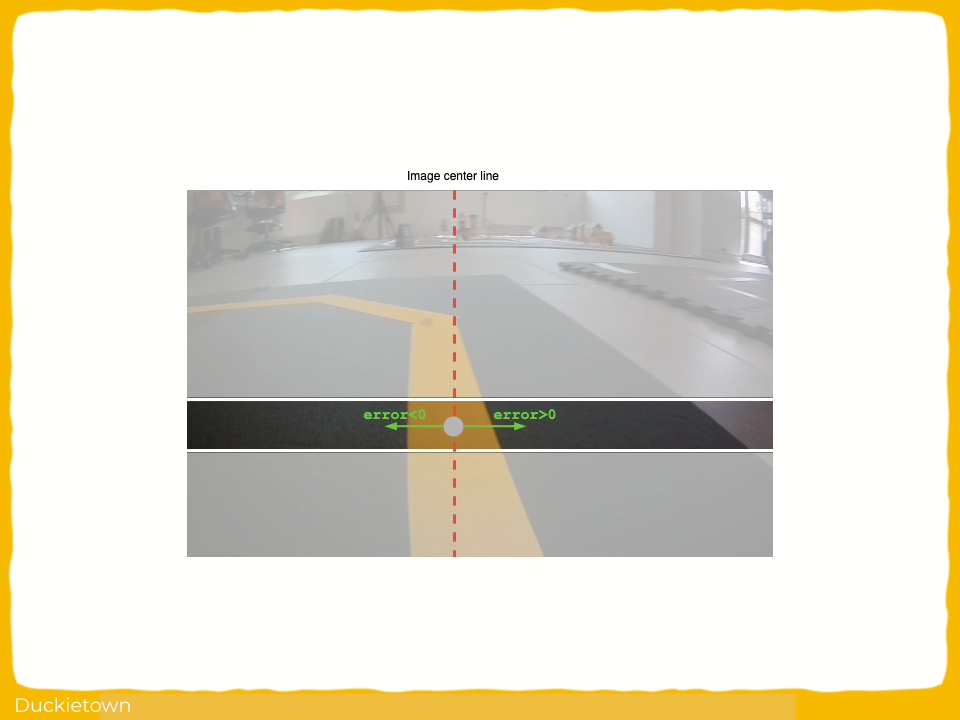

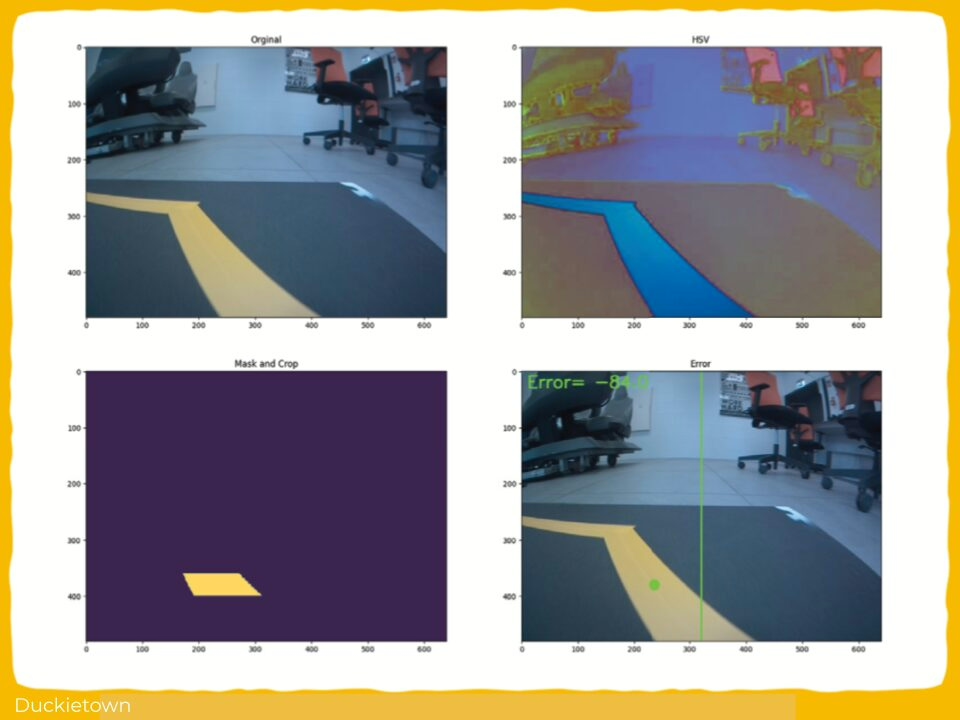

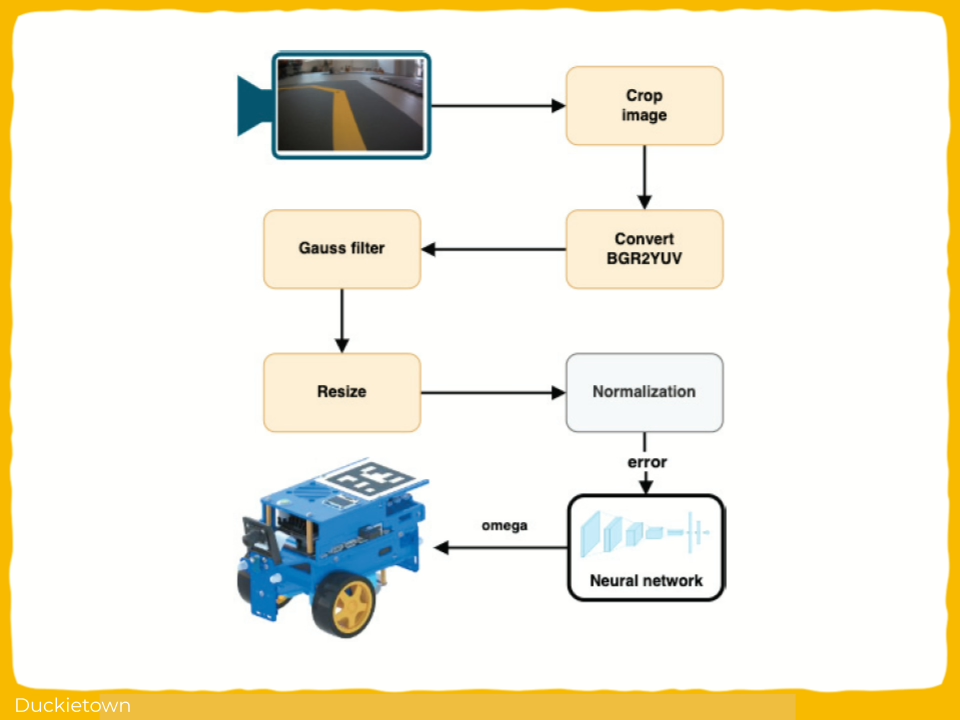

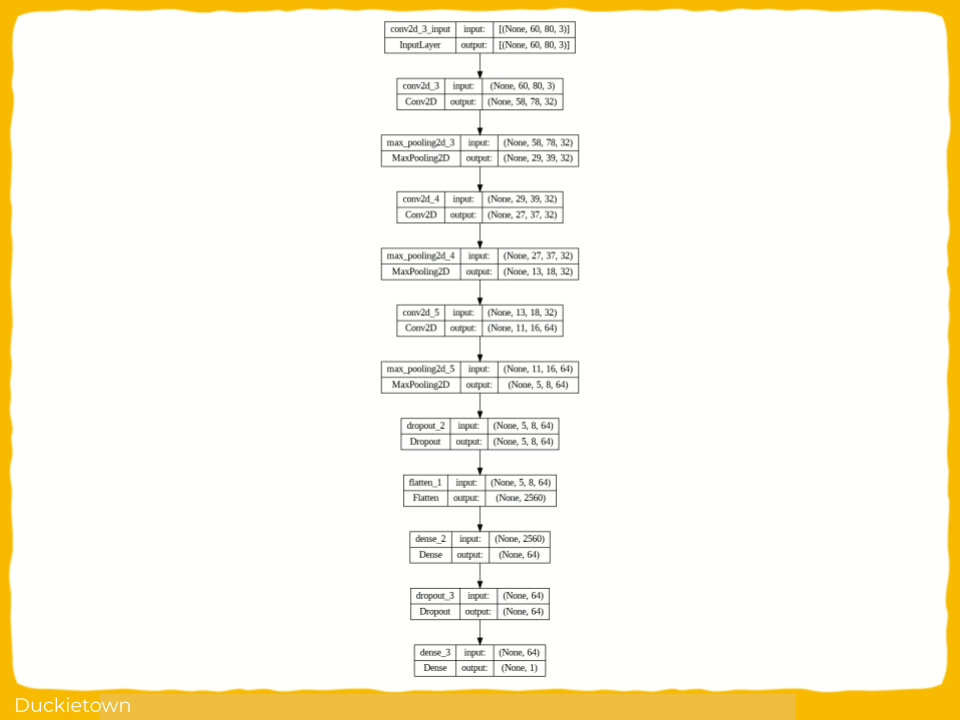

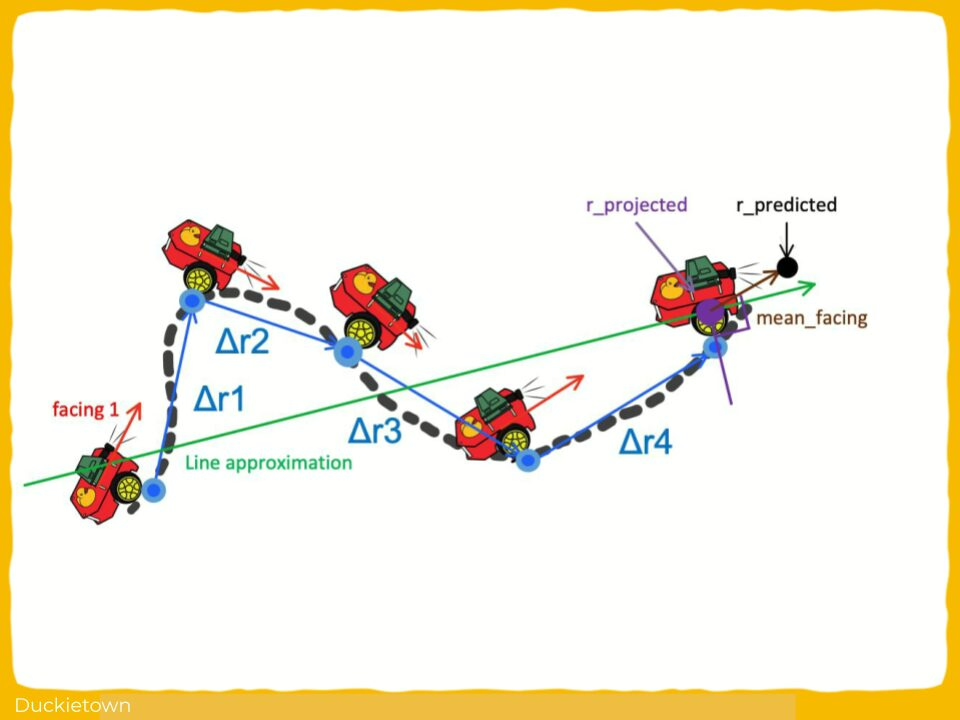

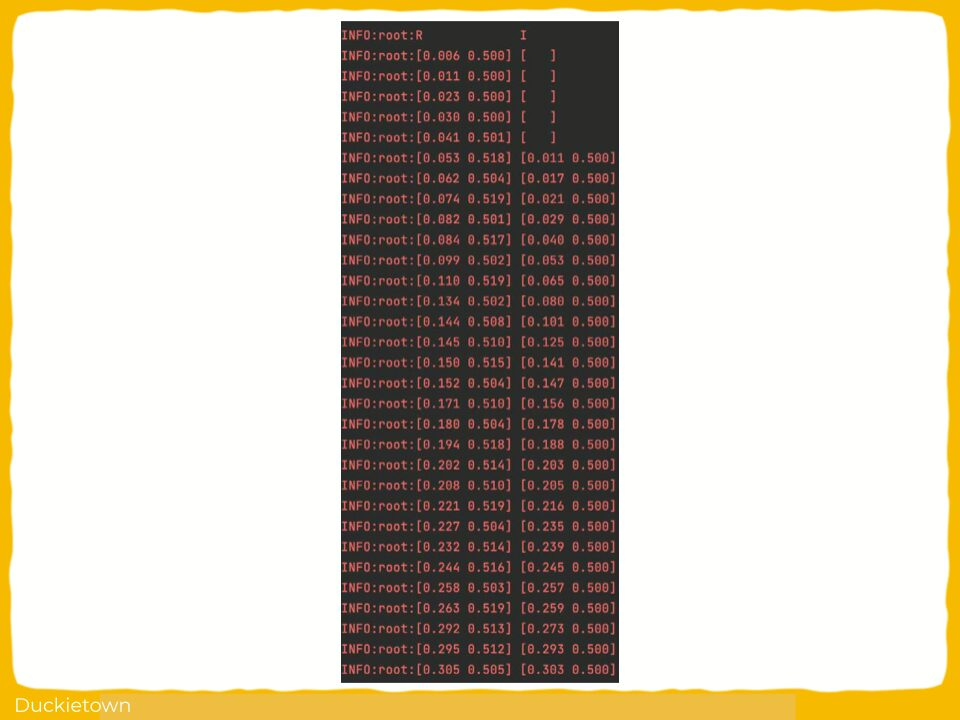

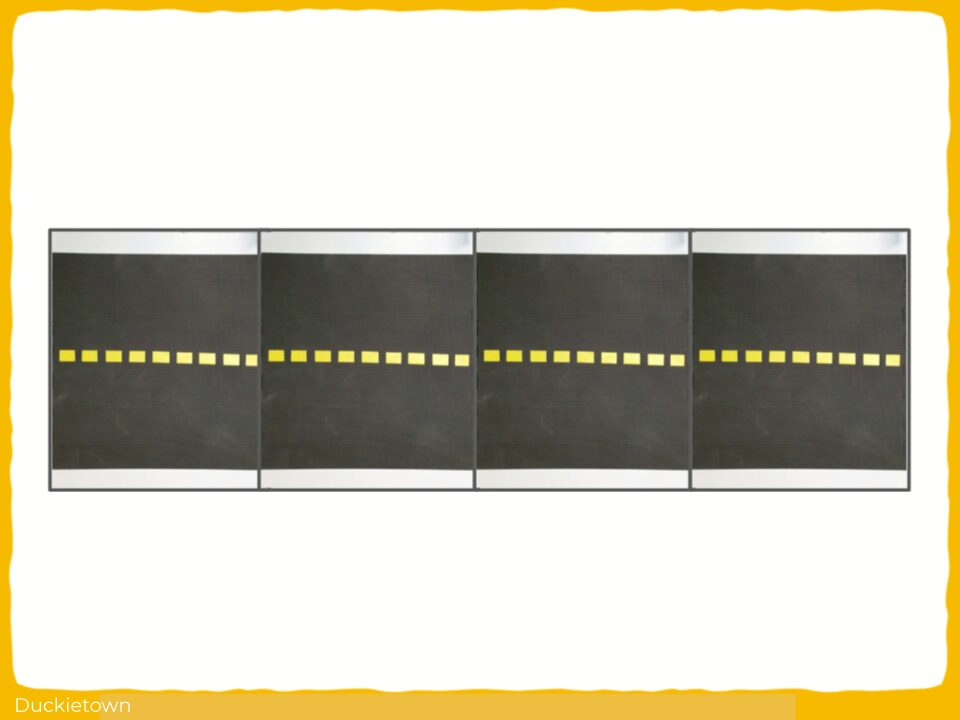

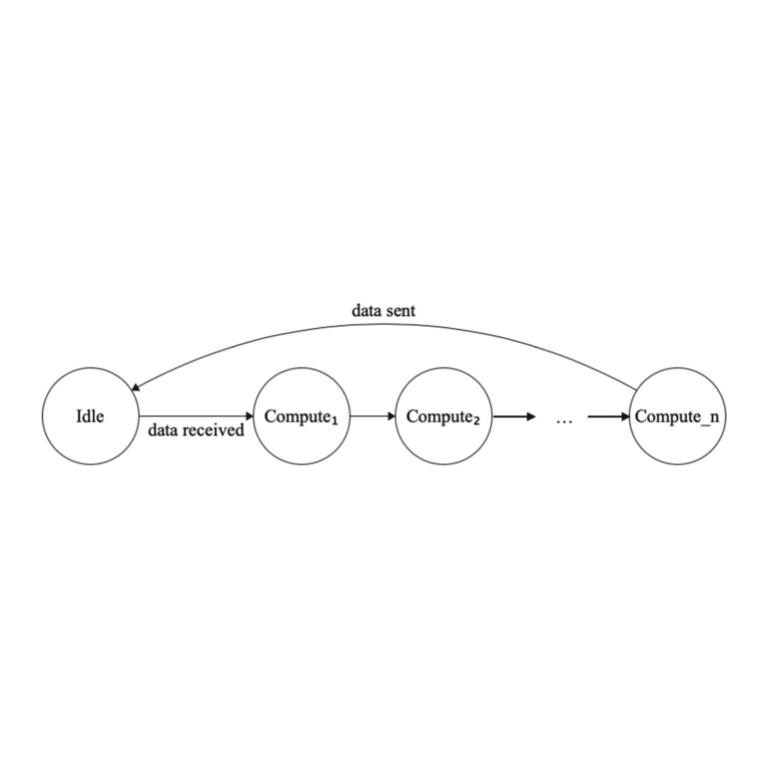

Thanks to system’s general structure, it may be transferred to other environments with minimal adjustments to agents’ modules. The autonomous robotic system is implemented and trained in simulation and then transferred to real robot and evaluated on a model of a city. A two-wheeled robot uses a single camera to get observations of the environment in which it operates. Those images are then processed and given as an input to the deep neural network, that predicts appropriate action in the current state. Additionally, the simulator provides a reward for each action, which is used by the reinforcement learning algorithm to optimize weights in the neural network, in order to improve overall performance.
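The abstract does not say which processing steps are applied to the camera images. A minimal sketch of one common choice, assuming grayscale conversion, downsampling, and scaling to the range [0, 1], could look like this. The function name, the `stride` parameter, and the specific steps are hypothetical and are not the authors' pipeline.

```python
def preprocess_frame(frame, stride=2):
    """Turn an RGB camera frame into a small normalized grayscale image.

    frame: list of rows, each row a list of (r, g, b) tuples with values 0-255.
    stride: keep every stride-th pixel in both directions (assumed value).
    Returns a list of rows of floats in [0, 1].
    """
    processed = []
    for row in frame[::stride]:
        processed.append([
            # Standard luma weights for RGB-to-grayscale conversion.
            (0.299 * r + 0.587 * g + 0.114 * b) / 255.0
            for (r, g, b) in row[::stride]
        ])
    return processed
```

Shrinking and normalizing the input this way reduces the size of the network's first layer and keeps input values in a range that is easier to optimize over.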

Conclusion - Deep Reinforcement Learning for Agent-Based Autonomous Robot

Here are the conclusions from the authors of this paper:

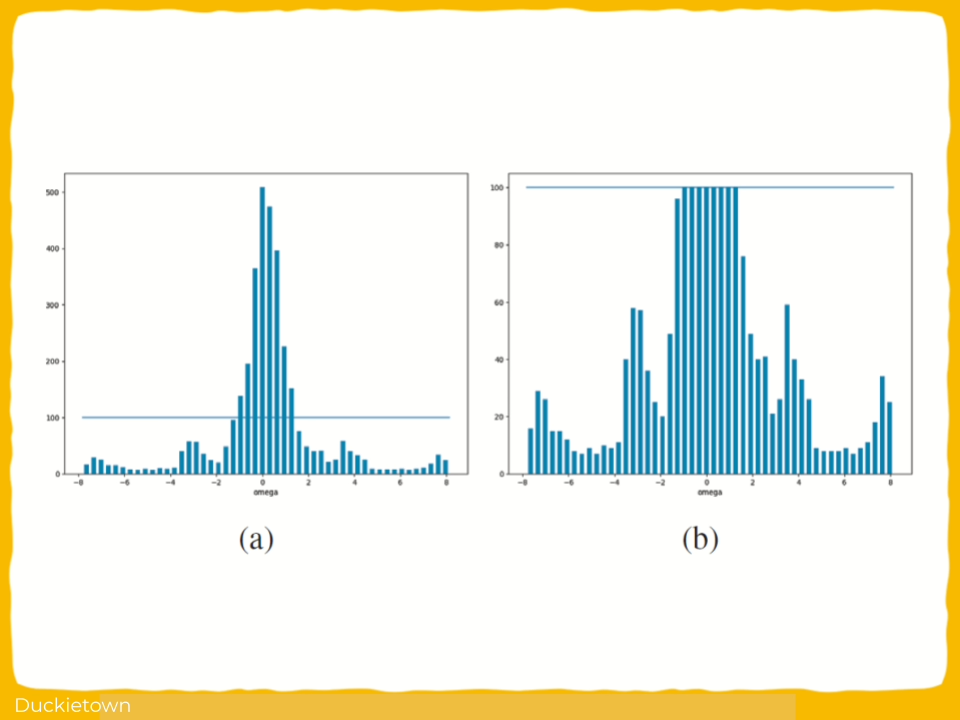

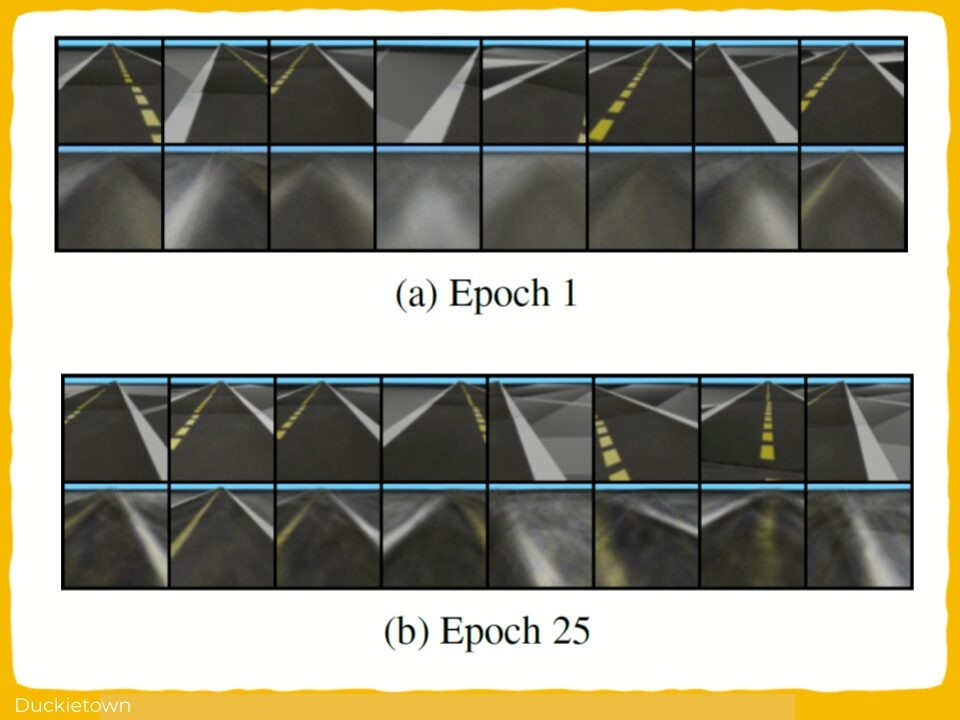

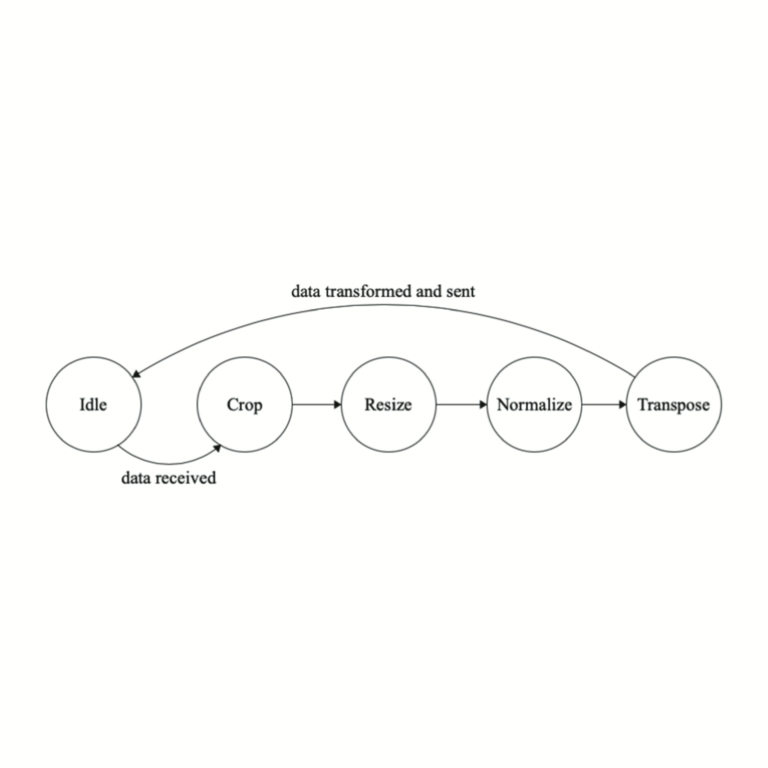

“After several breakthroughs in the field of Deep Reinforcement Learning, it became one of the most popular researched topics in Machine Learning and a common approach to the problem of autonomous driving. This paper presents the process of training an autonomous robotic system using popular actor-critic algorithm in the simulator, which may then also be run on real robot. It was possible to train an agent in real-time using trial-and-error approach without the need to collect vast amounts of labeled data. The neural network learned how to control the robot and how to follow the lanes, without any explicit guidelines. Only a few functions have been used to transform the data sent between environment and the agent, in order to make the learning process smoother and faster.

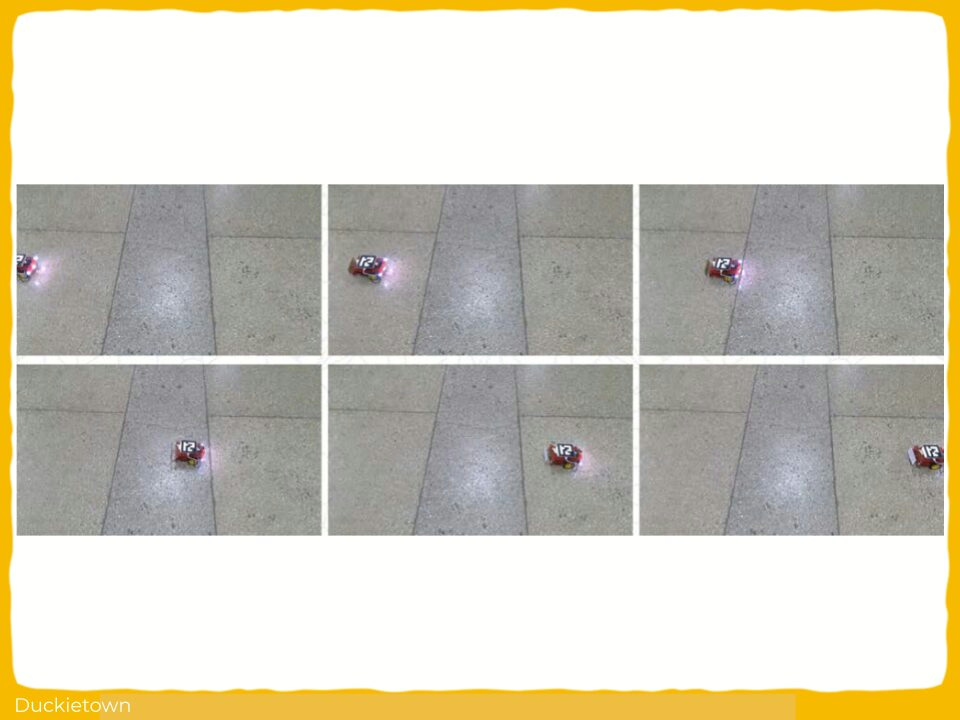

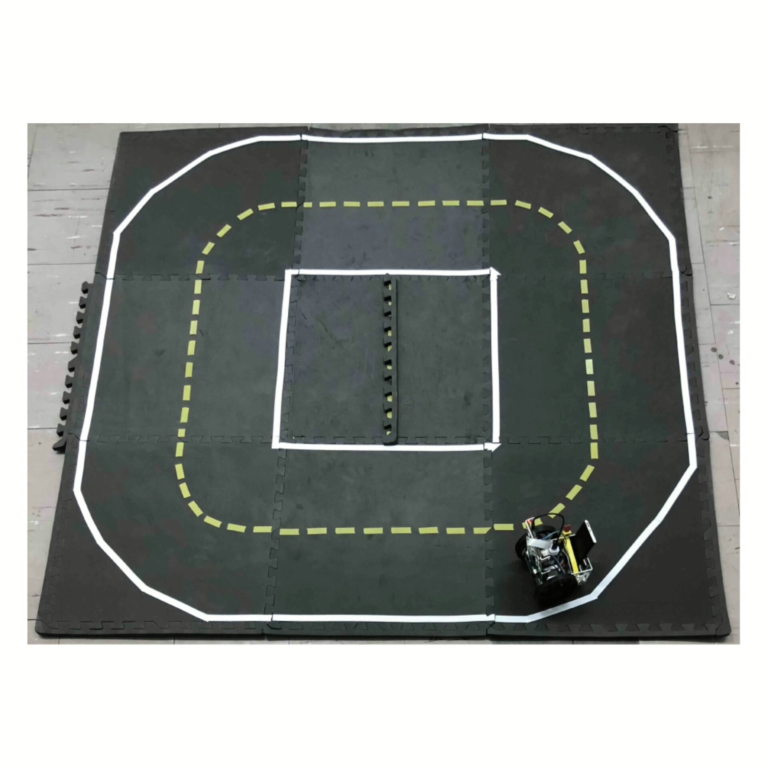

For evaluation purposes, a real robot and a small city model have been built, based on the Duckietown platform specification. This hardware has been used to evaluate in the real world the performance of the system, trained in simulator. Also, additional Transfer Learning techniques were used, in order to adjust the observations and actions in the real robot, due to the differences with simulated environment. Although, the performance in real environment was worse than in simulator, certain trained models were still able to guide the robot around a simple road loop, which shows a potential for such approach. As a result, the use of the simulator greatly reduced the time and effort needed to train the system, and transfer methods were used to deploy it in the real world.

The Duckietown platform provides a baseline, which was modified and refactored to follow the system structure. The simulator and its components are thoroughly documented, the detailed instructions explain how to train and run the robot both in simulation and in real world and evaluate the results. Duckietown provides complete sets of parts, necessary to build the robot and small city, however, it was decided to build custom robot, according to the guidelines. The robot uses a single camera to get observations of the surrounding environment.

The reinforcement learning algorithm was used to learn a policy, which tries to choose optimal actions based on those observations with the help of reward function, that provides a feedback for previous decisions. It was possible to significantly reduce the effort required to train a model, thanks to the simulator, as the process does not require constant human supervision and involvement. Such approach proves to be very promising, as the agent learned how to do the lane-following task without any explicit labels, and has shown good performance in the simulated environment. Although, there is still a room for improvement, when it comes to transferring the model to real world, which requires various adaptations and adjustments to be made for the robot to properly execute maneuvers and show stability in its actions.”
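The conclusions mention adjusting actions on the real robot to compensate for differences with the simulator, without giving details. One plausible form of such an adjustment for a two-wheeled robot is sketched below: a policy output of forward velocity and steering is converted to left and right wheel commands, scaled by a gain, corrected by a trim term for motor asymmetry, and clipped. The function name, parameters, and sign convention are assumptions for illustration, not the authors' method or a Duckietown API.

```python
def to_wheel_commands(velocity, steering, gain=1.0, trim=0.0, limit=1.0):
    """Map a (velocity, steering) action to (left, right) wheel commands.

    Assumed convention: positive steering turns left, so the right wheel
    spins faster. gain scales overall speed, trim offsets a left/right
    motor imbalance, and limit clips each command to [-limit, limit].
    """
    left = (gain - trim) * (velocity - steering)
    right = (gain + trim) * (velocity + steering)

    def clip(x):
        return max(-limit, min(limit, x))

    return clip(left), clip(right)
```

On a physical robot, `gain` and `trim` would typically be tuned by hand until the robot drives straight at a safe speed when given zero steering.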

Project Authors

Vladyslav Kyryk is currently working as a Data Scientist at Finitec, Warsaw, Poland.

Maksym Figat is working as an Assistant Professor at Warsaw University of Technology, Poland.

Maryan Kyryk is currently serving as the Co-Founder & CEO at Maxitech, Ukraine.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.