Autoduck: VLM-based Autonomous Navigation in Duckietown

Project Resources

- Objective: Develop an autonomous navigation system for Duckiebot DB21J in Duckietown using vision-based control and VLM integration.

- Approach: Implement calibrated perception, control, and FSM modules with ROS and integrate quantized Qwen 2.5 models on embedded hardware.

- Authors: Sahil Virani, Supratik Patel, Esmir Kico, Technical University of Munich

Dual-Mode Autonomous Navigation in Duckietown using VLM - the objectives

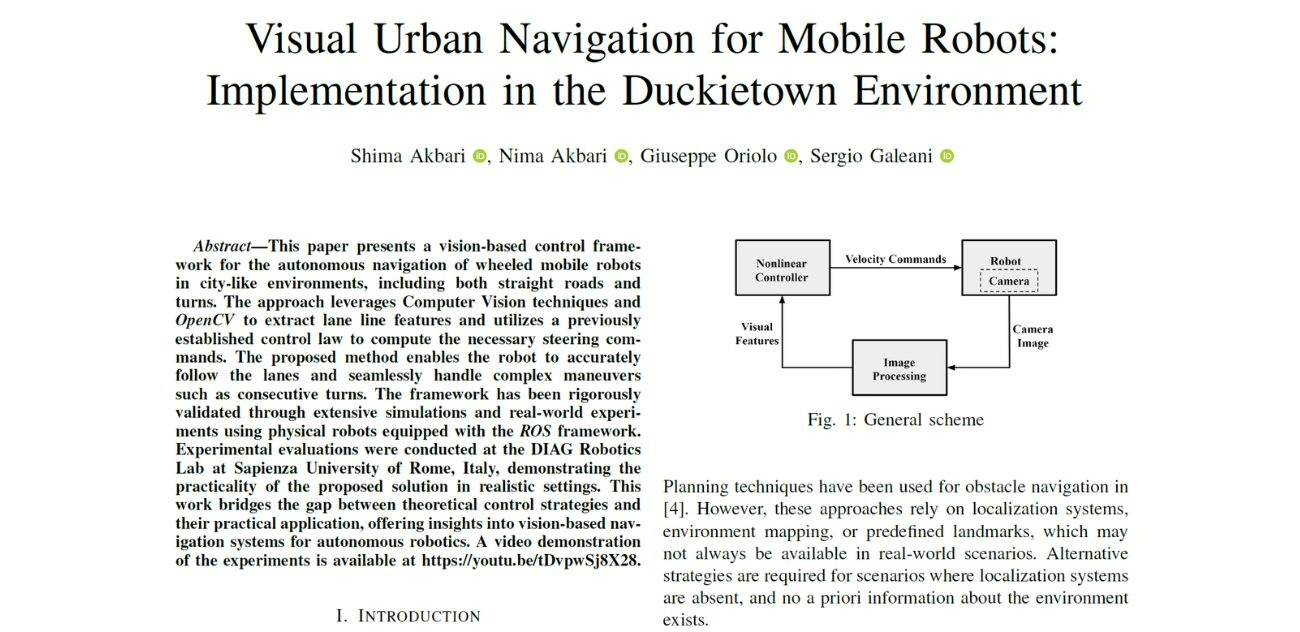

This project implements an autonomous navigation system on the Duckiebot DB21J platform within Duckietown, using a Vision Language Model (VLM) for vision-based control and decision-making.

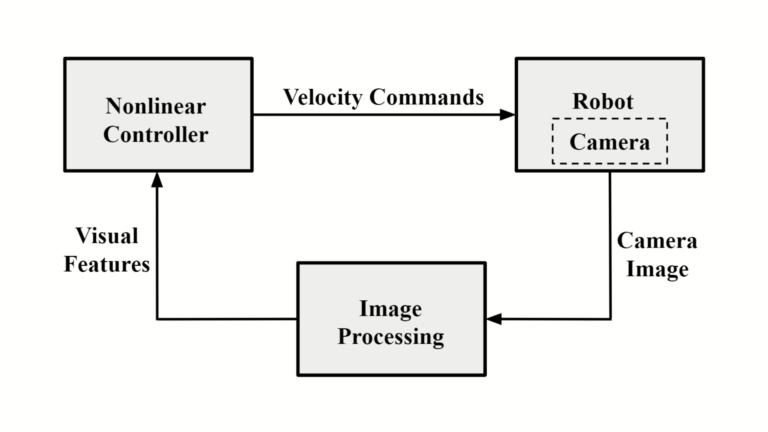

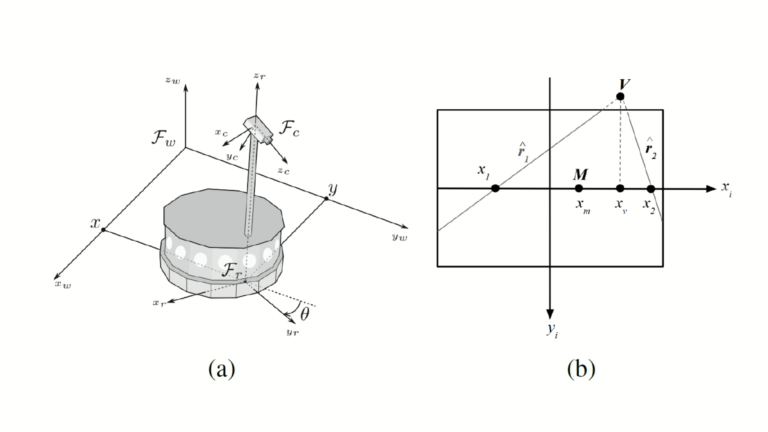

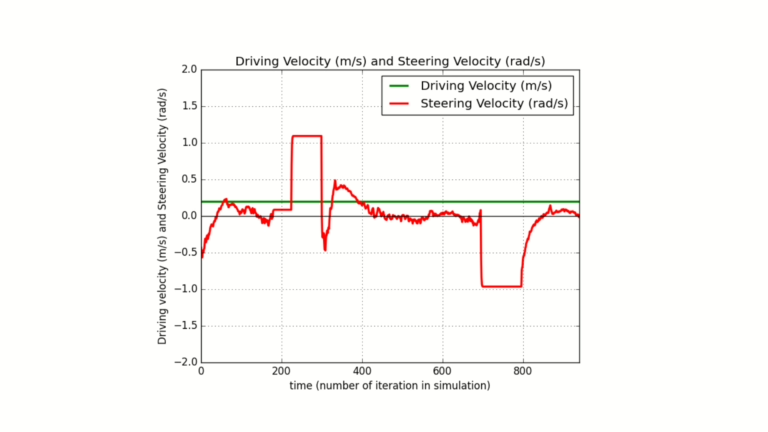

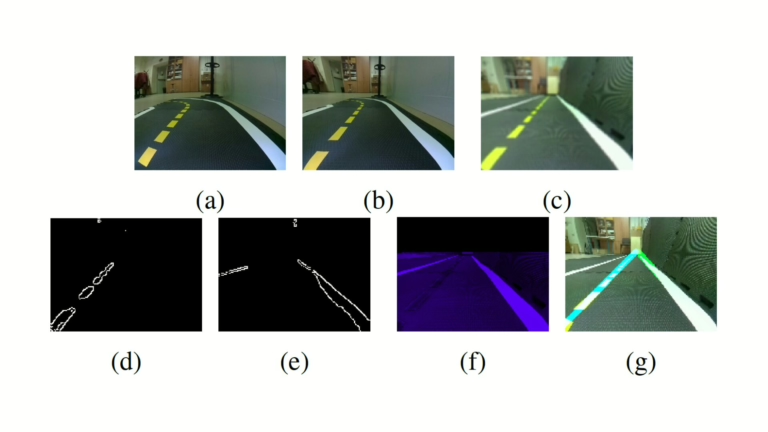

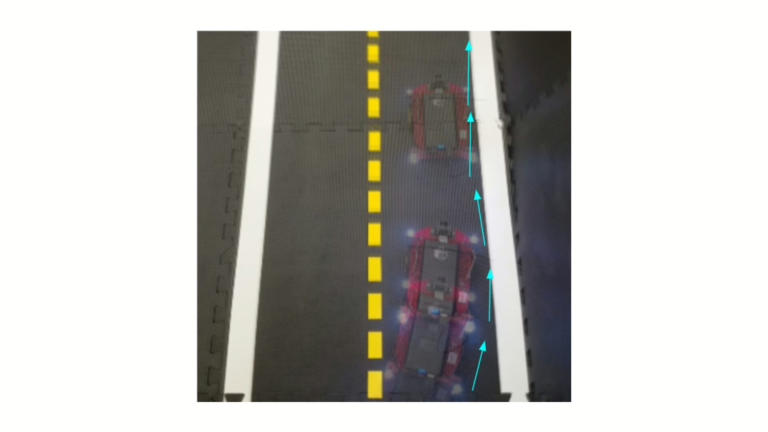

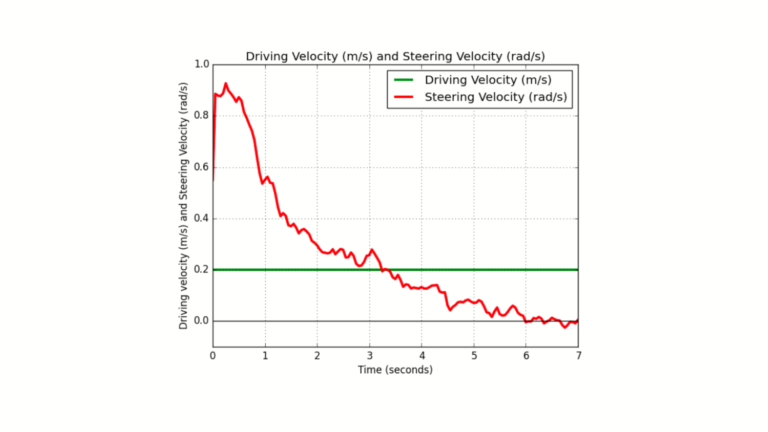

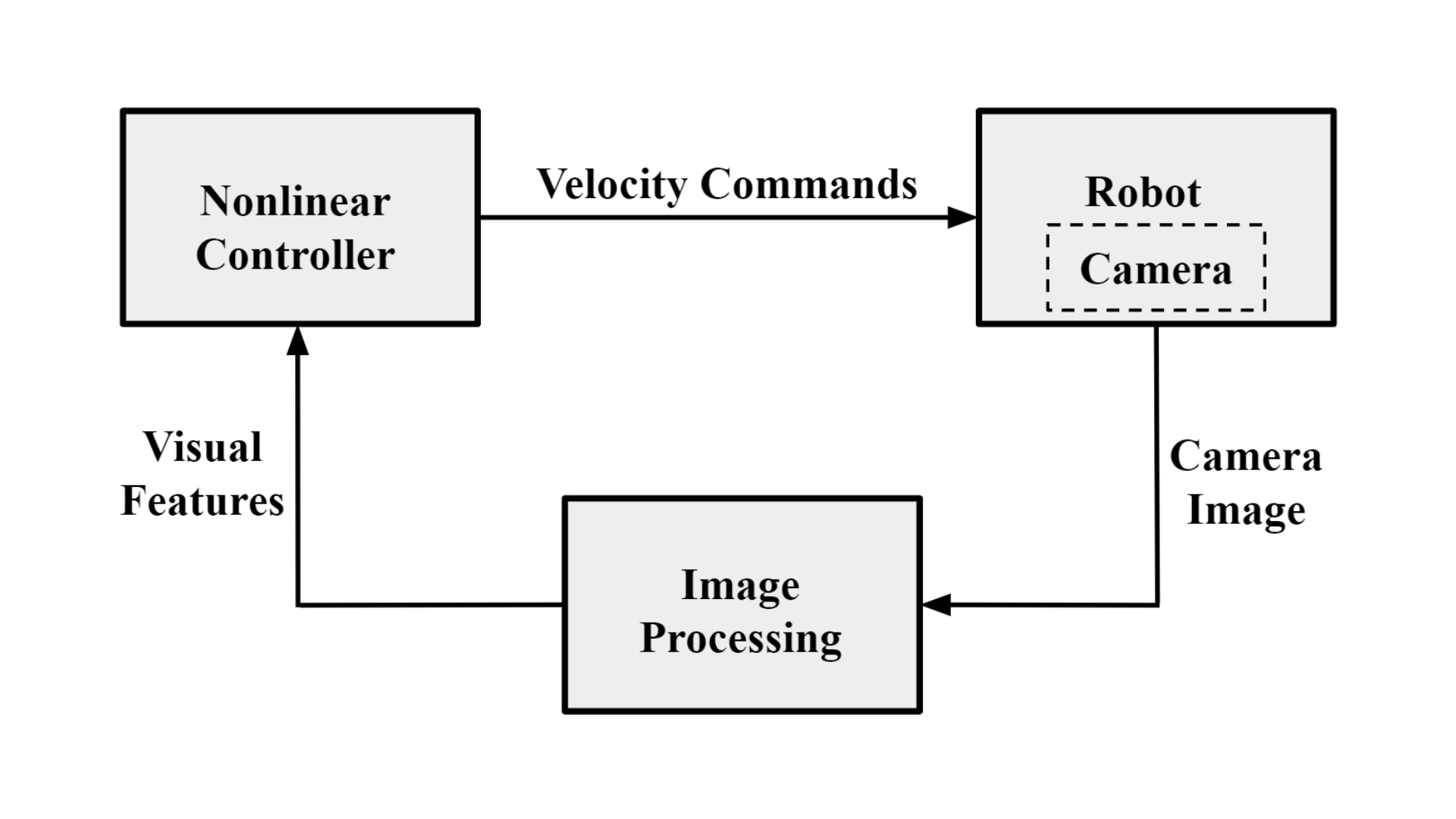

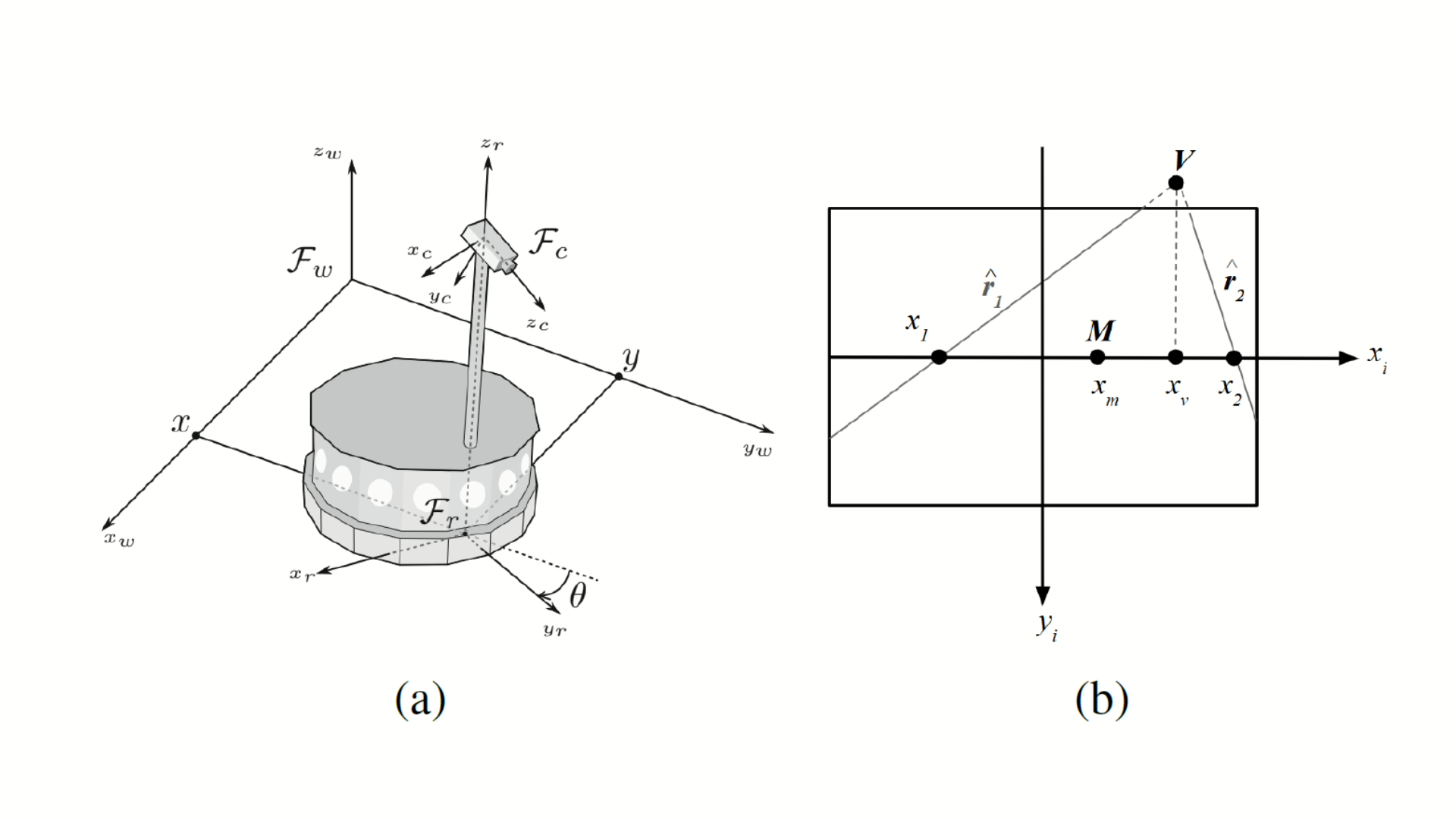

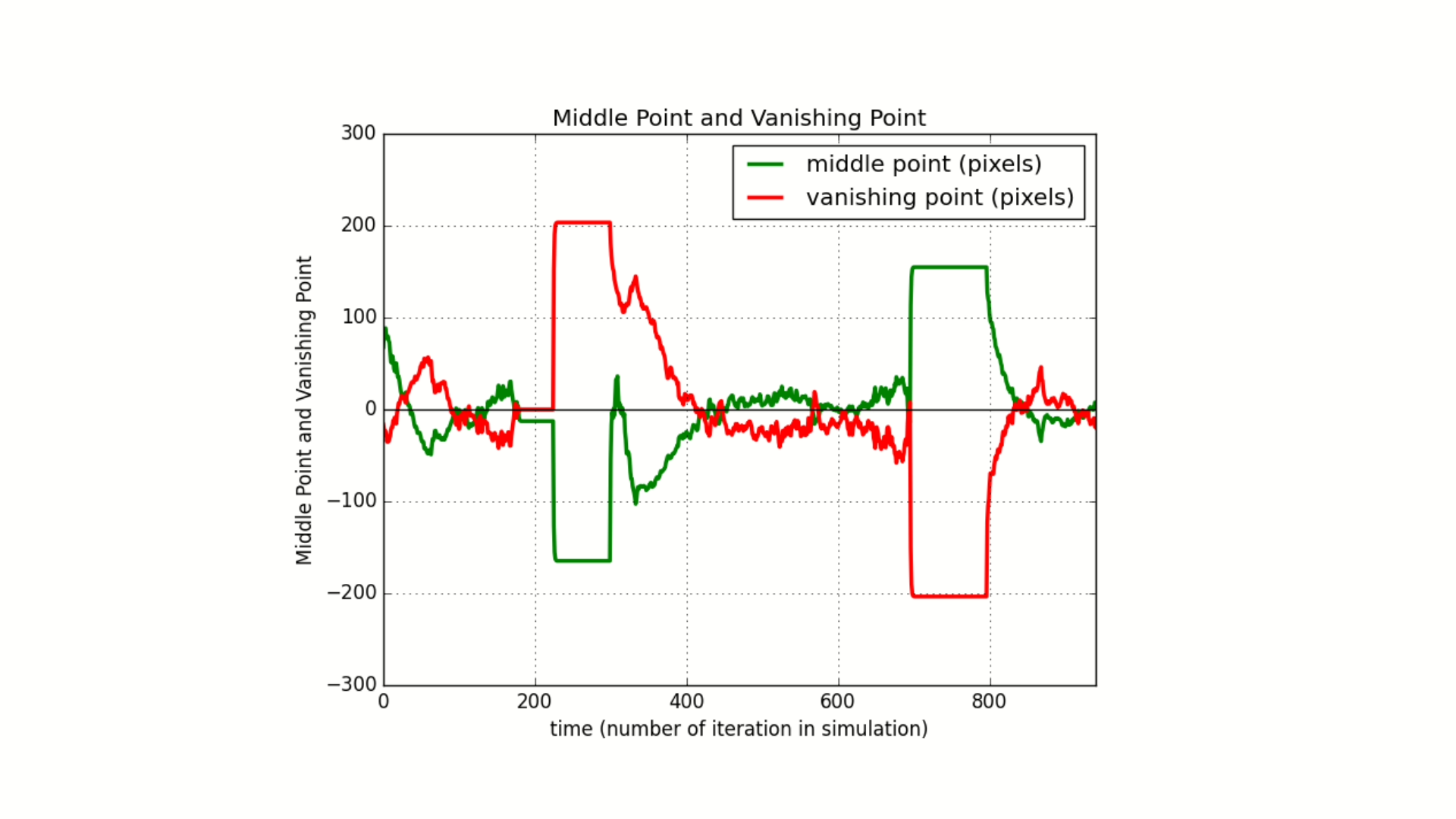

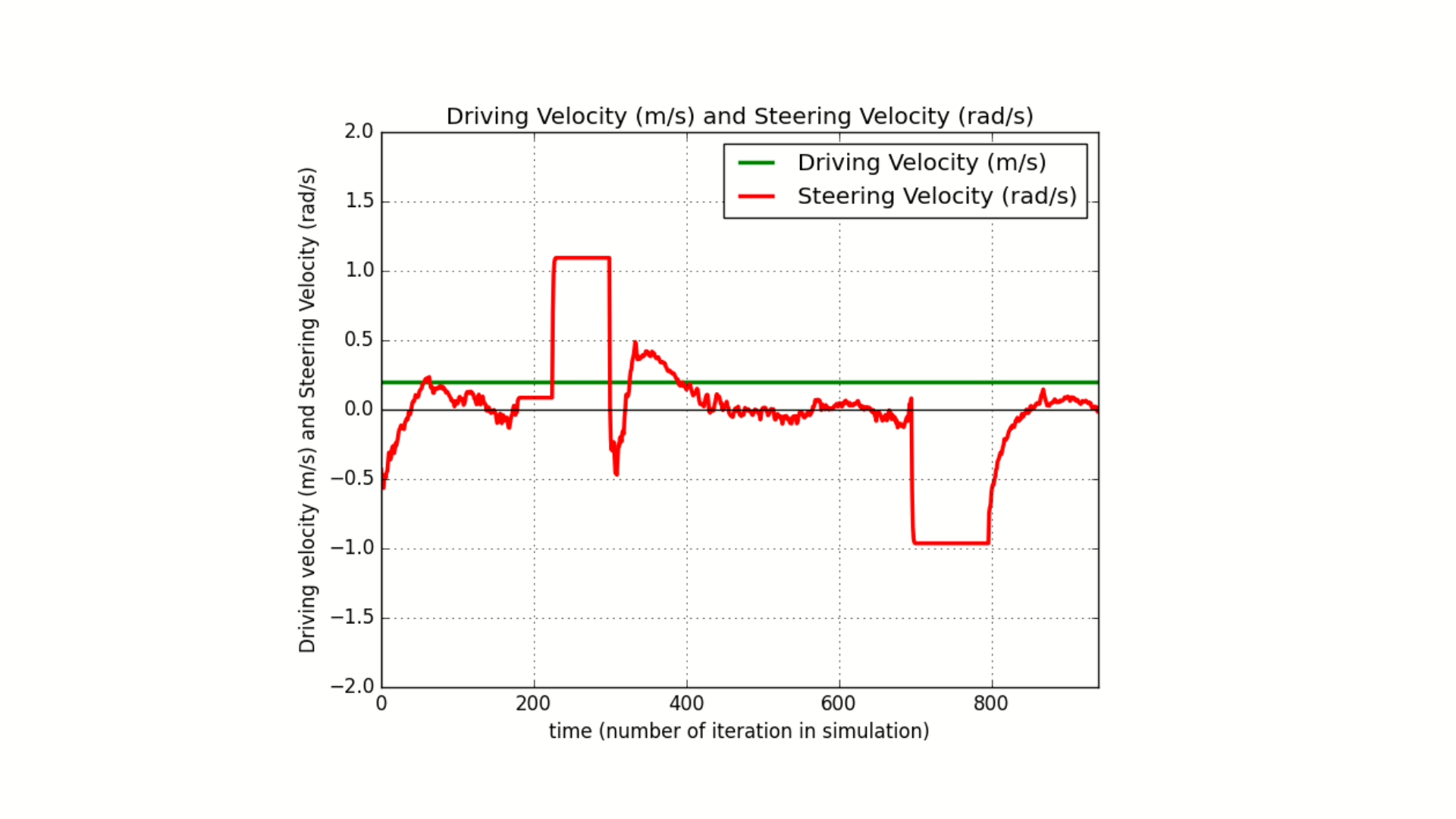

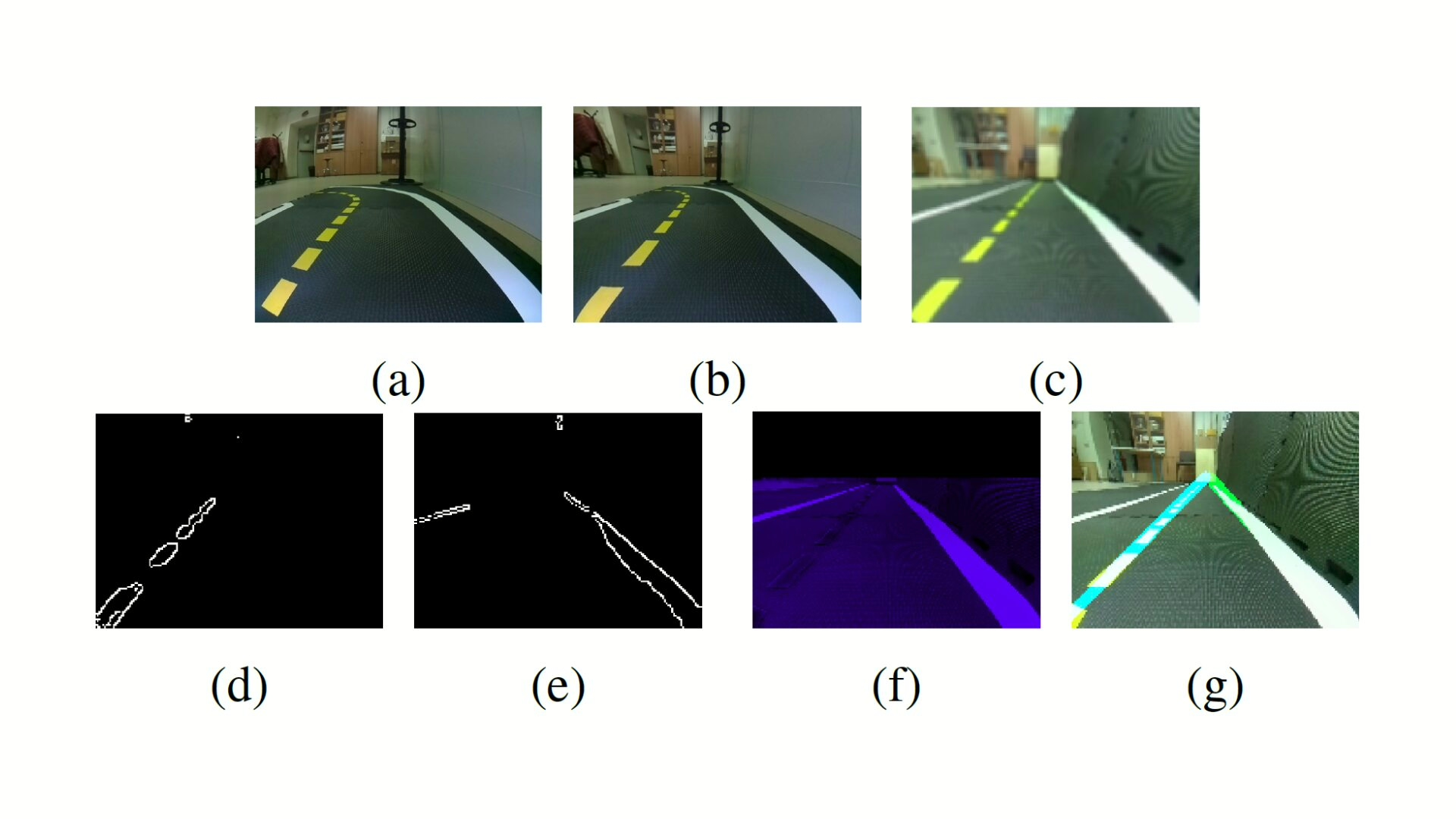

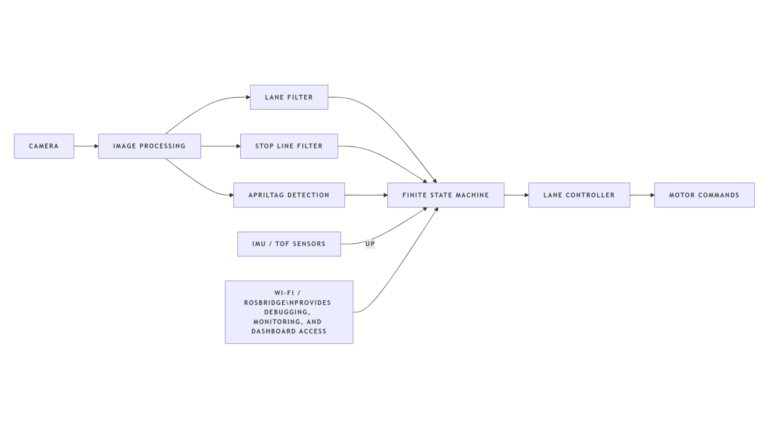

The system integrates calibrated camera intrinsics and extrinsics, motor gain and trim calibration, ROS nodes for perception and control, AprilTag-based semantic localization, stop line detection, a lane filter for lateral pose estimation, a finite state machine for state transitions, PID controllers for velocity and steering regulation, and quantized Qwen 2.5 models for multimodal inference on embedded hardware.
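As a rough illustration of how a PID steering regulator and the gain/trim calibration fit together, the sketch below turns a lane-pose error into an angular velocity and then maps `(v, omega)` to left and right wheel commands. It is not the project's code: the class and function names are hypothetical, the differential-drive mapping follows a commonly used convention that is assumed here, and the numeric defaults (`baseline`, `radius`, `k`) are illustrative placeholders rather than calibrated values.

```python
class PID:
    """Textbook PID regulator with a clamped integral term (hypothetical helper)."""

    def __init__(self, kp, ki, kd, integral_limit=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral_limit = integral_limit
        self._integral = 0.0
        self._prev_error = None

    def step(self, error, dt):
        if dt <= 0:
            raise ValueError("dt must be positive")
        # Accumulate the integral and clamp it to limit wind-up.
        self._integral += error * dt
        self._integral = max(-self.integral_limit,
                             min(self.integral_limit, self._integral))
        # No derivative contribution on the very first sample.
        derivative = 0.0 if self._prev_error is None else (error - self._prev_error) / dt
        self._prev_error = error
        return self.kp * error + self.ki * self._integral + self.kd * derivative


def wheel_commands(v, omega, gain=1.0, trim=0.0,
                   baseline=0.1, radius=0.0318, k=27.0, limit=1.0):
    """Map linear/angular velocity to (left, right) duty cycles.

    Assumed convention: gain scales both wheels, trim adds to the right
    wheel and subtracts from the left to compensate for motor asymmetry.
    """
    omega_r = (v + 0.5 * omega * baseline) / radius  # right wheel speed, rad/s
    omega_l = (v - 0.5 * omega * baseline) / radius  # left wheel speed, rad/s
    u_r = omega_r * (gain + trim) / k
    u_l = omega_l * (gain - trim) / k
    clamp = lambda u: max(-limit, min(limit, u))
    return clamp(u_l), clamp(u_r)


# Usage: one 100 ms control step for a bot sitting 5 cm off the lane center.
steering = PID(kp=4.0, ki=0.0, kd=0.5)
omega = steering.step(error=0.05, dt=0.1)
left, right = wheel_commands(v=0.2, omega=omega, trim=0.02)
```

With this convention, a positive trim makes the right wheel spin faster for the same command, which is how a bot that drifts to one side on a straight line would be corrected.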

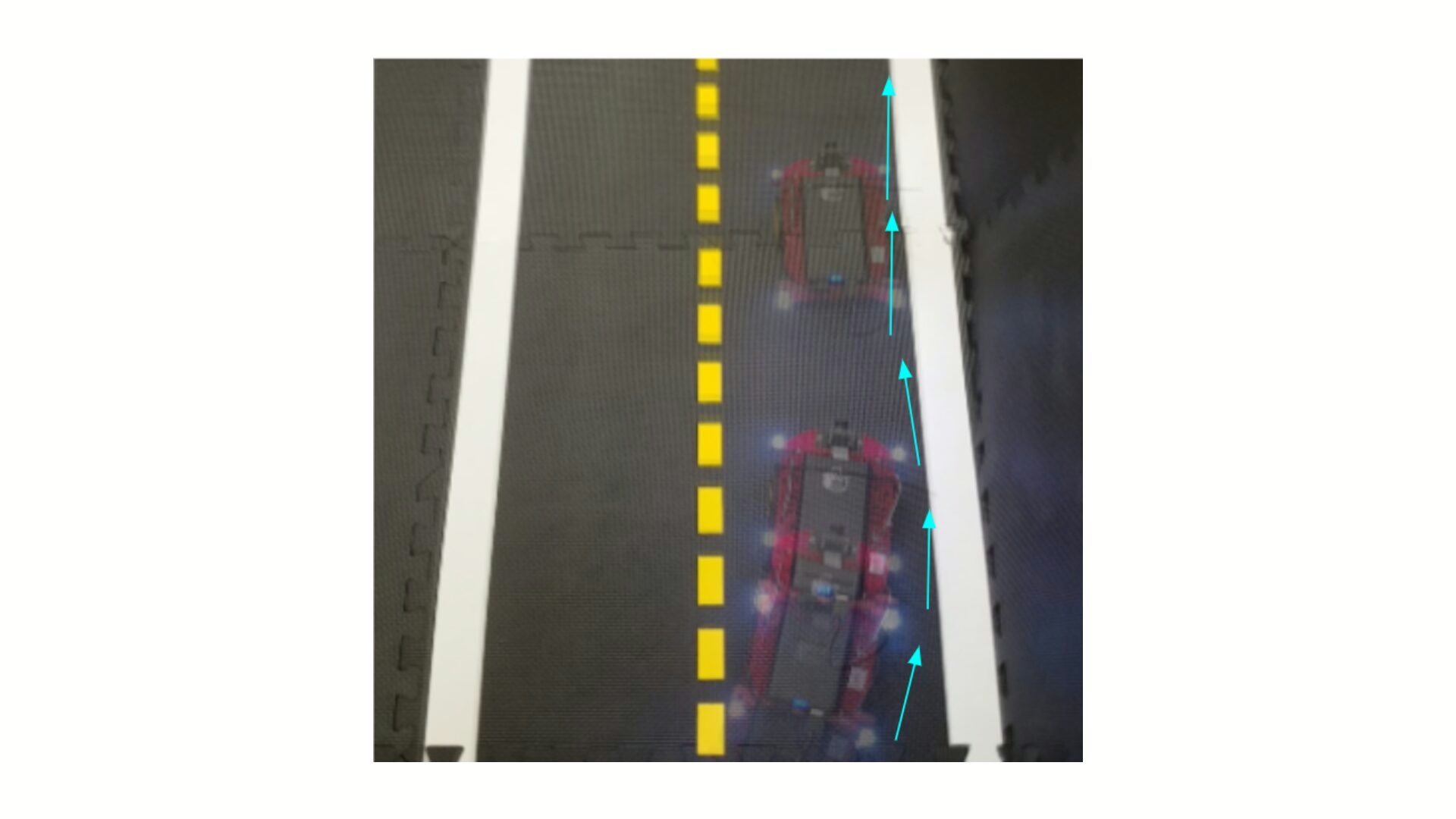

The work establishes a reproducible pipeline for benchmarking navigation algorithms, enabling analysis of trade-offs between model size, inference latency, memory limits, communication overhead, and control cycle timing in real-time robotic systems.

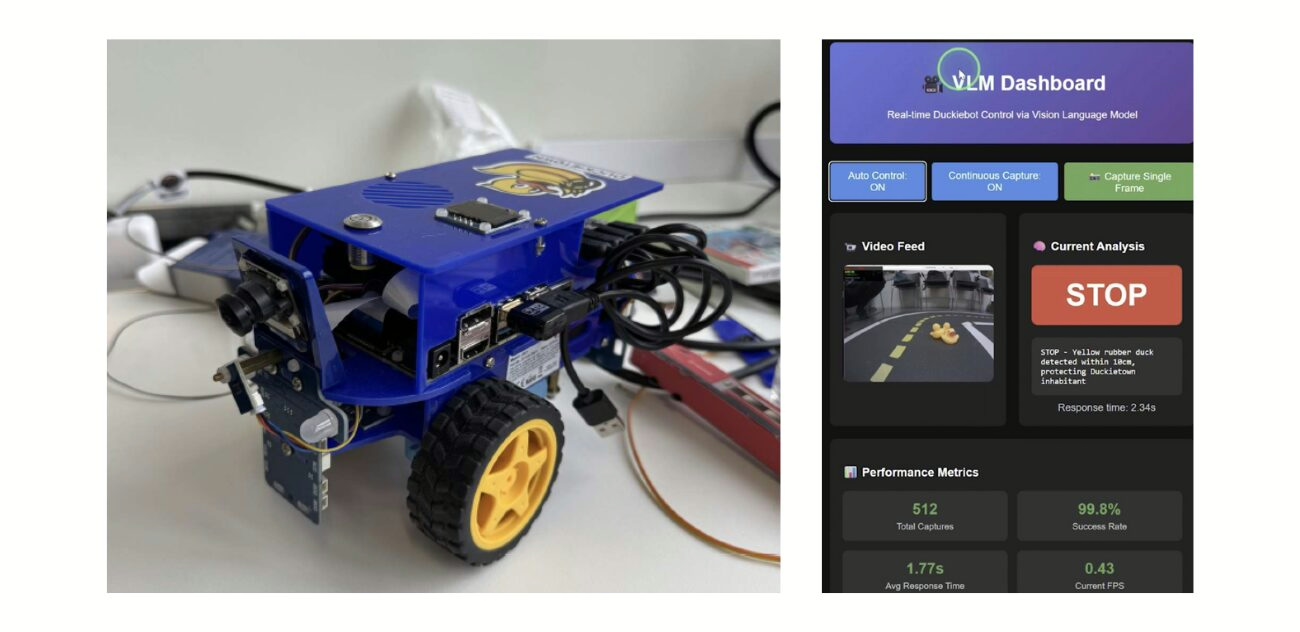

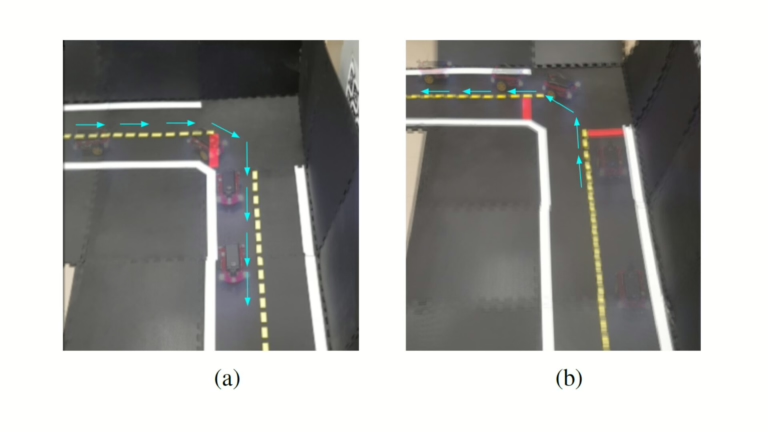

VLM in Duckietown - visual project highlights

The technical approach and challenges

At the technical level, the approach involves the following.

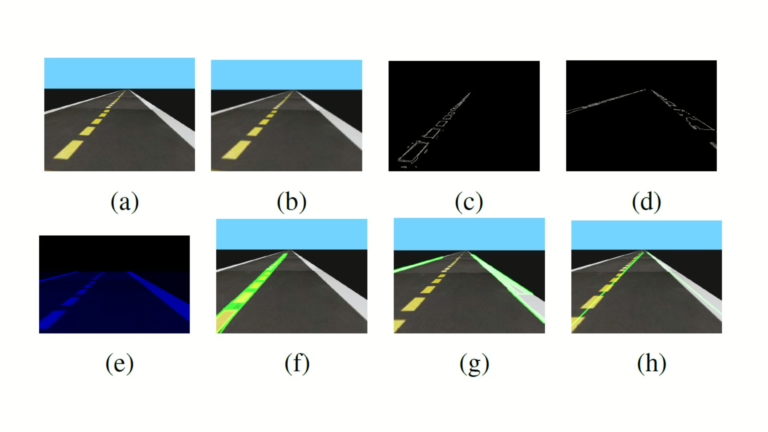

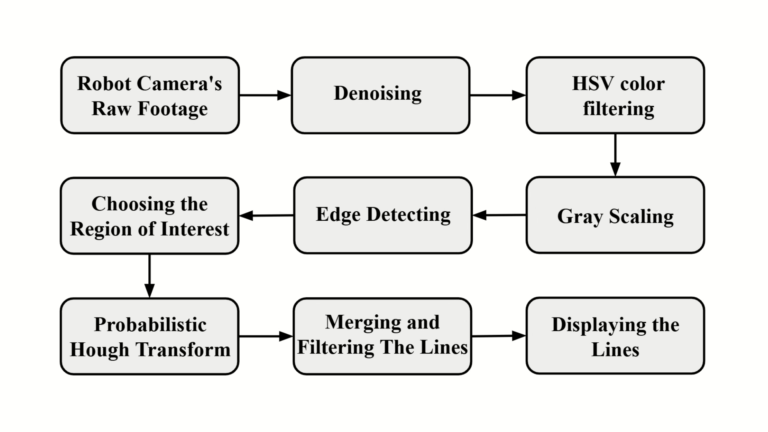

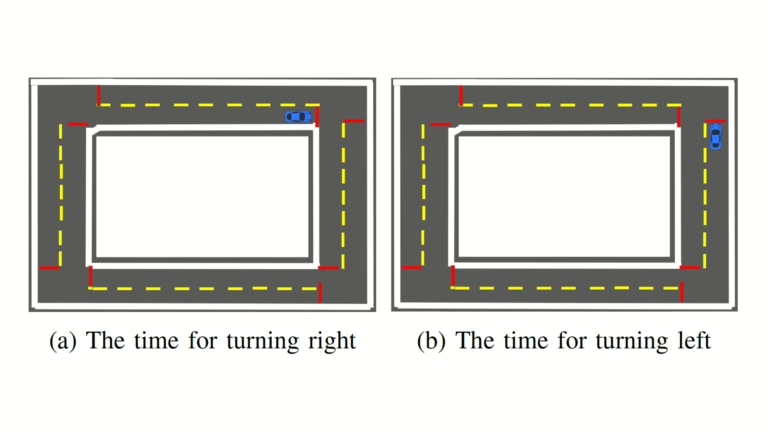

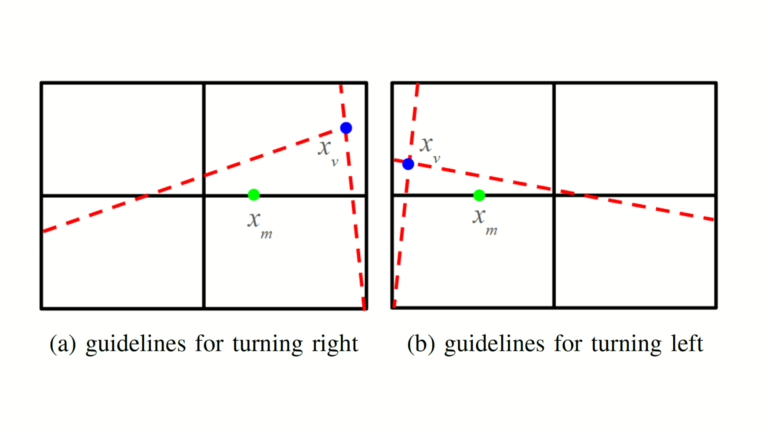

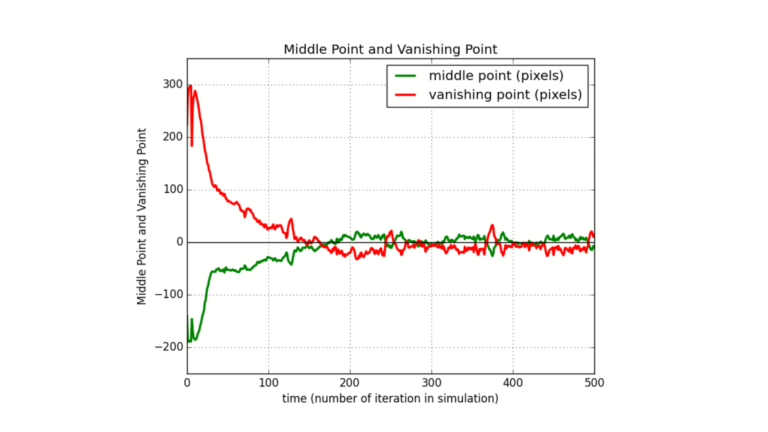

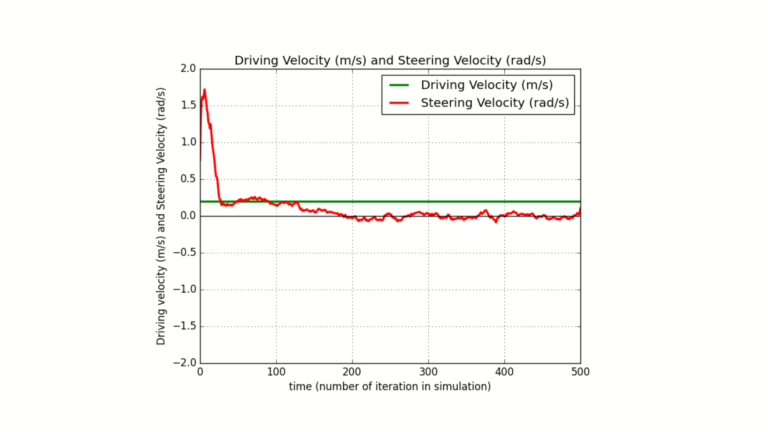

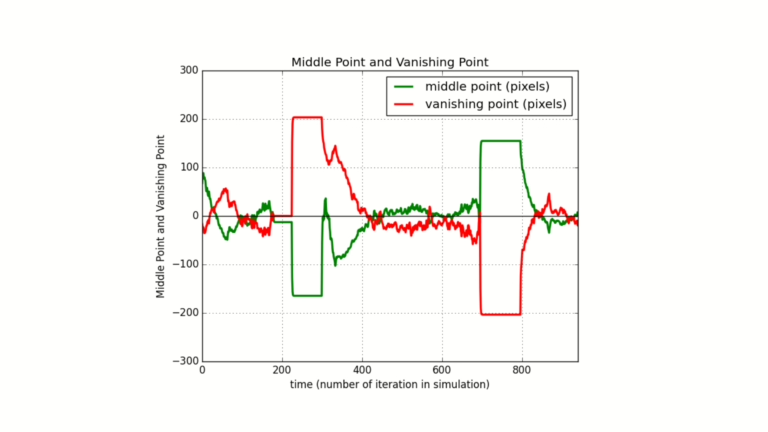

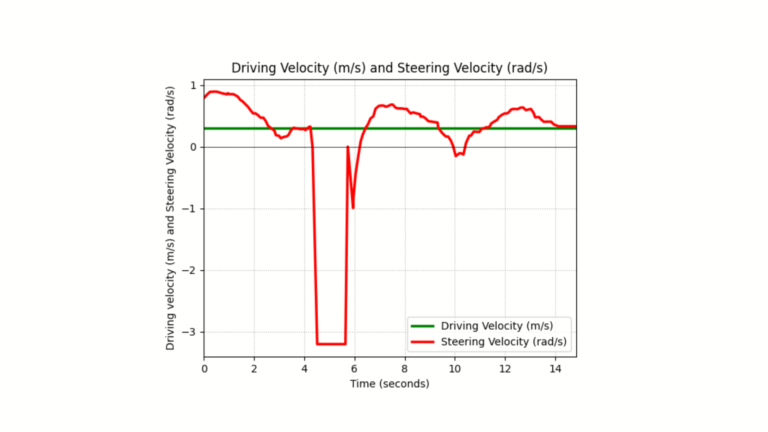

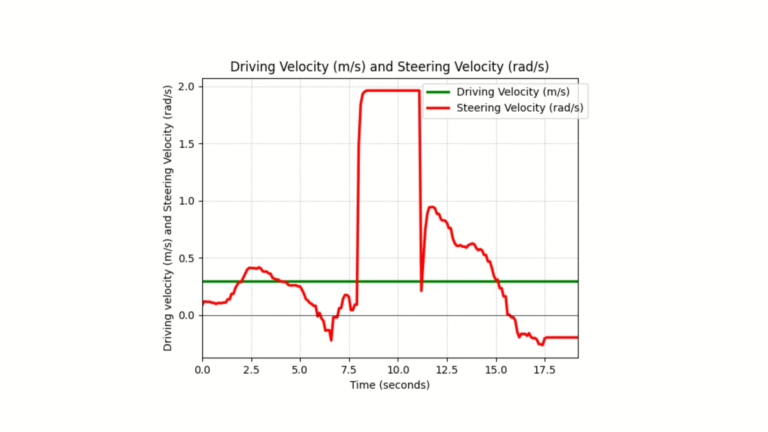

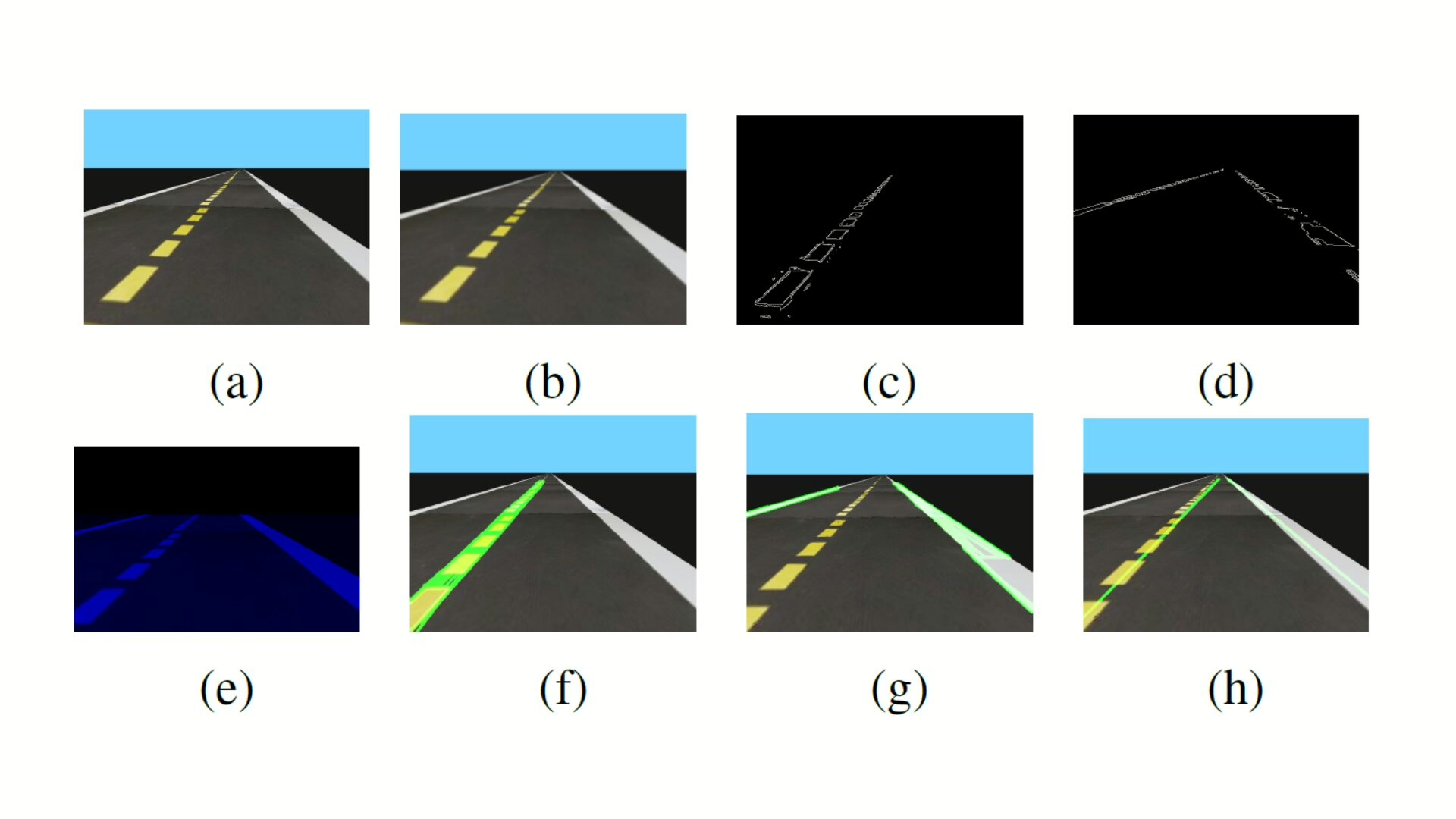

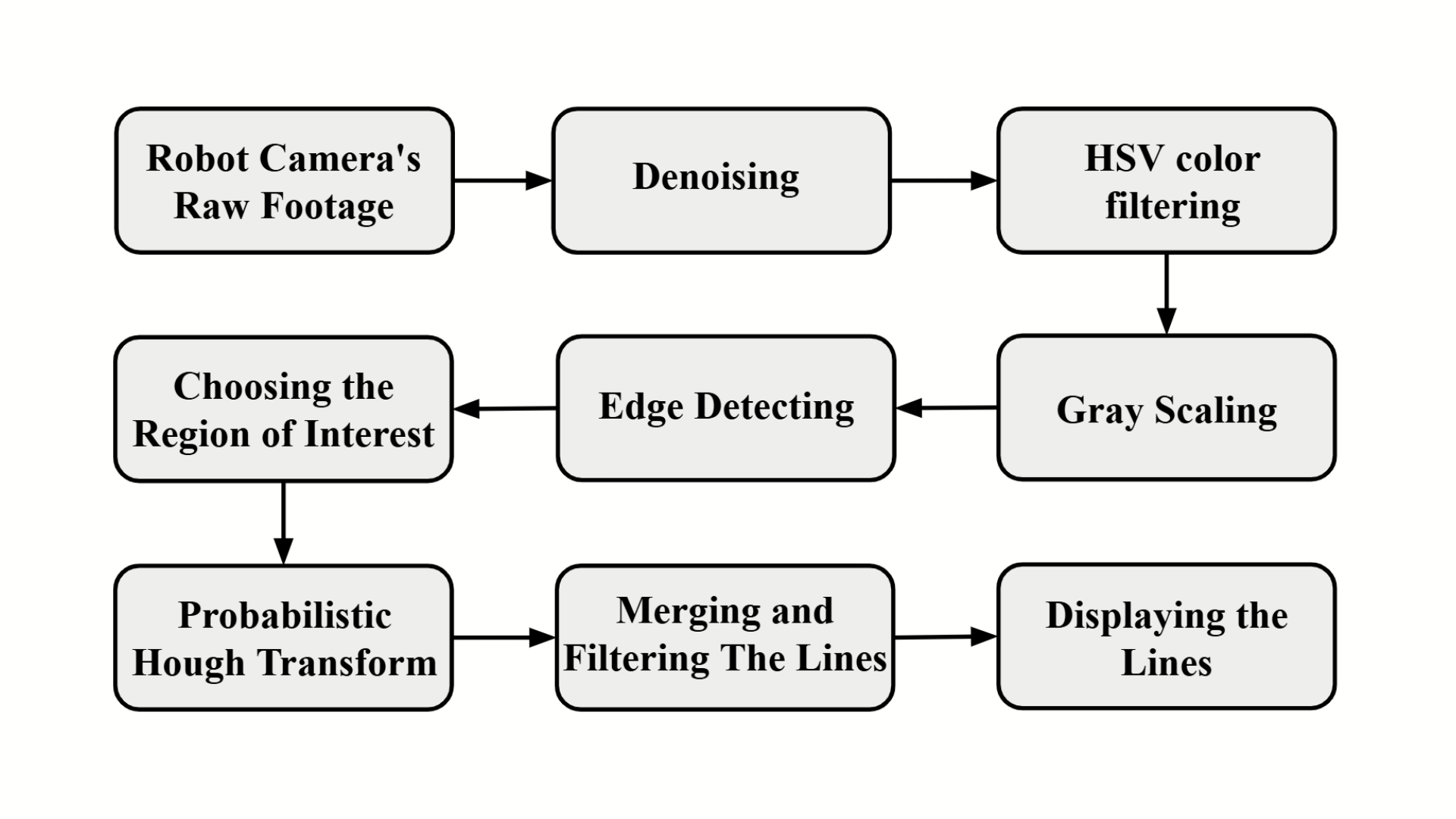

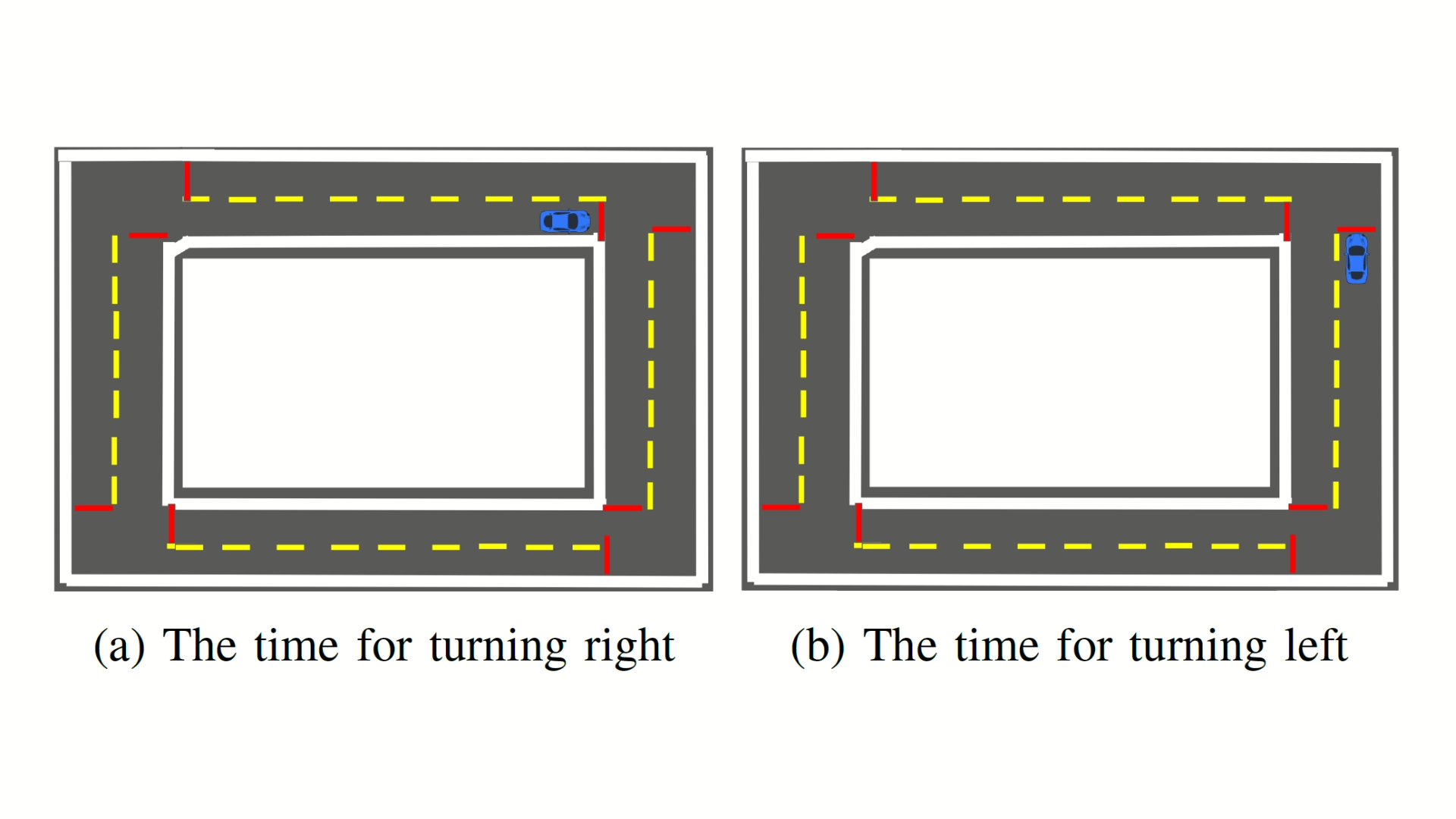

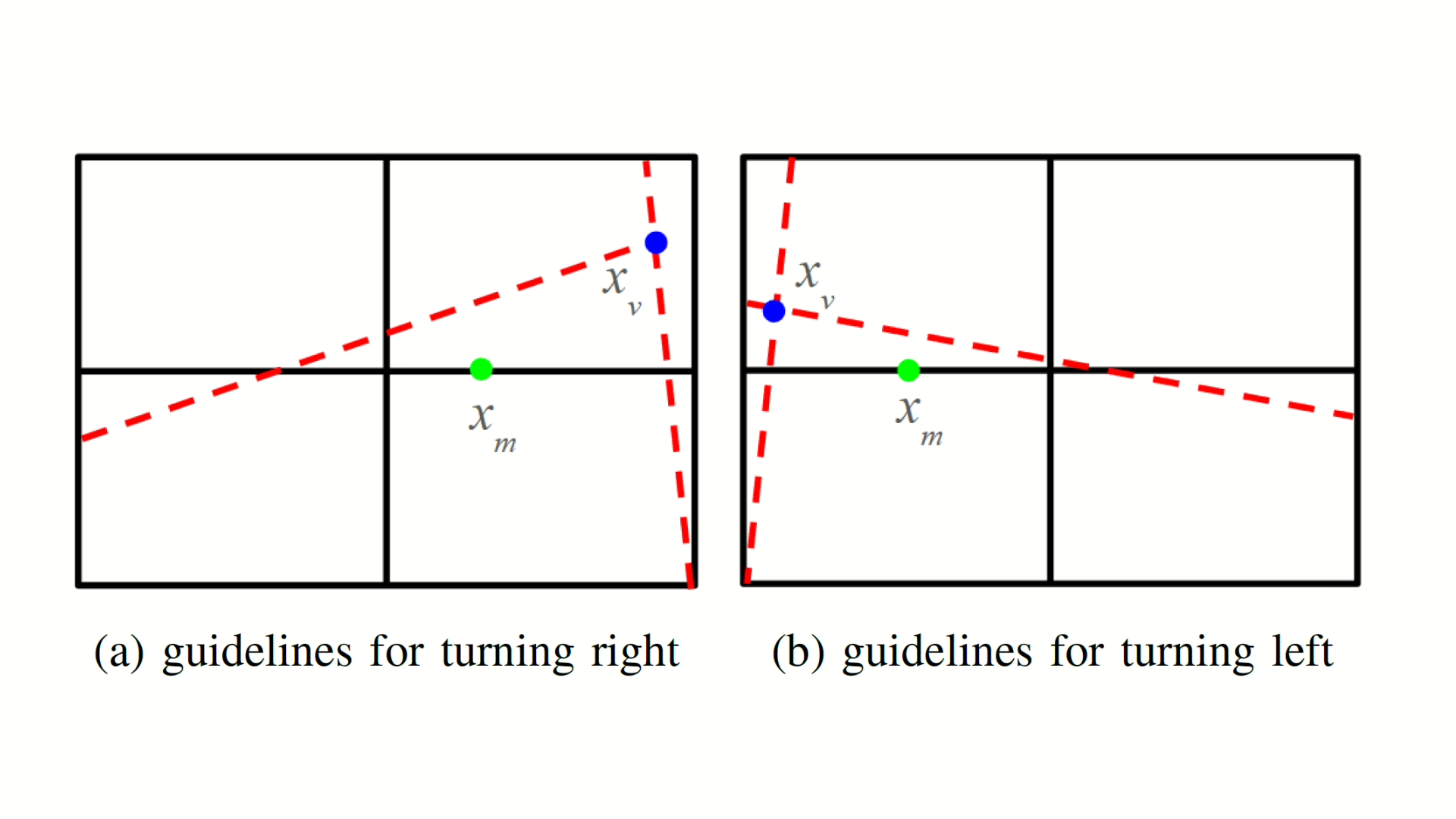

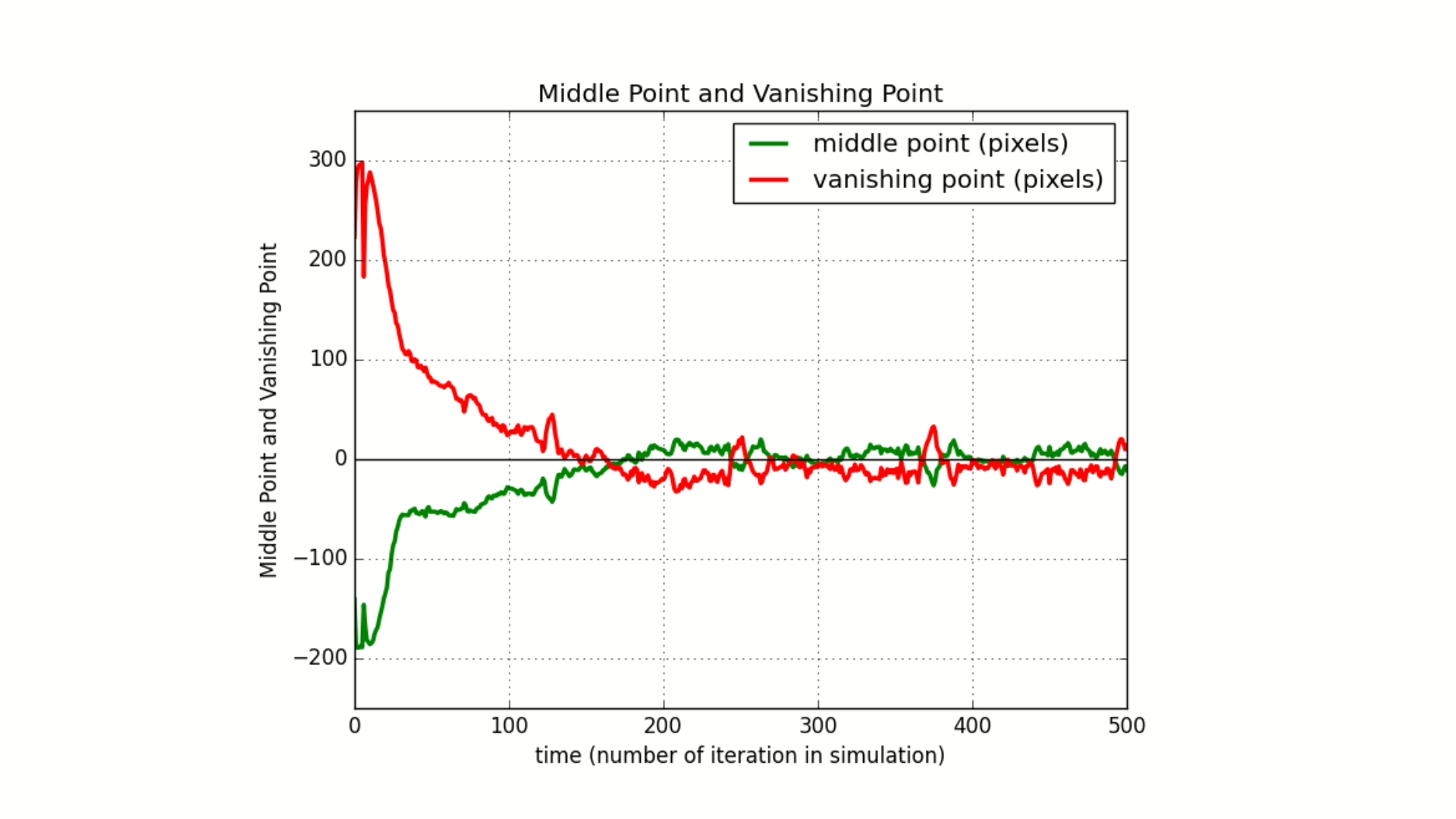

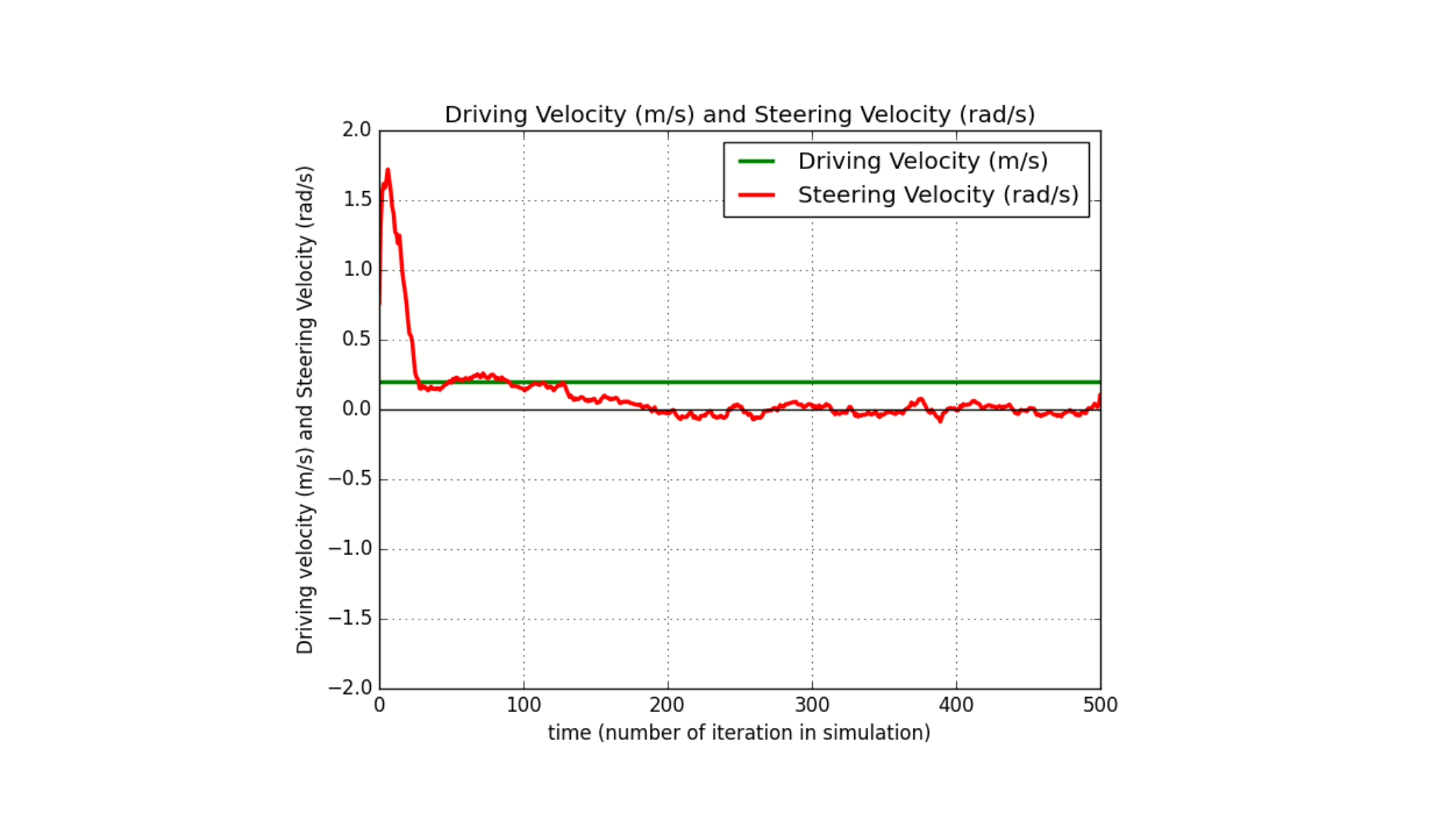

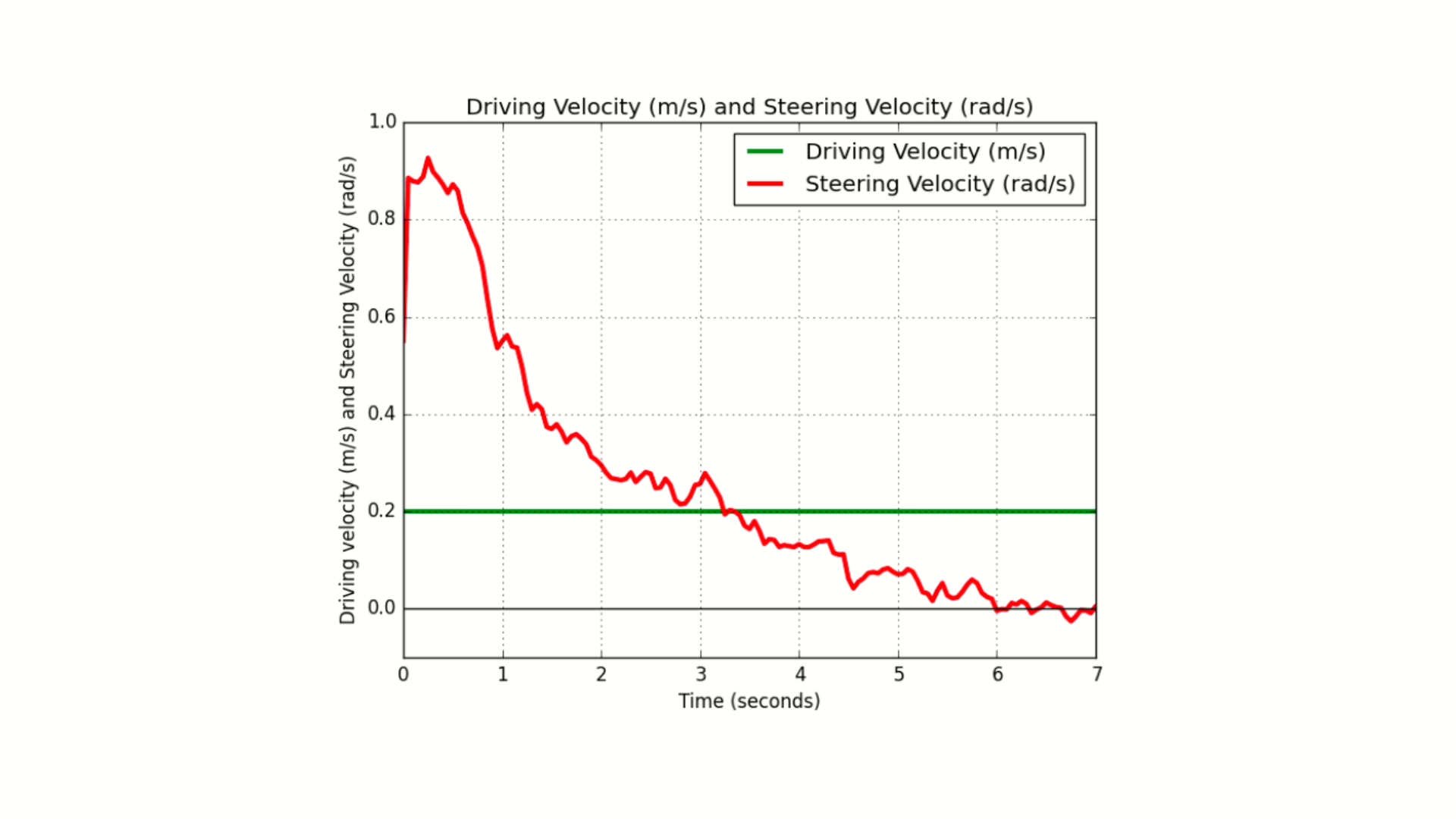

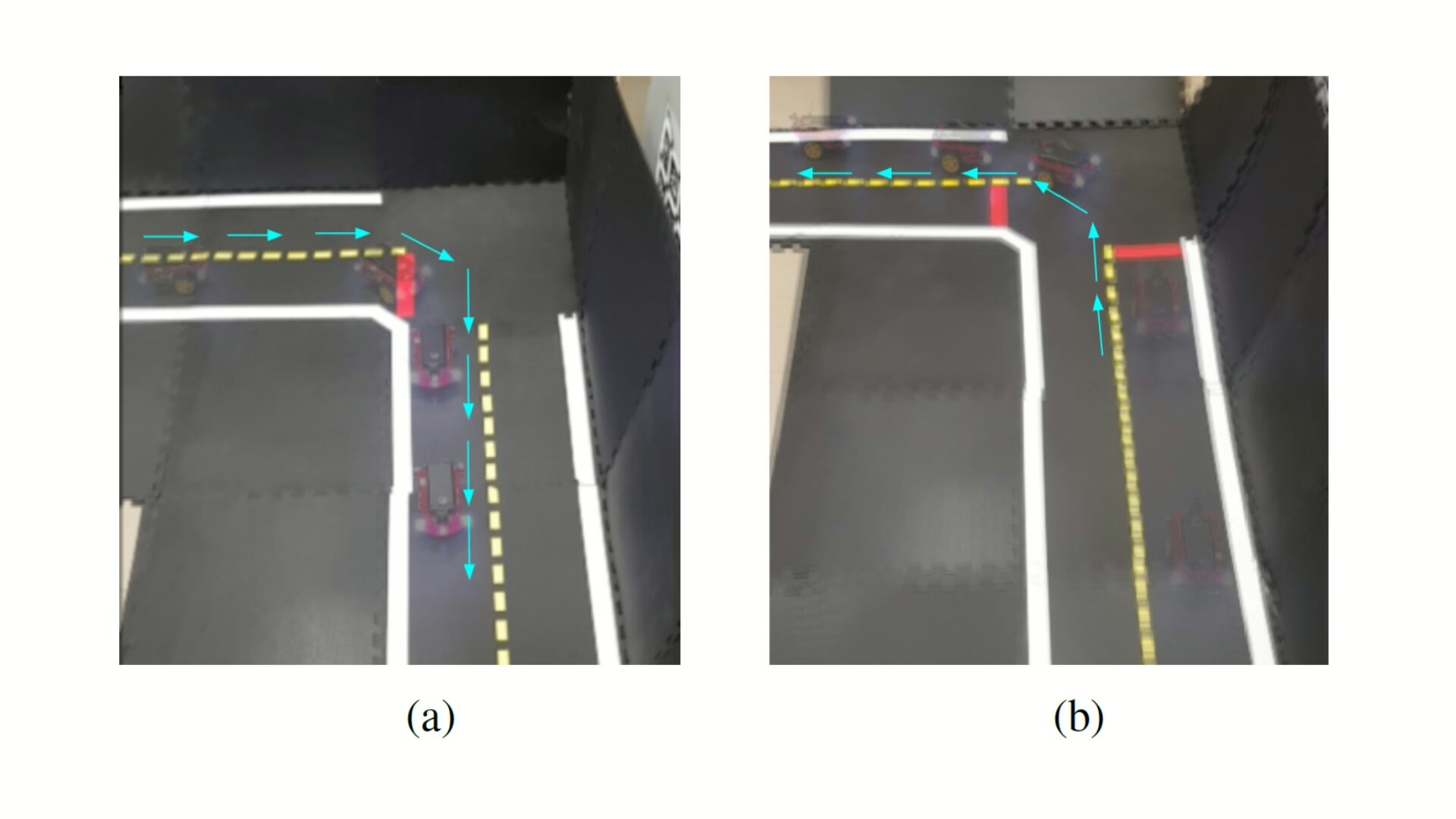

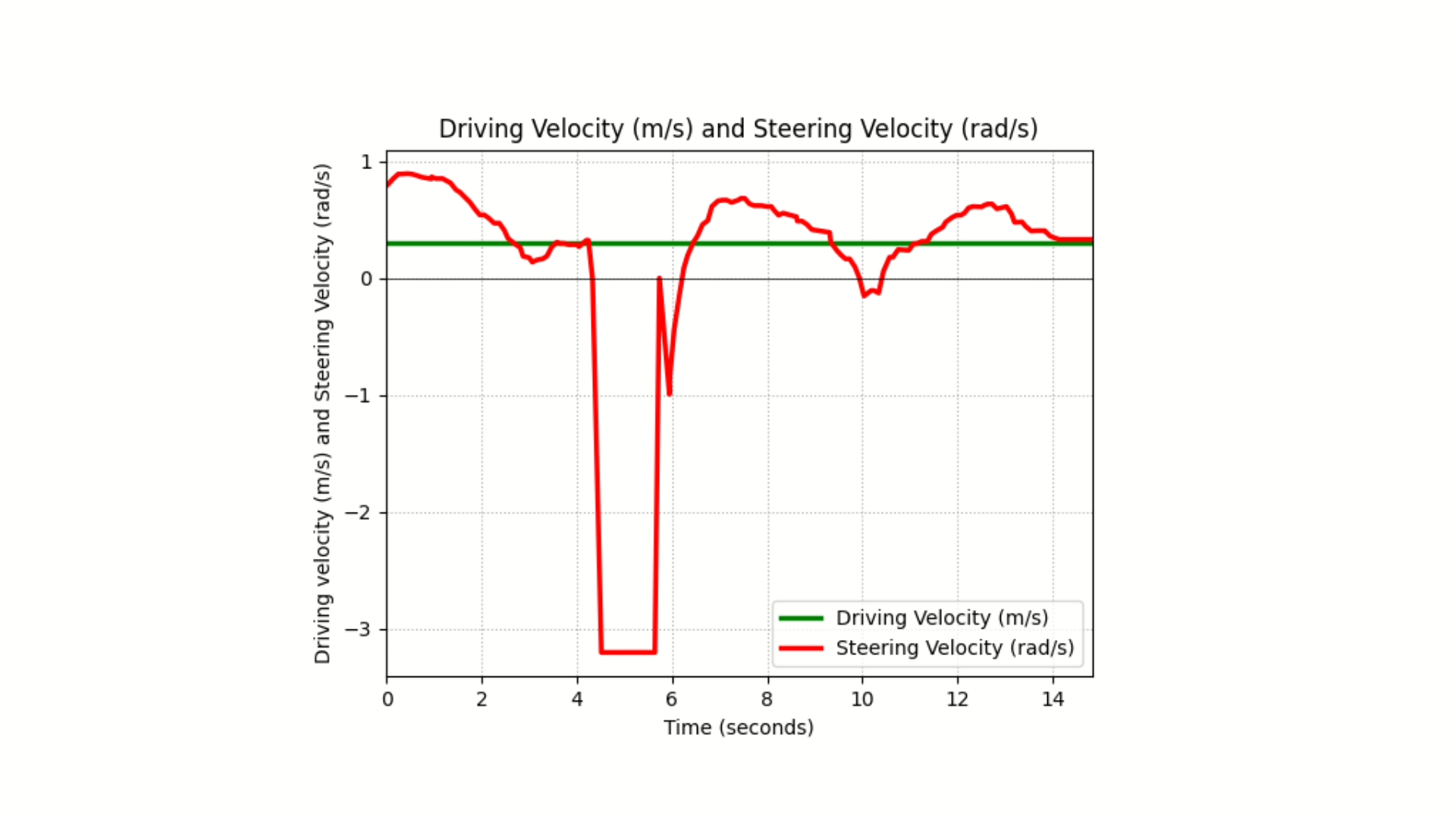

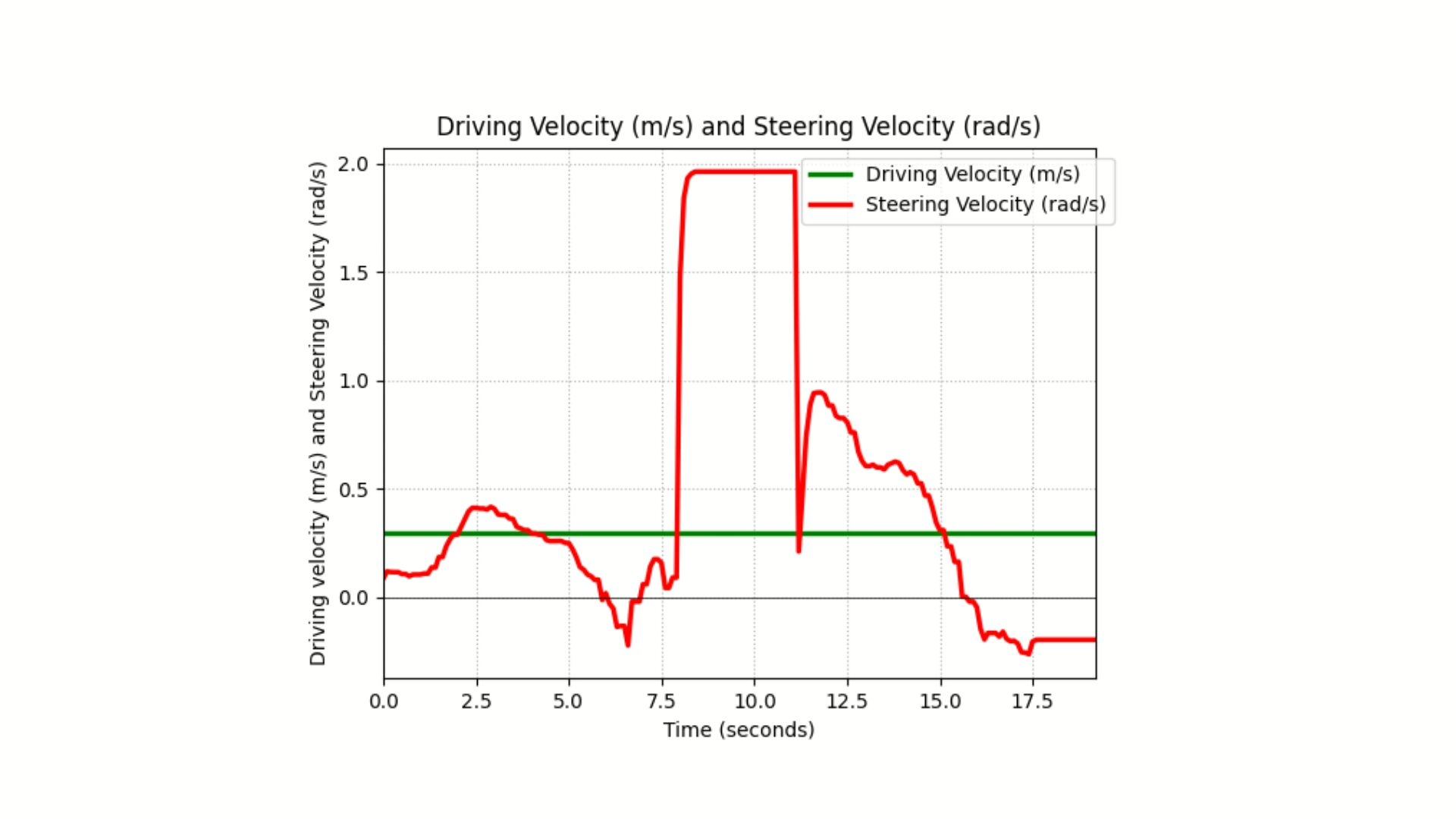

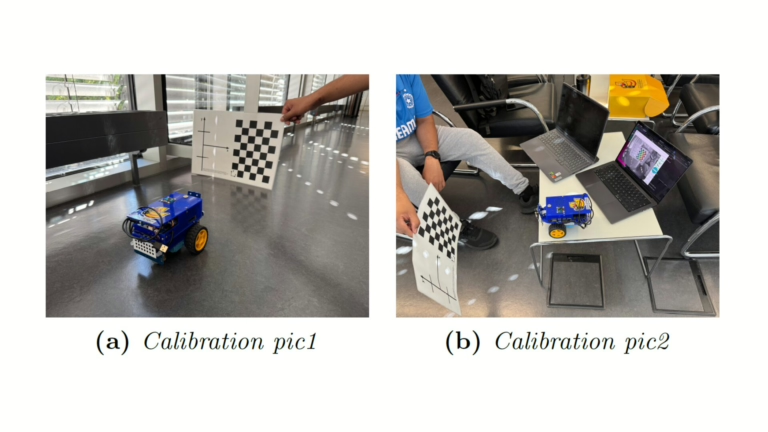

The method integrates calibrated camera intrinsics and extrinsics for distortion correction and frame alignment, motor gain and trim calibration for odometry consistency, and ROS-based perception nodes for lane filtering, stop line detection, obstacle recognition, and AprilTag-based pose estimation. Control nodes implement PID regulators for velocity and steering, parameterized turning primitives, and synchronized execution through a finite state machine that coordinates lane following, intersection stopping, turning maneuvers, and recovery states. Sensor fusion combines camera streams, encoder feedback, and ToF measurements for robust decision inputs.
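The coordinating state machine can be pictured as a small transition table. The sketch below is an assumption-laden illustration, not the project's FSM: the four states mirror the behaviors named above, while the event names and the specific transitions are hypothetical.

```python
from enum import Enum, auto


class State(Enum):
    LANE_FOLLOWING = auto()
    STOPPING = auto()
    TURNING = auto()
    RECOVERY = auto()


# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (State.LANE_FOLLOWING, "stop_line_detected"): State.STOPPING,
    (State.LANE_FOLLOWING, "lane_lost"): State.RECOVERY,
    (State.STOPPING, "turn_selected"): State.TURNING,
    (State.TURNING, "turn_complete"): State.LANE_FOLLOWING,
    (State.TURNING, "lane_lost"): State.RECOVERY,
    (State.RECOVERY, "lane_reacquired"): State.LANE_FOLLOWING,
}


class NavigationFSM:
    """Table-driven FSM; events with no matching entry leave the state unchanged."""

    def __init__(self, initial=State.LANE_FOLLOWING):
        self.state = initial

    def handle(self, event):
        self.state = TRANSITIONS.get((self.state, event), self.state)
        return self.state


# Usage: drive up to an intersection, stop, turn, and resume lane following.
fsm = NavigationFSM()
for event in ["stop_line_detected", "turn_selected", "turn_complete"]:
    fsm.handle(event)
```

Keeping the transitions in a table makes it easy to see which perception events each state reacts to, and ignoring unknown events prevents a spurious detection from knocking the robot out of a maneuver.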

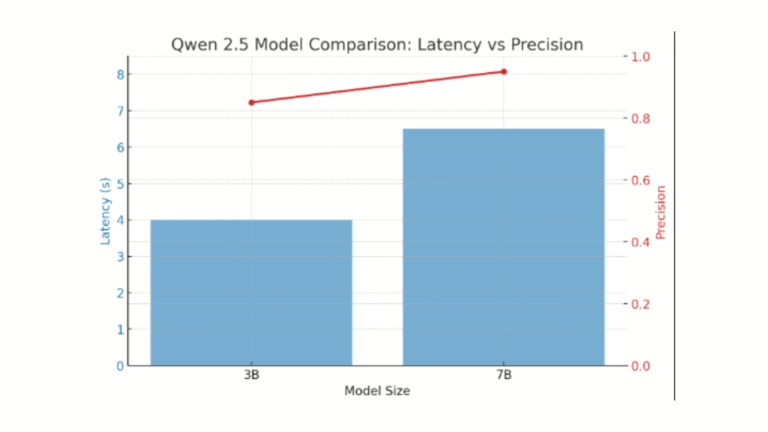

Quantized Qwen 2.5 vision-language models were deployed with llama.cpp, configured with a reduced context window and batch size to fit within GPU memory limits. The models were evaluated on trajectory planning and visual reasoning tasks, with both the 7B and 3B variants tested under quantization schemes. Integration required Docker containerization for portability and ROSBridge for monitoring and remote interaction.
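A back-of-the-envelope calculation shows why model size, quantization, and context length all matter on a memory-limited board. The sketch below is only an estimate under stated assumptions: it uses nominal parameter counts (7 and 3 billion), a nominal 4 bits per weight (real GGUF schemes store per-block scales, so the effective figure is somewhat higher), and a hypothetical transformer shape for the KV cache that is not taken from the report or from any model card. Vision encoder weights and runtime buffers are ignored.

```python
def weight_memory_gib(n_params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in GiB."""
    return n_params_billion * 1e9 * bits_per_weight / 8 / 2**30


def kv_cache_mib(n_layers, n_kv_heads, head_dim, n_ctx, bytes_per_elem=2):
    """Approximate KV-cache size in MiB: keys and values for every layer and position."""
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem / 2**20


# Nominal weight footprint of the two variants at 4 bits per weight.
for size in (7, 3):
    print(f"{size}B @ 4-bit: ~{weight_memory_gib(size, 4):.2f} GiB")

# Halving the context window halves the KV cache (illustrative shape only).
for n_ctx in (2048, 1024):
    mib = kv_cache_mib(n_layers=28, n_kv_heads=4, head_dim=128, n_ctx=n_ctx)
    print(f"n_ctx={n_ctx}: ~{mib:.0f} MiB of KV cache")
```

The weights scale linearly with parameter count and bits per weight, while the KV cache scales linearly with context length, which is why reducing the context window is a natural lever once the weights themselves barely fit.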

Challenges included GPU memory capacity that restricted the execution of larger models, inference latency exceeding the 100 ms control cycle budget, CUDA feature mismatches across builds, and unstable container runtimes on the NVIDIA Jetson Nano platform. These issues necessitated systematic tuning of controller parameters, quantization of the VLMs to the GGUF format, pruning strategies to reduce computational load, and hybrid offloading of visual reasoning to external compute nodes while keeping low-level perception and control local. Additional constraints involved balancing message-passing overhead in ROS, synchronization delays between perception and control nodes, and limited reproducibility of inference results across different hardware builds.
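One way to reconcile slow, offloaded VLM inference with a 100 ms control cycle is to decouple the two: the control loop never waits for the VLM and simply reads the most recent advice, falling back to a local default when that advice is missing or stale. The sketch below illustrates the idea with explicit timestamps so it stays deterministic. The class, command strings, and staleness threshold are all hypothetical, and a real ROS node would additionally need a lock if callbacks run on different threads.

```python
from dataclasses import dataclass
from typing import Optional

CONTROL_PERIOD_S = 0.1  # the 100 ms control cycle mentioned in the text


@dataclass
class Advice:
    command: str   # high-level suggestion from the (remote) VLM
    stamp: float   # time at which the advice was produced, in seconds


class AdviceMailbox:
    """Holds only the newest VLM advice; readers never block."""

    def __init__(self, max_age_s):
        self.max_age_s = max_age_s
        self._latest: Optional[Advice] = None

    def post(self, command, stamp):
        self._latest = Advice(command, stamp)

    def read(self, now, fallback="follow_lane"):
        # Use the fallback when there is no advice yet or it is too old.
        if self._latest is None or now - self._latest.stamp > self.max_age_s:
            return fallback
        return self._latest.command


# Usage: simulate 20 control ticks; one VLM answer arrives at t = 0.35 s.
mailbox = AdviceMailbox(max_age_s=1.0)
log = []
for tick in range(20):
    now = tick * CONTROL_PERIOD_S
    if tick == 4:  # first tick after the answer became available
        mailbox.post("turn_left", stamp=0.35)
    log.append(mailbox.read(now))
```

In this arrangement the low-level loop keeps its fixed period regardless of how long inference takes, and the staleness limit bounds how long an outdated suggestion can influence the robot.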

Report and Presentation


VLM in Duckietown: Authors

Sahil Virani is a student at Technical University of Munich, Germany.

Supratik Patel is a student at Technical University of Munich, Germany.

Esmir Kico is a student at Technical University of Munich, Germany.

Learn more

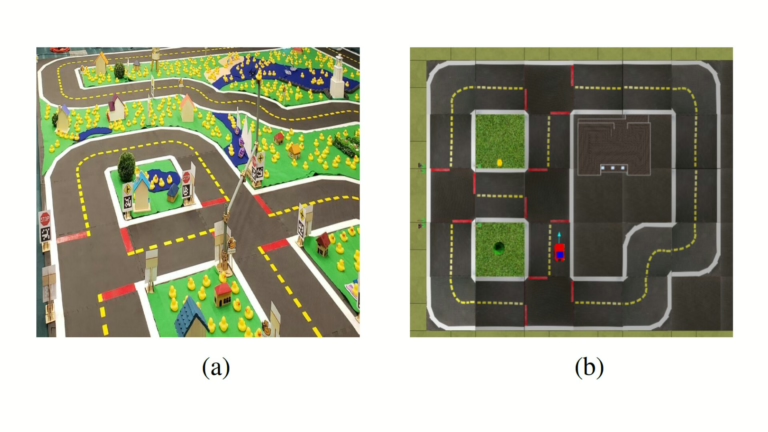

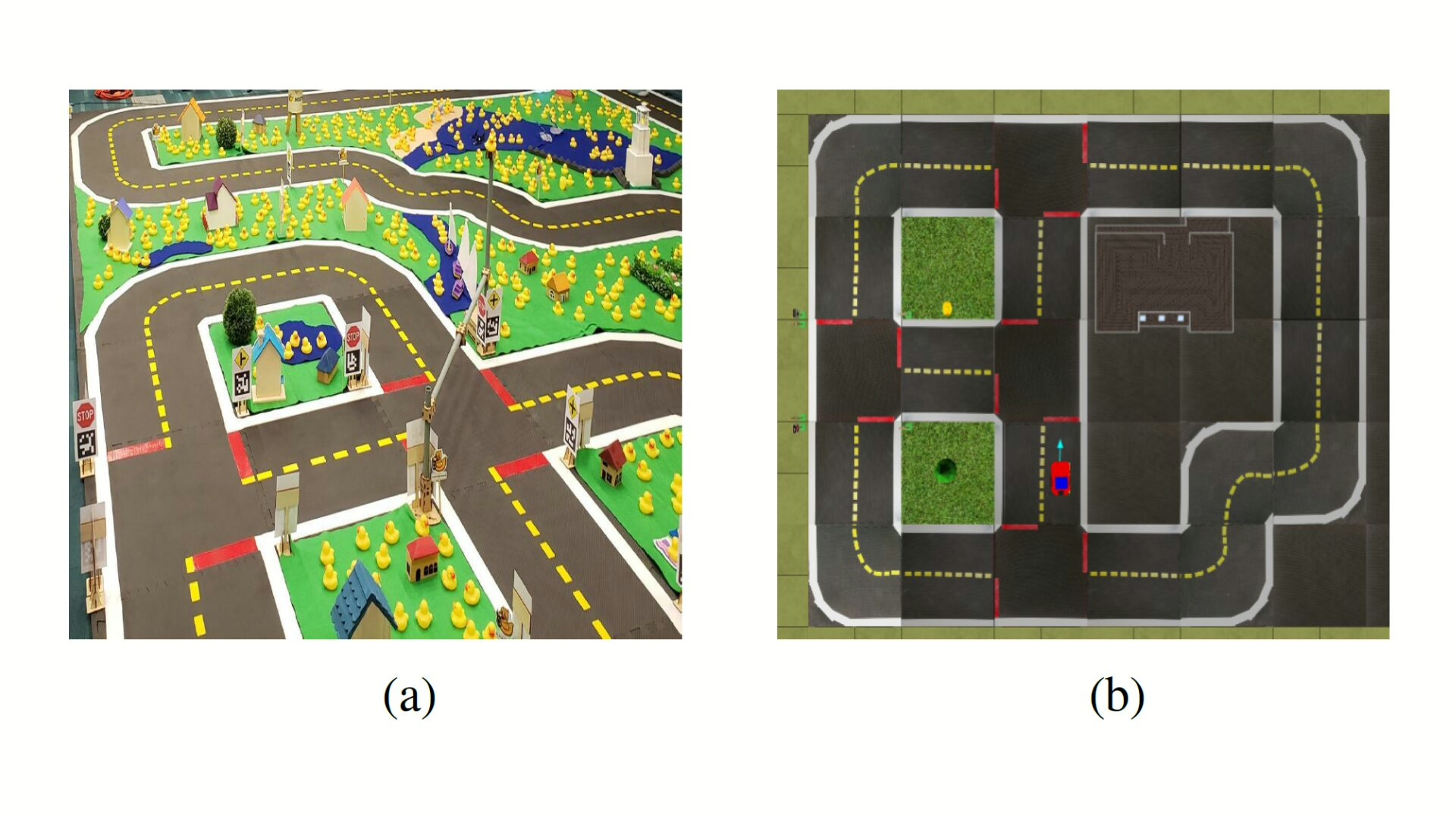

Duckietown is a modular, customizable, and state-of-the-art platform for creating and disseminating robotics and AI learning experiences.

Duckietown is designed to teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge.

These spotlight projects are shared to exemplify Duckietown’s value for hands-on learning in robotics and AI, enabling students to apply theoretical concepts to practical challenges in autonomous robotics, boosting competence and job prospects.