General Information

- Analysis of Object Detection Models on Duckietown Robot Based on YOLOv5 Architectures

- Toan-Khoa Nguyen, Lien T. Vu, Viet Q. Vu, Tien-Dat Hoang, Shu-Hao Liang, Minh-Quang Tran.

- National Taiwan University of Science and Technology, Taiwan.

- Nguyen, T.K., Vu, L.T., Vu, V.Q., Hoang, T.D., Liang, S.H. and Tran, M.Q., 2021. Analysis of object detection models on duckietown robot based on yolov5 architectures. International Journal of iRobotics, 4(4), pp.17-22.

Object Detection on Duckiebots Using YOLOv5 Models

Obstacle detection lets autonomous vehicles perceive their surroundings, identify objects, and determine whether those objects might interfere with the robot’s task, e.g., navigating to a goal position.

Among the many applications of AI, object detection from images is arguably the one that has improved the most over “traditional approaches” such as color or blob detection.

From the point of view of a machine, an image is nothing but a set of “tables” of numbers, one table per channel (e.g., R, G, B for color images), where each number represents the intensity of light at that location in that channel.
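To make the “tables of numbers” idea concrete, here is a minimal sketch in plain Python. The pixel values, the helper names (`split_channels`, `yellow_mask`), and the thresholds are all made up for illustration; the mask is a naive example of the color-based “traditional approach” mentioned above, not a method taken from the paper.

```python
# A tiny 2x3 RGB image as nested lists: image[row][col] = (R, G, B), each 0-255.
# All pixel values are invented for illustration.
image = [
    [(250, 210, 20), (40, 40, 40), (245, 205, 30)],
    [(35, 35, 35), (255, 255, 255), (30, 30, 30)],
]


def split_channels(img):
    """Return one 2D table of intensities per channel, in R, G, B order."""
    return [[[pixel[c] for pixel in row] for row in img] for c in range(3)]


def yellow_mask(img, min_rg=200, max_b=100):
    """Naive color threshold (hypothetical values): a pixel counts as
    'yellow' if its R and G intensities are high and its B intensity is low."""
    return [
        [(r >= min_rg and g >= min_rg and b <= max_b) for (r, g, b) in row]
        for row in img
    ]


red, green, blue = split_channels(image)
mask = yellow_mask(image)
```

Here `red` is just a 2x3 table of integers, and `mask` flags the two yellowish pixels in the first row. A hand-tuned rule like this is brittle under changing light, which is part of why learned detectors have taken over.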

For a machine, giving meaning to a cluster of numbers is much harder than it is for a human to spot a potential obstacle on the path. Machine learning-driven approaches have quickly outperformed traditional computer vision approaches at this task, thanks to the abundant and cheap training data made available by datasets and general imagery on the internet.

Various approaches (networks) for object detection have rapidly succeeded in outperforming each other, and YOLO models stand out for their balance of computational efficiency and detection accuracy.

Learn about robot autonomy, and the difference between traditional and machine learning approaches, from the links below!

Abstract

In the authors’ words:

Object detection technology is an essential aspect of the development of autonomous vehicles. The crucial first step of any autonomous driving system is to understand the surrounding environment.

In this study, we present an analysis of object detection models on the Duckietown robot based on You Only Look Once version 5 (YOLOv5) architectures. YOLO model is commonly used for neural network training to enhance the performance of object detection models.

In a case study of Duckietown, the duckies and cones present hazardous obstacles that vehicles must not drive into. This study implements the popular autonomous vehicles learning platform, Duckietown’s data architecture and classification dataset, to analyze object detection models using different YOLOv5 architectures. Moreover, the performances of different optimizers are also evaluated and optimized for object detection.

The experiment results show that the pre-trained of large size of YOLOv5 model using the Stochastic Gradient Decent (SGD) performs the best accuracy, in which a mean average precision (mAP) reaches 97.78%. The testing results can provide objective modeling references for relevant object detection studies.
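For readers unfamiliar with the mAP metric quoted in the abstract, the sketch below shows one common way to compute it: all-point interpolated average precision per class, averaged over classes. The abstract does not state which mAP variant or IoU threshold the authors used, so this is an assumption, and the function names and example detections are hypothetical. Each detection is assumed to have already been labeled as a true or false positive by matching it against ground-truth boxes, a step not shown here.

```python
def average_precision(detections, num_ground_truth):
    """All-point interpolated AP for one class.

    detections: list of (confidence, is_true_positive) pairs.
    num_ground_truth: number of annotated objects of this class.
    """
    if num_ground_truth == 0:
        return 0.0
    # Rank detections from most to least confident.
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    precisions, recalls = [], []
    tp = fp = 0
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)
    # Replace each precision by the best precision at any higher recall.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum the area under the resulting precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class):
    """per_class: dict mapping class name -> (detections, num_ground_truth)."""
    aps = [average_precision(d, n) for d, n in per_class.values()]
    return sum(aps) / len(aps) if aps else 0.0


# Invented example with the two obstacle classes named in the abstract.
example = {
    "duckie": ([(0.9, True), (0.8, False), (0.7, True)], 2),
    "cone": ([(0.95, True)], 1),
}
score = mean_average_precision(example)
```

In this toy example the cone class is detected perfectly, while the single false positive among the duckie detections lowers that class's AP and therefore the mean.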

Highlights - Object Detection on Duckiebots Using YOLOv5 Models

Here is a visual tour of the work of the authors. For more details, check out the full paper.

Conclusion - Object Detection on Duckiebots Using YOLOv5 Models

Here are the conclusions from the authors of this paper:

“This paper presents an analysis of object detection models on the Duckietown robot based on YOLOv5 architectures. The YOLOv5 model has been successfully used to recognize the duckies and cones on the Duckietown. Moreover, the performances of different YOLOv5 architectures are analyzed and compared.

The results indicate that using the pre-trained model of YOLOv5 architecture with the SGD optimizer can provide excellent accuracy for object detection. The higher accuracy can also be obtained even with the medium size of the YOLOv5 model that enables to accelerate the computation of the system.

Furthermore, once the object detection model is optimized, it is integrated into the ROS in the Duckietown robot. In future works, it is potential to investigate the YOLOv5 with Layer-wise Adaptive Moments Based (LAMB) optimizer instead of SGD, applying repeated augmentation with Binary Cross-Entropy (BCE), and using domain adaptation technique.”
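As background on the SGD optimizer named in the conclusions, here is a minimal sketch of a textbook SGD-with-momentum update applied to a toy one-parameter problem. The function name, learning rate, and momentum value are illustrative assumptions; this is not the authors' training configuration, and real YOLOv5 training relies on a deep learning framework's optimizer rather than hand-written updates.

```python
def sgd_step(params, grads, velocity, lr=0.01, momentum=0.9):
    """One SGD-with-momentum update; returns the new (params, velocity).

    The velocity accumulates past gradients, and the parameters move
    along the velocity rather than along the raw gradient.
    """
    new_velocity = [momentum * v - lr * g for v, g in zip(velocity, grads)]
    new_params = [p + v for p, v in zip(params, new_velocity)]
    return new_params, new_velocity


# Toy problem: minimize f(w) = (w - 3)^2, whose gradient is 2 * (w - 3).
w, v = [0.0], [0.0]
for _ in range(200):
    grad = [2.0 * (w[0] - 3.0)]
    w, v = sgd_step(w, grad, v, lr=0.05, momentum=0.9)
```

After the loop, `w[0]` sits close to the minimizer at 3. In a detector, the same update rule is applied to millions of weights, with gradients computed on mini-batches of training images.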

Project Authors

Toan-Khoa Nguyen is currently working as an AI engineer at FPT Software AI Center, Vietnam.

Lien T. Vu is with the Faculty of Mechanical Engineering and Mechatronics, Phenikaa University, Vietnam.

Viet Q. Vu is with the Faculty of International Training, Thai Nguyen University of Technology, Vietnam.

Tien-Dat Hoang is with the Faculty of International Training, Thai Nguyen University of Technology, Vietnam.

Shu-Hao Liang is with the Center for Cyber-Physical System Innovation, National Taiwan University of Science and Technology, Taiwan.

Minh-Quang Tran is with the Industry 4.0 Implementation Center, Center for Cyber-Physical System Innovation, National Taiwan University of Science and Technology, Taiwan, and with the Department of Mechanical Engineering, Thai Nguyen University of Technology, Vietnam.

Learn more

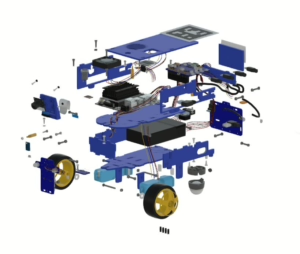

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.