General Information

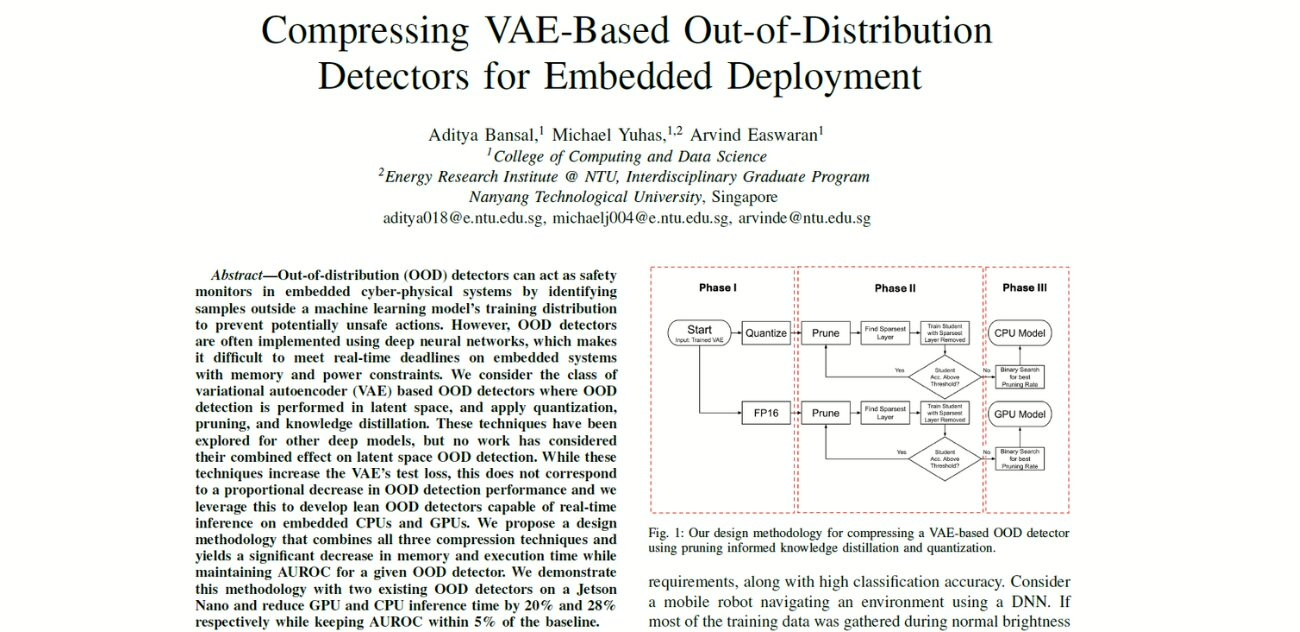

- Title: Compressing VAE-Based Out-of-Distribution Detectors for Embedded Deployment

- Authors: Aditya Bansal, Michael Yuhas, Arvind Easwaran

- Institution: Nanyang Technological University, Singapore

- Citation: Bansal, A., Yuhas, M., & Easwaran, A. (2024). Compressing VAE-Based Out-of-Distribution Detectors for Embedded Deployment. In Proceedings - 2024 IEEE 30th International Conference on Embedded and Real-Time Computing Systems and Applications, RTCSA 2024 (pp. 37-42).

VAE-Based Out-of-Distribution Detectors for Embedded Systems

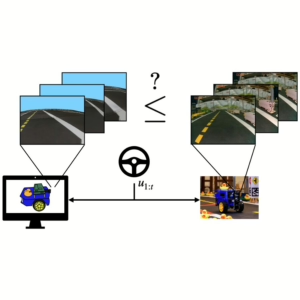

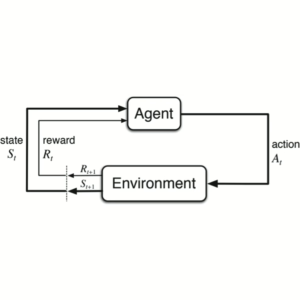

Out-of-distribution (OOD) detection is essential for maintaining safety in machine learning systems, especially those operating in the real world. It helps identify inputs that differ significantly from the training data, which could lead to unexpected or unsafe behavior.

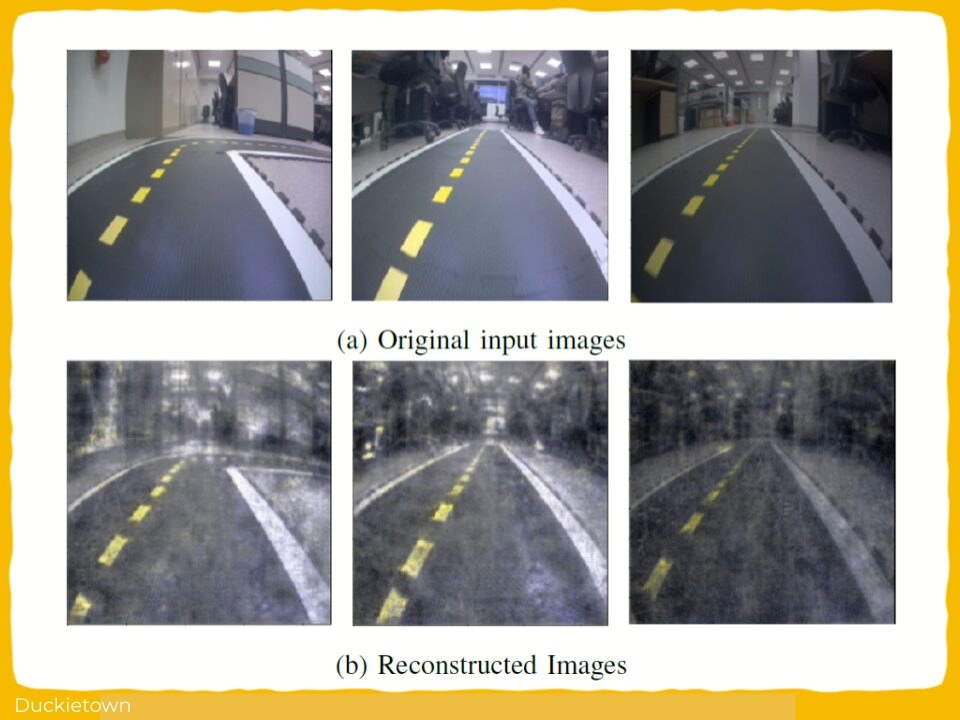

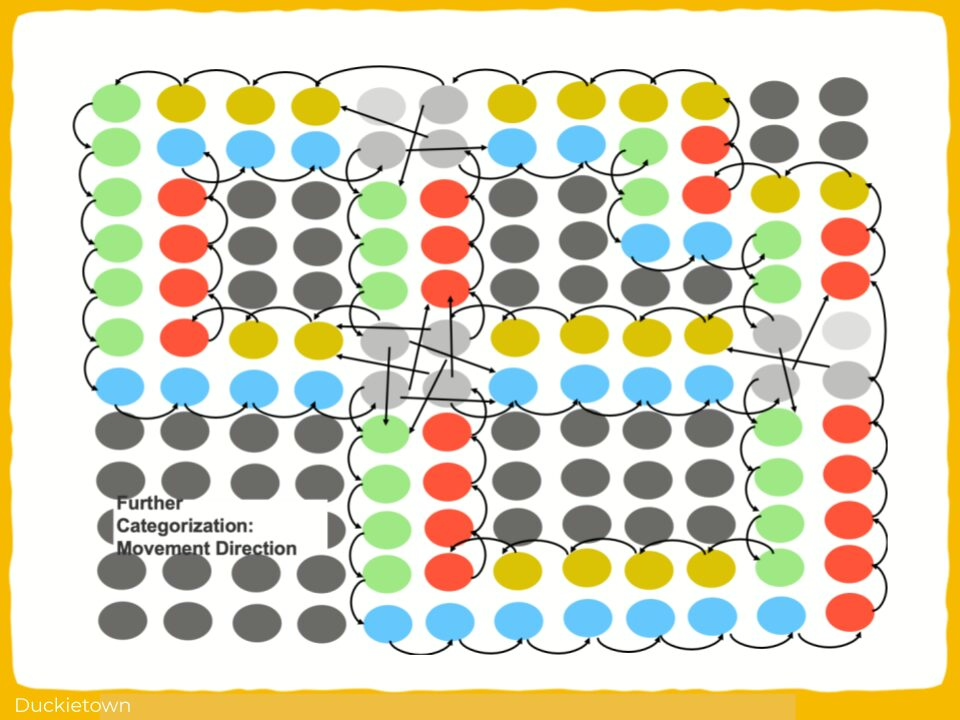

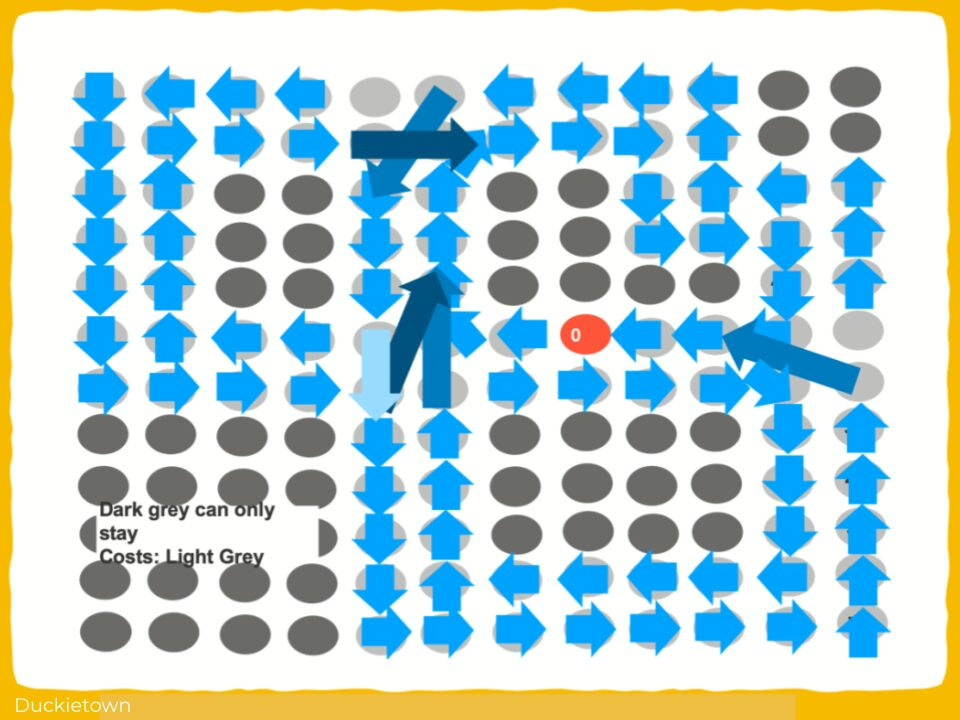

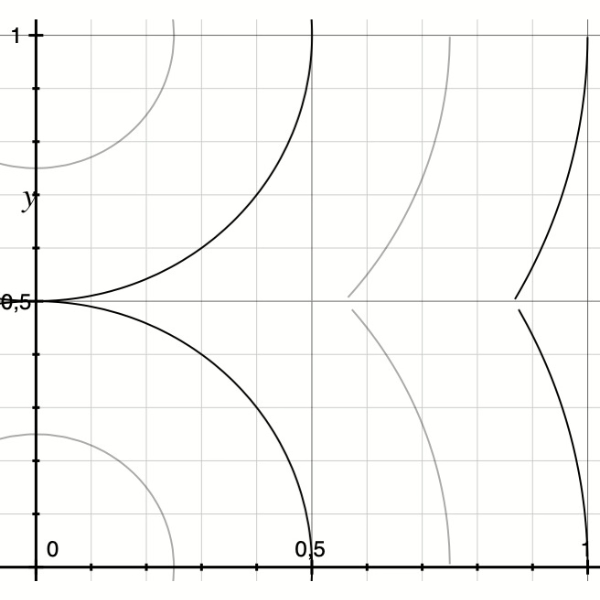

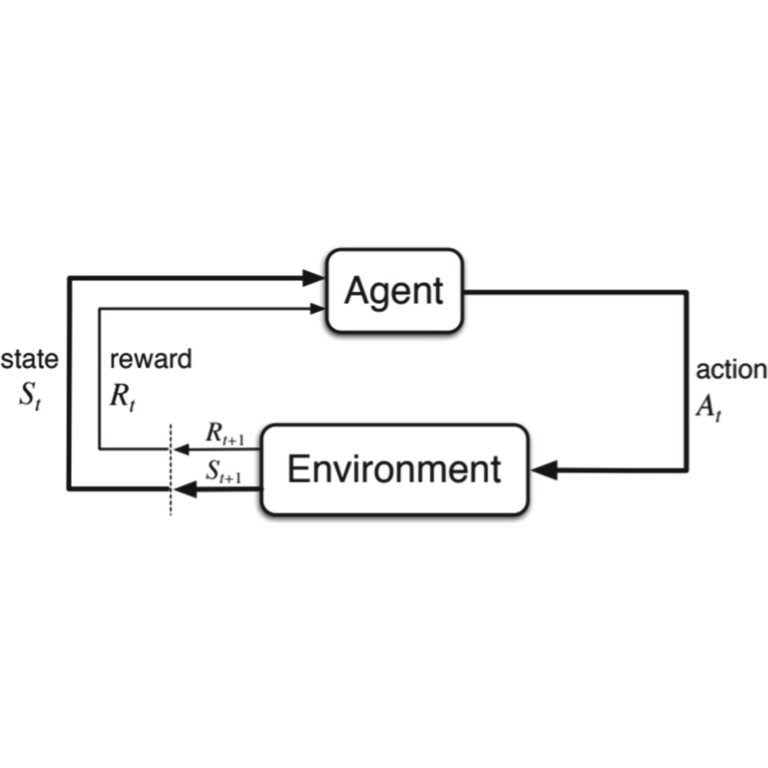

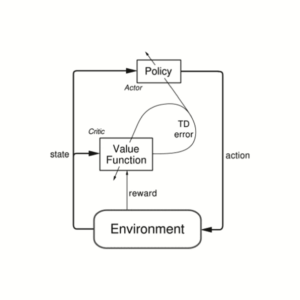

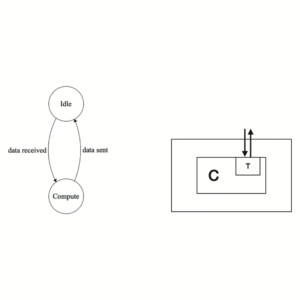

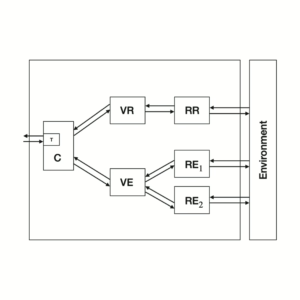

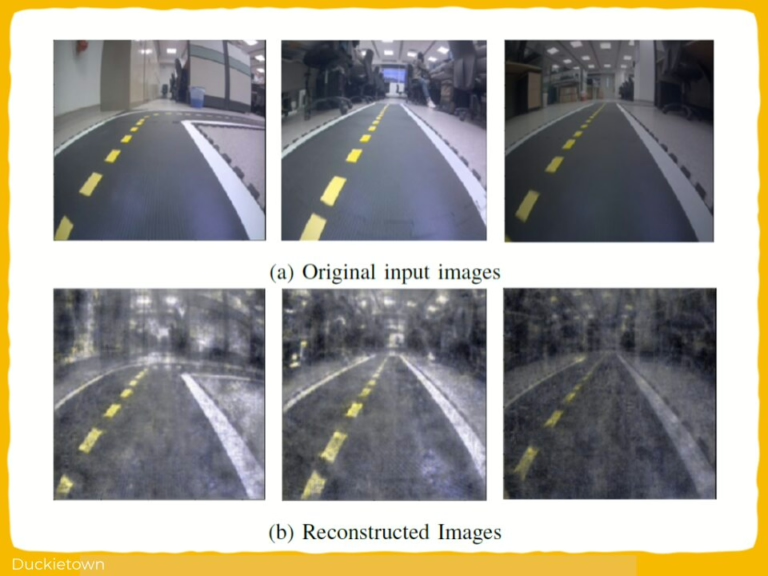

Variational Autoencoders (VAEs) are neural networks that compress input data into a smaller latent space (a compact set of features) and reconstruct the input from this compressed version.

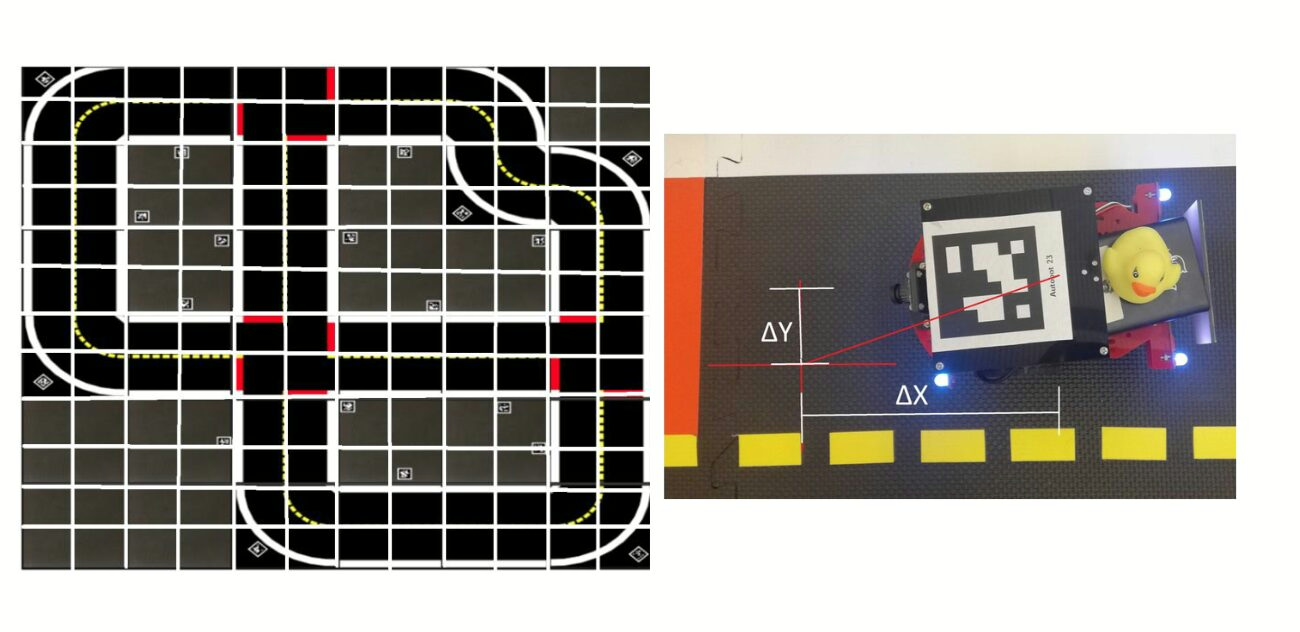

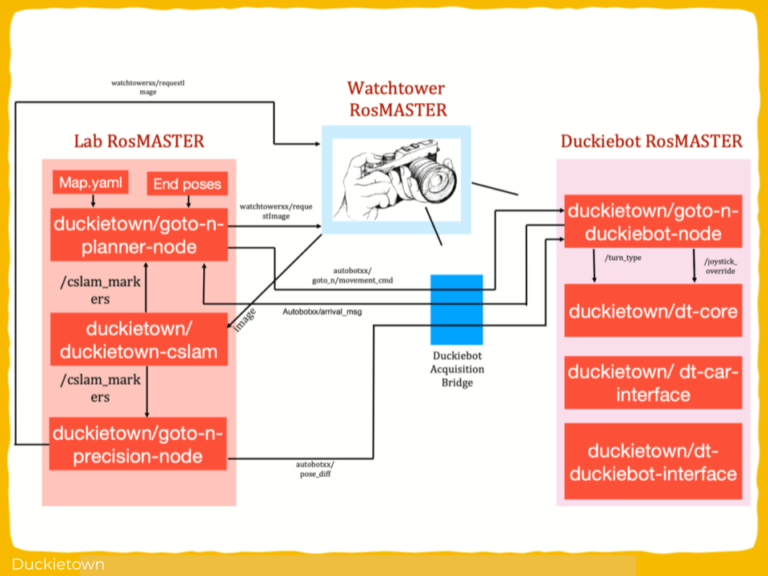

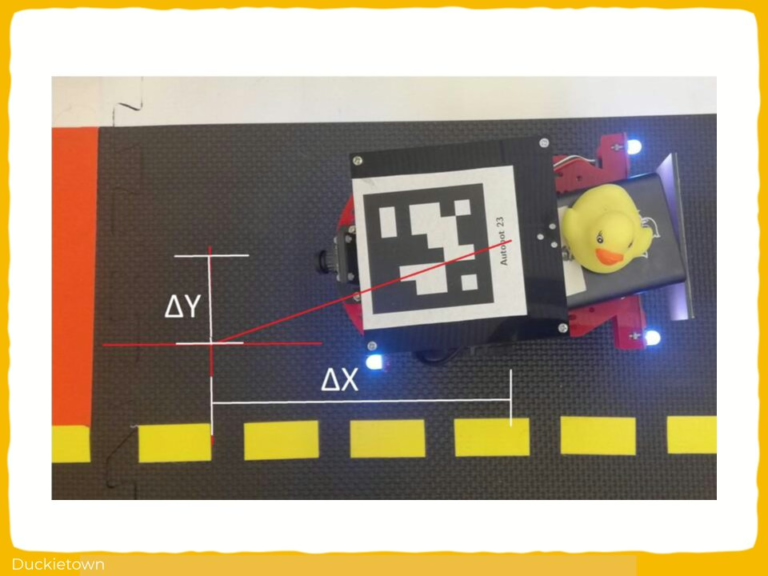

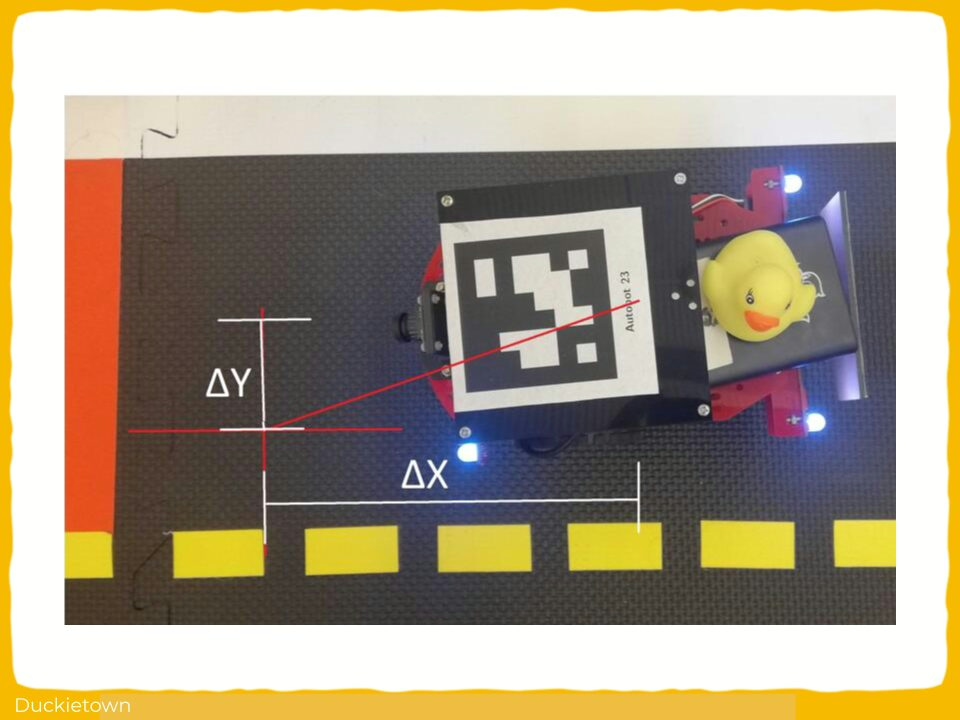

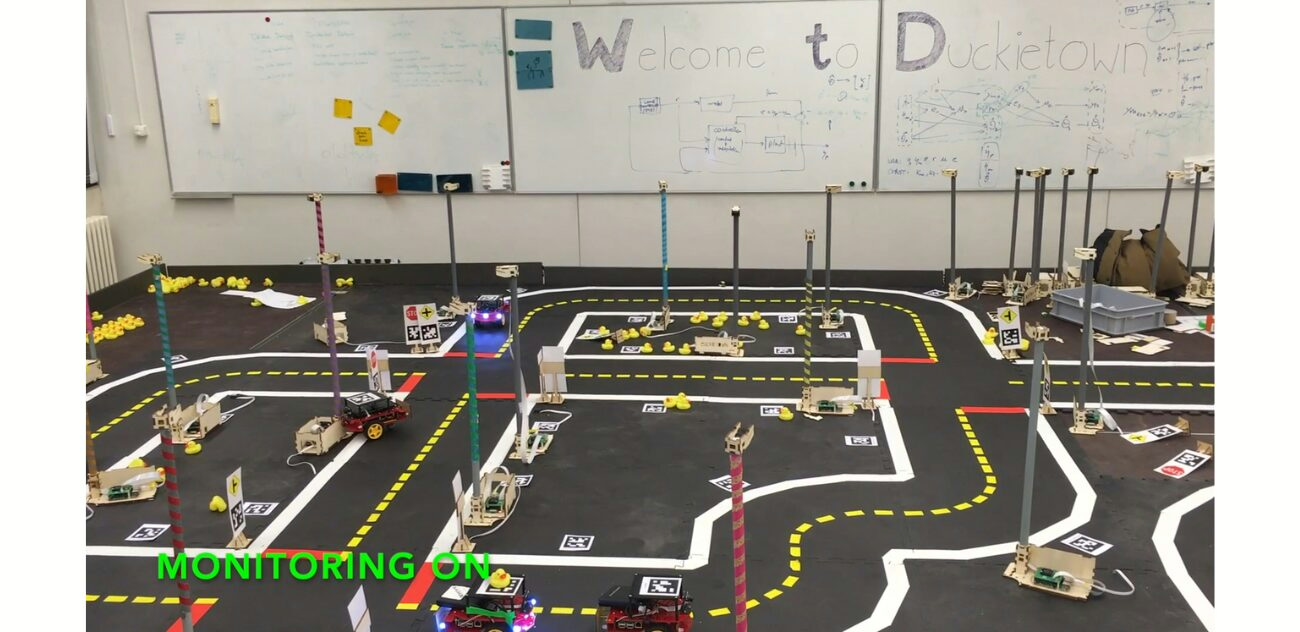

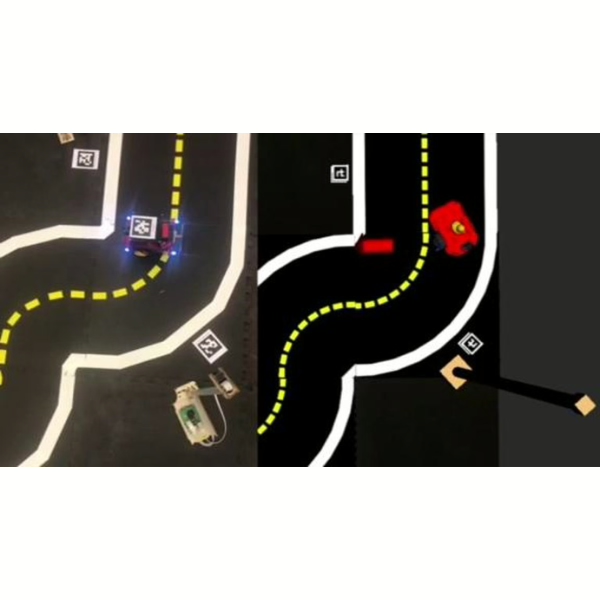

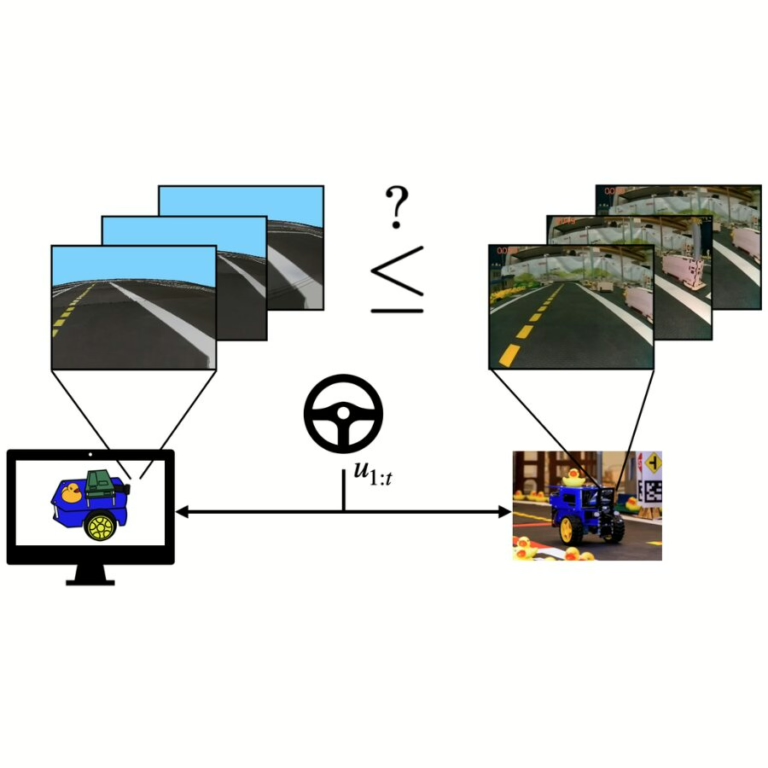

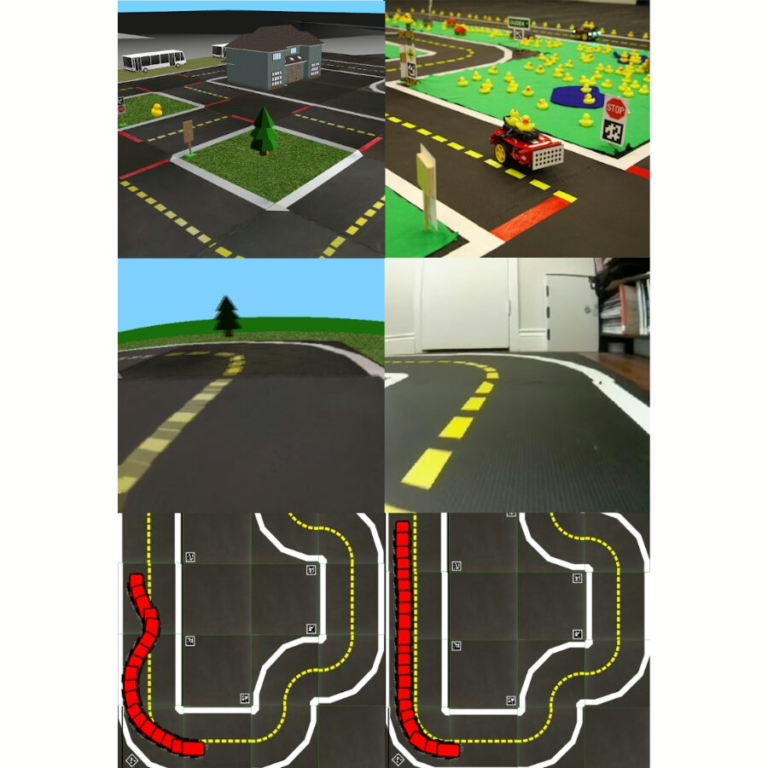

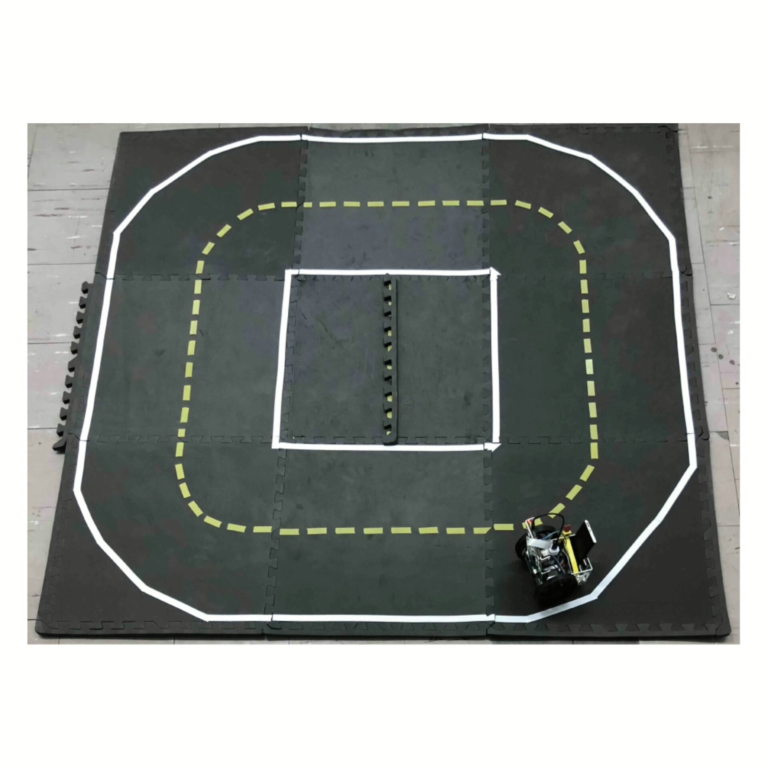

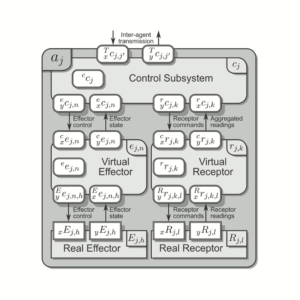

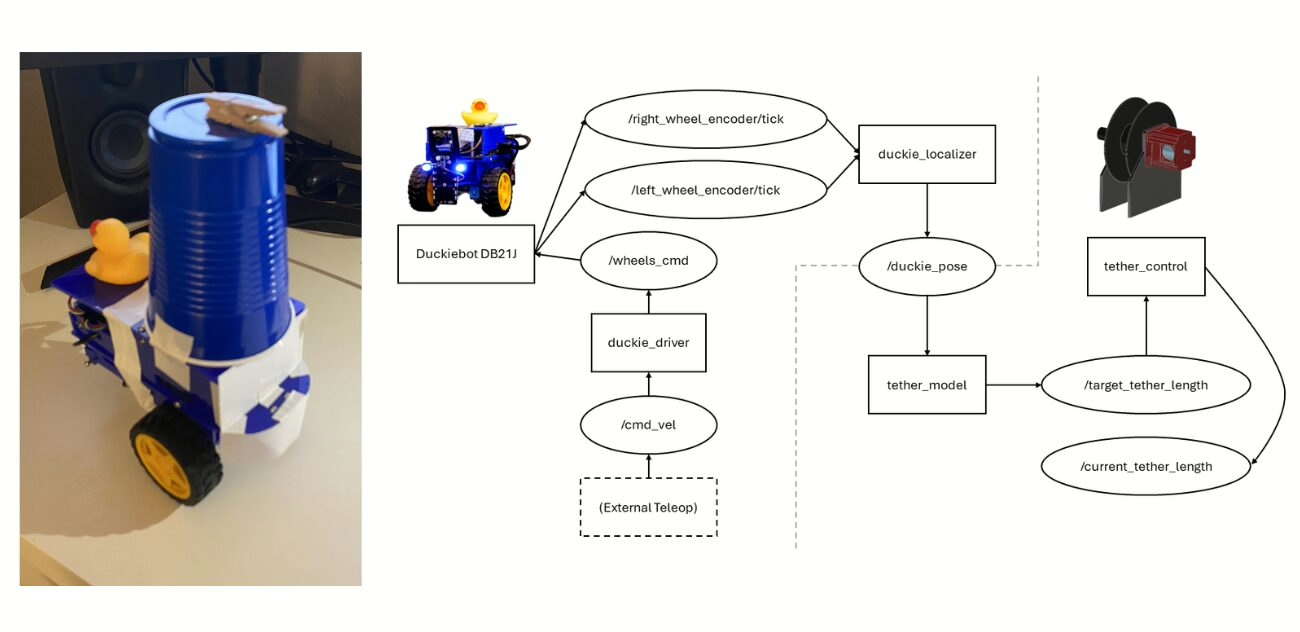

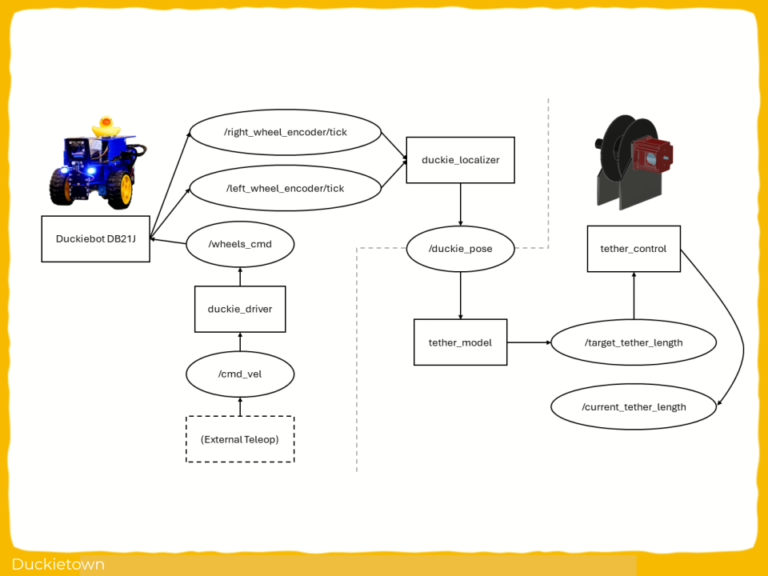

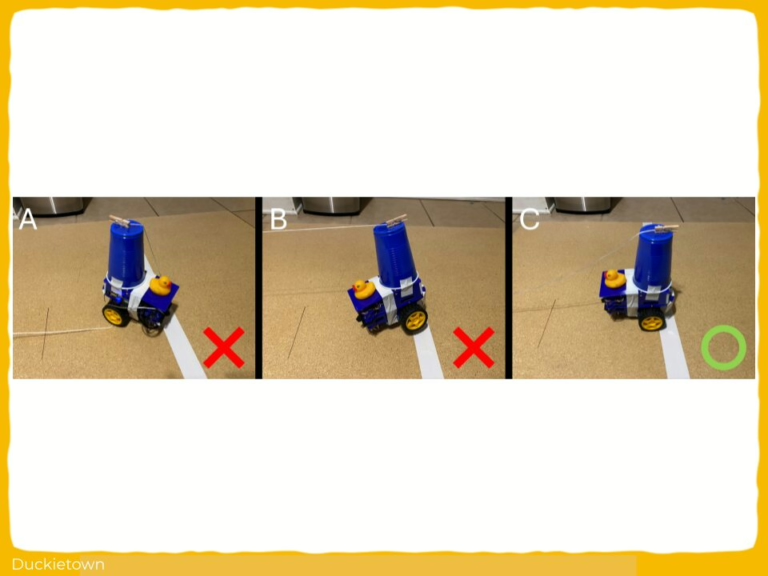

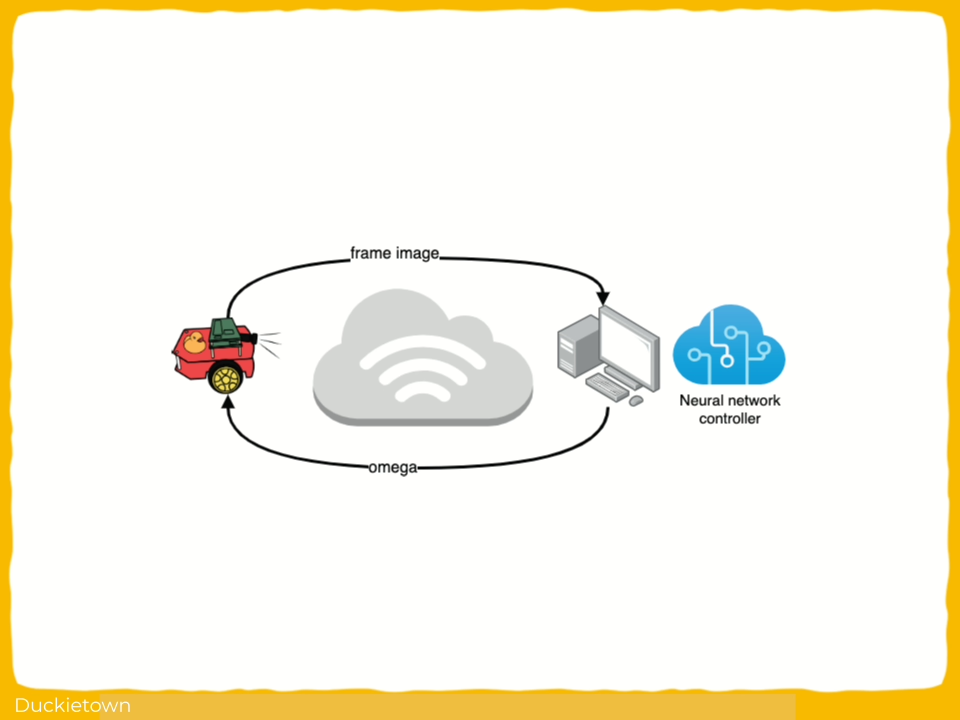

In OOD detection, if the reconstruction fails or the input's encoding doesn't fit the expected latent distribution, the input is flagged as unfamiliar, i.e., out-of-distribution. While VAEs are effective, they are computationally expensive, which makes them hard to deploy on small embedded devices like Duckiebots.
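A minimal sketch of latent-space OOD scoring follows. It assumes the encoder outputs a diagonal Gaussian (a mean and a log-variance per latent dimension) and uses the KL divergence from a standard normal prior as the score. The function names, example values, and threshold are hypothetical illustrations, not the detectors evaluated in the paper.

```python
import math

def kl_to_standard_normal(mu, logvar):
    """KL divergence between N(mu, diag(exp(logvar))) and N(0, I)."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0
                     for m, lv in zip(mu, logvar))

def is_ood(mu, logvar, threshold):
    """Flag an input as OOD when its latent code is far from the prior."""
    return kl_to_standard_normal(mu, logvar) > threshold

# Hypothetical encoder outputs (mean, log-variance) for two inputs.
familiar = ([0.1, -0.2, 0.05], [0.0, -0.1, 0.1])
unfamiliar = ([3.0, -2.5, 4.0], [-2.0, -1.5, -2.5])

THRESHOLD = 1.0  # assumed value; in practice tuned on validation data

print(is_ood(*familiar, THRESHOLD))    # False for this example
print(is_ood(*unfamiliar, THRESHOLD))  # True for this example
```

The score only needs the encoder half of the VAE, which is one reason a latent-space detector may tolerate compression better than its reconstruction loss would suggest.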

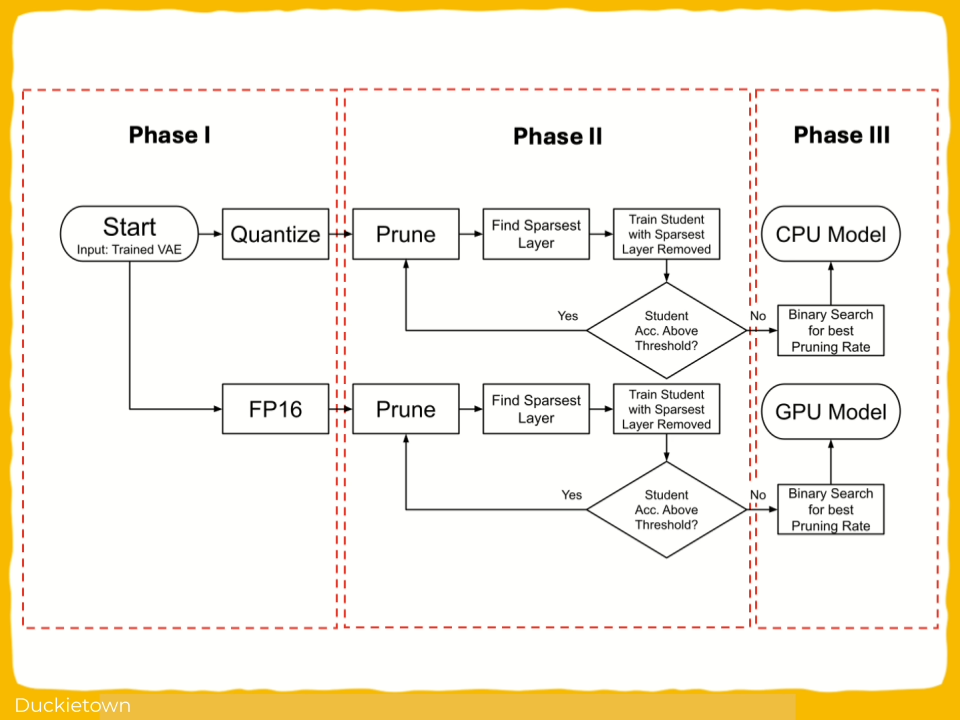

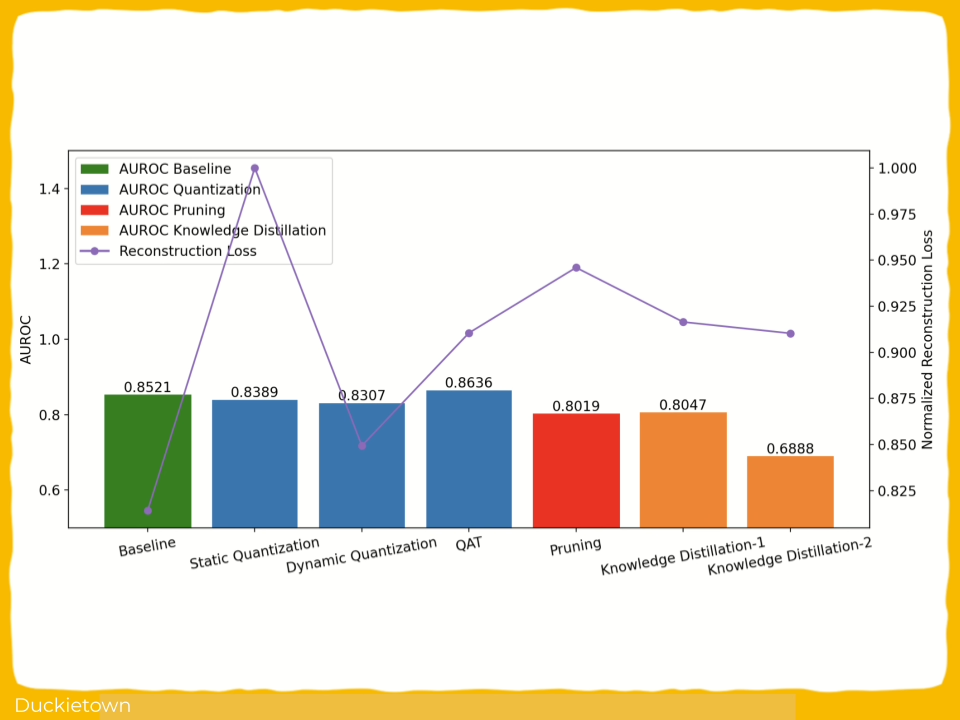

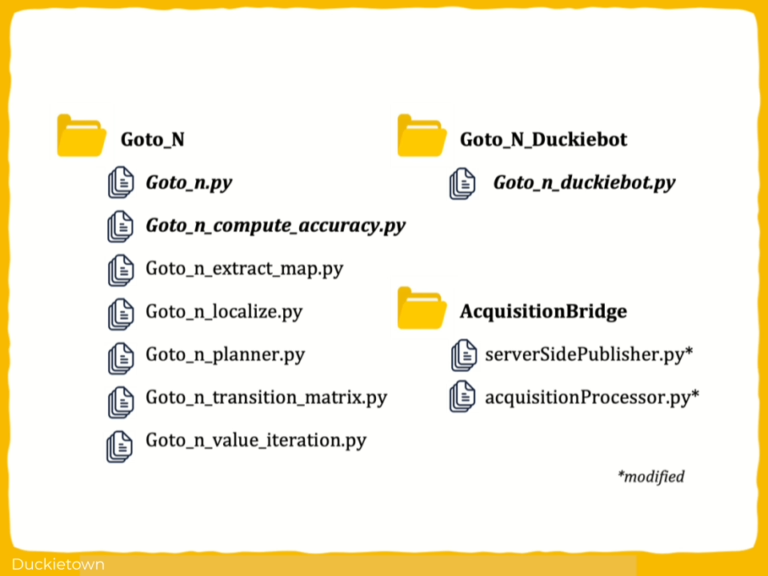

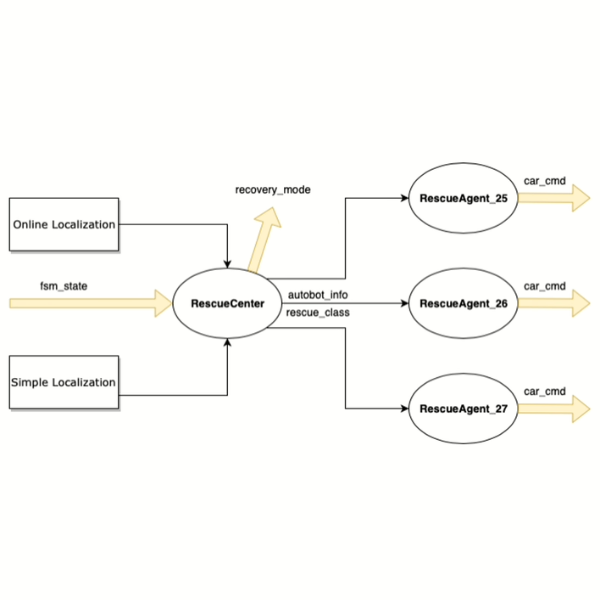

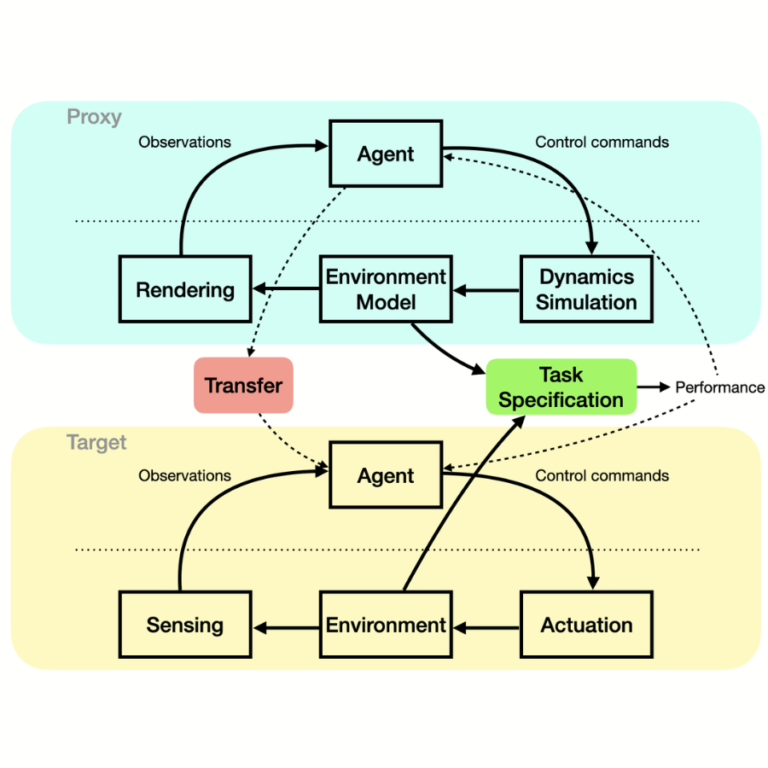

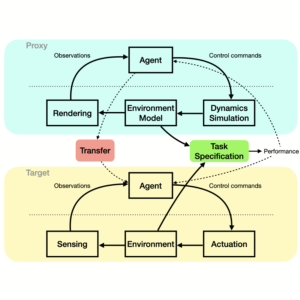

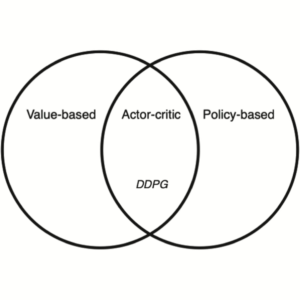

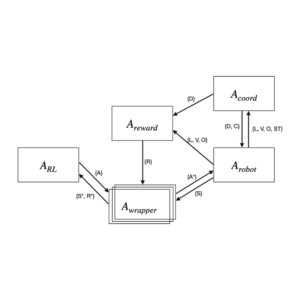

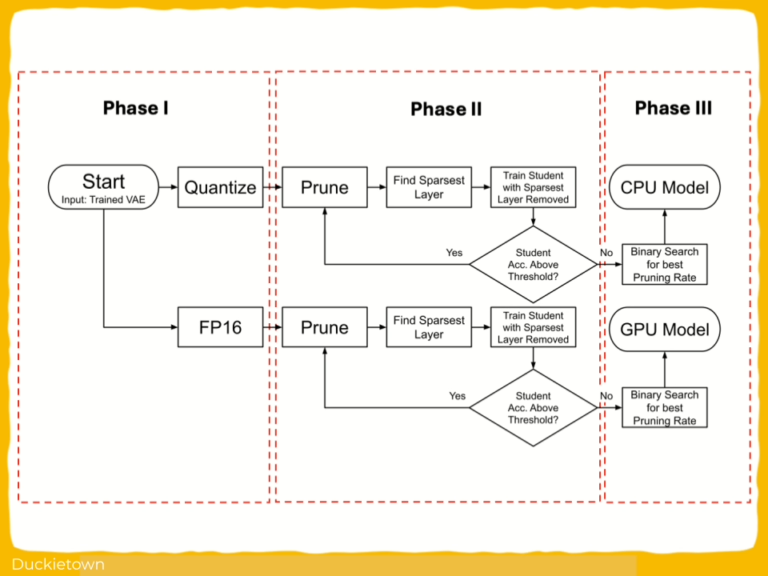

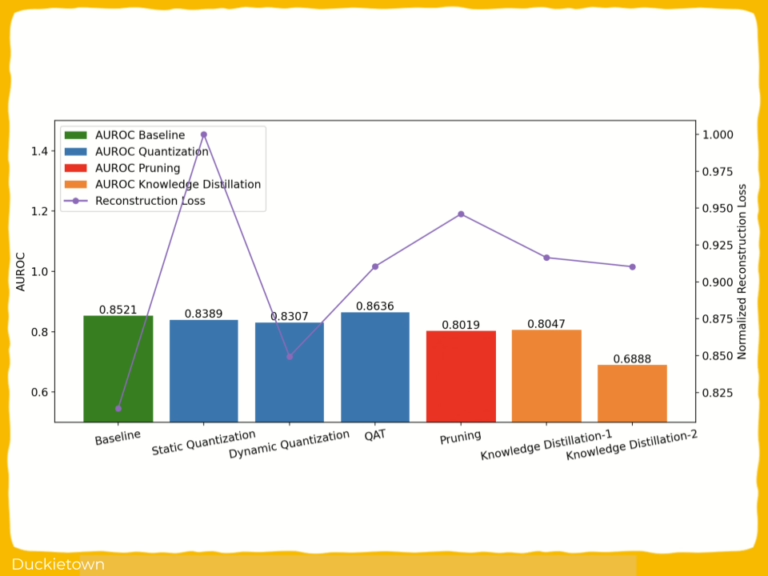

To solve this challenge, building upon previous work (Embedded Out-of-Distribution Detection on an Autonomous Robot Platform), the researchers applied three model compression techniques:

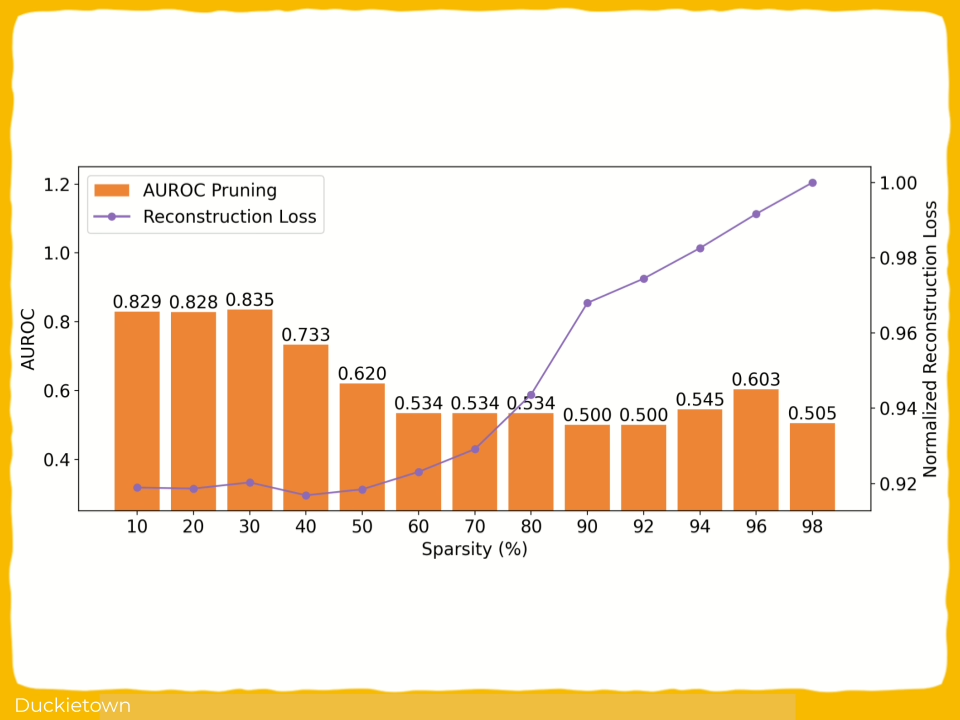

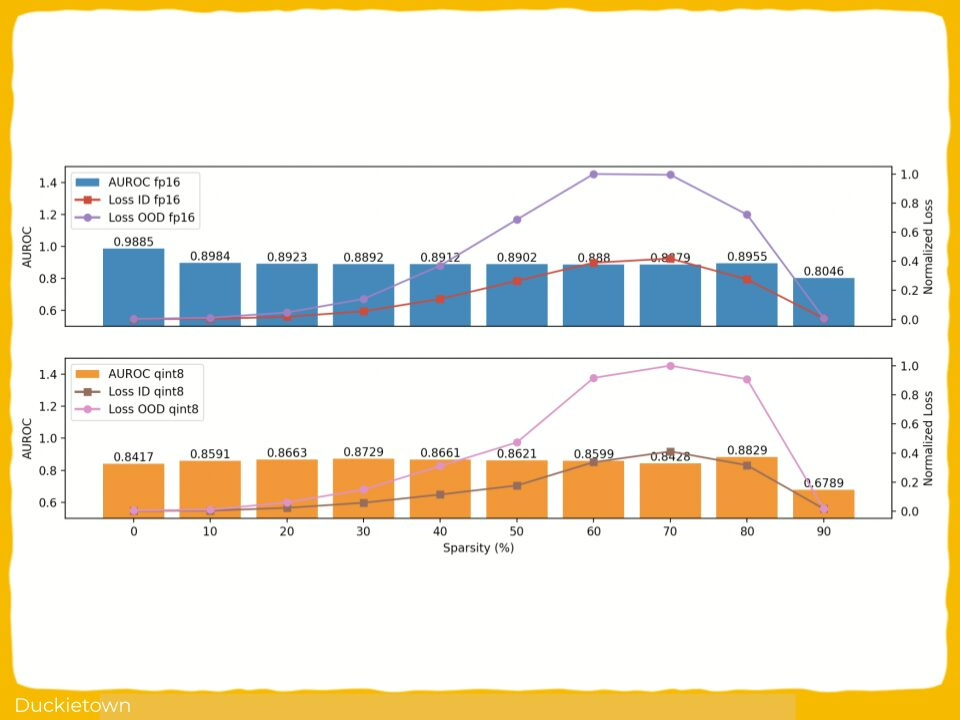

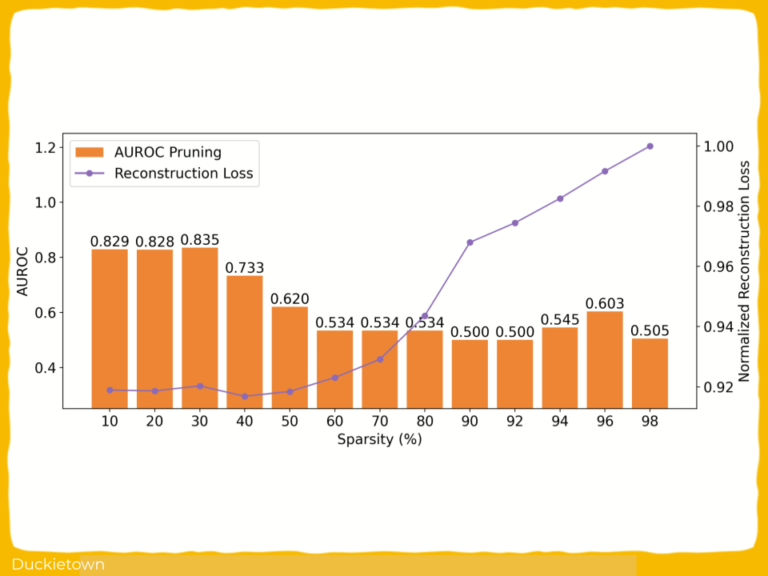

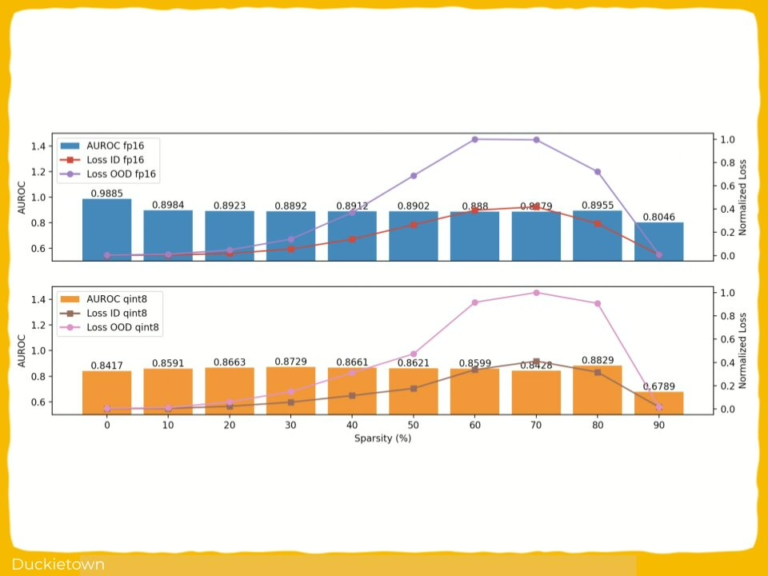

- Pruning: Removes low-importance weights or neurons to shrink and speed up the model.

- Knowledge distillation: Trains a smaller “student” model to mimic a larger “teacher” model.

- Quantization: Lowers numerical precision (e.g., from 32-bit to 8-bit) to save memory and improve speed.
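The three techniques above can be sketched on a flat list of weights. This is a simplified illustration in plain Python, assuming magnitude-based pruning, symmetric 8-bit quantization, and a mean-squared-error distillation loss; the paper's exact variants may differ, and all function names here are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

def quantize_int8(weights):
    """Symmetric linear quantization to the range [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map 8-bit integers back to approximate float weights."""
    return [v * scale for v in q]

def distillation_loss(student_out, teacher_out):
    """Mean squared error between student and teacher outputs."""
    n = len(teacher_out)
    return sum((s - t) ** 2 for s, t in zip(student_out, teacher_out)) / n

# Hypothetical weights for one small layer.
weights = [0.5, -0.01, 0.2, 0.03, -0.9, 0.002]
pruned = magnitude_prune(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)

print(pruned)  # [0.5, 0.0, 0.2, 0.0, -0.9, 0.0]
print(q)       # [71, 0, 28, 0, -127, 0]
```

Each restored weight differs from its pruned value by at most half a quantization step (`scale / 2`), which bounds the rounding error that quantization introduces.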

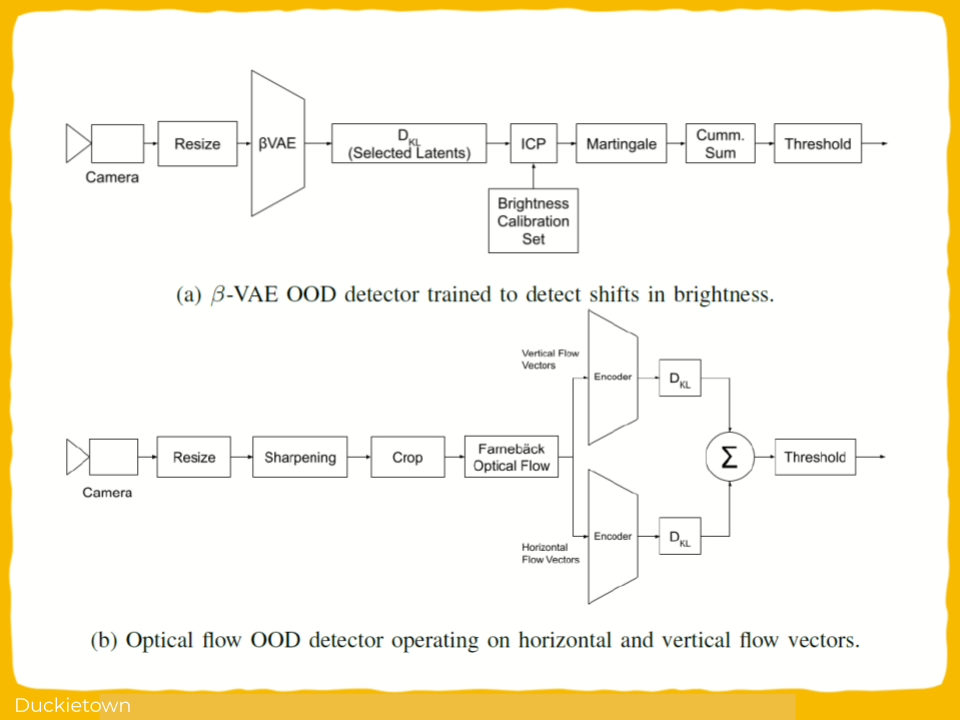

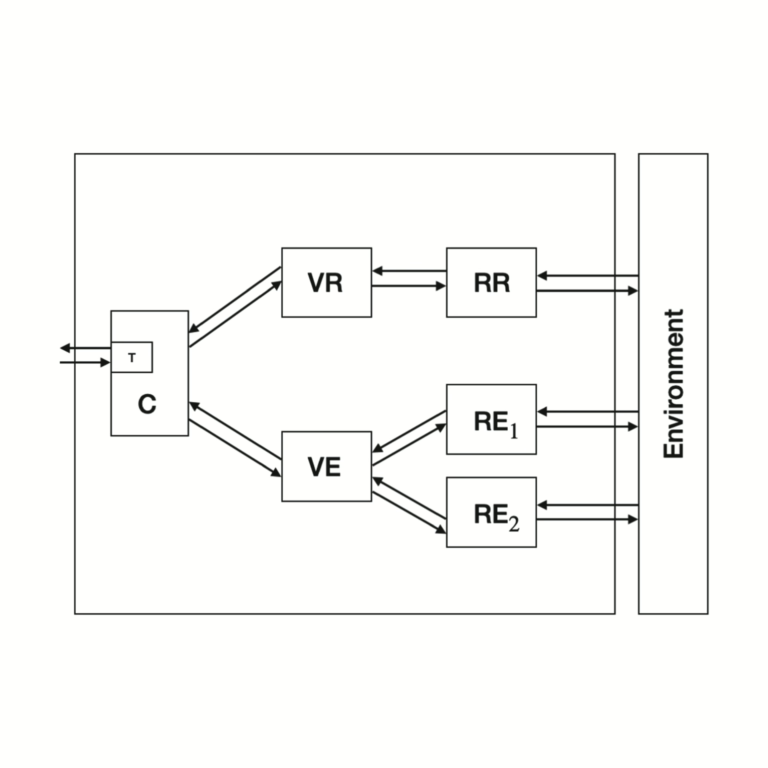

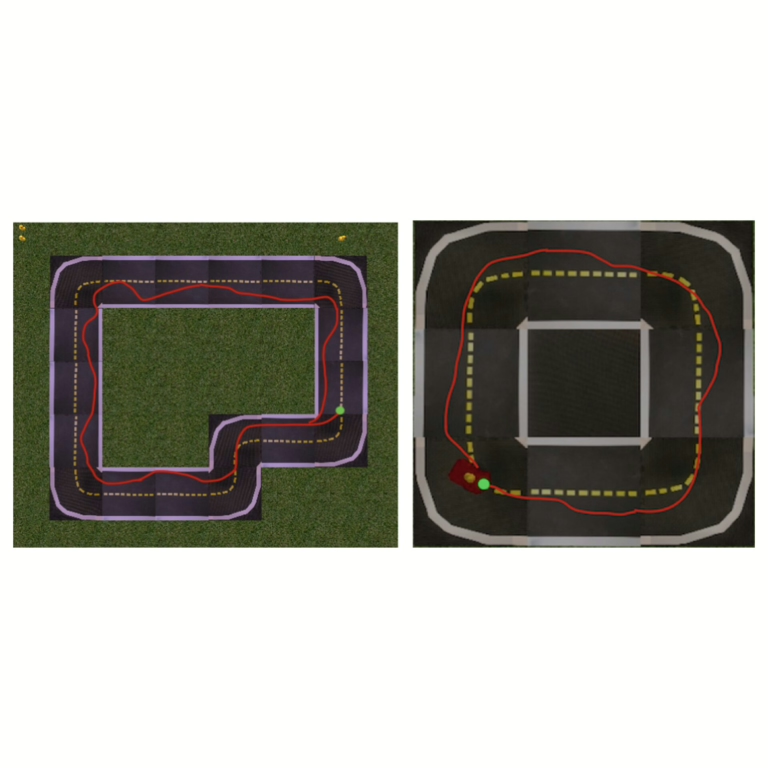

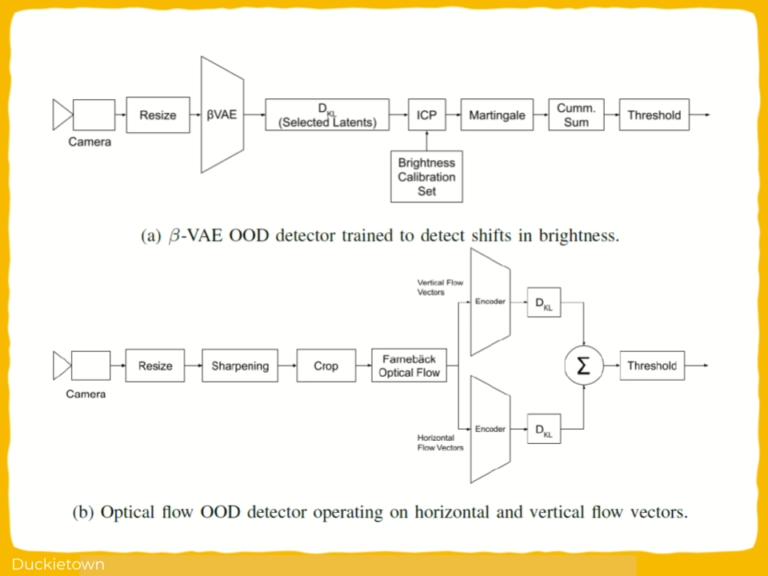

Two VAE-based OOD detectors were evaluated:

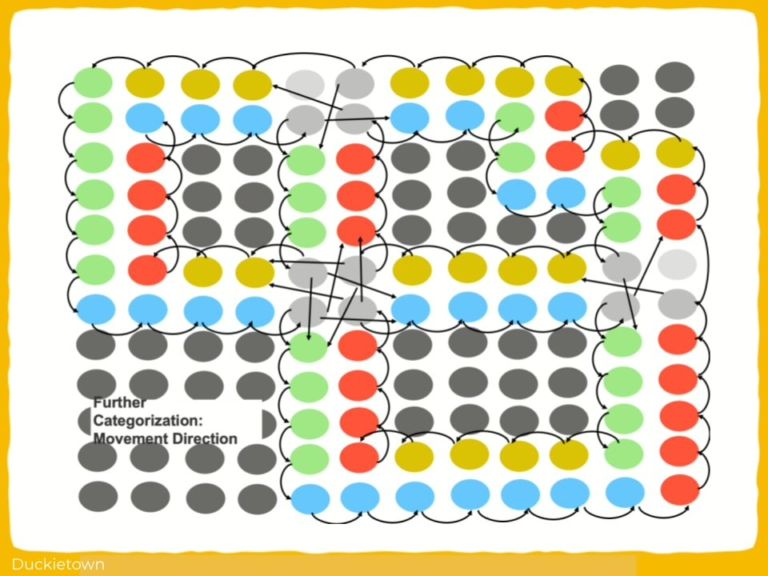

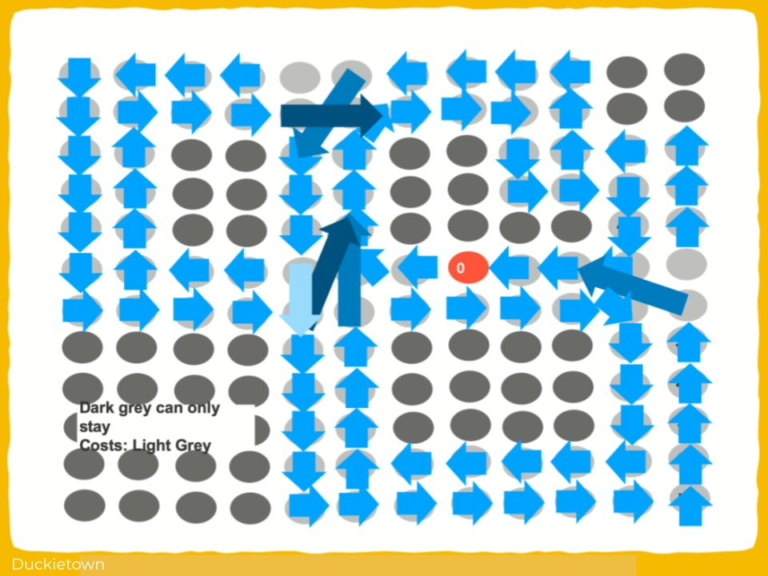

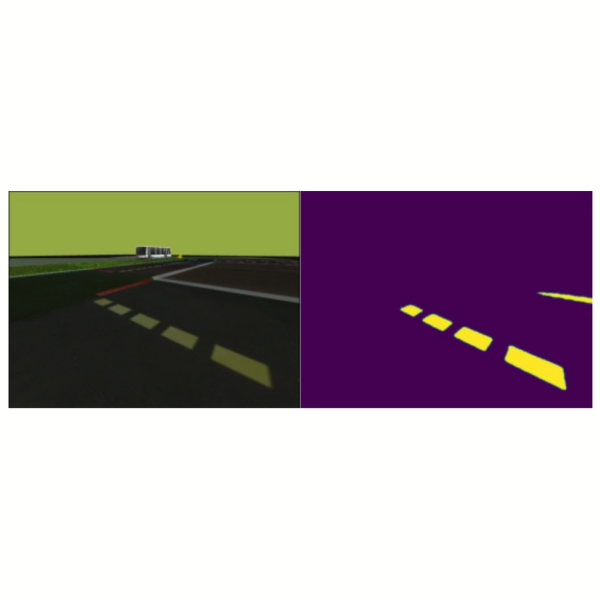

- β-VAE: A variant of VAE that learns more interpretable latent features (controlled by a parameter called β).

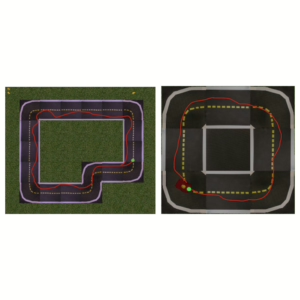

- Optical Flow Detector: Analyzes how pixels move across video frames to detect unusual motion.
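For the β-VAE, the role of β can be illustrated with the standard training objective: a reconstruction term plus a KL term weighted by β. The sketch below assumes a squared-error reconstruction term and a diagonal Gaussian encoder; the function name and the example values are hypothetical.

```python
import math

def beta_vae_loss(x, x_recon, mu, logvar, beta):
    """Reconstruction error plus beta-weighted KL term (lower is better)."""
    recon = sum((a - b) ** 2 for a, b in zip(x, x_recon))
    kl = 0.5 * sum(m * m + math.exp(lv) - lv - 1.0
                   for m, lv in zip(mu, logvar))
    return recon + beta * kl

# Hypothetical input, reconstruction, and encoder outputs.
x, x_recon = [1.0, 0.0], [0.5, 0.0]
mu, logvar = [1.0, 0.0], [0.0, 0.0]

print(beta_vae_loss(x, x_recon, mu, logvar, beta=1.0))  # 0.75 (plain VAE)
print(beta_vae_loss(x, x_recon, mu, logvar, beta=4.0))  # 2.25
```

With β greater than 1, the same latent code is penalized more heavily for deviating from the prior, which pushes the model toward more structured latent features at some cost in reconstruction quality.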

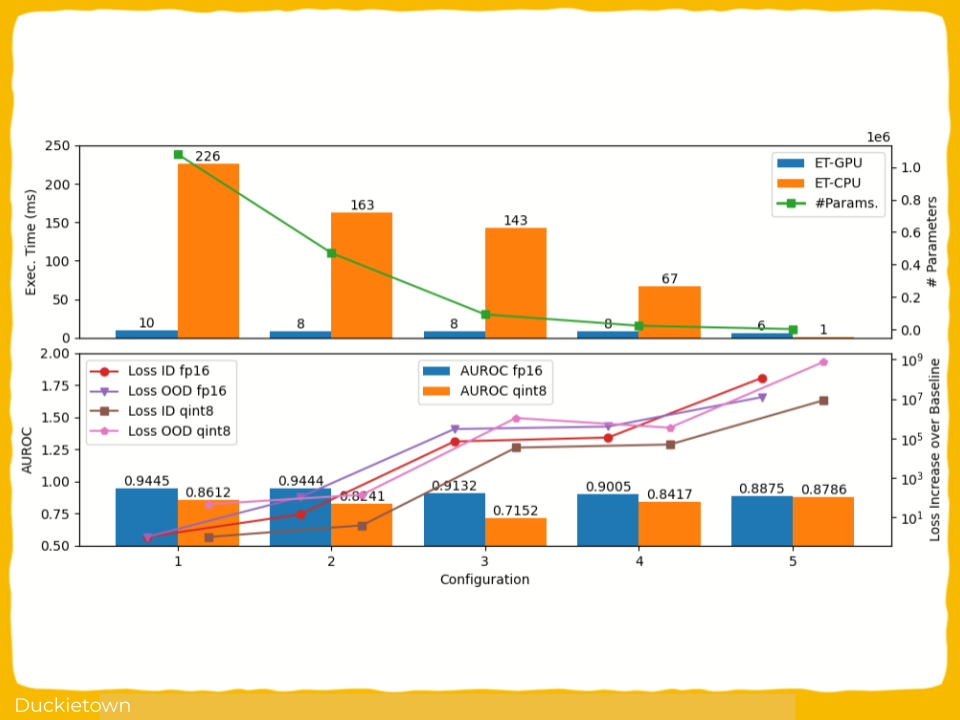

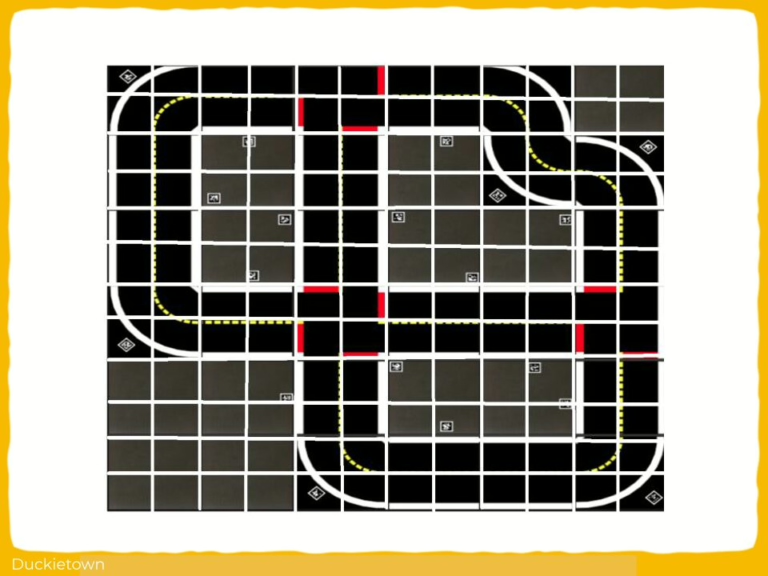

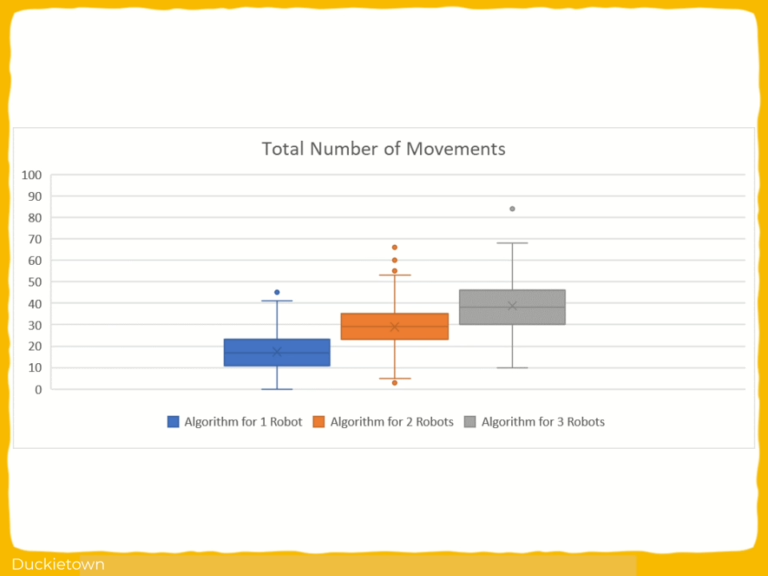

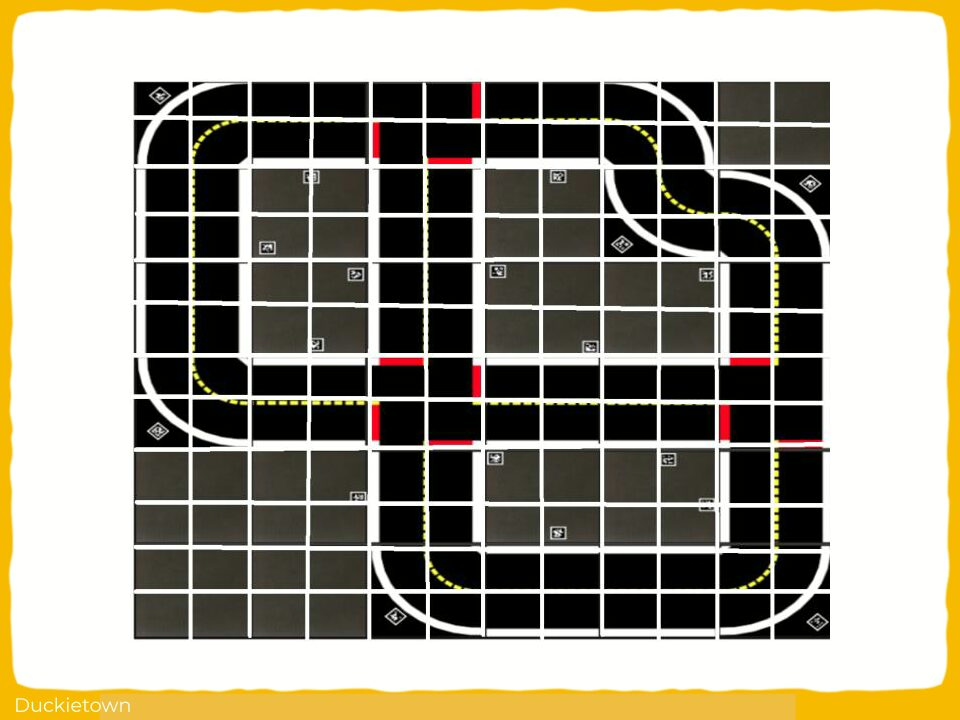

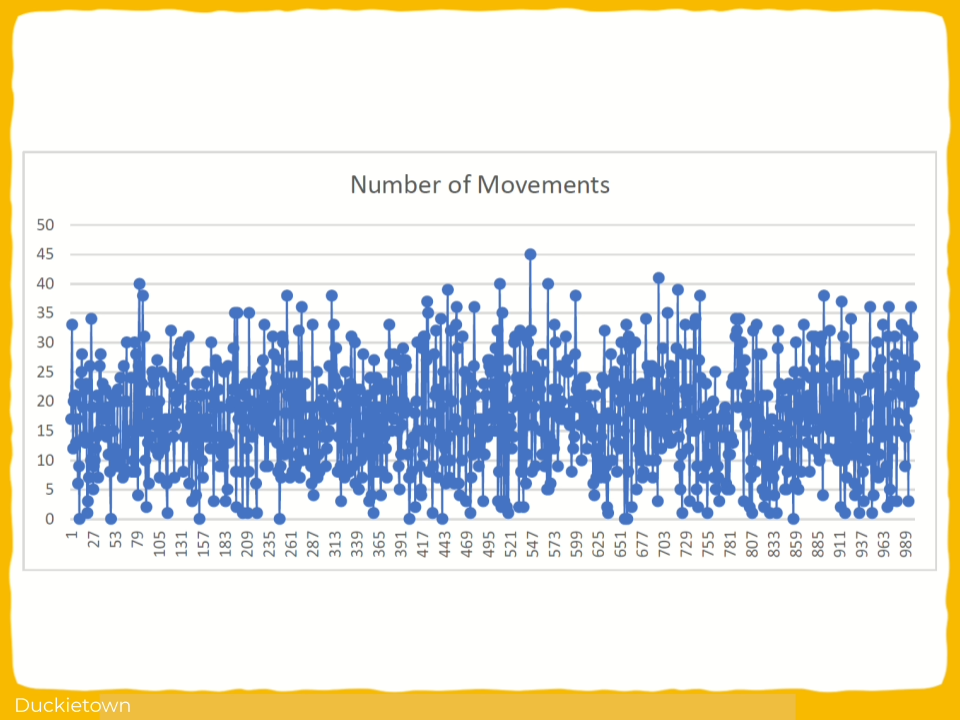

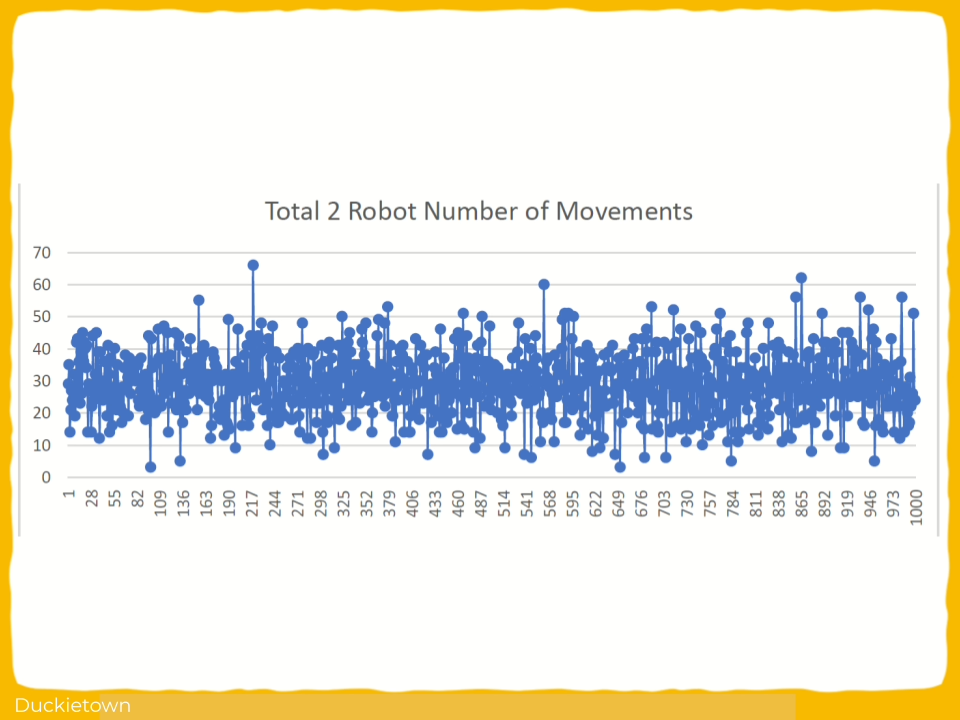

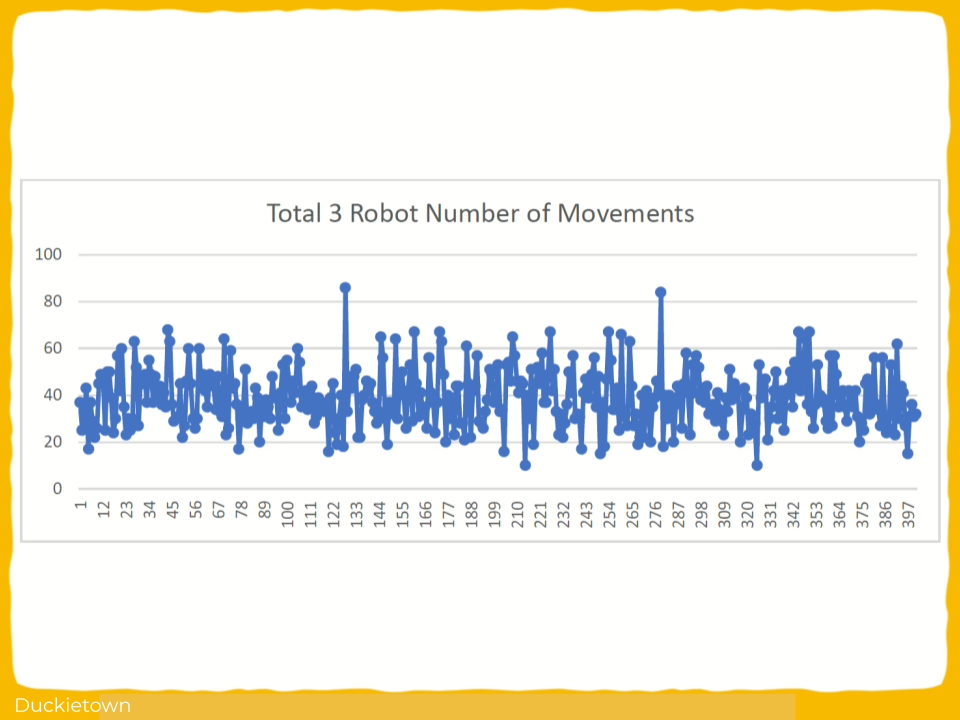

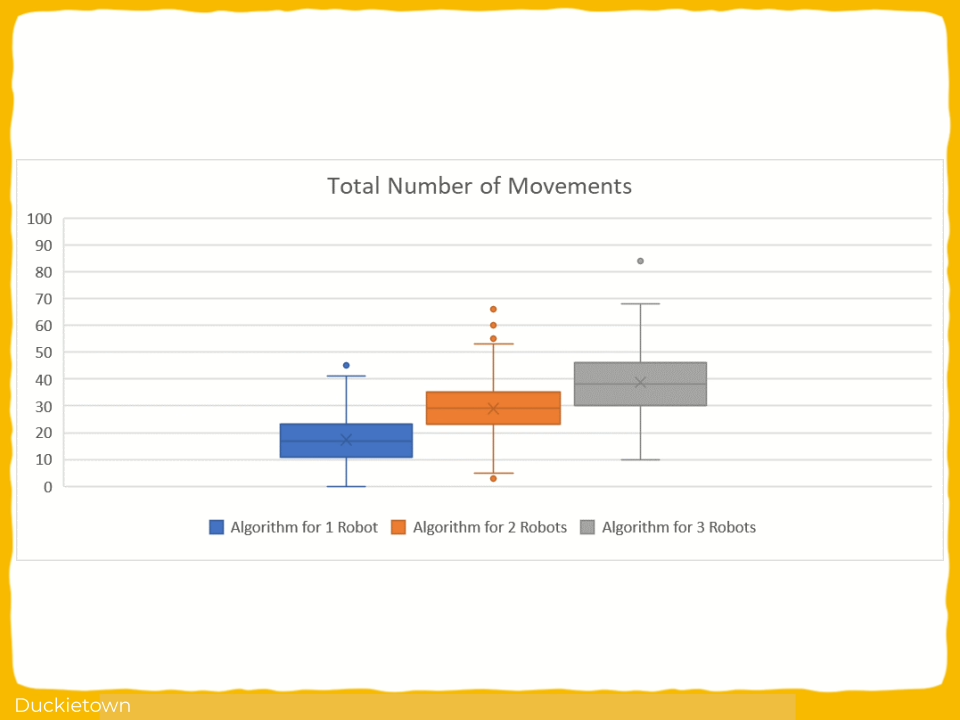

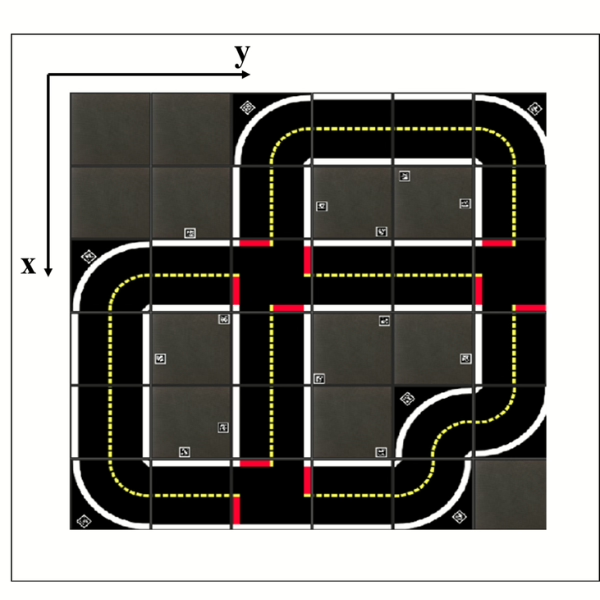

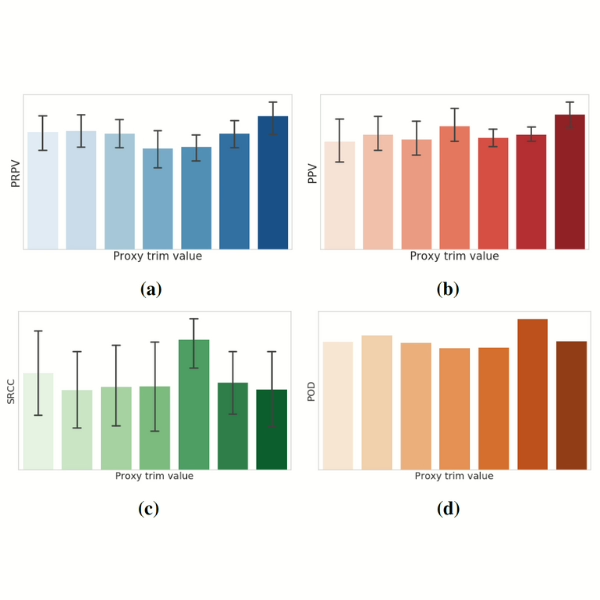

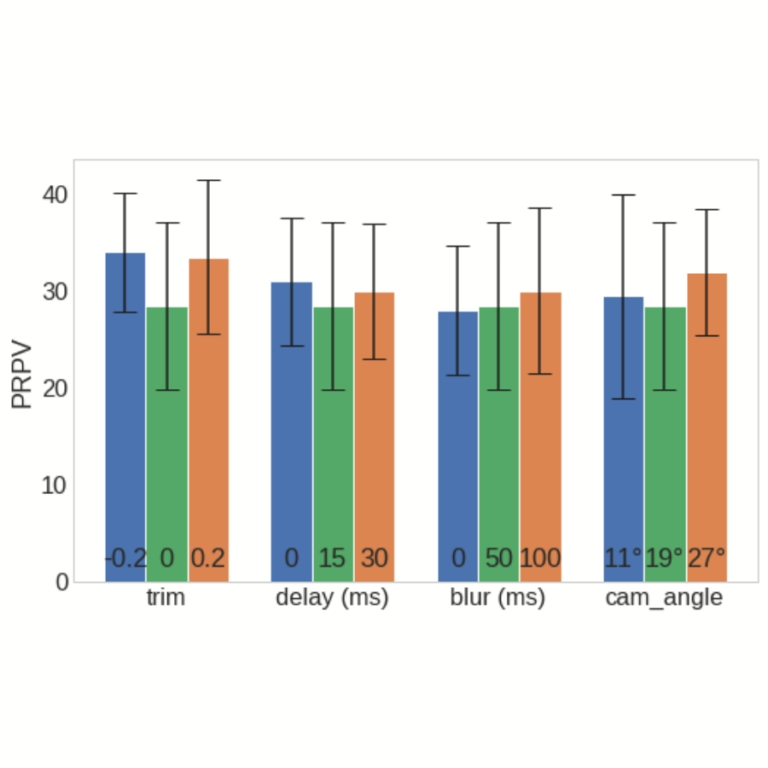

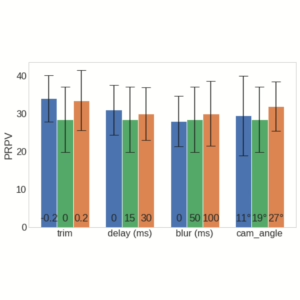

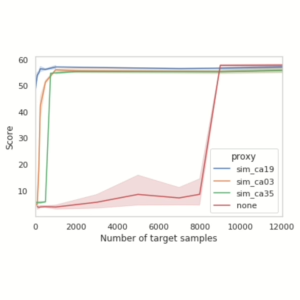

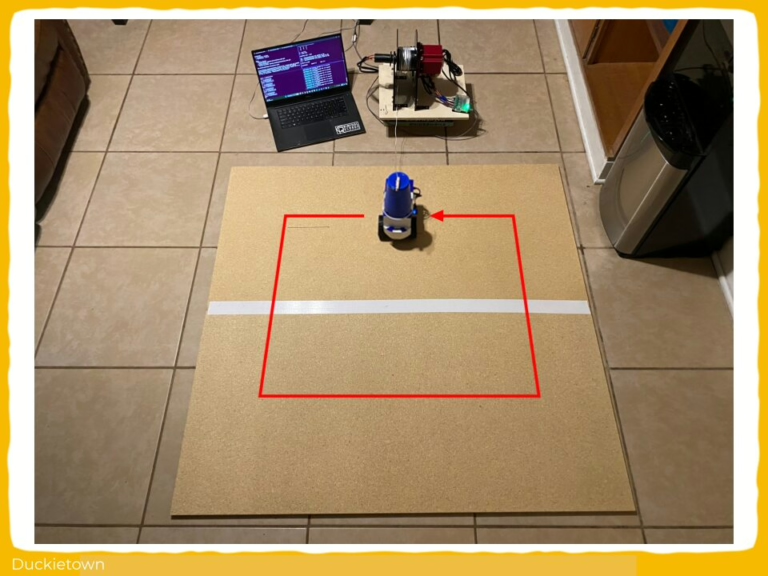

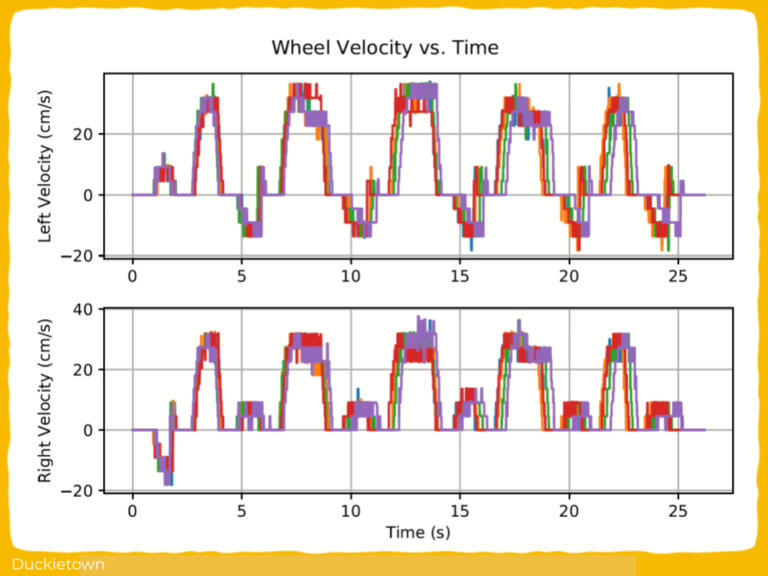

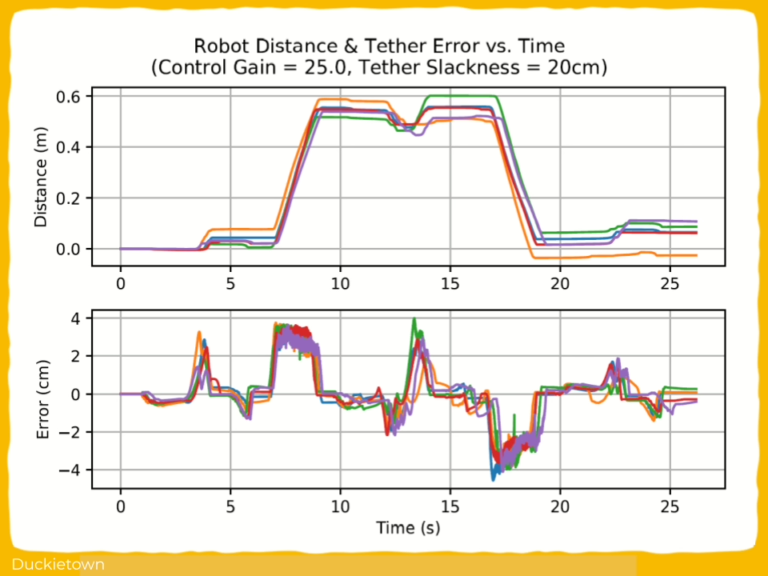

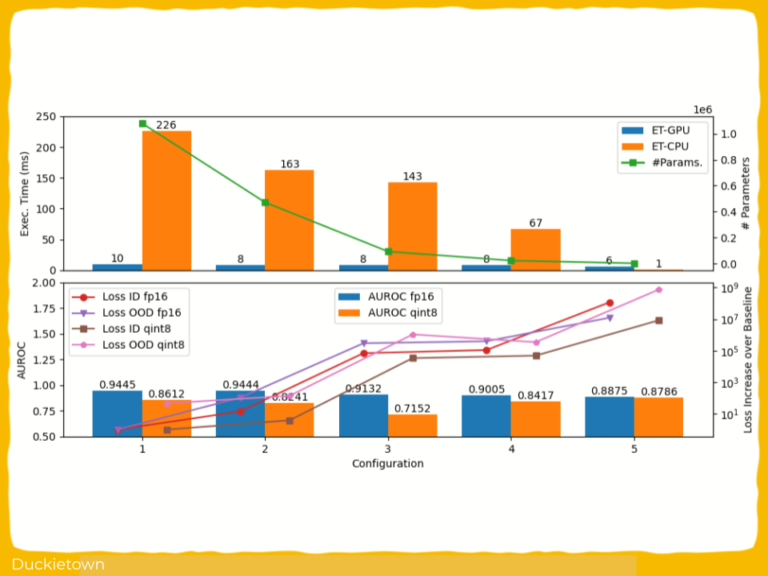

Both models were trained and tested on data collected in Duckietown. They were evaluated on three metrics: Area Under the Receiver Operating Characteristic curve (AUROC), which shows how well a model separates known from unknown inputs; memory footprint; and execution latency. The compressed models achieved faster inference and smaller memory usage, with only minor drops in detection accuracy.
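AUROC can be read as the probability that a randomly chosen OOD sample receives a higher score than a randomly chosen in-distribution sample. The sketch below computes it by direct pairwise comparison; the function name and the scores are made-up illustrations, not data from the paper.

```python
def auroc(scores_in, scores_ood):
    """Fraction of (OOD, in-distribution) pairs ranked correctly; ties count half."""
    wins = 0.0
    for s_ood in scores_ood:
        for s_in in scores_in:
            if s_ood > s_in:
                wins += 1.0
            elif s_ood == s_in:
                wins += 0.5
    return wins / (len(scores_in) * len(scores_ood))

# Hypothetical detector scores (higher means "more likely OOD").
scores_in = [0.1, 0.3, 0.2, 0.8]
scores_ood = [0.9, 0.7, 0.4]

print(round(auroc(scores_in, scores_ood), 3))  # 0.833
```

A value of 1.0 means perfect separation, and 0.5 means the detector does no better than chance. The pairwise loop is quadratic in the number of samples, so a rank-based implementation is preferable for large test sets.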

Highlights - VAE-Based Out-of-Distribution Detectors for Embedded Systems

Here is a visual tour of the authors' work. For all the details, check out the full paper.

Abstract

In the authors' words:

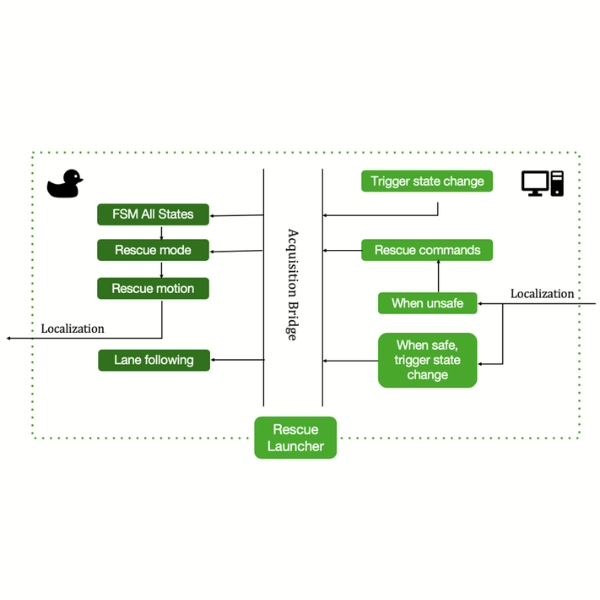

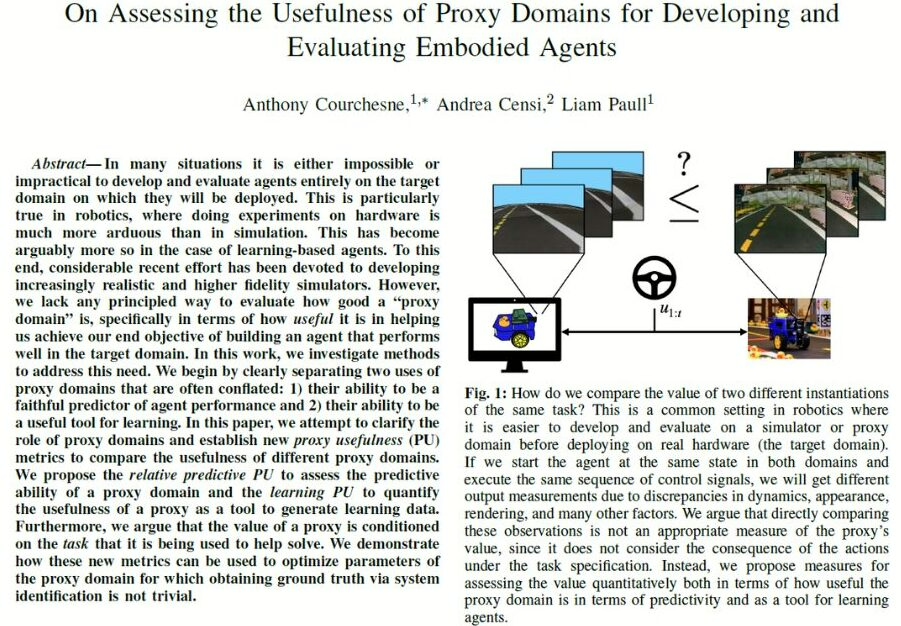

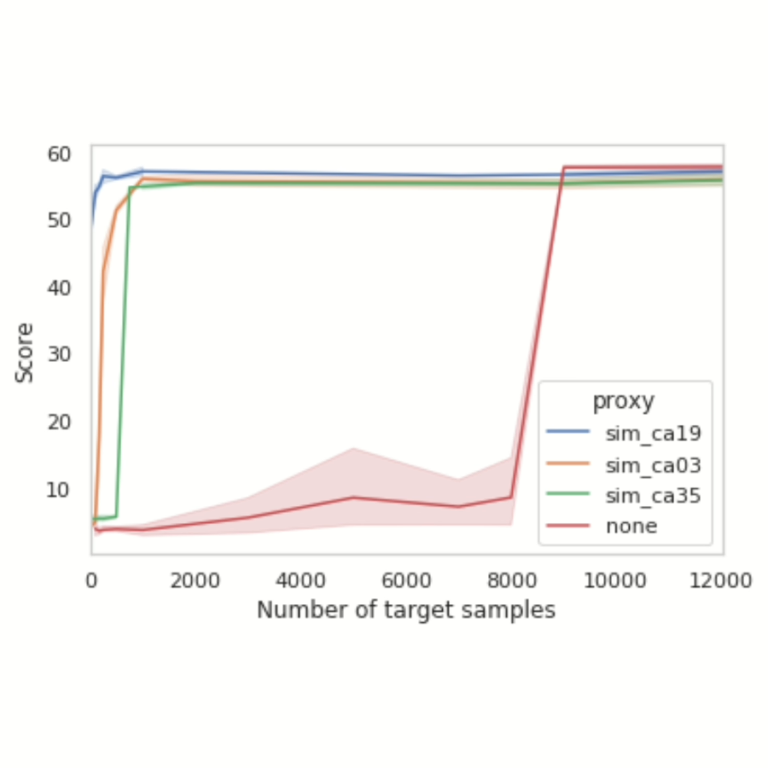

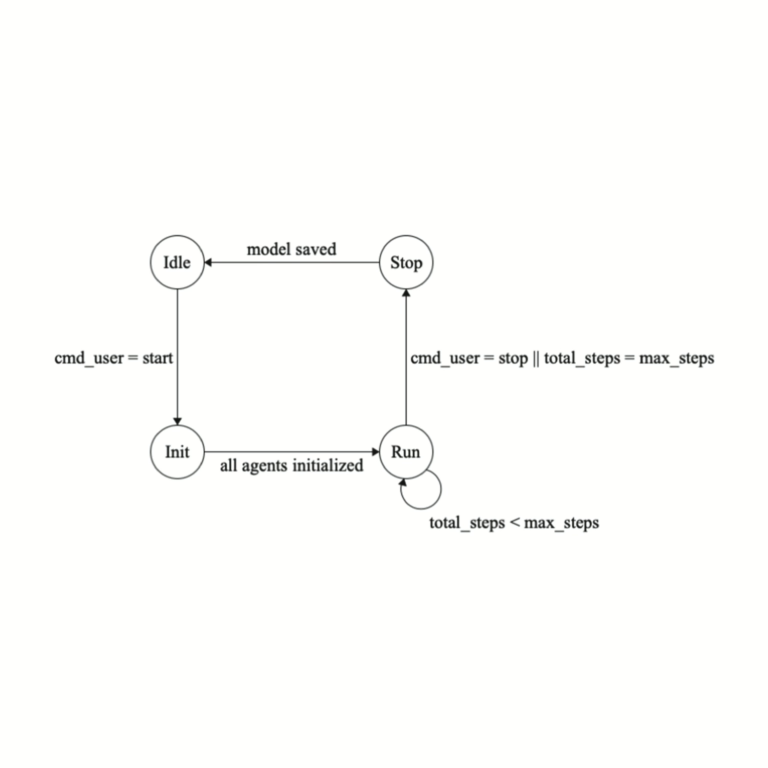

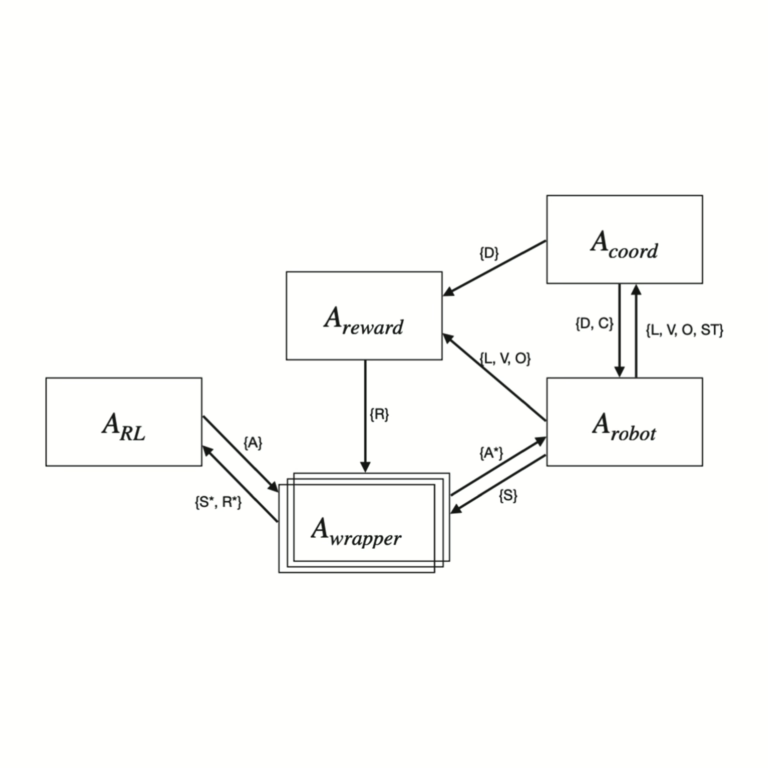

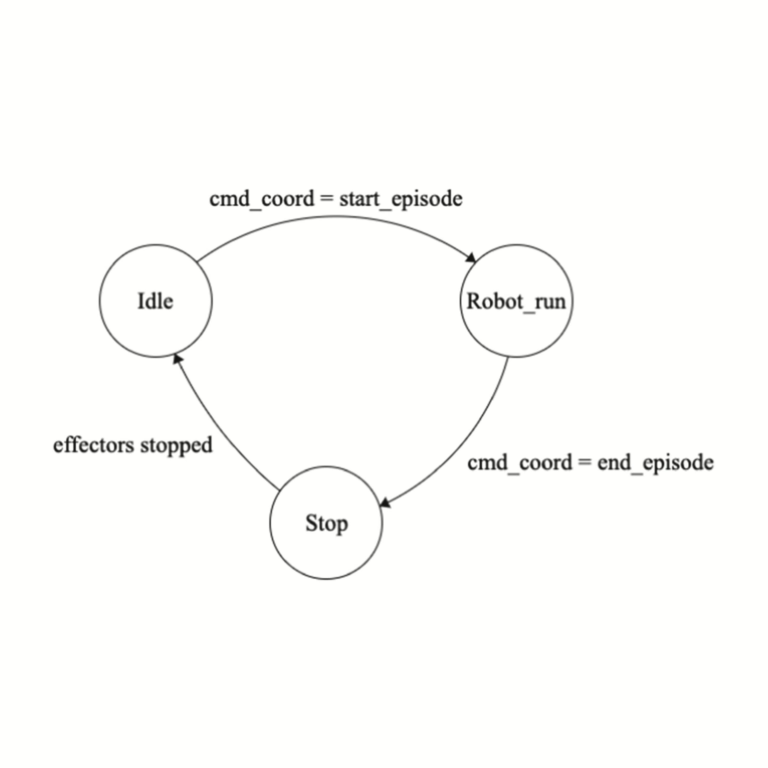

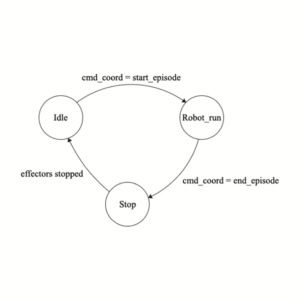

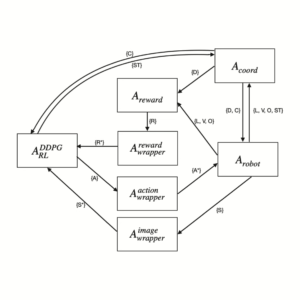

Out-of-distribution (OOD) detectors can act as safety monitors in embedded cyber-physical systems by identifying samples outside a machine learning model’s training distribution to prevent potentially unsafe actions. However, OOD detectors are often implemented using deep neural networks, which makes it difficult to meet real-time deadlines on embedded systems with memory and power constraints. We consider the class of variational autoencoder (VAE) based OOD detectors where OOD detection is performed in latent space, and apply quantization, pruning, and knowledge distillation.

These techniques have been explored for other deep models, but no work has considered their combined effect on latent space OOD detection. While these techniques increase the VAE’s test loss, this does not correspond to a proportional decrease in OOD detection performance and we leverage this to develop lean OOD detectors capable of real-time inference on embedded CPUs and GPUs. We propose a design methodology that combines all three compression techniques and yields a significant decrease in memory and execution time while maintaining AUROC for a given OOD detector.

We demonstrate this methodology with two existing OOD detectors on a Jetson Nano and reduce GPU and CPU inference time by 20% and 28% respectively while keeping AUROC within 5% of the baseline.

Conclusion - VAE-Based Out-of-Distribution Detectors for Embedded Systems

Here are the conclusions from the authors of this paper:

We explored different neural network compression techniques on β-VAE and optical flow OOD detectors using a mobile robot powered by a Jetson Nano. Based on our analysis of results for quantization, knowledge distillation, and pruning, we proposed a design strategy to find the model with the best execution time and memory usage while maintaining some accuracy metric for a given VAE-based OOD detector. We successfully demonstrated this methodology on an optical flow OOD detector and showed that our methodology’s ability to aggressively prune and compress a model is due to the unique attributes of VAE-based OOD detection.

Despite our methodology’s good performance, it requires access to OOD samples at design time to act as a cross-validation set. In our case study, we assume OOD samples arise from a particular generating distribution, but this may not be the case in general. Furthermore, it only guides the search for a faster architecture, but does not guarantee the optimum result. Nevertheless, we believe having a design methodology that combines quantization, knowledge distillation, and pruning allows engineers to exploit the combined powers of these techniques instead of considering them individually.
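The selection step implied by the conclusions above (best execution time and memory while maintaining an accuracy metric) can be sketched as a constrained choice among candidate models. This is not the authors' search procedure: the function name, the relative interpretation of the AUROC tolerance, and every number in the candidate list are placeholder assumptions for illustration only.

```python
def pick_model(candidates, baseline_auroc, max_drop=0.05):
    """Return the fastest candidate whose AUROC stays within a relative
    `max_drop` of the baseline, breaking latency ties by memory usage."""
    floor = baseline_auroc * (1.0 - max_drop)
    acceptable = [c for c in candidates if c["auroc"] >= floor]
    if not acceptable:
        return None
    return min(acceptable, key=lambda c: (c["latency_ms"], c["memory_mb"]))

# Placeholder candidates; these are NOT measurements from the paper.
candidates = [
    {"name": "baseline", "auroc": 0.90, "latency_ms": 50.0, "memory_mb": 40.0},
    {"name": "quantized", "auroc": 0.89, "latency_ms": 42.0, "memory_mb": 12.0},
    {"name": "pruned+quantized", "auroc": 0.87, "latency_ms": 35.0, "memory_mb": 8.0},
    {"name": "over-pruned", "auroc": 0.80, "latency_ms": 20.0, "memory_mb": 5.0},
]

best = pick_model(candidates, baseline_auroc=0.90)
print(best["name"])  # pruned+quantized
```

The over-pruned candidate is the fastest but falls below the AUROC floor, so it is rejected. As the authors note, measuring AUROC for each candidate requires OOD samples at design time.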

Project Authors

Aditya Bansal is currently working as a Machine Learning Engineer at Adobe, United States.

Michael Yuhas is currently working as a Research Assistant at Nanyang Technological University, Singapore.

Arvind Easwaran is an Associate Professor at Nanyang Technological University, Singapore.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.