Author: Jacopo Tani

AI Driving Olympics 2021: Urban League Finalists


The challenges are lane following (LF), lane following with vehicles (LFV), and lane following with intersections (LFI).

To account for differences between the real world and simulation, finalists in this edition can make one additional submission to the real challenges to improve their scores.

Finalists are the authors of AI-DO 2021 submissions in the top 5 ranks for each challenge.

This year’s finalists are:

LF

- András Kalapos

- Bence Haromi

- Sampsa Ranta

- ETU-JBR Team

- Giulio Vaccari

LFV

- Sampsa Ranta

- Adrian Brucker

- Andras Beres

- David Bardos

LFI

- András Kalapos

- Sampsa Ranta

- Adrian Brucker

- Andras Beres

The deadline for submitting the “final” submissions is Dec. 9th, 2 pm CET. All submissions received after this time will count towards the next edition of AI-DO.

Don’t forget to join the #aido channel on the Duckietown Slack for updates!

Congratulations to all the participants, and best of luck to the finalists!

AI-DO 5 competition leaderboard update

AI-DO 5 pre-finals update

With the finals day of the fifth edition of the AI Driving Olympics approaching, and 1326 solutions submitted by 94 competitors across three challenges, it is time to glance at the leaderboards!

Leaderboards updates

The three challenges are lane following (LF), lane following with pedestrians (LFP), and lane following with other vehicles, multibody (LFV_multi). Learn more about the challenges here.

Each submission can be sent to multiple challenges.

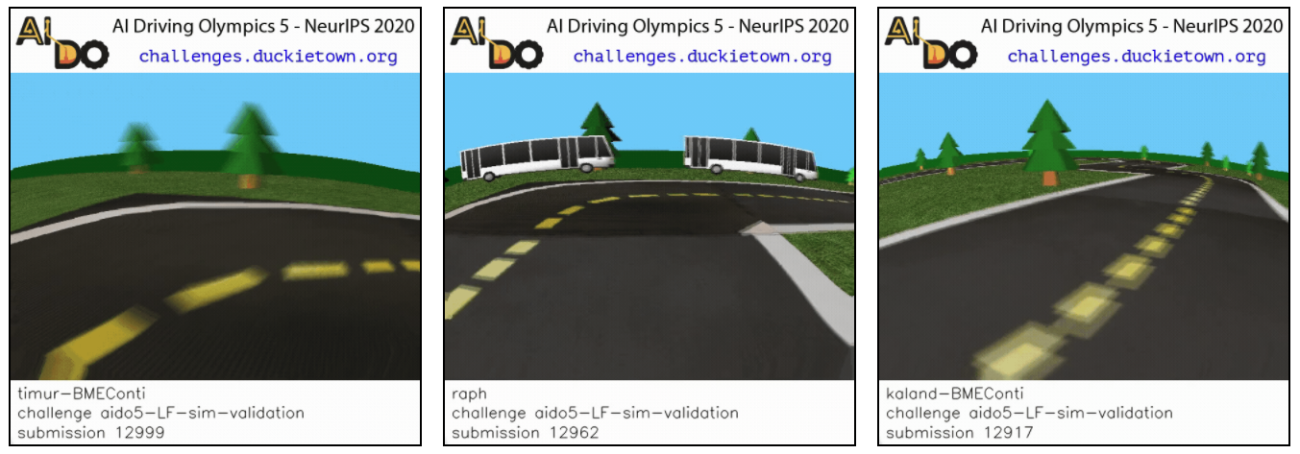

Let’s look at some of the most promising or interesting submissions.

The Montréal menace

Raphael Jean at Mila / University of Montréal is a new entrant for this year.

An interesting submission: submission #12962

The submissions from the cold

Team JetBrains from Saint Petersburg was a winner of previous editions of AI-DO. They have been dominating the leaderboards again this year.

Interesting submissions: submission #12905

All of JetBrains' submissions: JBRRussia1.

BME Conti

PhD student Robert Moni (BME-Conti) from Hungary.

Interesting submissions: submission #12999

All submissions: timur-BMEconti

Deadline for submissions

The “Self-Driving cars with Duckietown” Massive Open Online Course on edX

"Self-Driving Cars with Duckietown" hands-on MOOC on edX

We are launching a massive open online course (MOOC): “Self-Driving Cars with Duckietown” on edX, and it is free to attend!

This course is made possible thanks to the support of the Swiss Federal Institute of Technology in Zurich (ETHZ), in collaboration with the University of Montreal, the Duckietown Foundation, and the Toyota Technological Institute at Chicago.

This course combines remote and hands-on learning with real-world robots. It is offered on edX, the trusted platform for learning, and it is now open for enrollment.

Learning autonomy

Participants will engage in hands-on software and hardware learning experiences, with a focus on overcoming the challenges of deploying autonomous robots in the real world.

This course will explore the theory and implementation of model- and data-driven approaches for making a model self-driving car drive autonomously in an urban environment.

Pedestrian detection

MOOC Factsheet

- Name: Self-driving cars with Duckietown

- Start: March 2021

- Platform: edX

- Cost: free to attend

Prerequisites

- Basic Linux, Python, Git

- Elements of linear algebra, probability, calculus

- Elements of kinematics, dynamics

- Computer with native Ubuntu installation

What you will learn

- Computer Vision

- Robot operations

- Object Detection

- Localization, planning and control

- Reinforcement Learning

Why Self-driving cars with Duckietown?

Teaching autonomy requires a fundamentally different approach when compared to other computer science and engineering disciplines, because it is multi-disciplinary. Mastering it requires expertise in domains ranging from fundamental mathematics to practical machine-learning skills.

Robot Perception

Robots operate in the real world, and theory and practice often do not play well together. There are many hardware platforms and software tools, each with its own strengths and weaknesses. It is not always clear what tools are worth investing time in mastering, and how these skills will generalize to different platforms.

Duckiebot Detection

Learning through challenges

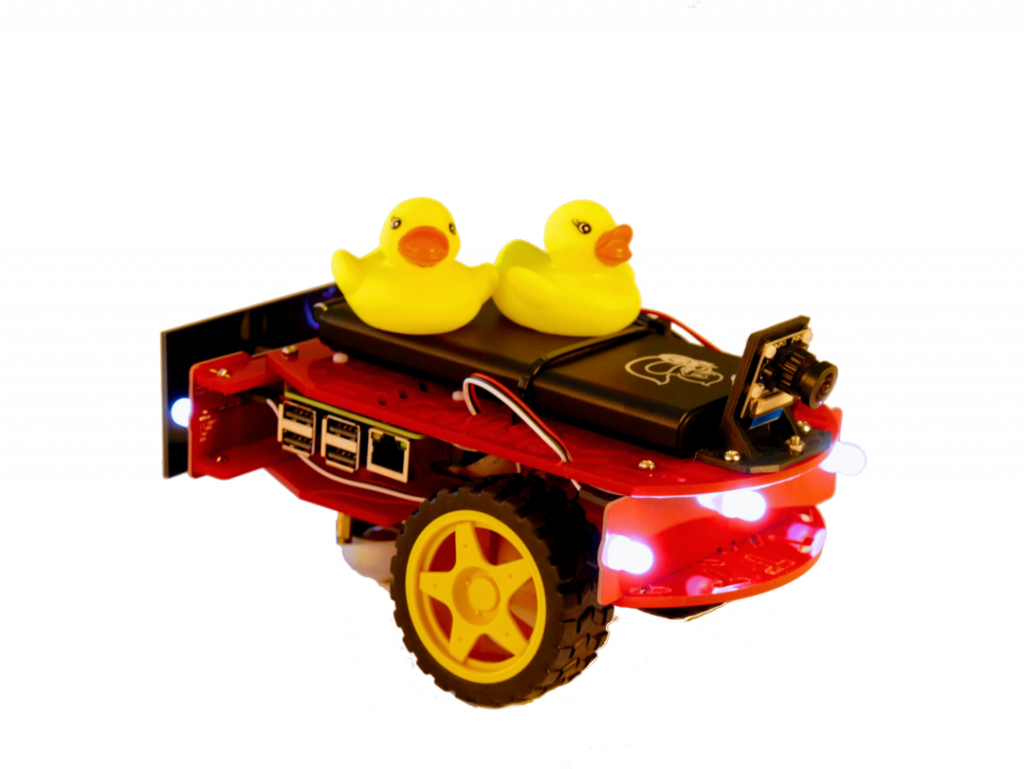

Progressing through behaviors of increasing complexity, participants uncover concepts and tools that address the limitations of previous approaches. This allows participants to get Duckiebots to actually do things, while gradually reiterating concepts through various technical frameworks. Simulation and real-world experiments will be performed using a Python-, ROS-, and Docker-based software stack.

Robot Planning


Learning activities will support the use of NVIDIA Jetson Nano powered Duckiebots.

Robust Reinforcement Learning-based Autonomous Driving Agent for Simulation and Real World

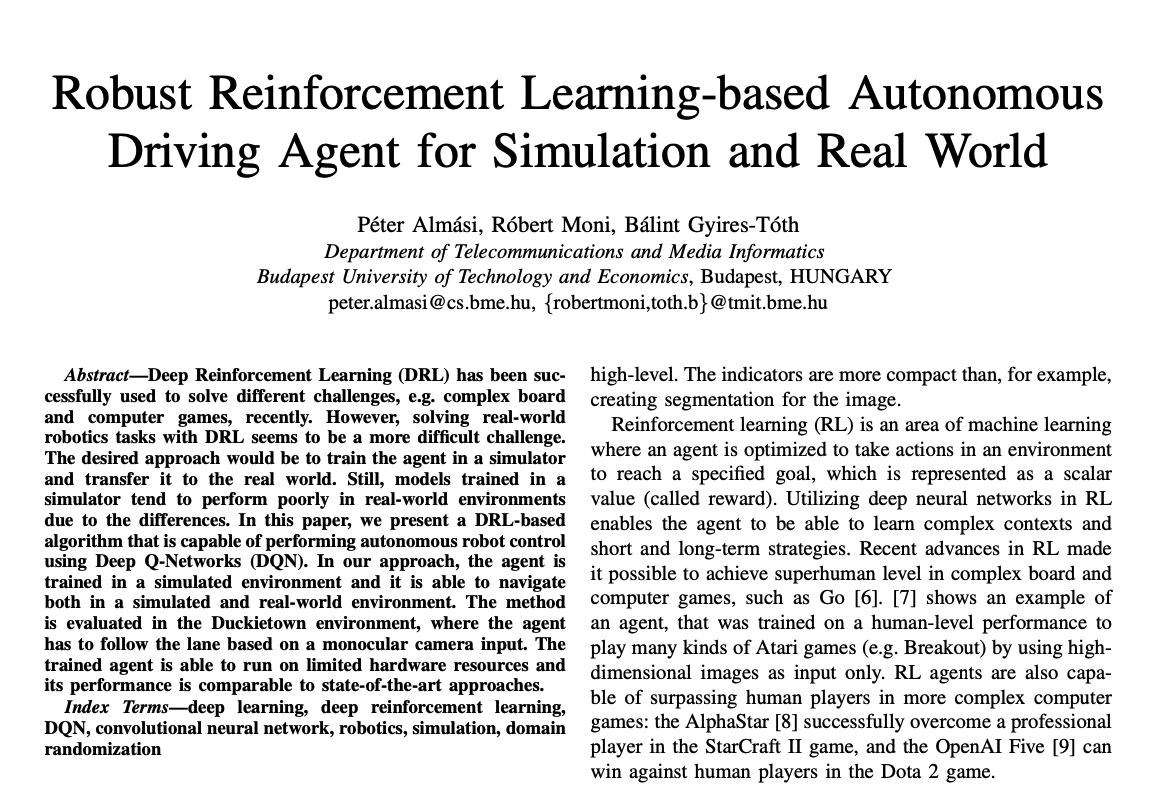

- Title: Robust Reinforcement Learning-based Autonomous Driving Agent for Simulation and Real World

- Authors: Péter Almási, Róbert Moni, Bálint Gyires-Tóth

- Published: 2020 International Joint Conference on Neural Networks (IJCNN), Glasgow, United Kingdom, 2020, pp. 1-8, doi: 10.1109/IJCNN48605.2020.9207497


We asked Róbert Moni to tell us more about his recent work. Enjoy the read!

The author's perspective

Most of us, proud members of the nerd community, first experience driving through the discrete actions taken on our keyboards. We believe that the harder we push the forward arrow (or the W key), the faster the car in the game will accelerate (sooo true 😊). Few of us believe that we can solve this task with machine learning. Even fewer of us believe that this can be done accurately and robustly with a basic Deep Reinforcement Learning (DRL) method known as Deep Q-Networks (DQN).

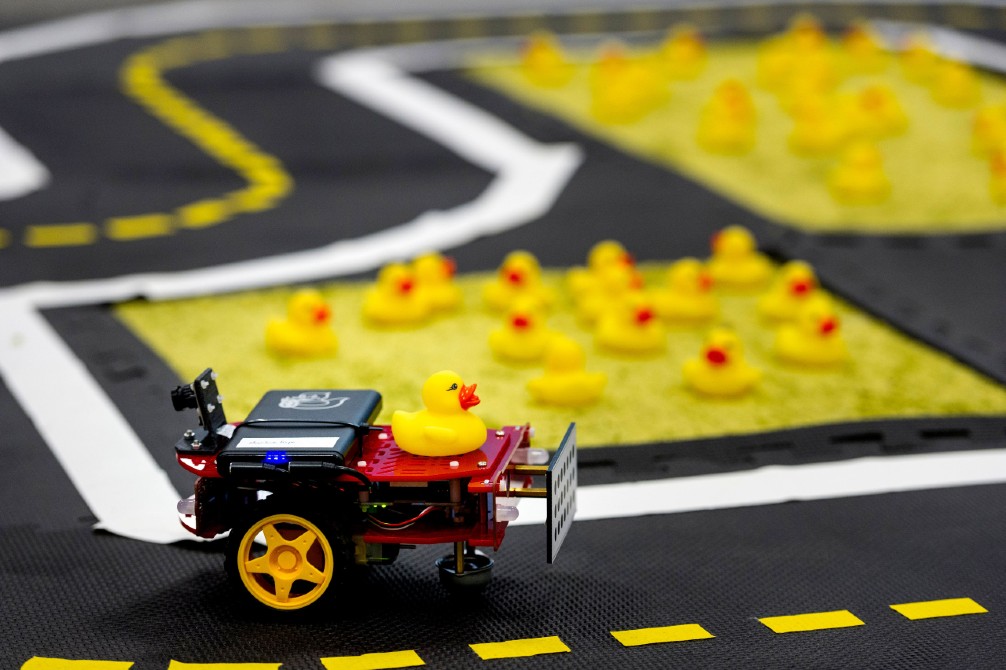

It turned out to be true in the case of a Duckiebot. Moreover, with some added computer vision techniques, it was able to perform well both in simulation (where the training process was carried out) and in the real world.

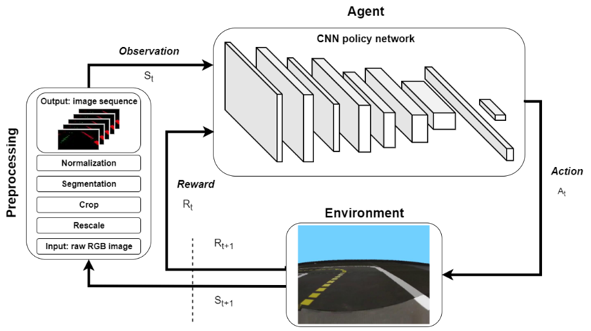

The pipeline

The complete training pipeline carried out in the Duckietown-gym environment is visualized in the figure above and works as follows. First, the camera images go through several preprocessing steps:

- resizing to a smaller resolution (60×80) for faster processing;

- cropping the upper part of the image, which doesn’t contain useful information for the navigation;

- segmenting important parts of the image based on their color (lane markings);

- and normalizing the image;

- finally a sequence is formed from the last 5 camera images, which will be the input of the Convolutional Neural Network (CNN) policy network (the agent itself).
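The preprocessing steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the colour thresholds, the number of cropped rows (20, chosen so that a 60×80 image becomes 40×80), the nearest-neighbour resize, and the names `preprocess` and `FrameStacker` are all assumptions made for the example.

```python
from collections import deque

import numpy as np

FRAME_STACK = 5            # number of past frames fed to the policy (from the text)
RESIZED_SHAPE = (60, 80)   # (height, width) after resizing (from the text)
CROP_TOP = 20              # assumed: rows removed from the top, so 60 -> 40


def resize_nearest(image, shape):
    """Nearest-neighbour resize using plain NumPy indexing."""
    height, width = shape
    rows = np.arange(height) * image.shape[0] // height
    cols = np.arange(width) * image.shape[1] // width
    return image[rows[:, None], cols]


def segment_lane_colors(image):
    """Keep only pixels that look white or yellow (hypothetical RGB thresholds)."""
    r, g, b = (image[..., i].astype(np.int32) for i in range(3))
    white = (r > 180) & (g > 180) & (b > 180)
    yellow = (r > 150) & (g > 150) & (b < 100)
    mask = white | yellow
    return image * mask[..., None]


def preprocess(image):
    """Resize, crop the upper part, segment by colour, and scale to [0, 1]."""
    small = resize_nearest(image, RESIZED_SHAPE)
    cropped = small[CROP_TOP:]
    segmented = segment_lane_colors(cropped)
    return segmented.astype(np.float32) / 255.0


class FrameStacker:
    """Keeps the last FRAME_STACK preprocessed frames and stacks their channels."""

    def __init__(self, size=FRAME_STACK):
        self.frames = deque(maxlen=size)

    def push(self, frame):
        processed = preprocess(frame)
        if not self.frames:
            # On the first frame, fill the history with copies of it.
            self.frames.extend([processed] * self.frames.maxlen)
        else:
            self.frames.append(processed)
        # Five RGB frames of shape (40, 80, 3) become one (40, 80, 15) tensor.
        return np.concatenate(list(self.frames), axis=-1)
```

Calling `FrameStacker().push(frame)` on an RGB camera frame (a `uint8` array of shape height × width × 3) returns an array of shape `(40, 80, 15)`, which matches the network input described in the text.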

The agent is trained in the simulator with the DQN algorithm based on a reward function that describes how accurately the robot follows the optimal curve. The output of the network is mapped to wheel speed commands.
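As a rough illustration of what DQN training optimizes, the sketch below computes the standard one-step Q-learning target used by DQN. The reward shown is a hypothetical stand-in that only decreases with the lateral distance from the lane centre; it is not the reward function from the paper, and `gamma` and `max_offset` are assumed values.

```python
def lane_following_reward(lateral_offset, max_offset=0.1):
    """Hypothetical reward: 1 on the reference curve, 0 at max_offset or beyond."""
    return 1.0 - min(abs(lateral_offset) / max_offset, 1.0)


def td_target(reward, next_q_values, done, gamma=0.99):
    """One-step Q-learning target: r + gamma * max over a' of Q(s', a').

    next_q_values are the Q-value estimates for the next state, one per action.
    When the episode has ended there is no future return to bootstrap from.
    """
    if done:
        return reward
    return reward + gamma * max(next_q_values)


def td_error(q_value, target):
    """Difference that the DQN loss drives towards zero for the taken action."""
    return target - q_value
```

During training, the network output for the action that was taken is regressed towards `td_target(...)`, so actions that keep the robot close to the reference curve accumulate higher estimated value.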

The workings

The CNN was trained with the preprocessed images. The network was designed so that inference can be performed in real time on a computer with limited resources (i.e., one with no dedicated GPU). The input of the network is a tensor with the shape of (40, 80, 15), which is the result of stacking five RGB images. The network consists of three convolutional layers, each followed by ReLU (nonlinearity function) and MaxPool (dimension reduction) operations.

The convolutional layers use 32, 32, 64 filters with size 3 × 3. The MaxPool layers use 2 × 2 filters. The convolutional layers are followed by fully connected layers with 128 and 3 outputs. The output of the last layer corresponds to the selected action. The output of the neural network (one of the three actions) is mapped to wheel speed commands; these actions correspond to turning left, turning right, or going straight, respectively.
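To get a feel for how small this network is, the sketch below traces the tensor shape through the layers and counts trainable parameters. It assumes stride-1 convolutions without padding and non-overlapping 2 × 2 pooling, which the text does not specify, so the numbers are indicative only. The wheel speeds in `ACTION_TO_WHEELS` are hypothetical placeholders; only the action order (left, right, straight) comes from the text.

```python
def conv_out(size, kernel=3):
    """Output length of a stride-1 convolution without padding (assumed)."""
    return size - kernel + 1


def pool_out(size, window=2):
    """Output length of a non-overlapping max-pool (floor division)."""
    return size // window


def trace_network(height=40, width=80, channels=15, filters=(32, 32, 64),
                  hidden=128, actions=3):
    """Return (flattened feature count, total trainable parameters)."""
    params = 0
    for out_channels in filters:
        # 3x3 kernel weights plus one bias per filter.
        params += 3 * 3 * channels * out_channels + out_channels
        height = pool_out(conv_out(height))
        width = pool_out(conv_out(width))
        channels = out_channels
    flat = height * width * channels
    params += flat * hidden + hidden        # first fully connected layer
    params += hidden * actions + actions    # output layer, one value per action
    return flat, params


# Action order taken from the text: left, right, straight.
# The (left wheel, right wheel) speeds are hypothetical placeholders.
ACTION_TO_WHEELS = {
    0: (0.2, 0.6),  # turn left: right wheel faster
    1: (0.6, 0.2),  # turn right: left wheel faster
    2: (0.6, 0.6),  # go straight
}


def select_wheel_speeds(q_values):
    """Pick the action with the highest network output and map it to wheel speeds."""
    action = max(range(len(q_values)), key=q_values.__getitem__)
    return ACTION_TO_WHEELS[action]
```

Under these assumptions, `trace_network()` returns `(1536, 229219)`: the spatial size shrinks from 40 × 80 to 3 × 8 over the three convolution and pooling stages, and most of the parameters sit in the first fully connected layer. A network of this size is plausibly light enough for CPU-only inference, in line with the design goal stated above.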

Learn more

Our work was acknowledged and presented at the IEEE World Congress on Computational Intelligence 2020 conference. We plan to publish the source code after the AI-DO 5 competition. Our paper is available on ieeexplore.ieee.org, deepai.org and arxiv.org.

Check out our sim and real demo on YouTube, performed at our Duckietown Robotarium put together at Budapest University of Technology and Economics.

Learn robotics from the comfort of your home with Duckietown

These are difficult times for us all.

With physical distancing directives issued across the globe and many people restricted to their homes, we want to reach out (virtually) and offer our support.

To help you beat the isolation blues, the Duckietown Foundation is

towards the next 100 orders of Duckiebots, Starter Kits, and Navigation Packs.

Remember that you can still learn about robotics without a robot!

Almost all of our resources remain open and available for your use.

Join us on Slack, peruse our library, or start training for the Urban League of the AI Driving Olympics.

Due to the closure of academic institutions, the Duckietown Autolabs are temporarily closed.

Coming soon: online demonstrations and tutorials to help you get started!

Have fun learning and stay safe!

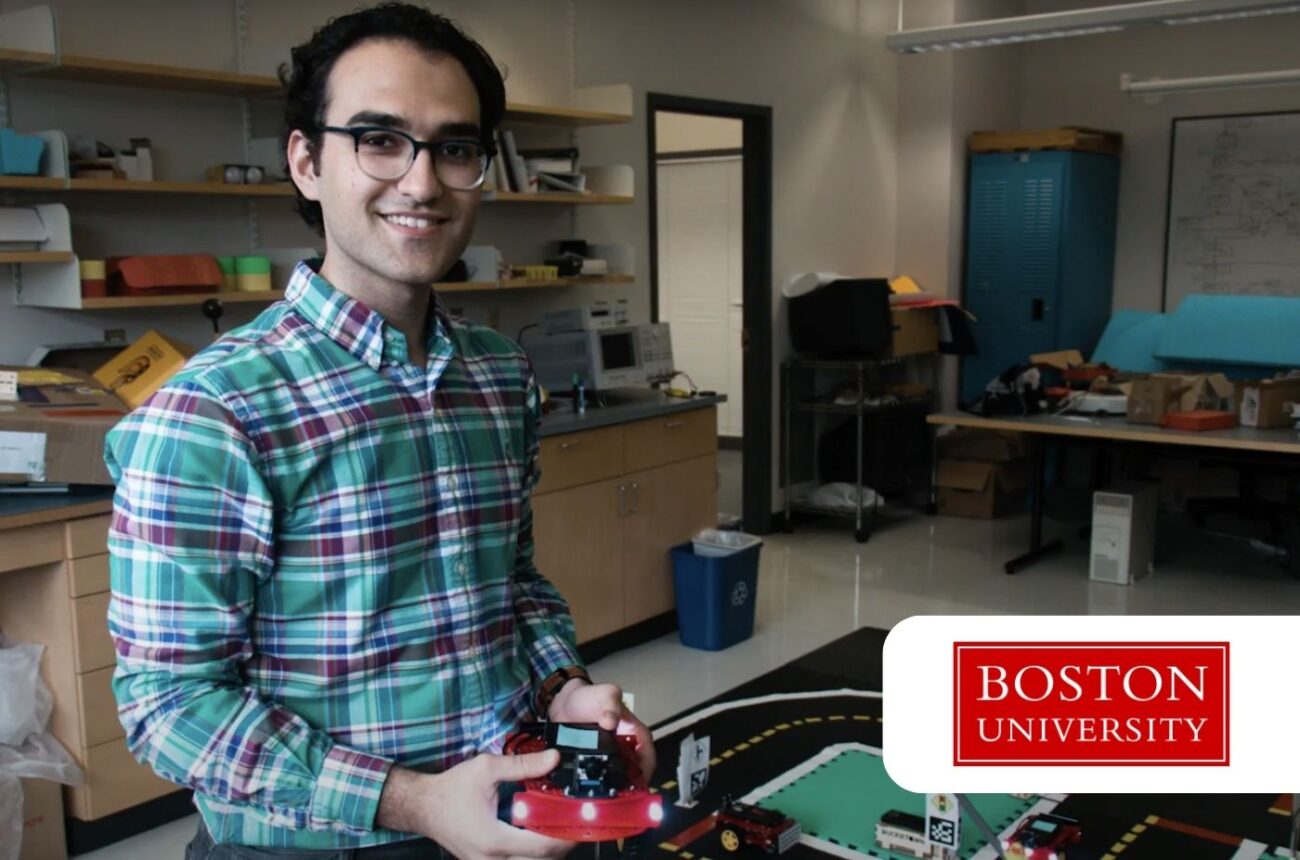

Community Spotlight: Arian Houshmand – Control Algorithms for Traffic

Control algorithms to improve traffic

The goal

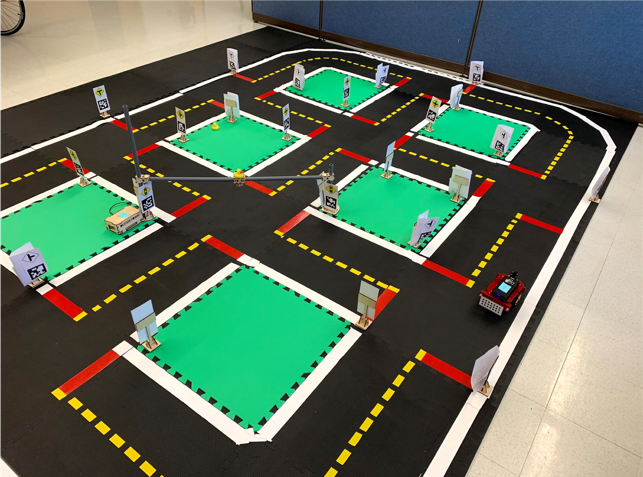

The goal of this project is to design control and optimization technologies that enable a plug-in hybrid electric vehicle (PHEV) to communicate with other cars and city infrastructure and act on that information. By providing cars with situational self-awareness, they will be able to efficiently calculate the best possible route, accelerate and decelerate as needed, and manage their powertrain. This is an important task toward advancing the vision to create an ‘Internet of Cars,’ in which connected and self-driving cars operate seamlessly with each other and traffic infrastructure, improving fuel efficiency and safety, and reducing traffic congestion and pollution.

Today’s commercially available self-driving cars rely on costly sensors, specifically radar, camera, and LIDAR (light detection and ranging), to operate semi-autonomously. In the NEXTCAR project, BU researchers and project collaborators are looking to go beyond that by developing decision-making algorithms to improve the autonomous operation of a single hybrid vehicle, as well as algorithms for communications between vehicles and their environment, enabling self-driving cars to cooperate and interact within their socio-cyber-physical environment.

Several different functions have been developed throughout this project including:

● Eco-routing: The procedure of finding the route between two points that minimizes the vehicle's energy cost.

● Eco-AND (Economical Arrival and Departure): An optimal control framework for approaching a traffic light without stopping at the intersection, using traffic light cycle time information.

● CACC (Cooperative Adaptive Cruise Control): An extension of adaptive cruise control (ACC) that, by benefiting from vehicle-to-vehicle (V2V) communication, increases safety and energy efficiency by reducing headway.

"We use Duckietown to train students on how to implement their algorithms on embedded systems and also as a means to demonstrate our developed technologies in action and in a live setting."

Arian Houshmand
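To make the eco-routing idea concrete, here is a minimal sketch that treats the road network as a graph whose edge weights are energy costs and finds the least-energy route with Dijkstra's algorithm. This is a generic shortest-path formulation rather than the project's actual method, and the road network, node names, and energy values are made up for illustration.

```python
import heapq


def eco_route(graph, start, goal):
    """Dijkstra's algorithm over non-negative energy costs.

    graph maps a node to a list of (neighbour, energy_cost) pairs.
    Returns (total_energy, path), or (float("inf"), []) if goal is unreachable.
    """
    best = {start: 0.0}
    previous = {}
    queue = [(0.0, start)]
    while queue:
        energy, node = heapq.heappop(queue)
        if node == goal:
            # Walk the predecessor links back to the start.
            path = [goal]
            while path[-1] != start:
                path.append(previous[path[-1]])
            return energy, path[::-1]
        if energy > best.get(node, float("inf")):
            continue  # stale queue entry, a cheaper route was already found
        for neighbour, cost in graph.get(node, []):
            candidate = energy + cost
            if candidate < best.get(neighbour, float("inf")):
                best[neighbour] = candidate
                previous[neighbour] = node
                heapq.heappush(queue, (candidate, neighbour))
    return float("inf"), []


# Hypothetical road network; edge weights are energy costs in Wh.
ROADS = {
    "A": [("B", 50.0), ("C", 20.0)],
    "C": [("B", 15.0), ("D", 60.0)],
    "B": [("D", 30.0)],
}
```

For this toy network, `eco_route(ROADS, "A", "D")` returns `(65.0, ["A", "C", "B", "D"])`: the detour through C uses less energy than the direct edge from A to B. In a real setting the edge costs would come from a vehicle energy model and would depend on traffic and speed, which this sketch does not attempt to model.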

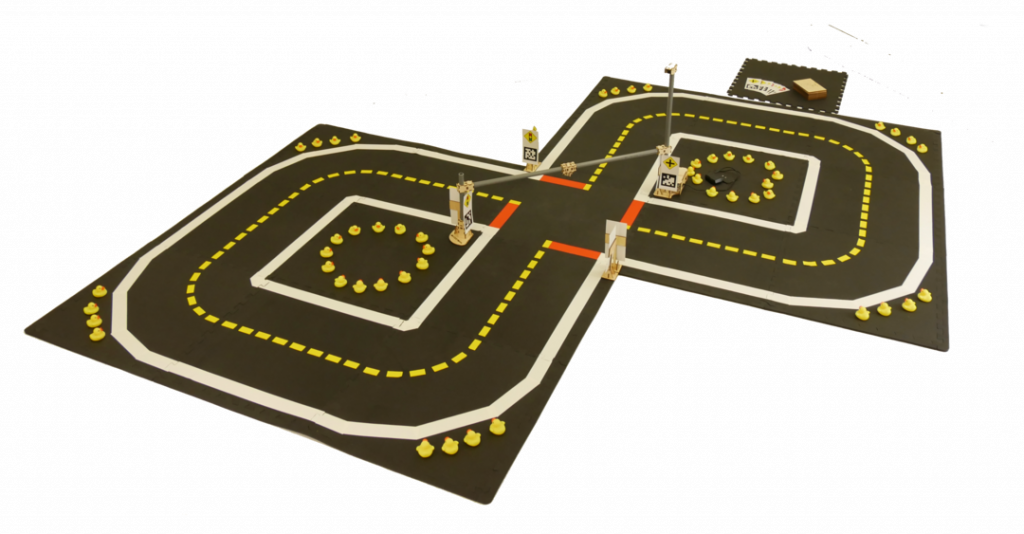

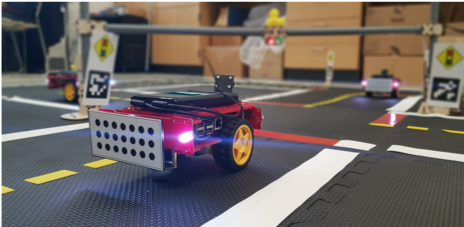

In order to validate and test the developed technologies, researchers first use simulation environments to test the algorithms. After verifying through simulation, they implement the algorithms on Duckietown, and finally deploy them on real cars (Audi A3 e-tron) at the University of Michigan’s M-city (test track for self-driving cars).

We use Duckietown to train students on how to implement their algorithms on embedded systems and also as a means to demonstrate our developed technologies in action and in a live setting. Since most of our research focuses on Connected and Automated Vehicles (CAVs), we need to establish connections between individual Duckiebots and traffic lights. As a result, we created a platform for exchanging information and control commands between all the cars and traffic lights.

Online localization of Duckiebots is a challenging task, and is missing from the current framework. We relied on our external motion capture sensors (OptiTrack) to localize the robots.

Duckietown is a nice platform for performing experiments on autonomous robots, since it is relatively simple to set up the town and Duckiebots. Moreover, the built-in perception and lane keeping capabilities are very useful to kick off experiments quickly. Traffic lights and signs are also helpful for testing algorithms in city-like scenarios.

Two additions would make Duckietown even more useful in our application: feedback sensors for determining wheel rotational speed and position, since it is difficult to correct for rotational speed errors of the wheels without them, and a ROS node for exchanging information between robots and traffic lights, for testing collaborative control algorithms.

Learn more about Duckietown

The Duckietown platform enables state-of-the-art robotics and AI learning experiences.

It is designed to help teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of human knowledge.

Tell us your story

Are you an instructor, learner, researcher or professional with a Duckietown story to tell? Reach out to us!

AI-DO 3 – Urban Event Winners

In case you missed it, AI-DO 3 has come and gone. Interested in reliving the competition? Here’s the video.

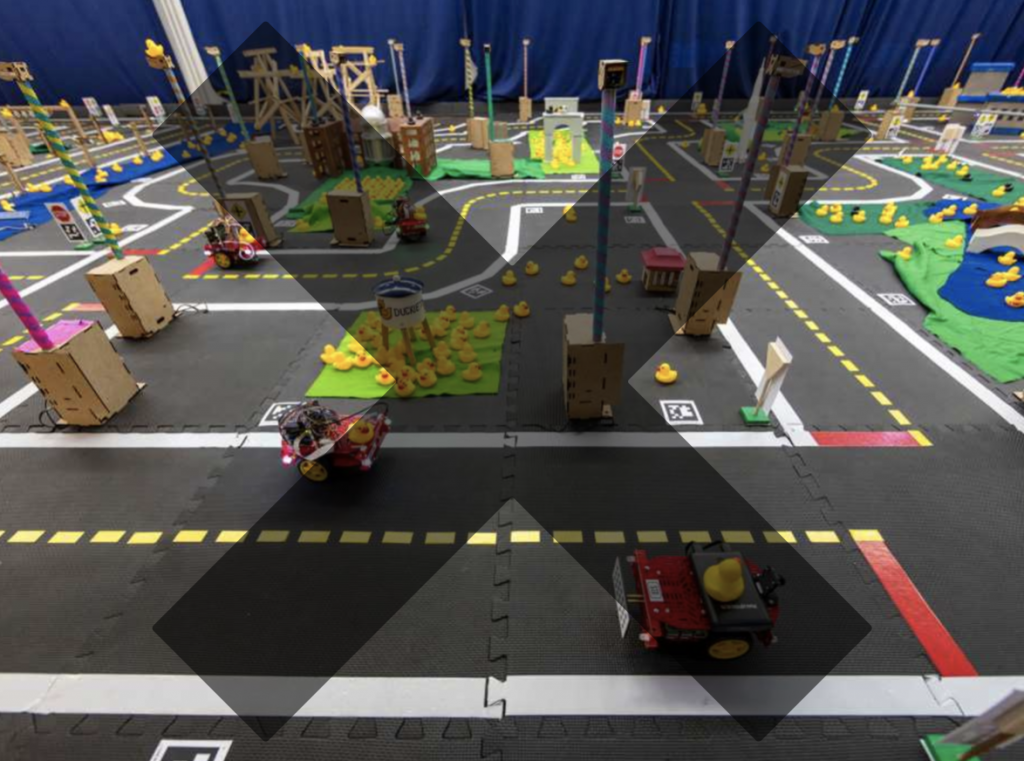

We had a great time at NeurIPS hosting the Third Edition of the AI Driving Olympics. As usual, the sound of Duckies attracted an engaged and supportive crowd.

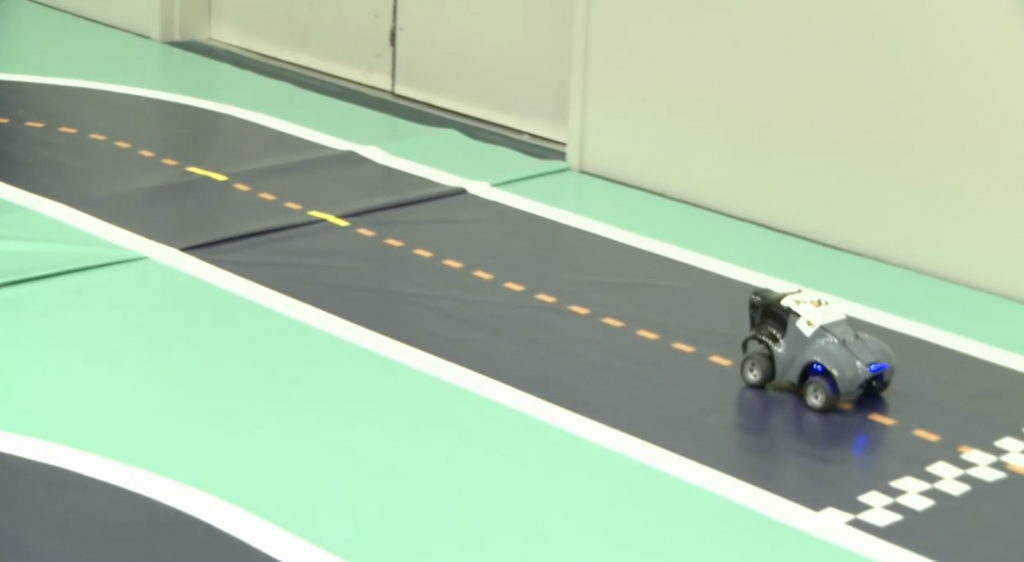

Racing Event

The competition began with the Racing Event, hosted by AWS DeepRacer. They ran their top 10 submissions and selected the winner based on the fastest completed lap.

Racing Event Winner

Ayrat Baykov at 8:08 seconds

Advanced Perception Event

The winners of the Advanced Perception Event hosted by APTIV and the nuScenes dataset were announced. Luckily a member of the winning team was present to accept the award.

Rank 3

CenterTrack – Open and Vision

Rank 2

VV_Team

Rank 1

StanfordIPRL-TRI

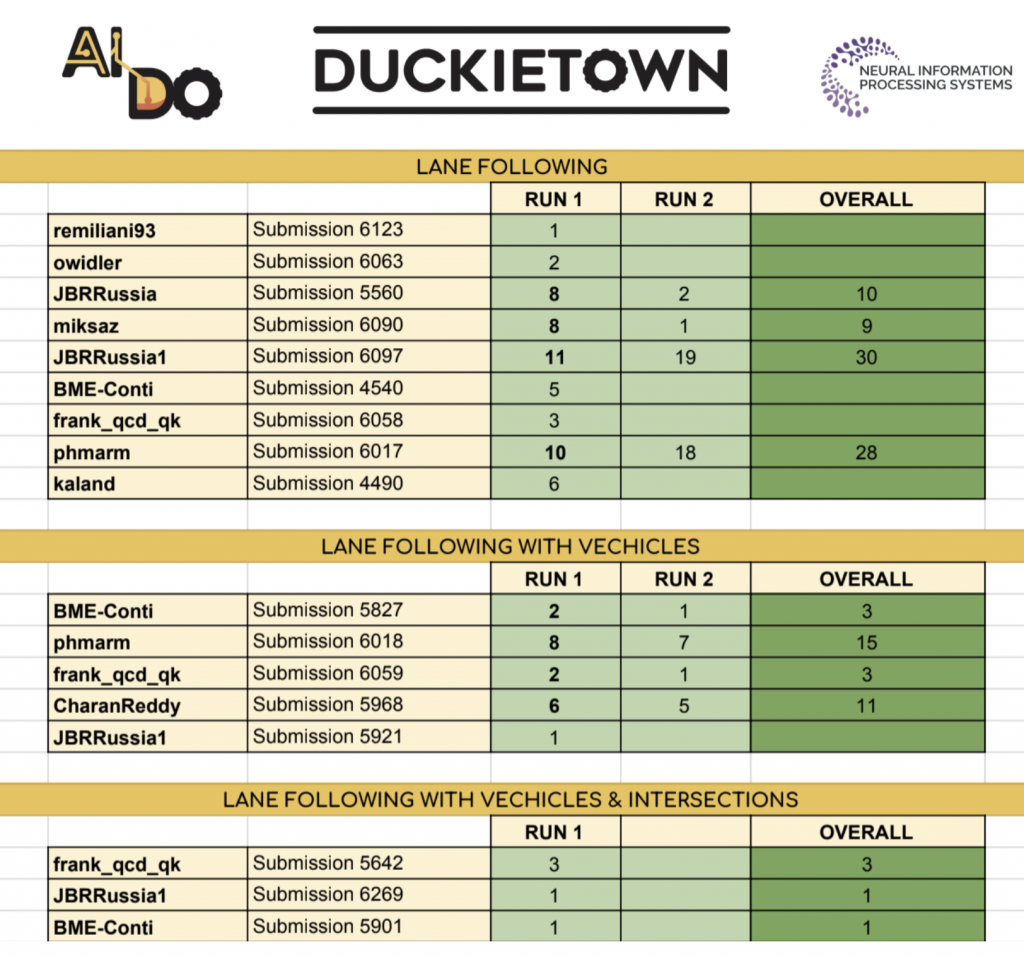

Urban Event

The competition culminated with Duckietown’s own Urban Driving Event, where we ran the top submissions for each of the three challenges on our competition tracks.

Winners

Lane Following

JBRRussia1: Konstantin Chaika, Nikita Sazanovich, Kirill Krinkin, Max Kuzmin

Lane Following with Vehicles

phmarm

Lane Following with Vehicles and Intersections

frank_qcd_qk

Final Scoreboard

A few pictures from the event

Congratulations to all the winners and thanks for participating in the competition. We look forward to seeing you for AI-DO 4!

Duckietown Workshop at RoboCup Junior

Duckietown Workshop at RoboCup Junior 2019

In collaboration with the RoboCup Federation, the Duckietown Foundation will be offering workshops at RoboCup 2019 in Sydney, Australia, providing a hands-on introduction to the Duckietown platform.

We will be hosting three one-day workshops as part of RoboCup 2019 from July 4-6, 2019 for teachers, students, and independent learners who are interested in finding out more about the Duckietown platform. Attendance is completely free and everyone is welcome to apply, even if you are not participating in RoboCup.

There are no formal requirements, though basic familiarity with GNU/Linux and shell usage is recommended.

If you would like to apply to attend a workshop, please complete this form.

We will have Duckiebots and Duckietowns for participants to use. However, you are more than welcome to bring your own Duckiebots, available for purchase at https://get.duckietown.com.


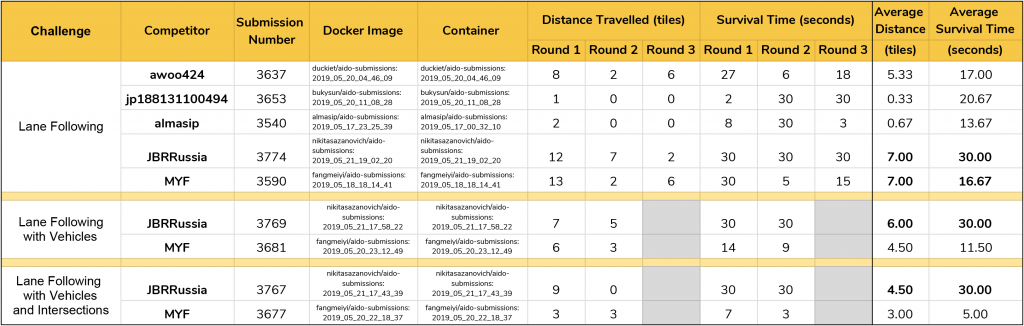

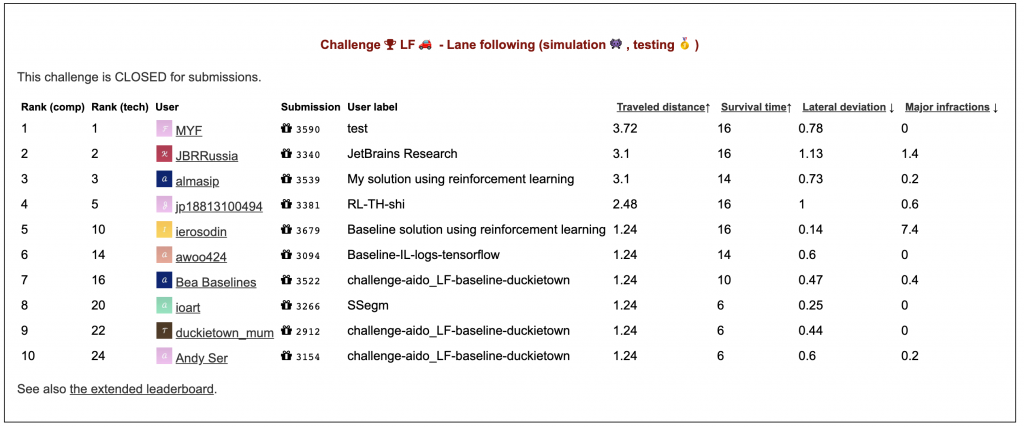

Congratulations to the winners of the second edition of the AI Driving Olympics!

Team JetBrains came out on top in all 3 challenges

It was a busy (and squeaky) few days at the International Conference on Robotics and Automation in Montreal for the organizers and competitors of the AI Driving Olympics.

The finals were kicked off by a semifinals round, where we ran the top 5 submissions from the Lane Following in Simulation leaderboard. The finalists (JBRRussia and MYF) moved forward to the more complicated challenges of Lane Following with Vehicles and Lane Following with Vehicles and Intersections.

If you couldn’t make it to the event and missed the live stream on Facebook, here’s a short video of the first run of the JetBrains Lane Following submission.

Thanks to everyone that competed, dropped in to say hello, and cheered on the finalists by sending the song of the Duckie down the corridors of the Palais des Congrès.

A few pictures from the event

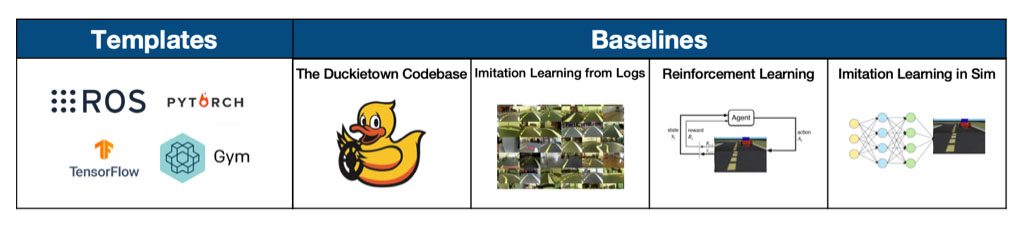

Don't know much about the AI Driving Olympics?

It is an accessible and reproducible autonomous car competition designed with straightforward standardized hardware, software and interfaces.

Get Started

Step 1: Build and test your agent with our available templates and baselines

Step 2: Submit to a challenge

Check out the leaderboard

View your submission in simulation

Step 3: Run your submission on a robot in a Robotarium