Real-Time Reinforcement Learning in Duckiematrix

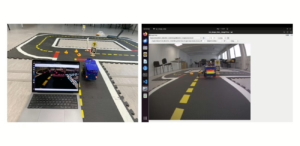

This project explores Reinforcement Learning in Duckiematrix within Duckietown, analyzing real-time delays and their impact on autonomous driving performance.

Creating novel autonomous behaviors for your Duckiebots, or working to improve upon existing ones, is a great way to learn how to work in a team and gain real-world skills in the process. Let us know about your robotics and AI projects!

This project studies Sim-to-Real Transfer in Duckietown using high- and low-fidelity simulators to predict autonomous vehicle performance on Duckiebots.

This project implements an autonomous navigation system on the Duckiebot DB21J in the Duckietown environment using vision-based control and vision-language models (VLMs).

This project develops an autonomous navigation system for Duckietown using Dijkstra-based planning, perception, and control for lane following, turning, and obstacle detection.

This project explores localization in Duckietown using sensor fusion to estimate Duckiebot poses through visual tags and stitched camera inputs.

Students at TUM build on the out-of-the-box Duckietown autonomous navigation pipeline, introducing features for a more complete driving experience.

This project implements adaptive pure pursuit control and deep learning-based obstacle detection for lane following and obstacle avoidance for Duckiebots.

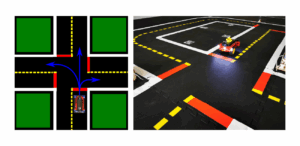

This project implements an autonomous navigation system using computer vision and the Dijkstra algorithm for precise lane following and safe intersection handling.

This project uses PID control, AprilTag-based turns, dead reckoning, and visual servoing to enable autonomous navigation and parking in Duckietown.

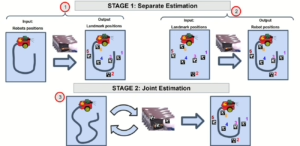

This project implements Extended Kalman Filter (EKF) SLAM on Duckiebots to improve localization accuracy and map static landmarks using AprilTags and odometry.

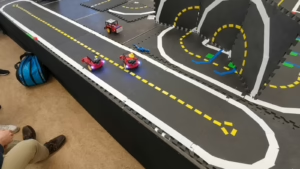

A path-planning algorithm that enables optimized multi-robot navigation in Duckietown using node-graph mapping and movement-minimization techniques.

Learn how to transform Duckietown into a smart city that performs autonomous recovery of distressed Duckiebots through vehicle-to-infrastructure (V2I) interactions.

This project enhances Adaptive Lane Following by enabling Duckiebots to autonomously calibrate wheel trim, ensuring stable navigation without manual tuning.

This project develops a flexible tether control system for the Duckiebot, using ROS and an automated spool to optimize tether length for mobility and efficiency.

This project uses deep reinforcement learning for autonomous lane following, tackling sim-to-real challenges with domain adaptation and vision-based control.

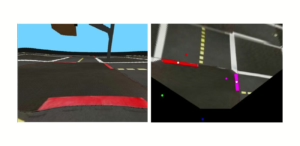

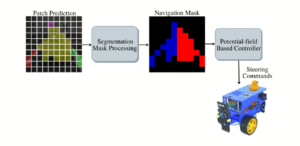

This project develops a visual obstacle detection system in Duckietown using inverse perspective mapping to improve autonomous navigation accuracy.

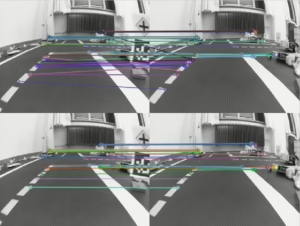

Explore how 3D image features enhance Duckiebot intersection navigation in Duckietown, combining bird's-eye-view (BEV) techniques with hands-on autonomous driving insights.

This project explores monocular navigation in Duckietown using LEDNet, comparing its performance with a vision transformer for lane following and obstacle avoidance.

This thesis applies Reinforcement Learning (RL) to autonomous lane keeping and uses YOLOv5 for obstacle detection in Duckietown, achieving safe navigation.

What if Duckietowns had smart lighting, so that car and street light fields would combine dynamically for optimal visual perception?

This project uses DBSCAN (Density-Based Spatial Clustering of Applications with Noise) to improve Duckiebot intersection navigation.

The Obstavoid Algorithm enables real-time obstacle avoidance in Duckietown, calculating optimal paths on a 3D grid for dynamic, collision-free navigation.

Have you ever wanted to work from home but your Duckiebot is back at the lab? Learn how to access your Duckiebot from anywhere at any time.

This project by Gianmarco Bernasconi, a former Duckietown student, provided an estimate of the Duckiebot’s pose using a monocular visual odometry approach.

This project enhances Duckiebot planning capabilities for autonomous navigation in Duckietowns using the Dijkstra algorithm.

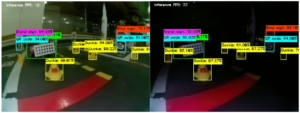

This project implements robust object detection in Duckietown for Duckiebots under varying lighting conditions and object clutter using a YOLO-based neural network.

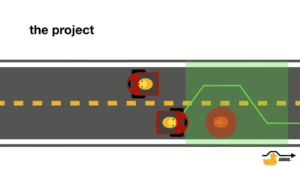

This student project implements dynamic obstacle avoidance for Duckiebots with the aim of detecting and navigating around static and moving obstacles.

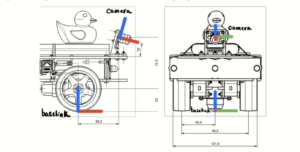

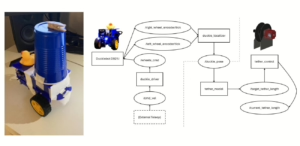

This student project implements an Ackermann steering system on a Duckiebot to emulate a four-wheeled vehicle, a more complex and realistic model of a real-world car.

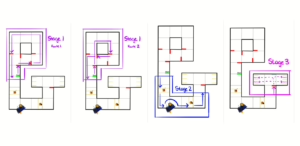

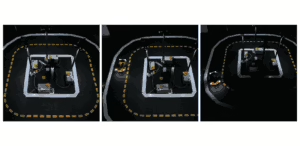

This student project implements an autonomous parking solution, inclusive of parking lot design and autonomous behavior, for Duckiebots in Duckietown.

“Safe Reinforcement Learning (Safe-RL)” explores using Deep Q-Learning to train Duckiebots to perform lane following. Reproduce these results with Duckietown.

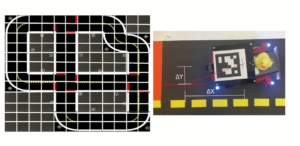

Anatidaephilia is loving the idea that somewhere, somehow, a duck is watching you. The cSLAM equips Duckietowns with the ability to localize Duckiebots.

If you would like your project to be featured on this page, let us know about it!