General Information

- Deep Reinforcement Learning for Autonomous Navigation on Duckietown Platform: Evaluation of Adversarial Robustness

- Abdullah Hosseini, Saeid Houti, Junaid Qadir

- Qatar University

- A. Hosseini, S. Houti and J. Qadir, "Deep Reinforcement Learning for Autonomous Navigation on Duckietown Platform: Evaluation of Adversarial Robustness," 2023 International Symposium on Networks, Computers and Communications (ISNCC), Doha, Qatar, 2023, pp. 1-6, doi: 10.1109/ISNCC58260.2023.10323905.

Deep RL for Autonomous Navigation on Duckietown Platform: Evaluation of Adversarial Robustness

What is adversarial robustness in navigation tasks all about? A few important topics:

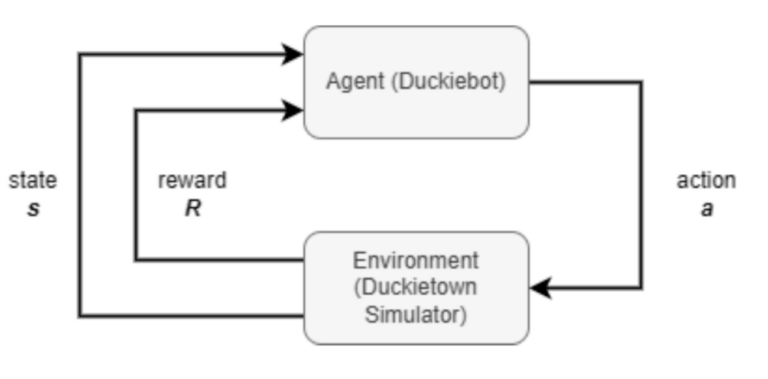

Reinforcement Learning (RL) is a type of machine learning where agents learn to make decisions by receiving rewards or penalties based on their actions in an environment. Because the agent generates its own experience by interacting with the environment, RL does not require a curated, labeled training dataset.
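The reward-driven loop described above can be sketched with tabular Q-learning on a toy task. This is a minimal illustration, not code from the paper: the five-cell corridor, the reward values, and all names (`step`, `train`, `greedy_policy`) are assumptions made for this example.

```python
import random

# Hypothetical toy task: a 1-D corridor with 5 cells. The agent starts in
# cell 0 and the episode ends when it reaches cell 4.
N_STATES, GOAL = 5, 4
ACTIONS = (-1, +1)  # step left, step right


def step(state, action):
    """Apply an action and return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == GOAL
    reward = 1.0 if done else -0.01  # small cost per step, reward at the goal
    return next_state, reward, done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning: action values are learned only from rewards."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore occasionally, otherwise act greedily.
            if rng.random() < epsilon:
                a = rng.randrange(2)
            else:
                a = max(range(2), key=lambda i: q[state][i])
            next_state, reward, done = step(state, ACTIONS[a])
            # Bootstrap from the best next action unless the episode ended.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


def greedy_policy(q):
    """Return the greedy action (-1 or +1) for every non-goal cell."""
    return [ACTIONS[max(range(2), key=lambda i: q[s][i])] for s in range(GOAL)]
```

Calling `greedy_policy(train())` returns the learned action for each cell. No dataset is supplied at any point: the only training signal is the reward returned by `step`.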

Deep Reinforcement Learning (DRL) enhances RL by using deep neural networks to process complex inputs and make decisions. Deep networks are neural networks with multiple layers.

Adversarial Robustness refers to a system’s ability to resist and maintain performance despite deliberate attacks or input perturbations.
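One rough way to make this definition concrete is to count how many of a policy's decisions survive a small, worst-case change to its input. The sketch below assumes a hypothetical two-action linear policy and shifts each input feature by at most `eps` in the direction given by the sign of the score's gradient. The policy, the names (`act`, `worst_case_perturb`, `robust_fraction`), and the metric are illustrative assumptions, not the method used in the paper.

```python
def score(weights, obs):
    """Linear policy score: positive means 'left', otherwise 'right'."""
    return sum(w * x for w, x in zip(weights, obs))


def act(weights, obs):
    """Pick one of two hypothetical steering actions from the score."""
    return "left" if score(weights, obs) > 0 else "right"


def sign(v):
    """Return -1, 0, or +1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def worst_case_perturb(weights, obs, eps):
    """Shift each feature by at most eps so the score moves toward the
    opposite decision. For a linear score, the gradient with respect to
    the observation is simply the weight vector."""
    direction = -1 if score(weights, obs) > 0 else 1
    return [x + direction * eps * sign(w) for w, x in zip(weights, obs)]


def robust_fraction(weights, observations, eps):
    """Fraction of observations whose action is unchanged by the attack."""
    kept = sum(
        act(weights, o) == act(weights, worst_case_perturb(weights, o, eps))
        for o in observations
    )
    return kept / len(observations)
```

With `eps = 0` the fraction is 1 by construction. As `eps` grows, decisions that sit close to the boundary flip first, so the fraction can only stay the same or fall.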

Navigation is the task of finding feasible paths between points in an environment, much as Google Maps and similar systems do for us in everyday life.

Learn about RL, navigation and other robot autonomy topics at the link below.

Abstract

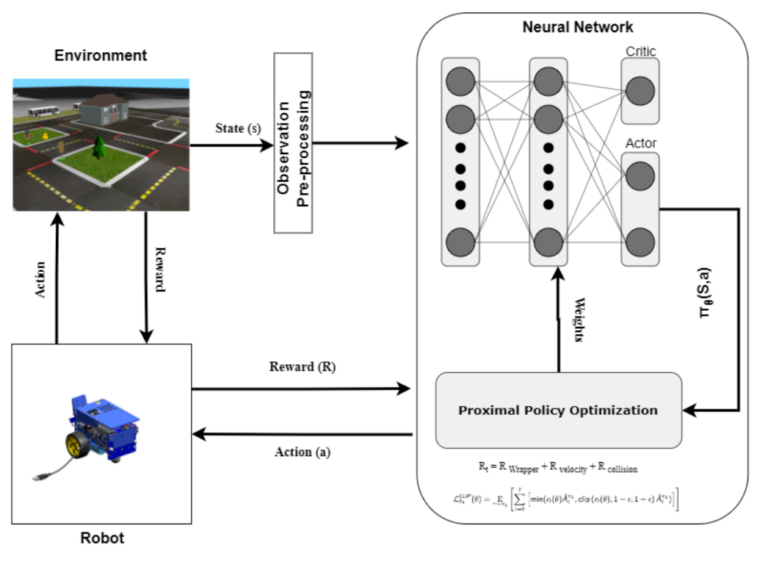

Self-driving cars have gained widespread attention in recent years due to their potential to revolutionize the transportation industry. However, their success critically depends on the ability of reinforcement learning (RL) algorithms to navigate complex environments safely. In this paper, we investigate the potential security risks associated with end-to-end deep RL (DRL) systems in autonomous driving environments that rely on visual input for vehicle control, using the open-source Duckietown platform for robotics and self-driving vehicles.

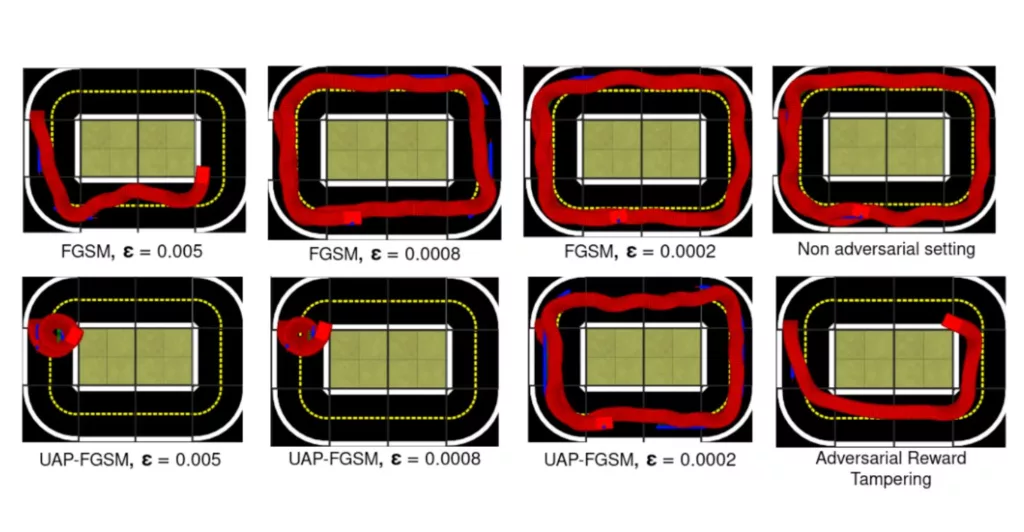

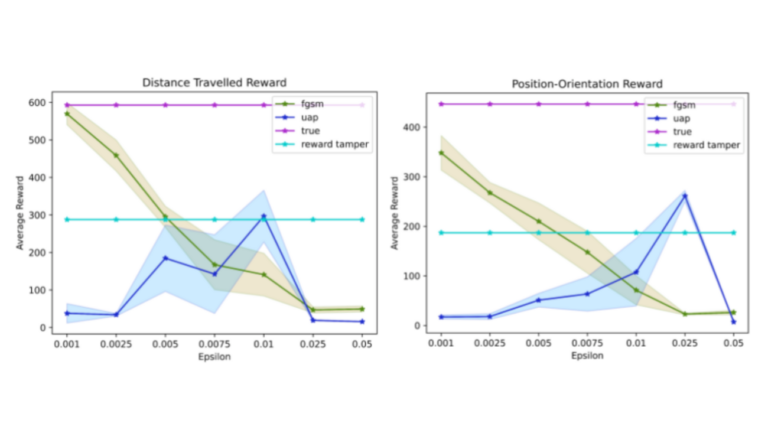

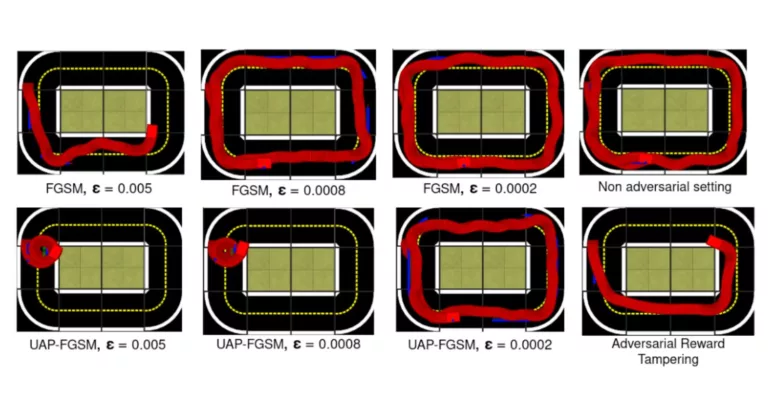

We demonstrate that current DRL algorithms are inherently susceptible to attacks by designing a general state adversarial perturbation and a reward tampering approach. Our strategy involves evaluating how attacks can manipulate the agent’s decision-making process and using this understanding to create a corrupted environment that can lead the agent towards low-performing policies. We introduce our state perturbation method, accompanied by empirical analysis and extensive evaluation, and then demonstrate a targeted attack using reward tampering that leads the agent to catastrophic situations.

Our experiments show that our attacks are effective in poisoning the learning of the agent when using the gradient-based Proximal Policy Optimization algorithm within the Duckietown environment. The results of this study are of interest to researchers and practitioners working in the field of autonomous driving, DRL, and computer security, and they can help inform the development of safer and more reliable autonomous driving systems.

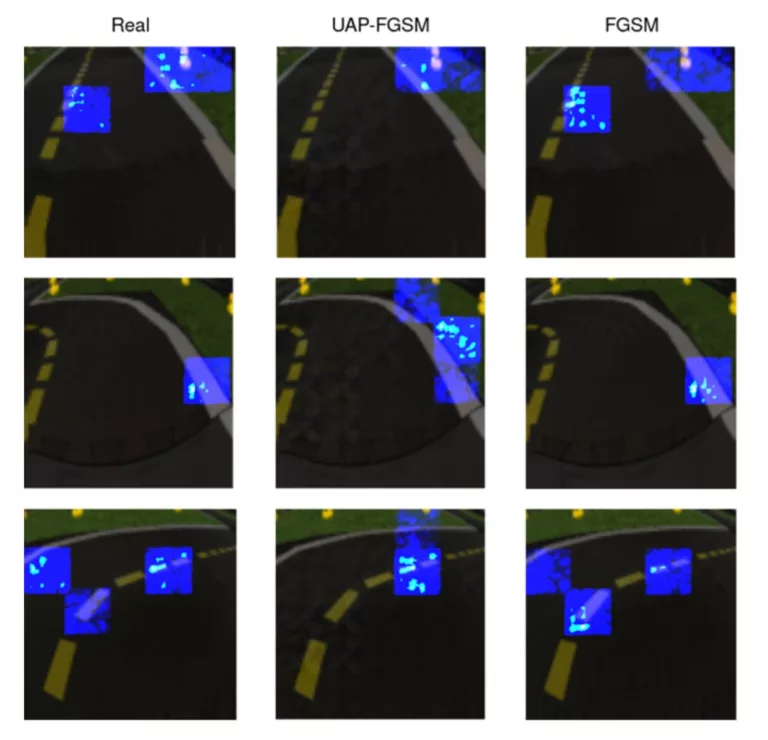

Highlights - Evaluation of Adversarial Robustness Results

Here is a visual tour of the work of the authors. For more details, check out the paper link.

Conclusion

Here are the conclusions from the authors of this paper:

“The focus of our study was to address adversarial attacks on deep reinforcement learning (DRL) agents, specifically examining state adversarial attacks and reward-tampering attacks.

We developed a parametric framework for state adversarial attacks and a non-parametric framework for reward tampering attacks, which enabled us to create effective attacks. We found that the performance of a DRL agent declined rapidly after the attack, and the deviation from the road was worse than that of standard DRL.

We used salient maps to provide a clear explanation of the policies’ internal operations in both the adversarial and non-adversarial aspects. Our research provides insight into the potential vulnerabilities of DRL agents and highlights the need for more robust and secure agents to mitigate the risk of adversarial attacks.

Moving forward, future work will focus on incorporating real-world analysis to test the performance of the DuckieBot under both adversarial and non-adversarial settings”.

Project Authors

Abdullah Hosseini is a Research and Development Specialist at Weill Cornell Medicine in Qatar.

Saeid Houti is a Software Developer at the Ministry of Education and Higher Education, Qatar.

Junaid Qadir is a Professor of Computer Engineering at Qatar University.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.

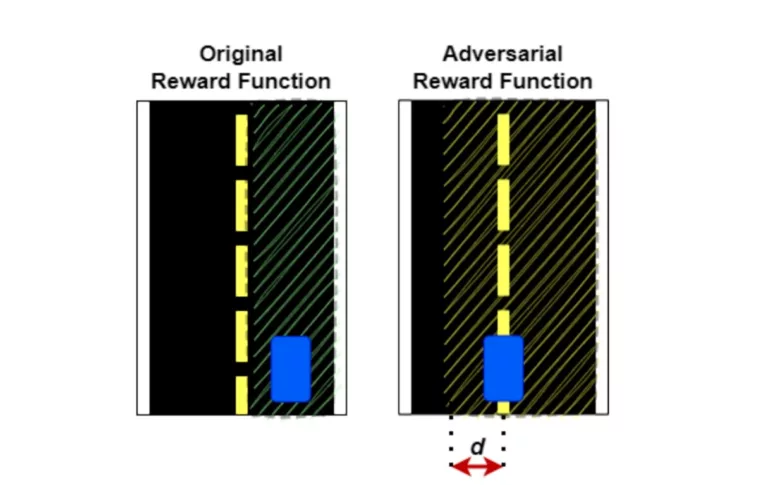

End-to-end Deep RL (DRL) systems in autonomous driving environments that rely on visual input for vehicle control face potential security risks, including:

- State Adversarial Perturbations: Subtle alterations to visual input that mislead the DRL agent, causing incorrect decision-making.

- Reward Tampering: Manipulation of the reward signal to misguide the learning process, leading the agent to adopt unsafe or inefficient policies.

These vulnerabilities can compromise the safety and reliability of self-driving vehicles.
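The two attack types listed above can be illustrated on a toy lane-keeping task. This is a sketch under stated assumptions, not the authors' framework: the five-cell lane, the one-cell observation shift, the sign-flipped reward, the tabular Q-learning agent (the paper uses PPO on camera images), and every function name are hypothetical.

```python
import random

N_CELLS, CENTER = 5, 2   # hypothetical lane split into 5 lateral cells
ACTIONS = (-1, 0, +1)    # steer left, keep, steer right


def true_reward(pos):
    """Ground-truth reward: the further from the lane centre, the worse."""
    return -abs(pos - CENTER)


def perturb_state(pos):
    """State attack (assumed form): the agent sees a position shifted by one cell."""
    return min(pos + 1, N_CELLS - 1)


def tamper_reward(reward):
    """Reward attack (assumed form): flip the sign of the reward signal."""
    return -reward


def identity(x):
    return x


def run_episode(q, rng, obs_fn, reward_fn, learn,
                epsilon=0.2, alpha=0.5, gamma=0.9, steps=10):
    """Run one episode and return the TRUE return, whatever the agent was shown."""
    pos, total = rng.randrange(N_CELLS), 0.0
    obs = obs_fn(pos)
    for _ in range(steps):
        if learn and rng.random() < epsilon:
            a = rng.randrange(len(ACTIONS))          # explore
        else:
            a = max(range(len(ACTIONS)), key=lambda i: q[obs][i])
        pos = min(max(pos + ACTIONS[a], 0), N_CELLS - 1)
        reward = true_reward(pos)
        total += reward
        next_obs = obs_fn(pos)
        if learn:
            # The learner only ever sees the (possibly tampered) reward.
            target = reward_fn(reward) + gamma * max(q[next_obs])
            q[obs][a] += alpha * (target - q[obs][a])
        obs = next_obs
    return total


def train(obs_fn=identity, reward_fn=identity, episodes=2000, seed=0):
    """Train a tabular Q-learning agent, optionally under attack."""
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in range(N_CELLS)]
    for _ in range(episodes):
        run_episode(q, rng, obs_fn, reward_fn, learn=True)
    return q


def evaluate(q, obs_fn=identity, episodes=200, seed=1):
    """Average true return of the greedy policy, optionally with perturbed observations."""
    rng = random.Random(seed)
    returns = [run_episode(q, rng, obs_fn, identity, learn=False)
               for _ in range(episodes)]
    return sum(returns) / episodes
```

Comparing `evaluate(train())` with `evaluate(train(), obs_fn=perturb_state)` shows the effect of a state perturbation applied at test time, and `evaluate(train(reward_fn=tamper_reward))` shows the effect of poisoning the reward during training. In both attacked cases the agent is scored on the true reward, so the drop reflects real deviation from the lane centre rather than a change in the scoring rule.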