- Title: Integration of open source platform Duckietown and gesture recognition as an interactive interface for the museum robotic guide

- Authors: Feng-Ching Cheng, Zi-Yu Wang, and Jee-Jee Chen

- Published in: the 2018 Wireless and Optical Communication Conference


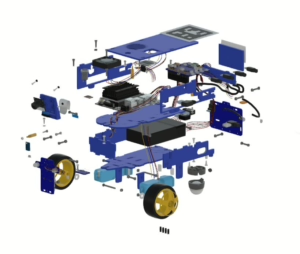

In recent years, population aging has become a serious problem. To reduce the labor needed to guide visitors through museums, exhibitions, or libraries, this research designs an automatic museum robotic guide that integrates image and gesture recognition technologies to improve the quality of visitors' guided tours. The robot is a self-propelled vehicle developed with ROS (Robot Operating System), on which we achieve autonomous driving through image-based lane following. This lets the robot lead guests to the artworks along a preplanned route. Combined with a voice service for each artwork, the robot can give the guest a detailed description of the artwork. We also design a simple wearable device for gesture recognition. This device acts as a human-machine interface, allowing guests to interact with the robot through hand gestures. To improve the accuracy of gesture recognition, we design a two-phase hybrid machine-learning framework. In the first (training) phase, the k-means algorithm is applied to historical data to filter out outlier samples so that they do not interfere with the recognition phase. In the second (recognition) phase, we apply the KNN (k-nearest neighbors) algorithm to recognize users' hand gestures in real time. Experiments show that our method works in real time and achieves better accuracy than the other methods tested.
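The two-phase idea can be illustrated with a minimal sketch, assuming details the abstract does not give. Here k-means is run separately on each gesture's training samples. Any sample whose distance to its nearest centroid exceeds `factor` times the median distance for that gesture is discarded. A plain majority-vote KNN then classifies new feature vectors. The feature vectors, the gesture labels `swipe_left`/`swipe_right`, the outlier rule, and the helper names (`kmeans`, `filter_outliers`, `knn_predict`) are all hypothetical and not taken from the paper.

```python
import math
import random
import statistics
from collections import Counter


def dist(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def kmeans(points, k, iters=20, seed=0):
    """Plain Lloyd's k-means; returns a list of k centroids."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Move each centroid to its cluster mean; leave it in place if the cluster is empty.
        centroids = [
            [sum(col) / len(c) for col in zip(*c)] if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids


def filter_outliers(samples, k=1, factor=3.0):
    """Phase 1 (training): cluster each gesture's samples and drop outliers.

    `samples` maps a gesture label to a list of feature vectors. A sample is
    discarded when its distance to the nearest centroid exceeds `factor` times
    the median distance for that gesture (an assumed rule, not the paper's).
    """
    cleaned = []
    for label, vecs in samples.items():
        cents = kmeans(vecs, min(k, len(vecs)))
        d = [min(dist(v, c) for c in cents) for v in vecs]
        limit = factor * statistics.median(d)
        cleaned += [(v, label) for v, dv in zip(vecs, d) if dv <= limit]
    return cleaned


def knn_predict(train, query, k=3):
    """Phase 2 (recognition): majority label among the k nearest training samples."""
    nearest = sorted(train, key=lambda s: dist(s[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


# Hypothetical 2-D features (e.g. mean x/y acceleration over one gesture window).
samples = {
    "swipe_left": [[-1.0, 0.0], [-0.9, 0.1], [-1.1, -0.1],
                   [-1.0, 0.1], [-1.0, -0.1], [2.0, 2.0]],  # last one is a bad recording
    "swipe_right": [[1.0, 0.0], [0.9, 0.1], [1.1, -0.1], [1.0, 0.1]],
}
train = filter_outliers(samples)
print(len(train))                          # 9: the [2.0, 2.0] sample was dropped
print(knn_predict(train, [-0.95, 0.05]))   # swipe_left
print(knn_predict(train, [0.95, 0.0]))     # swipe_right
```

With the default `k=1`, each gesture's centroid is simply its mean. A larger `k` would let one gesture cover several typical motions, at the risk of an isolated outlier forming its own cluster. The median-based threshold is used instead of a mean-plus-standard-deviation rule because a single extreme sample inflates the standard deviation and can hide itself. The outlier filtering runs once offline, so only the KNN lookup runs during real-time recognition.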
