Author: Duckietown Admin

Interpretable Reinforcement Learning for Visual Policies

General Information

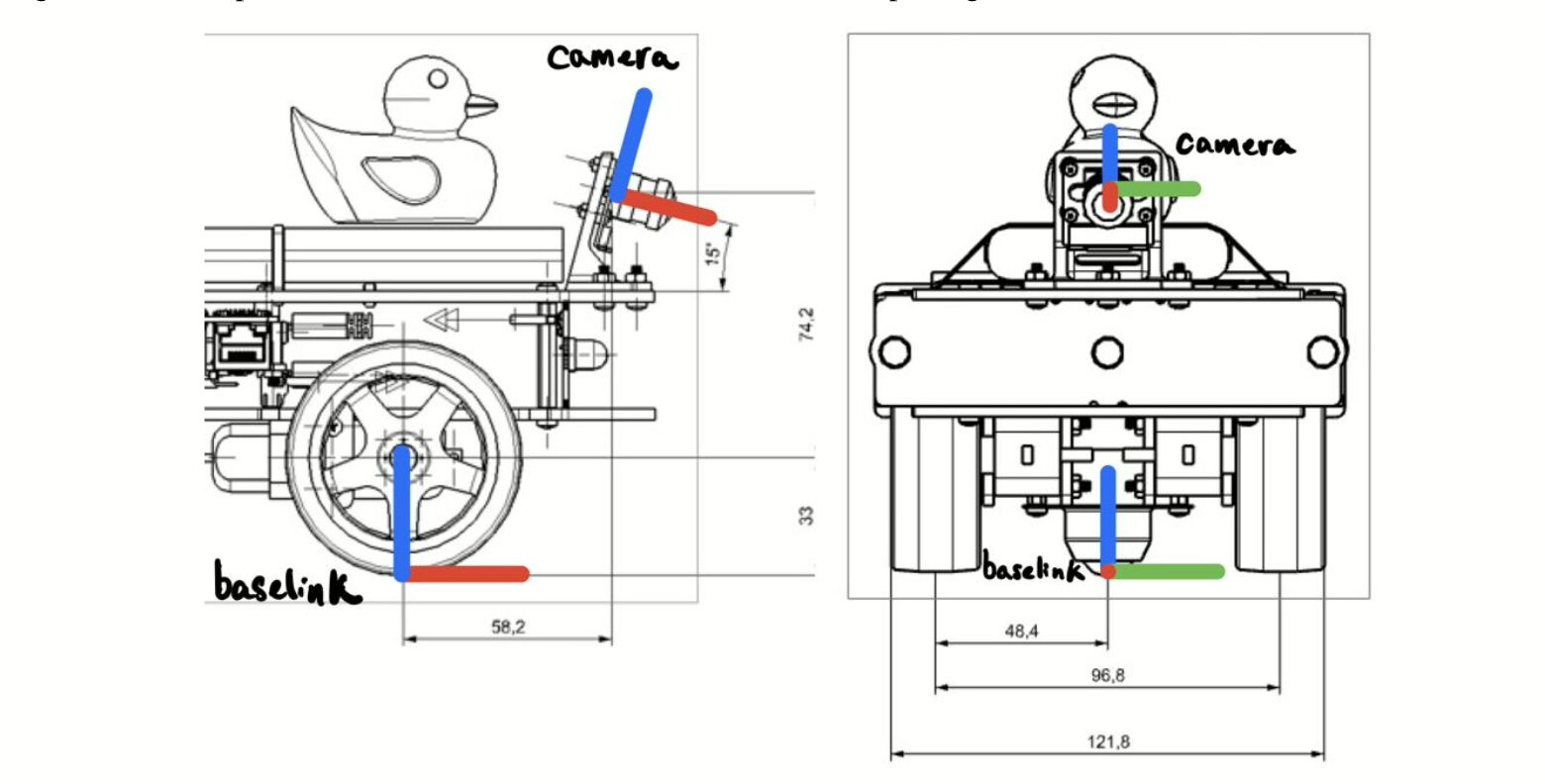

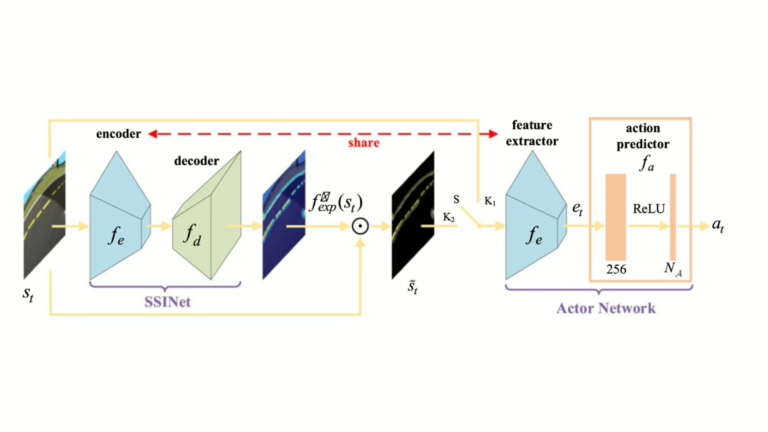

- Title: Self-Supervised Discovering of Interpretable Features for Reinforcement Learning

- Authors: Wenjie Shi, Gao Huang, Shiji Song, Zhuoyuan Wang, Tingyu Lin, Cheng Wu

- Institution: Tsinghua University, Beijing, China

- Citation: W. Shi, G. Huang, S. Song, Z. Wang, T. Lin and C. Wu, "Self-Supervised Discovering of Interpretable Features for Reinforcement Learning," in IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 44, no. 5, pp. 2712-2724, 1 May 2022, doi: 10.1109/TPAMI.2020.3037898.

Interpretable Reinforcement Learning for Visual Policies

Reinforcement Learning (RL) has enabled solving complex problems, especially in relation to visual perception in robotics. An outstanding challenge is allowing humans to make sense of the decision-making process, so as to enable deployment in safety-critical applications such as autonomous driving. This work focuses on the problem of interpretable reinforcement learning in vision-based agents.

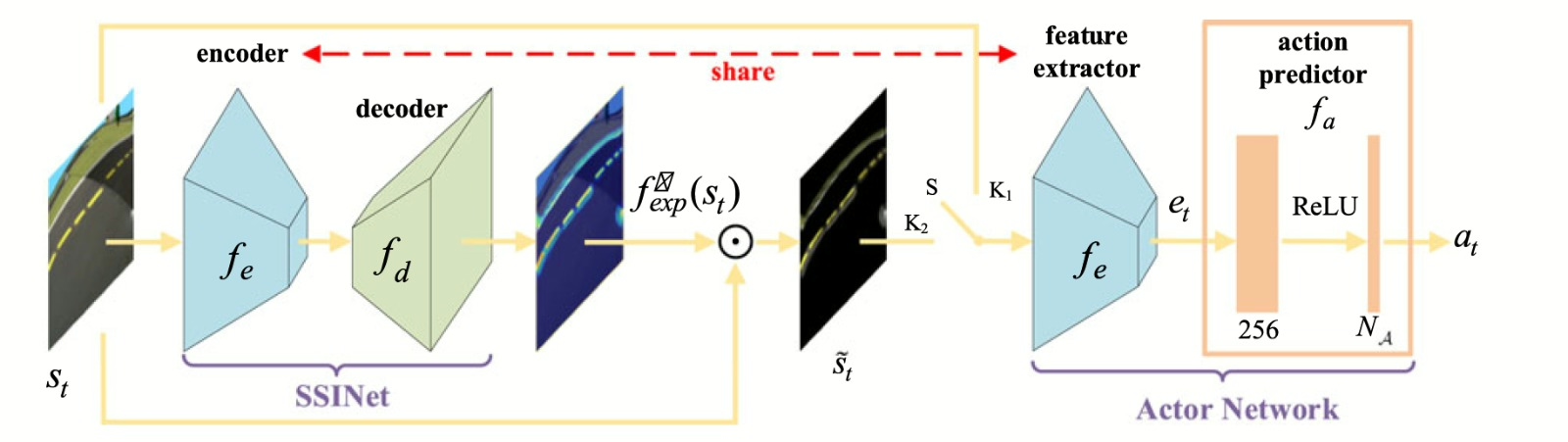

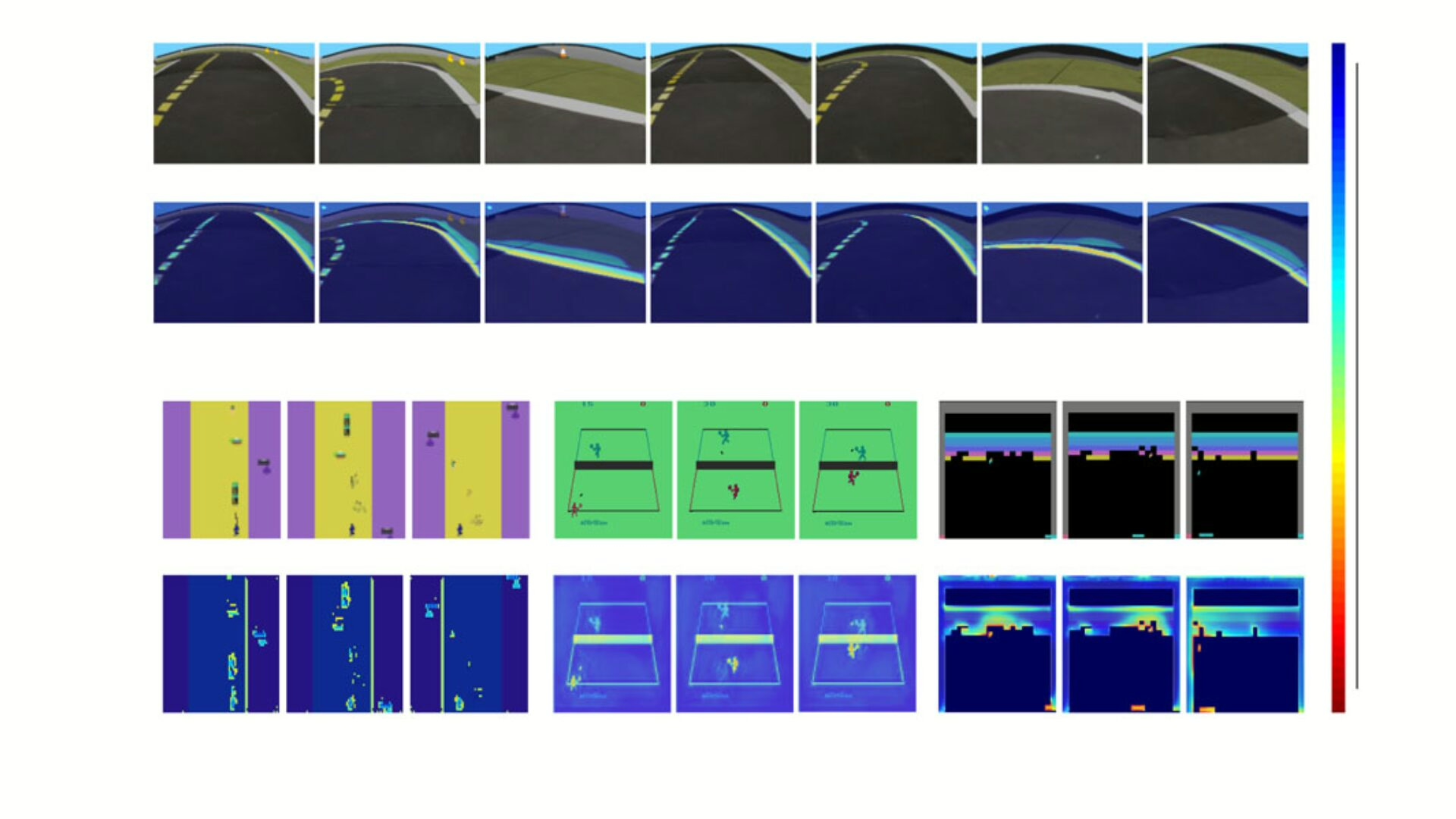

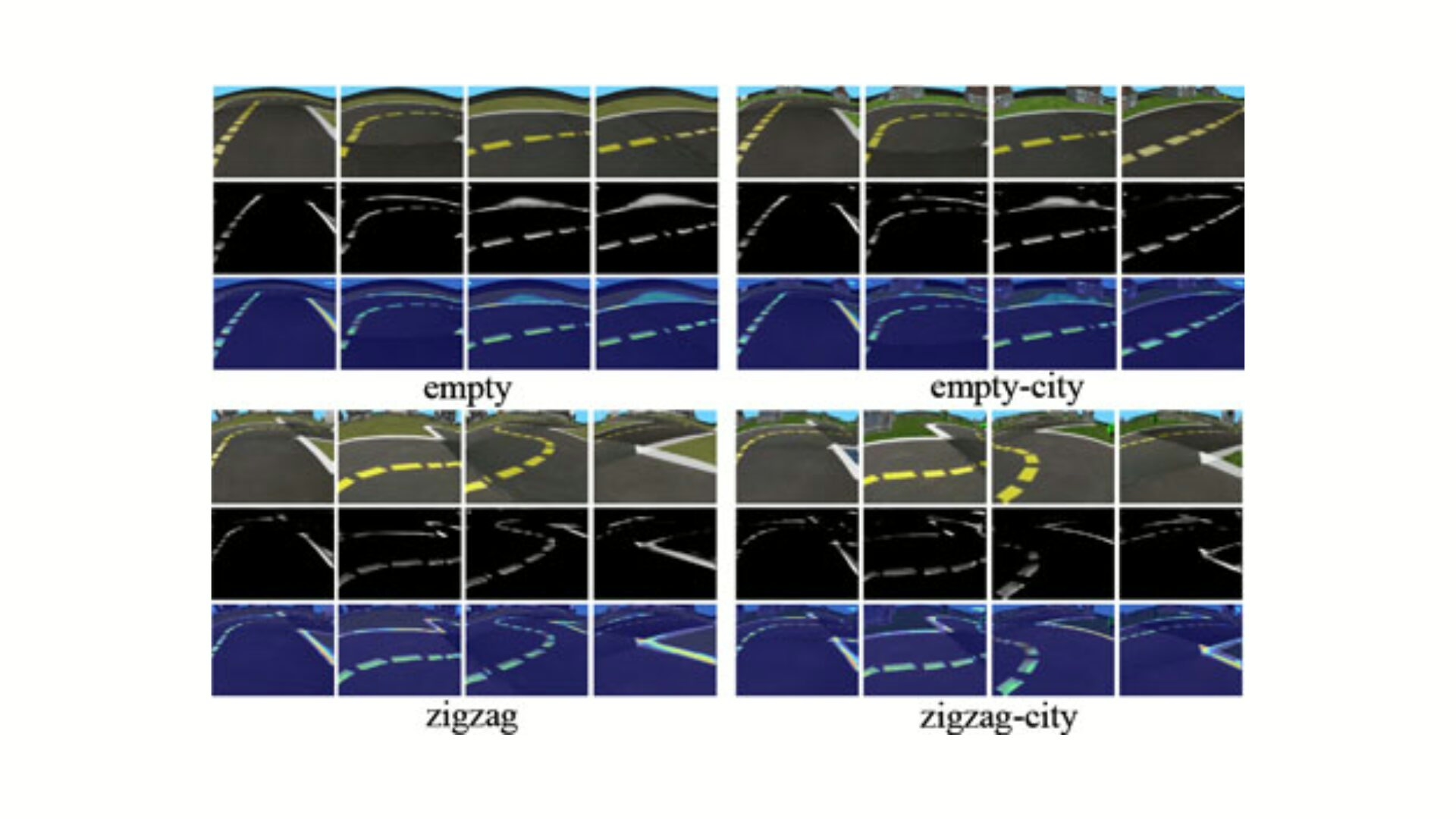

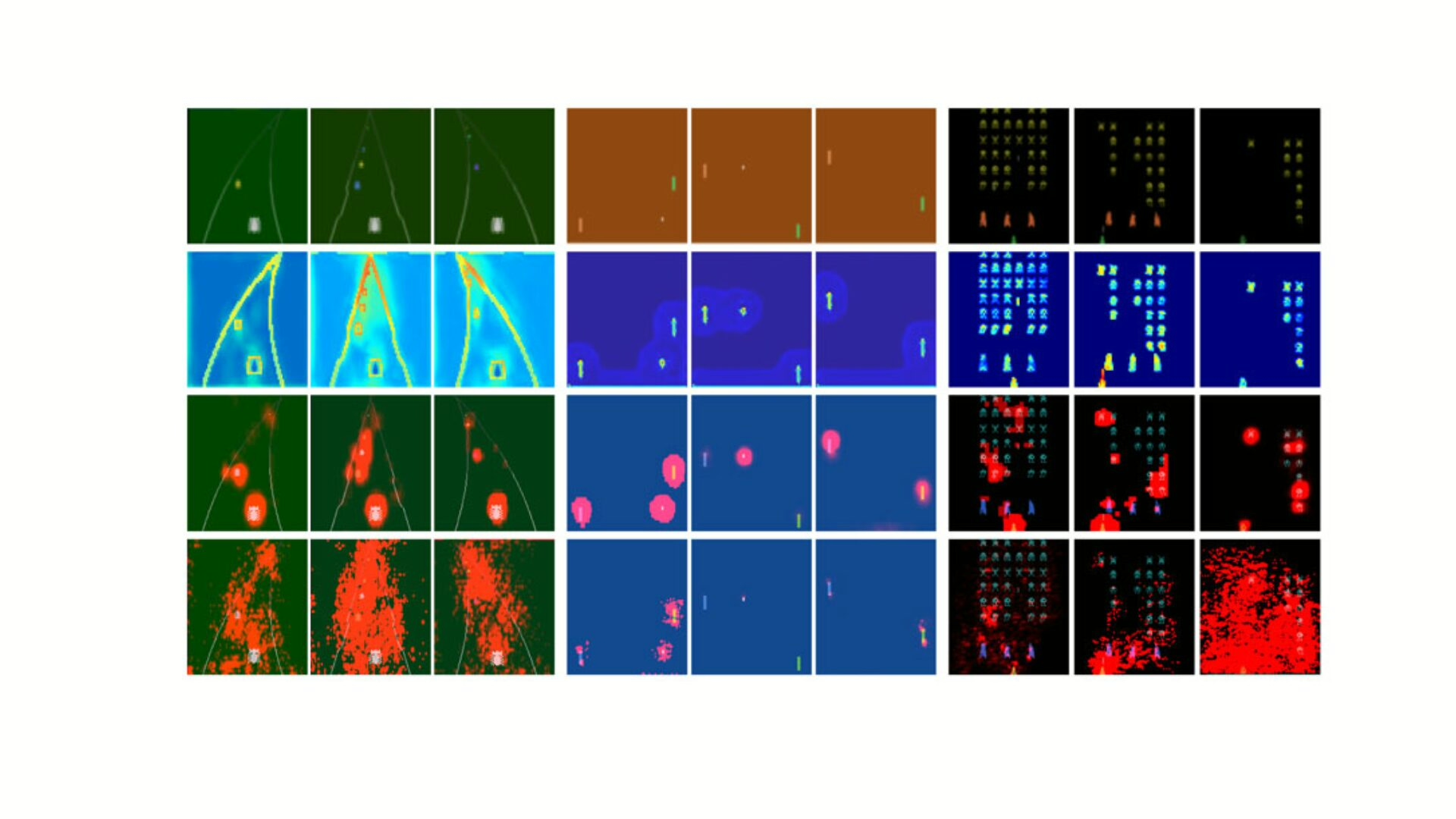

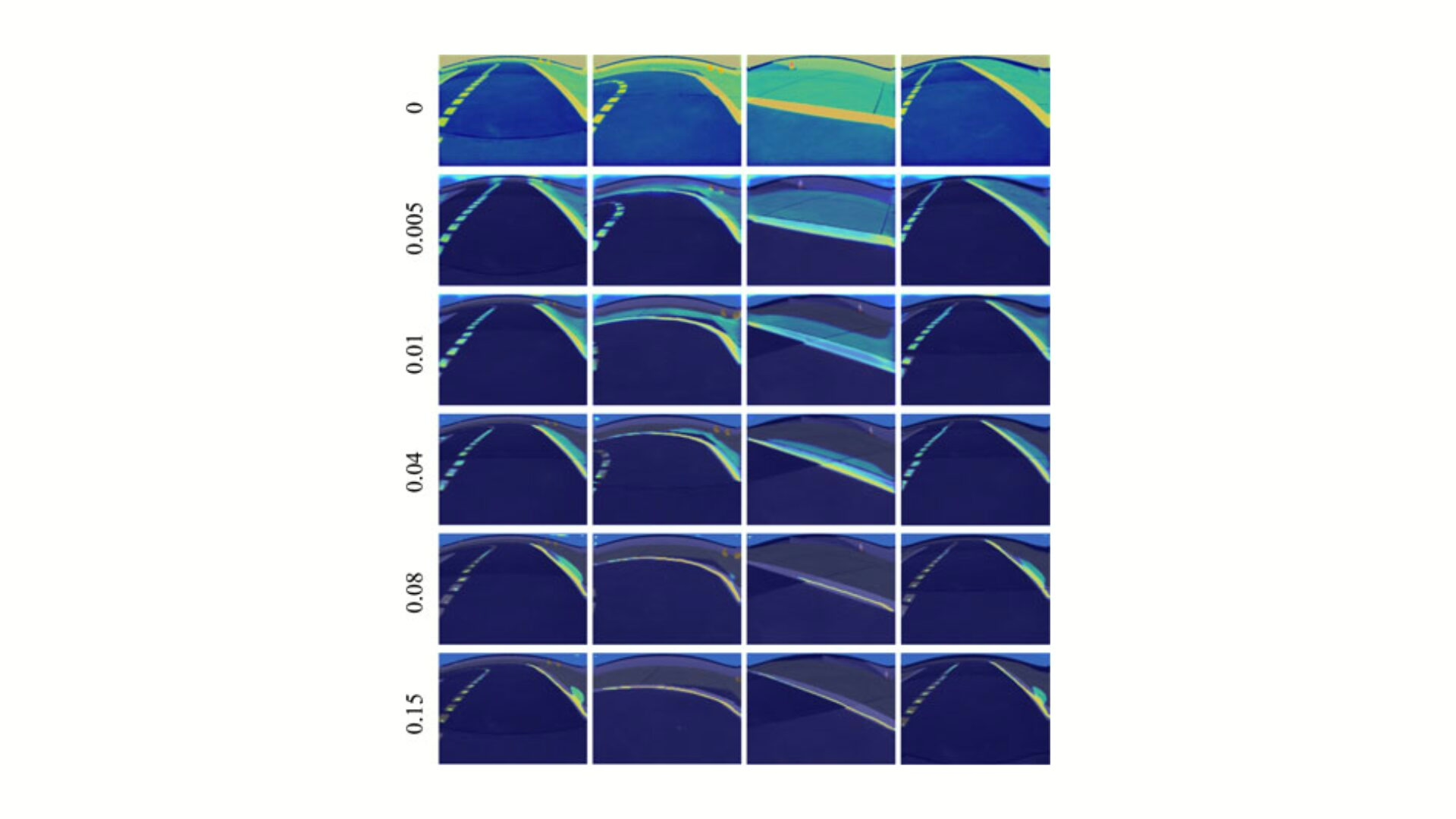

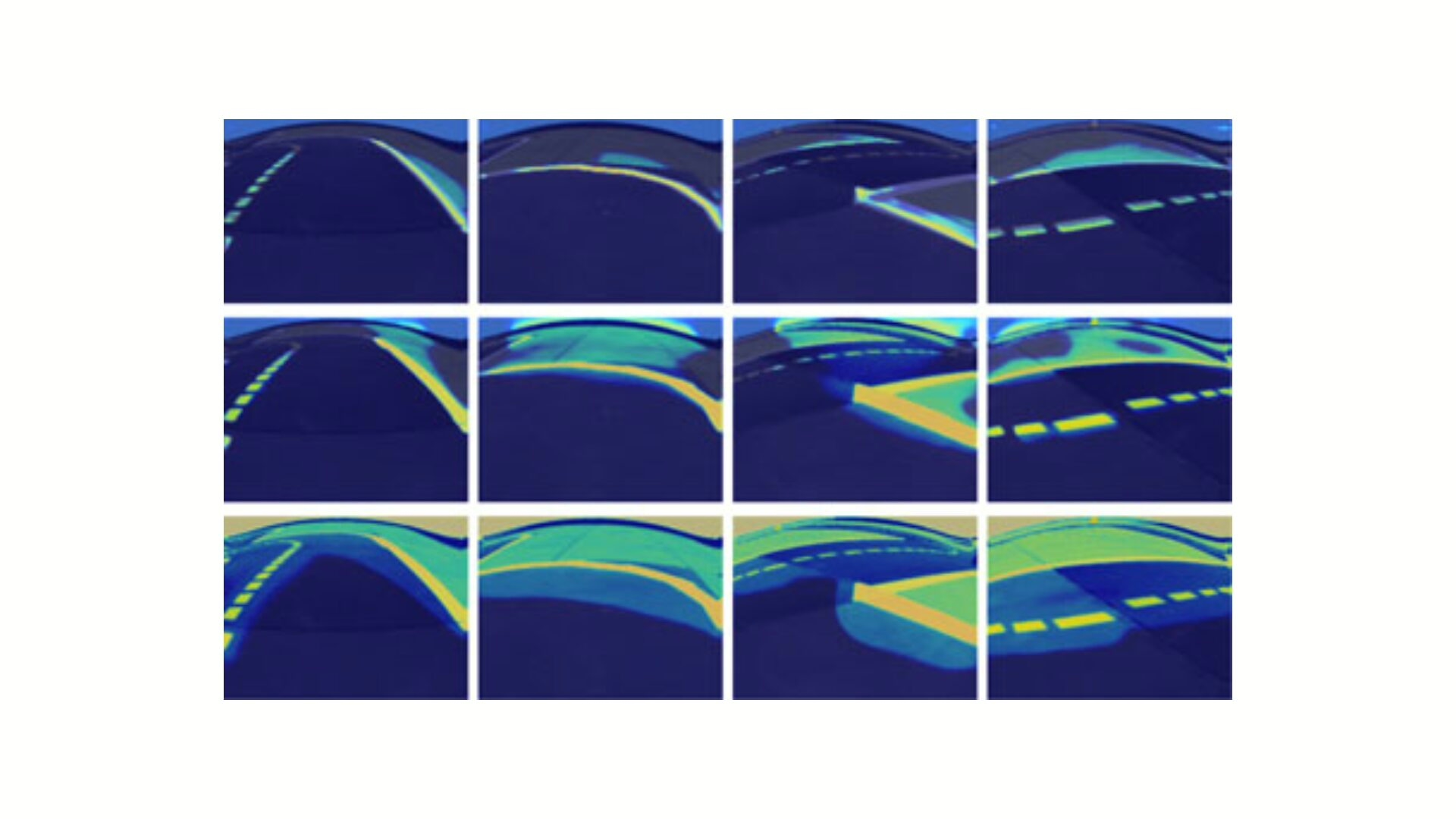

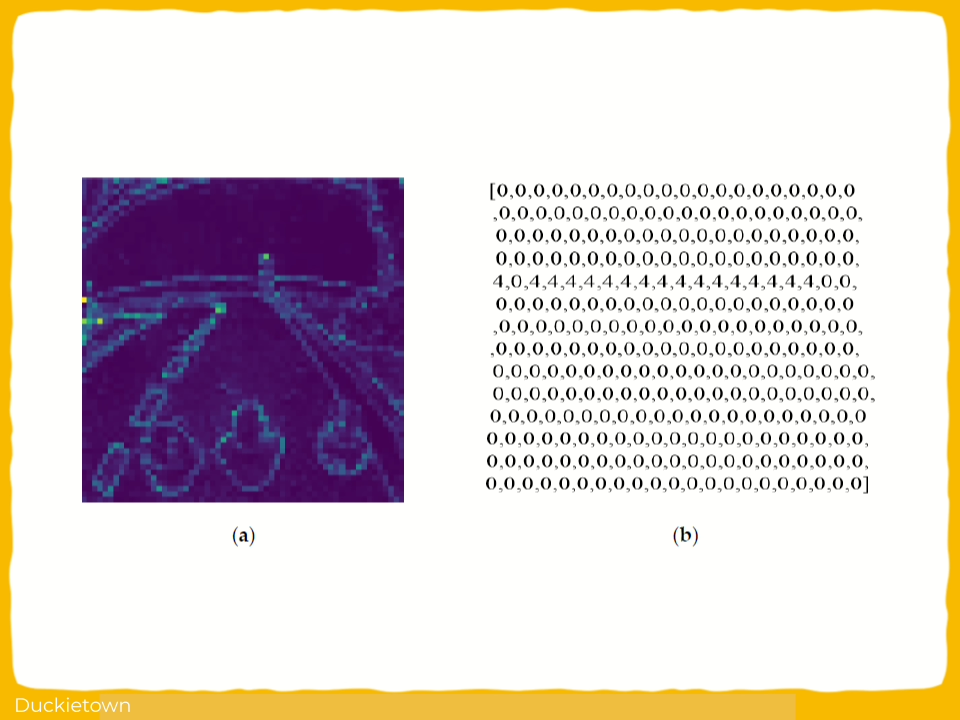

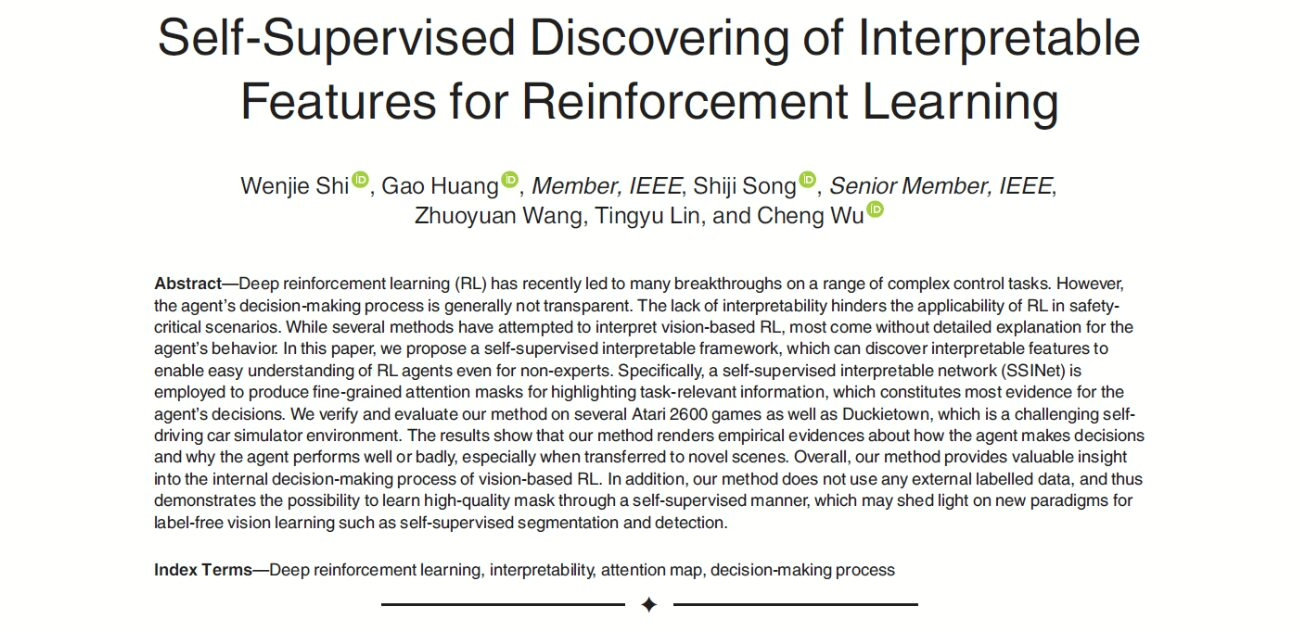

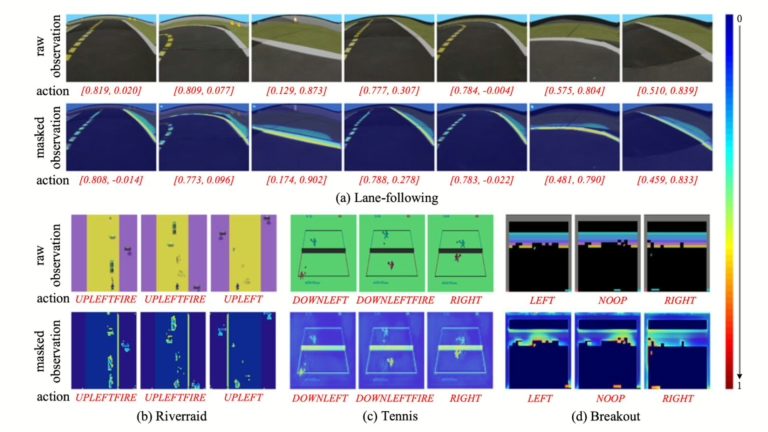

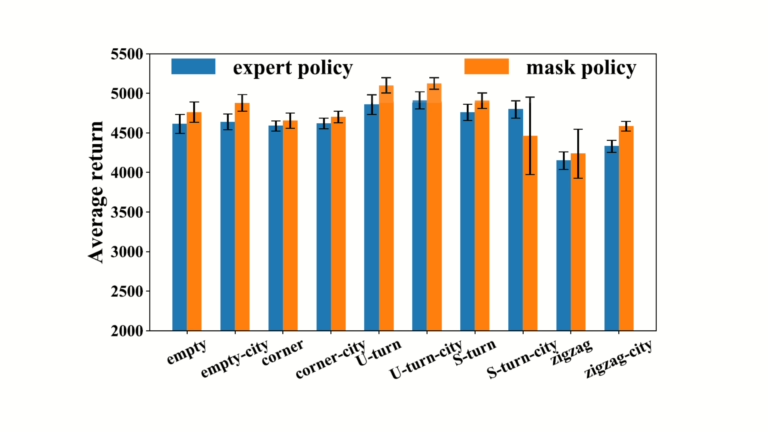

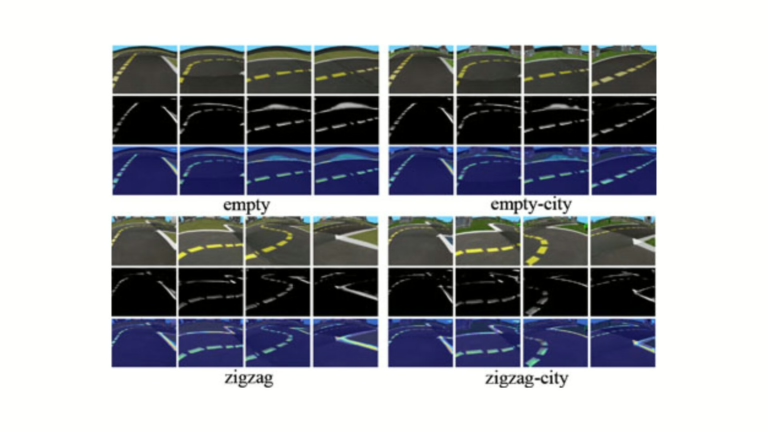

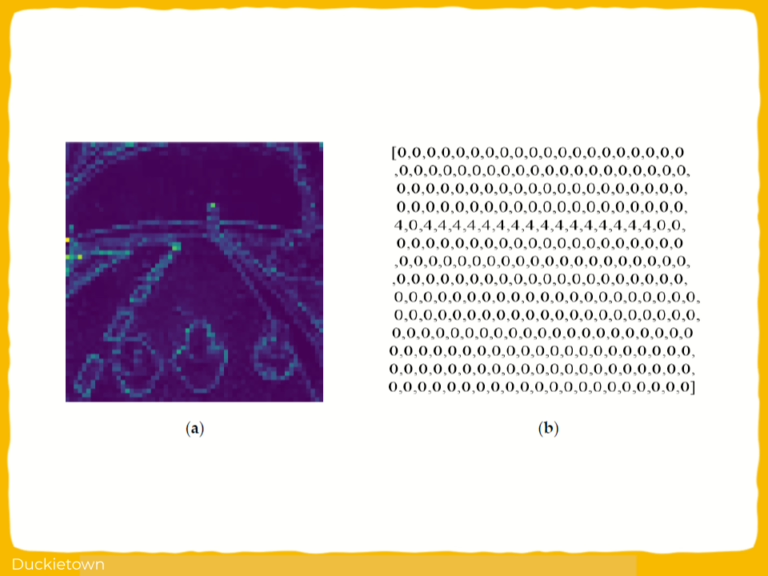

In particular, this research introduces a self-supervised framework for interpretable reinforcement learning in vision-based agents. The focus lies in enhancing policy interpretability by generating precise attention maps through Self-Supervised Attention Mechanisms (SSAM).

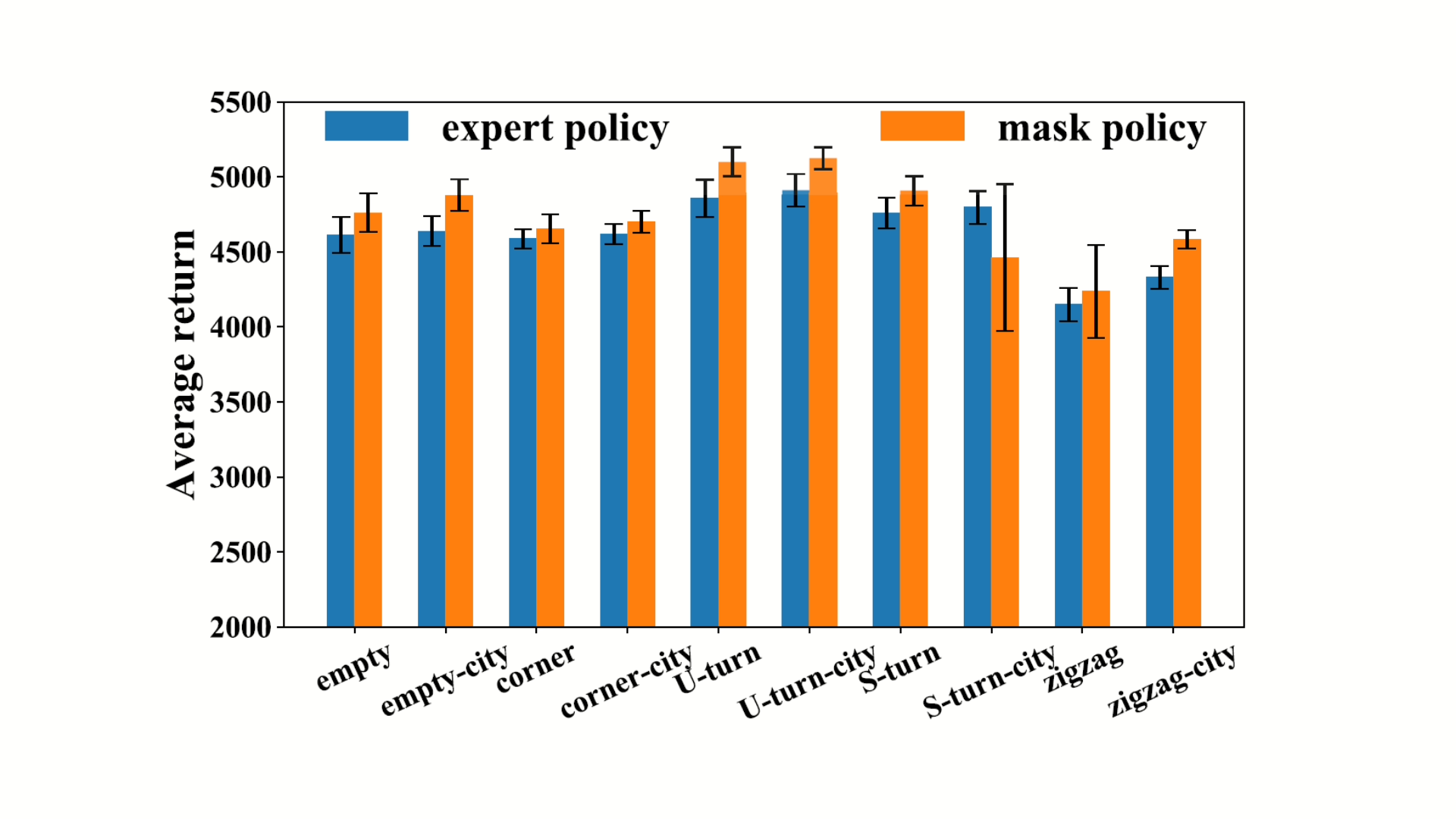

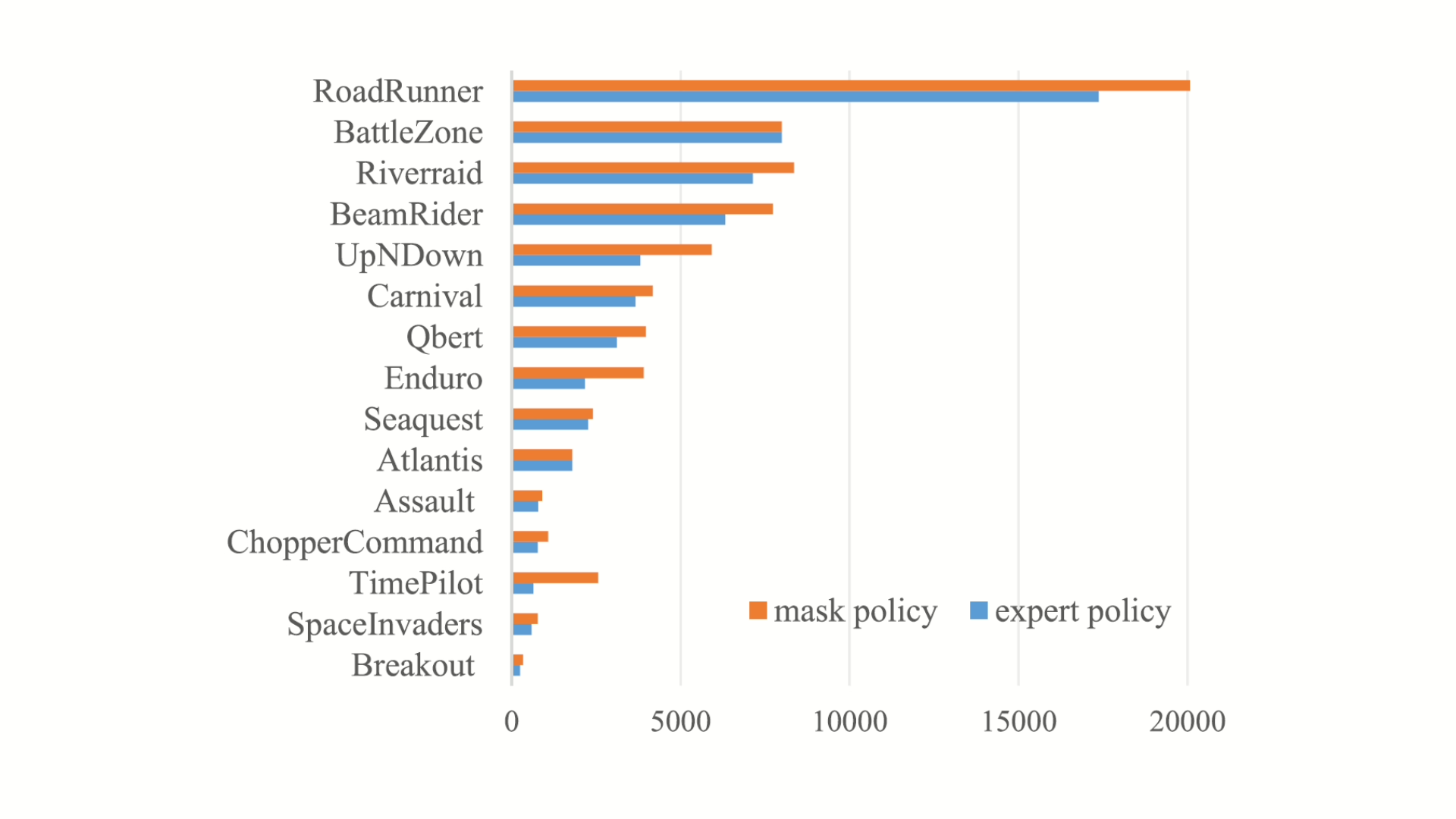

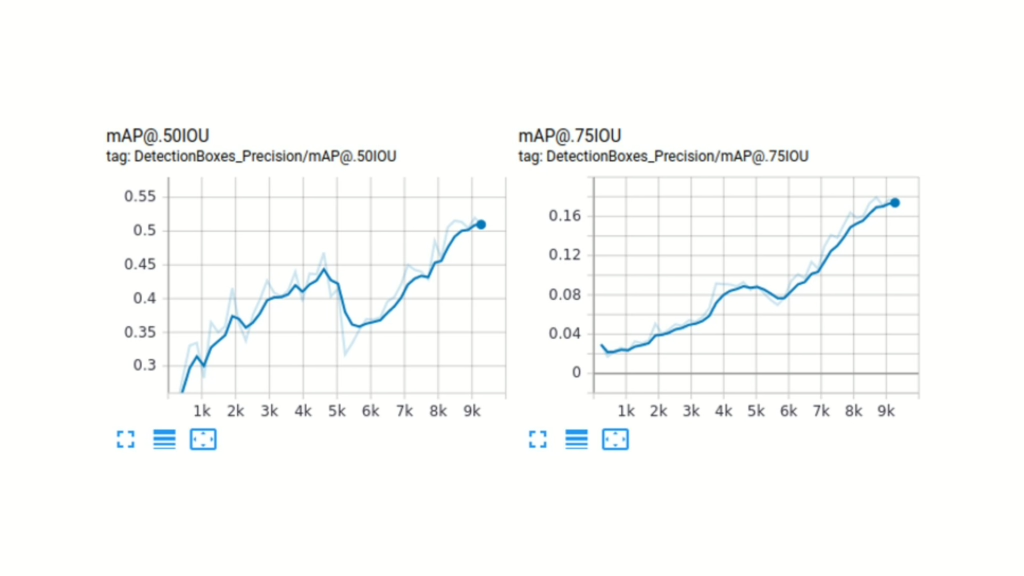

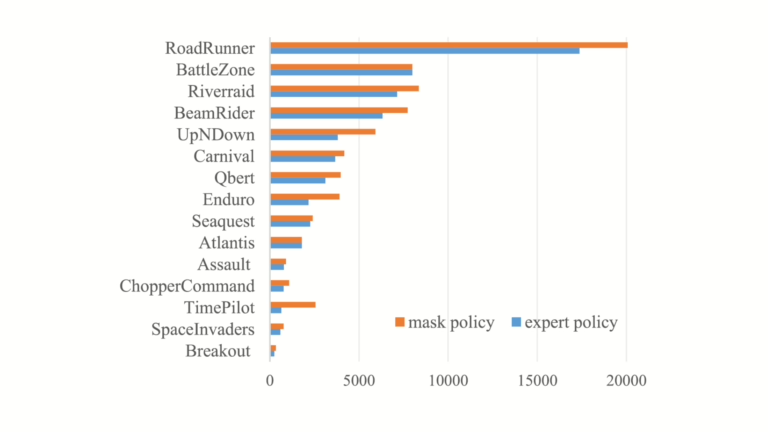

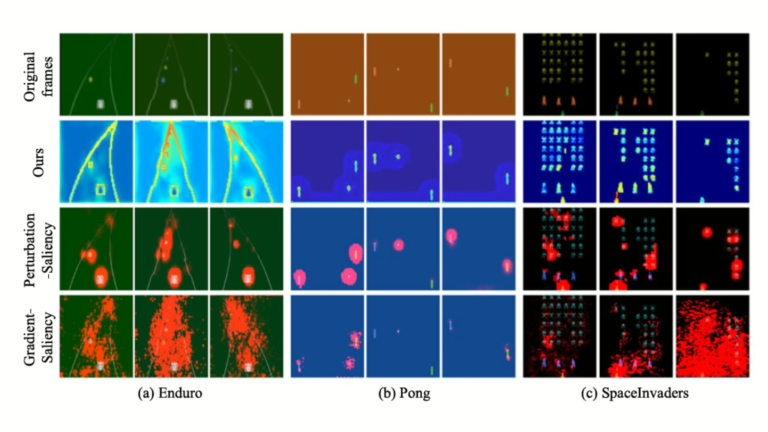

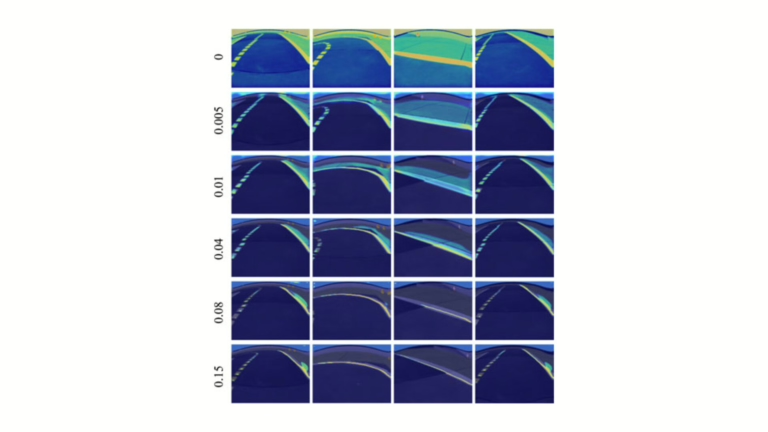

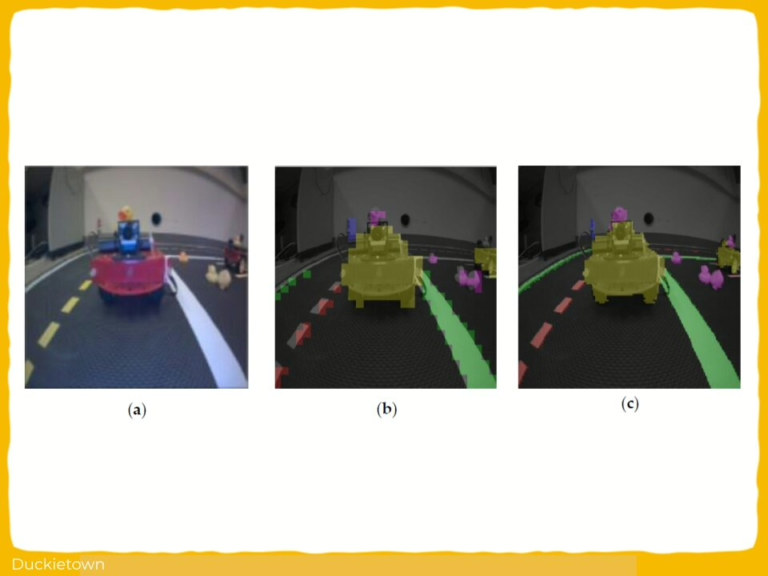

The method does not rely on external labels and works using data generated by a pretrained RL agent. A self-supervised interpretable network (SSINet) is deployed to identify task-relevant visual features. The approach is evaluated across multiple environments, including Atari and Duckietown.

Key components of the method include:

- A two-stage training process using pretrained policies and frozen encoders

- Attention masks optimized using behavior resemblance and sparsity constraints

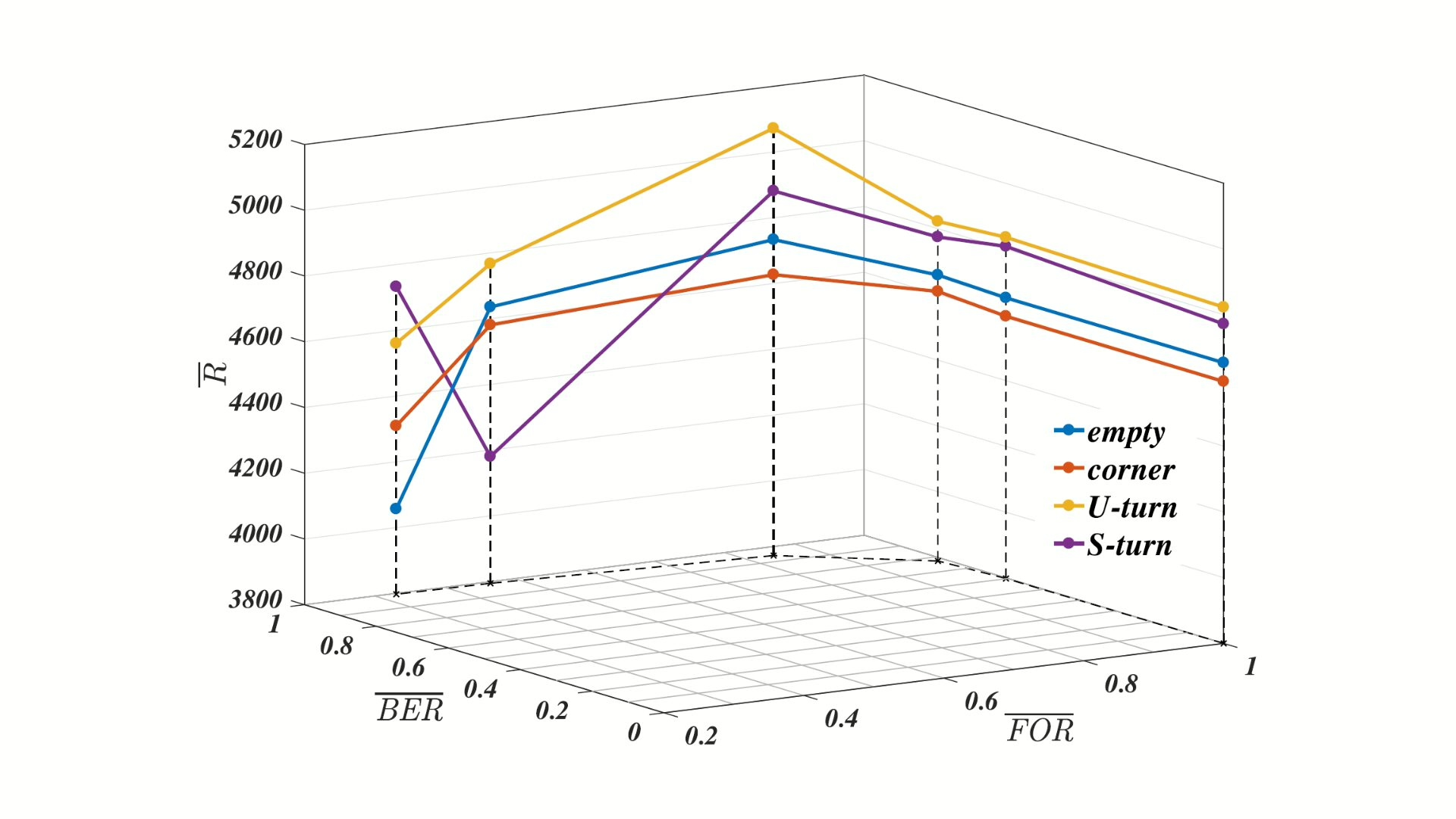

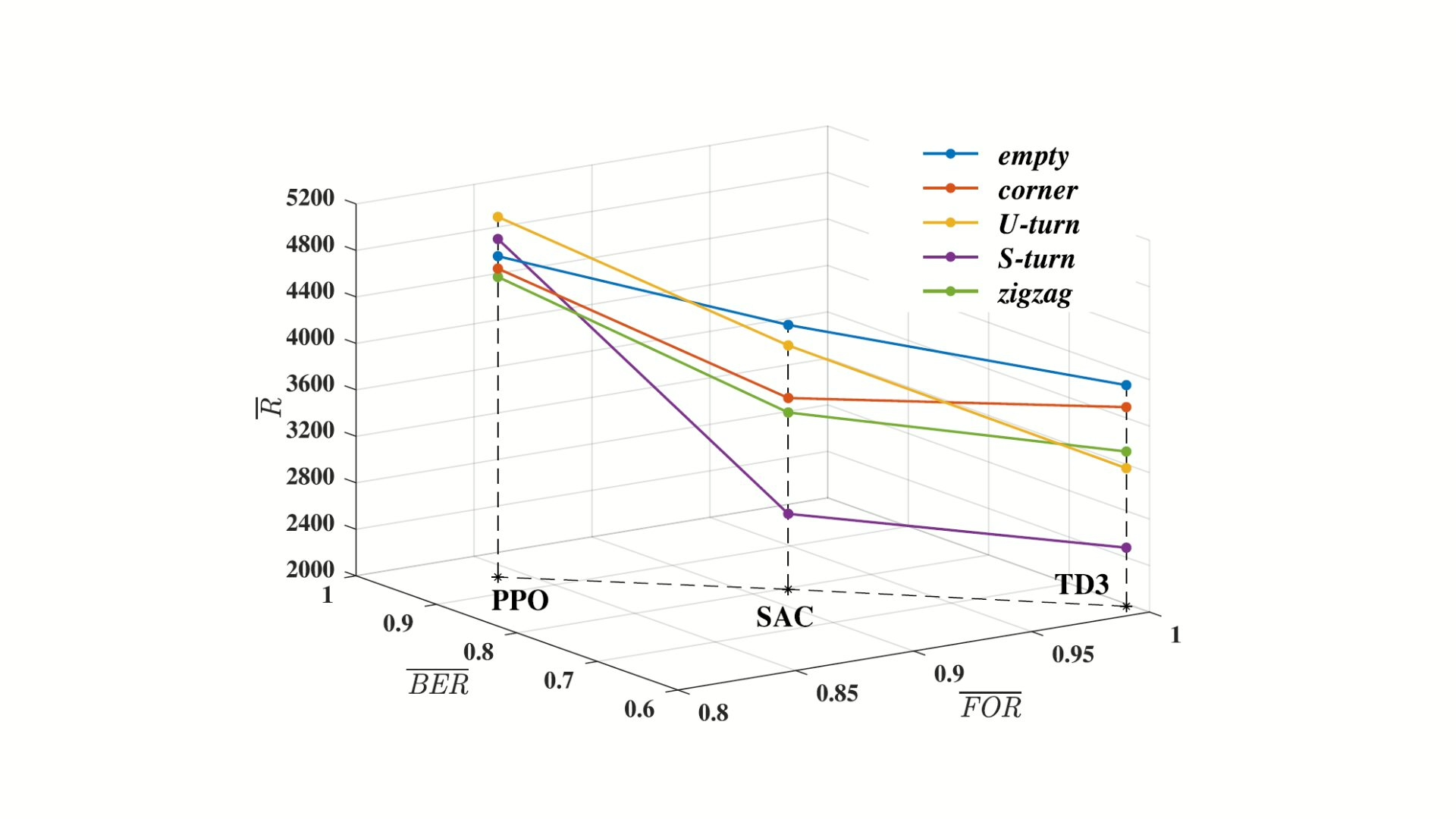

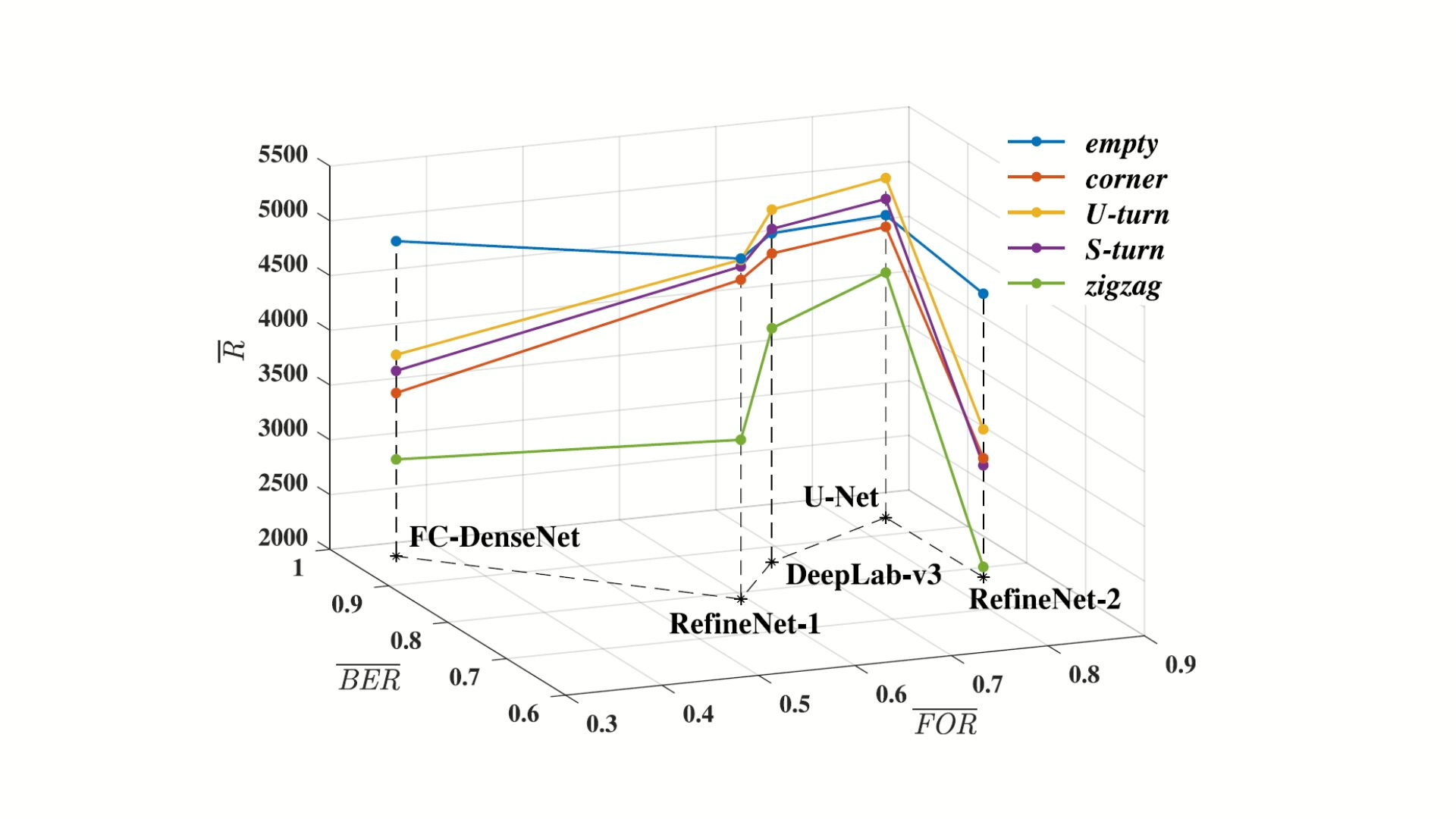

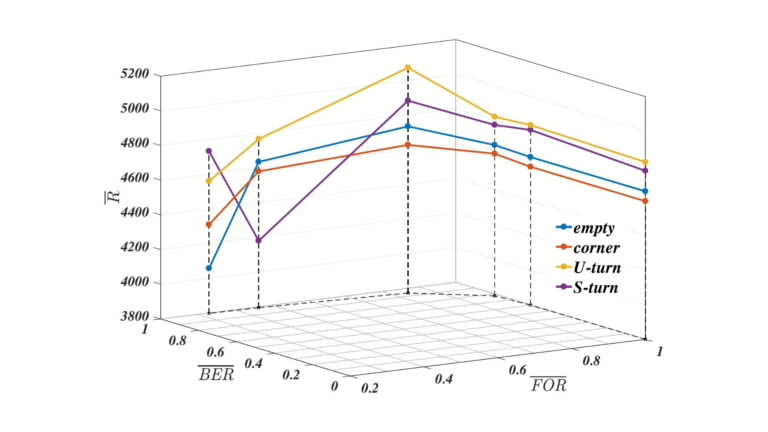

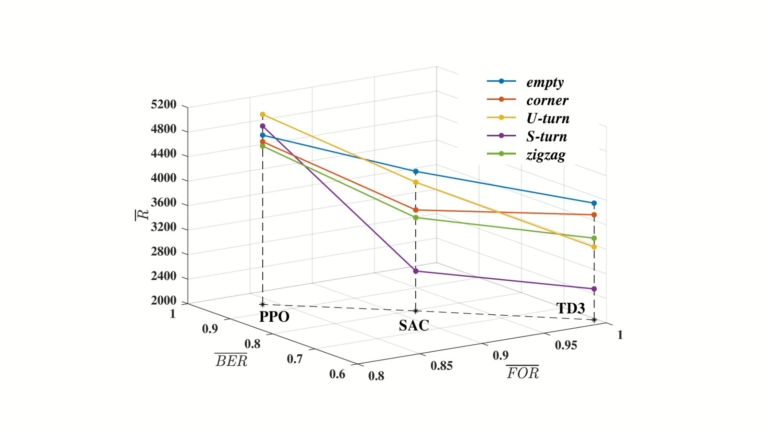

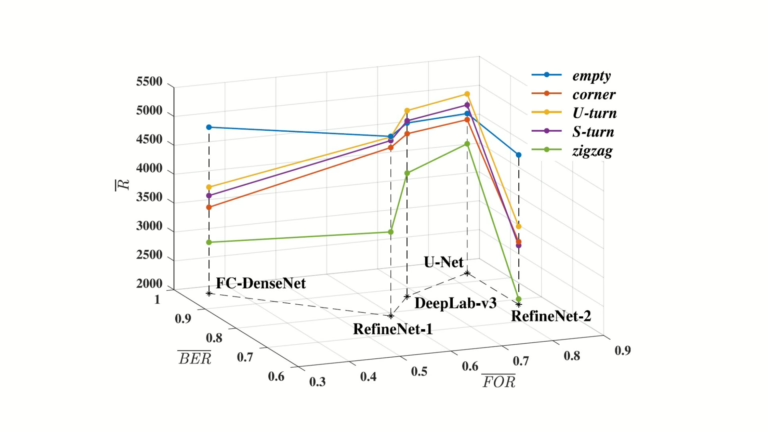

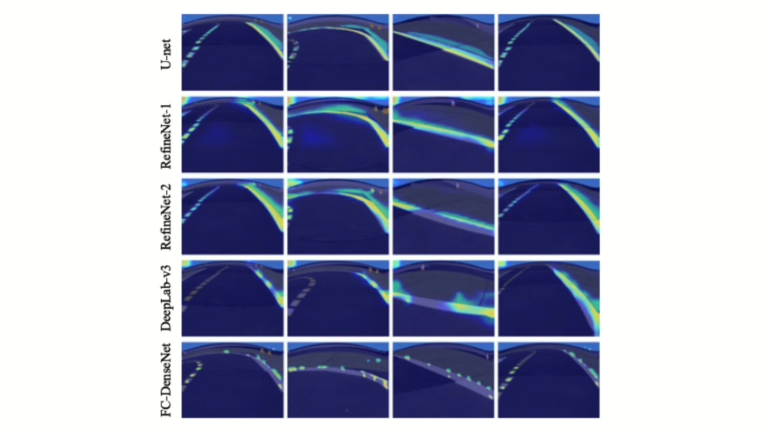

- Quantitative evaluation using FOR and BER metrics for attention quality

- Comparative analysis with gradient-based and perturbation-based saliency methods

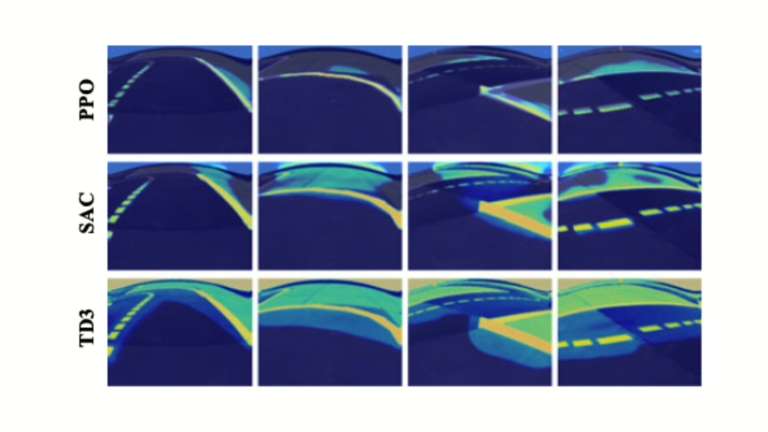

- Application across various architectures and RL algorithms including PPO, SAC, and TD3
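The "behavior resemblance and sparsity" objective listed among the key components can be illustrated with a small sketch. This is not the authors' implementation: the function name `mask_loss`, the squared-error form of the resemblance term, the L1 form of the sparsity term, and the weight `alpha` are all assumptions chosen for illustration.

```python
import numpy as np

def mask_loss(action_full, action_masked, mask, alpha=0.01):
    """Illustrative mask objective: behavior resemblance plus sparsity.

    action_full   -- action of the pretrained agent on the original frame
    action_masked -- action of the same agent on the masked frame
    mask          -- attention mask with values in [0, 1]
    alpha         -- hypothetical weight of the sparsity term
    """
    action_full = np.asarray(action_full, dtype=float)
    action_masked = np.asarray(action_masked, dtype=float)
    mask = np.asarray(mask, dtype=float)
    # Resemblance: the masked input should lead to the same behavior.
    resemblance = np.mean((action_full - action_masked) ** 2)
    # Sparsity: the mask should keep as little of the image as possible.
    sparsity = np.mean(np.abs(mask))
    return resemblance + alpha * sparsity
```

The two terms pull in opposite directions: an all-ones mask preserves behavior perfectly but is not sparse, while an all-zeros mask is maximally sparse but typically changes the agent's actions.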

The proposed approach isolates relevant decision-making cues, offering insight into agent reasoning. In Duckietown, the framework demonstrates how visual interpretability can aid in diagnosing performance bottlenecks and agent failures, offering a scalable model for interpretable reinforcement learning in autonomous navigation systems.

Highlights - interpretable reinforcement learning for visual policies

Here is a visual tour of the implementation of interpretable reinforcement learning for visual policies by the authors. For all the details, check out the full paper.

Abstract

Here is the abstract of the work, directly in the words of the authors:

Deep reinforcement learning (RL) has recently led to many breakthroughs on a range of complex control tasks. However, the agent’s decision-making process is generally not transparent. The lack of interpretability hinders the applicability of RL in safety-critical scenarios. While several methods have attempted to interpret vision-based RL, most come without detailed explanation for the agent’s behavior. In this paper, we propose a self-supervised interpretable framework, which can discover interpretable features to enable easy understanding of RL agents even for non-experts. Specifically, a self-supervised interpretable network (SSINet) is employed to produce fine-grained attention masks for highlighting task-relevant information, which constitutes most evidence for the agent’s decisions. We verify and evaluate our method on several Atari 2600 games as well as Duckietown, which is a challenging self-driving car simulator environment. The results show that our method renders empirical evidences about how the agent makes decisions and why the agent performs well or badly, especially when transferred to novel scenes. Overall, our method provides valuable insight into the internal decision-making process of vision-based RL. In addition, our method does not use any external labelled data, and thus demonstrates the possibility to learn high-quality mask through a self-supervised manner, which may shed light on new paradigms for label-free vision learning such as self-supervised segmentation and detection.

Conclusion - interpretable reinforcement learning for visual policies

Here is the conclusion according to the authors of this paper:

In this paper, we addressed the growing demand for human-interpretable vision-based RL from a fresh perspective. To that end, we proposed a general self-supervised interpretable framework, which can discover interpretable features for easily understanding the agent’s decision-making process. Concretely, a self-supervised interpretable network (SSINet) was employed to produce high-resolution and sharp attention masks for highlighting task-relevant information, which constitutes most evidence for the agent’s decisions. Then, our method was applied to render empirical evidences about how the agent makes decisions and why the agent performs well or badly, especially when transferred to novel scenes. Overall, our work takes a significant step towards interpretable vision-based RL. Moreover, our method exhibits several appealing benefits. First, our interpretable framework is applicable to any RL model taking as input visual images. Second, our method does not use any external labelled data. Finally, we emphasize that our method demonstrates the possibility to learn high-quality mask through a self-supervised manner, which provides an exciting avenue for applying RL to self automatically labelling and label-free vision learning such as self-supervised segmentation and detection.

Did this work spark your curiosity?

Check out the following works on vehicle autonomy with Duckietown:

Project Authors

Wenjie Shi received the BS degree from the School of Hydropower and Information Engineering, Huazhong University of Science and Technology, Wuhan, China, in 2016. He is currently working toward the Ph.D. degree in control science and engineering from the Department of Automation, Institute of Industrial Intelligence and Systems, Tsinghua University, Beijing, China.

Gao Huang (Member, IEEE) received the B.S. degree in automation from Beihang University, Beijing, China, in 2009, and the Ph.D. degree in automation from Tsinghua University, Beijing, in 2015. He is currently an Associate Professor with the Department of Automation, Tsinghua University.

Shiji Song (Senior Member, IEEE) received the Ph.D. degree in mathematics from the Department of Mathematics, Harbin Institute of Technology, Harbin, China, in 1996. He is currently a Professor at the Department of Automation, Tsinghua University, Beijing, China.

Zhuoyuan Wang (IEEE) is currently a Ph.D. student at Carnegie Mellon University, and holds a B.S. degree in control science and engineering from the Department of Automation, Tsinghua University, Beijing, China.

Tingyu Lin received the B.S. degree and the Ph.D. degree in control system from the School of Automation Science and Electrical Engineering at Beihang University in 2007 and 2014, respectively. He is now a Member of China Simulation Federation (CSF).

Cheng Wu received the M.Sc. degree in electrical engineering from Tsinghua University, Beijing, China, in 1966. He is currently a Professor with the Department of Automation, Tsinghua University.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.

Features for Efficient Autonomous Navigation in Duckietown


Project Resources

- Objective: Improving the range of autonomous behaviors of physical Duckiebots in Duckietown.

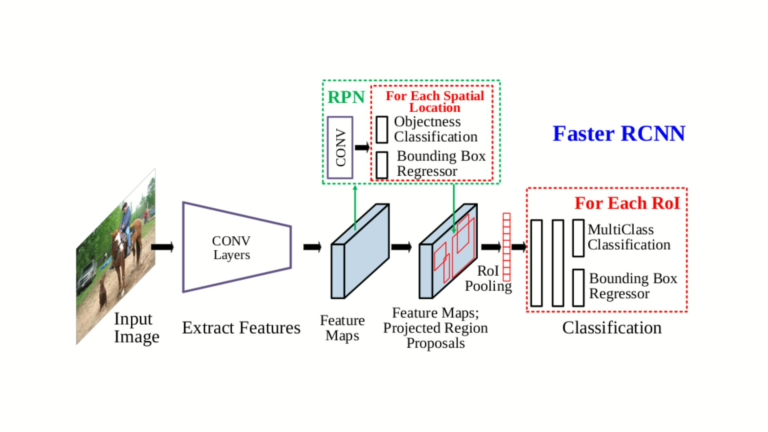

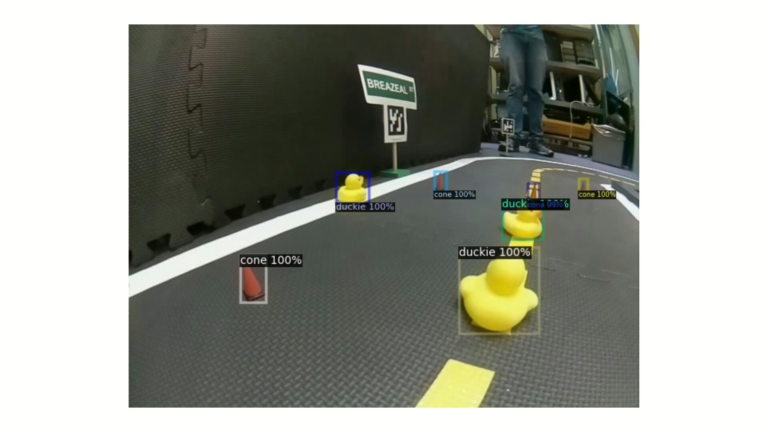

- Approach: Improving and integrating novel control (pure pursuit), perception (YOLO), and navigation algorithms on the Duckietown out-of-the-box "lane following" visual pipeline for autonomy.

- Authors: Servesh Khandwe, Ayush Kumar, and Parth Karkar from the Technical University of Munich, Germany.

Project highlights

Visual Feedback for Autonomous Navigation in Duckietown - the objectives

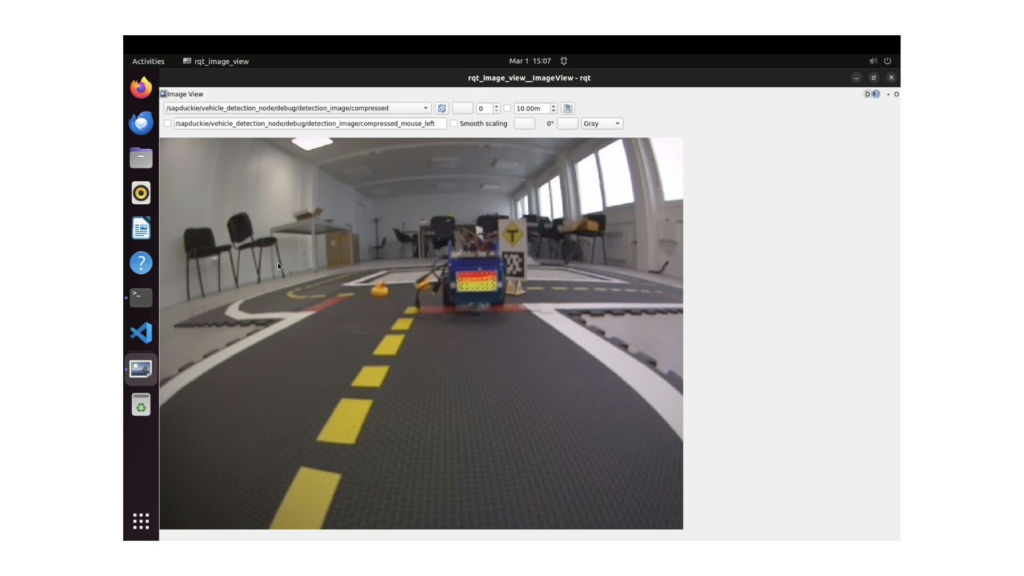

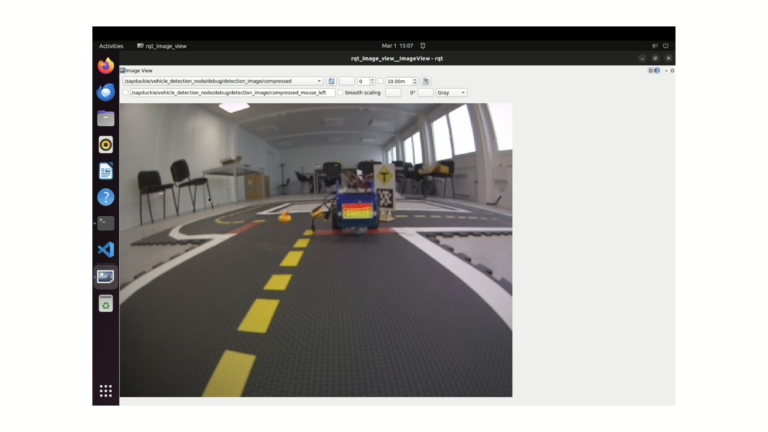

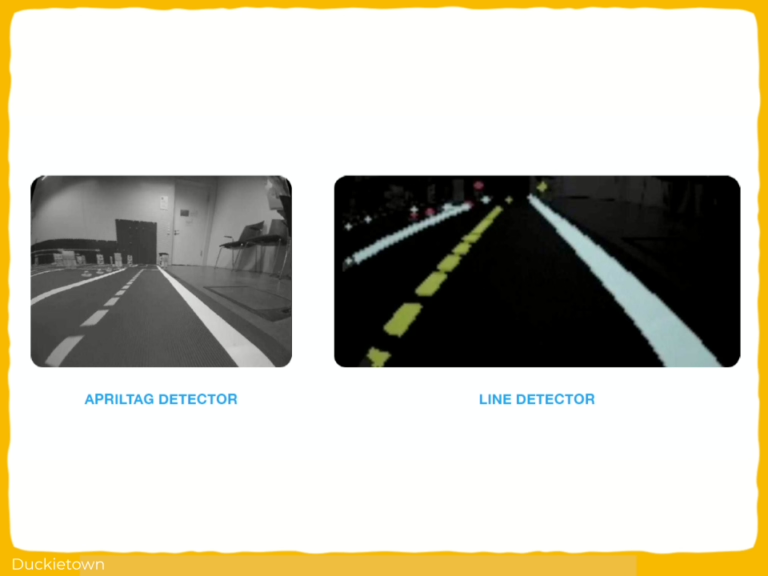

This project by students at the Technical University of Munich (TUM) builds on the preexisting Duckietown autonomy stack to add, reintegrate, or improve much-needed autonomous navigation features: improved control (pure pursuit instead of PID), red stop line detection, AprilTag detection, intersection navigation, and obstacle detection (using YOLOv3), making Duckietowns more complex and interesting!

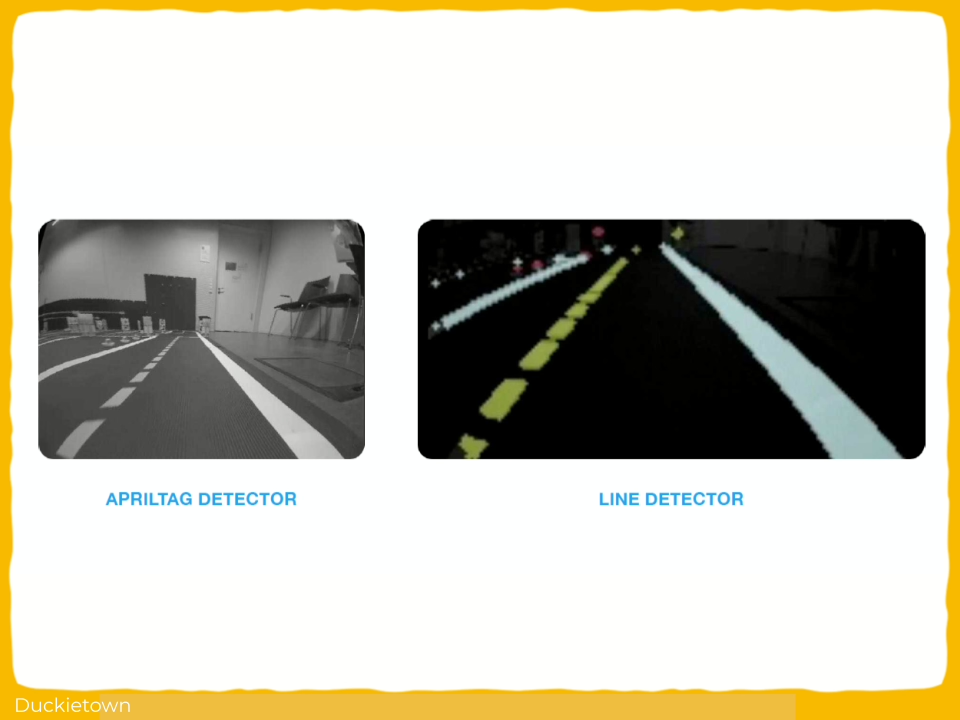

The resulting agent includes modules for lane following, stop line detection, and intersection handling using AprilTags, following the legacy infrastructure of Duckietown.

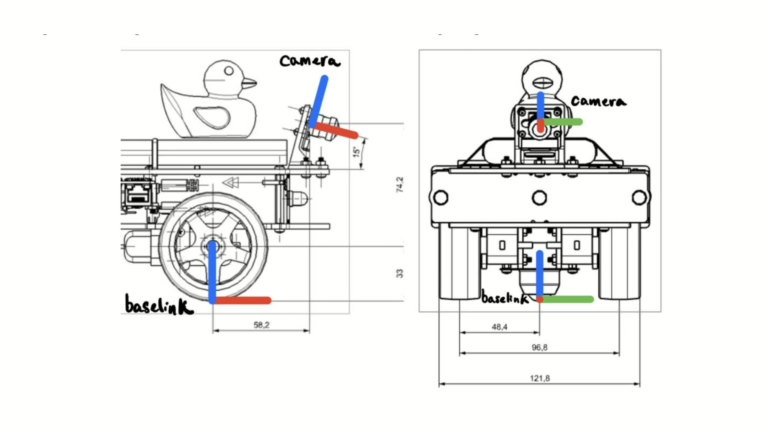

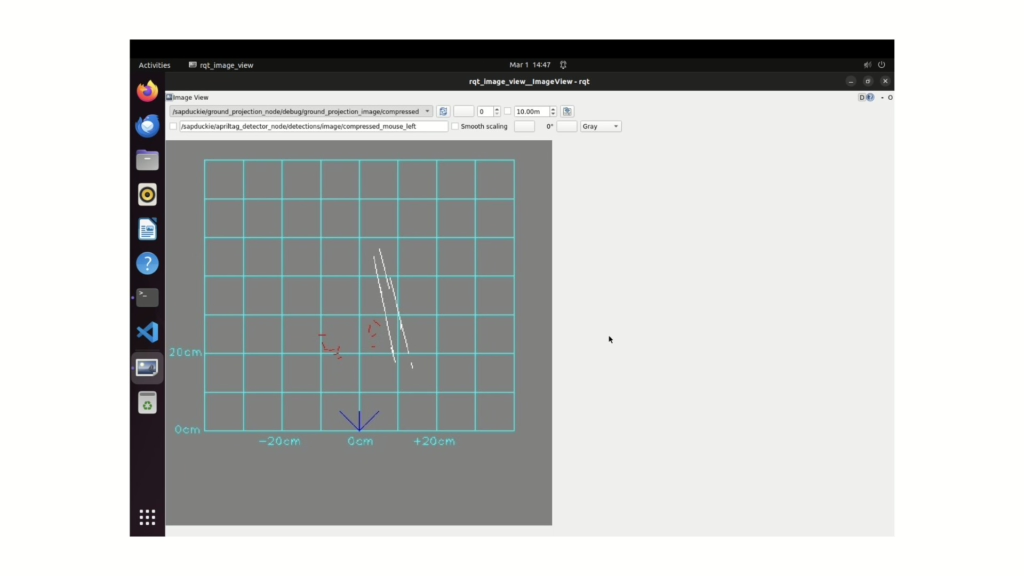

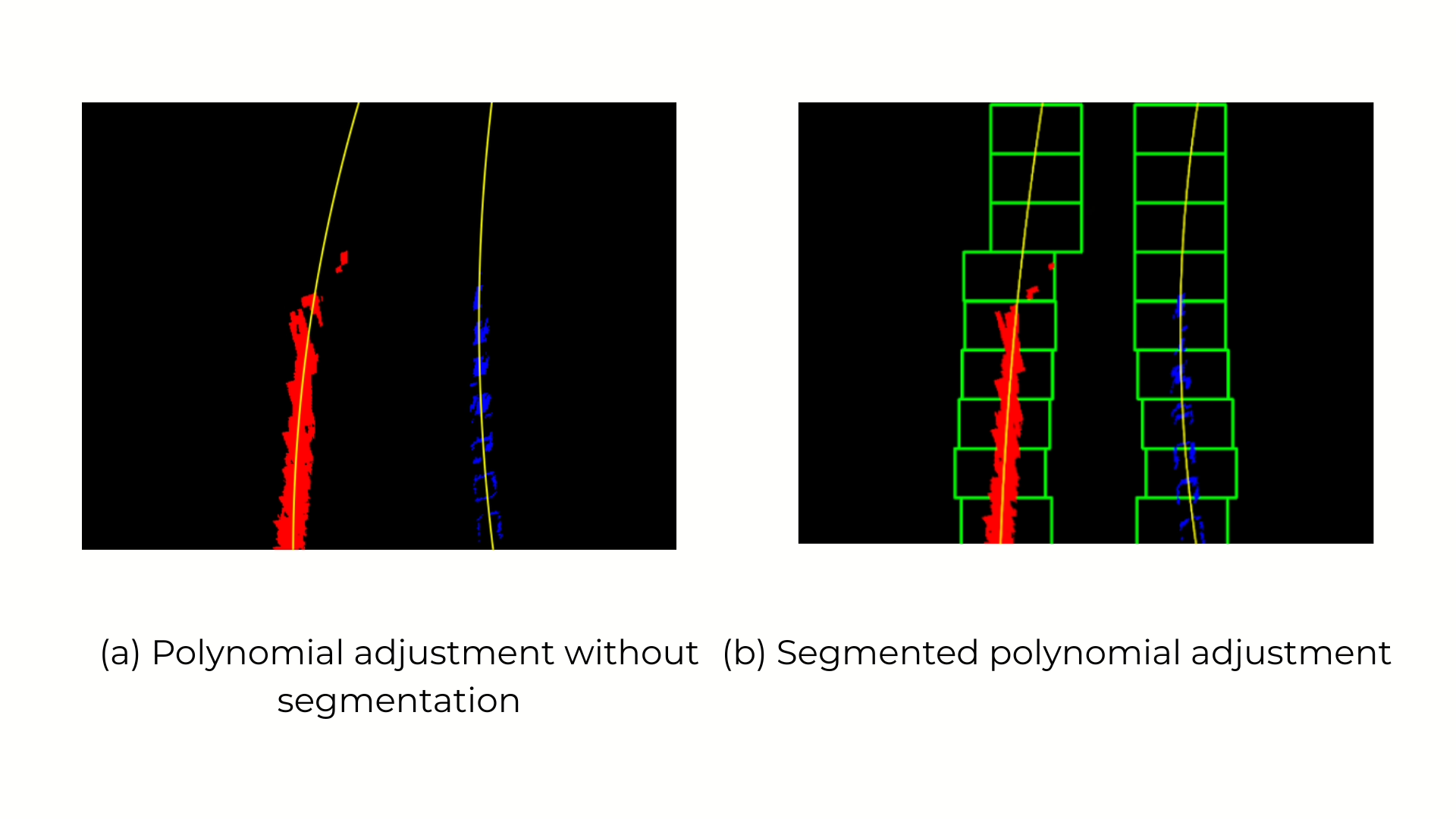

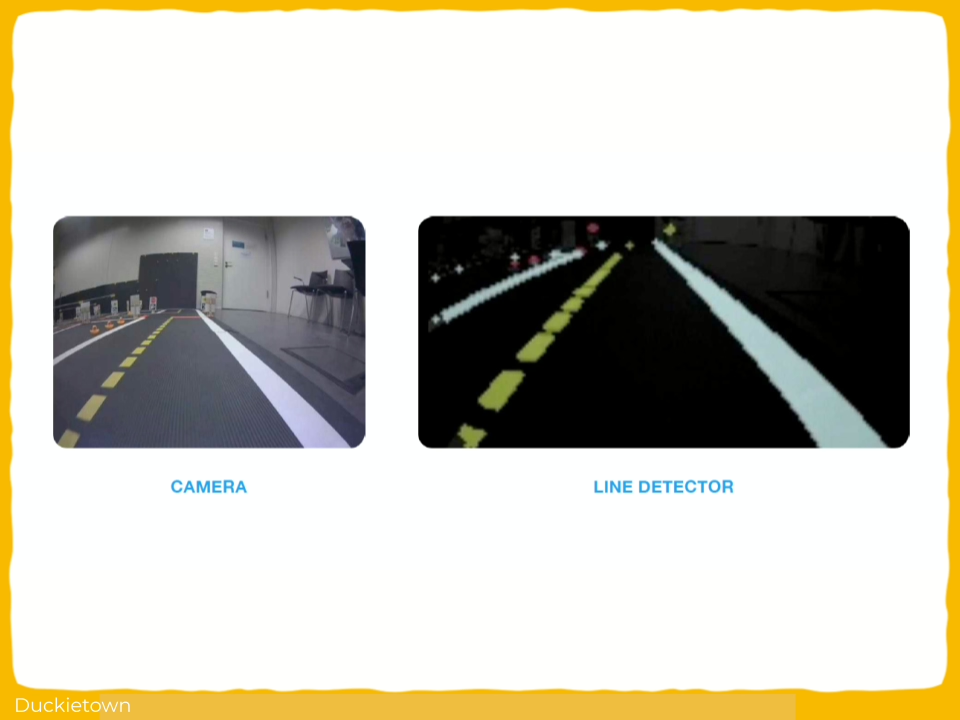

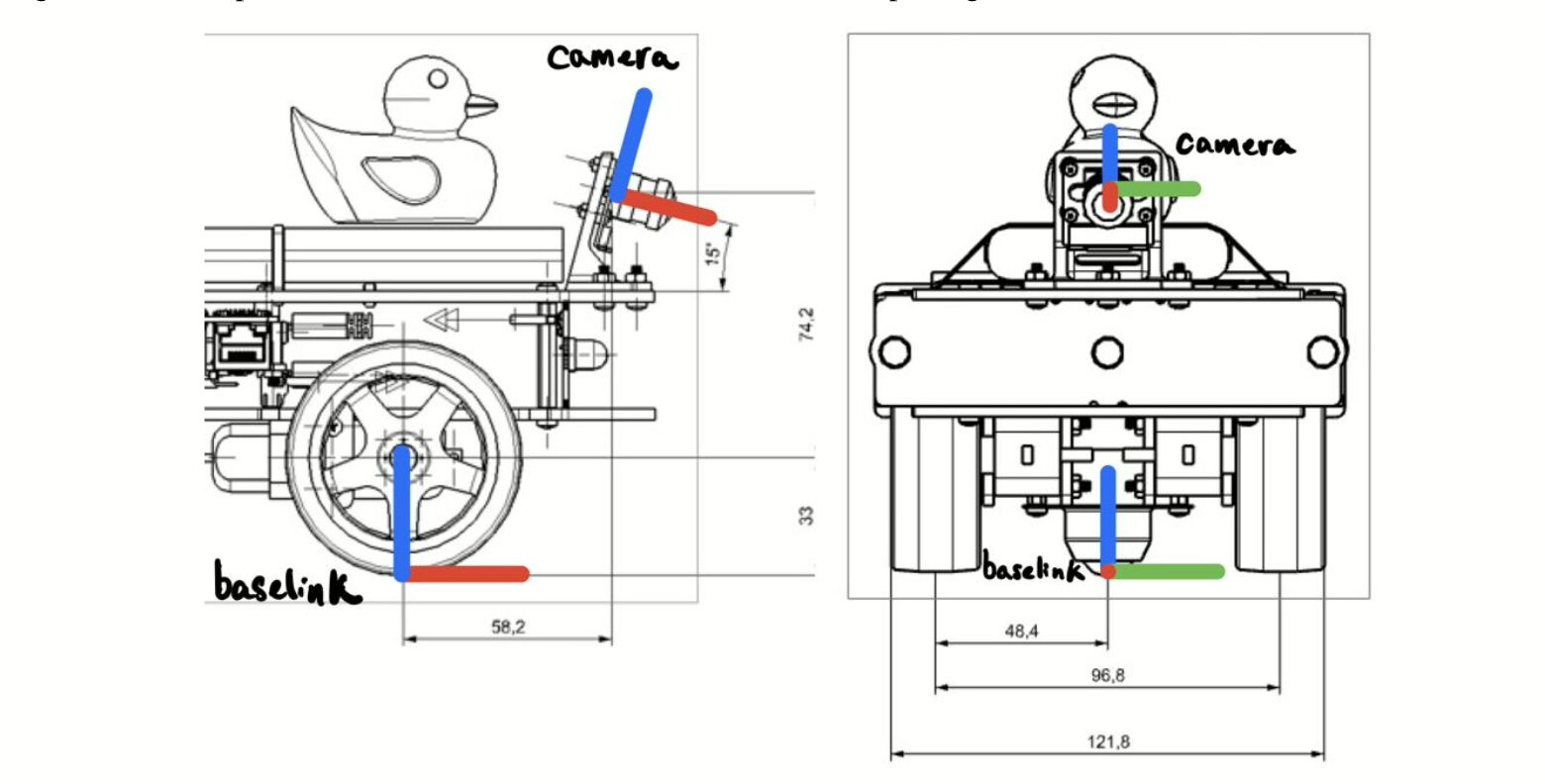

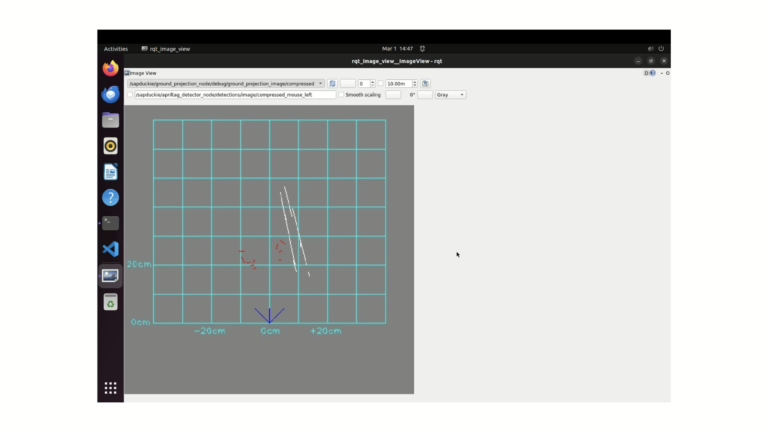

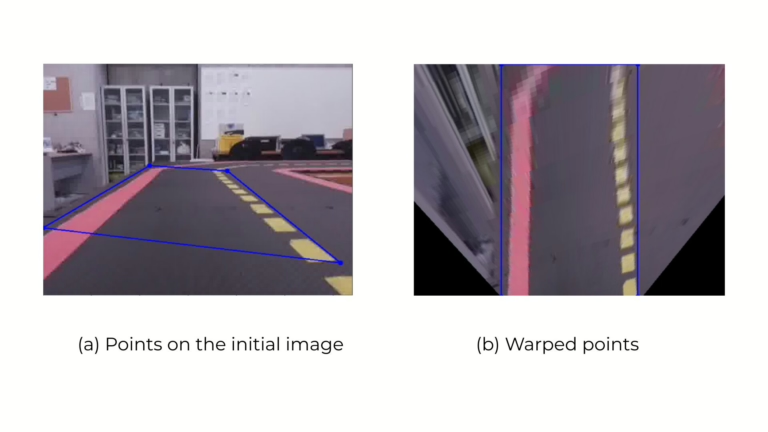

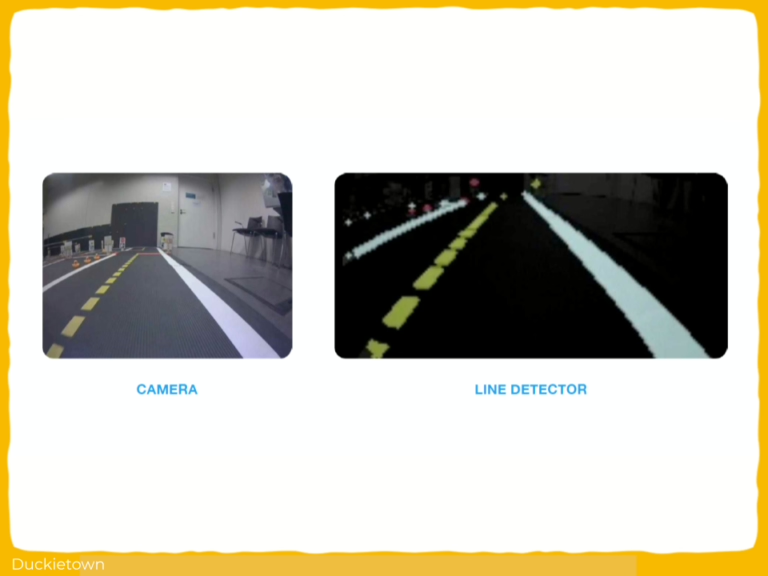

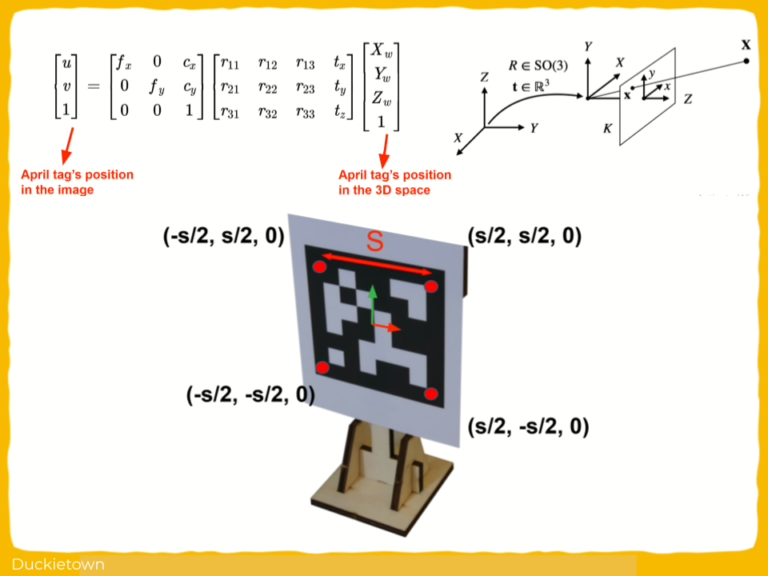

The autonomy pipeline relies heavily on vision as the primary means of perception: lane edges are projected from image space to the ground plane using inverse perspective mapping, with the mapping obtained from a camera calibration procedure.
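As a rough illustration of ground projection, the sketch below applies a 3x3 homography to pixel coordinates. It assumes the calibration yields such a matrix `H` mapping image points to ground-plane points; the function name `ground_project` is hypothetical and this is not the project's code.

```python
import numpy as np

def ground_project(H, pixels):
    """Map (u, v) pixel coordinates to (x, y) ground-plane coordinates.

    H      -- 3x3 homography from image plane to ground plane (assumed to
              come from the extrinsic camera calibration)
    pixels -- iterable of (u, v) pairs
    """
    pixels = np.asarray(pixels, dtype=float)
    # Lift to homogeneous coordinates: (u, v) -> (u, v, 1).
    homogeneous = np.hstack([pixels, np.ones((len(pixels), 1))])
    projected = homogeneous @ np.asarray(H, dtype=float).T
    # Divide by the third component to return to Cartesian coordinates.
    return projected[:, :2] / projected[:, 2:3]
```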

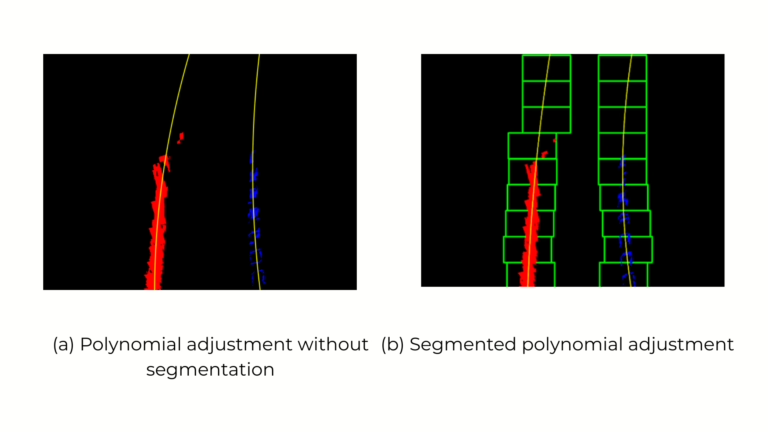

The Duckiebot then estimates a dynamic target point by offsetting yellow or white lane markers depending on visibility. The curvature is computed based on the geometric relation between the Duckiebot and the goal point, and the steering command is derived from this curvature.

The Duckiebot velocity and angular velocity are then modulated using a second-degree polynomial function based on detected path geometry.
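The curvature and speed steps can be sketched as follows. The curvature formula is the standard pure pursuit relation for a goal point expressed in the robot frame (x forward, y to the left). The polynomial coefficients and speed limits are placeholder values, not the project's tuned parameters, and the function names are hypothetical.

```python
def pure_pursuit_curvature(target_x, target_y):
    """Curvature of the arc from the robot origin through the goal point."""
    lookahead_sq = target_x ** 2 + target_y ** 2
    if lookahead_sq == 0.0:
        raise ValueError("goal point coincides with the robot position")
    return 2.0 * target_y / lookahead_sq

def speed_from_curvature(curvature, coeffs=(0.02, -0.15, 0.4),
                         v_min=0.1, v_max=0.4):
    """Second-degree polynomial in |curvature|, clamped to [v_min, v_max].

    The coefficients are illustrative placeholders only.
    """
    a, b, c = coeffs
    k = abs(curvature)
    v = a * k * k + b * k + c
    return min(max(v, v_min), v_max)

def control_command(target_x, target_y):
    """Return (linear velocity, angular velocity) for a goal point."""
    kappa = pure_pursuit_curvature(target_x, target_y)
    v = speed_from_curvature(kappa)
    # For a vehicle moving on a circular arc, omega = v * curvature.
    return v, v * kappa
```

With these placeholder coefficients the Duckiebot drives fastest on straight segments and slows down as the required curvature grows.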

Visual input from an onboard monocular camera is processed through a lane filter with adaptive Gaussian variance scaling relative to frame timing.

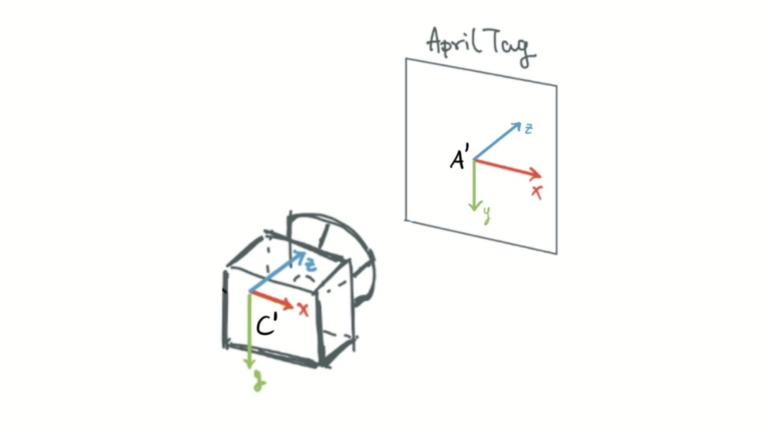

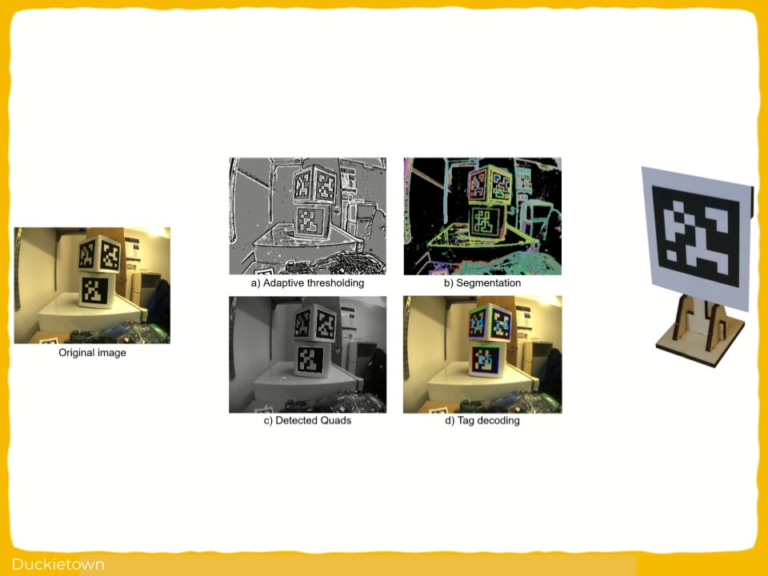

When approaching an intersection, stop lines are detected using HSV color segmentation. AprilTag detection determines intersection decisions, with tag IDs mapped to turn directions.
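A minimal sketch of both ideas, using only the Python standard library, is given below. The HSV thresholds, the tag IDs in `TURN_BY_TAG_ID`, and all function names are hypothetical; a real pipeline would threshold whole images rather than pixel lists.

```python
import colorsys

# Hypothetical mapping from AprilTag IDs to turn directions.
TURN_BY_TAG_ID = {9: "left", 10: "right", 11: "straight"}

def is_red(r, g, b, min_sat=0.5, min_val=0.4, hue_tol=0.05):
    """True if an 8-bit RGB pixel falls inside a red HSV band.

    Red sits at both ends of the hue circle, so the band wraps around 0.
    """
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    near_red = h <= hue_tol or h >= 1.0 - hue_tol
    return near_red and s >= min_sat and v >= min_val

def red_fraction(pixels):
    """Fraction of pixels classified as red (0.0 for an empty region)."""
    pixels = list(pixels)
    if not pixels:
        return 0.0
    return sum(is_red(*p) for p in pixels) / len(pixels)

def turn_for_tag(tag_id, default="stop"):
    """Look up the turn direction for a detected tag ID."""
    return TURN_BY_TAG_ID.get(tag_id, default)
```

A stop line could then be declared when `red_fraction` over a region of interest near the bottom of the frame exceeds a chosen threshold.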

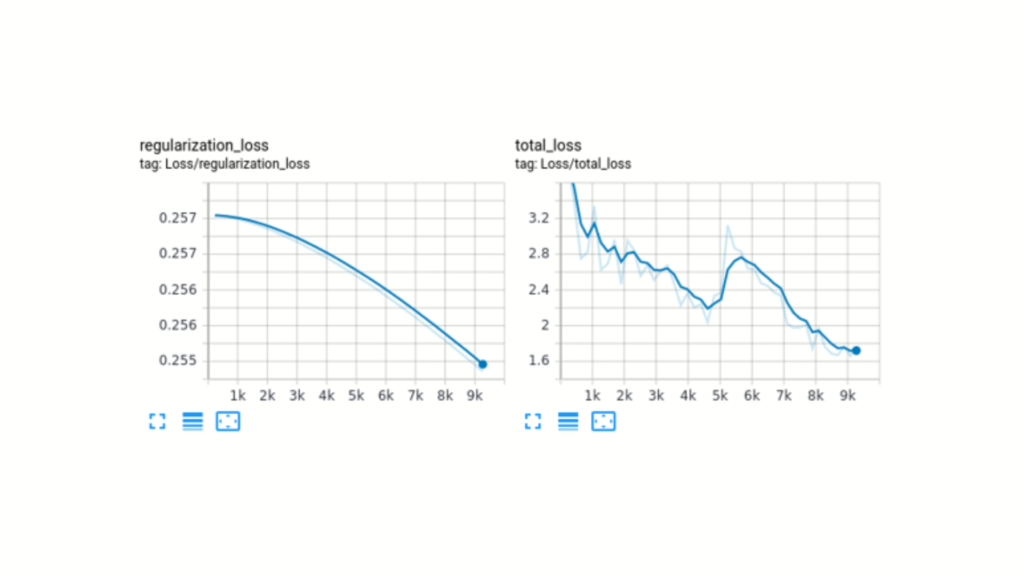

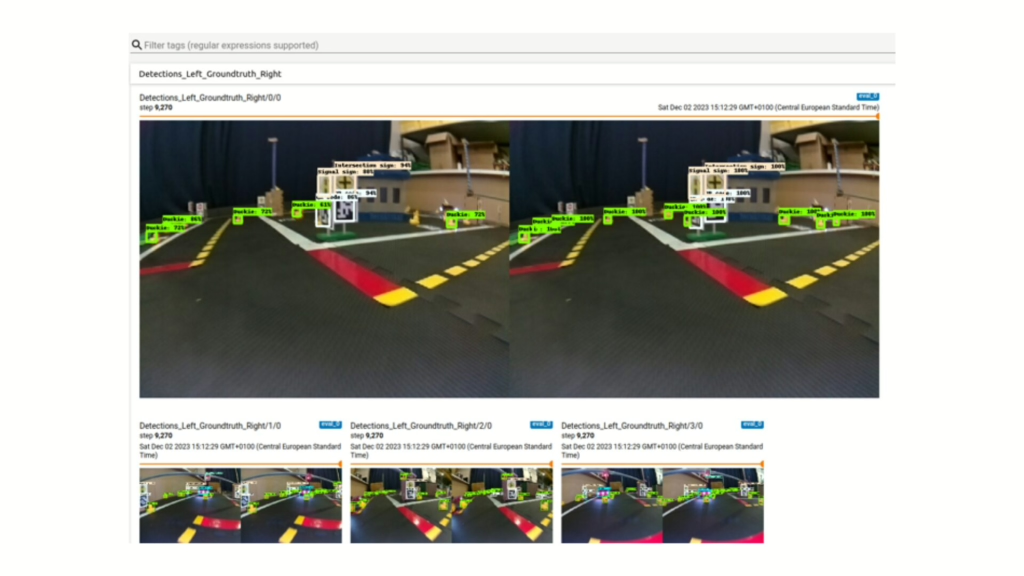

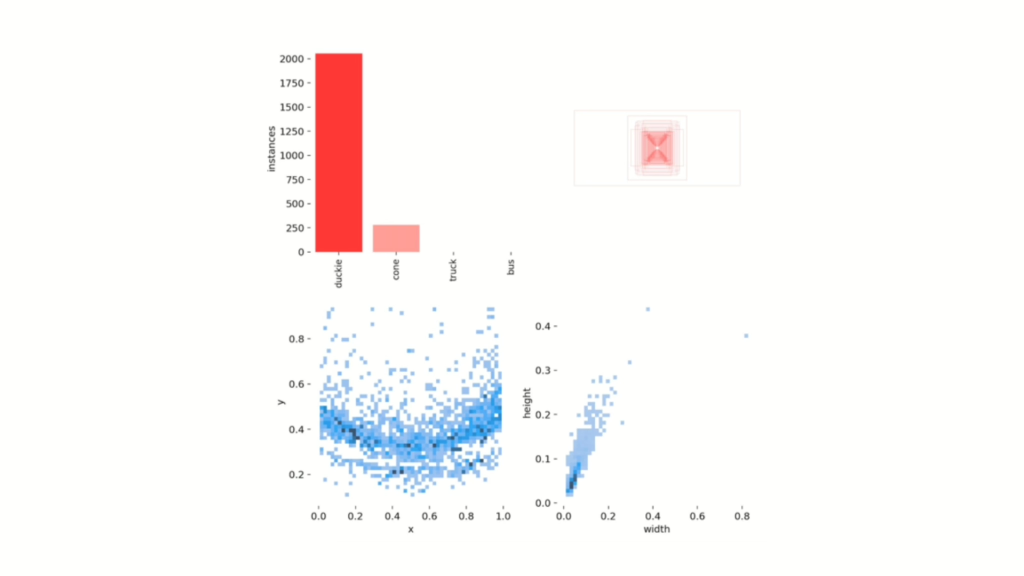

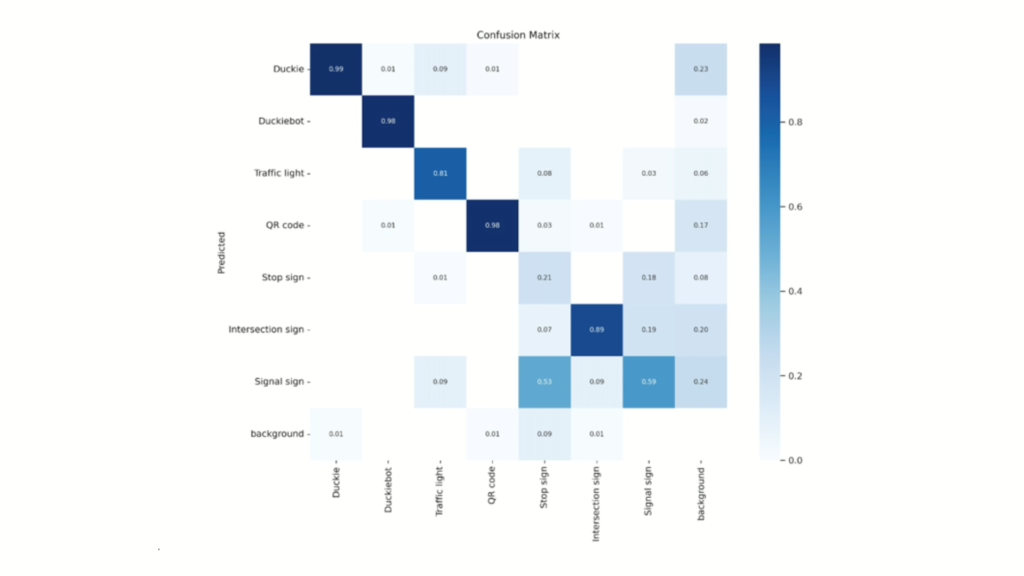

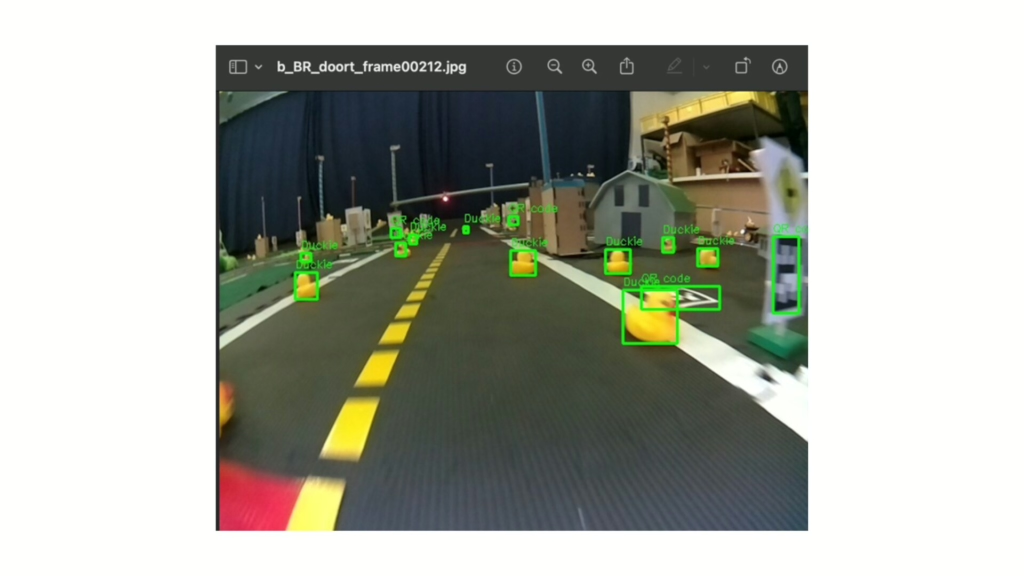

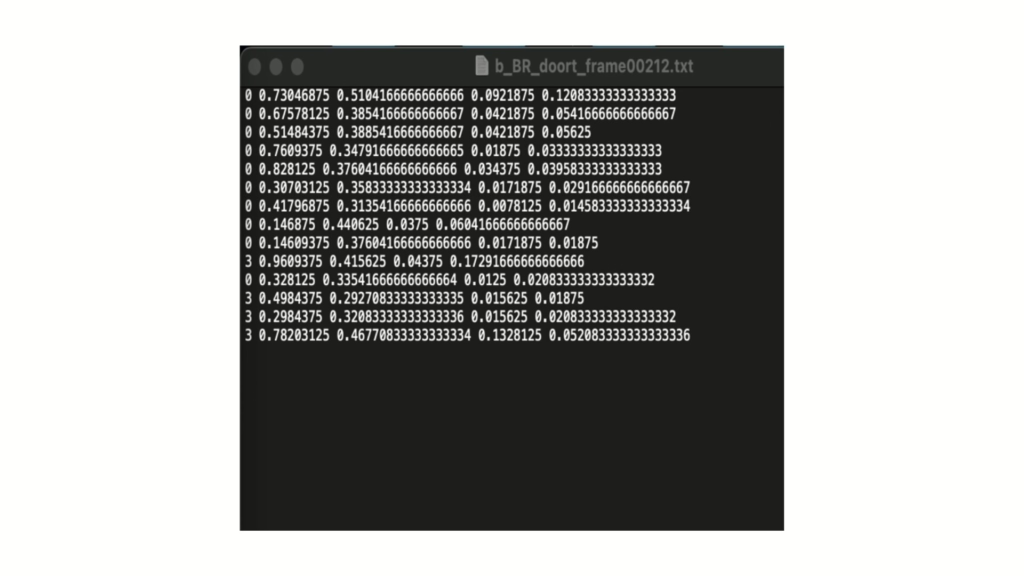

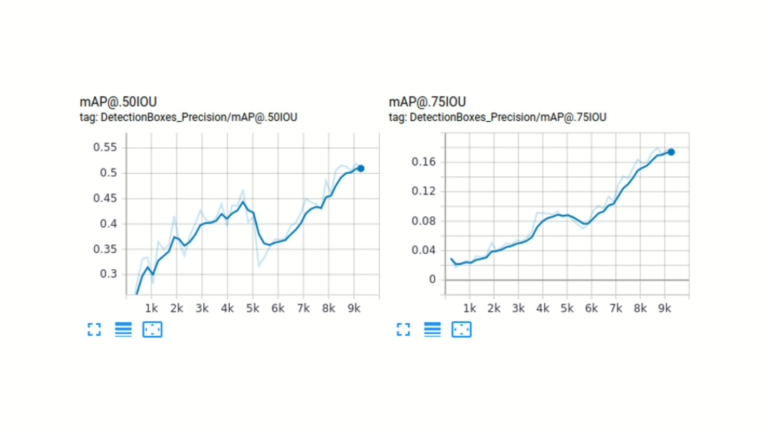

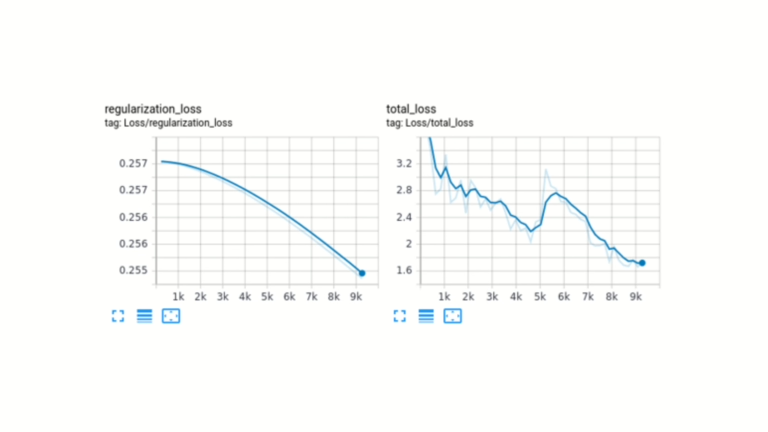

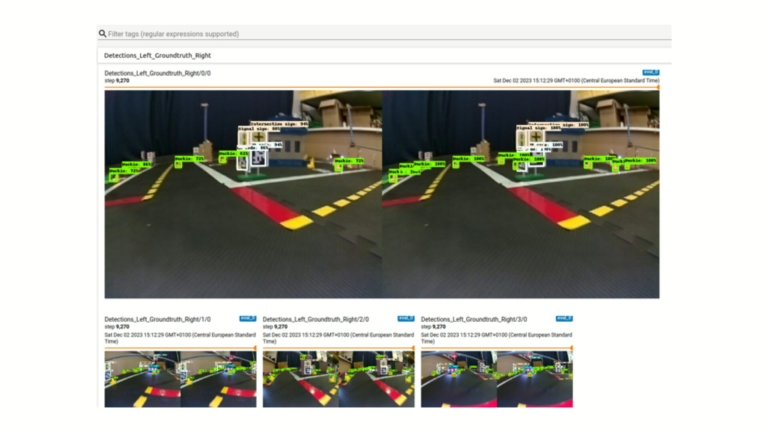

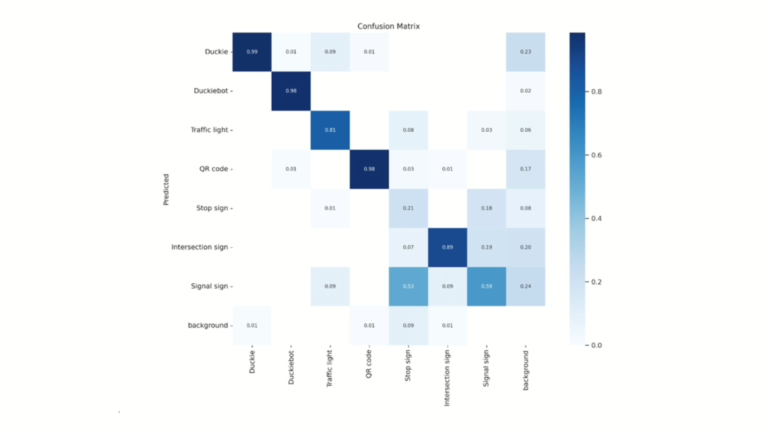

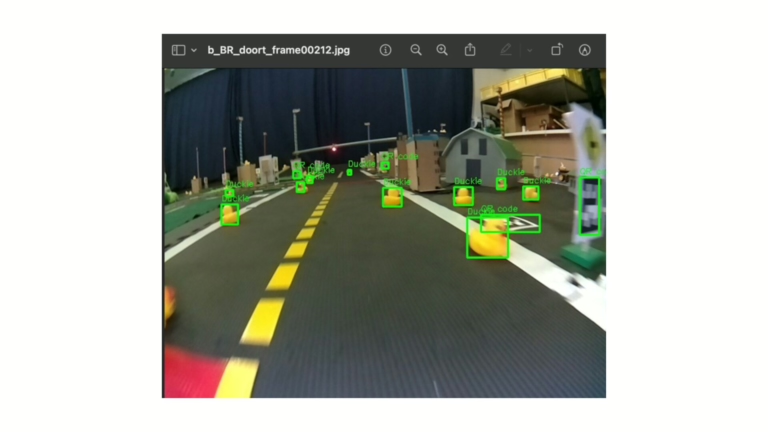

Every module is implemented as an independent ROS package with dedicated launch files, coordinated via a central launch file. A YOLOv3 object detection model, trained on a custom Duckietown dataset, provides real-time obstacle recognition.

The challenges and approach

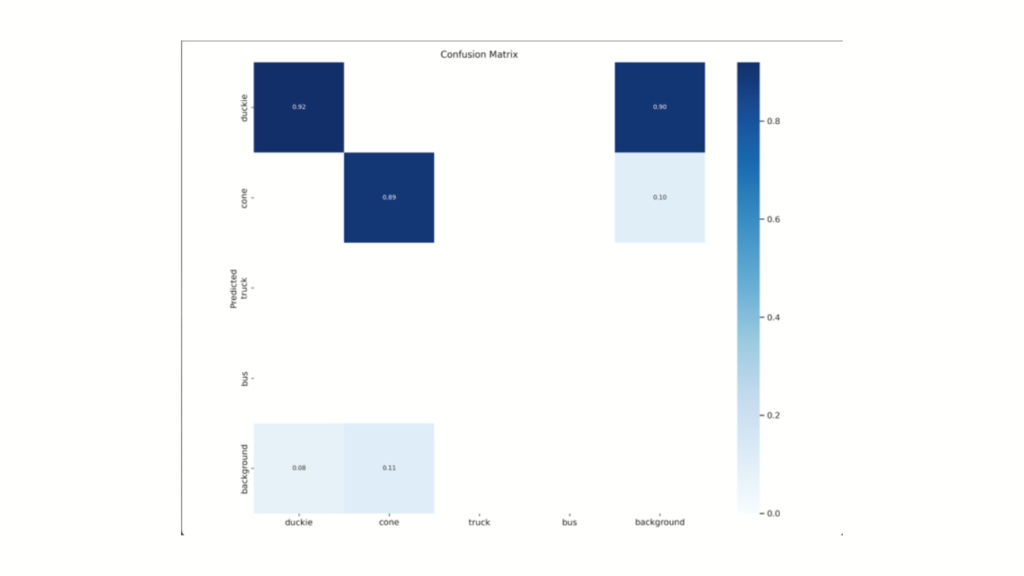

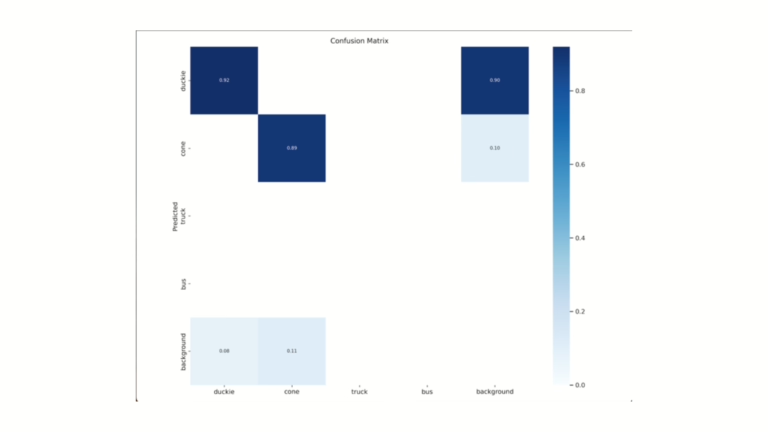

One major hurdle was integrating object detection models like Single-Shot Detector (SSD) and YOLO with the Duckiebot’s ROS-based camera system.

While the SSD model was trained on a custom Duckietown dataset, ROS publisher-subscriber mismatches prevented live inference. Transitioning to the YOLO model involved adapting annotation formats and re-training for compatibility with the YOLO architecture. In lane following, the default controller from Duckietown demos showed high deviation, prompting the implementation of a modified pure pursuit approach.

Additional challenges arose from limited computational resources on the Duckiebot, with CPU overuse causing processing delays when running all modules concurrently. The approach focused on modular development, isolating lane following, stop line detection, and intersection navigation into separate ROS packages with fine-tuned parameters. The pure pursuit algorithm was adapted for ground-projected lane estimation, dynamic speed control, and target point calculation based on visible lane markers. Integration of AprilTag-based intersection logic and LED signaling provided directional control at intersections.

This structured, iterative methodology enabled real-time, vision-guided behavior while operating within the Duckiebot's limited computational resources.

Project Report

Did this work spark your curiosity?

Check out the following works on path planning with Duckietown:

Visual Feedback for Autonomous Navigation in Duckietown: Authors

Servesh Khandwe is currently working as a Software Engineer at Porsche Digital, Germany.

Ayush Kumar is currently working as a Research Assistant at Fraunhofer IIS, Germany.

Parth Karkar is currently working as an Analytical Consultant at Mutares SE & Co. KGaA, Germany.

Learn more

Duckietown is a modular, customizable, and state-of-the-art platform for creating and disseminating robotics and AI learning experiences.

Duckietown is designed to teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge.

These spotlight projects are shared to exemplify Duckietown’s value for hands-on learning in robotics and AI, enabling students to apply theoretical concepts to practical challenges in autonomous robotics, boosting competence and job prospects.

Visual Feedback for Autonomous Lane Tracking in Duckietown

General Information

- Title: Lane Following with a Duckiebot Vehicle using Visual Feedback

- Authors: Oscar Castro, Axel Céspedes, Roosevelt Ubaldo, Oscar E. Ramos

- Institution: Universidad de Ingeniería y Tecnología (UTEC), Lima, Peru

- Citation: O. Castro, A. Céspedes, R. Ubaldo and O. E. Ramos, "Lane Following with a Duckiebot Vehicle using Visual Feedback," 2019 IEEE Sciences and Humanities International Research Conference (SHIRCON), Lima, Peru, 2019, pp. 1-4, doi: 10.1109/SHIRCON48091.2019.9024875.

Visual Feedback for Autonomous Lane Tracking in Duckietown

How can vehicle autonomy be achieved by relying only on visual feedback from the onboard camera?

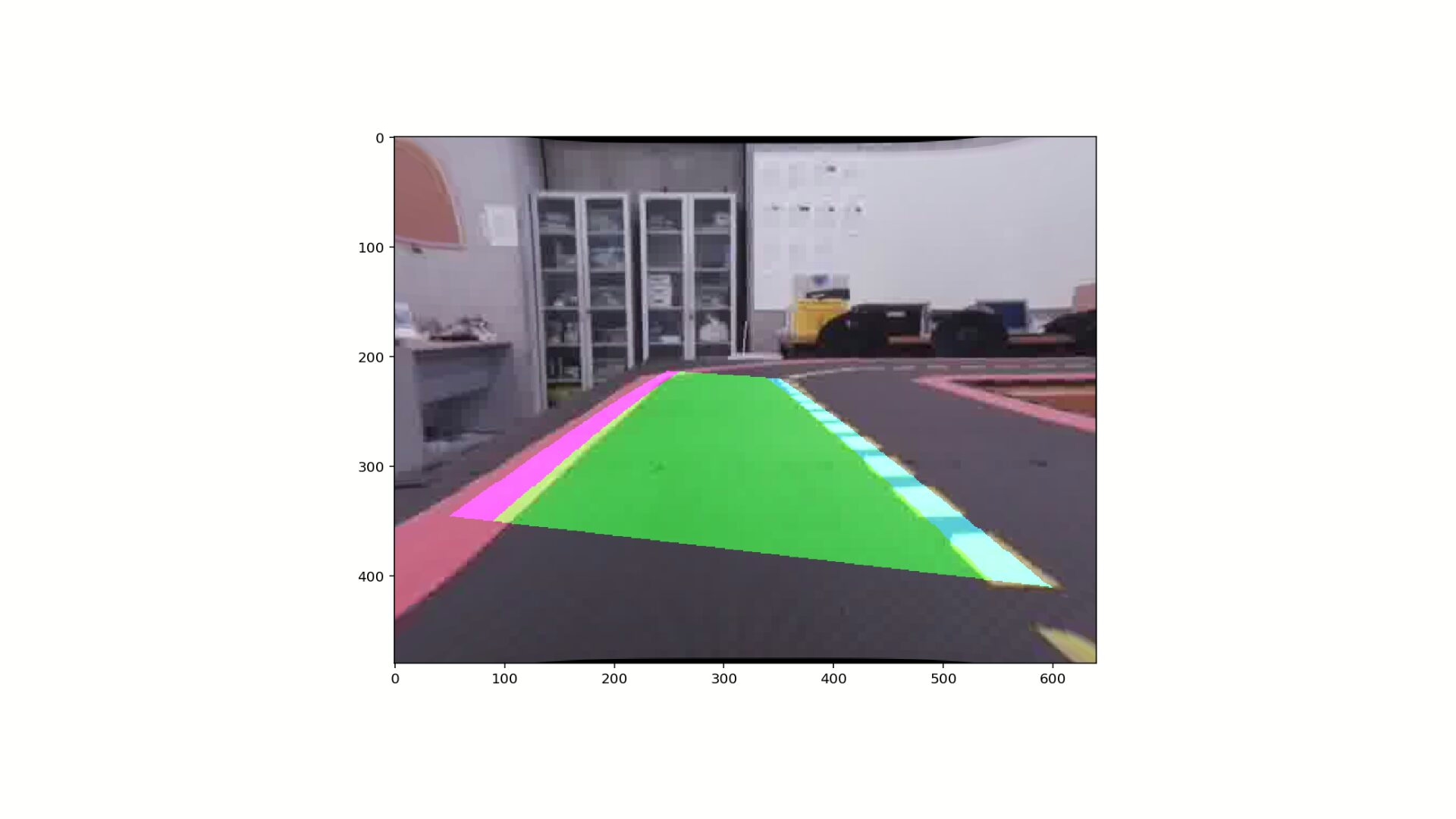

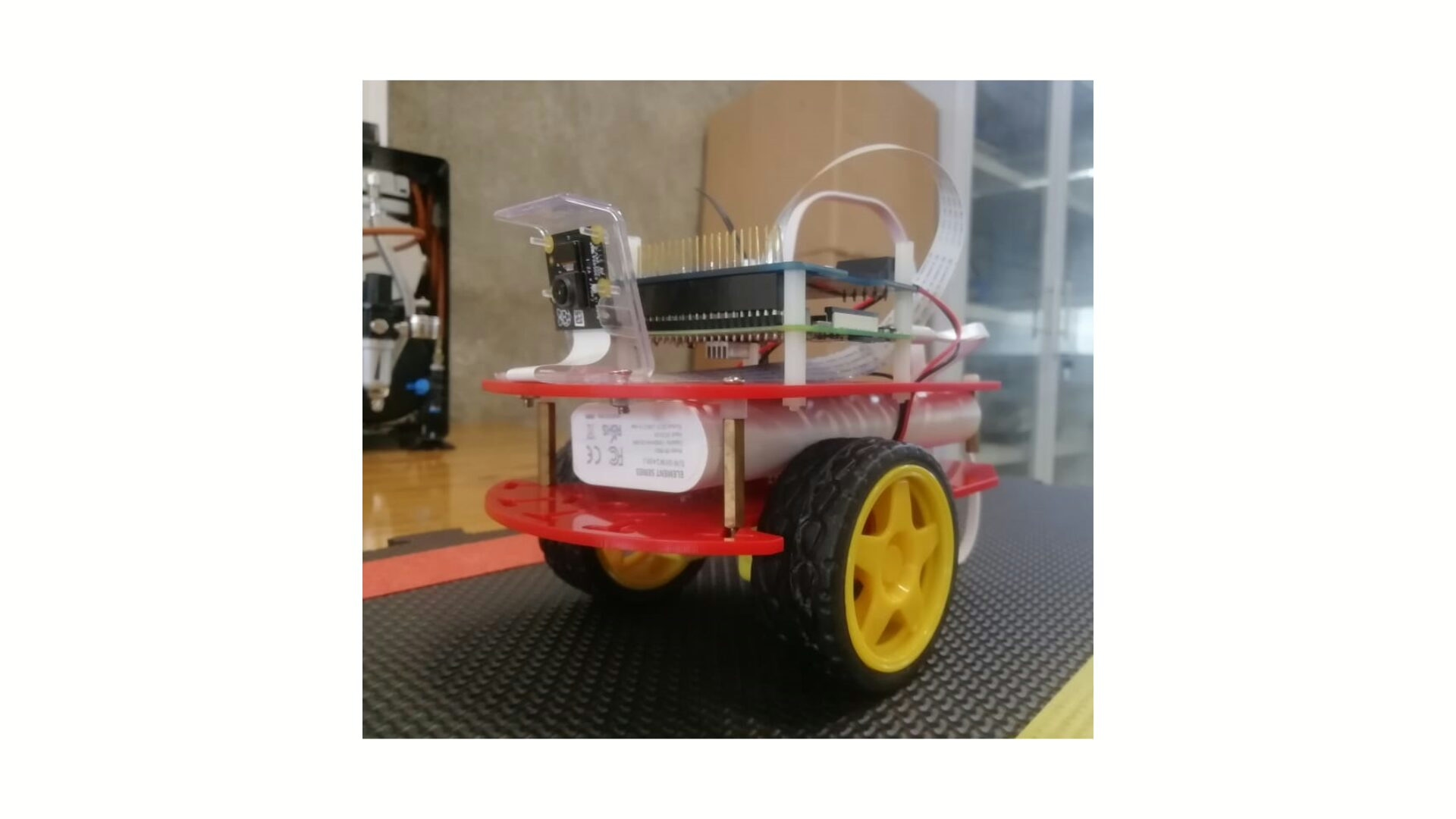

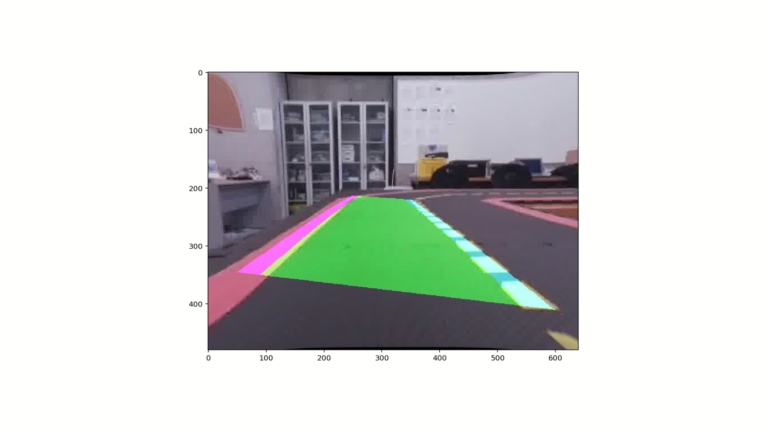

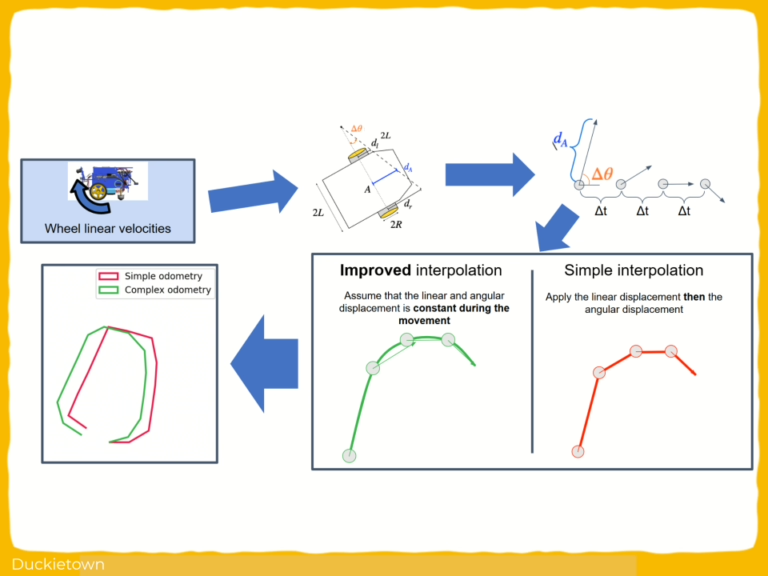

This work presents an implementation of lane following for the Duckiebot (DB17) using visual feedback as the only onboard sensor. The approach relies on real-time lane detection and pose estimation, eliminating the need for wheel encoders.

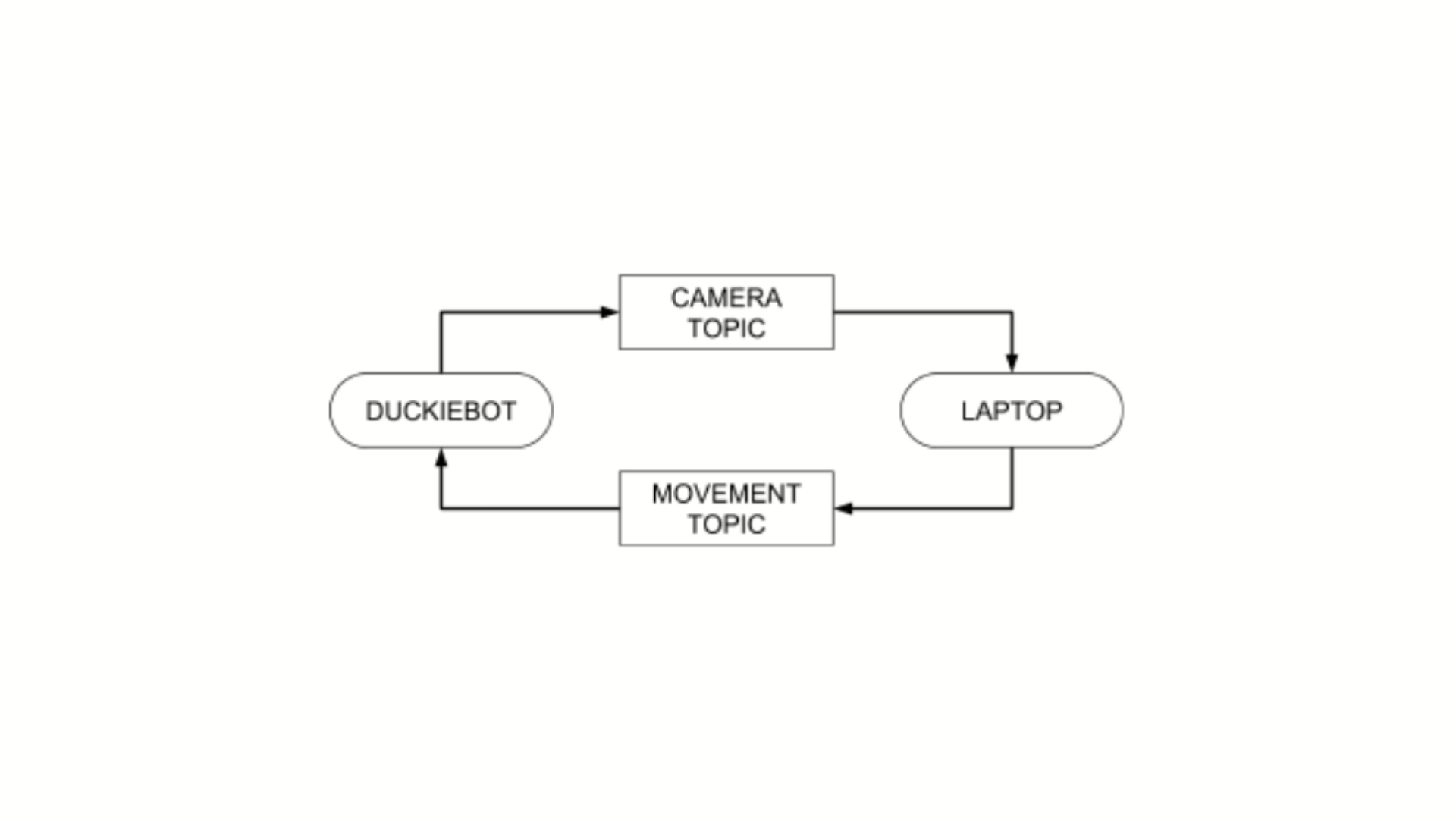

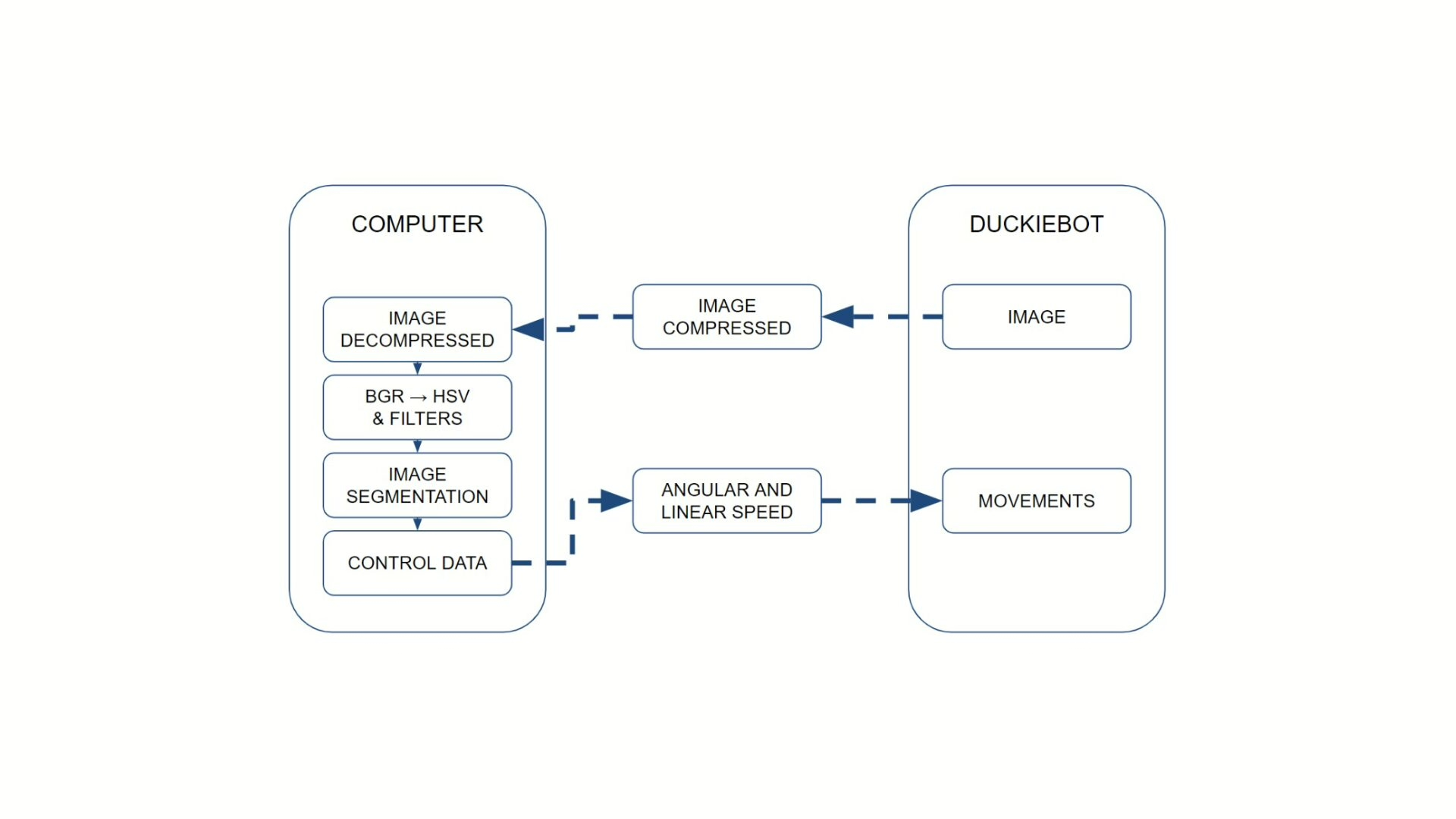

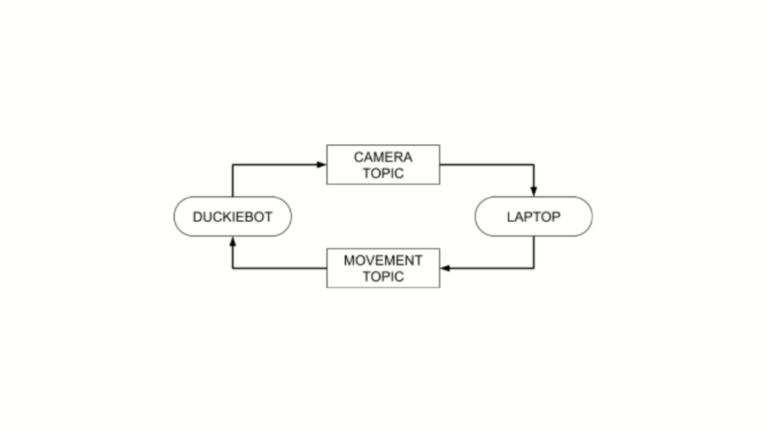

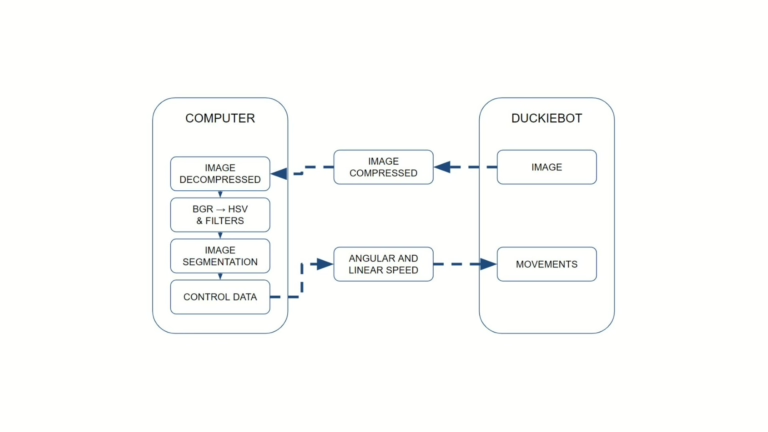

The onboard computation is provided by a Raspberry Pi, which performs low-level motor control, while high-level image processing and decision-making are offloaded to an external ROS-enabled computer.

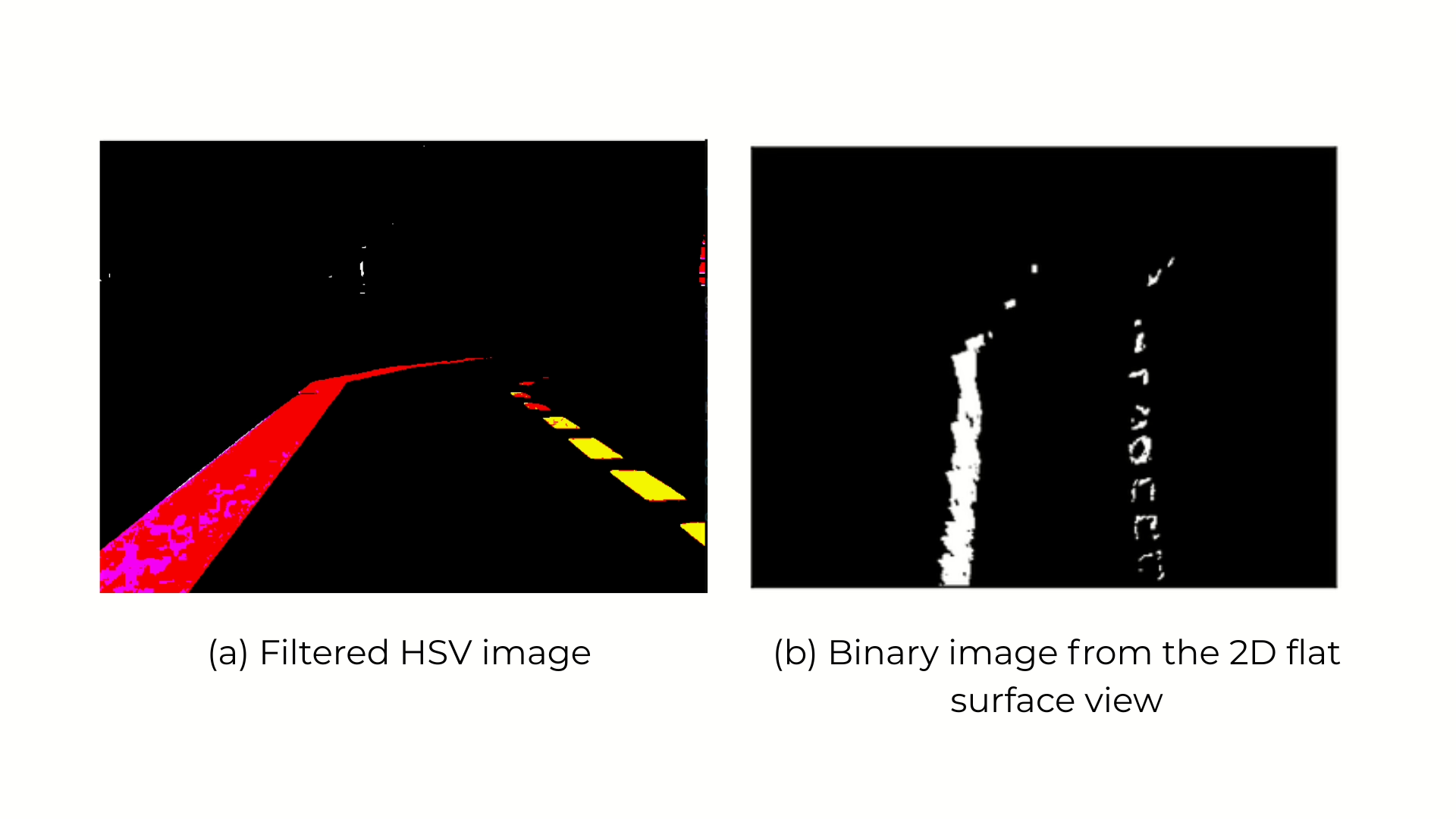

The key technical aspects of the implemented autonomy pipeline include:

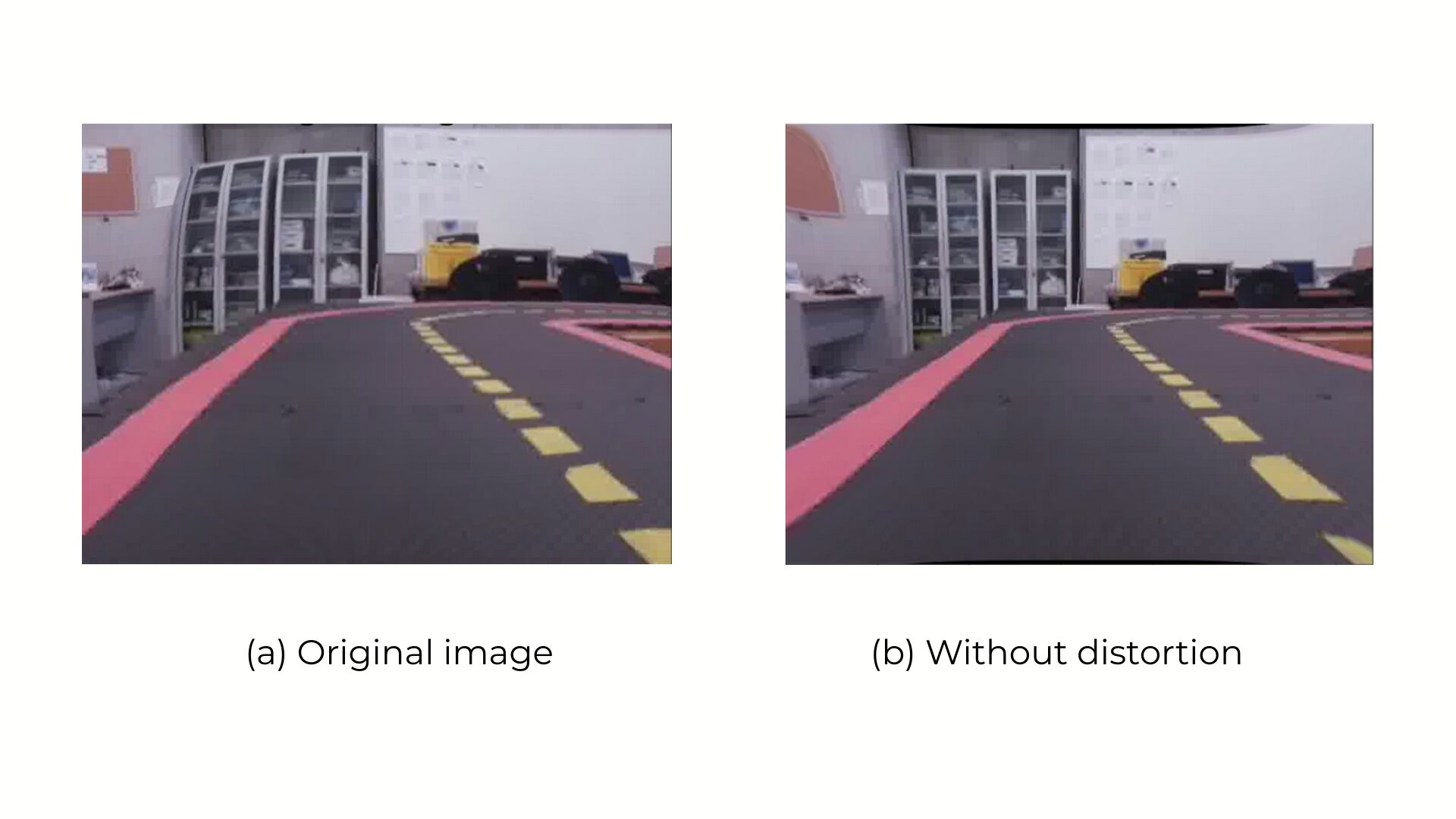

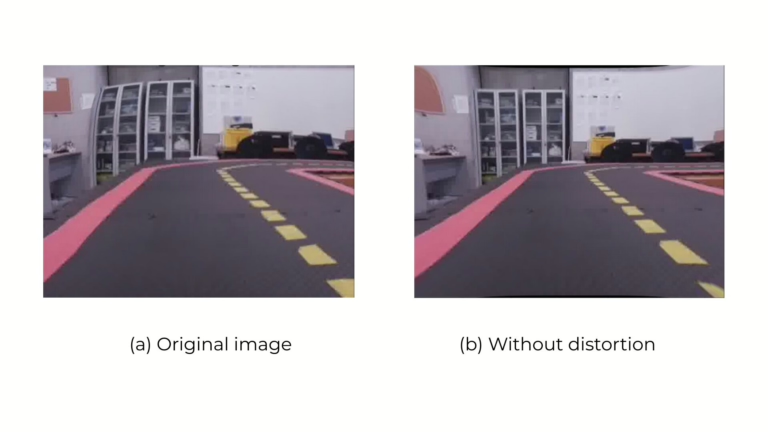

- Camera calibration to correct fisheye lens distortion;

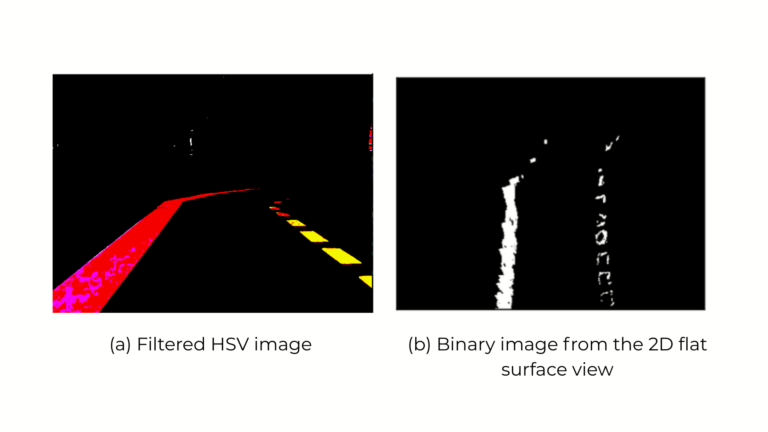

- HSV-based image segmentation for lane line detection;

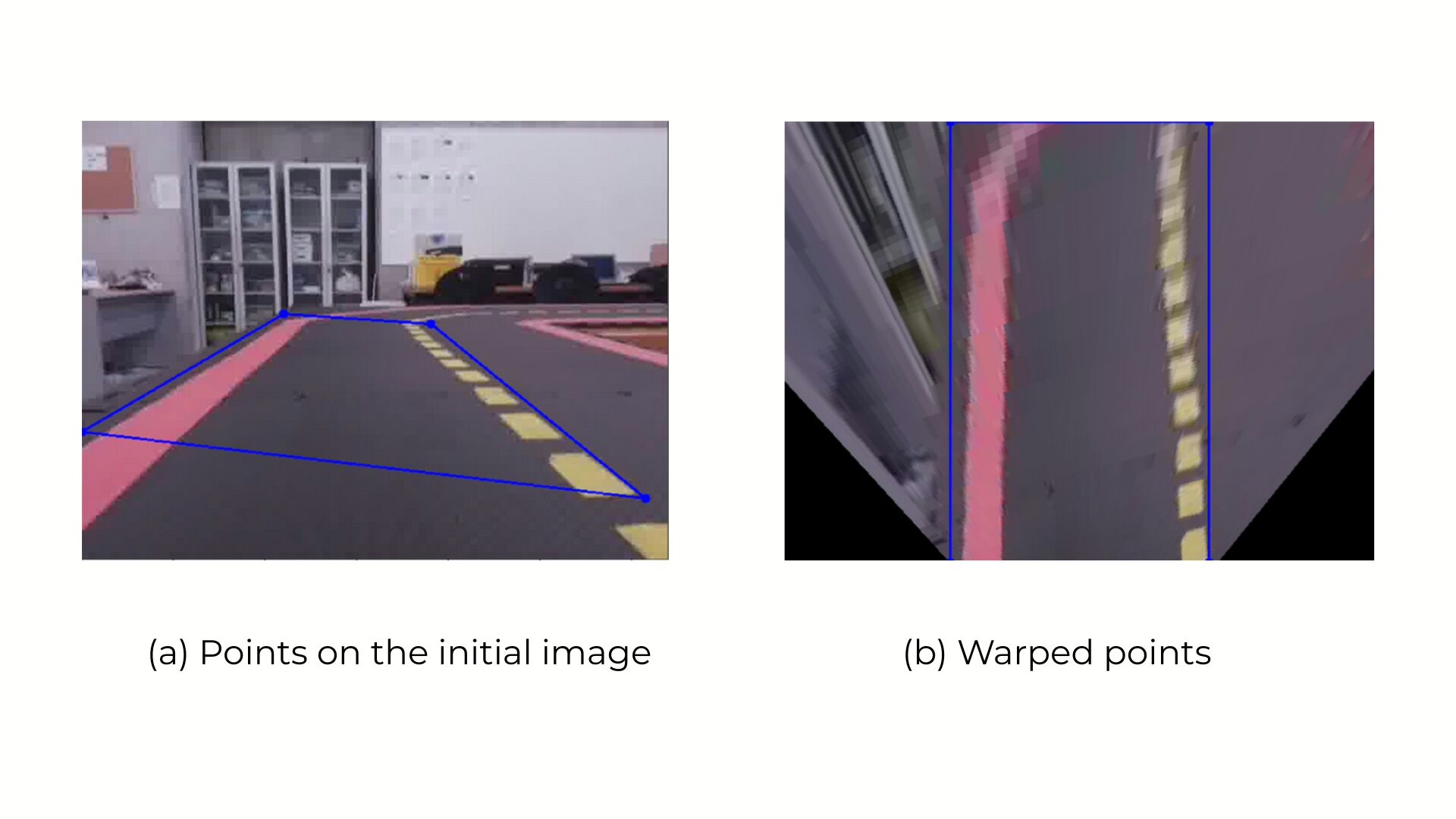

- Aerial perspective transformation for geometric consistency;

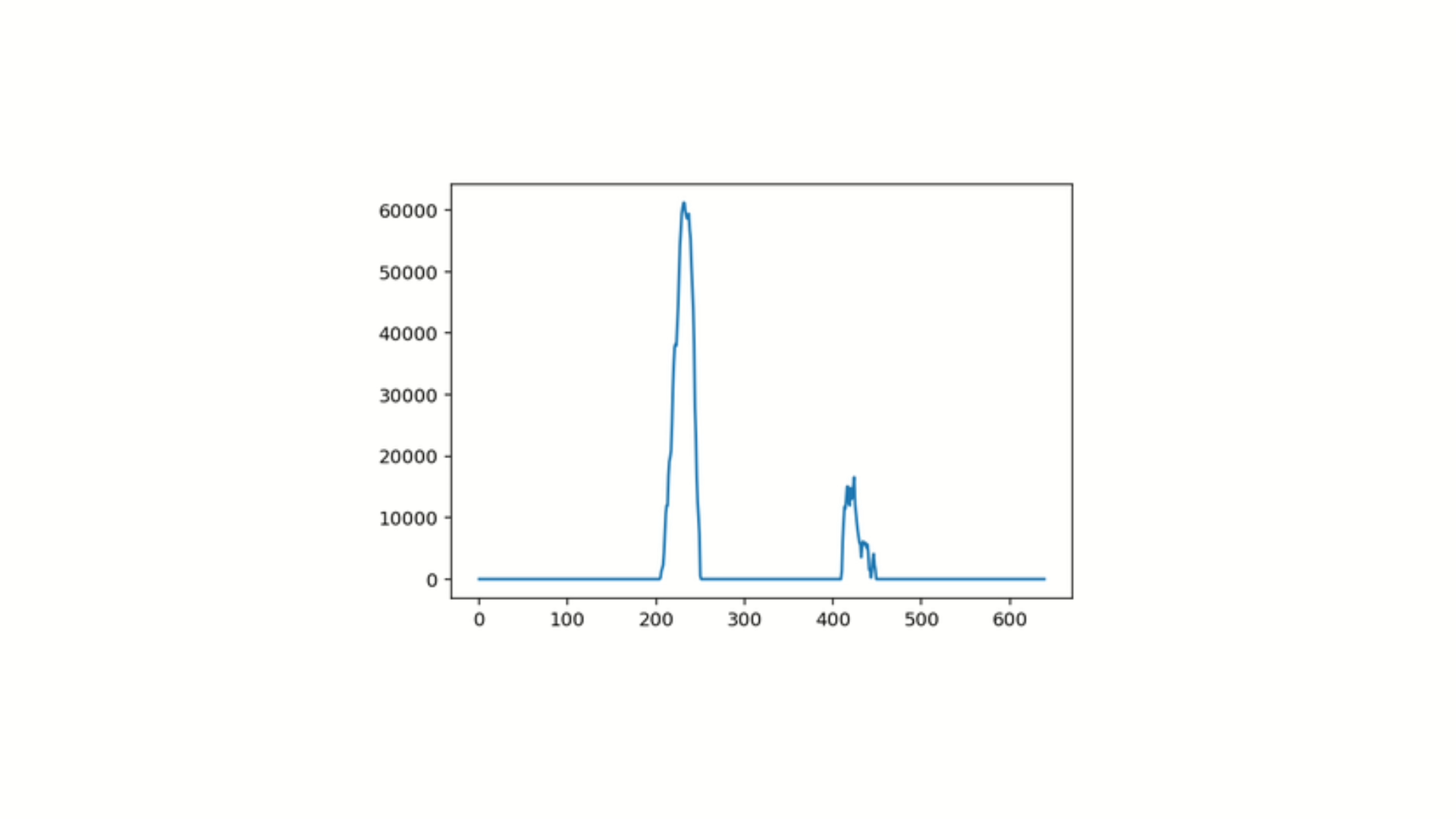

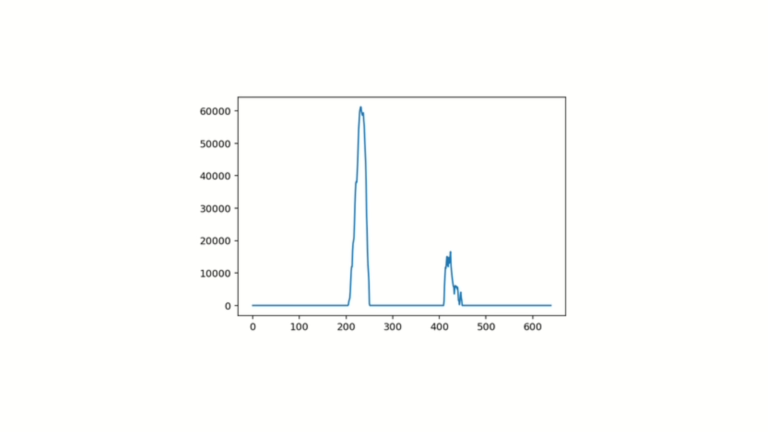

- Histogram-based color separation of continuous and dashed lines;

- Piecewise polynomial fitting for path curvature estimation;

- Closed-loop motion control based on computed linear and angular velocities.
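The polynomial fitting step in the list above can be illustrated with NumPy. This sketch fits a single polynomial segment to lane points in the bird's-eye view and evaluates its curvature, whereas the paper describes a piecewise fit. The function names are hypothetical.

```python
import numpy as np

def fit_lane(xs, ys, degree=2):
    """Least-squares polynomial fit y = f(x) to lane points."""
    return np.polyfit(xs, ys, degree)

def curvature_at(coeffs, x):
    """Signed curvature of y = f(x): f'' / (1 + f'^2)^(3/2)."""
    poly = np.poly1d(coeffs)
    d1 = poly.deriv(1)(x)
    d2 = poly.deriv(2)(x)
    return d2 / (1.0 + d1 ** 2) ** 1.5
```

For example, points sampled from `y = 0.5 * x**2` give a curvature of 1 at `x = 0`, while points on a straight line give a curvature close to 0.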

The methodology demonstrates the feasibility of using camera-based perception to control robot motion in structured environments. By using Duckiebot and Duckietown as the development platform, this work is another example of how to bridge the gap between real-world testing and cost-effective prototyping, making vehicle autonomy research more accessible in educational and research contexts.

Highlights - visual feedback for lane tracking in Duckietown

Here is a visual tour of the implementation of vehicle autonomy by the authors. For all the details, check out the full paper.

Abstract

Here is the abstract of the work, directly in the words of the authors:

The autonomy of a vehicle can be achieved by a proper use of the information acquired with the sensors. Real-sized autonomous vehicles are expensive to acquire and to test on; however, the main algorithms that are used in those cases are similar to the ones that can be used for smaller prototypes. Due to these budget constraints, this work uses the Duckiebot as a testbed to try different algorithms as a first step to achieve full autonomy. This paper presents a methodology to properly use visual feedback, with the information of the robot camera, in order to detect the lane of a circuit and to drive the robot accordingly.

Conclusion - visual feedback for lane tracking in Duckietown

Here is the conclusion according to the authors of this paper:

Autonomous cars are currently a vast research area. Due to this increase in the interest of these vehicles, having a cost-effective way to implement algorithms, new applications, and to test them in a controlled environment will further help to develop this technology. In this sense, this paper has presented a methodology for following a lane using a cost-effective robot, called the Duckiebot, using visual feedback as a guide for the motion. Although the whole system was capable of detecting the lane that needs to be followed, it is still sensitive to illumination conditions. Therefore, in places with a lot of lighting and brightness variations, the lane recognition algorithm can affect the autonomy of the vehicle.

As future work, machine learning, and particularly convolutional neural networks, is devised as a means to develop robust lane detectors that are not sensitive to brightness variation. Moreover, more than one Duckiebot is intended to drive simultaneously in the Duckietown.

Did this work spark your curiosity?

Check out the following works on vehicle autonomy with Duckietown:

Project Authors

Oscar Castro is currently working at Blume, Peru.

Axel Eliam Céspedes Duran is currently working as a Laboratory Professor of the Industrial Instrumentation course at the UTEC – Universidad de Ingeniería y Tecnología, Peru.

Roosevelt Jhans Ubaldo Chavez is currently working as a Laboratory Professor of the Industrial Instrumentation course at the UTEC – Universidad de Ingeniería y Tecnología, Peru.

Oscar E. Ramos is currently working toward the Ph.D. degree in robotics with the Laboratory for Analysis and Architecture of Systems, Centre National de la Recherche Scientifique, University of Toulouse, Toulouse, France.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.

Pure Pursuit Lane Following with Obstacle Avoidance


Project Resources

- Objective: Develop a lane following and obstacle avoidance system for Duckiebots (DB18) using pure pursuit control.

- Approach: Use an adaptive pure pursuit controller with dynamic speed tuning, integrated with vision-based, deep-learning object detection for obstacle-aware navigation.

- Authors: Soroush Saryazdi and Dhaivat Bhatt, Robotics and Embodied AI Lab (REAL), Université de Montréal, also affiliated with MILA.

Project highlights

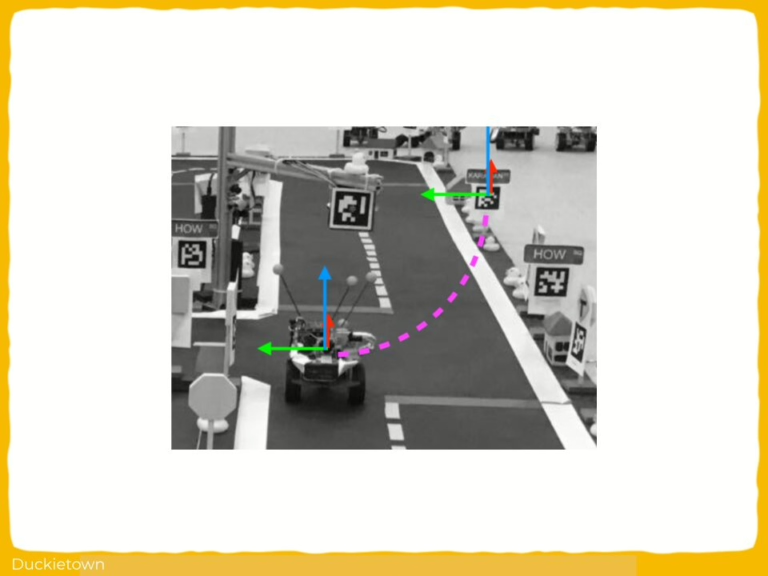

Pure Pursuit Lane Following with Obstacle Avoidance - the objectives

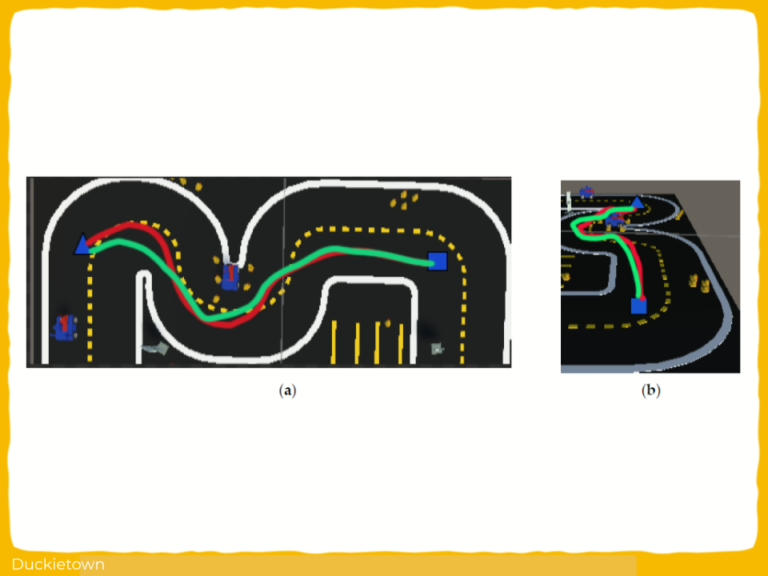

Pure pursuit is a geometric path tracking algorithm used in autonomous vehicle control systems. It calculates the curvature of the road ahead by determining a target point on the trajectory and computing the required angular velocity to reach that point based on the vehicle’s kinematics.

Unlike proportional-integral-derivative (PID) control, which adjusts control outputs based on continuous error correction, pure pursuit uses a lookahead point to guide the vehicle along a trajectory, which tends to give smooth convergence to the path with little oscillation when the lookahead distance is well chosen. This method avoids direct dependency on derivative or integral feedback, reducing complexity in environments with sparse or noisy error signals.

This project aims to implement a pure pursuit-based lane following system integrated with obstacle avoidance for autonomous Duckiebot navigation. The goal is to enable real-time tracking of lane centerlines while maintaining safety through detection and response to dynamic obstacles such as other Duckiebots or cones.

The pipeline includes a modified ground projection system, an adaptive pure pursuit controller for path tracking, and both image processing and deep learning-based object detection modules for obstacle recognition and avoidance.

The challenges and approach

The primary challenges in this project include robust target point estimation under variable lighting and environmental conditions, real-time object detection with limited computational resources, and smooth trajectory control in the presence of dynamic obstacles.

The approach involves modular integration of perception, planning, and control subsystems.

For perception, the system uses both classical image processing methods and a trained deep learning model for object detection, enabling redundancy and simulation compatibility.

For planning and control, the pure pursuit controller dynamically adjusts speed and steering based on the estimated target point and obstacle proximity. Target point estimation is achieved through ground projection, a transformation that maps image coordinates to real-world planar coordinates using a calibrated camera model. Real-time parameter tuning and feedback mechanisms are included to handle variations in frame rate and sensor noise.

Obstacle positions are also ground-projected and used to trigger stop conditions within a defined safety zone, ensuring collision avoidance through reactive control.
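A reactive stop condition of this kind might look like the sketch below. The rectangular shape of the safety zone, its dimensions, and the function names are assumptions made for illustration. Obstacle coordinates are taken to be ground-projected points in the robot frame (x forward, y to the left, in meters).

```python
def in_safety_zone(obstacle_xy, max_distance=0.3, half_width=0.1):
    """True if a ground-projected obstacle lies in a box ahead of the robot.

    The zone dimensions are placeholder values.
    """
    x, y = obstacle_xy
    return 0.0 < x <= max_distance and abs(y) <= half_width

def safe_speed(nominal_speed, obstacles, **zone):
    """Return 0 if any obstacle is inside the safety zone, else the nominal speed."""
    if any(in_safety_zone(o, **zone) for o in obstacles):
        return 0.0
    return nominal_speed
```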

Looking for similar projects?

Check out the following works on path planning with Duckietown:

Pure Pursuit Lane Following with Obstacle Avoidance: Authors

Soroush Saryazdi is currently leading the Neural Networks team at Matic, supervised by Navneet Dalal.

Dhaivat Bhatt is currently working as a Machine learning research engineer at Samsung AI centre, Toronto.

Learn more

Duckietown is a modular, customizable, and state-of-the-art platform for creating and disseminating robotics and AI learning experiences.

Duckietown is designed to teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge.

These spotlight projects are shared to exemplify Duckietown’s value for hands-on learning in robotics and AI, enabling students to apply theoretical concepts to practical challenges in autonomous robotics, boosting competence and job prospects.

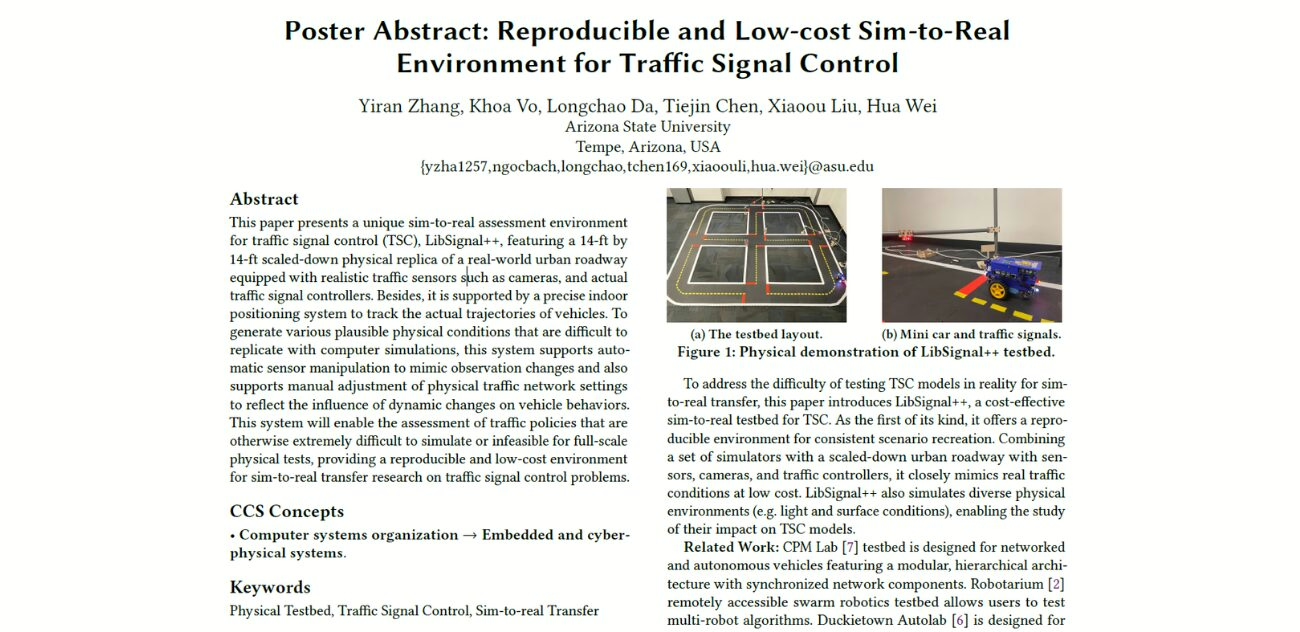

Reproducible Sim-to-Real Traffic Signal Control Environment

General Information

- Title: Reproducible and Low-cost Sim-to-Real Environment for Traffic Signal Control

- Authors: Yiran Zhang, Khoa Vo, Longchao Da, Tiejin Chen, Xiaoou Liu, Hua Wei

- Institution: Arizona State University, USA

- Citation: Zhang, Y., Vo, K., Da, L., Chen, T., Liu, X. and Wei, H., 2025, May. Reproducible and Low-cost Sim-to-Real Environment for Traffic Signal Control. In Proceedings of the ACM/IEEE 16th International Conference on Cyber-Physical Systems (with CPS-IoT Week 2025) (pp. 1-2).

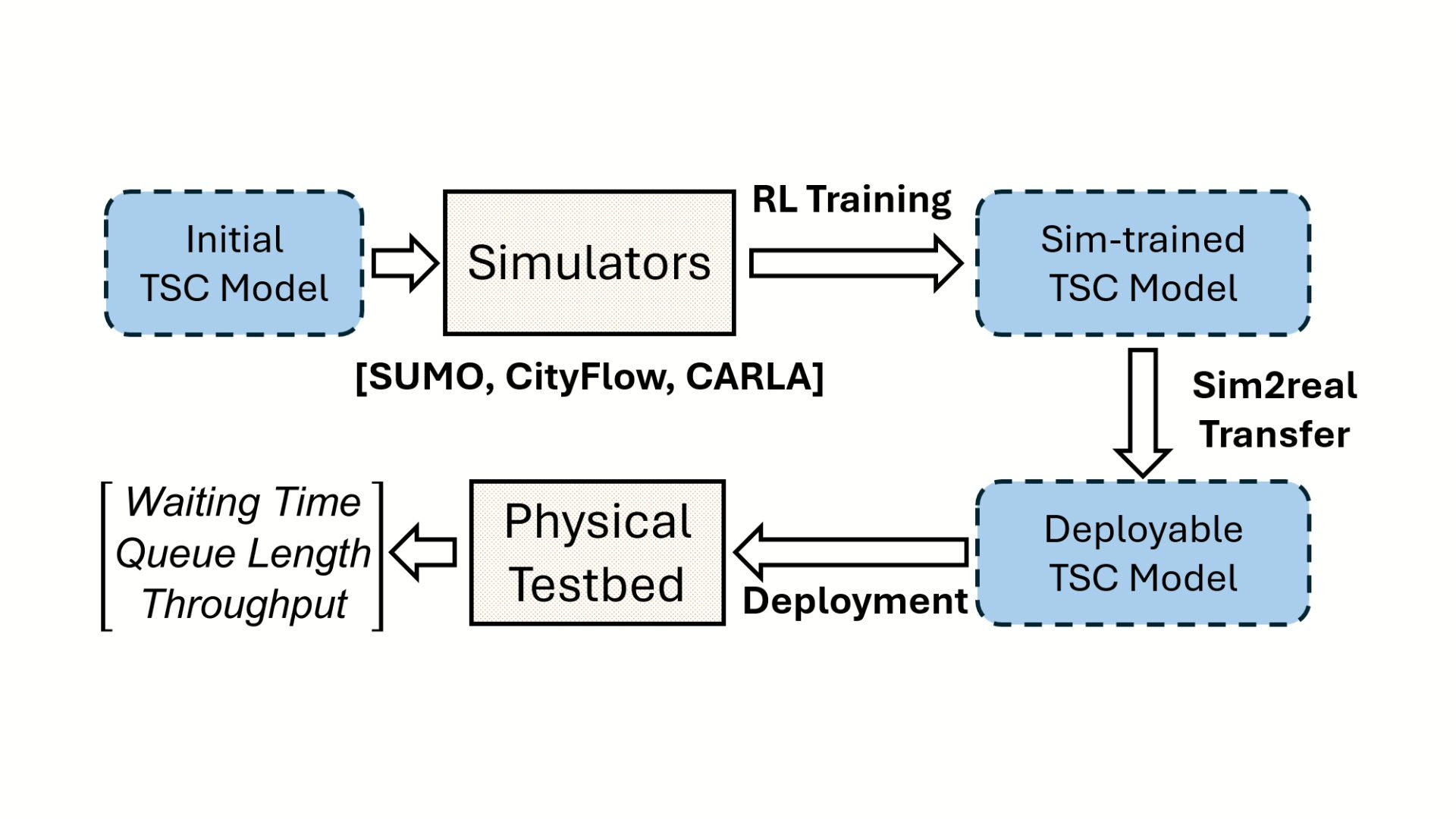

Reproducible Sim-to-Real Traffic Signal Control Environment

As urban environments become increasingly populated and automobile traffic soars, with US citizens spending on average 54 hours a year stuck on the roads, active traffic control management promises to mitigate traffic jams while maintaining (or improving) safety.

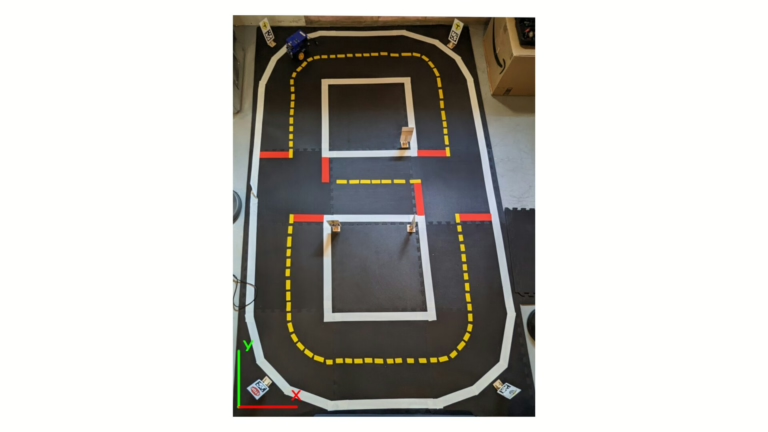

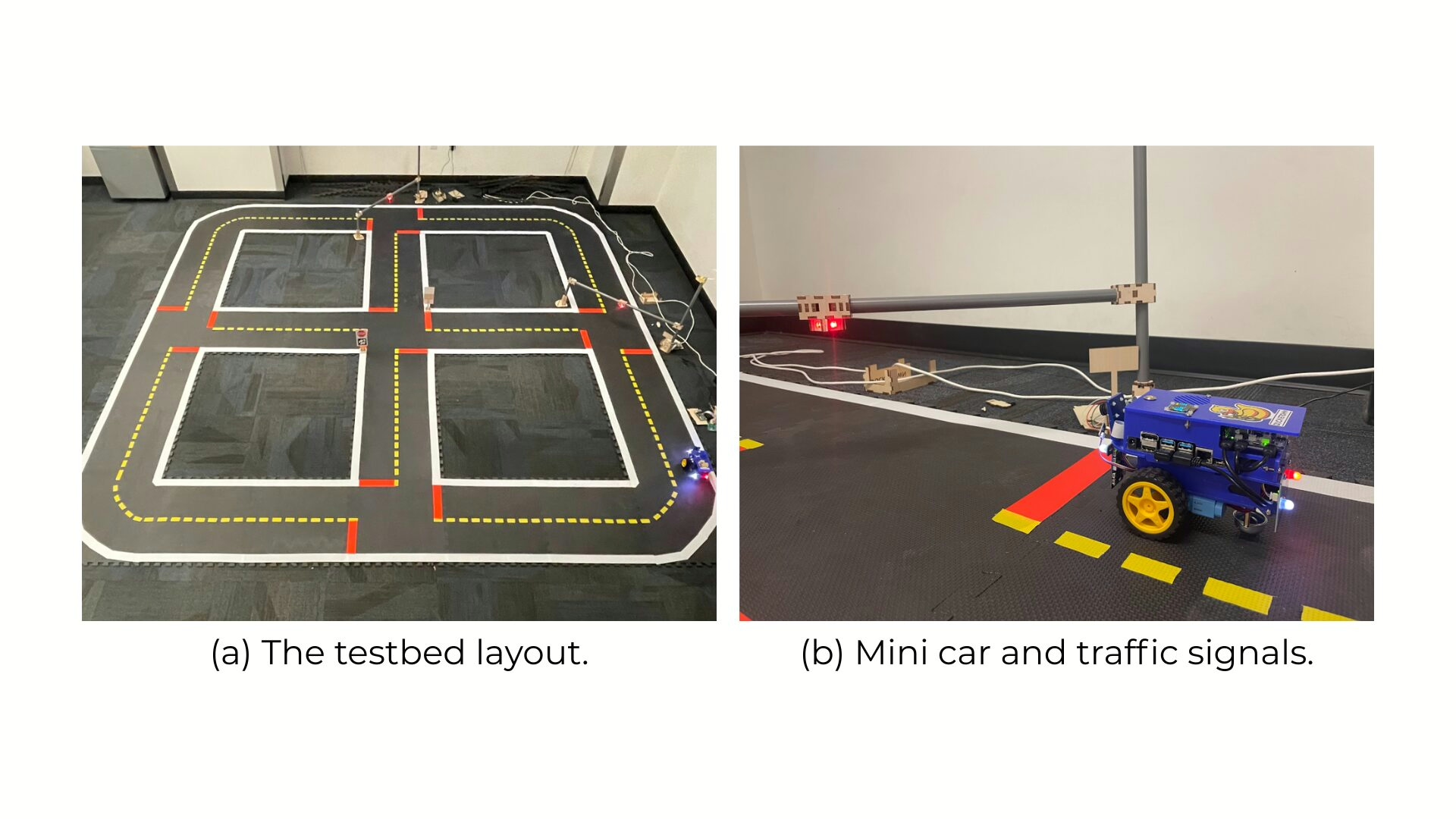

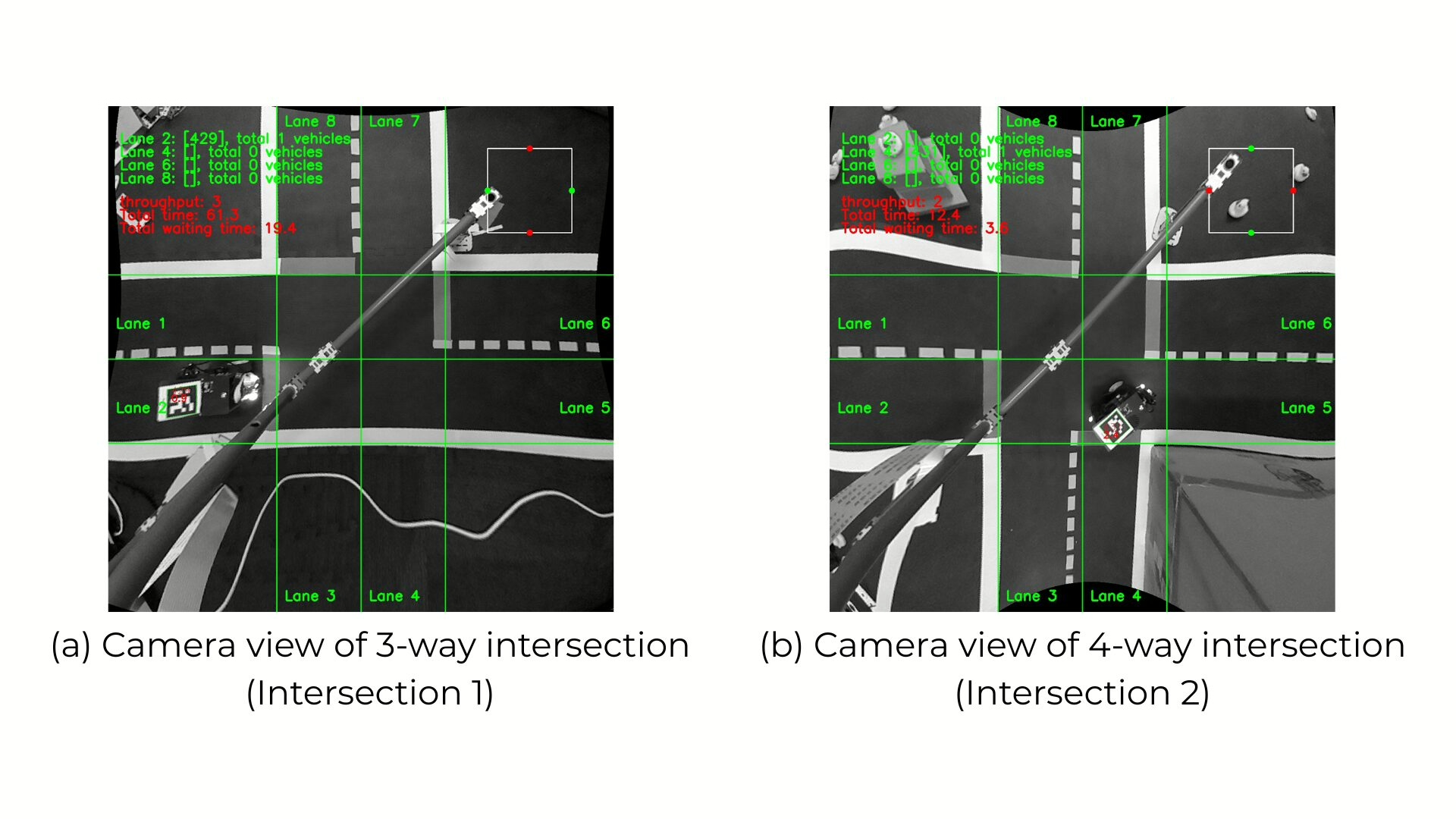

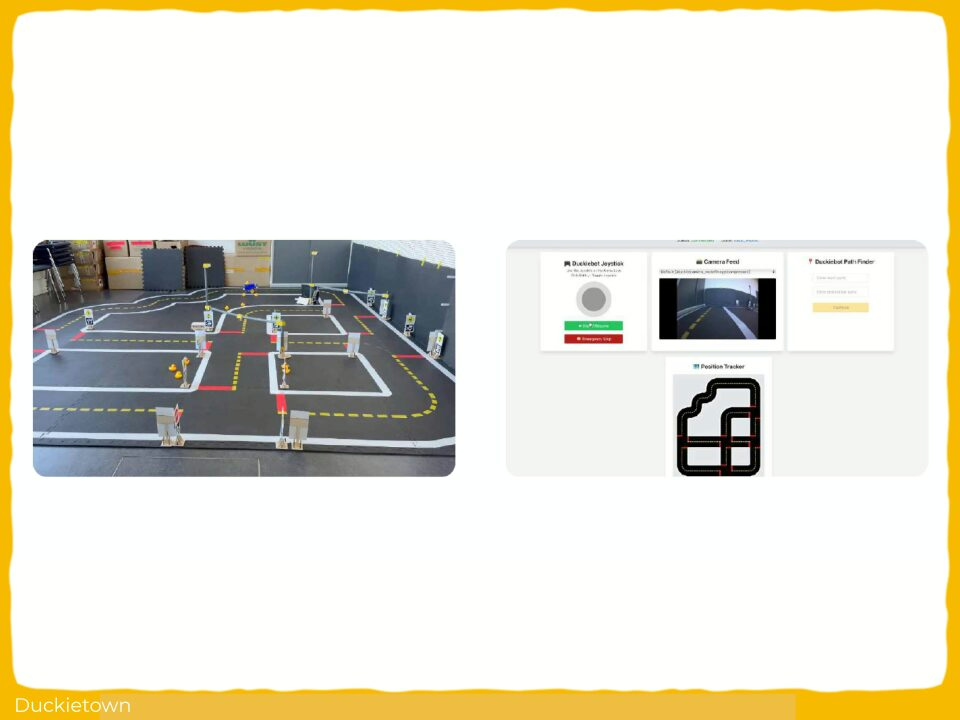

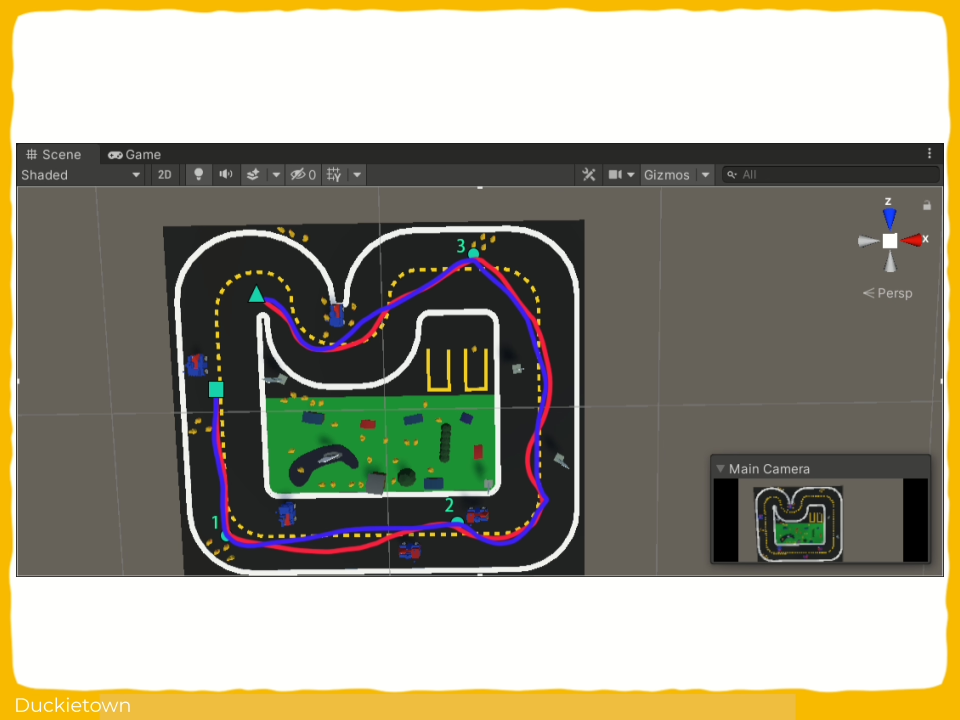

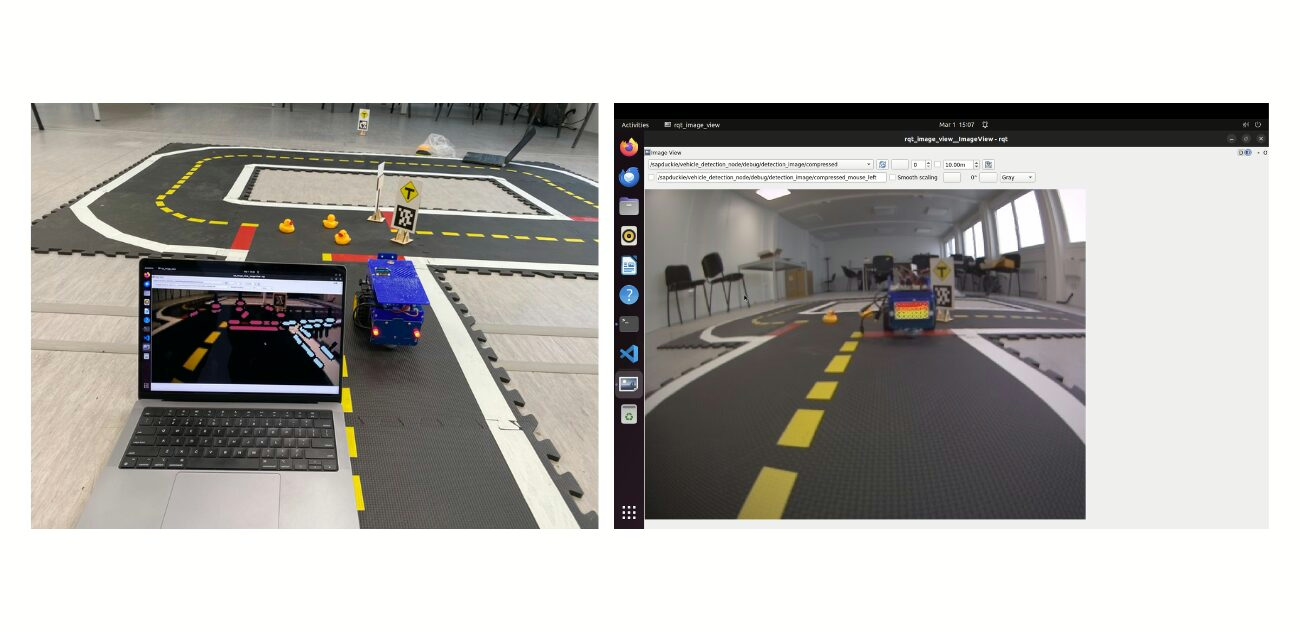

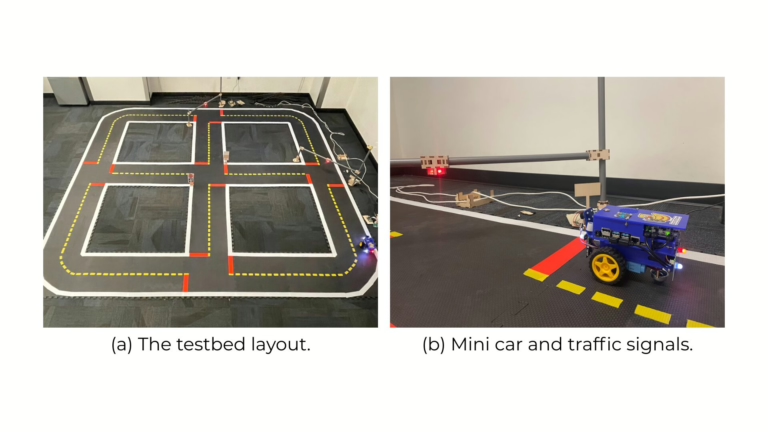

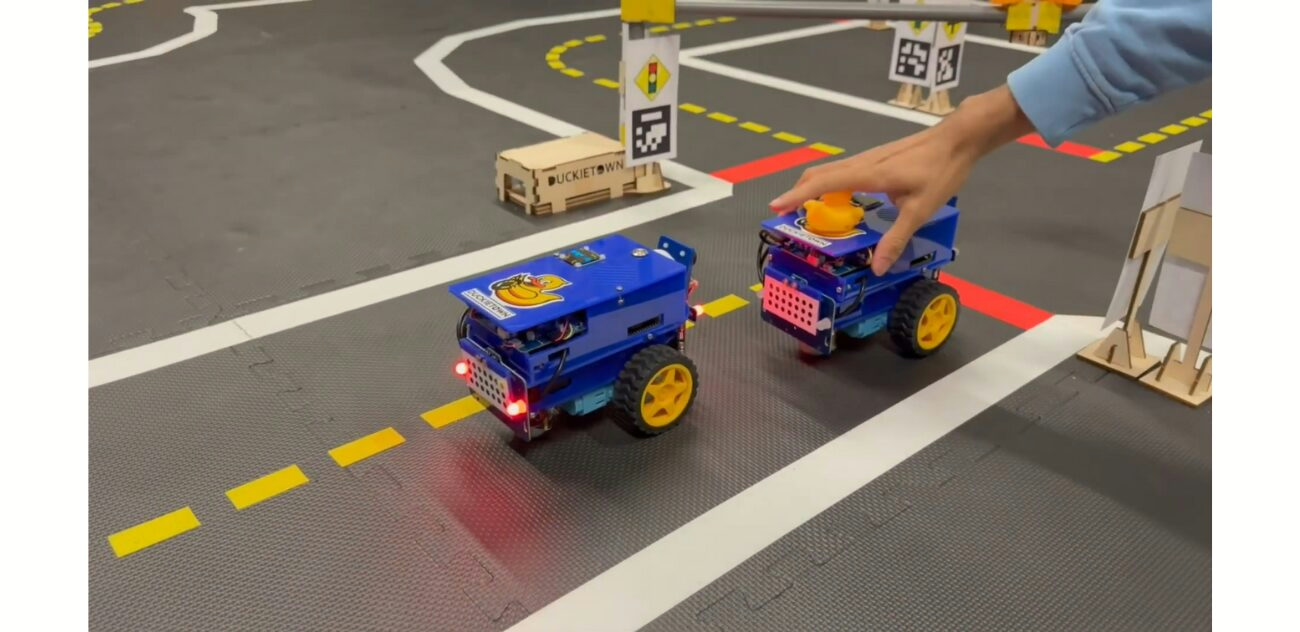

LibSignal++ is a Duckietown-based testbed for reproducible and low-cost sim-to-real evaluation of traffic signal control (TSC) algorithms. Using Duckietown enables consistent, small-scale deployment of both rule-based and learning-based TSC models.

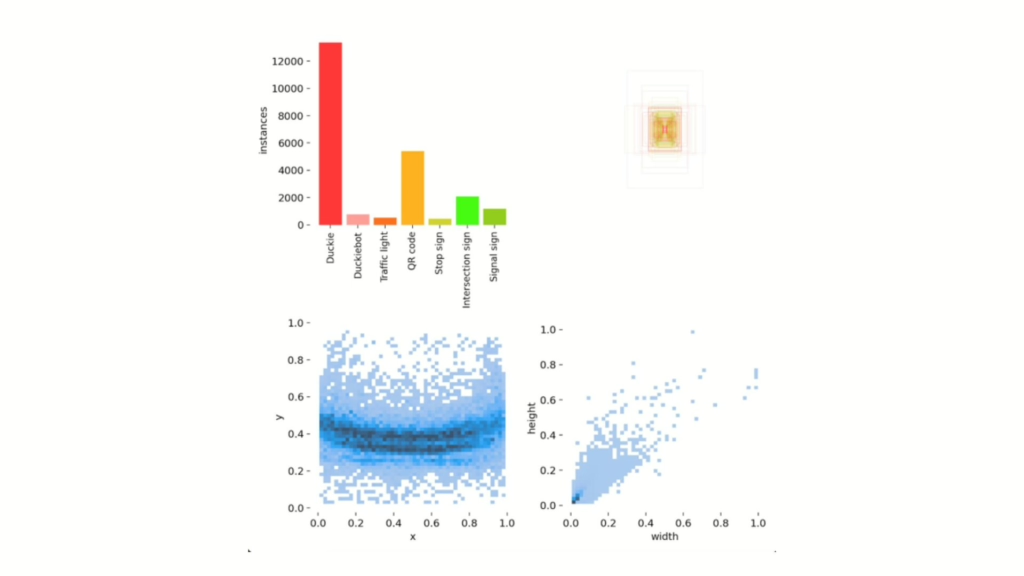

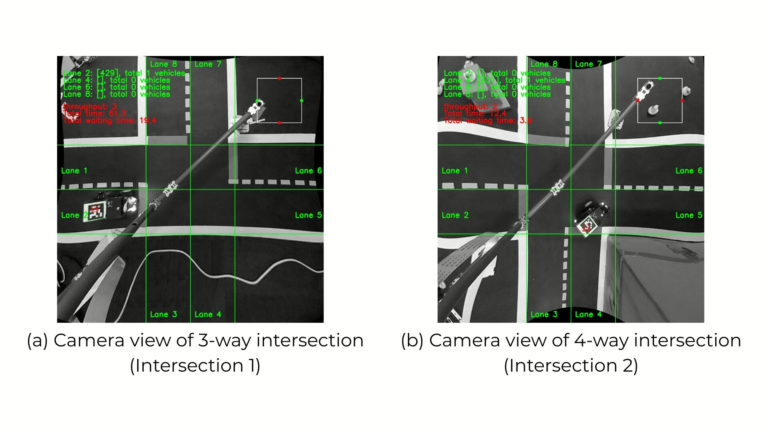

LibSignal++ integrates visual control through camera-based sensing and object detection via the YOLO-v5 model. It features modular components, including Duckiebots, signal controllers, and an indoor positioning system for accurate vehicle trajectory tracking. The testbed supports dynamic scenario replication by enabling both manual and automated manipulation of sensor inputs and road layouts.

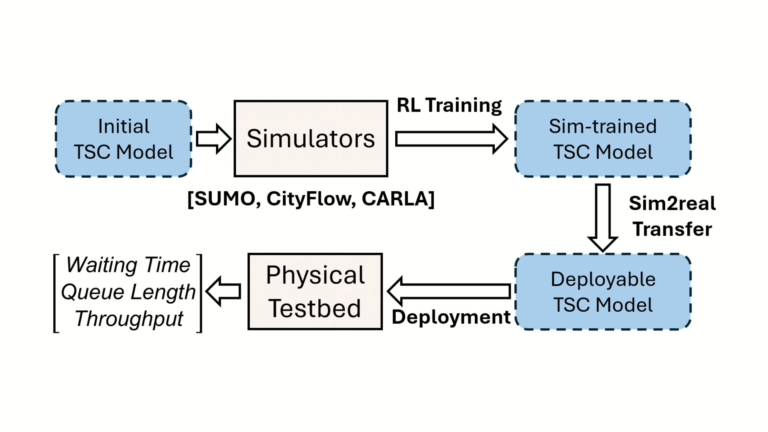

Key aspects of the research include:

- Sim-to-real pipeline for Reinforcement Learning (RL)-based traffic signal control training and deployment

- Multi-simulator training support with SUMO, CityFlow, and CARLA

- Reproducibility through standardized and controllable physical components

- Integration of real-world sensors and visual control systems

- Comparative evaluation using rule-based policies on 3-way and 4-way intersections

The work concludes with plans to extend to Machine Learning (ML)-based TSC models and further sim-to-real adaptation.

Highlights - Reproducible Sim-to-Real Traffic Signal Control Environment

Here is a visual tour of the sim-to-real work of the authors. For all the details, check out the full paper.

Abstract

Here is the abstract of the work, directly in the words of the authors:

This paper presents a unique sim-to-real assessment environment for traffic signal control (TSC), LibSignal++, featuring a 14-ft by 14-ft scaled-down physical replica of a real-world urban roadway equipped with realistic traffic sensors such as cameras, and actual traffic signal controllers. Besides, it is supported by a precise indoor positioning system to track the actual trajectories of vehicles. To generate various plausible physical conditions that are difficult to replicate with computer simulations, this system supports automatic sensor manipulation to mimic observation changes and also supports manual adjustment of physical traffic network settings to reflect the influence of dynamic changes on vehicle behaviors. This system will enable the assessment of traffic policies that are otherwise extremely difficult to simulate or infeasible for full-scale physical tests, providing a reproducible and low-cost environment for sim-to-real transfer research on traffic signal control problems.

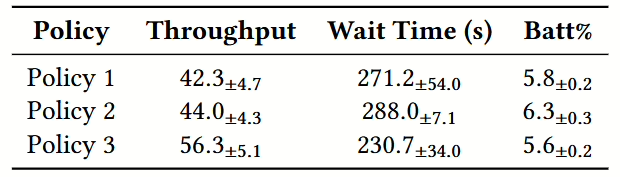

Results

Three traffic control policies were tested over repeated experiments, each time measuring traffic throughput, average vehicle waiting time, and vehicle battery consumption. Standard deviations for all policies were found to be within acceptable ranges, leading the authors to conclude that the testbed delivers reproducible results in controlled environments.

Did this work spark your curiosity?

Check out the following works on machine learning with Duckietown:

Project Authors

Yiran Zhang is associated with the Arizona State University, USA.

Khoa Vo is associated with the Arizona State University, USA.

Longchao Da is pursuing his Ph.D. at the Arizona State University, USA.

Tiejin Chen is pursuing his Ph.D. at the Arizona State University, USA.

Xiaoou Liu is pursuing her Ph.D. at the Arizona State University, USA.

Hua Wei is an Assistant Professor at the School of Computing and Augmented Intelligence, Arizona State University, USA.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.

Autonomous Navigation System Development in Duckietown


Project Resources

- Objective: Enable safe and reliable autonomous navigation for Duckiebots in Duckietowns.

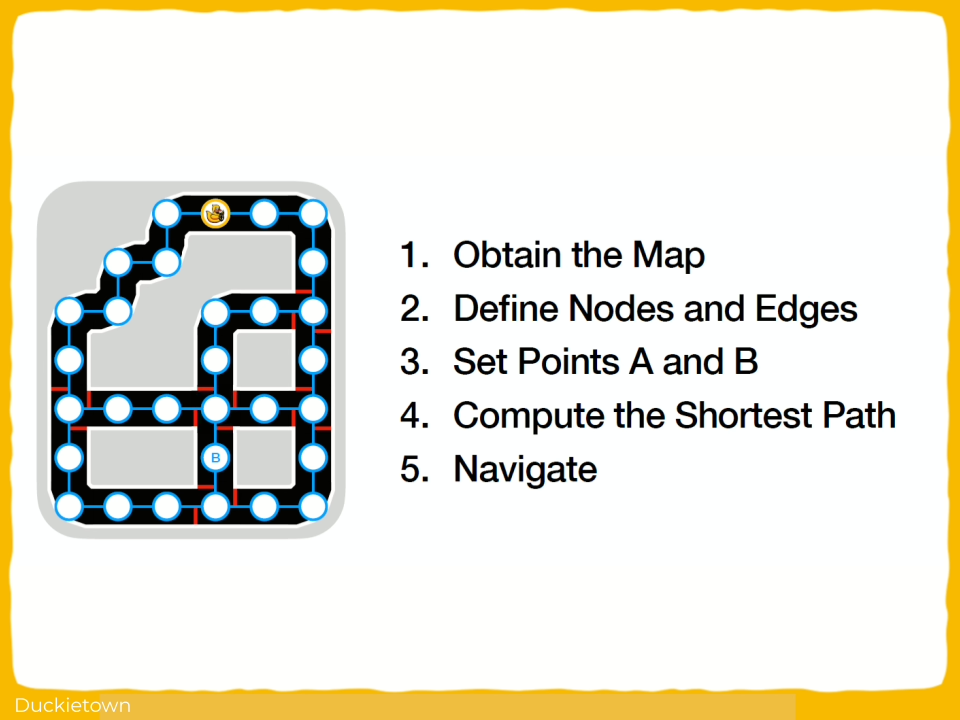

- Approach: Use onboard sensors, computer vision, and Dijkstra's algorithm to navigate lanes and intersections and to avoid obstacles.

- Authors: Julien-Alexandre Bertin Klein, Andrea Pellegrin, Fathia Ismail from the Technical University of Munich, Germany.

Project highlights

Autonomous Navigation System Development in Duckietown - the objectives

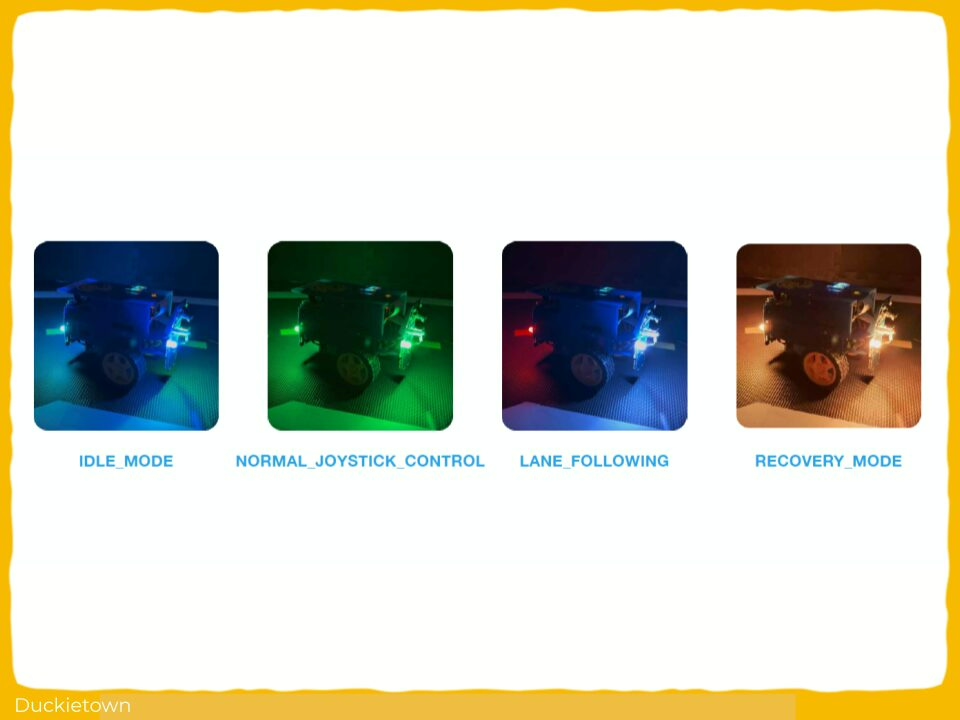

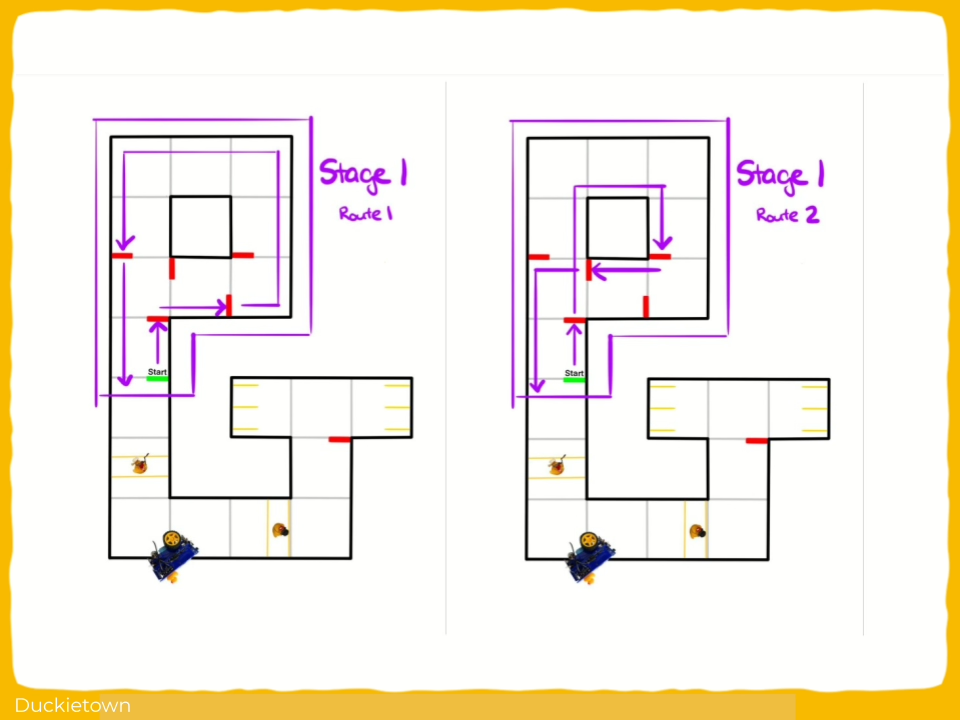

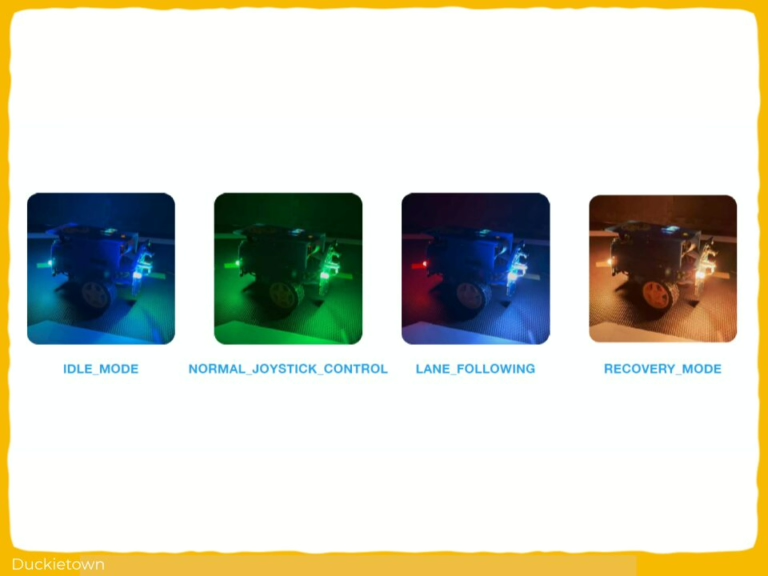

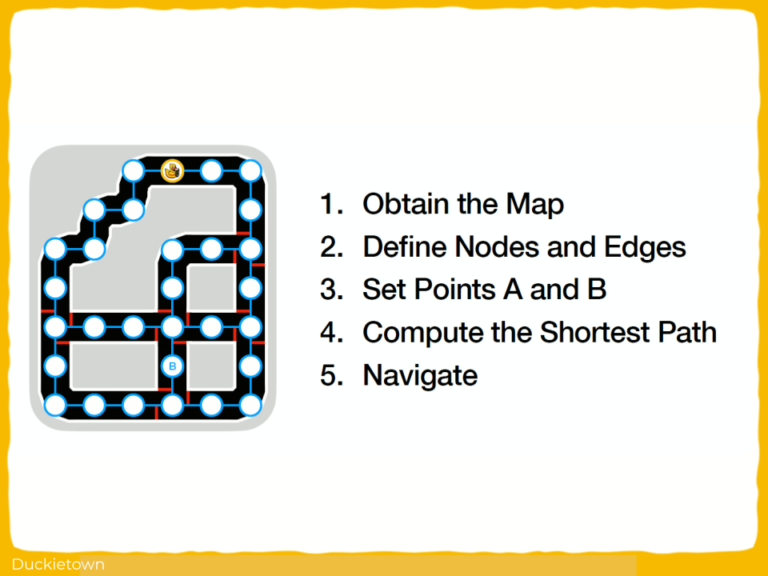

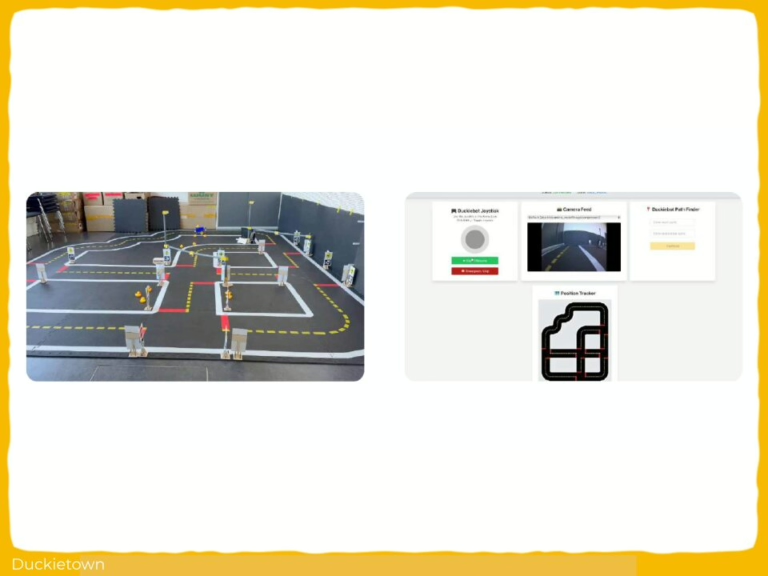

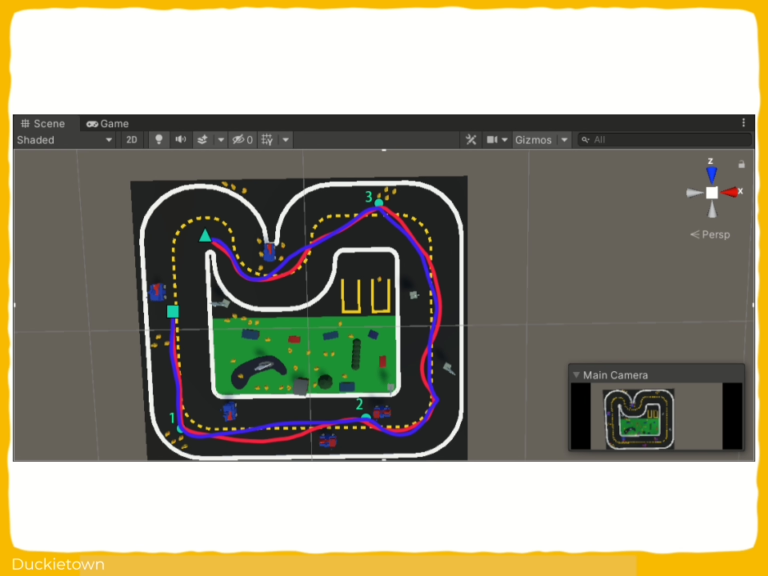

The primary objective of this project is to develop and refine an Autonomous Navigation System within the Duckietown environment, leveraging ROS-based control and computer vision to enable reliable lane following and safe intersection navigation. This includes calibrating sensor inputs, particularly from the camera, IMU, and encoders, and integrating advanced algorithms such as Dijkstra's algorithm for optimal path planning. The project aims to ensure that the Duckiebot can autonomously detect lanes, stop lines, and obstacles while dynamically computing the shortest path to any designated point within the mapped environment. Additionally, the system is designed to transition smoothly between operational states (lane following, intersection handling, and recovery) using a refined Finite State Machine approach, all while maintaining robust communication within the ROS ecosystem.

Project Report

The challenges and approach

The project faced several challenges, beginning with hardware constraints such as limited wheel traction and battery life, which affected motion stability and operating time. Integrating various ROS packages, some with incomplete documentation and inconsistent coding practices, complicated the development of a reliable and maintainable codebase.

The approach started with precise sensor calibration to ensure accurate perception and control: camera intrinsic and extrinsic calibration for improved visual data interpretation, and wheel parameter adjustment to maintain balanced motion. The lane following module required tuning of the gain, trim, and heading correction parameters to adapt to the Duckietown environment. The original FSM-based intersection navigation system proved unreliable in its node transitions and was replaced with a distance-based approach for intersection stops and turns, giving deterministic and repeatable behavior.

The city map was represented as a graph, and Dijkstra's algorithm was run on it to plan paths dynamically in response to real-time inputs from the perception system. Custom web dashboards built with React.js and roslibjs facilitated monitoring and debugging by providing live data feedback and control interfaces. Through this iterative process, the project achieved an autonomous navigation system capable of path planning and safe maneuvering within Duckietown.
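For readers unfamiliar with Dijkstra's algorithm, here is a compact sketch over a dictionary-based graph. The graph encoding (node to neighbor to edge cost) and the function name `shortest_path` are assumptions for illustration and do not reflect the project's data structures.

```python
import heapq

def shortest_path(graph, start, goal):
    """Dijkstra's algorithm on a graph with non-negative edge costs.

    graph -- dict mapping node -> dict of neighbor -> cost
    Returns (path, cost), or (None, inf) if the goal is unreachable.
    """
    dist = {start: 0.0}
    prev = {}
    queue = [(0.0, start)]
    visited = set()
    while queue:
        d, node = heapq.heappop(queue)
        if node in visited:
            continue  # stale queue entry
        visited.add(node)
        if node == goal:
            break
        for neighbor, cost in graph.get(node, {}).items():
            new_dist = d + cost
            if new_dist < dist.get(neighbor, float("inf")):
                dist[neighbor] = new_dist
                prev[neighbor] = node
                heapq.heappush(queue, (new_dist, neighbor))
    if goal not in dist:
        return None, float("inf")
    # Walk the predecessor links back from the goal.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return path, dist[goal]
```

In a Duckietown map, nodes could stand for intersections and edge costs for lane segment lengths, so that replanning amounts to calling the function again from the Duckiebot's current node.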

Did this work spark your curiosity?

Check out the following works on path planning with Duckietown:

Autonomous Navigation System Development in Duckietown: Authors

Julien-Alexandre Bertin Klein is currently a Bachelor of Science (BSc.) student in Information Engineering at the Technical University of Munich, Germany.

Andrea Pellegrin is currently a Bachelor of Science (BSc.) student in Information Engineering at the Technical University of Munich, Germany.

Fathia Ismail is currently a Bachelor of Science (BSc.) student in Information Engineering at the Technical University of Munich, Germany.

Learn more

Duckietown is a modular, customizable, and state-of-the-art platform for creating and disseminating robotics and AI learning experiences.

Duckietown is designed to teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge.

These spotlight projects are shared to exemplify Duckietown’s value for hands-on learning in robotics and AI, enabling students to apply theoretical concepts to practical challenges in autonomous robotics, boosting competence and job prospects.

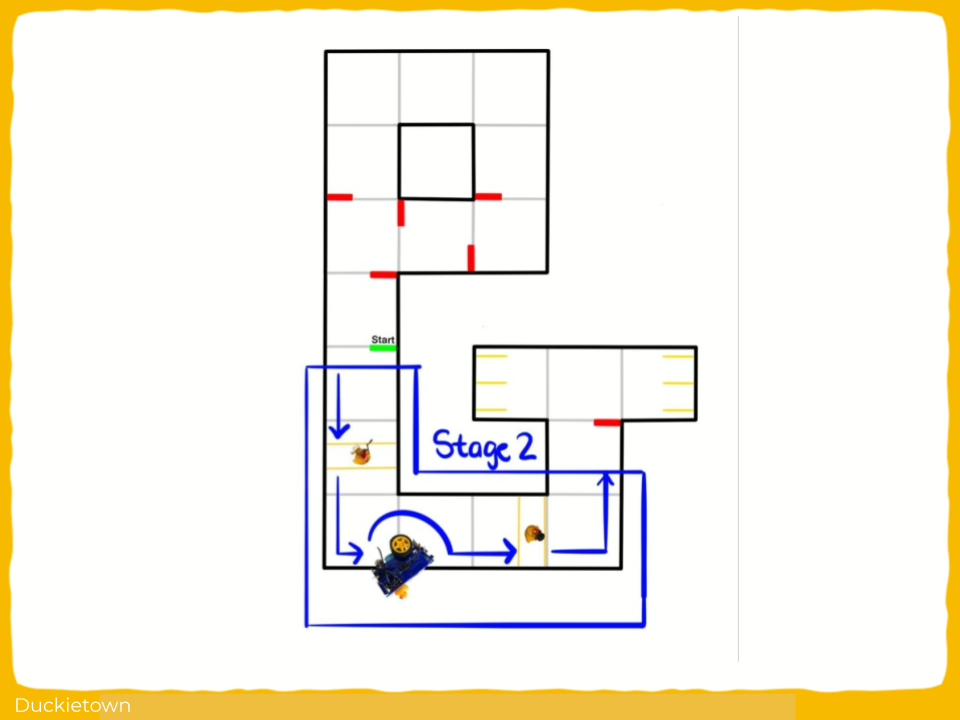

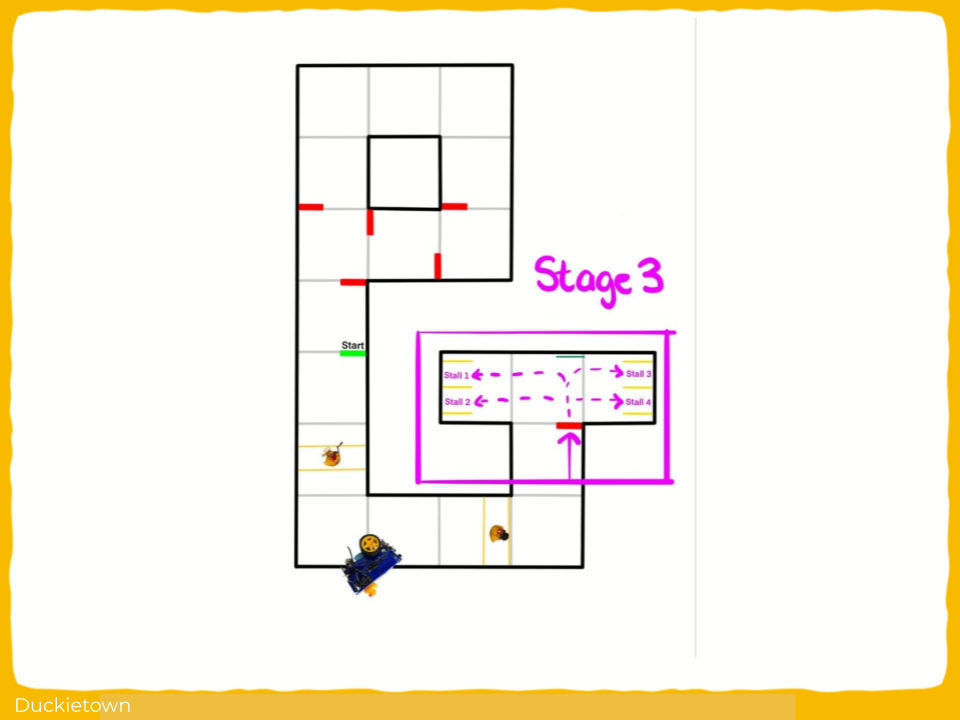

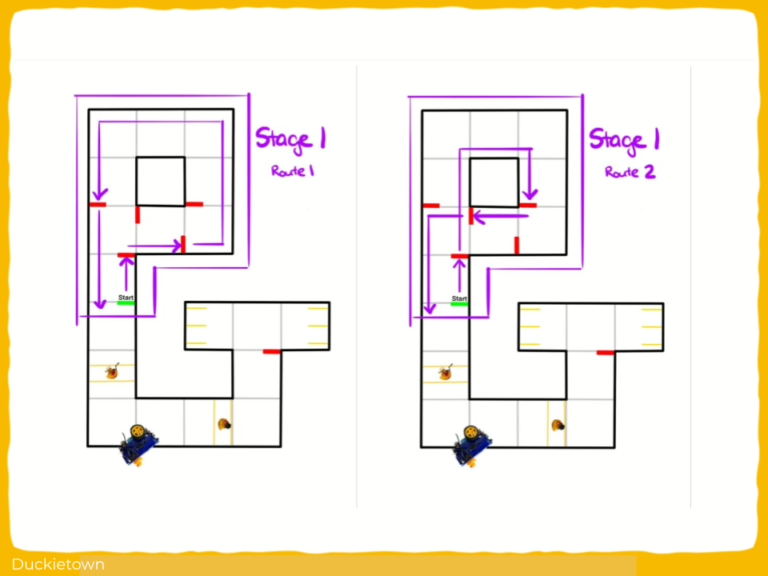

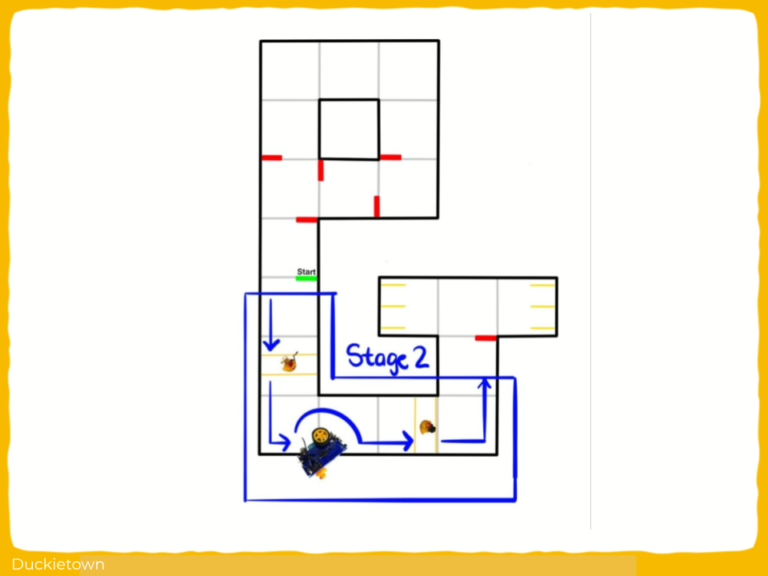

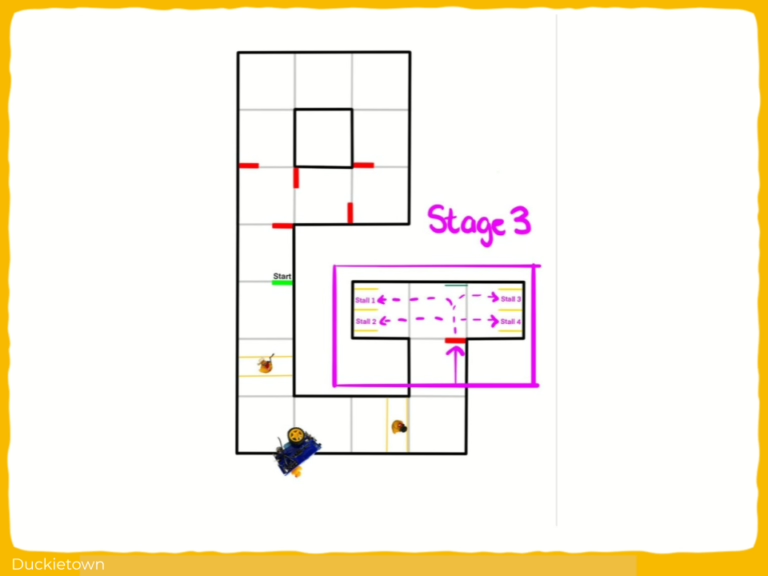

Autonomous Navigation and Parking in Duckietown

Project Resources

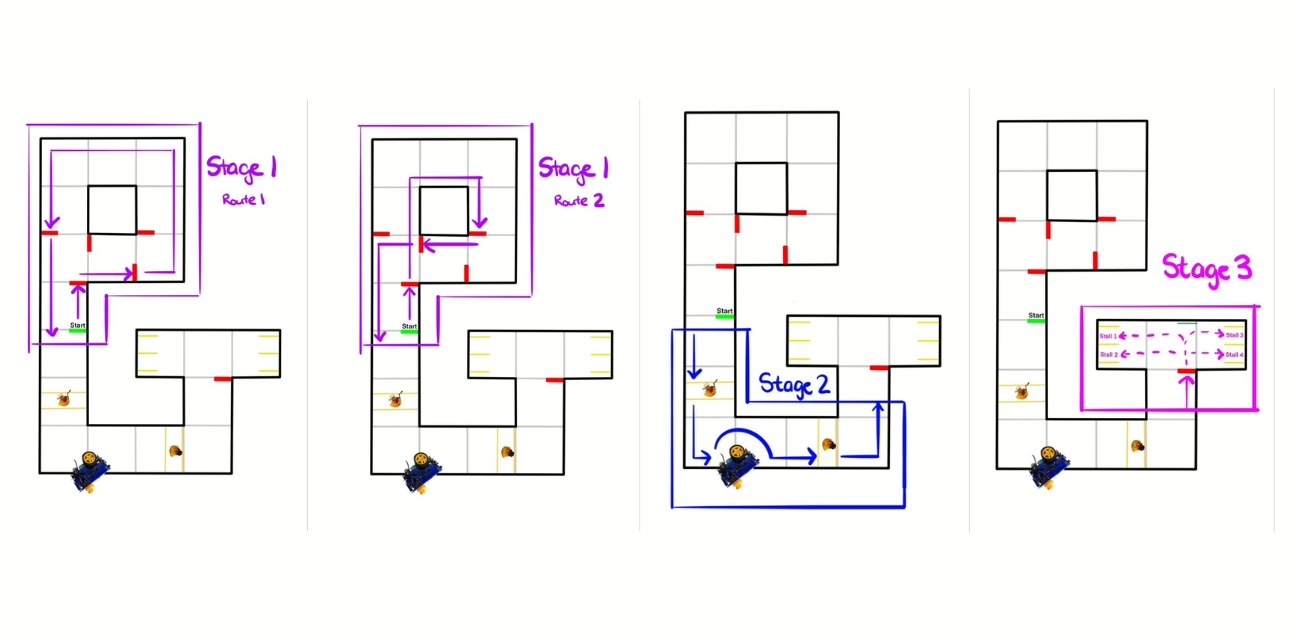

- Objective: Achieve autonomous navigation and parking in an intersection-equipped Duckietown city.

- Approach: Utilizing an FSM to transition between PID control-based lane following, intersection navigation, and parking using visual fiducial markers (AprilTags), color masking, and dead reckoning.

- Authors: Eric Khumbata, Cameron Hildebrandt, Jasper Eng from the University of Alberta.

- Course: This project is part of the CMPUT 412 Experimental Mobile Robotics course at the University of Alberta under Prof. Matthew E. Taylor.

Project highlights

Static parameters in a dynamic environment are pre-programmed failure points.

Autonomous Navigation and Parking in Duckietown: the objectives

The system uses wheel encoder data for dead-reckoning-based motion execution in the absence of visual cues, and applies HSV-based color segmentation to detect and respond to static and dynamic obstacles. Visual servoing is used for parking alignment based on AprilTag localization. The control logic is modular and supports parameter tuning for hardware variability, with temporal filtering to suppress redundant detections and ensure stability.
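As an illustration of the temporal filtering idea, the sketch below accepts a tag only after it has been seen in several consecutive frames and then ignores it for a cooldown period. The class name `DetectionDebouncer` and the `min_hits` and `cooldown` values are assumptions made for this example; the project's actual filter may work differently.

```python
class DetectionDebouncer:
    """Suppress spurious and redundant tag detections over time."""

    def __init__(self, min_hits=3, cooldown=2.0):
        self.min_hits = min_hits    # consecutive frames required to accept a tag
        self.cooldown = cooldown    # seconds during which a tag cannot re-trigger
        self._hits = {}             # tag_id -> current consecutive-frame streak
        self._last_fired = {}       # tag_id -> time of the last accepted event

    def update(self, visible_ids, now):
        """Feed the tag IDs seen in this frame; return IDs accepted as new events."""
        visible = set(visible_ids)
        # A tag missing from this frame loses its streak.
        for tag_id in list(self._hits):
            if tag_id not in visible:
                del self._hits[tag_id]
        accepted = []
        for tag_id in sorted(visible):
            self._hits[tag_id] = self._hits.get(tag_id, 0) + 1
            last = self._last_fired.get(tag_id, float("-inf"))
            in_cooldown = (now - last) < self.cooldown
            if self._hits[tag_id] >= self.min_hits and not in_cooldown:
                self._last_fired[tag_id] = now
                accepted.append(tag_id)
        return accepted


debouncer = DetectionDebouncer(min_hits=3, cooldown=2.0)
events = [debouncer.update([7], t) for t in (0.0, 0.1, 0.2, 0.3)]
```

With these settings, tag 7 is reported once, on the third consecutive frame, and the fourth frame is suppressed by the cooldown.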

The challenges and approach

The key technical issues included inconsistent AprilTag detection due to motion blur and multiple redundant detections, which were mitigated using temporal filtering. PID control was used for continuous lane following, with dead reckoning based on wheel encoder data for intersection traversal when visual input was unreliable.
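For readers new to PID control, a minimal controller could look like the sketch below. It assumes the error is the lateral offset from the lane center and the output is a steering command; the gains, the output limit, and the `PID` class itself are hypothetical and not taken from the project's code.

```python
class PID:
    """Textbook PID controller with output clamping."""

    def __init__(self, kp, ki, kd, output_limit):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.output_limit = output_limit
        self._integral = 0.0
        self._prev_error = None  # no derivative term on the first step

    def step(self, error, dt):
        """Return the control command for the current error and time step dt."""
        self._integral += error * dt
        if self._prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self._prev_error) / dt
        self._prev_error = error
        command = (self.kp * error
                   + self.ki * self._integral
                   + self.kd * derivative)
        # Clamp so that a large error cannot saturate the motors.
        return max(-self.output_limit, min(self.output_limit, command))


# Hypothetical gains: proportional only, steering limited to [-1, 1].
controller = PID(kp=2.0, ki=0.0, kd=0.0, output_limit=1.0)
steer_small = controller.step(error=0.1, dt=0.05)   # proportional response
steer_large = controller.step(error=10.0, dt=0.05)  # clamped to the limit
```

During intersection traversal, where the lane markings are not visible, the controller would be bypassed in favor of encoder-based dead reckoning as described above.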

Obstacle detection and stopping mechanisms used HSV-based color segmentation to identify static and dynamic objects in the environment. In the parking stage, AprilTag-based localization and visual servoing were used to achieve stall alignment. The system was modular, with state-driven control logic managing transitions between lane following, intersection handling, obstacle detection, and parking.
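HSV-based color segmentation can be sketched with the Python standard library alone. The thresholds, the tiny test image, and the function names below are assumptions for illustration; a real pipeline would typically run on full camera frames with an image-processing library.

```python
import colorsys


def hsv_mask(image, h_min, h_max, s_min, v_min):
    """Return a boolean mask marking pixels inside the given HSV range.

    image is a list of rows of (r, g, b) tuples with 0-255 channels.
    Hue, saturation, and value thresholds are all in the range [0, 1].
    """
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            mask_row.append(h_min <= h <= h_max and s >= s_min and v >= v_min)
        mask.append(mask_row)
    return mask


def should_stop(mask, min_fraction):
    """Signal a stop when enough of the frame matches the obstacle color."""
    total = sum(len(row) for row in mask)
    hits = sum(sum(row) for row in mask)
    return total > 0 and hits / total >= min_fraction


# Hypothetical 2x2 frame: two yellowish pixels, one gray, one red.
frame = [
    [(255, 255, 0), (128, 128, 128)],
    [(250, 240, 10), (255, 0, 0)],
]
yellow = hsv_mask(frame, h_min=0.10, h_max=0.20, s_min=0.5, v_min=0.5)
```

Working in HSV rather than RGB keeps the hue threshold fairly stable under brightness changes, which is why it is a common choice for detecting colored objects on the road.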

Did this work spark your curiosity?

Check out the following works on path planning with Duckietown:

Autonomous Navigation and Parking in Duckietown: Authors

Eric Khumbata is working as a Computer Engineer at TELUS, a Canadian telecommunications company.

Jasper Eng is currently working as a Summer Research Intern at the BLINC Lab.

Cameron Hildebrandt is currently working as a Fullstack Developer at Bitcoin Well, Canada.

Learn more

Duckietown is a capable, affordable, and reliable platform for creating and disseminating robotics and AI learning experiences.

Duckietown is designed to teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge.

These spotlight projects are shared to exemplify Duckietown’s value for hands-on learning in robotics and AI, enabling students to apply theoretical concepts to practical challenges in autonomous robotics, boosting competence and job prospects.

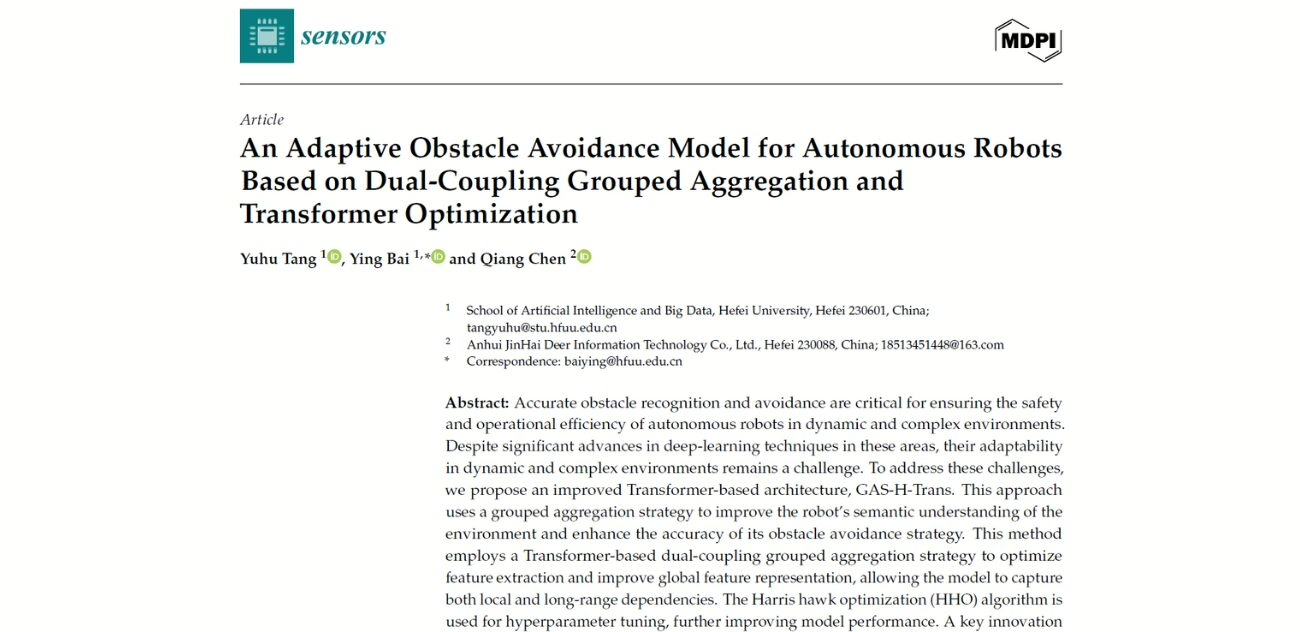

Transformer Visual Control for Dynamic Obstacle Avoidance

General Information

- Title: An Adaptive Obstacle Avoidance Model for Autonomous Robots Based on Dual-Coupling Grouped Aggregation and Transformer Optimization

- Authors: Yuhu Tang, Ying Bai, and Qiang Chen

- Institution: Hefei University, China

- Citation: Tang, Y., Bai, Y., & Chen, Q. (2025). An Adaptive Obstacle Avoidance Model for Autonomous Robots Based on Dual-Coupling Grouped Aggregation and Transformer Optimization. Sensors, 25(6), 1839. https://doi.org/10.3390/s25061839

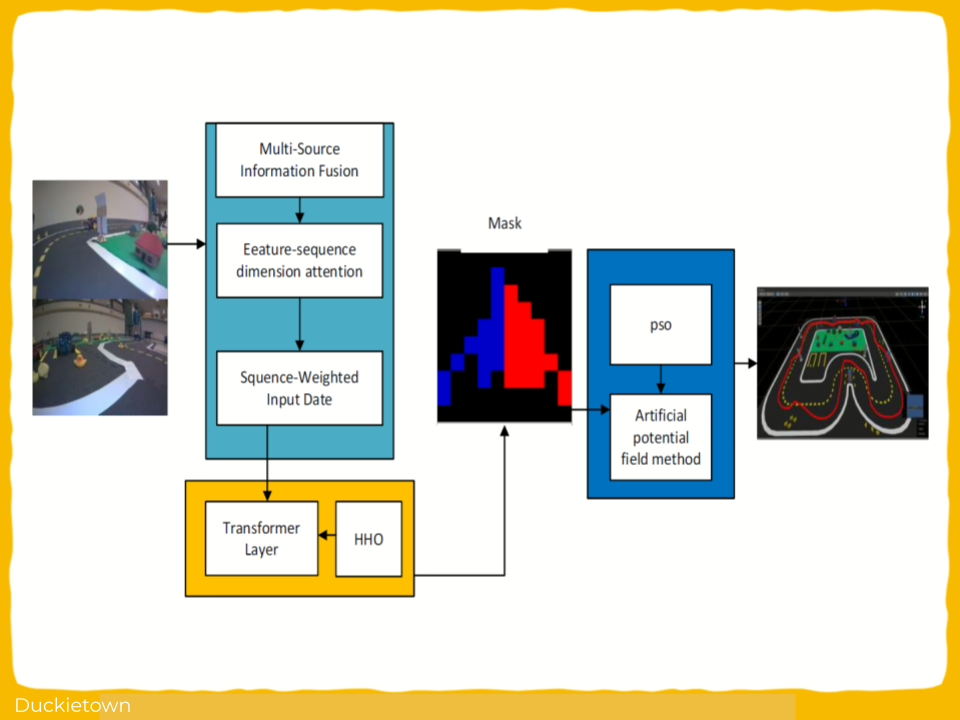

Transformer Visual Control for Dynamic Obstacle Avoidance

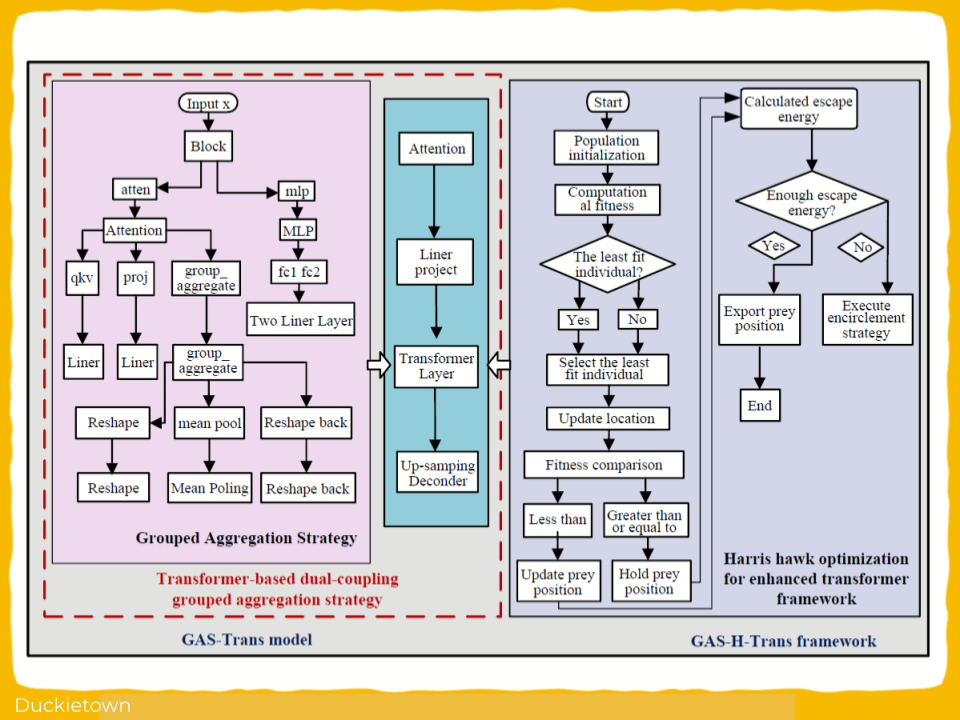

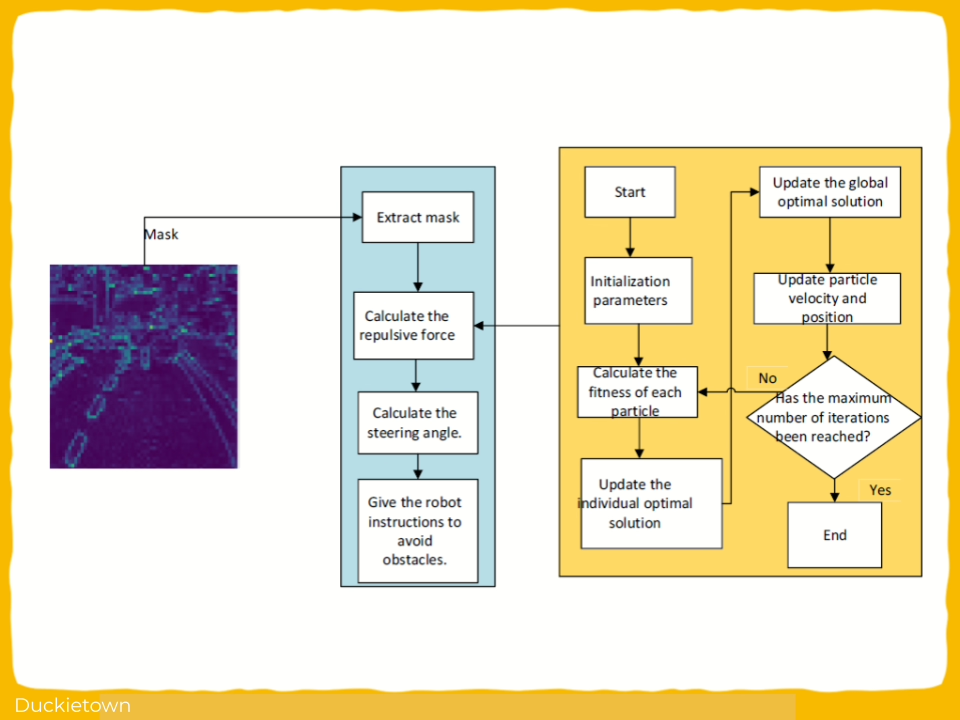

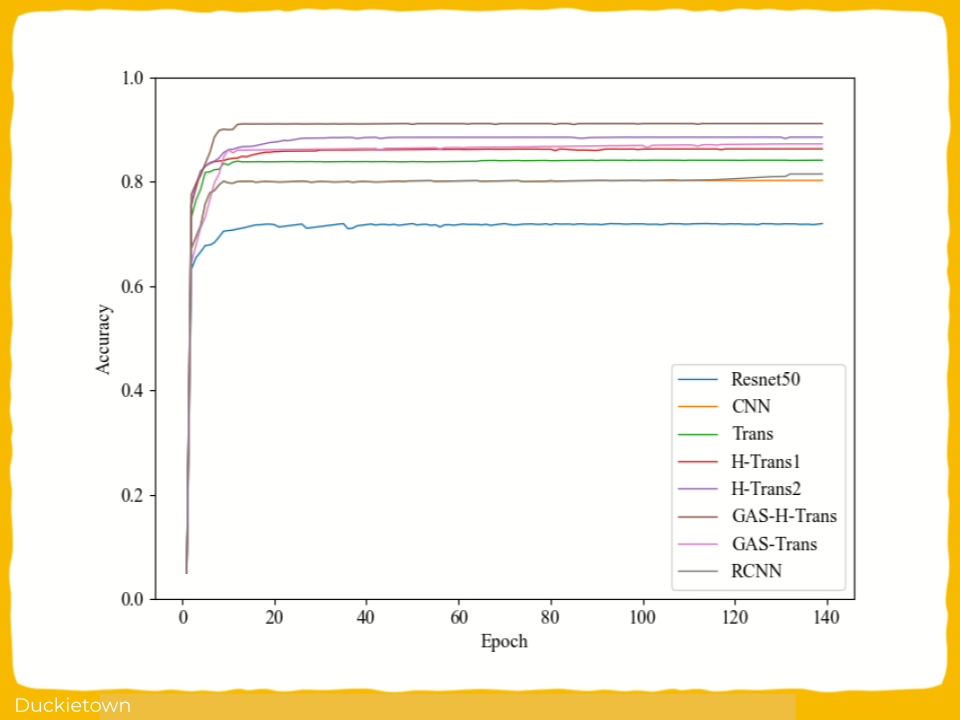

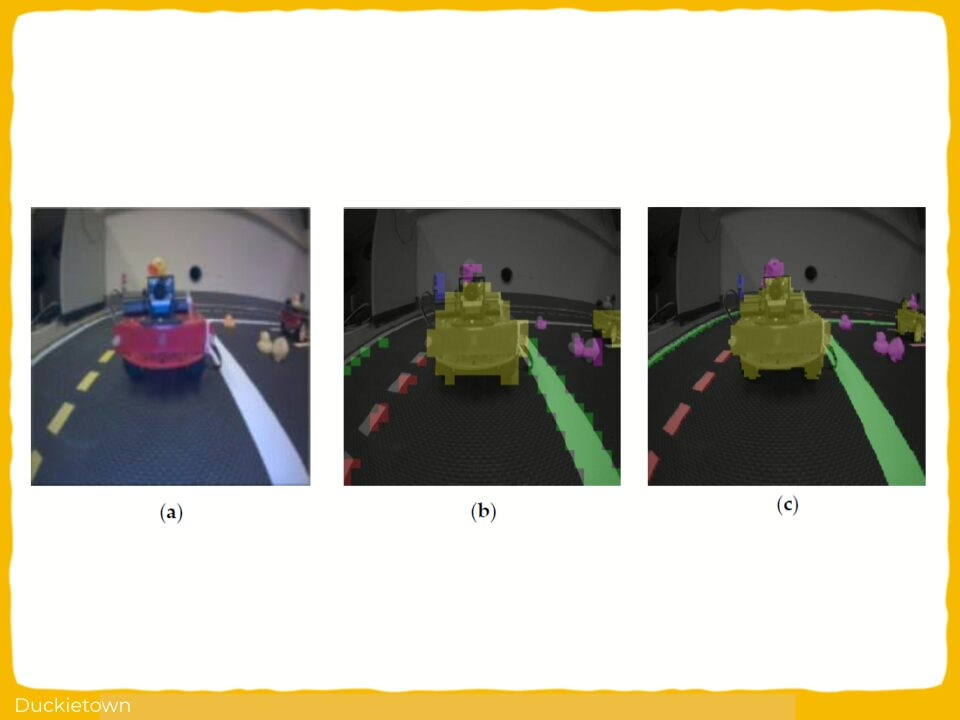

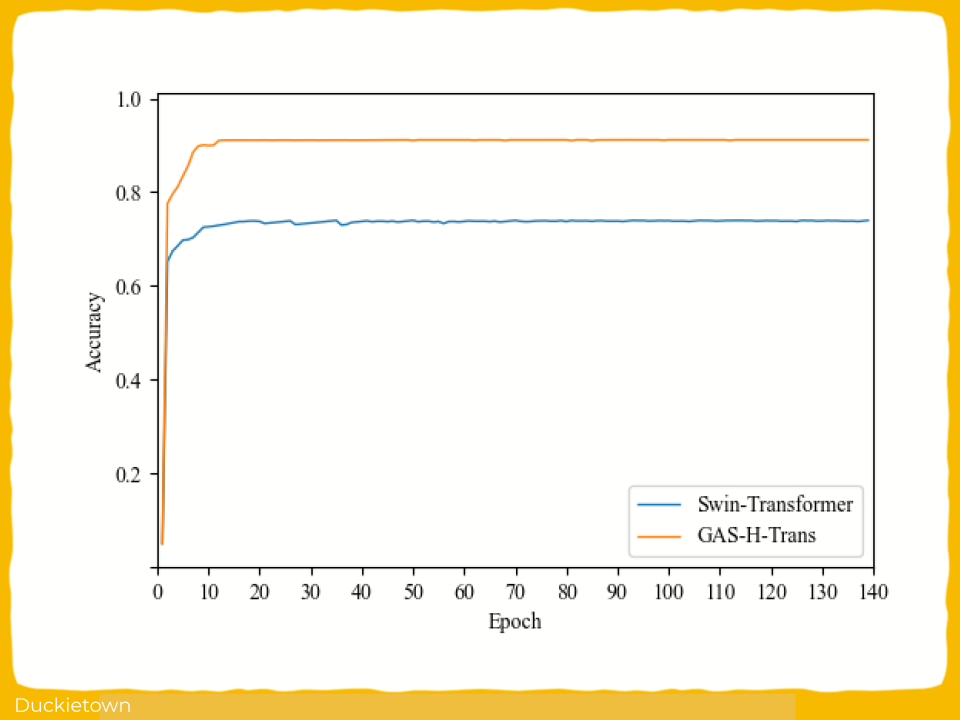

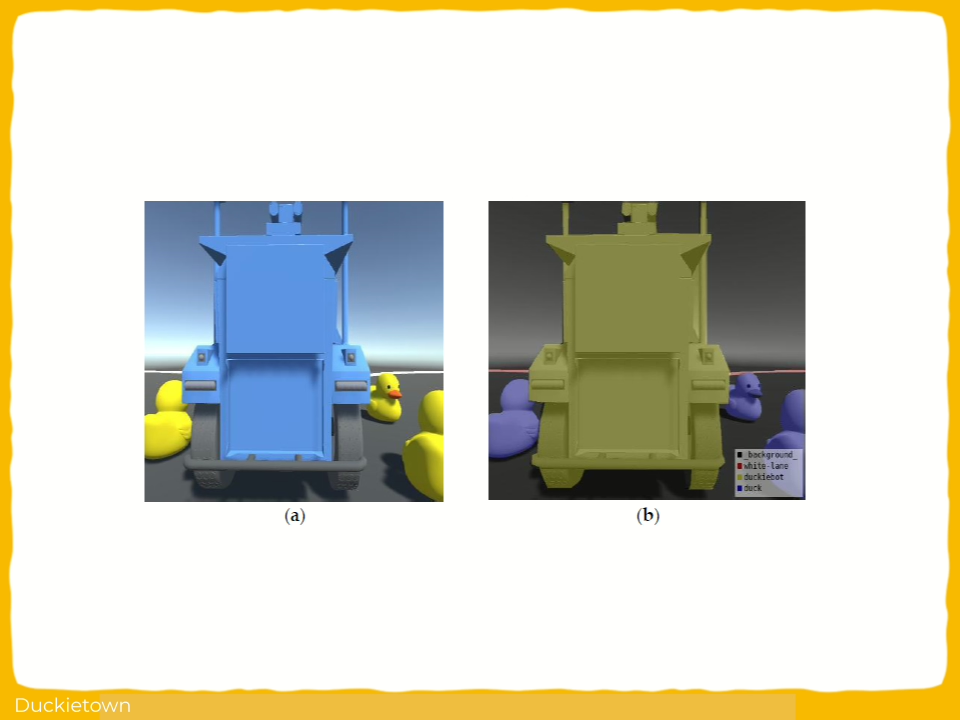

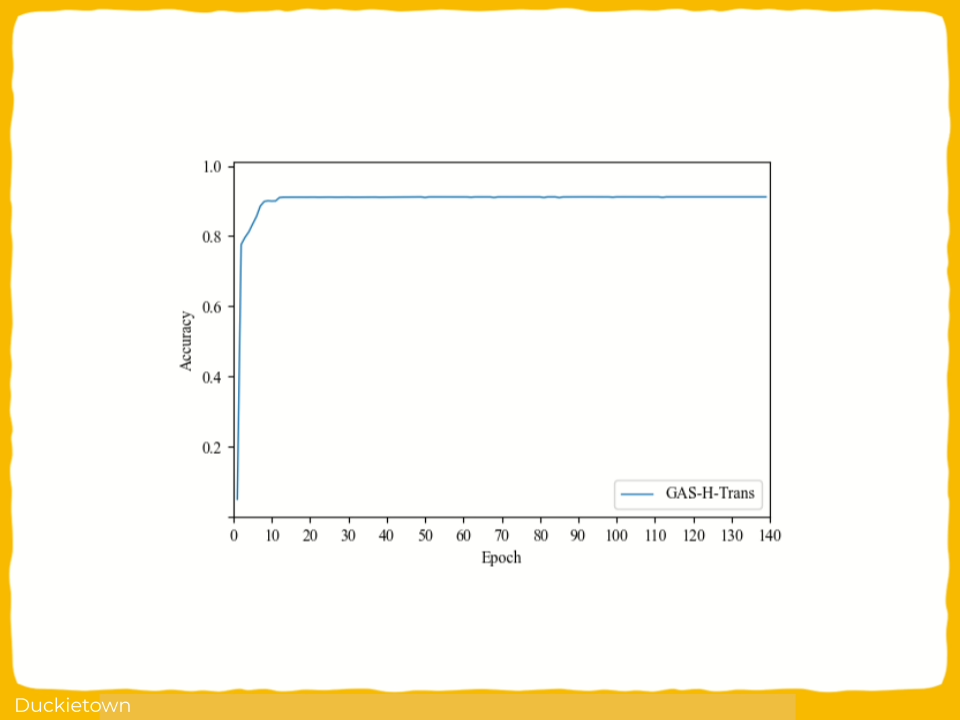

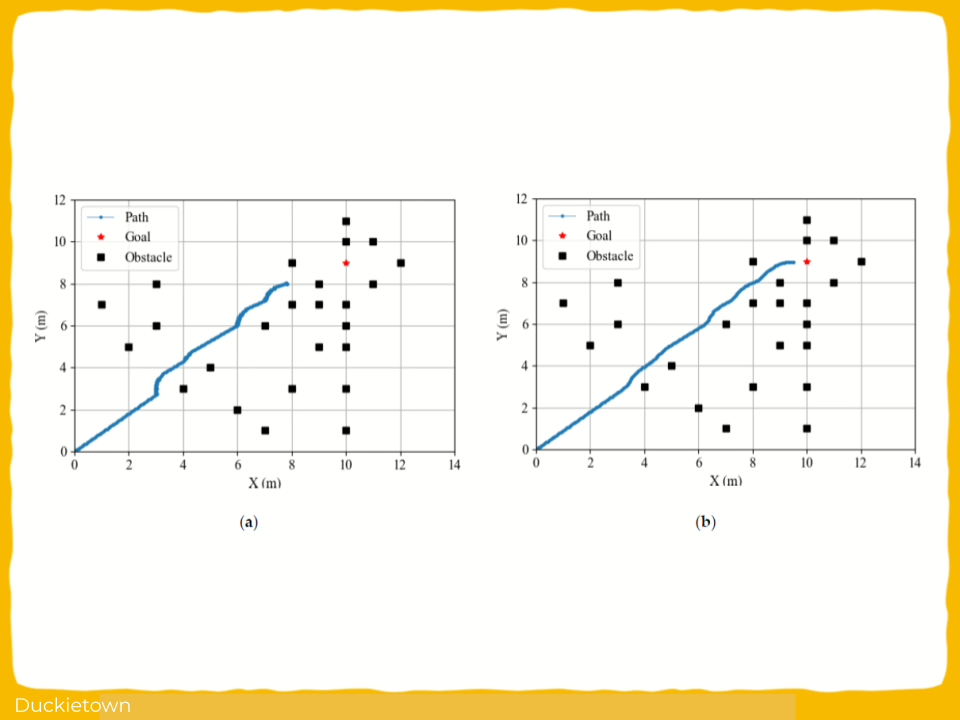

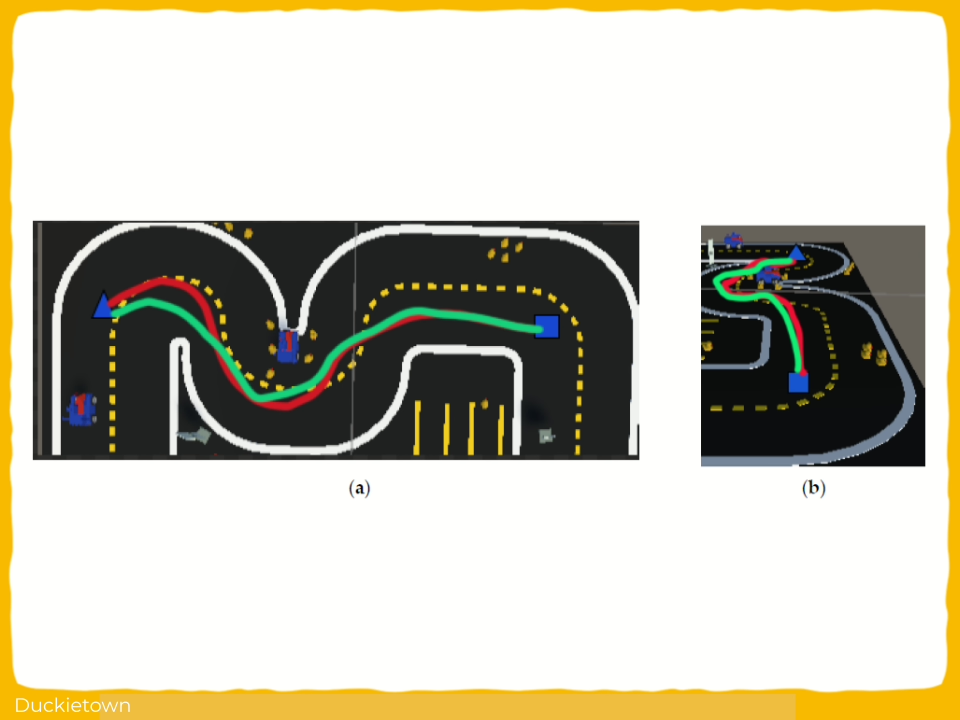

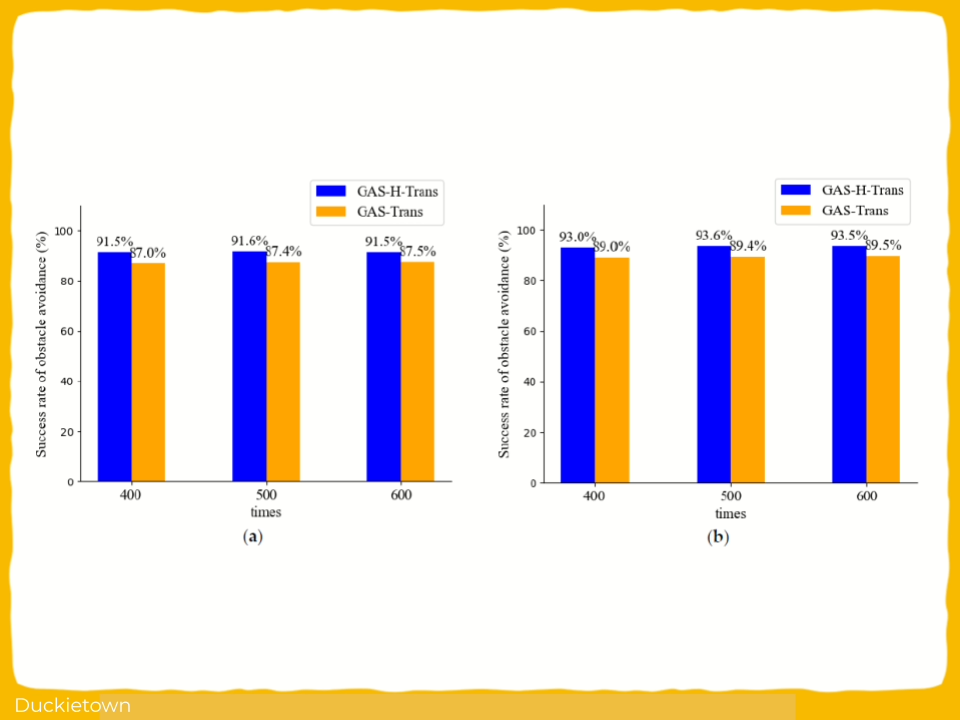

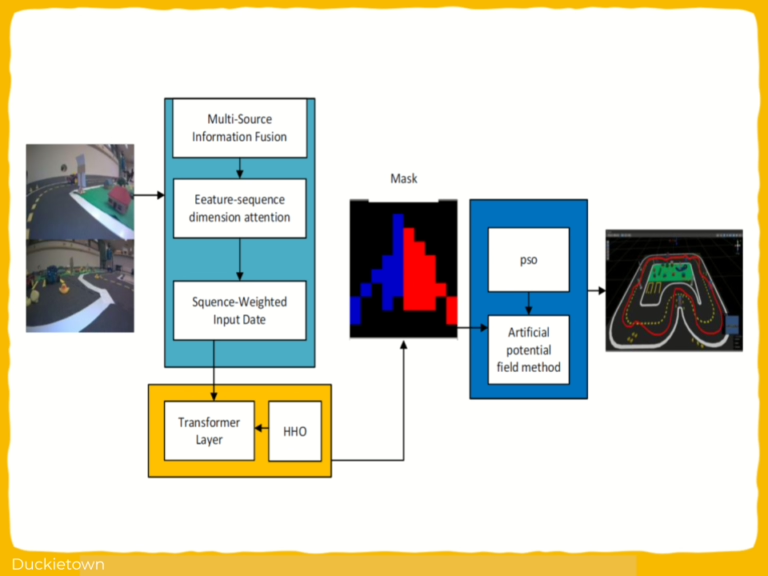

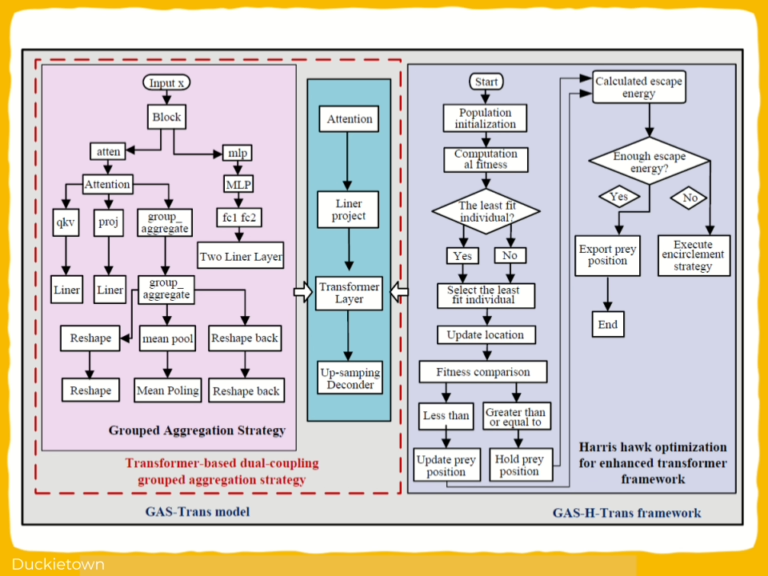

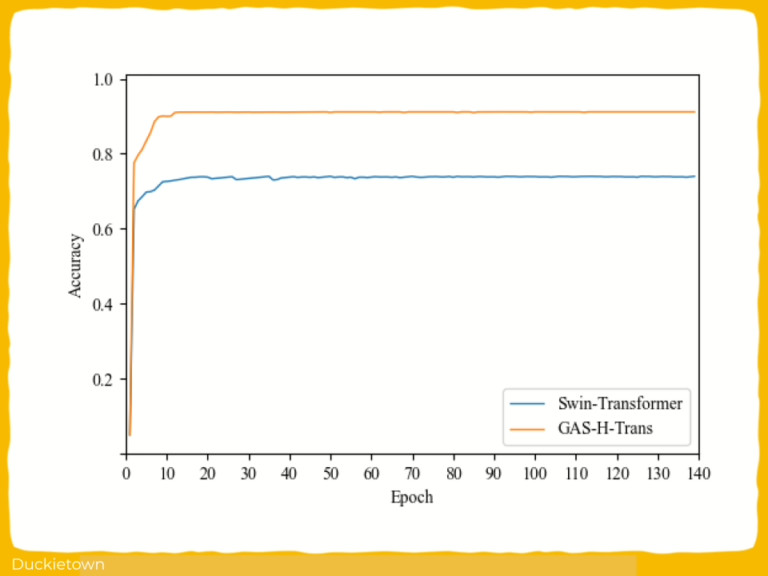

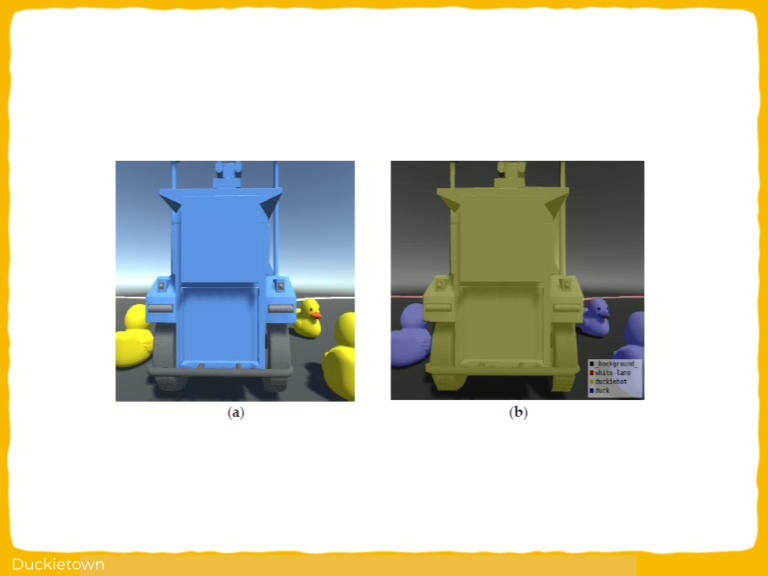

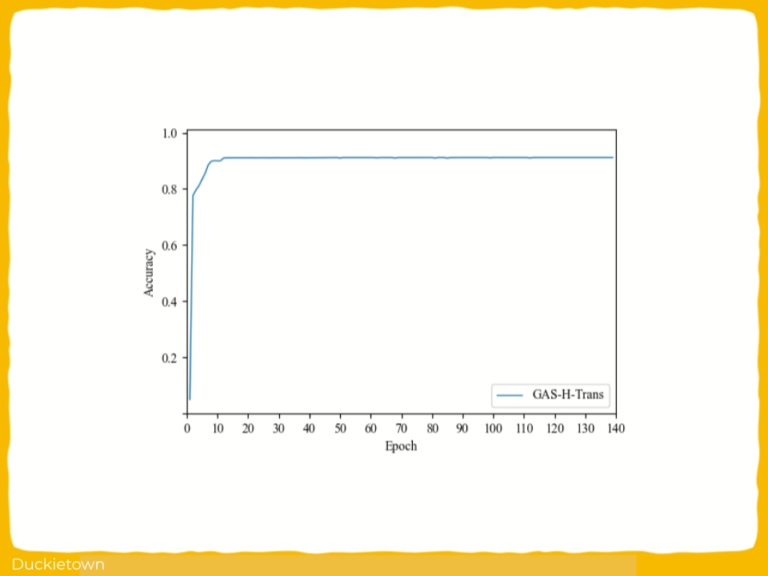

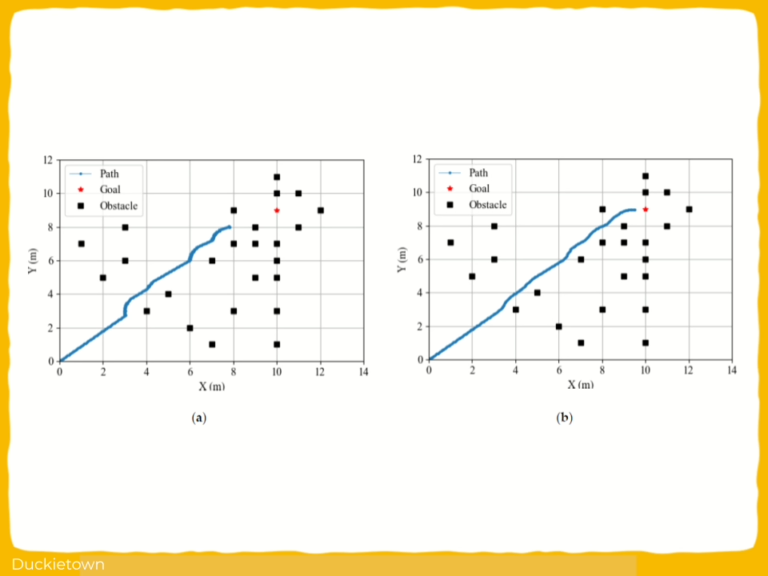

This work details a transformer visual control approach for autonomous robotic obstacle avoidance in dynamic environments. It introduces the GAS-H-Trans model, which integrates a dual-coupling grouped aggregation strategy with transformer-based attention mechanisms.

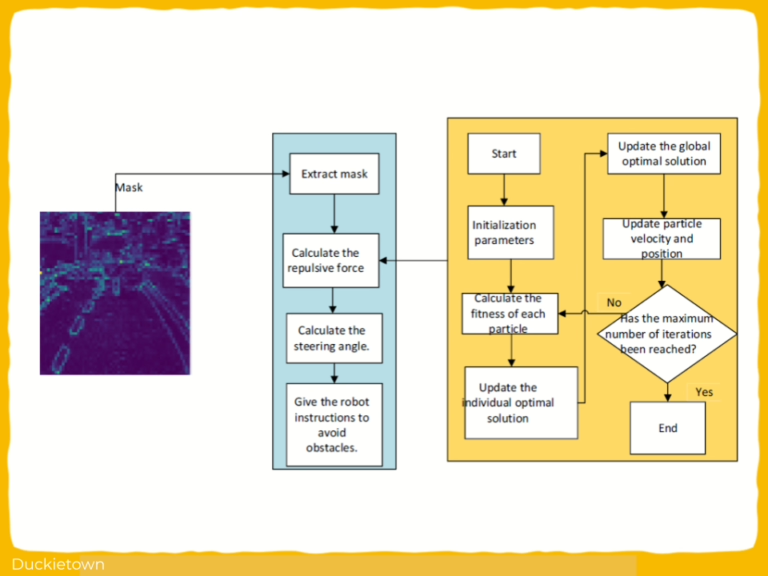

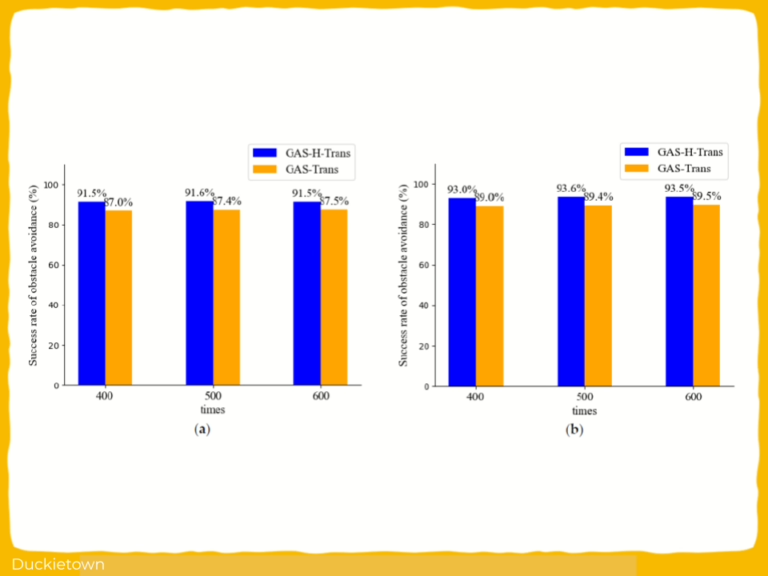

Key components of the approach include grouped spatial feature aggregation, Harris hawk optimization (HHO) for parameter tuning, and semantic segmentation for real-time visual perception. The output of the segmentation is used to compute potential fields for navigation. An artificial potential field (APF) method, further optimized using particle swarm optimization (PSO), enhances obstacle avoidance. The system was evaluated in Unity3D virtual environments and on datasets including KITTI and ImageNet.
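To give a feel for the APF component, here is a minimal sketch of the classic attractive and repulsive force computation in 2D. The gains `k_att` and `k_rep` and the influence radius are hypothetical placeholders for the coefficients that the paper tunes with PSO; this is the textbook formulation, not the authors' implementation.

```python
import math


def apf_force(pos, goal, obstacles, k_att=1.0, k_rep=0.5, influence=1.0):
    """Return the net (fx, fy) force on a robot at pos.

    The goal attracts with strength k_att; every obstacle closer than the
    influence radius repels with strength k_rep.
    """
    fx = k_att * (goal[0] - pos[0])
    fy = k_att * (goal[1] - pos[1])
    for ox, oy in obstacles:
        dx, dy = pos[0] - ox, pos[1] - oy
        dist = math.hypot(dx, dy)
        if 0.0 < dist < influence:
            # Magnitude grows sharply as the robot approaches the obstacle.
            magnitude = k_rep * (1.0 / dist - 1.0 / influence) / (dist * dist)
            fx += magnitude * dx / dist
            fy += magnitude * dy / dist
    return fx, fy


free = apf_force((0.0, 0.0), (2.0, 0.0), obstacles=[])
blocked = apf_force((0.0, 0.0), (2.0, 0.0), obstacles=[(0.5, 0.0)])
```

In the second call the obstacle sits directly between the robot and the goal, and with these particular gains the two forces cancel. This is the local minimum problem that gain tuning is meant to mitigate.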

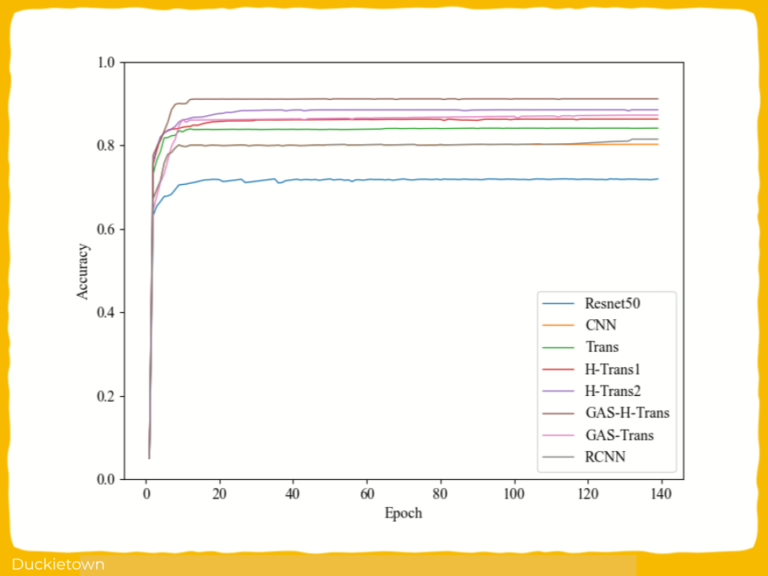

The model architecture improves local and global feature extraction, enabling adaptive navigation. Simulation results demonstrate that GAS-H-Trans outperforms baseline models in segmentation accuracy and avoidance reliability. The implementation uses Transformer structures, self-attention, and heuristic optimization for enhanced environmental understanding.

Experiments using Duckietown-based simulations confirm that the proposed Transformer Visual Control strategy with GAS-H-Trans significantly improves obstacle avoidance reliability with respect to typical approaches.

Highlights - Transformer Visual Control for Dynamic Obstacle Avoidance

Here is a visual tour of this work. For all the details, check out the full paper.

Abstract

In the authors’ words:

Accurate obstacle recognition and avoidance are critical for ensuring the safety and operational efficiency of autonomous robots in dynamic and complex environments. Despite significant advances in deep-learning techniques in these areas, their adaptability in dynamic and complex environments remains a challenge. To address these challenges, we propose an improved Transformer-based architecture, GAS-H-Trans.

This approach uses a grouped aggregation strategy to improve the robot’s semantic understanding of the environment and enhance the accuracy of its obstacle avoidance strategy. This method employs a Transformer-based dual-coupling grouped aggregation strategy to optimize feature extraction and improve global feature representation, allowing the model to capture both local and long-range dependencies.

The Harris hawk optimization (HHO) algorithm is used for hyperparameter tuning, further improving model performance. A key innovation of applying the GAS-H-Trans model to obstacle avoidance tasks is the implementation of a secondary precise image segmentation strategy. By placing observation points near critical obstacles, this strategy refines obstacle recognition, thus improving segmentation accuracy and flexibility in dynamic motion planning. The particle swarm optimization (PSO) algorithm is incorporated to optimize the attractive and repulsive gain coefficients of the artificial potential field (APF) methods.

This approach mitigates local minima issues and enhances the global stability of obstacle avoidance. Comprehensive experiments are conducted using multiple publicly available datasets and the Unity3D virtual robot environment. The results show that GAS-H-Trans significantly outperforms existing baseline models in image segmentation tasks, achieving the highest mIoU (85.2%). In virtual environment obstacle avoidance tasks, the GAS-H-Trans + PSO-optimized APF framework achieves an impressive obstacle avoidance success rate of 93.6%. These results demonstrate that the proposed approach provides superior performance in dynamic motion planning, offering a promising solution for real-world autonomous navigation applications.
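For context on the segmentation metric quoted above, mean Intersection over Union (mIoU) is conventionally computed per class and then averaged. The sketch below shows that standard definition on flat label lists; it is not the authors' evaluation code, and the toy labels are made up.

```python
def mean_iou(predicted, target, num_classes):
    """Average per-class IoU, skipping classes absent from both inputs."""
    ious = []
    for cls in range(num_classes):
        intersection = sum(
            1 for p, t in zip(predicted, target) if p == cls and t == cls
        )
        union = sum(
            1 for p, t in zip(predicted, target) if p == cls or t == cls
        )
        if union > 0:
            ious.append(intersection / union)
    return sum(ious) / len(ious) if ious else 0.0


# Toy example with two classes and four pixels.
score = mean_iou([0, 0, 1, 1], [0, 1, 1, 1], num_classes=2)
```

Here class 0 has an IoU of 1/2 and class 1 an IoU of 2/3, so the mean is 7/12.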

Conclusion - Transformer Visual Control for Dynamic Obstacle Avoidance

Here is the authors’ summary and overview of lessons learned from this work:

In this study, we proposed the GAS-H-Trans framework for image segmentation and dynamic obstacle avoidance in autonomous robots. The key contributions are summarized as follows. (1) Dual-coupling grouped aggregation strategy: A Transformer-based dual-coupling grouped aggregation method optimizes feature extraction and enhances global feature representation, thereby improving the model’s perception performance in dynamic motion planning. (2) Harris hawk optimization (HHO): The integration of the HHO algorithm into the GAS-Trans framework optimizes the number of Transformer layers and iterations, improving model accuracy and reducing computational costs. (3) PSO-optimized artificial potential field (APF): We integrated the PSO algorithm with APF to optimize the attractive and repulsive gain coefficients, addressing local minima issues and enhancing the global stability of the obstacle avoidance system.

This study also proposes a secondary precise image segmentation strategy. By setting the observation points near critical obstacles for fine-tuned segmentation, the flexibility and accuracy of the segmentation model’s environmental perception are effectively enhanced, thereby improving the robot’s obstacle avoidance capabilities.

Through the integration of PSO-optimized APF with image segmentation, the GAS-H-Trans + PSO-optimized APF framework demonstrated significant improvements in obstacle avoidance. In the experimental validation of this study, the obstacles remained static throughout the navigation process. Using this method, the autonomous robot dynamically adjusted its obstacle avoidance trajectory based on segmented environmental features. This integration significantly enhanced environmental perception capabilities and the accuracy of obstacle avoidance decisions, enabling more efficient navigation in static obstacle environments.

Extensive experiments on publicly available datasets (Duckiebot, KITTI, ImageNet) and in the Unity3D virtual robot environment validate the effectiveness of the proposed framework. The GAS-H-Trans framework outperformed traditional models in image segmentation tasks, achieving the highest mIoU of 85.2%. Furthermore, in virtual obstacle avoidance experiments, the GAS-H-Trans + PSO-optimized APF framework achieved an obstacle avoidance success rate of 93.6%.

These results effectively validate the proposed strategy, which combines secondary image segmentation from GAS-H-Trans with the PSO-optimized APF method, significantly improving obstacle avoidance performance in dynamic motion planning. Additionally, the GAS-H-Trans framework has the potential to be extended to fully dynamic environments by incorporating real-time object tracking and adaptive obstacle modeling. However, some limitations exist. The majority of the experiments were conducted in simulated environments, and future research will focus on validating the framework in real-world scenarios and improving real-time performance.

Additionally, the integration of multi-modal sensor data (such as LiDAR and ultrasonic sensors) will be an important direction for future work to further enhance environmental perception and robustness.

In conclusion, the new framework offers an innovative solution for autonomous robot obstacle avoidance in dynamic motion planning. Its powerful environmental perception and obstacle avoidance performance demonstrate significant potential for practical applications. With further optimization and real-world validation, this framework will play a crucial role in the future development of autonomous navigation and robotics technology.

Did this work spark your curiosity?

Check out the following works on machine learning with Duckietown:

Project Authors

Yuhu Tang is affiliated with the School of Artificial Intelligence and Big Data, Hefei University, Hefei 230601, China.

Ying Bai is affiliated with the School of Artificial Intelligence and Big Data, Hefei University, Hefei 230601, China.

Qiang Chen is affiliated with the School of Electrical Engineering and Automation and the National and Local Joint Engineering Laboratory for Renewable Energy Access to Grid Technology, Hefei University of Technology, Hefei, China, and with Hefei University, Hefei 230601, China.

Learn more

Duckietown is a platform for creating and disseminating robotics and AI learning experiences.

It is modular, customizable and state-of-the-art, and designed to teach, learn, and do research. From exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge, Duckietown evolves with the skills of the user.

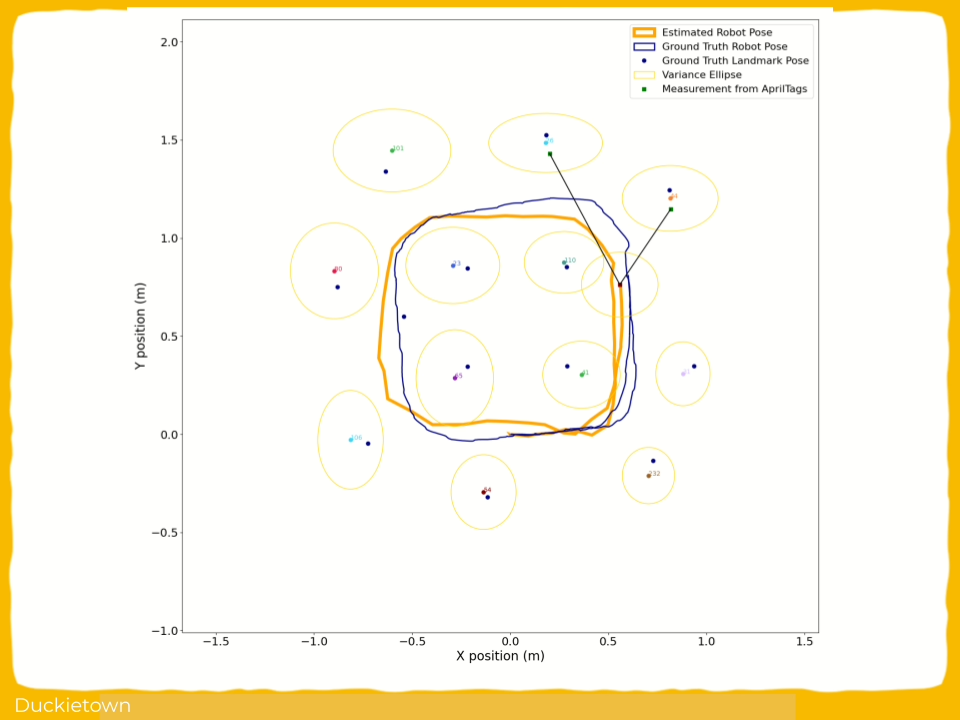

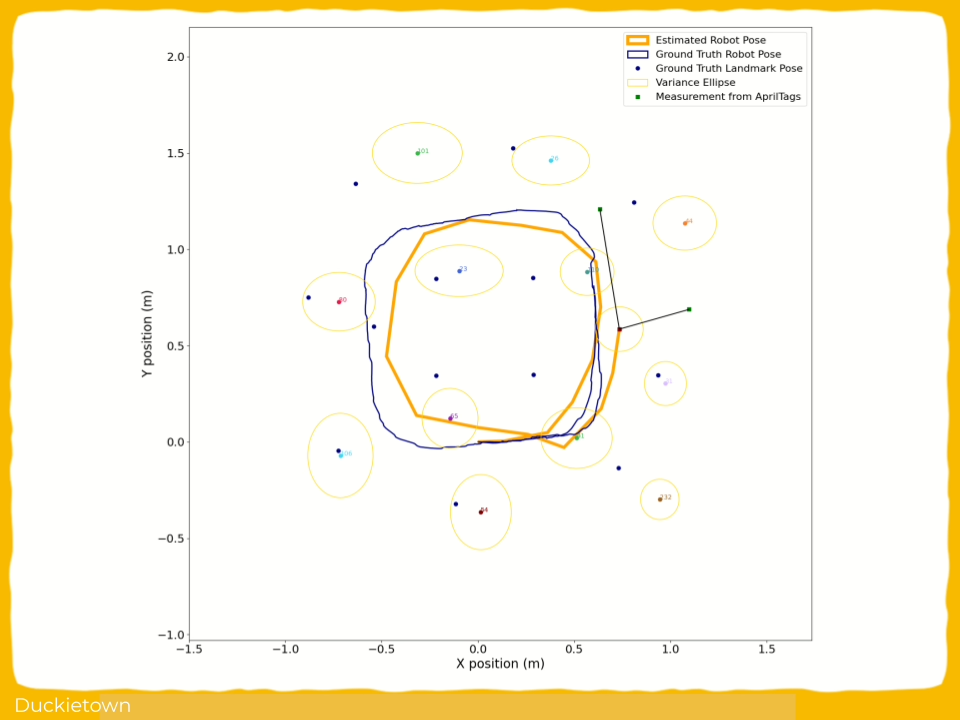

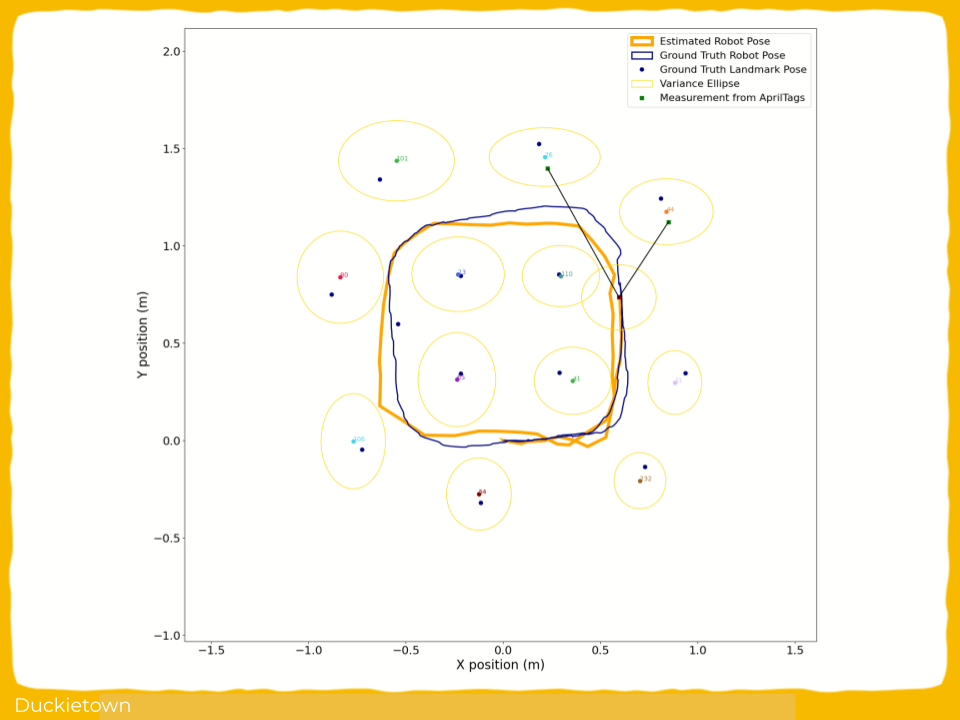

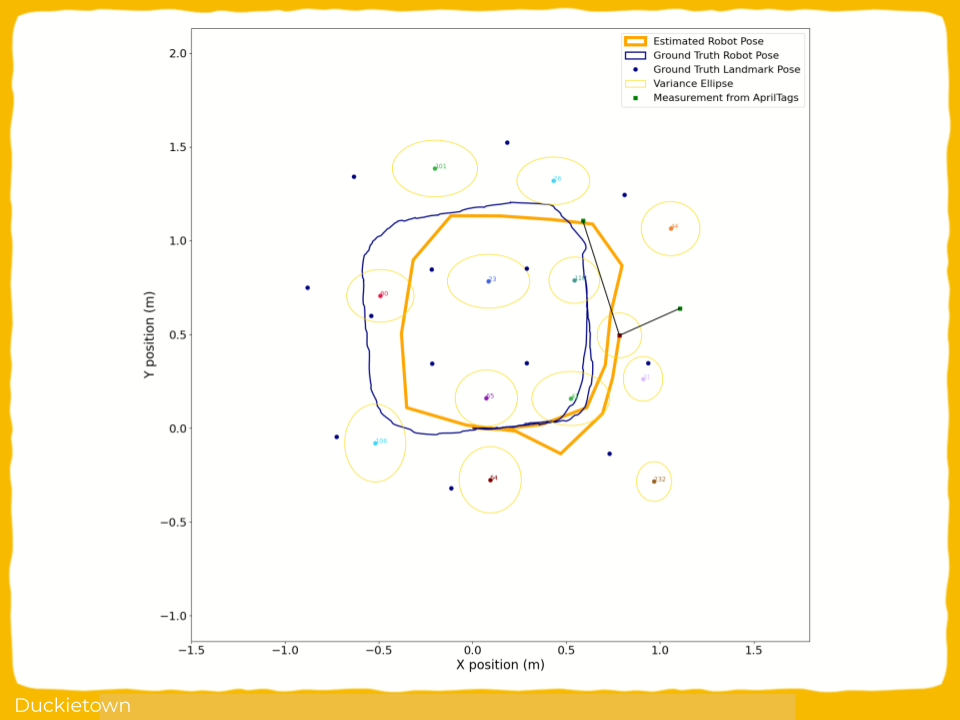

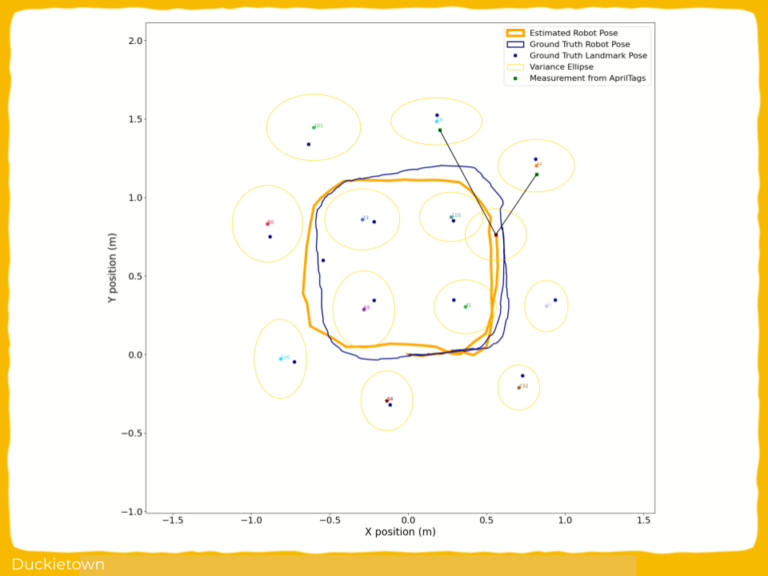

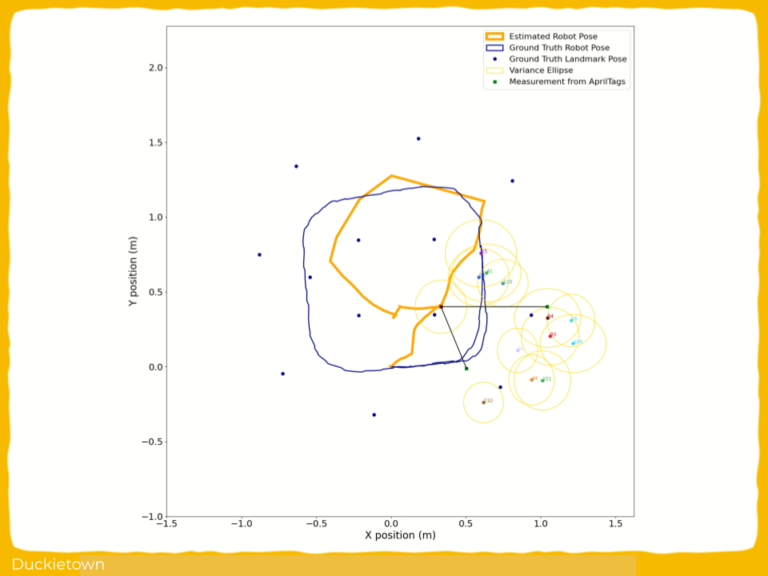

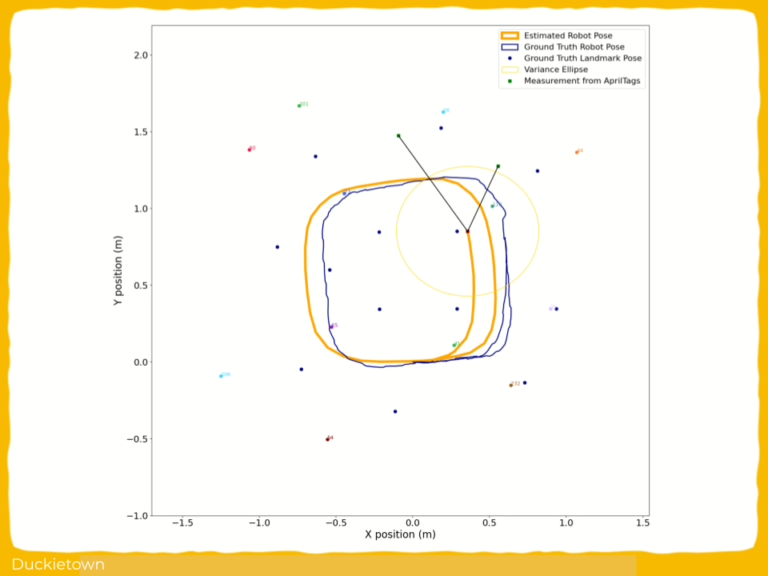

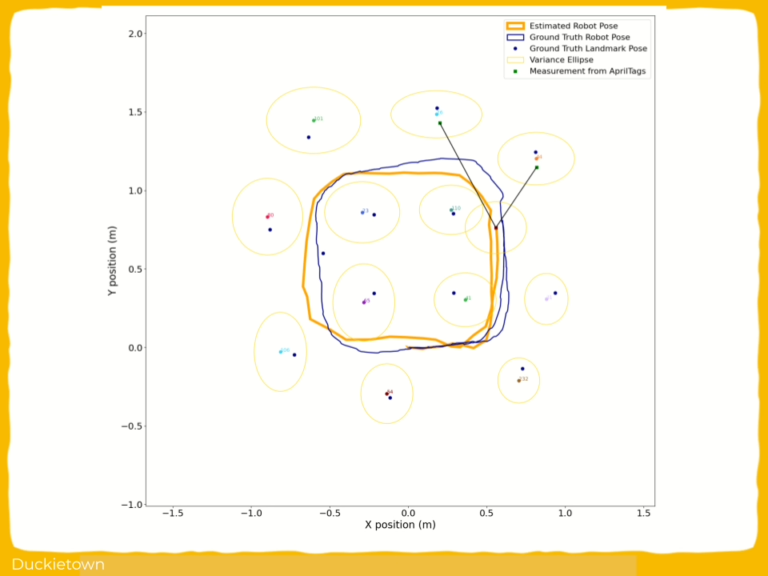

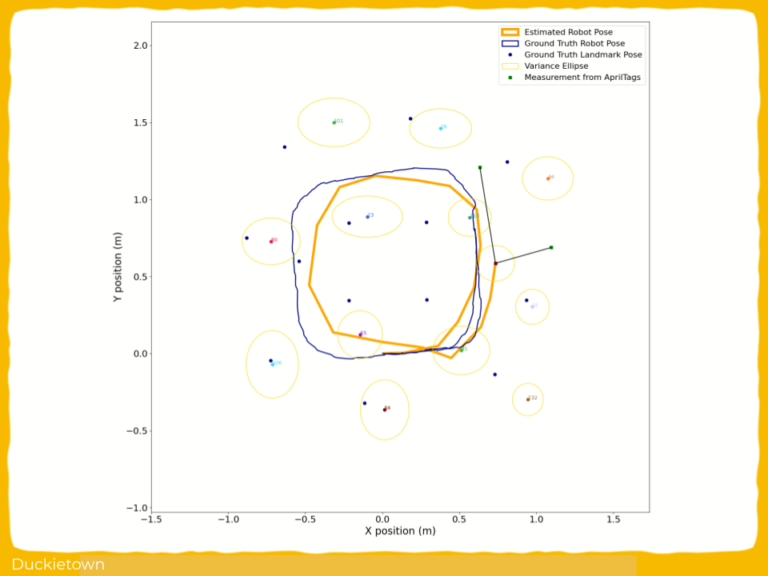

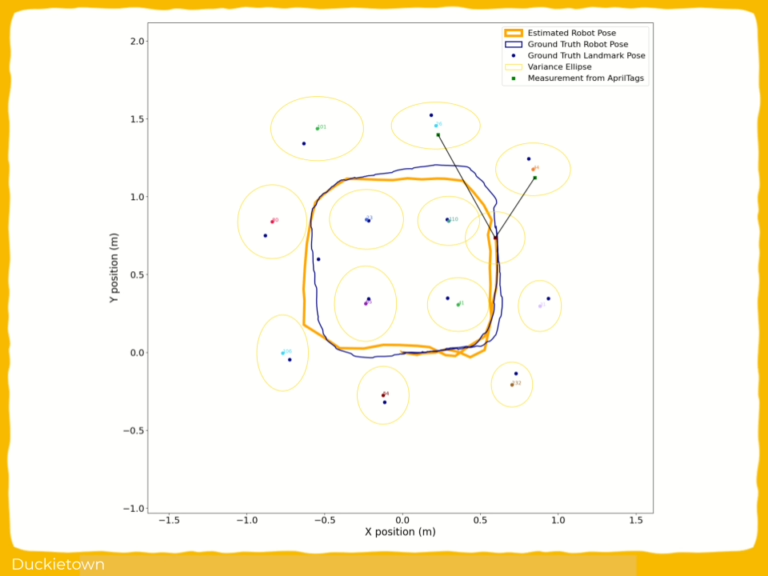

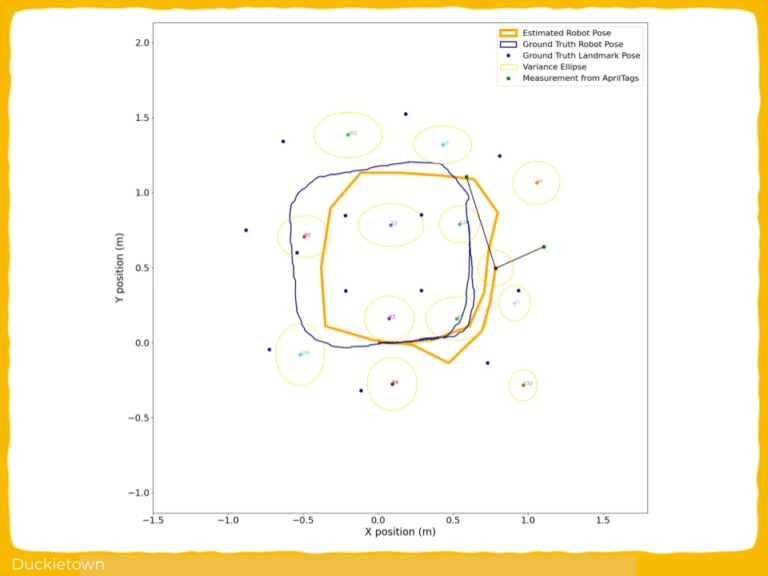

Extended Kalman Filter (EKF) SLAM for Duckiebots

Project Resources

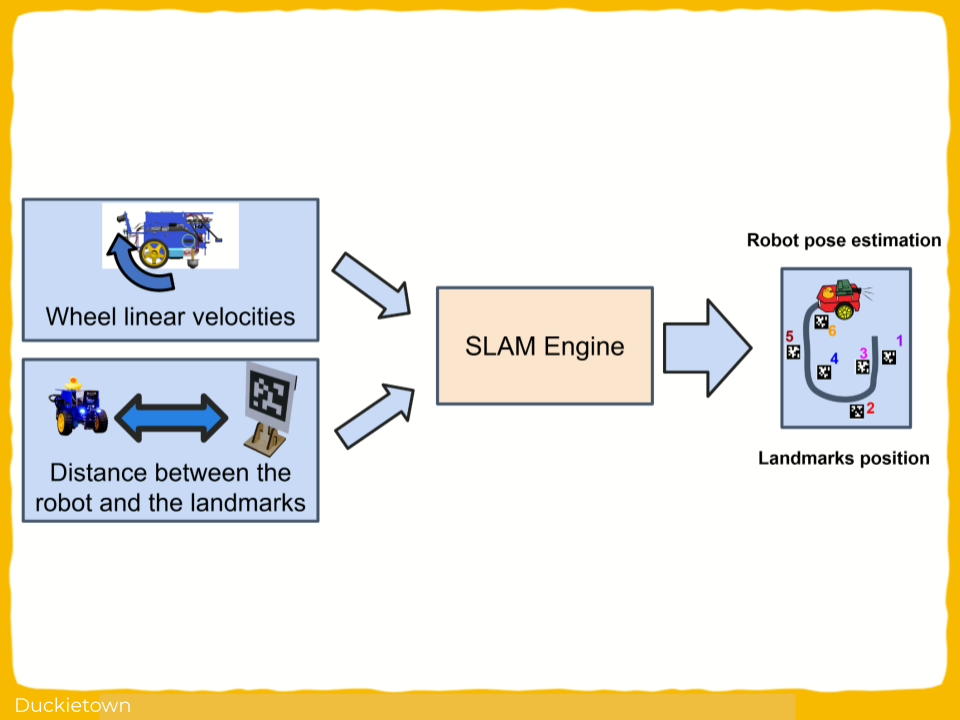

- Objective: Implement an Extended Kalman Filter (EKF) SLAM system to estimate accurate Duckiebot pose and landmark positions in Duckietown environments.

- Approach: Fuse odometry and AprilTag landmark detections using EKF to achieve robust localization and mapping for small-scale autonomous Duckiebots.

- Authors: Amir Hossein Zamani, Léonard Oest O'Leary, Kevin Lessard from the University of Montreal.

Project highlights

In SLAM, everything that can drift will drift, and the role of the filter is to drift more slowly than entropy.

Extended Kalman Filter (EKF) SLAM for Duckiebots - the objectives

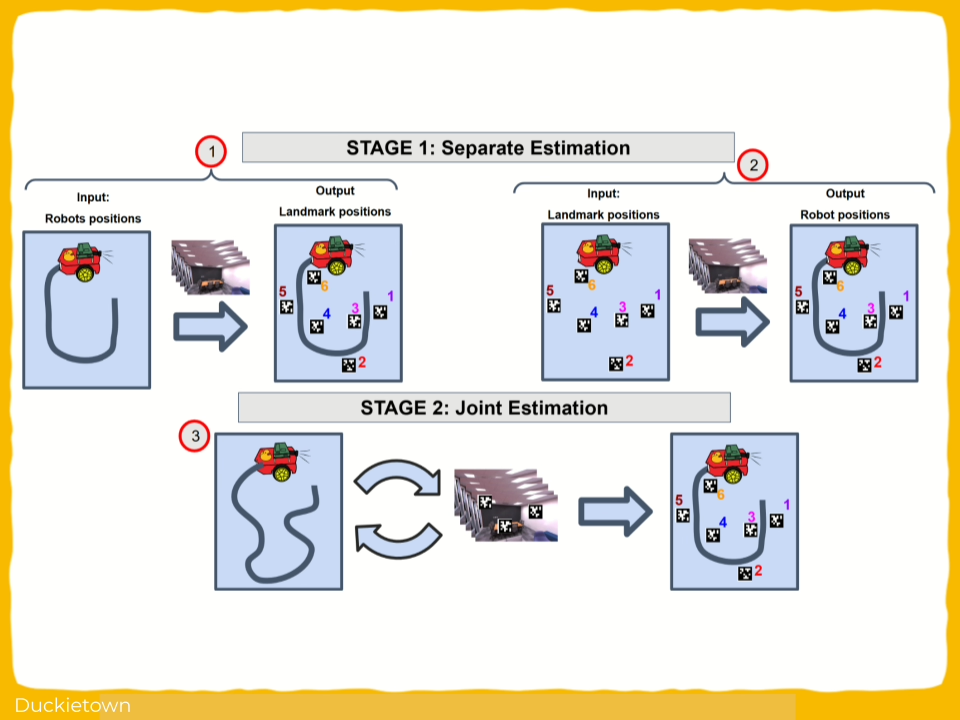

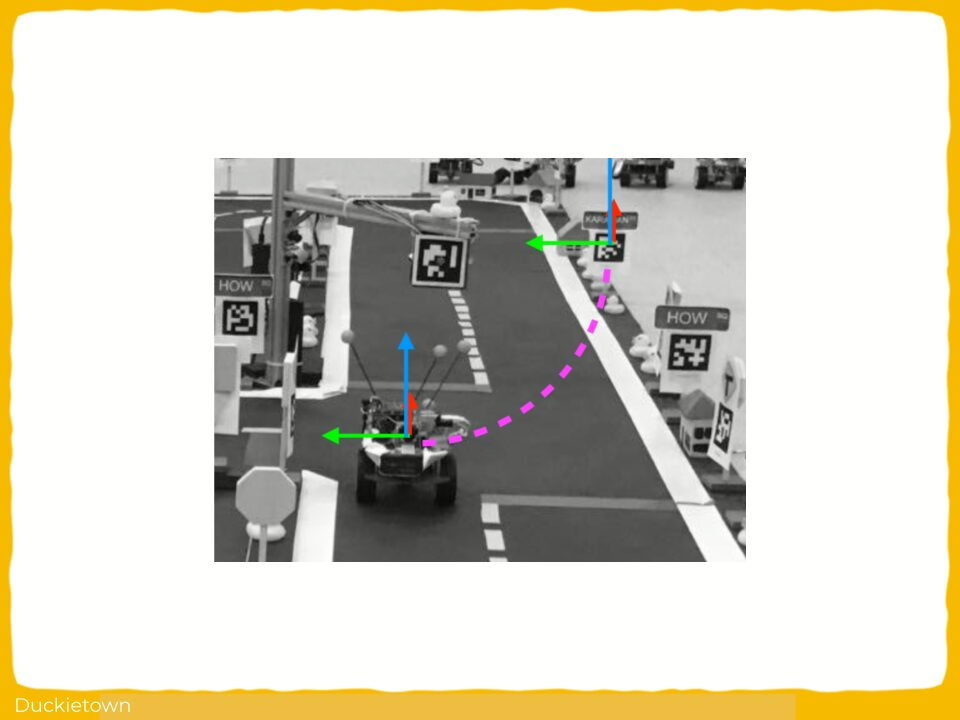

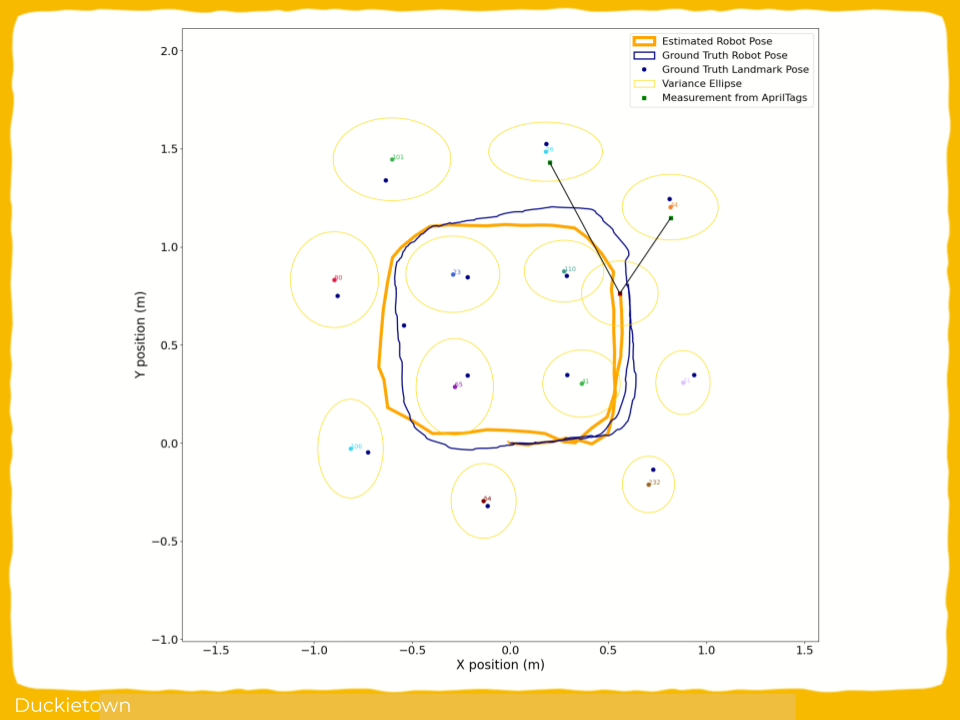

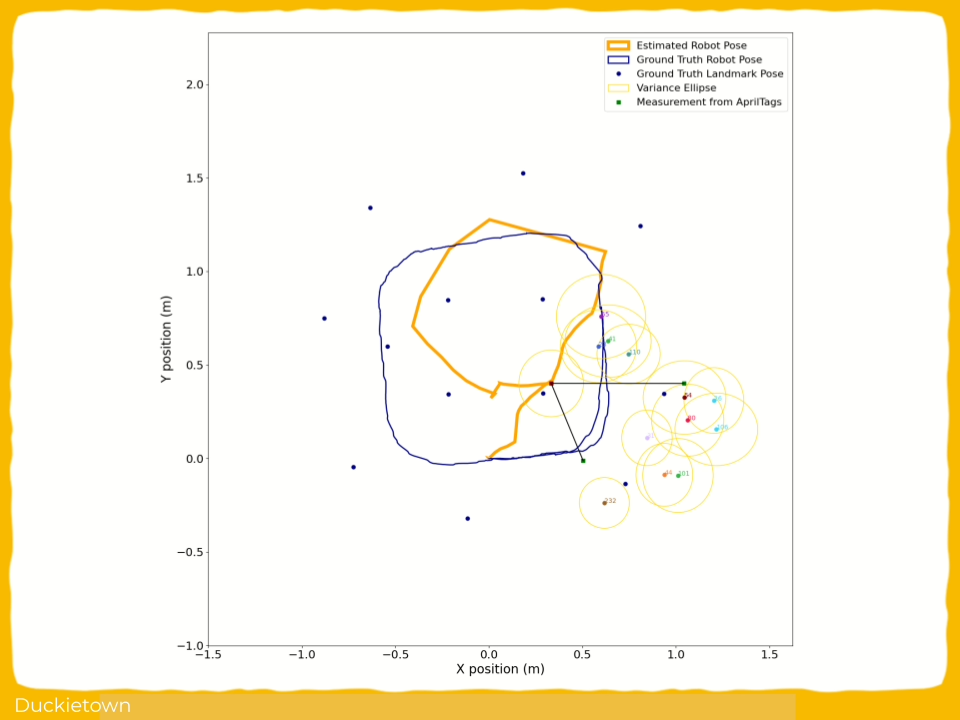

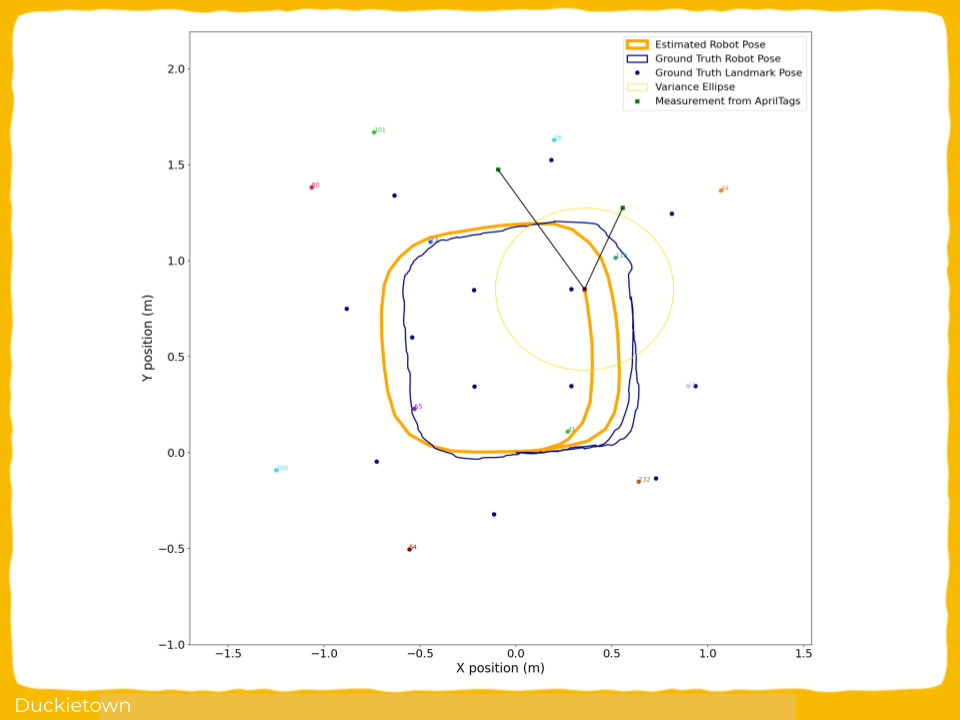

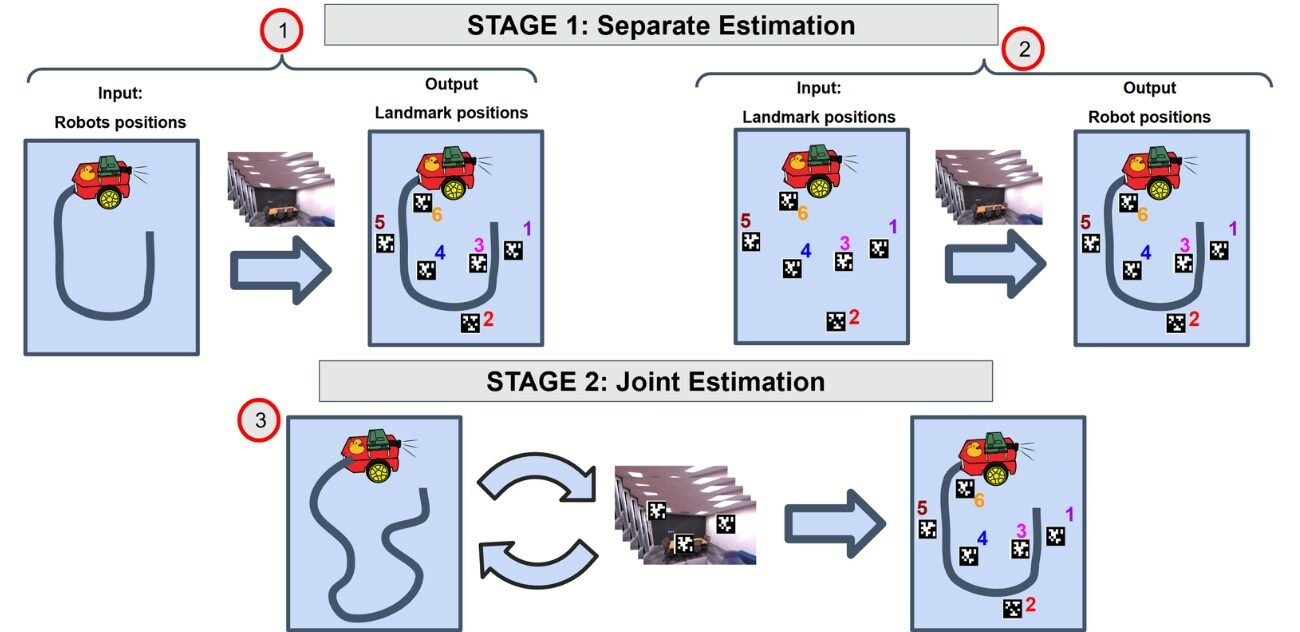

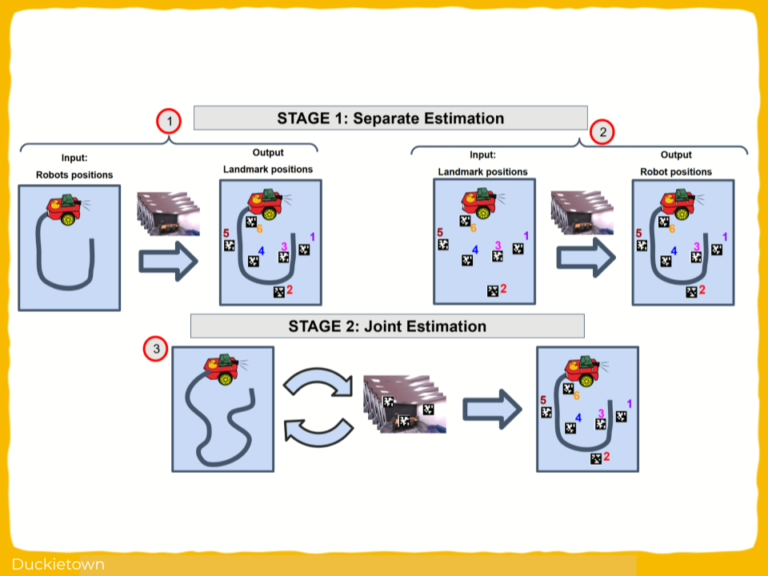

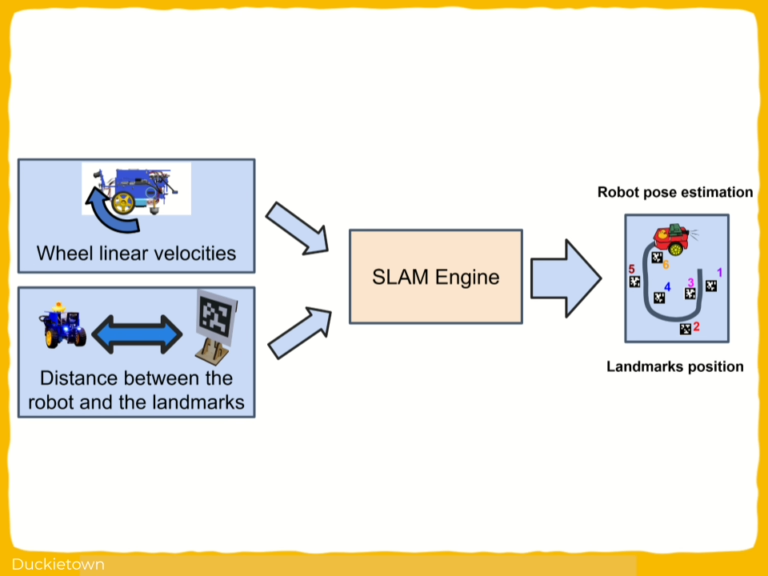

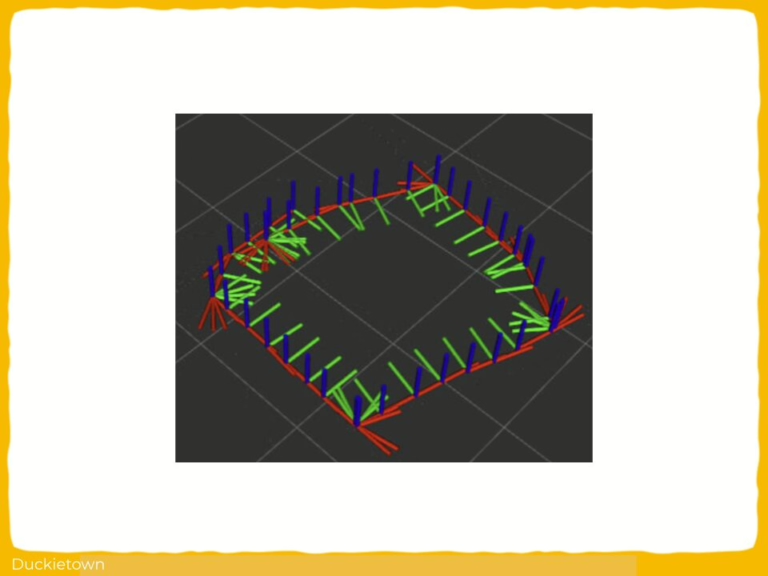

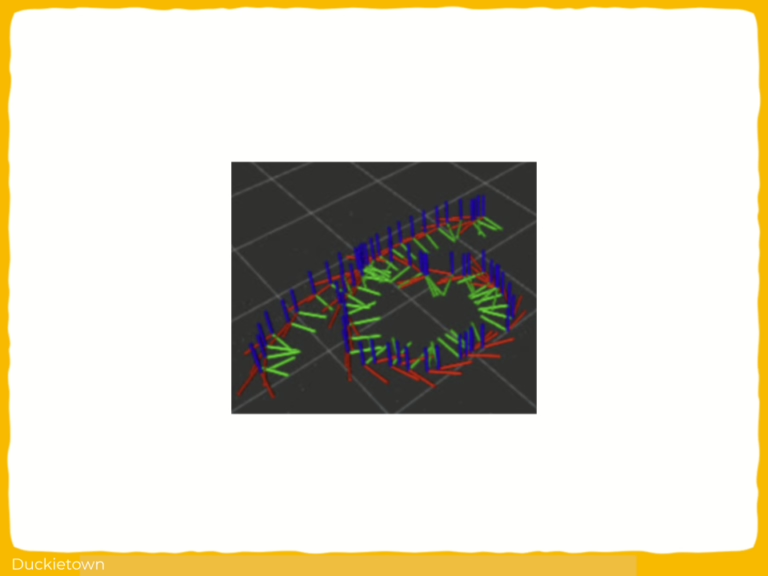

This SLAM-Duckietown project addresses a famous challenge in robotics: concurrently estimating the agent’s pose and mapping the environment under uncertainty.

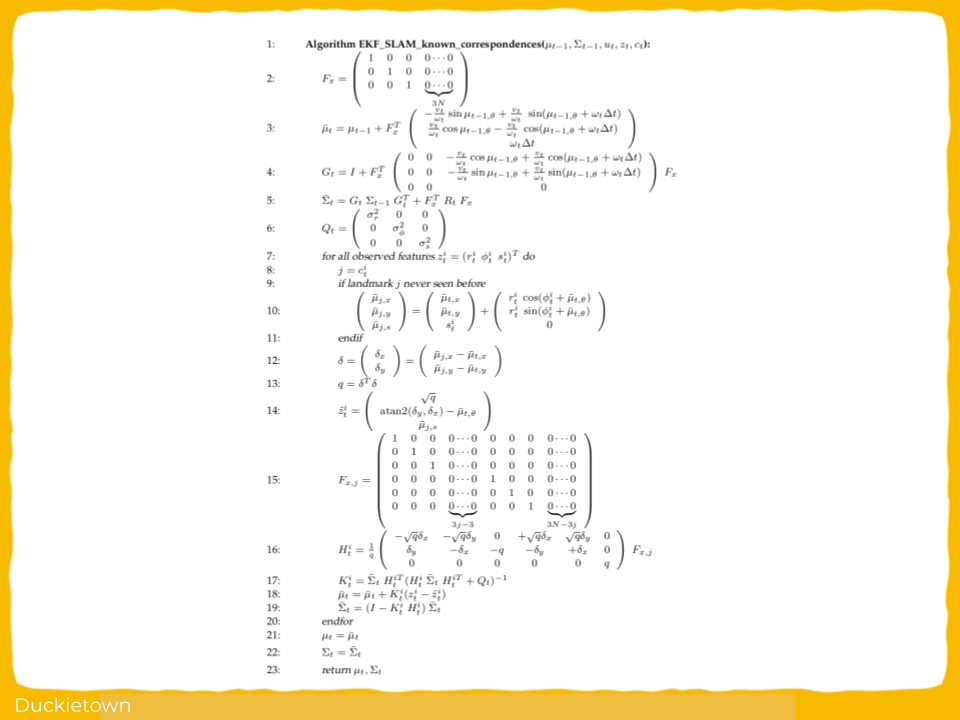

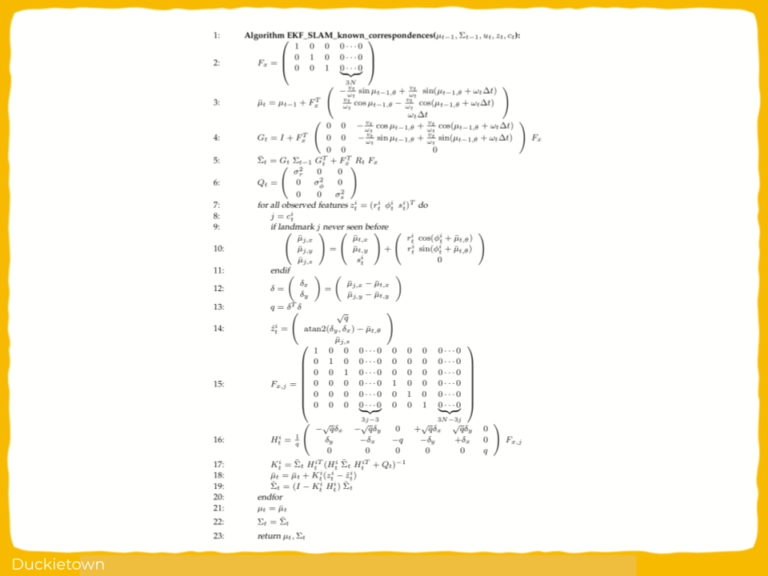

This project implements an Extended Kalman Filter (EKF) SLAM algorithm on Duckiebots (DB21-J4), combining odometry from wheel encoders and landmark observations from AprilTags.

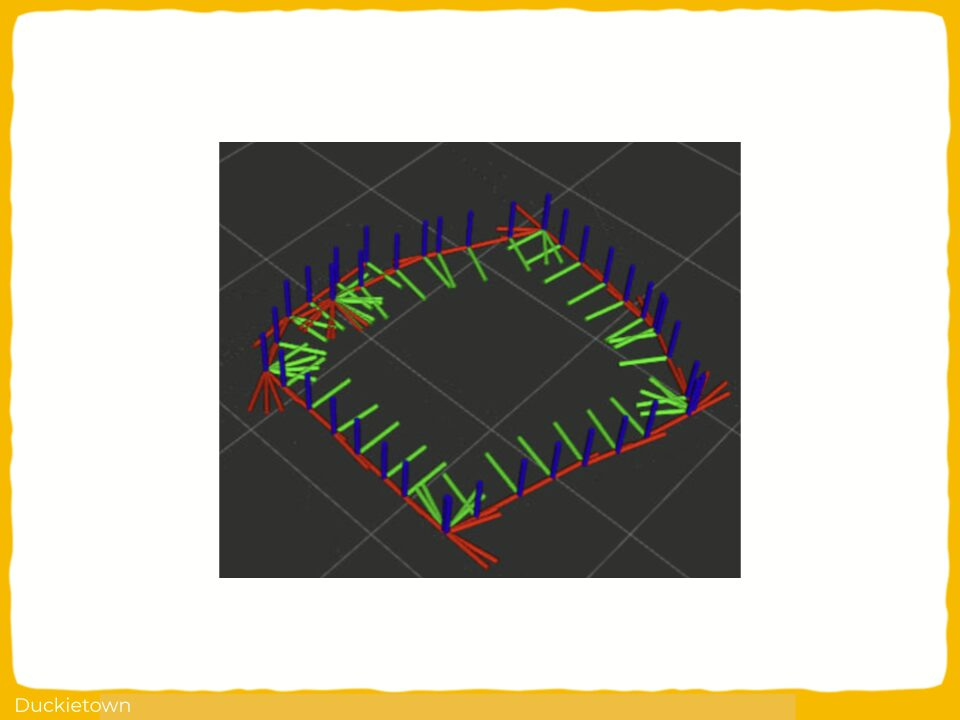

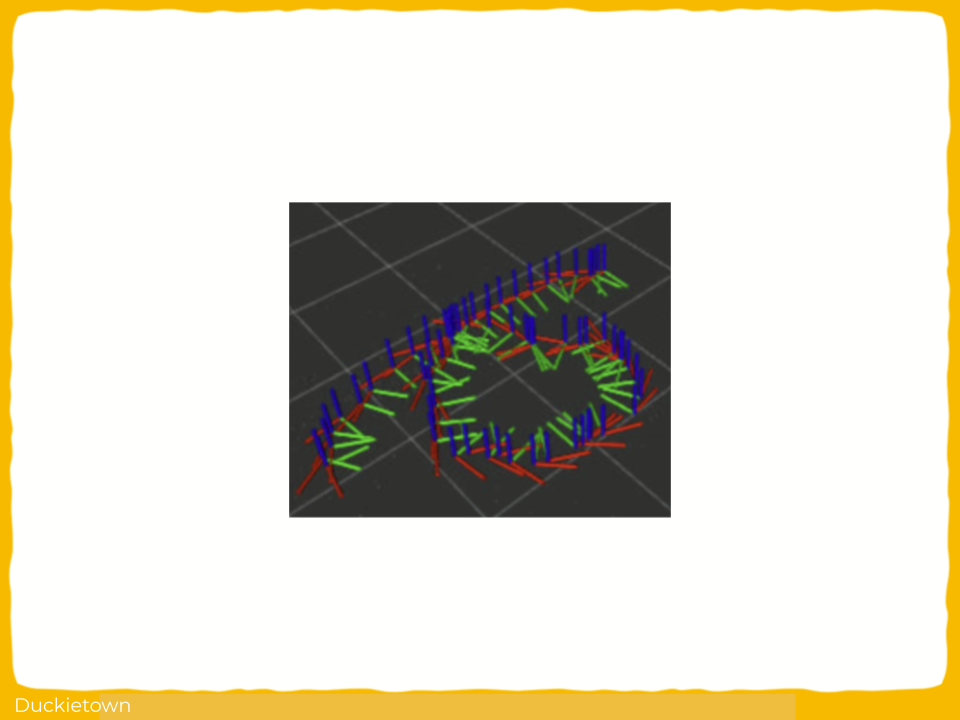

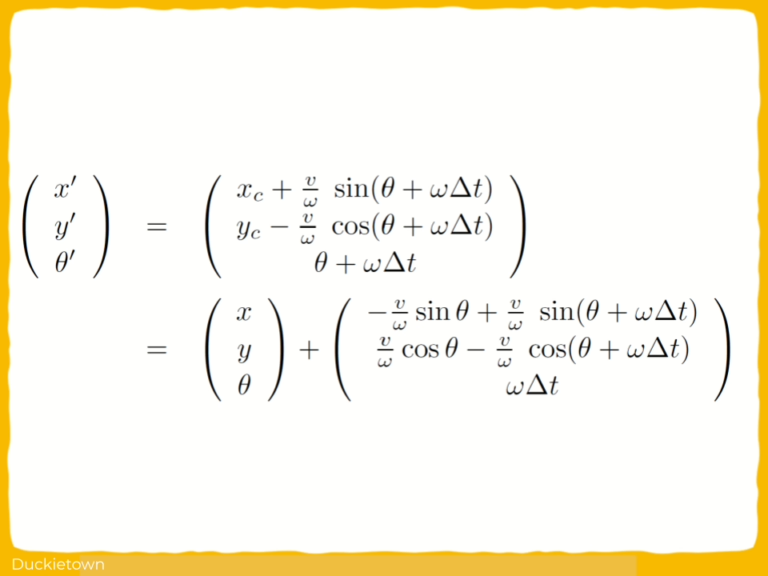

The objective is to maintain an evolving posterior over the Duckiebot’s pose (x,y,θ) and landmark positions by recursively integrating noisy control inputs and observations.

This upgrade shifts Duckiebots from open-loop dead reckoning units into closed-loop, state-estimating agents. For Duckietown, it reinforces its use as an experimental ground for real-world robotics challenges, including data association, observability, filter consistency, and multi-sensor fusion.

The challenges and approach

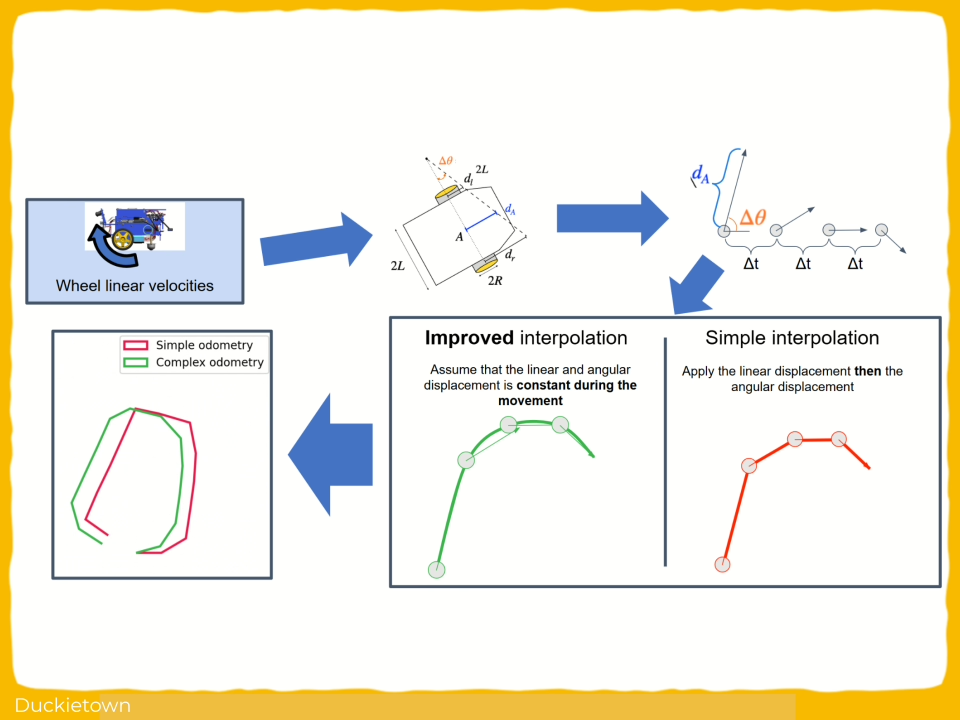

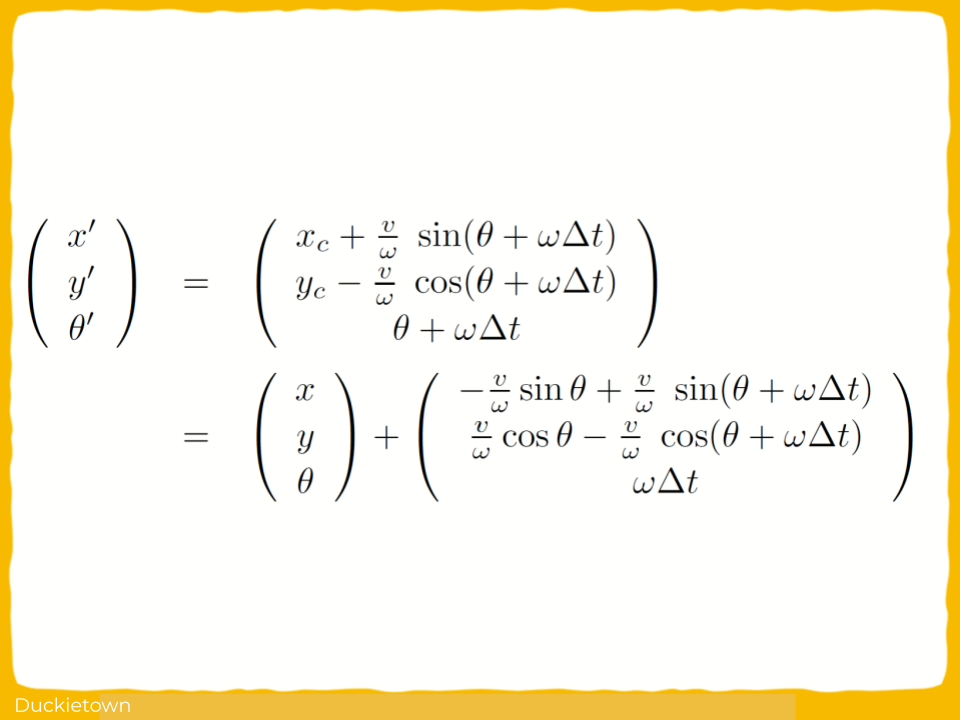

The system applies the EKF-SLAM pipeline in two stages: motion prediction and measurement correction.

Prediction propagates the robot’s belief through a non-holonomic kinematic model under process noise, using arc-based interpolation to reduce discretization error.
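The prediction of the pose mean can be sketched as follows for a differential-drive robot. This version assumes the inputs are the distances traveled by the left and right wheels (derived from encoder ticks) and integrates the motion along a circular arc; the function name, argument names, and the baseline value in the example are assumptions, and covariance propagation is omitted for brevity.

```python
import math


def predict_pose(x, y, theta, d_left, d_right, baseline):
    """Propagate (x, y, theta) given wheel travel distances in meters.

    baseline is the distance between the two wheels.
    """
    distance = 0.5 * (d_left + d_right)       # travel of the robot center
    d_theta = (d_right - d_left) / baseline   # change in heading
    if abs(d_theta) < 1e-9:
        # Straight-line motion: the arc radius would be infinite.
        x += distance * math.cos(theta)
        y += distance * math.sin(theta)
    else:
        # Exact integration along a circular arc of constant radius.
        radius = distance / d_theta
        x += radius * (math.sin(theta + d_theta) - math.sin(theta))
        y -= radius * (math.cos(theta + d_theta) - math.cos(theta))
    # Wrap the heading to (-pi, pi].
    heading = theta + d_theta
    theta = math.atan2(math.sin(heading), math.cos(heading))
    return x, y, theta


# Quarter turn to the left on an arc of radius 1 m (hypothetical 0.1 m baseline).
quarter = predict_pose(0.0, 0.0, 0.0,
                       d_left=math.pi / 2 - math.pi / 40,
                       d_right=math.pi / 2 + math.pi / 40,
                       baseline=0.1)
```

Compared with a simple Euler step that assumes the robot moves straight and then rotates, the arc model avoids the position error that accumulates during turns between encoder readings.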

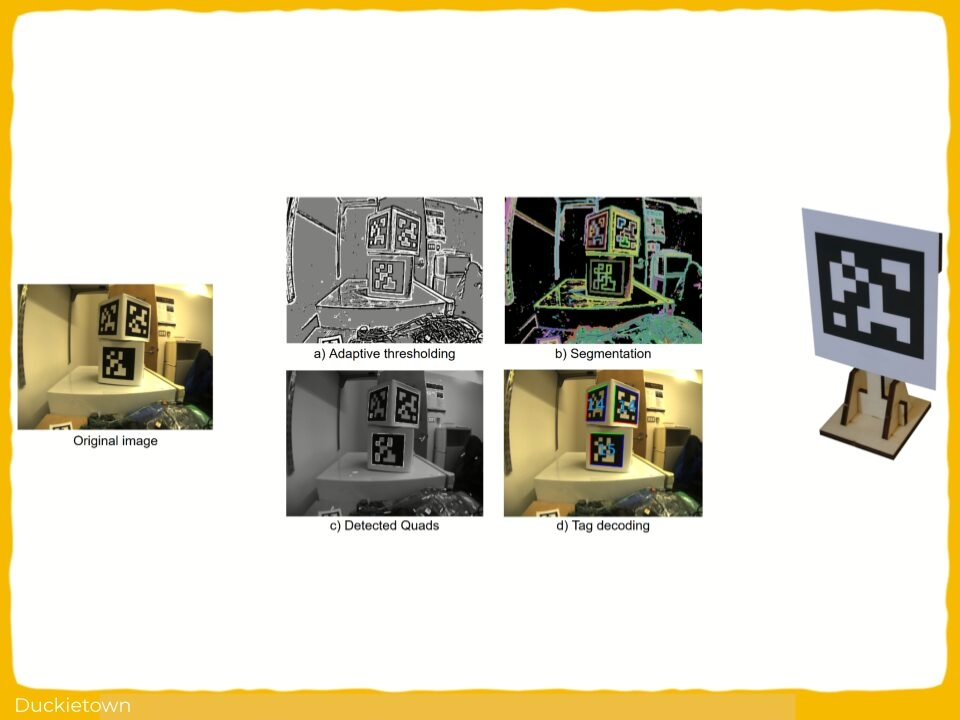

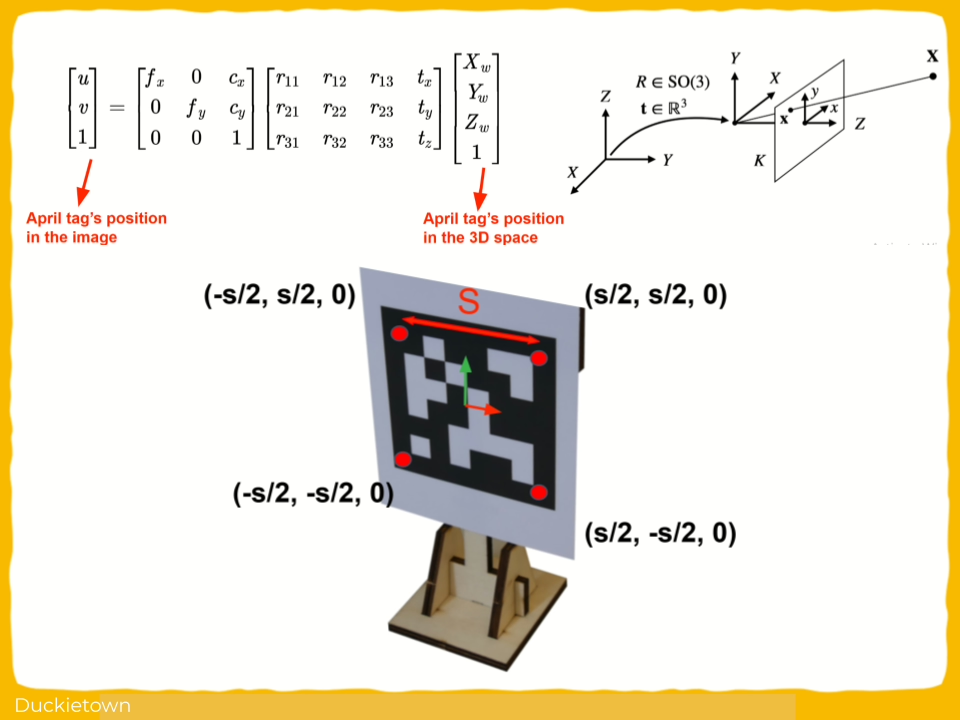

Correction incorporates AprilTag detections via a Perspective-n-Point (PnP) solution, updating the state with landmark-relative observations under observation noise. The state vector grows dynamically as new landmarks are observed, and the covariance matrix tracks both robot and landmark uncertainty.
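A minimal sketch of the correction step is shown below for a state containing the robot pose and a single, already-initialized landmark. It assumes the measurement is the landmark position expressed in the robot frame, which is one plausible way to use a PnP result; the measurement model, the noise values, and all function names are assumptions for this example rather than the project's code.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    return [list(col) for col in zip(*a)]


def ekf_correct(mu, P, z, R):
    """EKF update for state mu = [x, y, theta, lx, ly] and covariance P.

    z is the observed landmark position in the robot frame, R its 2x2 noise.
    """
    x, y, theta, lx, ly = mu
    dx, dy = lx - x, ly - y
    c, s = math.cos(theta), math.sin(theta)
    # Expected measurement: world-frame offset rotated into the robot frame.
    z_hat = [c * dx + s * dy, -s * dx + c * dy]
    # Jacobian of the measurement model with respect to the state.
    H = [[-c, -s, z_hat[1], c, s],
         [s, -c, -z_hat[0], -s, c]]
    PHt = matmul(P, transpose(H))
    S = matmul(H, PHt)
    S = [[S[i][j] + R[i][j] for j in range(2)] for i in range(2)]
    det = S[0][0] * S[1][1] - S[0][1] * S[1][0]
    S_inv = [[S[1][1] / det, -S[0][1] / det],
             [-S[1][0] / det, S[0][0] / det]]
    K = matmul(PHt, S_inv)  # Kalman gain, 5x2
    innovation = [z[0] - z_hat[0], z[1] - z_hat[1]]
    mu_new = [m + K[i][0] * innovation[0] + K[i][1] * innovation[1]
              for i, m in enumerate(mu)]
    mu_new[2] = math.atan2(math.sin(mu_new[2]), math.cos(mu_new[2]))
    KH = matmul(K, H)
    I_KH = [[(1.0 if i == j else 0.0) - KH[i][j] for j in range(5)]
            for i in range(5)]
    return mu_new, matmul(I_KH, P)


# Robot at the origin, landmark believed at (1, 0) but observed at (1.2, 0).
mu0 = [0.0, 0.0, 0.0, 1.0, 0.0]
variances = [0.1, 0.1, 0.05, 0.5, 0.5]
P0 = [[variances[i] if i == j else 0.0 for j in range(5)] for i in range(5)]
R0 = [[0.01, 0.0], [0.0, 0.01]]
mu1, P1 = ekf_correct(mu0, P0, [1.2, 0.0], R0)
```

Because the landmark is initially far more uncertain than the robot, most of the correction goes to the landmark estimate, and its variance shrinks after the update. In a full implementation, observing a new tag would first append two entries to the state and expand the covariance accordingly.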

The technical challenges include maintaining filter consistency under linearization errors, ensuring landmark observability despite partial fields of view, and synchronizing asynchronous data from wheel encoders, camera frames, and Vicon ground-truth captures.

Moreover, AprilTag detection is constrained by lighting artifacts and pose ambiguity at shallow viewing angles, introducing non-Gaussian errors that the EKF must approximate linearly.

Tuning noise parameters presents the classical tradeoff: too little noise leads to overconfidence and divergence; too much noise leads to filter paralysis. Deployment exposes the systemic difference between simulation and physical experiments: real Duckiebots do not move with perfect kinematics, cameras suffer from radial distortion, and computation suffers from non-deterministic latency.

In SLAM, everything that can drift will drift, and the role of the filter is to drift more slowly than entropy.

Did this work spark your curiosity?

Check out the following works on path planning with Duckietown:

Extended Kalman Filter (EKF) SLAM for Duckiebots: Authors

AmirHossein Zamani is a former Duckietown student who is currently pursuing his Ph.D. in Computer Science at Mila (Quebec AI Institute) and Concordia University, Canada. He is also working as an AI Research Scientist Intern at Autodesk in Montreal, Canada.

Léonard Oest O’Leary is a former Duckietown student who is currently pursuing his Master of Science in Computer Science at the University of Montreal, Canada.

Kevin Lessard is a former Duckietown student who is currently pursuing his Master of Science in Machine Learning at Mila – Quebec AI Institute in Montreal, Canada.

Learn more

Duckietown is a modular, customizable, and state-of-the-art platform for creating and disseminating robotics and AI learning experiences.

Duckietown is designed to teach, learn, and do research: from exploring the fundamentals of computer science and automation to pushing the boundaries of knowledge.

These spotlight projects are shared to exemplify Duckietown’s value for hands-on learning in robotics and AI, enabling students to apply theoretical concepts to practical challenges in autonomous robotics, boosting competence and job prospects.